1. Introduction

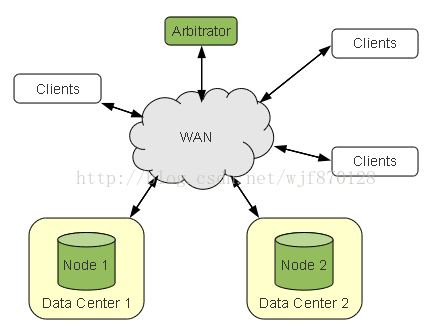

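An earlier post (http://blog.csdn.net/wjf870128/article/details/45199781) covered failure scenarios in a two-node Percona XtraDB Cluster. Because of split-brain and the quorum mechanism, a plain two-node Percona cluster usually cannot guarantee a reliable environment.

The arbitrator was introduced for this reason: it keeps a two-node cluster from losing synchronization when communication between the nodes fails.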

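The figure above is taken from the definition of the arbitrator on the Galera website. The arbitrator serves two purposes:

- In a multi-node cluster it acts like an additional member node, which prevents split-brain when communication between nodes is interrupted.

- It can request a full SST copy, which can be used for backups.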
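The quorum reasoning can be made concrete with a little arithmetic. The sketch below assumes every member carries equal weight and that a group of members stays primary only when it holds strictly more than half of the last known membership. `has_quorum` is a hypothetical helper written for illustration, not part of Galera or garbd.

```shell
#!/bin/sh
# has_quorum REACHABLE TOTAL
# Prints "yes" when REACHABLE members form a strict majority of TOTAL,
# otherwise "no". Assumes equal weights (illustrative helper only).
has_quorum() {
    reachable=$1
    total=$2
    if [ $((reachable * 2)) -gt "$total" ]; then
        echo yes
    else
        echo no
    fi
}

# Two data nodes whose link is broken: each side counts only itself.
echo "2 members, 1 reachable: $(has_quorum 1 2)"
# Two data nodes plus an arbitrator: a node that still reaches the
# arbitrator counts 2 of 3.
echo "3 members, 2 reachable: $(has_quorum 2 3)"
```

With two members, one reachable member is exactly half and not a majority, which is why the arbitrator is added as a third voter.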

2. Installation steps

Cluster: Percona-XtraDB-Cluster 5.6.22-25

Primary node:

hostname:mysql-pxc01

ip addr:192.168.48.11

Standby node:

hostname:mysql-pxc02

ip addr:192.168.48.12

Follow the earlier post (http://blog.csdn.net/wjf870128/article/details/45176011) to set up the two-node Percona XtraDB Cluster environment.

2. Arbitrator environment:

OS:Redhat 6.5

Software:Percona-XtraDB-Cluster-5.6.22-25.8-r978-el6-i386-bundle.tar

ip addr:192.168.48.8

3. Extract the software bundle

tar -xf Percona-XtraDB-Cluster-5.6.22-25.8-r978-el6-i386-bundle.tar

4. Install the nc dependency

yum install nc

5. Install the Arbitrator package

rpm -ivh Percona-XtraDB-Cluster-garbd-3-3.9-1.3494.rhel6.i686.rpm

3. Using the Arbitrator

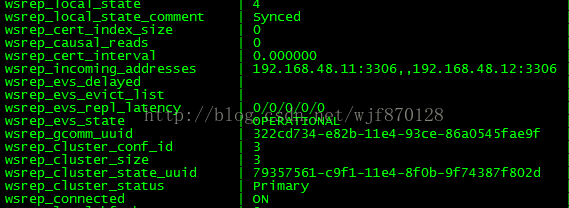

1. Check the status of the existing two-node Percona XtraDB Cluster

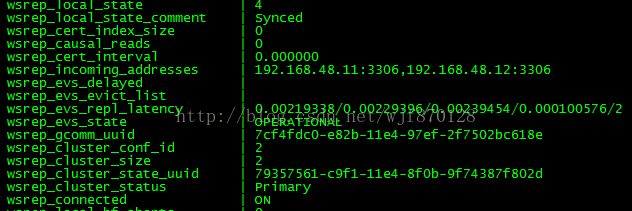

Primary node:

Standby node:

Both nodes report a healthy cluster status.
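The same status can be collected from the command line instead of read off a screenshot. The sketch below assumes the `mysql` client can authenticate on each node (for example through `~/.my.cnf`). `wsrep_cluster_size` and `wsrep_cluster_status` are standard Galera status variables, while `cluster_summary` is a hypothetical helper introduced here for illustration.

```shell
#!/bin/sh
# cluster_summary: reads "name<TAB>value" rows on stdin (the format produced
# by `mysql -N -B`) and prints a one-line summary. Hypothetical helper.
cluster_summary() {
    awk -F '\t' '
        $1 == "wsrep_cluster_size"   { size = $2 }
        $1 == "wsrep_cluster_status" { status = $2 }
        END { printf "size=%s status=%s\n", size, status }
    '
}

# Usage on a cluster node (assumes the client can log in without prompting):
#   mysql -N -B -e "SHOW STATUS LIKE 'wsrep_cluster%'" | cluster_summary
```

For a healthy two-node cluster the summary should report a size of 2 and a status of Primary.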

2. Create the Arbitrator directory

# mkdir /arbitrator

3. Create the Arbitrator configuration file

# touch /arbitrator/ab.cfg

4. Edit the Arbitrator configuration file

group = my_Redhat_cluster

address = "gcomm://192.168.48.11:4567,192.168.48.12:4567"

5. Start the Arbitrator

# garbd -c /arbitrator/ab.cfg -l /arbitrator/ab.log &

6. View the Arbitrator log

[root@mysql-repl01 arbitrator]# cat ab.log

2015-04-22 21:49:37.274 INFO: CRC-32C: using "slicing-by-8" algorithm.

2015-04-22 21:49:37.275 INFO: Read config:

daemon: 0

name: garb

address: gcomm://192.168.48.11:4567,192.168.48.12:4567

group: my_Redhat_cluster

sst: trivial

donor:

options: gcs.fc_limit=9999999; gcs.fc_factor=1.0; gcs.fc_master_slave=yes

cfg: /arbitrator/ab.cfg

log: /arbitrator/ab.log

2015-04-22 21:49:37.278 INFO: protonet asio version 0

2015-04-22 21:49:37.279 INFO: Using CRC-32C for message checksums.

2015-04-22 21:49:37.279 INFO: backend: asio

2015-04-22 21:49:37.279 WARN: access file(./gvwstate.dat) failed(No such file or directory)

2015-04-22 21:49:37.279 INFO: restore pc from disk failed

2015-04-22 21:49:37.281 INFO: GMCast version 0

2015-04-22 21:49:37.282 INFO: (6a6899f9, 'tcp://0.0.0.0:4567') listening at tcp://0.0.0.0:4567

2015-04-22 21:49:37.282 INFO: (6a6899f9, 'tcp://0.0.0.0:4567') multicast: , ttl: 1

2015-04-22 21:49:37.283 INFO: EVS version 0

2015-04-22 21:49:37.284 INFO: gcomm: connecting to group 'my_Redhat_cluster', peer '192.168.48.11:4567,192.168.48.12:4567'

2015-04-22 21:49:37.300 INFO: (6a6899f9, 'tcp://0.0.0.0:4567') turning message relay requesting on, nonlive peers:

2015-04-22 21:49:37.723 INFO: declaring 322cd734 at tcp://192.168.48.11:4567 stable

2015-04-22 21:49:37.724 INFO: declaring 7cf4fdc0 at tcp://192.168.48.12:4567 stable

2015-04-22 21:49:37.737 INFO: Node 322cd734 state prim

2015-04-22 21:49:37.741 INFO: view(view_id(PRIM,322cd734,3) memb {

322cd734,0

6a6899f9,0

7cf4fdc0,0

} joined {

} left {

} partitioned {

})

2015-04-22 21:49:37.741 INFO: save pc into disk

2015-04-22 21:49:37.819 INFO: gcomm: connected

2015-04-22 21:49:37.819 INFO: Changing maximum packet size to 64500, resulting msg size: 32636

2015-04-22 21:49:37.820 INFO: Shifting CLOSED -> OPEN (TO: 0)

2015-04-22 21:49:37.820 INFO: Opened channel 'my_Redhat_cluster'

2015-04-22 21:49:37.820 INFO: New COMPONENT: primary = yes, bootstrap = no, my_idx = 1, memb_num = 3

2015-04-22 21:49:37.821 INFO: STATE EXCHANGE: Waiting for state UUID.

2015-04-22 21:49:37.856 INFO: STATE EXCHANGE: sent state msg: 403738d4-e82d-11e4-8fdd-e23f2cc4e259

2015-04-22 21:49:37.860 INFO: STATE EXCHANGE: got state msg: 403738d4-e82d-11e4-8fdd-e23f2cc4e259 from 0 (mysql-pxc01)

2015-04-22 21:49:37.860 INFO: STATE EXCHANGE: got state msg: 403738d4-e82d-11e4-8fdd-e23f2cc4e259 from 1 (garb)

2015-04-22 21:49:37.869 INFO: STATE EXCHANGE: got state msg: 403738d4-e82d-11e4-8fdd-e23f2cc4e259 from 2 (mysql-pxc02)

2015-04-22 21:49:37.869 INFO: Quorum results:

version = 3,

component = PRIMARY,

conf_id = 2,

members = 2/3 (joined/total),

act_id = 14292,

last_appl. = -1,

protocols = 0/7/3 (gcs/repl/appl),

group UUID = 79357561-c9f1-11e4-8f0b-9f74387f802d

2015-04-22 21:49:37.869 INFO: Flow-control interval: [9999999, 9999999]

2015-04-22 21:49:37.869 INFO: Shifting OPEN -> PRIMARY (TO: 14292)

2015-04-22 21:49:37.870 INFO: Sending state transfer request: 'trivial', size: 7

2015-04-22 21:49:37.874 INFO: Member 1.0 (garb) requested state transfer from '*any*'. Selected 0.0 (mysql-pxc01)(SYNCED) as donor.

2015-04-22 21:49:37.874 INFO: Shifting PRIMARY -> JOINER (TO: 14292)

2015-04-22 21:49:37.879 INFO: 1.0 (garb): State transfer from 0.0 (mysql-pxc01) complete.

2015-04-22 21:49:37.879 INFO: Shifting JOINER -> JOINED (TO: 14292)

2015-04-22 21:49:37.883 INFO: 0.0 (mysql-pxc01): State transfer to 1.0 (garb) complete.

2015-04-22 21:49:37.883 INFO: Member 1.0 (garb) synced with group.

2015-04-22 21:49:37.883 INFO: Shifting JOINED -> SYNCED (TO: 14292)

2015-04-22 21:49:37.889 INFO: Member 0.0 (mysql-pxc01) synced with group.

2015-04-22 21:49:40.800 INFO: (6a6899f9, 'tcp://0.0.0.0:4567') turning message relay requesting off

7. Check the node status

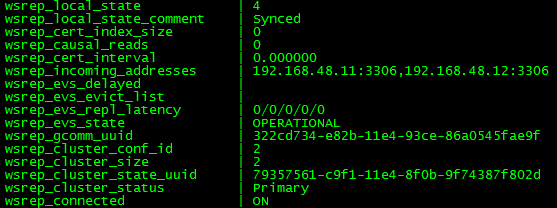

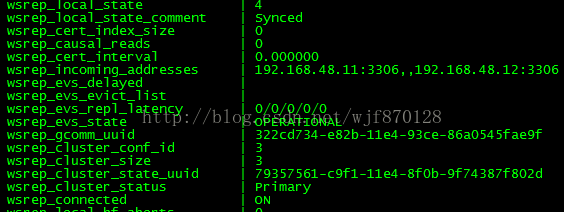

Primary node:

Standby node:

The cluster is now a three-node environment.
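The join can also be confirmed from the Arbitrator log itself. The sketch below looks for the "Shifting JOINED -> SYNCED" transition that appears in the log shown earlier. `garbd_synced` is a hypothetical helper, and treating that single line as proof of a successful join is an assumption made for illustration.

```shell
#!/bin/sh
# garbd_synced LOGFILE
# Succeeds (exit 0) if the log contains the "JOINED -> SYNCED" transition
# seen in the Arbitrator log above. Hypothetical helper.
garbd_synced() {
    grep -q 'Shifting JOINED -> SYNCED' "$1"
}

# Usage with the log path from this walkthrough:
#   garbd_synced /arbitrator/ab.log && echo "arbitrator synced"
```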

4. Verifying the Arbitrator

1. Block traffic between the primary and standby nodes

[root@mysql-pxc01 ~]# iptables -A INPUT -d 192.168.48.12 -s 192.168.48.11 -j REJECT

[root@mysql-pxc01 ~]# iptables -A OUTPUT -d 192.168.48.12 -s 192.168.48.11 -j REJECT

2. Check the status of the primary and standby nodes

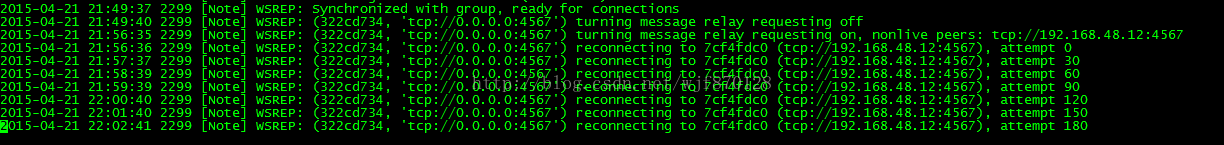

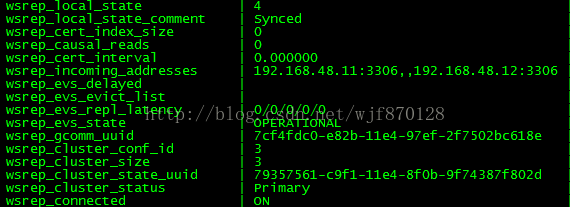

Standby node:

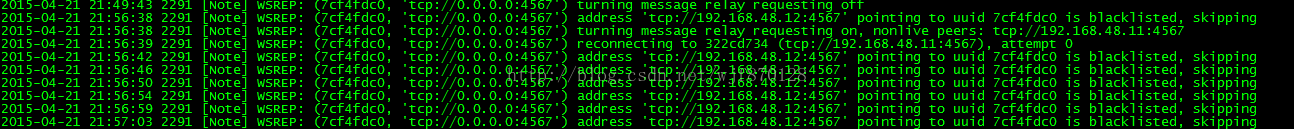

Log:

Although the log reports communication errors, the cluster status is still normal.

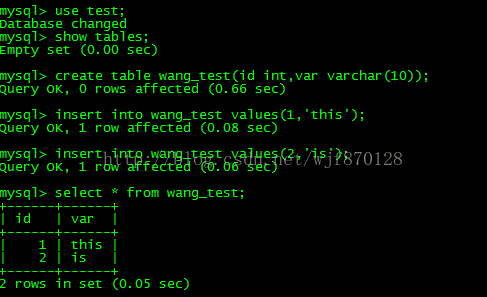

3. Insert data and check whether it is replicated

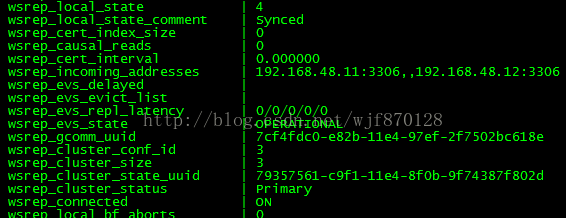

主节点:

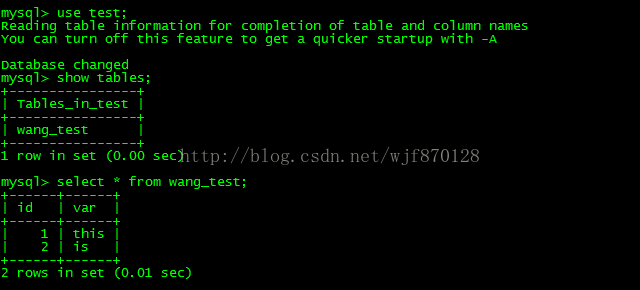

备节点:

可以发现数据已经成功同步到备节点中!Arbitrator功能生效。
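Once the test is finished, the REJECT rules added on mysql-pxc01 should be removed so the two nodes can talk to each other directly again. The sketch below relies on the fact that `iptables -D` with the same rule specification deletes a rule added with `iptables -A`. `partition_rules` is a hypothetical helper, and it only prints the commands so they can be reviewed before being run as root.

```shell
#!/bin/sh
# partition_rules ACTION SRC DST
# ACTION is "add" (iptables -A) or "del" (iptables -D). Prints the two
# commands used in this section instead of executing them, so they can be
# reviewed first. Hypothetical helper.
partition_rules() {
    case "$1" in
        add) flag=-A ;;
        del) flag=-D ;;
        *)   echo "usage: partition_rules add|del SRC DST" >&2; return 1 ;;
    esac
    for chain in INPUT OUTPUT; do
        echo "iptables $flag $chain -d $3 -s $2 -j REJECT"
    done
}

# Usage on mysql-pxc01, as root, to undo the test rules:
#   partition_rules del 192.168.48.11 192.168.48.12 | sh
```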
