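Environment preparation:

I: Install the Linux system

CentOS-6.5-x86_64-bin-DVD1.iso

II: Create a user

hadoop

III: Configure the network so the machine can be reached from SecureCRT (SCRT)

IV: Versions

hadoop-2.6.4.tar.gz

jdk1.7.0_79.tar.gz

Steps: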

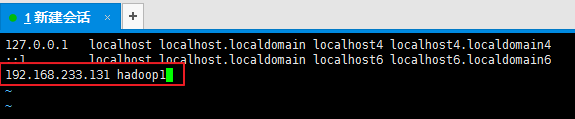

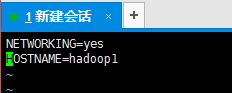

I: Change the hostname

/etc/hosts: add an entry for hadoop1

/etc/sysconfig/network: set HOSTNAME=hadoop1

Reboot for the change to take effect: reboot

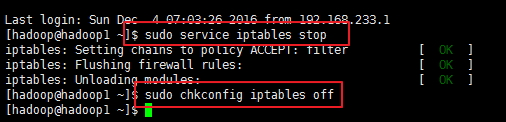
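A minimal sketch of the two file edits in this step, written as a shell function. The function name `set_hostname_files`, its `root` prefix argument, and the IP address in the usage line are illustrative assumptions and not part of the original setup. The prefix makes it possible to try the function on a scratch directory before touching the real files. It assumes GNU `sed`, as shipped with CentOS.

```shell
# set_hostname_files ROOT NAME IP
#   ROOT is a path prefix ("" means the real system files).
#   NAME is the hostname, IP the address it should resolve to.
set_hostname_files() {
    root="$1"; name="$2"; ip="$3"
    hosts="$root/etc/hosts"
    network="$root/etc/sysconfig/network"

    # Add the hosts entry only if the name is not mapped yet.
    grep -qw "$name" "$hosts" 2>/dev/null || printf '%s %s\n' "$ip" "$name" >> "$hosts"

    # Replace an existing HOSTNAME= line, or append one if none exists.
    if grep -q '^HOSTNAME=' "$network" 2>/dev/null; then
        sed -i "s/^HOSTNAME=.*/HOSTNAME=$name/" "$network"
    else
        printf 'HOSTNAME=%s\n' "$name" >> "$network"
    fi
}

# Usage as root (192.168.1.101 is a placeholder address):
#   set_hostname_files "" hadoop1 192.168.1.101
```

The function is safe to run twice: the hosts entry is added only once and the HOSTNAME line is rewritten in place.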

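II: Turn off the firewall

service iptables stop (stops the firewall now; use service iptables start / status to start it or check its state)

chkconfig iptables off (keeps it from starting at boot)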

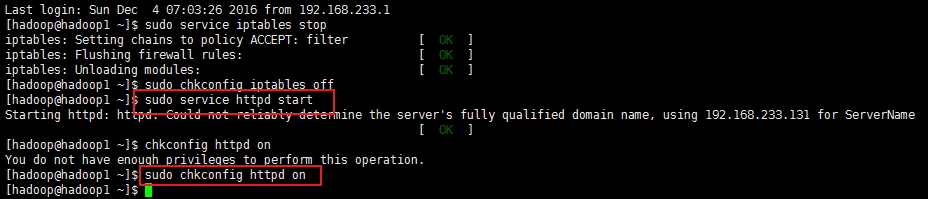

III: Keep the HTTP service running

service httpd start

chkconfig httpd on

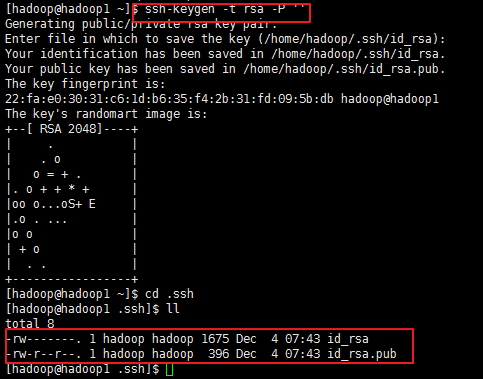

IV: Configure SSH keys

1: Create the key

Work in /home/hadoop/.ssh (that is, ~/.ssh)

ssh-keygen -t rsa -P ''

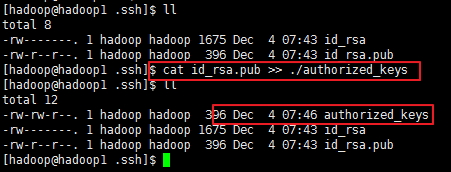

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

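2: Fix permissions

Check the permissions and correct any that do not match; with the defaults nothing needs changing (chmod 700 ~/.ssh; chmod 600 ~/.ssh/id_rsa; chmod 644 ~/.ssh/authorized_keys)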

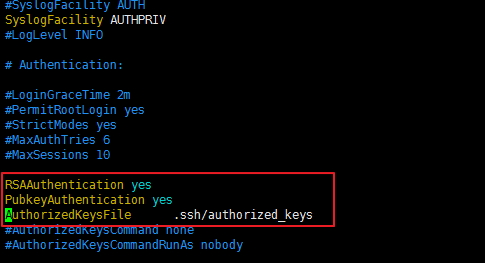
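The permission check can be scripted. The sketch below compares each path's octal mode with the values from this step. The helper names `check_mode` and `check_ssh_dir` are made up for illustration, and it assumes GNU `stat` (the `-c '%a'` form), which is what CentOS ships.

```shell
# check_mode PATH EXPECTED: compare a path's octal mode with the expected one.
check_mode() {
    actual=$(stat -c '%a' "$1") || return 2
    if [ "$actual" = "$2" ]; then
        echo "ok   $1 ($actual)"
    else
        echo "fix  $1 is $actual, expected $2 (chmod $2 $1)"
        return 1
    fi
}

# check_ssh_dir [DIR]: check the three paths from this step; DIR defaults to ~/.ssh.
check_ssh_dir() {
    dir="${1:-$HOME/.ssh}"
    rc=0
    check_mode "$dir" 700 || rc=1
    check_mode "$dir/id_rsa" 600 || rc=1
    check_mode "$dir/authorized_keys" 644 || rc=1
    return $rc
}

# Usage: check_ssh_dir
```

It only reports; any chmod it suggests still has to be run by hand.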

3: Edit the configuration file

Edit the SSH daemon configuration: vim /etc/ssh/sshd_config

Uncomment the following three lines and set them as shown:

RSAAuthentication yes

PubkeyAuthentication yes

AuthorizedKeysFile .ssh/authorized_keys

4: Test

After the change, restart the SSH service as root (service sshd restart), then verify that passwordless login works:

ssh hadoop1

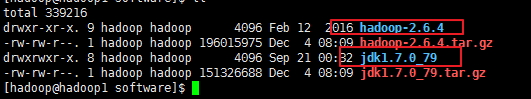

V: Install the JDK and Hadoop

1: Unpack the JDK and Hadoop archives
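One possible way to do the unpacking, as a small sketch. The target directory /home/hadoop/software comes from the paths used later in this article; the helper name `unpack` and the location of the downloaded archives (the home directory in the usage lines) are assumptions.

```shell
# unpack ARCHIVE DEST: extract a .tar.gz into DEST, creating DEST if needed.
unpack() {
    mkdir -p "$2" && tar -xzf "$1" -C "$2"
}

# Usage (archive location is an assumption):
#   unpack ~/hadoop-2.6.4.tar.gz /home/hadoop/software
#   unpack ~/jdk1.7.0_79.tar.gz  /home/hadoop/software
```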

2: Configure environment variables

Add the following to /etc/profile:

####JAVA

export JAVA_HOME=/home/hadoop/software/jdk1.7.0_79

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

##PATH

export PATH=${JAVA_HOME}/bin:$PATH

####HADOOP

export HADOOP_HOME=/home/hadoop/software/hadoop-2.6.4

##PATH

export PATH=${JAVA_HOME}/bin:${HADOOP_HOME}/bin:$PATH

3: Reload the profile (switch to root first):

source /etc/profile

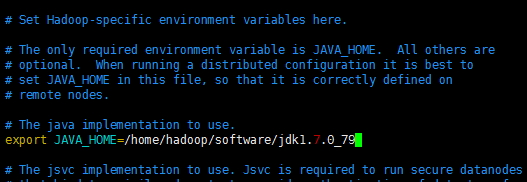
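A quick way to confirm the new PATH took effect is to ask the shell where each tool resolves. This is a sketch, and the helper name `report_tool` is hypothetical. `java -version` and `hadoop version` are the standard version commands of the two tools.

```shell
# report_tool NAME: print where NAME resolves on PATH, or fail if it does not.
report_tool() {
    if resolved=$(command -v "$1"); then
        echo "$1 -> $resolved"
    else
        echo "$1 not found on PATH" >&2
        return 1
    fi
}

# After `source /etc/profile`, both should resolve under /home/hadoop/software:
#   report_tool java   && java -version
#   report_tool hadoop && hadoop version
```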

4: Edit hadoop-env.sh

cd /home/hadoop/software/hadoop-2.6.4/etc/hadoop

In hadoop-env.sh, change JAVA_HOME to the actual JDK directory

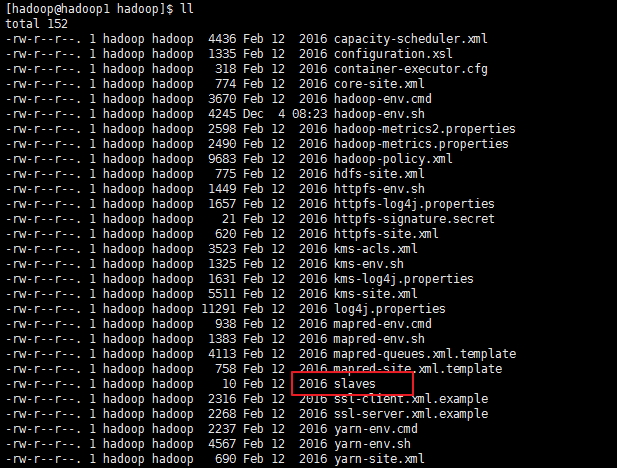
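The same edit can be made without opening an editor. The sketch below assumes hadoop-env.sh already contains a line starting with `export JAVA_HOME=` (the stock file does) and that GNU `sed` is available. The function name `set_java_home` is illustrative.

```shell
# set_java_home FILE JDK_DIR: point the `export JAVA_HOME=` line in FILE at JDK_DIR.
set_java_home() {
    # '|' is used as the sed delimiter so the slashes in the path need no escaping.
    sed -i "s|^export JAVA_HOME=.*|export JAVA_HOME=$2|" "$1"
}

# Usage:
#   set_java_home /home/hadoop/software/hadoop-2.6.4/etc/hadoop/hadoop-env.sh \
#                 /home/hadoop/software/jdk1.7.0_79
```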

5: Edit slaves

vim /home/hadoop/software/hadoop-2.6.4/etc/hadoop/slaves

Replace the contents with: hadoop1

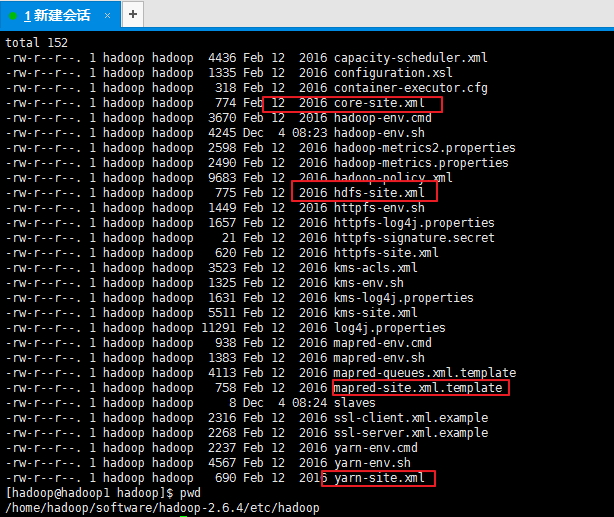

6: Edit the configuration files

core-site.xml

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/software/hadoop-2.6.4/tmp</value>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop1:9000</value>

</property>

hdfs-site.xml

<property>

<name>dfs.name.dir</name>

<value>/home/hadoop/software/hadoop-2.6.4/tmp/dfs/name</value>

</property>

<property>

<name>dfs.data.dir</name>

<value>/home/hadoop/software/hadoop-2.6.4/tmp/dfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

mapred-site.xml (rename mapred-site.xml.template to mapred-site.xml)

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

<final>true</final>

</property>

<property>

<name>mapreduce.jobtracker.http.address</name>

<value>hadoop1:50030</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop1:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop1:19888</value>

</property>

<property>

<name>mapred.job.tracker</name>

<value>http://hadoop1:9001</value>

</property>

</configuration>

yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop1</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>hadoop1:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>hadoop1:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>hadoop1:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>hadoop1:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>hadoop1:8088</value>

</property>

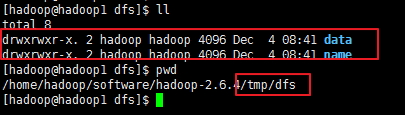

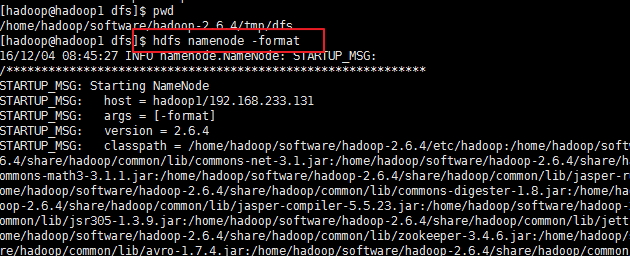

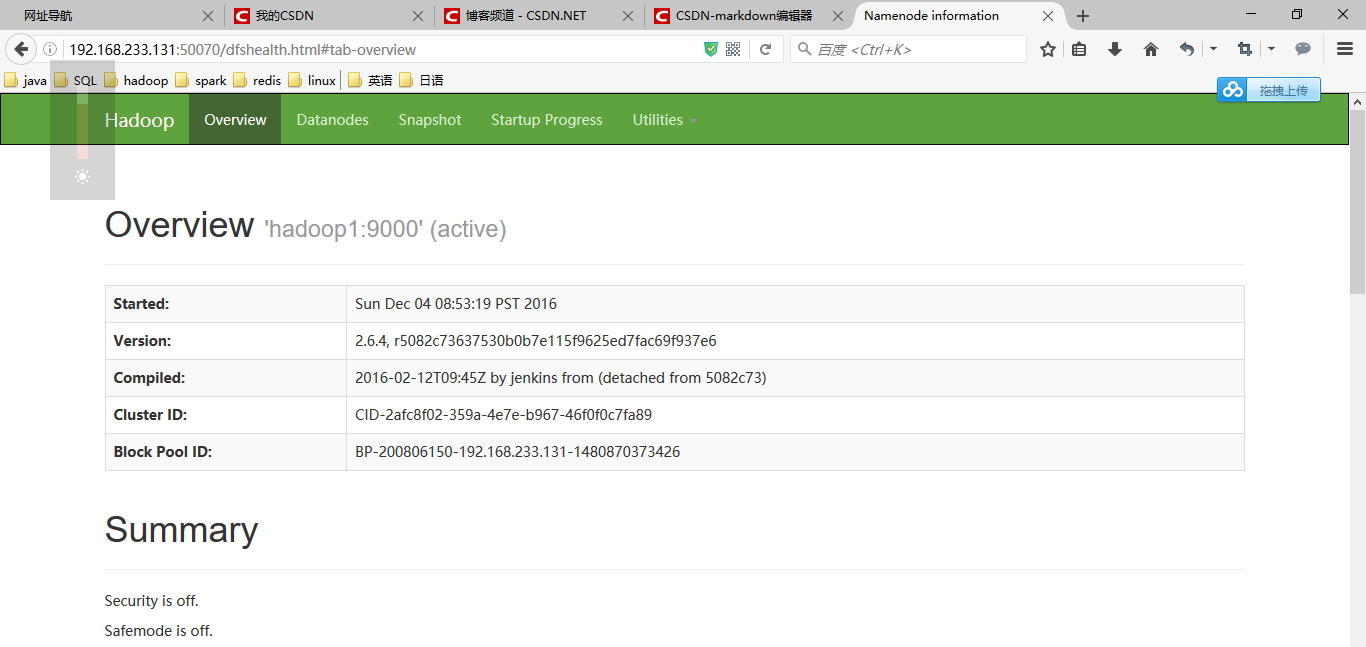

</configuration>六:格式化namenode

1: Create the directories

Note: the configuration files above already specify the NameNode path

2: Format the NameNode

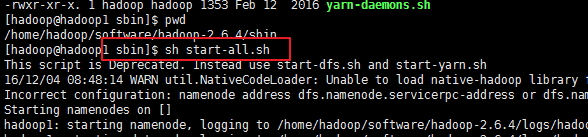
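The exact commands for these two sub-steps appear only in the screenshot, so the following is a sketch of what they usually look like rather than a transcript. The directory layout follows the dfs.name.dir and dfs.data.dir values configured above. `hdfs namenode -format` is the standard Hadoop 2.x format command. The function names are made up for illustration.

```shell
# make_dfs_dirs TMP_DIR: create the name and data directories under hadoop.tmp.dir.
make_dfs_dirs() {
    mkdir -p "$1/dfs/name" "$1/dfs/data"
}

# format_namenode: format HDFS once, before the first start.
# Formatting an existing installation discards the NameNode metadata.
format_namenode() {
    hdfs namenode -format
}

# Usage:
#   make_dfs_dirs /home/hadoop/software/hadoop-2.6.4/tmp
#   format_namenode
```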

VII: Start the services

cd /home/hadoop/software/hadoop-2.6.4/sbin

sh start-all.sh

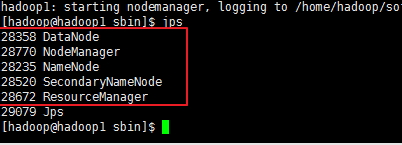

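Run jps to check the processes

All five processes (NameNode, DataNode, SecondaryNameNode, ResourceManager, NodeManager) must be running for the setup to be complete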
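The jps check can be automated with a short function that takes the jps output as text and lists whichever of the five daemons is missing. This is a sketch, and the name `missing_daemons` is hypothetical. It relies only on jps printing one `<pid> <ClassName>` pair per line.

```shell
# missing_daemons JPS_OUTPUT: print each expected daemon that is absent from the
# given jps output; the exit status is non-zero if any daemon is missing.
missing_daemons() {
    rc=0
    for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Match the class name as a whole field, so that SecondaryNameNode
        # is not mistaken for NameNode.
        if ! printf '%s\n' "$1" | awk -v want="$d" '$2 == want { found = 1 } END { exit !found }'; then
            echo "$d"
            rc=1
        fi
    done
    return $rc
}

# Usage: missing_daemons "$(jps)" && echo "all five daemons are up"
```

If a daemon is listed as missing, its log file under the Hadoop logs directory is the first place to look.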
