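**Note: the steps in this article are based entirely on 阳光奶爸's blog post; I modified and supplemented some parts based on my own understanding.**

http://blog.csdn.net/carl810224/article/details/52174412

I got the setup working myself and am recording it here as coursework notes.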

I. Preparation:

1. Set up a Hadoop HA cluster before setting up HBase HA.

2. Download the required software package:

hbase-1.2.0-cdh5.7.1.tar.gz

http://archive.cloudera.com/cdh5/cdh/5/

II. Enterprise-grade system parameter configuration

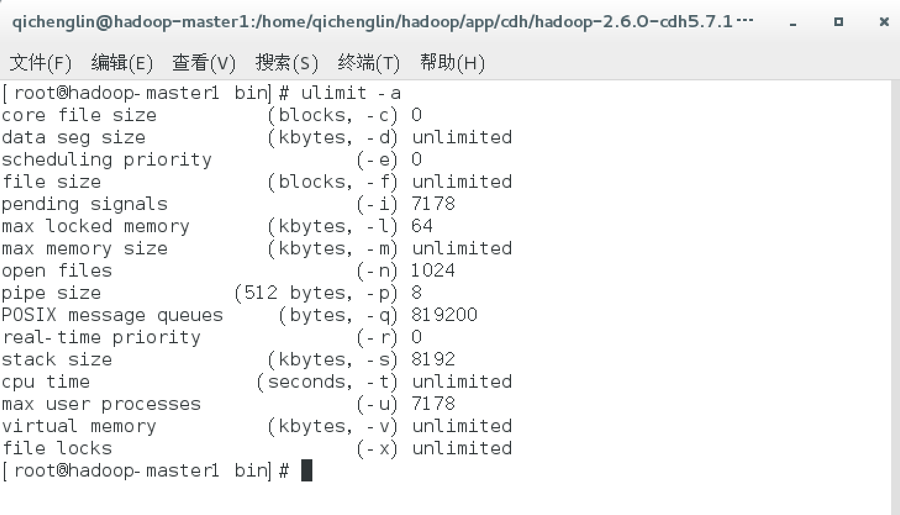

// Check the Linux system's maximum number of processes and maximum number of open files

ulimit -a
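To script this check (for example when repeating it on several nodes), Python's standard `resource` module reads the same soft/hard limits for the current process. A minimal sketch, assuming the targets are the 50000 processes and 25535 open files used in limits.conf in this article; `meets_target` and `check_limits` are hypothetical helper names, and the result reflects whichever user runs the script.

```python
import resource

# Targets from the limits.conf entries in this article: (resource id, minimum value).
TARGETS = {
    "nofile": (resource.RLIMIT_NOFILE, 25535),
    "nproc": (resource.RLIMIT_NPROC, 50000),
}


def meets_target(limit: int, target: int) -> bool:
    """True if the limit is unlimited or at least `target`."""
    return limit == resource.RLIM_INFINITY or limit >= target


def check_limits() -> dict:
    """Return {name: (soft, hard, ok)} for the process running this script."""
    report = {}
    for name, (res, target) in TARGETS.items():
        soft, hard = resource.getrlimit(res)
        ok = meets_target(soft, target) and meets_target(hard, target)
        report[name] = (soft, hard, ok)
    return report


if __name__ == "__main__":
    for name, (soft, hard, ok) in check_limits().items():
        print(f"{name}: soft={soft} hard={hard} -> {'OK' if ok else 'too low'}")
```

Run it as the `hadoop` user from a freshly opened login shell, since limits.conf changes only apply to new sessions.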

// Set the maximum number of processes and open files (log in to the shell again afterwards for the change to take effect)

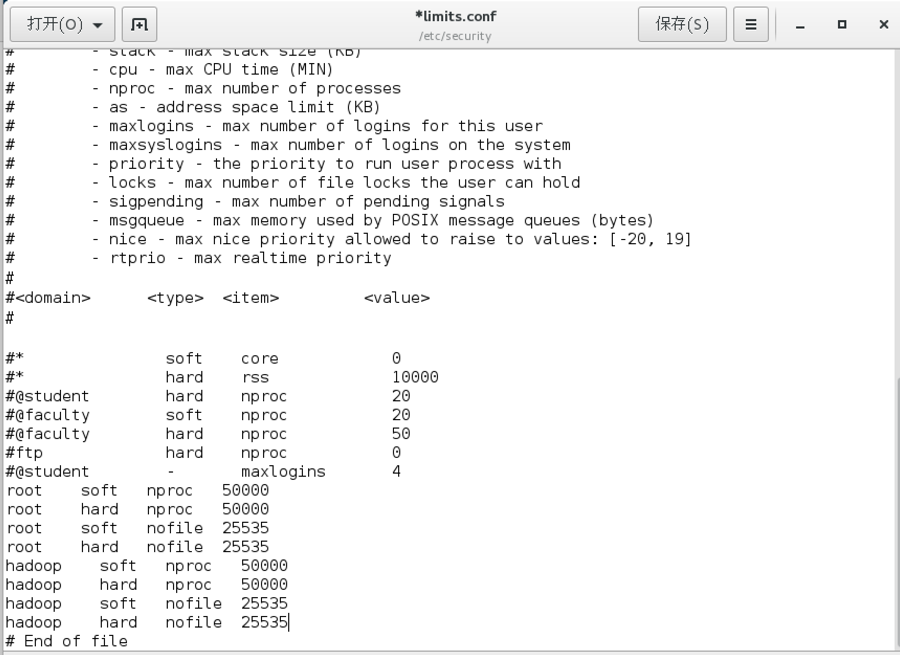

gedit /etc/security/limits.conf

root soft nproc 50000

root hard nproc 50000

root soft nofile 25535

root hard nofile 25535

hadoop soft nproc 50000

hadoop hard nproc 50000

hadoop soft nofile 25535

hadoop hard nofile 25535
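A typo in limits.conf fails silently, so it can be worth checking the file itself. A minimal sketch that parses the simple four-column `<domain> <type> <item> <value>` form used above and lists entries that are missing or set too low; `parse_limits` and `missing_entries` are hypothetical names, and the parser does not expand the `-` type (soft and hard at once) or `@group`/`*` domains.

```python
def parse_limits(text: str) -> dict:
    """Map (domain, type, item) -> value string for each entry in limits.conf text."""
    entries = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments; blank lines yield no fields
        fields = line.split()
        if len(fields) == 4:  # <domain> <type> <item> <value>; other lines are skipped
            domain, kind, item, value = fields
            entries[(domain, kind, item)] = value
    return entries


def missing_entries(text: str, users, wanted: dict) -> list:
    """List (user, type, item) triples that are absent or below the wanted value."""
    entries = parse_limits(text)
    problems = []
    for user in users:
        for item, target in wanted.items():
            for kind in ("soft", "hard"):
                value = entries.get((user, kind, item))
                if value in ("unlimited", "-1"):
                    continue
                if value is None or not value.isdigit() or int(value) < target:
                    problems.append((user, kind, item))
    return problems


if __name__ == "__main__":
    with open("/etc/security/limits.conf", encoding="utf-8") as f:
        conf = f.read()
    print(missing_entries(conf, ["root", "hadoop"], {"nproc": 50000, "nofile": 25535}))
```

An empty list means every soft and hard entry for `root` and `hadoop` is present and large enough.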

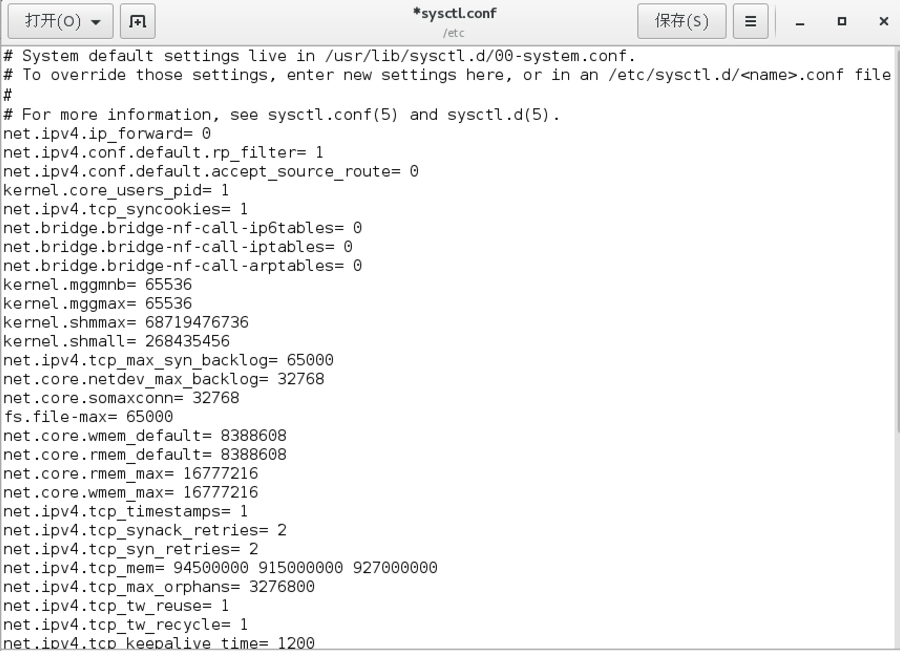

// Tune Linux kernel parameters

gedit /etc/sysctl.conf

net.ipv4.ip_forward= 0

net.ipv4.conf.default.rp_filter= 1

net.ipv4.conf.default.accept_source_route= 0

kernel.core_uses_pid= 1

net.ipv4.tcp_syncookies= 1

net.bridge.bridge-nf-call-ip6tables= 0

net.bridge.bridge-nf-call-iptables= 0

net.bridge.bridge-nf-call-arptables= 0

kernel.msgmnb= 65536

kernel.msgmax= 65536

kernel.shmmax= 68719476736

kernel.shmall= 268435456

net.ipv4.tcp_max_syn_backlog= 65000

net.core.netdev_max_backlog= 32768

net.core.somaxconn= 32768

fs.file-max= 65000

net.core.wmem_default= 8388608

net.core.rmem_default= 8388608

net.core.rmem_max= 16777216

net.core.wmem_max= 16777216

net.ipv4.tcp_timestamps= 1

net.ipv4.tcp_synack_retries= 2

net.ipv4.tcp_syn_retries= 2

net.ipv4.tcp_mem= 94500000 915000000 927000000

net.ipv4.tcp_max_orphans= 3276800

net.ipv4.tcp_tw_reuse= 1

net.ipv4.tcp_tw_recycle= 1

net.ipv4.tcp_keepalive_time= 1200

net.ipv4.tcp_syncookies= 1

net.ipv4.tcp_fin_timeout= 10

net.ipv4.tcp_keepalive_intvl= 15

net.ipv4.tcp_keepalive_probes= 3

net.ipv4.ip_local_port_range= 1024 65535

# em1 is the network interface name; replace it with the name of your own NIC (see `ip addr`)

net.ipv4.conf.em1.send_redirects= 0

net.ipv4.conf.lo.send_redirects= 0

net.ipv4.conf.default.send_redirects= 0

net.ipv4.conf.all.send_redirects= 0

net.ipv4.icmp_echo_ignore_broadcasts= 1

net.ipv4.conf.em1.accept_source_route= 0

net.ipv4.conf.lo.accept_source_route= 0

net.ipv4.conf.default.accept_source_route= 0

net.ipv4.conf.all.accept_source_route= 0

net.ipv4.icmp_ignore_bogus_error_responses= 1

kernel.core_pattern= /tmp/core

vm.overcommit_memory= 1

// Apply the kernel parameters

sysctl -p
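`sysctl -p` reports keys it cannot find, but the messages are easy to miss among the echoed settings, and the listing above also sets some keys twice (for example `net.ipv4.tcp_syncookies`). A minimal sketch that lists duplicate keys and keys with no `/proc/sys` entry on the current host; the function names are hypothetical, and the key-to-path mapping is naive (interface names containing dots, such as VLANs, are not handled).

```python
from collections import Counter
from pathlib import Path


def parse_sysctl(text: str) -> list:
    """Return (key, value) pairs from sysctl.conf text, in file order."""
    pairs = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", ";")):
            continue
        key, sep, value = line.partition("=")
        if sep:
            # Normalise whitespace so "a= 1  2" and "a = 1 2" compare equal.
            pairs.append((key.strip(), " ".join(value.split())))
    return pairs


def duplicate_keys(pairs: list) -> list:
    """Keys set more than once; sysctl -p applies lines in order, so the last one wins."""
    counts = Counter(key for key, _ in pairs)
    return sorted(key for key, n in counts.items() if n > 1)


def proc_path(key: str) -> Path:
    """Map a key to its /proc/sys file, e.g. vm.overcommit_memory -> /proc/sys/vm/overcommit_memory."""
    return Path("/proc/sys") / key.replace(".", "/")


def unknown_keys(pairs: list) -> list:
    """Keys with no /proc/sys entry on this host: typos, unloaded modules, wrong NIC name."""
    return [key for key, _ in pairs if not proc_path(key).exists()]


if __name__ == "__main__":
    pairs = parse_sysctl(Path("/etc/sysctl.conf").read_text())
    print("duplicates:", duplicate_keys(pairs))
    print("unknown on this host:", unknown_keys(pairs))
```

Keys such as `net.bridge.*` typically only appear once the bridge netfilter module is loaded, so they may show up as unknown on hosts without it.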

III. HBase HA configuration

// Go to HBase's conf directory

cd /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/conf/

Edit hbase-env.sh

gedit hbase-env.sh

# Configure the JDK installation path

export JAVA_HOME=/home/qichenglin/hadoop/app/jdk1.7.0_79

# Configure the Hadoop installation path

export HADOOP_HOME=/home/qichenglin/hadoop/app/cdh/hadoop-2.6.0-cdh5.7.1

# Set HBase's log directory

export HBASE_LOG_DIR=${HBASE_HOME}/logs

# Set HBase's pid directory

export HBASE_PID_DIR=${HBASE_HOME}/pids

# Use a standalone ZooKeeper cluster instead of one managed by HBase

export HBASE_MANAGES_ZK=false

# Tuning options

# Set the HBase heap size (MB)

export HBASE_HEAPSIZE=1024

# Set the maximum heap available to HMaster

export HBASE_MASTER_OPTS="-Xmx512m"

# Set the maximum heap available to HRegionServer

export HBASE_REGIONSERVER_OPTS="-Xmx1024m"

// Configure hbase-site.xml

gedit hbase-site.xml

<configuration>

<!-- Root directory shared by all HRegionServers -->

<property>

<name>hbase.rootdir</name>

<value>hdfs://mycluster/hbase</value>

</property>

<!-- HMaster RPC port -->

<property>

<name>hbase.master.port</name>

<value>16000</value>

</property>

<!-- HMaster HTTP (web UI) port -->

<property>

<name>hbase.master.info.port</name>

<value>16010</value>

</property>

<!-- Local directory for temporary (cache) files -->

<property>

<name>hbase.tmp.dir</name>

<value>/home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/tmp</value>

</property>

<!-- Enable fully distributed mode -->

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<!-- ZooKeeper quorum hosts -->

<property>

<name>hbase.zookeeper.quorum</name>

<value>hadoop-slave1,hadoop-slave2,hadoop-slave3</value>

</property>

<!-- ZooKeeper client port -->

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<!-- ZooKeeper data directory; must match the setting on the ZooKeeper cluster -->

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/qichenglin/hadoop/app/cdh/zookeeper-3.4.5-cdh5.7.1/data</value>

</property>

<!-- ========== Tuning options below ========== -->

<!-- Disable distributed log splitting -->

<property>

<name>hbase.master.distributed.log.splitting</name>

<value>false</value>

</property>

<!-- Number of rows the HBase client fetches per scanner RPC -->

<property>

<name>hbase.client.scanner.caching</name>

<value>2000</value>

</property>

<!-- Maximum store file size before an HRegion splits (10 GB) -->

<property>

<name>hbase.hregion.max.filesize</name>

<value>10737418240</value>

</property>

<!-- Maximum number of regions per HRegionServer; no further splits beyond this -->

<property>

<name>hbase.regionserver.regionSplitLimit</name>

<value>2000</value>

</property>

<!-- Start a compaction once the number of StoreFiles in a store exceeds this value -->

<property>

<name>hbase.hstore.compactionThreshold</name>

<value>6</value>

</property>

<!-- Block writes to a region once one of its stores reaches this many StoreFiles, until a compaction completes -->

<property>

<name>hbase.hstore.blockingStoreFiles</name>

<value>14</value>

</property>

<!-- Block all writes to a region once its memstore reaches this multiple of the flush size (self-protection) -->

<property>

<name>hbase.hregion.memstore.block.multiplier</name>

<value>20</value>

</property>

<!-- Sleep interval (ms) between work checks for service threads -->

<property>

<name>hbase.server.thread.wakefrequency</name>

<value>500</value>

</property>

<!-- Maximum number of concurrent ZooKeeper client connections -->

<property>

<name>hbase.zookeeper.property.maxClientCnxns</name>

<value>2000</value>

</property>

<!-- Tune the following according to your workload -->

<property>

<name>hbase.regionserver.global.memstore.lowerLimit</name>

<value>0.3</value>

</property>

<property>

<name>hbase.regionserver.global.memstore.upperLimit</name>

<value>0.39</value>

</property>

<property>

<name>hfile.block.cache.size</name>

<value>0.4</value>

</property>

<!-- Number of RPC handler threads per RegionServer -->

<property>

<name>hbase.regionserver.handler.count</name>

<value>300</value>

</property>

<!-- Maximum number of client retries -->

<property>

<name>hbase.client.retries.number</name>

<value>5</value>

</property>

<!-- Client sleep time between retries (ms) -->

<property>

<name>hbase.client.pause</name>

<value>100</value>

</property>

</configuration>

// Configure regionservers

gedit regionservers

hadoop-slave1

hadoop-slave2

hadoop-slave3

// Create and configure the backup-masters file

gedit backup-masters

hadoop-master2
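Before copying the conf directory to the other nodes, it can help to sanity-check the heap split in hbase-site.xml. As far as I know, HBase 1.x refuses to start when the memstore upper limit plus the block cache fraction exceeds 0.8 of the heap, and it reads the block cache fraction from `hfile.block.cache.size`. A minimal sketch under those assumptions; `read_properties` and `heap_problems` are hypothetical names, and the 0.4 fallbacks are assumed HBase 1.x defaults.

```python
import xml.etree.ElementTree as ET

# Assumed HBase 1.x limit: memstore + block cache may use at most 80% of the heap.
MAX_COMBINED = 0.8


def read_properties(data) -> dict:
    """Return {name: value} from a Hadoop-style <configuration> document (bytes or str)."""
    root = ET.fromstring(data)
    props = {}
    for prop in root.findall("property"):
        name = (prop.findtext("name") or "").strip()
        if name:
            props[name] = (prop.findtext("value") or "").strip()
    return props


def heap_problems(props: dict) -> list:
    """Describe problems with the memstore / block cache heap split (empty list = OK)."""
    problems = []
    # Assumed defaults (0.4 each) when a key is absent.
    upper = float(props.get("hbase.regionserver.global.memstore.upperLimit", "0.4"))
    cache = float(props.get("hfile.block.cache.size", "0.4"))
    if upper + cache > MAX_COMBINED:
        problems.append(f"memstore upperLimit {upper} + block cache {cache} exceeds {MAX_COMBINED}")
    lower = props.get("hbase.regionserver.global.memstore.lowerLimit")
    if lower is not None and float(lower) >= upper:
        problems.append(f"memstore lowerLimit {lower} is not below upperLimit {upper}")
    if "hbase.block.cache.size" in props:
        # Assumption: only hfile.block.cache.size is read for the block cache fraction.
        problems.append("hbase.block.cache.size is ignored; use hfile.block.cache.size")
    return problems


if __name__ == "__main__":
    with open("hbase-site.xml", "rb") as f:  # bytes, so the XML encoding declaration is honoured
        print(heap_problems(read_properties(f.read())) or "heap split looks OK")
```

With the values used here (0.39 + 0.4 = 0.79) the check passes, but only just: raising either value by a little more than 0.01 would push the sum over 0.8.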

// Create HBase's temporary (cache) directory

cd /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/

mkdir tmp

// Create HBase's log directory

mkdir logs

// Create HBase's pid directory

mkdir pids

// Copy the HBase directory to the other cluster nodes

scp -r /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/ hadoop-master2:/home/qichenglin/hadoop/app/cdh/

scp -r /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/ hadoop-slave1:/home/qichenglin/hadoop/app/cdh/

scp -r /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/ hadoop-slave2:/home/qichenglin/hadoop/app/cdh/

scp -r /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/ hadoop-slave3:/home/qichenglin/hadoop/app/cdh/
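The four `scp` commands differ only in the host name, so they can be generated in a loop. A minimal sketch, assuming passwordless SSH between the nodes (already needed for the Hadoop HA setup); `scp_commands` and `sync` are hypothetical helper names, and the copy only runs when the file is executed as a script.

```python
import subprocess

SRC = "/home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/"
DEST_PARENT = "/home/qichenglin/hadoop/app/cdh/"
HOSTS = ["hadoop-master2", "hadoop-slave1", "hadoop-slave2", "hadoop-slave3"]


def scp_commands(src: str, dest_parent: str, hosts: list) -> list:
    """Build one `scp -r` argument list per host, mirroring the commands above."""
    return [["scp", "-r", src, f"{host}:{dest_parent}"] for host in hosts]


def sync(hosts=HOSTS) -> None:
    """Copy the HBase directory to each host, stopping at the first failure."""
    for cmd in scp_commands(SRC, DEST_PARENT, hosts):
        print("running:", " ".join(cmd))
        subprocess.run(cmd, check=True)  # raises CalledProcessError if scp fails


if __name__ == "__main__":
    sync()
```

Passing argument lists (rather than one shell string) avoids quoting problems if a path ever contains spaces.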

// Run on every node the directory was copied to

chmod -R 777 /home/qichenglin/hadoop/app/cdh/hadoop-2.6.0-cdh5.7.1/

// Update the user environment variables on every cluster node

gedit ~/.bashrc

export HBASE_HOME=/home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1

export PATH=$PATH:$HBASE_HOME/bin

source ~/.bashrc
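A quick way to confirm the `.bashrc` change took effect is to check that `$HBASE_HOME/bin` is on `PATH` and that `hbase` resolves. A minimal sketch; `hbase_bin_on_path` is a hypothetical helper, and it should be run from a shell where `~/.bashrc` has already been sourced.

```python
import os
import shutil


def hbase_bin_on_path(env: dict) -> bool:
    """True if $HBASE_HOME/bin is one of the PATH entries in `env`."""
    home = env.get("HBASE_HOME")
    if not home:
        return False
    bin_dir = os.path.normpath(os.path.join(home, "bin"))
    entries = env.get("PATH", "").split(os.pathsep)
    return any(os.path.normpath(e) == bin_dir for e in entries if e)


if __name__ == "__main__":
    print("HBASE_HOME/bin on PATH:", hbase_bin_on_path(dict(os.environ)))
    print("hbase resolves to:", shutil.which("hbase"))
```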

// Disable HBase's slf4j-log4j12-1.7.5.jar (by renaming it) to resolve the SLF4J binding conflict between HBase and Hadoop

cd /home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/lib

mv slf4j-log4j12-1.7.5.jar slf4j-log4j12-1.7.5.jar.bk
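After the rename, the lib directory should contain no active `slf4j-log4j12-*.jar`. A minimal sketch that lists any still-active binding jars; `active_bindings` is a hypothetical helper, and the regular expression assumes the `slf4j-log4j12-<version>.jar` naming seen above.

```python
import os
import re

# Matches SLF4J -> log4j 1.2 binding jars such as slf4j-log4j12-1.7.5.jar.
BINDING = re.compile(r"^slf4j-log4j12-[\d.]+\.jar$")


def active_bindings(filenames) -> list:
    """Return binding jars still on the classpath (a renamed *.jar.bk no longer matches)."""
    return sorted(name for name in filenames if BINDING.match(name))


if __name__ == "__main__":
    lib = "/home/qichenglin/hadoop/app/cdh/hbase-1.2.0-cdh5.7.1/lib"
    print("still active:", active_bindings(os.listdir(lib)) or "none")
```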

IV. Starting the cluster

// Start the ZooKeeper cluster (run on slave1, slave2 and slave3)

zkServer.sh start

Note: this command starts QuorumPeerMain on each of slave1/slave2/slave3.
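To confirm each ZooKeeper server answers before starting HDFS and HBase, one can send ZooKeeper's `ruok` four-letter command to port 2181; a healthy server replies `imok` and closes the connection. A minimal sketch using only the standard `socket` module; `four_letter_word` is a hypothetical helper. These commands are enabled by default in ZooKeeper 3.4.5, while newer releases may require them to be listed in `4lw.commands.whitelist`.

```python
import socket


def four_letter_word(host: str, cmd: str = "ruok", port: int = 2181, timeout: float = 3.0):
    """Send a ZooKeeper four-letter command and return the reply text, or None on failure."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(cmd.encode("ascii"))
            chunks = []
            while True:
                data = sock.recv(4096)
                if not data:  # the server closes the connection after replying
                    break
                chunks.append(data)
            return b"".join(chunks).decode("ascii", errors="replace")
    except OSError:  # refused, unreachable, or timed out
        return None


if __name__ == "__main__":
    for host in ("hadoop-slave1", "hadoop-slave2", "hadoop-slave3"):
        # Expect "imok"; send "stat" instead to see whether a node is leader or follower.
        print(host, four_letter_word(host))
```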

// Start HDFS (run on master1)

start-dfs.sh

Note: this command starts a NameNode and ZKFC on master1 and master2, and a DataNode and JournalNode on each of slave1/slave2/slave3.

// Start YARN (run on master2)

start-yarn.sh

Note: this command starts the ResourceManager on master2 and a NodeManager on each of slave1/slave2/slave3.

// Start YARN's second ResourceManager (run on master1, for failover)

yarn-daemon.sh start resourcemanager

Note: this command starts a ResourceManager on master1.

// Start HBase (run on master1)

start-hbase.sh

Note: this command starts an HMaster on master1 and master2, and an HRegionServer on each of slave1/slave2/slave3.
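The notes above describe which Java daemons each node should be running, which makes a `jps`-based check easy to script. A minimal sketch, assuming passwordless SSH and that `jps` is on the `PATH` of non-interactive SSH sessions; the `EXPECTED` table is transcribed from the notes in this section and the helper names are hypothetical.

```python
import subprocess

# Expected daemons per host, taken from the notes in this section (adjust to your layout).
MASTER = {"NameNode", "DFSZKFailoverController", "ResourceManager", "HMaster"}
SLAVE = {"QuorumPeerMain", "DataNode", "JournalNode", "NodeManager", "HRegionServer"}
EXPECTED = {
    "hadoop-master1": MASTER,
    "hadoop-master2": MASTER,
    "hadoop-slave1": SLAVE,
    "hadoop-slave2": SLAVE,
    "hadoop-slave3": SLAVE,
}


def parse_jps(output: str) -> set:
    """Collect main-class names from `jps` output lines such as '2345 HMaster'."""
    return {parts[1] for parts in (line.split() for line in output.splitlines()) if len(parts) >= 2}


def missing_daemons(host: str, jps_output: str) -> set:
    """Expected daemons for `host` that do not appear in its jps output."""
    return EXPECTED[host] - parse_jps(jps_output)


def remote_jps(host: str) -> str:
    """Run jps on `host` over SSH and return its stdout."""
    result = subprocess.run(["ssh", host, "jps"], capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    for host in EXPECTED:
        missing = missing_daemons(host, remote_jps(host))
        print(host, "OK" if not missing else f"missing: {sorted(missing)}")
```

ZKFC shows up in `jps` under its class name, `DFSZKFailoverController`.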

V. Testing

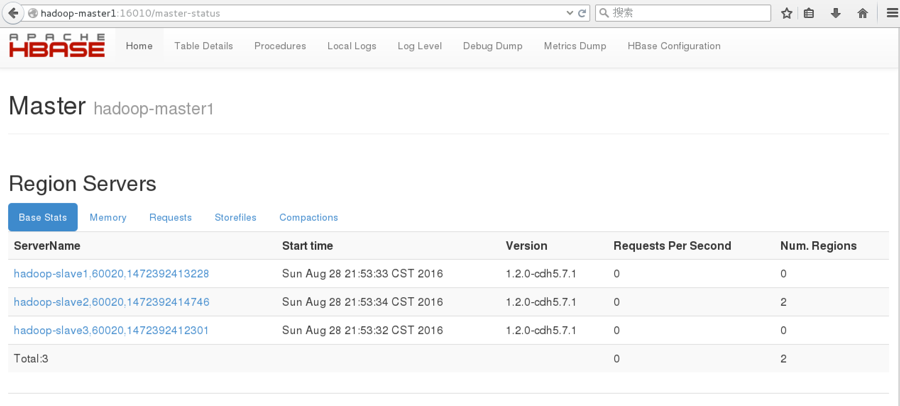

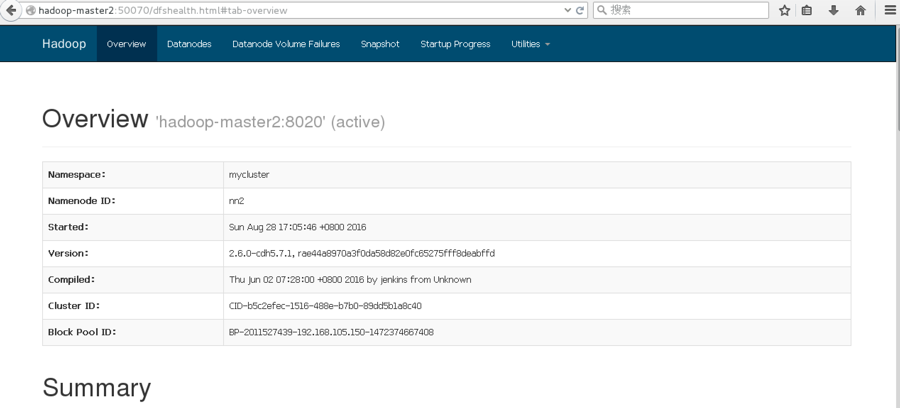

(1) Web UI

The figure below shows http://hadoop-master1:16010, where the active Master's status is visible:

The figure below shows http://hadoop-master2:16010, where the backup Master's status is visible:

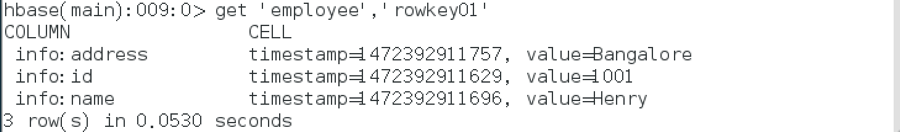
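The same two pages can be probed from a script, for example after a restart, without opening a browser. A minimal sketch using only `urllib`; `master_ui_urls` and `probe` are hypothetical helpers, the port is the `hbase.master.info.port` value from hbase-site.xml, and proxy environment variables are deliberately bypassed on the assumption that the cluster hostnames only resolve inside the LAN.

```python
import urllib.error
import urllib.request

MASTERS = ["hadoop-master1", "hadoop-master2"]
INFO_PORT = 16010  # hbase.master.info.port in hbase-site.xml

# Ignore proxy settings: cluster hostnames are usually only resolvable inside the LAN.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def master_ui_urls(hosts, port: int = INFO_PORT) -> list:
    """Build the HMaster web UI URL for each host."""
    return [f"http://{host}:{port}/" for host in hosts]


def probe(url: str, timeout: float = 5.0):
    """Return the HTTP status code, or None if the UI cannot be reached."""
    try:
        with _OPENER.open(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code
    except OSError:  # URLError, refused connection, timeout
        return None


if __name__ == "__main__":
    for url in master_ui_urls(MASTERS):
        print(url, probe(url))
```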

(2) Shell operations

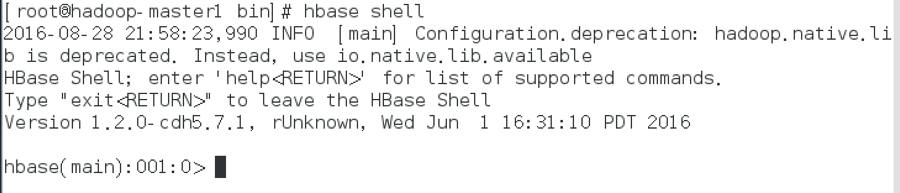

Connect with the HBase shell client

hbase shell

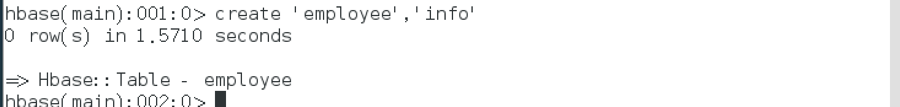

Create a table named employee with column family info

create 'employee','info'

List the tables in HBase to verify that employee was created

list

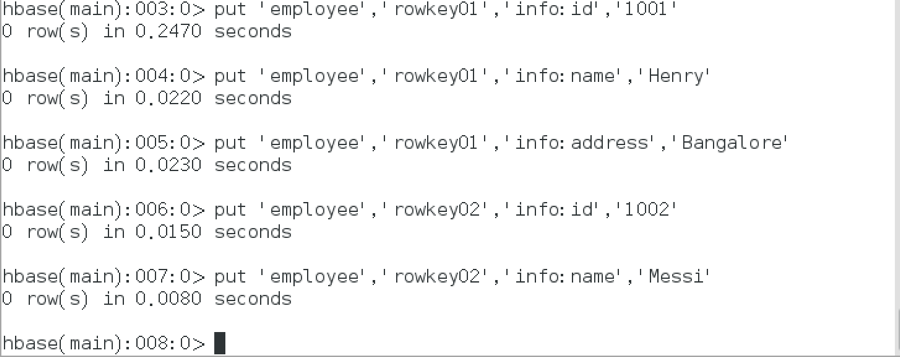

Insert test data into employee

put 'employee','rowkey01','info:id','1001'

put 'employee','rowkey01','info:name','Henry'

put 'employee','rowkey01','info:address','Bangalore'

put 'employee','rowkey02','info:id','1002'

put 'employee','rowkey02','info:name','Messi'

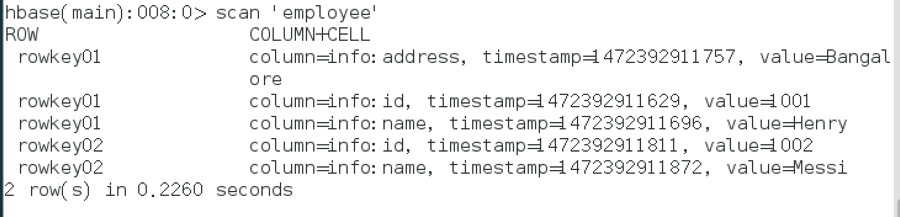

Scan all rows in employee

scan 'employee'

Get the row with row key rowkey01 from employee

get 'employee','rowkey01'

Disable table employee, then drop it

disable 'employee'

drop 'employee'
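The HBase shell can also read commands from a file (`hbase shell <file>`), which turns the steps above into a repeatable smoke test. A minimal sketch that writes them to a script; the file name `employee_smoke_test.rb` is hypothetical, the trailing `exit` is there so the shell returns instead of staying at the interactive prompt, and `render_script` rejects typographic quotes because the JRuby-based shell only accepts plain ASCII quotes.

```python
from pathlib import Path

# The shell session from this section, in order, ending with `exit`.
COMMANDS = [
    "create 'employee','info'",
    "list",
    "put 'employee','rowkey01','info:id','1001'",
    "put 'employee','rowkey01','info:name','Henry'",
    "put 'employee','rowkey01','info:address','Bangalore'",
    "put 'employee','rowkey02','info:id','1002'",
    "put 'employee','rowkey02','info:name','Messi'",
    "scan 'employee'",
    "get 'employee','rowkey01'",
    "disable 'employee'",
    "drop 'employee'",
    "exit",
]


def render_script(commands=COMMANDS) -> str:
    """Join commands into a script, refusing typographic quotes the shell cannot parse."""
    bad = [c for c in commands if any(q in c for q in "\u2018\u2019\u201c\u201d")]
    if bad:
        raise ValueError(f"typographic quotes in: {bad}")
    return "\n".join(commands) + "\n"


if __name__ == "__main__":
    path = Path("employee_smoke_test.rb")  # hypothetical file name
    path.write_text(render_script(), encoding="utf-8")
    print(f"run with: hbase shell {path}")
```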
