环境准备

安装hadoop需要jdk的环境,因此先安装jdk,因为环境变量配置是遍历 /etc/profile.d/**.sh文件,因此在此路径下面创建my_env.sh文件,把我们的环境变量全部配置到这里,包括hadoop的环境变量。

关闭防火墙,防止不必要的异常发生

安装jdk,关闭防火墙

yum install -y epel-release

关闭防火墙,和防火墙启动自启

[root@hadoop100 ~]# systemctl stop firewalld

[root@hadoop100 ~]# systemctl disable firewalld.service

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@hadoop100 ~]# reboot

#查看本机自带的jdk

[root@hadoop102 software]# rpm -qa |grep -i java

#删除本机自带的jdk

[root@hadoop102 software]# rpm -qa |grep -i java | xargs -n1 rpm -e --nodeps

#下载jdk

[root@hadoop102 software]# 上传jdk

#配置环境变量

[root@hadoop102 module]# cd /etc/profile.d/

[root@hadoop102 profile.d]# vim my_env.sh

#JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_251

export PATH=$PATH:$JAVA_HOME/bin

保存退出

[root@hadoop102 profile.d]# source /etc/profile

[root@hadoop102 profile.d]# java -version

java version "1.8.0_251"

Java(TM) SE Runtime Environment (build 1.8.0_251-b08)

Java HotSpot(TM) 64-Bit Server VM (build 25.251-b08, mixed mode)

安装成功

安装hadoop,配置hadoop环境变量

[root@hadoop102 software]# wget http://archive.apache.org/dist/hadoop/core/hadoop-3.1.3/hadoop-3.1.3.tar.gz

##解压

[root@hadoop102 software]# tar -zxvf hadoop-3.1.3.tar.gz -C /opt/module/

##配置环境变量

[root@hadoop102 software]# vim /etc/profile.d/my_env.sh

#JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_251

export PATH=$PATH:$JAVA_HOME/bin

export HADOOP_HOME=/opt/module/jdk1.8.0_251

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbinsource /etc/profile安装jdk,hadoop之后,copy到hadoop103和104

[root@hadoop102 module]# scp -r jdk1.8.0_251/ root@hadoop103:/opt/module/

[root@hadoop102 module]# scp -r jdk1.8.0_251/ root@hadoop104:/opt/module/

[root@hadoop102 module]# scp -r hadoop-3.1.3/ root@hadoop103:/opt/module/

[root@hadoop102 module]# scp -r hadoop-3.1.3/ root@hadoop104:/opt/module/

创建快速同步工具xsync

#创建xsync文件

[root@hadoop102 ~]# cd /usr/bin

[root@hadoop102 bin]# vim xsync#!/bin/bash

#1.判断参数个数

if [ $# -lt 1 ]

then

echo Not Enough Arguement!

exit;

fi

#2.遍历集群所有机器

for host in hadoop102 hadoop103 hadoop104

do

echo ================== $host ===============

#3.遍历所有目录 挨个发送

for file in $@

do

#4.判断文件是否存在

if [ -e $file ]

then

#5. 获取父目录

pdir=$(cd -P $(dirname $file); pwd)

#6. 获取当前文件名称

fname=$(basename $file)

ssh $host "mkdir -p $pdir"

rsync -av $pdir/$fname $host:$pdir

else

echo $file does not exists!

fi

done

done

[root@hadoop102 bin]# chmod 777 xsync

分发

[root@hadoop102 opt]# xsync /etc/profile.d/my_env.sh

ssh免密登录

[root@hadoop102 .ssh]# ssh-keygen -t rsa

[root@hadoop102 .ssh]# ssh-copy-id hadoop102

[root@hadoop102 .ssh]# ssh-copy-id hadoop103

[root@hadoop102 .ssh]# ssh-copy-id hadoop104

[root@hadoop103 .ssh]# ssh-keygen -t rsa

[root@hadoop103 .ssh]# ssh-copy-id hadoop102

[root@hadoop103 .ssh]# ssh-copy-id hadoop103

[root@hadoop103 .ssh]# ssh-copy-id hadoop104

[root@hadoop104 .ssh]# ssh-keygen -t rsa

[root@hadoop104 .ssh]# ssh-copy-id hadoop102

[root@hadoop104 .ssh]# ssh-copy-id hadoop103

[root@hadoop104 .ssh]# ssh-copy-id hadoop104

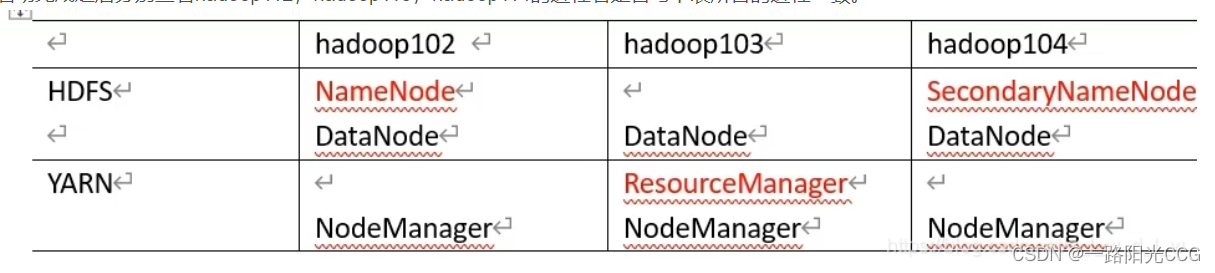

集群配置

配置集群需要修改几个文件

- core-site.xml 主要配置namenode的地址,以及数据存储目录

- hdfs-site.xml 配置namenode.http-address和secondary.http-address,这里配置的hadoop104,因此当启动集群的时候,hadoop102的jps有NameNode,

hadoop104的jps会有SecondaryNameNode - yarn-site.xml 配置yarn的namemanager,这里配置的是hadoop103,因此hadoop103还需要启动start-yarn.sh

- mapred-site.xml

修改core-site.xml

[root@hadoop102 hadoop]# cd /opt/module/hadoop-3.1.3/etc/hadoop

[root@hadoop102 hadoop]# vim core-site.xml

<configuration>

<!-- 制定NameNode地址 -->

<property>

<name>fs.default.name</name>

<value>hdfs://hadoop102:8020</value>

</property>

<!-- 制定hadoop数据的存储目录 -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop-3.1.3/data</value>

</property>

</configuration>

修改 hdfs-site.xml

[root@hadoop102 hadoop]# vim hdfs-site.xml <configuration>

<!-- nn web 端访问地址 -->

<property>

<name>dfs.namenode.http-address</name>

<value>hadoop102:9870</value>

</property>

<!-- 2nn web 端访问地址 -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop104:9868</value>

</property>

</configuration>

修改yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop103</value>

</property>

<property>

<name>yarn.nodemanager.env-whitelist</name>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME,SPARK_HOME</value>

</property>

<!-- 开启日志聚集功能 -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<!-- 设置日志聚集服务器地址 -->

<property>

<name>yarn.log.server.url</name>

<value>http://hadoop102:19888/jobhistory/logs</value>

</property>

<!-- 设置日志保留时间为7天 -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property>修改mapred-site.xml

<!-- 指定MapReduce程序运行在Yarn上 -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- 历史服务器端地址 -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop102:10020</value>

</property>

<!-- 历史服务器web端地址 -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop102:19888</value>

</property>分发

[root@hadoop102 etc]# xsync hadoop/

[root@hadoop102 etc]# vim hadoop/workers

[root@hadoop102 etc]# cat hadoop/workers

hadoop102

hadoop103

hadoop104

[root@hadoop102 etc]# xsync hadoop/

启动集群 第一次需要初始化

[root@hadoop102 etc]# hdfs namenode -format

启动集群

[root@hadoop102 hadoop-3.1.3]# sbin/start-dfs.sh

[root@hadoop102 hadoop-3.1.3]# jps

66997 Jps

66717 DataNode

66559 NameNode

如果启动的时候出现

[root@hadoop102 hadoop-3.1.3]# sbin/start-dfs.sh

Starting namenodes on [hadoop102]

ERROR: Attempting to operate on hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined. Aborting operation.

Starting datanodes

ERROR: Attempting to operate on hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined. Aborting operation.

Starting secondary namenodes [hadoop104]

ERROR: Attempting to operate on hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined. Aborting operation.

就在my_env.sh添加配置

[root@hadoop102 ~]# vim /etc/profile.d/my_env.sh

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

##分发

[root@hadoop102 ~]# xsync /etc/profile.d/my_env.sh

================== hadoop102 ===============

sending incremental file list

sent 48 bytes received 12 bytes 120.00 bytes/sec

total size is 377 speedup is 6.28

================== hadoop103 ===============

sending incremental file list

my_env.sh

sent 472 bytes received 41 bytes 1,026.00 bytes/sec

total size is 377 speedup is 0.73

================== hadoop104 ===============

sending incremental file list

my_env.sh

sent 472 bytes received 41 bytes 1,026.00 bytes/sec

total size is 377 speedup is 0.73

##

[root@hadoop102 ~]# source /etc/profile

在hadoop103下

[root@hadoop103 ~]# source /etc/profile

在hadoop104下

[root@hadoop104 ~]# source /etc/profile

启动yarn

在hadoop103下启动

[root@hadoop103 sbin]# start-yarn.sh

Starting resourcemanager

上一次登录:六 10月 15 14:03:52 CST 2022从 192.168.10.1pts/0 上

Starting nodemanagers

上一次登录:六 10月 15 14:11:47 CST 2022pts/0 上

[root@hadoop103 sbin]# jps

2784 Jps

2437 NodeManager

2061 DataNode

2285 ResourceManager

[root@hadoop103 sbin]#

看一下hadoop104下的

[root@hadoop104 ~]# jps

2177 SecondaryNameNode

2212 Jps

2063 DataNode

[root@hadoop104 ~]#

启动完成看是否一致jps

jpsall

[root@hadoop102 bin]# vim jpsall

#!/bin/bash

for host in hadoop102 hadoop103 hadoop104

do

echo ================= $host =================

ssh $host jps

done

[root@hadoop102 bin]# chmod 777 jpsall

[root@hadoop102 bin]# jpsall

================= hadoop102 =================

8544 DataNode

9042 JobHistoryServer

8403 NameNode

8876 NodeManager

9324 Jps

================= hadoop103 =================

7330 DataNode

7526 ResourceManager

8186 Jps

7659 NodeManager

================= hadoop104 =================

5360 SecondaryNameNode

5762 Jps

5446 NodeManager

5240 DataNode

启动历史服务器

[root@hadoop102 hadoop-3.1.3]# cd /opt/module/hadoop-3.1.3/bin/

[root@hadoop102 bin]# mapred --daemon start historyserver

[root@hadoop102 bin]# jps

4130 NodeManager

4146 Jps

4024 JobHistoryServer 历史服务器

2573 DataNode

2398 NameNode

#停止

[root@hadoop102 bin]# mapred --daemon stop historyserver

[root@hadoop102 /]# jps

6576 Jps

4854 NodeManager

2573 DataNode

2398 NameNode

6046 JobHistoryServer

[root@hadoop102 /]# kill -9 4854

[root@hadoop102 /]# jps

6597 Jps

2573 DataNode

2398 NameNode

6046 JobHistoryServer

[root@hadoop102 /]# yarn --daemon start nodemanager

[root@hadoop102 /]# jps

6691 Jps

6662 NodeManager

2573 DataNode

2398 NameNode

6046 JobHistoryServer

集群搭建完毕

一键启动停止hadoop

[root@hadoop102 bin]# vim myhadoop.sh

#!/bin/bash

case $1 in

"start" ){

echo "================启动hadoop集群================"

echo "================启动hadoop102 hdfs================"

ssh hadoop102 "/opt/module/hadoop-3.1.3/sbin/start-dfs.sh"

echo "================启动hadoop103 yarn================"

ssh hadoop103 "/opt/module/hadoop-3.1.3/sbin/start-yarn.sh"

echo "================启动hadoop102 historyserver================"

ssh hadoop102 "/opt/module/hadoop-3.1.3/bin/mapred --daemon start historyserver"

};;

"stop"){

echo "================关闭hadoop集群================"

echo "================关闭hadoop102 historyserver================"

ssh hadoop102 "/opt/module/hadoop-3.1.3/bin/mapred --daemon stop historyserver"

echo "================关闭hadoop103 yarn================"

ssh hadoop103 "/opt/module/hadoop-3.1.3/sbin/stop-yarn.sh"

echo "================关闭hadoop102 hdfs================"

ssh hadoop102 "/opt/module/hadoop-3.1.3/sbin/stop-dfs.sh"

};;

esac

[root@hadoop102 bin]# chmod 777 myhadoop.sh

######################################################测试停止

[root@hadoop102 hadoop-3.1.3]# myhadoop.sh stop

================关闭hadoop集群================

================关闭hadoop102 historyserver================

================关闭hadoop103 yarn================

Stopping nodemanagers

上一次登录:六 10月 15 16:18:58 CST 2022

Stopping resourcemanager

上一次登录:六 10月 15 16:19:10 CST 2022

================关闭hadoop102 hdfs================

Stopping namenodes on [hadoop102]

上一次登录:六 10月 15 16:18:48 CST 2022

Stopping datanodes

上一次登录:六 10月 15 16:19:22 CST 2022

Stopping secondary namenodes [hadoop104]

上一次登录:六 10月 15 16:19:24 CST 2022

######################################################测试启动

[root@hadoop102 hadoop-3.1.3]# myhadoop.sh start

================启动hadoop集群================

================启动hadoop102 hdfs================

Starting namenodes on [hadoop102]

上一次登录:六 10月 15 16:19:28 CST 2022

Starting datanodes

上一次登录:六 10月 15 16:20:48 CST 2022

Starting secondary namenodes [hadoop104]

上一次登录:六 10月 15 16:20:52 CST 2022

================启动hadoop103 yarn================

Starting resourcemanager

上一次登录:六 10月 15 16:20:29 CST 2022从 192.168.10.1pts/1 上

Starting nodemanagers

上一次登录:六 10月 15 16:21:18 CST 2022

================启动hadoop102 historyserver================

886

886

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?