Implementing GenTL's AcquisitionChain with the help of concurrentqueue

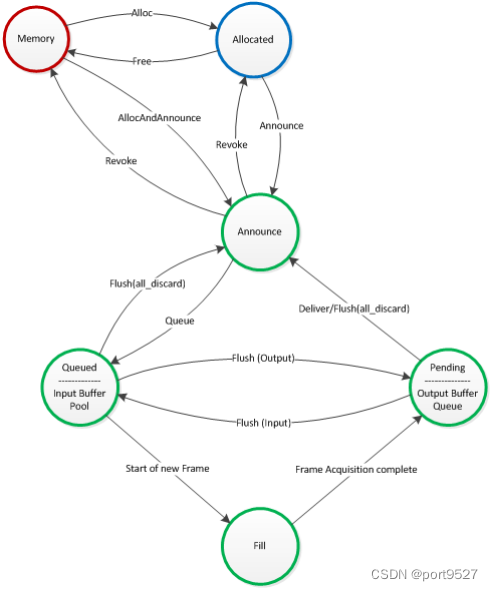

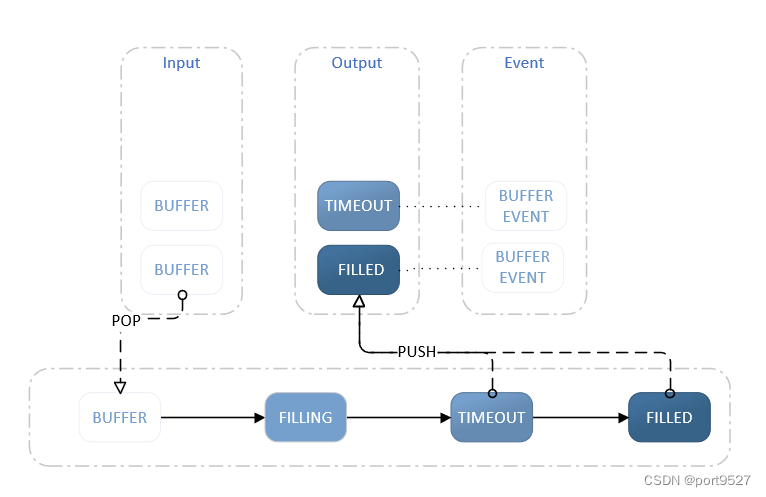

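The first figure is the acquisition chain diagram from GenTL, drawn from the buffer's point of view. Queues are used in two places in it. If we expand the Input Buffer Pool / Output Buffer Queue / Fill nodes in its lower part in a little more detail, we get the architecture shown in the second figure.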

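The second figure shows more clearly that a queue sits on both the input side and the output side. Hand-writing a simple queue is not a problem, but for an industrial-grade lock-free queue it is worth bringing in moodycamel::ConcurrentQueue. Its main features are: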

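- Extremely fast performance
- Single-header implementation: just drop it into your project
- Fully thread-safe lock-free queue that can be used concurrently from any number of threads
- C++11 implementation: elements are moved (instead of copied) where possible
- Templated, so you are not restricted to storing pointers; memory is managed for you
- No artificial limits on element types or maximum element count
- Memory can be allocated once up front, or dynamically as needed
- Fully portable (no assembly; everything is done through standard C++11 primitives)
- Supports very fast bulk operations
- Includes a low-overhead blocking version (BlockingConcurrentQueue)
- Exception safe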

1. Basic usage of moodycamel::ConcurrentQueue

#include <cassert>
#include <chrono>
#include <iostream>
#include <memory>
#include <string>
#include <thread>

#include "blockingconcurrentqueue.h"

struct demoStruct {
    int intV;
    float floatV;
    double doubleV;
    std::string stringV;
    void* pointerV;
    std::size_t sizeV;
};

class demoClass {
public:
    demoClass() = default;
    // Initializers are listed in member declaration order.
    demoClass(int a, float b, double c, std::string d, std::size_t e) :
        name(d), intV(a), floatV(b), doubleV(c), stringV(d), pointerV(nullptr), sizeV(e) {}

    void test() {}
    void test2() {}

private:
    demoClass(const demoClass &) = delete;            // copying is forbidden
    demoClass &operator=(const demoClass &) = delete; // assignment is forbidden

    std::string name;
    int intV;
    float floatV;
    double doubleV;
    std::string stringV;
    void* pointerV;
    std::size_t sizeV;
};

int main()
{
    // Storing a built-in type
    moodycamel::ConcurrentQueue<int> q;
    for (int i = 0; i != 123; ++i)
        q.enqueue(i);

    int item;
    for (int i = 0; i != 123; ++i) {
        // try_dequeue returns false when nothing could be dequeued
        if (q.try_dequeue(item)) {
            std::cout << item << std::endl;
            assert(item == i);
        }
    }
    std::cout << q.size_approx() << std::endl;

    // Storing a struct
    moodycamel::ConcurrentQueue<demoStruct> structQ;
    demoStruct q1;
    q1.doubleV = 3.0;
    q1.floatV = 1.0f;
    q1.intV = 2;
    q1.pointerV = nullptr;
    q1.sizeV = 23;
    q1.stringV = "hello";
    structQ.enqueue(q1);

    demoStruct q2;
    structQ.try_dequeue(q2);

    // Using BlockingConcurrentQueue
    moodycamel::BlockingConcurrentQueue<int> intq;
    intq.enqueue(1);
    std::cout << intq.size_approx() << std::endl;
    {
        // otherq takes over intq's contents; intq receives the empty queue
        moodycamel::BlockingConcurrentQueue<int> otherq;
        intq.swap(otherq);
    }
    std::cout << intq.size_approx() << std::endl;

    // Storing class objects in a BlockingConcurrentQueue (through shared_ptr)
    moodycamel::BlockingConcurrentQueue<std::shared_ptr<demoClass>> scq;
    std::shared_ptr<demoClass> sc = std::make_shared<demoClass>(1, 2.0f, 3.0, "hello world", 123);
    scq.enqueue(sc);

    std::shared_ptr<demoClass> sc2;
    scq.try_dequeue(sc2);

    // BlockingConcurrentQueue timeout mechanism
    moodycamel::BlockingConcurrentQueue<int> cq;

    std::thread reader([&]() {
        int item;
#if 0
        for (int i = 0; i != 100; ++i) {
            // Fully-blocking:
            cq.wait_dequeue(item);
        }
#else
        for (int i = 0; i != 100; ) {
            // Blocking with timeout
            if (cq.wait_dequeue_timed(item, std::chrono::milliseconds(6))) {
                std::cout << item << std::endl;
                ++i;
            }
        }
#endif
    });

    std::thread writer([&]() {
        for (int i = 0; i != 100; ++i) {
            cq.enqueue(i);
            std::this_thread::sleep_for(std::chrono::milliseconds(5));
        }
    });

    writer.join();
    reader.join();

    return 0;
}
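To make the difference between the non-blocking and the timed blocking calls concrete, here is a minimal sketch of their behaviour written with the standard library only. `TimedQueueSketch` and `timedQueueDemo` are hypothetical names invented for this illustration. The class uses a mutex and a condition variable, so it is not lock-free and is not how moodycamel implements these calls; it only mirrors what a caller observes: `try_dequeue` fails at once on an empty queue, and `wait_dequeue_timed` returns false when the timeout expires.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <deque>
#include <mutex>
#include <utility>

// Hypothetical stand-in that mimics the observable behaviour of
// try_dequeue / wait_dequeue_timed with a mutex and a condition variable.
template <typename T>
class TimedQueueSketch {
public:
    void enqueue(T value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push_back(std::move(value));
        }
        notEmpty_.notify_one();  // wake one waiting consumer
    }

    // Non-blocking: returns false immediately if the queue is empty.
    bool try_dequeue(T& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return false;
        out = std::move(items_.front());
        items_.pop_front();
        return true;
    }

    // Blocks until an element is available or the timeout expires.
    // Returns false on timeout and leaves `out` untouched.
    template <typename Rep, typename Period>
    bool wait_dequeue_timed(T& out, const std::chrono::duration<Rep, Period>& timeout) {
        std::unique_lock<std::mutex> lock(mutex_);
        if (!notEmpty_.wait_for(lock, timeout, [this] { return !items_.empty(); }))
            return false;
        out = std::move(items_.front());
        items_.pop_front();
        return true;
    }

private:
    std::mutex mutex_;
    std::condition_variable notEmpty_;
    std::deque<T> items_;
};

// Walks through the three outcomes a consumer has to handle.
inline int timedQueueDemo() {
    TimedQueueSketch<int> queue;
    int item = -1;

    bool gotItem = queue.try_dequeue(item);  // empty queue: fails at once
    assert(!gotItem);

    gotItem = queue.wait_dequeue_timed(item, std::chrono::milliseconds(5));
    assert(!gotItem);                        // still empty: times out

    queue.enqueue(42);
    gotItem = queue.wait_dequeue_timed(item, std::chrono::milliseconds(5));
    assert(gotItem);                         // element was ready
    (void)gotItem;
    return item;
}
```

This is why the reader loop above only increments its counter when `wait_dequeue_timed` returns true: a timeout is a normal outcome, not an error.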

2. A simple implementation of Figure 2 with moodycamel::ConcurrentQueue

#include <chrono>
#include <cstddef>
#include <cstdint>
#include <iostream>
#include <memory>
#include <thread>

#include "blockingconcurrentqueue.h"

class Buffer
{
public:
    Buffer() {}

    void SetBaseAddr(uint64_t baseAddr) {
        simulatedBaseAddr = baseAddr;
    }

    uint64_t GetBaseAddr() const {
        return simulatedBaseAddr;
    }

    void SetDataSize(size_t dataSize) {
        size = dataSize;
    }

    size_t GetDataSize() const {
        return size;
    }

private:
    Buffer(const Buffer &) = delete;            // copying is forbidden
    Buffer &operator=(const Buffer &) = delete; // assignment is forbidden

private:
    uint64_t simulatedBaseAddr = 0; // simulated address
    size_t size = 0;                // data size
};

moodycamel::BlockingConcurrentQueue<std::shared_ptr<Buffer>> inputQueue;
moodycamel::BlockingConcurrentQueue<std::shared_ptr<Buffer>> outputQueue;

// Next simulated address; only the fill thread touches it.
static uint64_t address = 0x00000000001;

int main()
{
    std::cout << "Hello World!\n";

    // fill buffer
    std::thread FillBufferThread([]() {
        while (true) {
            std::shared_ptr<Buffer> newBuffer;

            // input queue -> filling
            inputQueue.wait_dequeue(newBuffer);
            newBuffer->SetBaseAddr(address++);
            newBuffer->SetDataSize(10 * 1024);
            std::this_thread::sleep_for(std::chrono::seconds(2));
            // Print the address of the buffer just filled, not the next one.
            std::cout << "Filled " << newBuffer->GetBaseAddr() << " OK" << std::endl;

            // filled -> output queue
            outputQueue.enqueue(newBuffer);
        }
    });

    // op buffer
    std::thread OpBuffer([]() {
        while (true) {
            std::shared_ptr<Buffer> newBuffer;
            std::cout << "Waiting Filled Buffer" << std::endl;

            // output queue -> op
            outputQueue.wait_dequeue(newBuffer);
            std::this_thread::sleep_for(std::chrono::seconds(1));
            std::cout << "Op Buffer " << newBuffer->GetBaseAddr() << " size " << newBuffer->GetDataSize() << std::endl;

            // queue buffer -> input queue
            inputQueue.enqueue(newBuffer);
        }
    });

    for (size_t idx = 0; idx < 4; ++idx) {
        // announce
        std::shared_ptr<Buffer> newBuffer = std::make_shared<Buffer>();

        // queue buffer -> input queue
        inputQueue.enqueue(newBuffer);
    }

    // Both loops run forever, so these joins never return; stop the demo manually.
    FillBufferThread.join();
    OpBuffer.join();

    return 0;
}
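The two threads above loop forever, so the program never reaches `return 0`. One common way to end such a chain cleanly is to push a sentinel (here a null `shared_ptr`) through the output queue once the last frame has been filled. The sketch below shows that idea with the standard library only. `BlockingQueueSketch`, `FrameBuffer` and `runChain` are hypothetical names made up for this example, and the mutex-based queue is only a stand-in for a blocking queue, not moodycamel's implementation. The same pattern should carry over to `BlockingConcurrentQueue<std::shared_ptr<Buffer>>`, since a null pointer is an ordinary element there too, but that is an assumption rather than something tested here.

```cpp
#include <condition_variable>
#include <cstddef>
#include <deque>
#include <memory>
#include <mutex>
#include <thread>
#include <utility>

// Hypothetical mutex-based stand-in for a blocking queue.
template <typename T>
class BlockingQueueSketch {
public:
    void enqueue(T value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push_back(std::move(value));
        }
        notEmpty_.notify_one();
    }

    // Blocks until an element is available.
    void wait_dequeue(T& out) {
        std::unique_lock<std::mutex> lock(mutex_);
        notEmpty_.wait(lock, [this] { return !items_.empty(); });
        out = std::move(items_.front());
        items_.pop_front();
    }

private:
    std::mutex mutex_;
    std::condition_variable notEmpty_;
    std::deque<T> items_;
};

struct FrameBuffer { std::size_t frameId = 0; };  // hypothetical buffer type
using BufferPtr = std::shared_ptr<FrameBuffer>;

// Runs input queue -> fill -> output queue -> consume -> input queue for
// `frameCount` frames, then stops the consumer with a null sentinel.
// Returns the number of frames the consumer processed.
// Requires bufferCount >= 1 whenever frameCount > 0.
inline std::size_t runChain(std::size_t bufferCount, std::size_t frameCount) {
    BlockingQueueSketch<BufferPtr> inputQueue;
    BlockingQueueSketch<BufferPtr> outputQueue;
    std::size_t processed = 0;

    // Announce the buffers and queue them on the input side.
    for (std::size_t i = 0; i < bufferCount; ++i)
        inputQueue.enqueue(std::make_shared<FrameBuffer>());

    std::thread filler([&] {
        for (std::size_t frame = 1; frame <= frameCount; ++frame) {
            BufferPtr buffer;
            inputQueue.wait_dequeue(buffer);         // take an empty buffer
            buffer->frameId = frame;                 // "fill" it
            outputQueue.enqueue(std::move(buffer));  // filled -> output queue
        }
        outputQueue.enqueue(nullptr);                // sentinel: no more frames
    });

    std::thread consumer([&] {
        for (;;) {
            BufferPtr buffer;
            outputQueue.wait_dequeue(buffer);
            if (!buffer) break;                      // sentinel seen: stop
            ++processed;
            inputQueue.enqueue(std::move(buffer));   // hand the buffer back
        }
    });

    filler.join();
    consumer.join();
    return processed;  // safe to read: both threads have finished
}
```

Calling `runChain(4, 10)` cycles four buffers through ten frames and returns once both threads have exited. With several consumers, one sentinel per consumer would be needed.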
