I. Overview

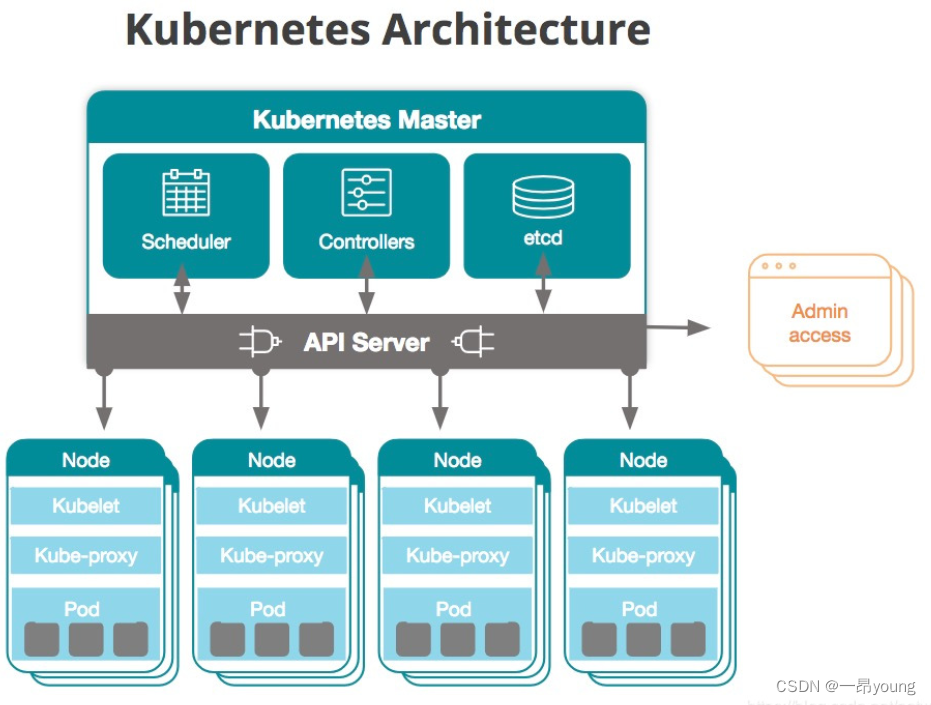

1. Kubernetes component architecture diagram

2. Node information

| Hostname | IP | Role | Components | Spec |
|---|---|---|---|---|
| k8s-master | 192.168.2.10 | Control-plane node | kube-apiserver kube-controller-manager docker | 2 CPU / 2 GB |
| k8s-node1 | 192.168.2.20 | Worker node 1 | kube-proxy kube-flannel docker | 2 CPU / 2 GB |
| k8s-node2 | 192.168.2.30 | Worker node 2 | kube-proxy kube-flannel docker | 2 CPU / 2 GB |

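3. Component versions

CentOS version: CentOS Linux release 7.6.1810 (Core)

Docker version: 19.03.15

Kubernetes version: 1.19.16

4. Installation steps

Official deployment guide: Installing kubeadm | Kubernetes

- Base environment configuration

- Pre-installation setup for Kubernetes (repositories, images, and related configuration)

- Deploy with kubeadm (master)

- Enable the flannel-based Pod network

- Join the worker nodes with kubeadm

- Install and use the dashboard component

- Install and use the heapster monitoring component

- Access test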

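II. Base environment configuration (all nodes)

1. Set the hostnames and the hosts file

Run the matching hostnamectl command on 192.168.2.10, 192.168.2.20, and 192.168.2.30 respectively, then append the hosts entries on every node: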

hostnamectl set-hostname k8s-master

hostnamectl set-hostname k8s-node1

hostnamectl set-hostname k8s-node2

echo "192.168.2.10 k8s-master

192.168.2.20 k8s-node1

192.168.2.30 k8s-node2" >> /etc/hosts
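Because the echo above appends unconditionally, running it twice leaves duplicate lines in /etc/hosts. As a minimal sketch of an idempotent alternative, the helper below only appends an entry when it is missing. The function name `add_host_entry` and its optional file argument are illustrative assumptions, not part of the original procedure.

```shell
#!/usr/bin/env bash
# add_host_entry IP NAME [FILE]
# Appends "IP NAME" to FILE (default: /etc/hosts) unless a line that
# starts with IP already lists NAME. Hypothetical helper for illustration.
add_host_entry() {
  local ip=$1 name=$2 file=${3:-/etc/hosts}
  # awk exits 0 only when a matching entry exists; a missing file counts as "not found".
  if ! awk -v ip="$ip" -v name="$name" '
        $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Example (run as root on every node):
#   add_host_entry 192.168.2.10 k8s-master
#   add_host_entry 192.168.2.20 k8s-node1
#   add_host_entry 192.168.2.30 k8s-node2
```

Calling the helper repeatedly with the same arguments leaves the file unchanged after the first call.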

2. Check the MAC address and product_uuid; both must be unique on every node

cat /sys/class/net/ens33/address

cat /sys/class/dmi/id/product_uuid
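With more than a few nodes, comparing these values by eye gets error-prone. The sketch below assumes the values have been collected into a file of `hostname value` lines (a hypothetical format, gathered by hand or over SSH) and prints any value that appears more than once. The function name `find_duplicate_ids` is illustrative.

```shell
#!/usr/bin/env bash
# find_duplicate_ids FILE
# FILE holds one "hostname value" pair per line (assumed format), where
# value is a MAC address or product_uuid copied from a node.
# Prints each value shared by two or more hosts; prints nothing when all
# values are unique. Comparison is case-insensitive.
find_duplicate_ids() {
  awk '{ print tolower($2) }' "$1" | sort | uniq -d
}

# Example:
#   find_duplicate_ids macs.txt
```

An empty result means every node reported a distinct value.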

3. Time synchronization

yum -y install chrony

systemctl start chronyd

systemctl enable chronyd

timedatectl set-timezone Asia/Shanghai

[root@k8s-master ~]# chronyc sources

210 Number of sources = 4

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* time.neu.edu.cn 1 8 377 14 +85us[ +163us] +/- 8142us

^- ntp5.flashdance.cx 2 8 351 130 -17ms[ -17ms] +/- 187ms

^- ns1.blazing.de 2 8 377 68 +2345us[+2421us] +/- 150ms

^- time.cloudflare.com 3 8 370 908 -2803us[-2651us] +/- 95ms

4. Configure the firewall rules

[root@k8s-master ~]# systemctl stop firewalld

[root@k8s-master ~]# systemctl disable firewalld

[root@k8s-master ~]# yum -y install iptables-services

[root@k8s-master ~]# systemctl start iptables

[root@k8s-master ~]# systemctl enable iptables

[root@k8s-master ~]# iptables -F

[root@k8s-master ~]# service iptables save

iptables: Saving firewall rules to /etc/sysconfig/iptables:[ OK ]

5. Disable SELinux

[root@k8s-master ~]# setenforce 0

[root@k8s-master ~]# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

6. Disable the swap partition

[root@k8s-master ~]# swapoff -a

[root@k8s-master ~]# sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
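The sed pattern above only matches fstab lines where `swap` is surrounded by spaces, so a tab-separated entry would stay active after a reboot. As a hedged sketch, the two helpers below count swap devices that are still active and swap entries that are still uncommented. The function names and the optional file arguments are assumptions added for illustration.

```shell
#!/usr/bin/env bash
# active_swap_count [FILE]
# Counts active swap devices listed in FILE (default: /proc/swaps).
# The first line of /proc/swaps is a header and is skipped.
active_swap_count() {
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "${1:-/proc/swaps}"
}

# fstab_swap_count [FILE]
# Counts uncommented entries in FILE (default: /etc/fstab) whose
# filesystem type (third field) is "swap".
fstab_swap_count() {
  awk '$1 !~ /^#/ && $3 == "swap" { n++ } END { print n + 0 }' "${1:-/etc/fstab}"
}

# Example: both should print 0 once swap is fully disabled.
#   active_swap_count
#   fstab_swap_count
```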

7. Set the kernel parameters that let iptables see bridged traffic

[root@k8s-master ~]# cat <<EOF > /etc/sysctl.d/kubernetes.conf

> vm.swappiness = 0

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> net.ipv4.ip_forward = 1

> EOF

[root@k8s-master ~]# sysctl -p /etc/sysctl.d/kubernetes.conf

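Note:

On CentOS 8, where the br_netfilter module is not loaded by default, the command fails with the following errors:

vm.swappiness = 0

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-ip6tables: No such file or directory

sysctl: cannot stat /proc/sys/net/bridge/bridge-nf-call-iptables: No such file or directory

net.ipv4.ip_forward = 1

Temporary fix (lost after a reboot): load the module, then rerun the sysctl command.

modprobe br_netfilter

Permanent fix. The heredoc delimiter is quoted so that $file is written literally instead of being expanded while the file is created, and the module script is made executable so that the -x test passes:

cat > /etc/rc.sysinit << 'EOF'

#!/bin/bash

for file in /etc/sysconfig/modules/*.modules ; do

[ -x "$file" ] && "$file"

done

EOF

cat > /etc/sysconfig/modules/br_netfilter.modules << EOF

modprobe br_netfilter

EOF

chmod 755 /etc/sysconfig/modules/br_netfilter.modules

On systemd-based systems, a file under /etc/modules-load.d/ that lists br_netfilter is the standard way to load a module at boot and can be used instead.

8. Upgrade the kernel (optional; 4.18 or later is sufficient)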

Import the public key:

[root@master ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

Install or upgrade the ELRepo release package:

[root@master ~]# rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

On CentOS 8, use this command instead:

[root@master ~]# yum install https://www.elrepo.org/elrepo-release-8.0-2.el8.elrepo.noarch.rpm

Load the elrepo-kernel metadata:

[root@master ~]# yum --disablerepo=\* --enablerepo=elrepo-kernel repolist

Install the latest kernel:

[root@master ~]# yum --disablerepo=\* --enablerepo=elrepo-kernel install kernel-ml.x86_64 -y

Remove the old kernel tools packages:

[root@master ~]# yum remove kernel-tools-libs.x86_64 kernel-tools.x86_64 -y

Install the new kernel tools package:

[root@master ~]# yum --disablerepo=\* --enablerepo=elrepo-kernel install kernel-ml-tools.x86_64 -y

List the kernel boot menu entries in order:

[root@server-1 ~]# awk -F \' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

Set the default boot entry (0 is the first entry in the list, here the 5.3 kernel), then check the saved setting:

[root@server-1 ~]# grub2-set-default 0

[root@server-1 ~]# grub2-editenv list

Reboot, then confirm the running kernel with uname -r.

III. Pre-installation setup for Kubernetes (every node)

1. Prerequisites for running kube-proxy in IPVS mode (run on every node)

yum -y install ipset ipvsadm

[root@k8s-master ~]# cat > /etc/sysconfig/modules/ipvs.modules <<EOF

> #!/bin/bash

> modprobe -- ip_vs

> modprobe -- ip_vs_rr

> modprobe -- ip_vs_wrr

> modprobe -- ip_vs_sh

> modprobe -- nf_conntrack

> EOF

[root@k8s-master ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules

Since Linux kernel 4.19, nf_conntrack_ipv4 has been merged into nf_conntrack. On kernels older than 4.19, load nf_conntrack_ipv4 instead.
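After running the module script, it is worth confirming that every module actually loaded. The sketch below reads `lsmod` output on standard input and prints the required modules that are missing. The function name `missing_ipvs_modules` is illustrative, and the module list mirrors the ipvs.modules file above.

```shell
#!/usr/bin/env bash
# missing_ipvs_modules
# Reads lsmod-style text on stdin (header line, then one module per line)
# and prints each required IPVS-related module that is not listed.
# Prints nothing when all of them are loaded.
missing_ipvs_modules() {
  local loaded mod
  # Column 1 of lsmod is the module name; skip the header line.
  loaded=$(awk 'NR > 1 { print $1 }')
  for mod in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack; do
    printf '%s\n' "$loaded" | grep -qx "$mod" || echo "$mod"
  done
}

# Example:
#   lsmod | missing_ipvs_modules
```

On a kernel older than 4.19 the last name in the list would need to be changed to nf_conntrack_ipv4.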

2. Install Docker (all nodes)

Install the prerequisite packages:

yum install -y yum-utils device-mapper-persistent-data lvm2

Configure the yum repository:

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

List the Docker versions available for installation:

yum list docker-ce --showduplicates | sort -r

Install Docker 19.03.15:

yum install -y docker-ce-19.03.15*

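3. Allow forwarding in the iptables FORWARD chain

Since version 1.13, Docker sets the default policy of the iptables FORWARD chain to DROP, so forwarding has to be allowed again:

iptables -P FORWARD ACCEPT

4. Change the Docker cgroup driver to systemd

Using systemd as the Docker cgroup driver keeps nodes more stable when resources are tight.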

[root@k8s-master ~]# mkdir -p /etc/docker

[root@k8s-master ~]# vim /etc/docker/daemon.json

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors": ["https://registry.docker-cn.com"]

}

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl enable docker

[root@k8s-master ~]# systemctl start docker

[root@k8s-master ~]# docker info | grep Driver

Storage Driver: overlay2

Logging Driver: json-file

Cgroup Driver: systemd

5. Configure the Aliyun yum repository for Kubernetes

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

6. Install kubeadm, kubectl, and kubelet

yum install -y kubelet-1.19.16 kubeadm-1.19.16 kubectl-1.19.16

IV. Deploy with kubeadm (master)

1. Create the cluster from a configuration file

- Generate the default init configuration file

# kubeadm config print init-defaults > kubeadm-conf.yaml

- Edit the kubeadm-conf.yaml init file

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 192.168.2.10 # IP address of the master node; set it explicitly if the master has several interfaces

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: k8s-master # hostname of the master node

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

kind: ClusterConfiguration

kubernetesVersion: v1.19.16 # Kubernetes version to install

imageRepository: "registry.aliyuncs.com/google_containers" # replaces the default k8s.gcr.io with the Aliyun mirror

networking:

  dnsDomain: cluster.local

  podSubnet: "10.244.0.0/16" # Pod network CIDR; each network add-on has its own requirement, and 10.244.0.0/16 matches the default network in flannel's kube-flannel.yml

  serviceSubnet: 10.96.0.0/12

scheduler: {}

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

featureGates:

  SupportIPVSProxyMode: true

mode: ipvs

- Create the cluster from the configuration file

kubeadm init --config=kubeadm-conf.yaml

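2. Create the cluster from the command line (an alternative to the configuration file)

[root@master ~]# kubeadm init --apiserver-advertise-address=192.168.2.10 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.16 --pod-network-cidr=10.244.0.0/16

- --apiserver-advertise-address

Selects which interface of the master is used to communicate with the other nodes in the cluster. If the master has several interfaces, set it explicitly; otherwise kubeadm picks the interface that has the default gateway.

- --pod-network-cidr

Sets the Pod network range. Kubernetes supports several network add-ons, and each has its own requirement for --pod-network-cidr. It is 10.244.0.0/16 here because that is the network configured in flannel's default manifest, and the two values must match.

- --image-repository

The default image registry is k8s.gcr.io, which is not reachable from mainland China. Since version 1.13, kubeadm accepts --image-repository (default k8s.gcr.io); here it points to the Aliyun mirror registry.aliyuncs.com/google_containers.

- --kubernetes-version=v1.19.16

Pins the Kubernetes version and skips version detection. The default value is stable-1, which makes kubeadm download the latest version number from https://dl.k8s.io/release/stable-1.txt.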

When initialization finishes, the following is printed:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.2.10:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:145fef3344ce82389e5666b5d311f7bbd38693aecc76bb8ca015ef551d188c61

[root@k8s-master ~]#
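The bootstrap token in this setup expires after 24 hours (ttl: 24h0m0s), so the join command printed above stops working after a day. As a minimal sketch, the value passed to --discovery-token-ca-cert-hash can be recomputed from the cluster CA certificate with openssl. The function name `ca_cert_hash` is illustrative, and the pipeline assumes an RSA CA key, which is what kubeadm generates by default.

```shell
#!/usr/bin/env bash
# ca_cert_hash [CERT]
# Prints the SHA-256 digest (64 hex characters) of the DER-encoded public
# key in CERT (default: /etc/kubernetes/pki/ca.crt). Prefix the result
# with "sha256:" when passing it to --discovery-token-ca-cert-hash.
# Assumes an RSA key.
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "${1:-/etc/kubernetes/pki/ca.crt}" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Example (on the master):
#   echo "sha256:$(ca_cert_hash)"
#
# kubeadm can also issue a fresh token and print the complete join command:
#   kubeadm token create --print-join-command
```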

3. Set up kubectl as instructed in the output

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

4. Enable kubectl command completion

[root@k8s-master ~]# yum -y install bash-completion

[root@k8s-master ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

[root@k8s-master ~]# source ~/.bashrc

5. Test kubectl

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady master 3m9s v1.19.16

V. Enable the flannel-based Pod network

1. Download the manifest

[root@k8s-master ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

2. Enable flannel

[root@k8s-master ~]# kubectl apply -f kube-flannel.yml

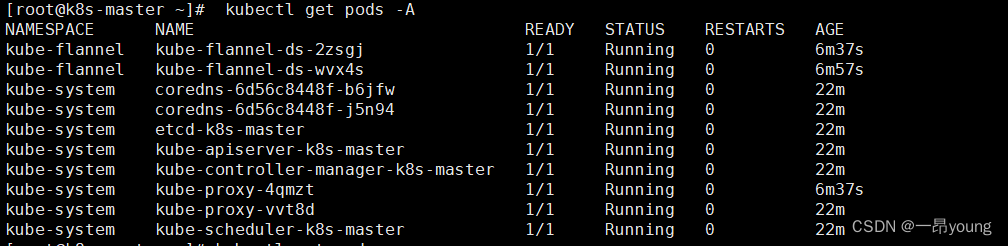

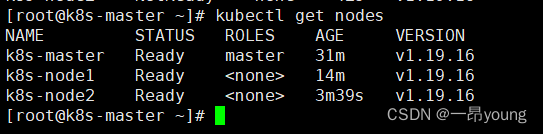

3. Verification

node1 joins the Kubernetes cluster

node2 joins the Kubernetes cluster

Verify the three-node cluster
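Each worker joins by running, as root, the kubeadm join command that kubeadm init printed on the master. Nodes stay NotReady until the flannel Pods are running. As a rough sketch of the final check, the helper below counts nodes whose STATUS column is exactly Ready in `kubectl get nodes` output. The function name `ready_node_count` is an assumption made for illustration.

```shell
#!/usr/bin/env bash
# ready_node_count
# Reads `kubectl get nodes` output on stdin and prints how many nodes
# report a STATUS of exactly "Ready". Cordoned nodes, which show
# "Ready,SchedulingDisabled", are not counted.
ready_node_count() {
  awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Example (on the master); 3 is expected for this cluster:
#   kubectl get nodes | ready_node_count
```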

Note: to uninstall Kubernetes, run the following commands. Run kubeadm reset first, while the kubeadm binary is still installed.

kubeadm reset -f

yum erase -y kubelet kubectl kubeadm kubernetes-cni

modprobe -r ipip

lsmod

rm -rf ~/.kube/

rm -rf /etc/kubernetes/

rm -rf /etc/systemd/system/kubelet.service.d

rm -rf /etc/systemd/system/kubelet.service

rm -rf /usr/bin/kube*

rm -rf /etc/cni

rm -rf /opt/cni

rm -rf /var/lib/etcd

rm -rf /var/etcd
