AVFoundation framework

GPUImage

FFmpeg

x264

librtmp

---

ijkplayer framework

ffmpeg

VideoToolbox

AudioToolbox

=========

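Part 1: The capture and push-streaming SDK

Plenty of SDKs already bundle video capture, encoding, muxing, and push streaming, for example the commercial NodeMedia. However, the NodeMedia SDK is licensed per package name, and apps with an unlicensed package name display a copyright notice.

Here I use a free SDK that someone shared on GitHub (download link).

Let's walk through the push-streaming process in the code:

First, the entry screen:

It is very simple: an input box for the server's push URL and a button that starts streaming.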

import android.app.Activity;
import android.content.Intent;
import android.os.Bundle;
import android.view.View;
import android.widget.Button;
import android.widget.EditText;

public class StartActivity extends Activity {
    // Intent extra key under which the RTMP URL is handed to MainActivity
    public static final String RTMPURL_MESSAGE = "rtmppush.hx.com.rtmppush.rtmpurl";

    private Button _startRtmpPushButton = null;
    private EditText _rtmpUrlEditText = null;

    // Launches MainActivity with the URL typed into the input box
    private View.OnClickListener _startRtmpPushOnClickedEvent = new View.OnClickListener() {
        @Override
        public void onClick(View arg0) {
            Intent i = new Intent(StartActivity.this, MainActivity.class);
            String rtmpUrl = _rtmpUrlEditText.getText().toString();
            i.putExtra(StartActivity.RTMPURL_MESSAGE, rtmpUrl);
            StartActivity.this.startActivity(i);
        }
    };

    private void InitUI() {
        _rtmpUrlEditText = (EditText) findViewById(R.id.rtmpUrleditText);
        _startRtmpPushButton = (Button) findViewById(R.id.startRtmpButton);
        _rtmpUrlEditText.setText("rtmp://192.168.1.104:1935/live/12345"); // default push URL
        _startRtmpPushButton.setOnClickListener(_startRtmpPushOnClickedEvent);
    }

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        setContentView(R.layout.activity_start);
        InitUI();
    }
}

The main push-streaming logic lives in MainActivity. Again, start with the UI:

Layout file:

<RelativeLayout xmlns:android="http://schemas.android.com/apk/res/android"
    xmlns:tools="http://schemas.android.com/tools"
    android:id="@+id/cameraRelative"
    android:layout_width="match_parent"
    android:layout_height="match_parent"
    android:paddingBottom="@dimen/activity_vertical_margin"
    android:paddingLeft="@dimen/activity_horizontal_margin"
    android:paddingRight="@dimen/activity_horizontal_margin"
    android:paddingTop="@dimen/activity_vertical_margin"
    android:theme="@android:style/Theme.NoTitleBar.Fullscreen">

    <SurfaceView
        android:id="@+id/surfaceViewEx"
        android:layout_width="match_parent"
        android:layout_height="match_parent"/>

    <Button
        android:id="@+id/SwitchCamerabutton"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content"
        android:layout_alignBottom="@+id/surfaceViewEx"
        android:text="@string/SwitchCamera" />

</RelativeLayout>

It is just a SurfaceView that shows the camera preview, plus a button for switching between the front and rear cameras. Start from the entry point:

@Override
protected void onCreate(Bundle savedInstanceState) {
    // Full screen, no title bar, keep the screen on
    requestWindowFeature(Window.FEATURE_NO_TITLE);
    getWindow().setFlags(WindowManager.LayoutParams.FLAG_FULLSCREEN,
            WindowManager.LayoutParams.FLAG_FULLSCREEN);
    this.getWindow().setFlags(WindowManager.LayoutParams.FLAG_KEEP_SCREEN_ON,
            WindowManager.LayoutParams.FLAG_KEEP_SCREEN_ON);
    super.onCreate(savedInstanceState);
    setContentView(R.layout.activity_main);
    setRequestedOrientation(ActivityInfo.SCREEN_ORIENTATION_PORTRAIT);

    // Push URL passed in from StartActivity
    Intent intent = getIntent();
    _rtmpUrl = intent.getStringExtra(StartActivity.RTMPURL_MESSAGE);
    InitAll();

    PowerManager pm = (PowerManager) getSystemService(Context.POWER_SERVICE);
    _wakeLock = pm.newWakeLock(PowerManager.SCREEN_DIM_WAKE_LOCK, "My Tag");
}

It first sets full screen, keep-screen-on, and portrait orientation, reads the server's push URL, and then initializes everything else.

private void InitAll() {
    // Compute a left margin from the screen size so the preview keeps a 3:4 ratio
    WindowManager wm = this.getWindowManager();
    int width = wm.getDefaultDisplay().getWidth();
    int height = wm.getDefaultDisplay().getHeight();
    int iNewWidth = (int) (height * 3.0 / 4.0);

    RelativeLayout rCameraLayout = (RelativeLayout) findViewById(R.id.cameraRelative);
    RelativeLayout.LayoutParams layoutParams = new RelativeLayout.LayoutParams(
            RelativeLayout.LayoutParams.MATCH_PARENT,
            RelativeLayout.LayoutParams.MATCH_PARENT);
    int iPos = width - iNewWidth;
    layoutParams.setMargins(iPos, 0, 0, 0);

    // Configure the preview surface; the camera itself is opened in the surface callback
    _mSurfaceView = (SurfaceView) this.findViewById(R.id.surfaceViewEx);
    _mSurfaceView.getHolder().setFixedSize(HEIGHT_DEF, WIDTH_DEF);
    _mSurfaceView.getHolder().setType(SurfaceHolder.SURFACE_TYPE_PUSH_BUFFERS);
    _mSurfaceView.getHolder().setKeepScreenOn(true);
    _mSurfaceView.getHolder().addCallback(new SurceCallBack());
    _mSurfaceView.setLayoutParams(layoutParams);

    InitAudioRecord();

    _SwitchCameraBtn = (Button) findViewById(R.id.SwitchCamerabutton);
    _SwitchCameraBtn.setOnClickListener(_switchCameraOnClickedEvent);

    // Post the message that starts streaming
    RtmpStartMessage();
}

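It first lays the preview out at a 3:4 aspect ratio, configures the SurfaceView and adds a callback, then initializes AudioRecord, and finally kicks off streaming. Audio is initialized here, so where is the camera initialized? In the SurfaceView callback.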

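@Override
public void surfaceCreated(SurfaceHolder holder) {
    _iDegrees = getDisplayOritation(getDispalyRotation(), 0);
    // Camera already open (for example after a switch): just reconfigure it
    if (_mCamera != null) {
        InitCamera();
        return;
    }
    // With a single camera use the rear one, otherwise default to the front camera
    if (Camera.getNumberOfCameras() == 1) {
        _bIsFront = false;
        _mCamera = Camera.open(Camera.CameraInfo.CAMERA_FACING_BACK);
    } else {
        _mCamera = Camera.open(Camera.CameraInfo.CAMERA_FACING_FRONT);
    }
    InitCamera();
}

@Override
public void surfaceDestroyed(SurfaceHolder holder) {
}

} // closes the enclosing callback class, whose header is not shown in this excerpt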

The camera initialization is right here:

public void InitCamera() {
    Camera.Parameters p = _mCamera.getParameters();

    Size prevewSize = p.getPreviewSize();
    showlog("Original Width:" + prevewSize.width + ", height:" + prevewSize.height);

    List<Size> PreviewSizeList = p.getSupportedPreviewSizes();
    List<Integer> PreviewFormats = p.getSupportedPreviewFormats();
    showlog("Listing all supported preview sizes");
    for (Camera.Size size : PreviewSizeList) {
        showlog(" w: " + size.width + ", h: " + size.height);
    }

    // Check whether the camera supports NV21 and/or YV12
    showlog("Listing all supported preview formats");
    Integer iNV21Flag = 0;
    Integer iYV12Flag = 0;
    for (Integer yuvFormat : PreviewFormats) {
        showlog("preview formats:" + yuvFormat);
        if (yuvFormat == android.graphics.ImageFormat.YV12) {
            iYV12Flag = android.graphics.ImageFormat.YV12;
        }
        if (yuvFormat == android.graphics.ImageFormat.NV21) {
            iNV21Flag = android.graphics.ImageFormat.NV21;
        }
    }

    // Prefer NV21 and fall back to YV12
    if (iNV21Flag != 0) {
        _iCameraCodecType = iNV21Flag;
    } else if (iYV12Flag != 0) {
        _iCameraCodecType = iYV12Flag;
    }

    // The preview is requested as HEIGHT_DEF x WIDTH_DEF; frames are rotated before encoding
    p.setPreviewSize(HEIGHT_DEF, WIDTH_DEF);
    p.setPreviewFormat(_iCameraCodecType);
    p.setPreviewFrameRate(FRAMERATE_DEF);

    showlog("_iDegrees=" + _iDegrees);
    _mCamera.setDisplayOrientation(_iDegrees);
    p.setRotation(_iDegrees);
    _mCamera.setPreviewCallback(_previewCallback); // raw frames arrive in this callback
    _mCamera.setParameters(p);

    try {
        _mCamera.setPreviewDisplay(_mSurfaceView.getHolder());
    } catch (Exception e) {
        return; // no preview surface, so the preview is not started
    }

    _mCamera.cancelAutoFocus();
    _mCamera.startPreview();
}
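
The format choice in InitCamera() can be tried outside Android. The following is a minimal sketch, not the SDK's code: the class and method names are made up for illustration, the two constants mirror android.graphics.ImageFormat.NV21 and ImageFormat.YV12, and returning 0 when neither format is supported is an assumption (the original simply leaves _iCameraCodecType unchanged in that case). The frame-size helper assumes a tightly packed 4:2:0 frame with no row padding.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper mirroring the NV21-before-YV12 preference in InitCamera(). */
final class PreviewFormatChooser {
    // Same numeric values as android.graphics.ImageFormat.NV21 and ImageFormat.YV12
    static final int NV21 = 0x11;
    static final int YV12 = 0x32315659;

    /** Returns NV21 if supported, else YV12 if supported, else 0 (assumed "none" marker). */
    static int choose(List<Integer> supportedFormats) {
        if (supportedFormats.contains(NV21)) {
            return NV21;
        }
        if (supportedFormats.contains(YV12)) {
            return YV12;
        }
        return 0;
    }

    /** Bytes in one tightly packed YUV 4:2:0 frame (12 bits per pixel). */
    static int frameBytes(int width, int height) {
        return width * height * 3 / 2;
    }

    public static void main(String[] args) {
        System.out.println(choose(Arrays.asList(YV12, NV21)) == NV21); // NV21 wins when both exist
        System.out.println(frameBytes(640, 480));                      // size of one 640x480 frame
    }
}
```

The 640x480 size in main is only an example; the demo's real WIDTH_DEF and HEIGHT_DEF values are not shown in the excerpt.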

Remember the start-streaming function called at the end of initialization?

private void RtmpStartMessage() {
    Message msg = new Message();
    msg.what = ID_RTMP_PUSH_START;
    Bundle b = new Bundle();
    b.putInt("ret", 0);
    msg.setData(b);
    mHandler.sendMessage(msg);
}

The Handler processes it:

public Handler mHandler = new Handler() {
    public void handleMessage(android.os.Message msg) {
        Bundle b = msg.getData();
        int ret;
        switch (msg.what) {
            case ID_RTMP_PUSH_START: {
                Start();
                break;
            }
        }
    }
};

So this is where streaming is actually implemented:

private void Start() {
    // Debug only: also dump the encoded H.264 stream to external storage
    if (DEBUG_ENABLE) {
        File saveDir = Environment.getExternalStorageDirectory();
        String strFilename = saveDir + "/aaa.h264";
        try {
            if (!new File(strFilename).exists()) {
                new File(strFilename).createNewFile();
            }
            _outputStream = new DataOutputStream(new FileOutputStream(strFilename));
        } catch (Exception e) {
            e.printStackTrace();
        }
    }

    _rtmpSessionMgr = new RtmpSessionManager();
    _rtmpSessionMgr.Start(_rtmpUrl);      // point 1: start the RTMP session manager

    int iFormat = _iCameraCodecType;
    _swEncH264 = new SWVideoEncoder(WIDTH_DEF, HEIGHT_DEF, FRAMERATE_DEF, BITRATE_DEF);
    _swEncH264.start(iFormat);            // point 2: initialize the H.264 encoder

    _bStartFlag = true;

    _h264EncoderThread = new Thread(_h264Runnable);
    _h264EncoderThread.setPriority(Thread.MAX_PRIORITY);
    _h264EncoderThread.start();           // point 3: start the video encoding thread

    _AudioRecorder.startRecording();
    _AacEncoderThread = new Thread(_aacEncoderRunnable);
    _AacEncoderThread.setPriority(Thread.MAX_PRIORITY);
    _AacEncoderThread.start();            // point 4: start the audio encoding thread
}

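There are four main calls in it, referred to here as point 1 to point 4: point 1 is `_rtmpSessionMgr.Start(_rtmpUrl)`, point 2 is `_swEncH264.start(iFormat)`, and points 3 and 4 start the H.264 and AAC encoder threads. Let's go through them one by one. First, point 1, which already takes us into the SDK:

public int Start(String rtmpUrl) {
    int iRet = 0;
    _rtmpUrl = rtmpUrl;
    _rtmpSession = new RtmpSession();
    _bStartFlag = true;
    _h264EncoderThread.setPriority(Thread.MAX_PRIORITY);
    _h264EncoderThread.start();
    return iRet;
}

All it really does is start a thread, and that thread is a bit more involved: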

// Despite its name, this thread in the session manager is the one that sends data over RTMP
private Thread _h264EncoderThread = new Thread(new Runnable() {

    // Waits up to 500 x 10 ms before the next connection attempt; false means "stop"
    private Boolean WaitforReConnect() {
        for (int i = 0; i < 500; i++) {
            try {
                Thread.sleep(10);
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
            if (_h264EncoderThread.interrupted() || (!_bStartFlag)) {
                return false;
            }
        }
        return true;
    }

    @Override
    public void run() {
        while (!_h264EncoderThread.interrupted() && (_bStartFlag)) {
            // Connect on first use, or reconnect if the connection was lost
            if (_rtmpHandle == 0) {
                _rtmpHandle = _rtmpSession.RtmpConnect(_rtmpUrl);
                if (_rtmpHandle == 0) {
                    if (!WaitforReConnect()) {
                        break;
                    }
                    continue;
                }
            } else {
                if (_rtmpSession.RtmpIsConnect(_rtmpHandle) == 0) {
                    _rtmpHandle = _rtmpSession.RtmpConnect(_rtmpUrl);
                    if (_rtmpHandle == 0) {
                        if (!WaitforReConnect()) {
                            break;
                        }
                        continue;
                    }
                }
            }

            // Nothing queued: sleep 30 ms and check again
            if ((_videoDataQueue.size() == 0) && (_audioDataQueue.size() == 0)) {
                try {
                    Thread.sleep(30);
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
                continue;
            }

            // Send up to 100 queued audio packets, then one video packet
            for (int i = 0; i < 100; i++) {
                byte[] audioData = GetAndReleaseAudioQueue();
                if (audioData == null) {
                    break;
                }
                _rtmpSession.RtmpSendAudioData(_rtmpHandle, audioData, audioData.length);
            }

            byte[] videoData = GetAndReleaseVideoQueue();
            if (videoData != null) {
                _rtmpSession.RtmpSendVideoData(_rtmpHandle, videoData, videoData.length);
            }

            try {
                Thread.sleep(1);
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
        }

        // Stopping: clear both queues and close the connection
        _videoDataQueueLock.lock();
        _videoDataQueue.clear();
        _videoDataQueueLock.unlock();

        _audioDataQueueLock.lock();
        _audioDataQueue.clear();
        _audioDataQueueLock.unlock();

        if ((_rtmpHandle != 0) && (_rtmpSession != null)) {
            _rtmpSession.RtmpDisconnect(_rtmpHandle);
        }
        _rtmpHandle = 0;
        _rtmpSession = null;
    }
});

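The core is the `while` loop in `run()`: on each pass it takes data from the two buffer queues, `_audioDataQueue` and `_videoDataQueue`, and sends it to the server. The send methods `_rtmpSession.RtmpSendAudioData` and `_rtmpSession.RtmpSendVideoData` are both native methods that call into the .so library through JNI. How often does the loop run? It sleeps 1 ms after a pass that sent data and 30 ms when both queues are empty, so queued data is sent almost immediately. The 5 seconds comes from `WaitforReConnect()`, which sleeps 500 × 10 ms, and that wait only happens after a failed connection attempt: it is the retry interval, not a buffering delay. To tune live latency, look at how much data accumulates in the queues and at buffering in the server and player; shortening the loop in `WaitforReConnect()` only makes reconnection faster.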
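
To make the timing concrete, here is a minimal sketch of a stepwise reconnect wait in plain Java. It is not the SDK's implementation: the class name, the parameters, and the AtomicBoolean stop flag are assumptions for illustration, and unlike the SDK loop it returns as soon as the thread is interrupted.

```java
import java.util.concurrent.atomic.AtomicBoolean;

/** Hypothetical stand-in for the WaitforReConnect() logic discussed above. */
final class ReconnectWait {

    /** Longest possible wait: steps x stepMillis (500 x 10 ms = 5000 ms with the SDK's values). */
    static long maxWaitMillis(int steps, long stepMillis) {
        return steps * stepMillis;
    }

    /**
     * Sleeps in short steps so that a stop request is noticed quickly.
     * Returns true if the whole wait elapsed, false if stopped or interrupted.
     */
    static boolean waitForReconnect(int steps, long stepMillis, AtomicBoolean running) {
        for (int i = 0; i < steps; i++) {
            try {
                Thread.sleep(stepMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // keep the interrupt visible to the caller
                return false;
            }
            if (!running.get()) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(maxWaitMillis(500, 10));                           // retry interval in ms
        System.out.println(waitForReconnect(5, 1, new AtomicBoolean(true)));  // full wait elapsed
        System.out.println(waitForReconnect(5, 1, new AtomicBoolean(false))); // stopped early
    }
}
```

Passing a smaller step count shortens the pause between connection attempts without touching the send loop.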

As mentioned above, data is taken from the two buffers `_audioDataQueue` and `_videoDataQueue`. So when is it put in? Look at points 2, 3, and 4 above. First, point 2, which also goes into the SDK:

public boolean start(int iFormateType) {
    int iType = OpenH264Encoder.YUV420_TYPE;
    if (iFormateType == android.graphics.ImageFormat.YV12) {
        iType = OpenH264Encoder.YUV12_TYPE;
    } else {
        iType = OpenH264Encoder.YUV420_TYPE;
    }

    _OpenH264Encoder = new OpenH264Encoder();
    _iHandle = _OpenH264Encoder.InitEncode(_iWidth, _iHeight, _iBitRate, _iFrameRate, iType);
    if (_iHandle == 0) {
        return false;
    }

    _iFormatType = iFormateType;
    return true;
}

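This initializes the encoder; the actual initialization also happens in the .so file, called through JNI. Points 3 and 4 simply start two threads, so let's look at what those threads do.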

private Thread _h264EncoderThread = null;

private Runnable _h264Runnable = new Runnable() {
    @Override
    public void run() {
        while (!_h264EncoderThread.interrupted() && _bStartFlag) {
            int iSize = _YUVQueue.size();
            if (iSize > 0) {
                // Take one raw camera frame from the queue
                _yuvQueueLock.lock();
                byte[] yuvData = _YUVQueue.poll();
                if (iSize > 9 && yuvData != null) { // backlog warning; guard against a null frame
                    Log.i(LOG_TAG, "###YUV Queue len=" + _YUVQueue.size() + ", YUV length=" + yuvData.length);
                }
                _yuvQueueLock.unlock();
                if (yuvData == null) {
                    continue;
                }

                // Rotate to portrait: 270 degrees for the front camera, 90 for the rear
                if (_bIsFront) {
                    _yuvEdit = _swEncH264.YUV420pRotate270(yuvData, HEIGHT_DEF, WIDTH_DEF);
                } else {
                    _yuvEdit = _swEncH264.YUV420pRotate90(yuvData, HEIGHT_DEF, WIDTH_DEF);
                }

                // Encode and hand the H.264 data to the RTMP session manager
                byte[] h264Data = _swEncH264.EncoderH264(_yuvEdit);
                if (h264Data != null) {
                    _rtmpSessionMgr.InsertVideoData(h264Data);
                    if (DEBUG_ENABLE) {
                        try {
                            _outputStream.write(h264Data);
                            int iH264Len = h264Data.length;
                        } catch (IOException e1) {
                            e1.printStackTrace();
                        }
                    }
                }
            }
            try {
                Thread.sleep(1);
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
        }
        _YUVQueue.clear();
    }
};
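
The rotation step in the loop above runs inside the SDK, so its exact behaviour is not visible here. As an illustration only, the sketch below rotates a frame 90 degrees clockwise in plain Java, assuming a tightly packed planar I420 layout (Y plane, then U, then V) with even dimensions. The class name, the layout, and the clockwise direction are assumptions, not facts about `YUV420pRotate90`.

```java
import java.util.Arrays;

/** Hypothetical pure-Java rotation of a planar I420 frame by 90 degrees clockwise. */
final class Yuv420pRotate {

    /** Rotates one 8-bit plane of size w x h; the rotated plane is h wide and w high. */
    private static void rotatePlane90(byte[] src, int srcOff, byte[] dst, int dstOff, int w, int h) {
        for (int y = 0; y < h; y++) {
            for (int x = 0; x < w; x++) {
                // Source pixel (x, y) lands at column (h - 1 - y) of row x in the output
                dst[dstOff + x * h + (h - 1 - y)] = src[srcOff + y * w + x];
            }
        }
    }

    /** Rotates the Y, U, and V planes in turn; chroma planes are half size in each dimension. */
    static byte[] rotate90(byte[] i420, int width, int height) {
        int ySize = width * height;
        int cw = width / 2;
        int ch = height / 2;
        int cSize = cw * ch;
        byte[] out = new byte[ySize + 2 * cSize];
        rotatePlane90(i420, 0, out, 0, width, height);
        rotatePlane90(i420, ySize, out, ySize, cw, ch);
        rotatePlane90(i420, ySize + cSize, out, ySize + cSize, cw, ch);
        return out;
    }

    public static void main(String[] args) {
        // A 4x2 frame: 8 luma bytes, then 2 U bytes, then 2 V bytes
        byte[] frame = {0, 1, 2, 3, 4, 5, 6, 7, 10, 11, 20, 21};
        System.out.println(Arrays.toString(rotate90(frame, 4, 2)));
    }
}
```

After rotation the frame is 2 wide and 4 high, which is why the demo creates the encoder with width and height swapped relative to the camera preview size.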

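This is also a looping thread. It polls the camera data from `_YUVQueue`, rotates the frame (`YUV420pRotate270` for the front camera, `YUV420pRotate90` otherwise), encodes it with `EncoderH264`, and then calls `InsertVideoData`: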

public void InsertVideoData(byte[] videoData) {
    if (!_bStartFlag) {
        return;
    }
    _videoDataQueueLock.lock();
    // If the sender has fallen more than 50 packets behind, drop the whole backlog
    if (_videoDataQueue.size() > 50) {
        _videoDataQueue.clear();
    }
    _videoDataQueue.offer(videoData);
    _videoDataQueueLock.unlock();
}
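
The drop-everything policy in `InsertVideoData` is easy to reproduce in isolation. The following is a minimal sketch with hypothetical names (`BoundedFrameQueue`, `maxFrames`), written in plain Java so it can be run without the SDK. It uses try/finally around the lock, which the original does not.

```java
import java.util.ArrayDeque;
import java.util.Queue;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical queue that clears its backlog once it grows past a limit. */
final class BoundedFrameQueue {
    private final Queue<byte[]> frames = new ArrayDeque<>();
    private final ReentrantLock lock = new ReentrantLock();
    private final int maxFrames;

    BoundedFrameQueue(int maxFrames) {
        this.maxFrames = maxFrames;
    }

    /** Adds a frame; if more than maxFrames are already waiting, they are all dropped first. */
    void insert(byte[] frame) {
        lock.lock();
        try {
            if (frames.size() > maxFrames) {
                frames.clear();
            }
            frames.offer(frame);
        } finally {
            lock.unlock();
        }
    }

    /** Removes and returns the oldest frame, or null if the queue is empty. */
    byte[] poll() {
        lock.lock();
        try {
            return frames.poll();
        } finally {
            lock.unlock();
        }
    }

    int size() {
        lock.lock();
        try {
            return frames.size();
        } finally {
            lock.unlock();
        }
    }

    public static void main(String[] args) {
        BoundedFrameQueue queue = new BoundedFrameQueue(50);
        for (int i = 0; i < 51; i++) {
            queue.insert(new byte[1]);
        }
        System.out.println(queue.size()); // 51 frames are waiting
        queue.insert(new byte[1]);
        System.out.println(queue.size()); // backlog dropped, only the newest frame remains
    }
}
```

Clearing the whole queue bounds latency when the network stalls, at the cost of discarding frames that may include a keyframe the player needs, so playback may glitch until the next keyframe arrives.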

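Sure enough, it inserts into the `_videoDataQueue` buffer mentioned earlier. That covers video data; what about audio? It is handled in another thread whose structure is much the same: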

private Runnable _aacEncoderRunnable = new Runnable() {
    @Override
    public void run() {
        // Debug only: also dump the encoded AAC stream to external storage
        DataOutputStream outputStream = null;
        if (DEBUG_ENABLE) {
            File saveDir = Environment.getExternalStorageDirectory();
            String strFilename = saveDir + "/aaa.aac";
            try {
                if (!new File(strFilename).exists()) {
                    new File(strFilename).createNewFile();
                }
                outputStream = new DataOutputStream(new FileOutputStream(strFilename));
            } catch (Exception e1) {
                e1.printStackTrace();
            }
        }

        long lSleepTime = SAMPLE_RATE_DEF * 16 * 2 / _RecorderBuffer.length;

        while (!_AacEncoderThread.interrupted() && _bStartFlag) {
            // Read PCM from the microphone, encode it to AAC, and queue it for sending
            int iPCMLen = _AudioRecorder.read(_RecorderBuffer, 0, _RecorderBuffer.length);
            if ((iPCMLen != _AudioRecorder.ERROR_BAD_VALUE) && (iPCMLen != 0)) {
                if (_fdkaacHandle != 0) {
                    byte[] aacBuffer = _fdkaacEnc.FdkAacEncode(_fdkaacHandle, _RecorderBuffer);
                    if (aacBuffer != null) {
                        long lLen = aacBuffer.length;
                        _rtmpSessionMgr.InsertAudioData(aacBuffer);
                        if (DEBUG_ENABLE) {
                            try {
                                outputStream.write(aacBuffer);
                            } catch (IOException e) {
                                e.printStackTrace();
                            }
                        }
                    }
                }
            } else {
                Log.i(LOG_TAG, "######fail to get PCM data");
            }
            try {
                Thread.sleep(lSleepTime / 10);
            } catch (InterruptedException e) {
                e.printStackTrace();
            }
        }
        Log.i(LOG_TAG, "AAC Encoder Thread ended ......");
    }
};

private Thread _AacEncoderThread = null;

This loop inserts the encoded audio data into the `_audioDataQueue` buffer.
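
How much audio one read covers depends on the PCM format and the buffer size, neither of which appears in the excerpt. The sketch below shows the arithmetic under assumed values (44100 Hz, stereo, 16-bit samples, a 4096-byte buffer); the class and method names are hypothetical and the numbers are examples, not the demo's actual SAMPLE_RATE_DEF or buffer length.

```java
/** Hypothetical helper for PCM byte-rate and buffer-duration arithmetic. */
final class PcmTiming {

    /** Bytes of raw PCM produced per second. */
    static int bytesPerSecond(int sampleRate, int channels, int bitsPerSample) {
        return sampleRate * channels * (bitsPerSample / 8);
    }

    /** Whole milliseconds of audio held by a buffer of the given size. */
    static long bufferMillis(int bufferBytes, int sampleRate, int channels, int bitsPerSample) {
        return 1000L * bufferBytes / bytesPerSecond(sampleRate, channels, bitsPerSample);
    }

    public static void main(String[] args) {
        // Assumed format: 44100 Hz, 2 channels, 16 bits per sample
        System.out.println(bytesPerSecond(44100, 2, 16));     // PCM byte rate
        System.out.println(bufferMillis(4096, 44100, 2, 16)); // duration of a 4096-byte buffer
    }
}
```

A 4096-byte buffer in this format holds 1024 samples per channel, which is the frame length of AAC-LC, so each read would feed the encoder about one frame.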

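That completes the code analysis of video capture and push streaming. The demo does no processing on the video: it only captures from the camera, encodes, and pushes the stream to the server.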

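Part 2: Setting up the Nginx server

There are many choices of streaming media server, such as the commercial Wowza. I chose the free Nginx with nginx-rtmp-module. Nginx is an excellent HTTP server in its own right, and with the nginx-rtmp-module module it becomes a reasonably full-featured streaming server that supports RTMP and HLS.

How Nginx works with the SDK as a streaming server: Nginx provides the RTMP service through the rtmp module, the SDK pushes an RTMP stream to Nginx, and clients watch the live stream by connecting to Nginx. HLS works on much the same principle, except that clients ultimately fetch the stream over HTTP; the SDK still pushes over RTMP.

Below is a Windows build of Nginx with the rtmp module already integrated. It can be used directly after download.

Download link: https://github.com/illuspas/nginx-rtmp-win32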

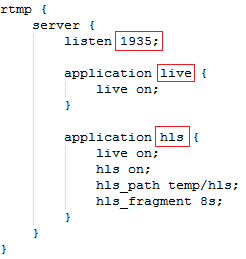

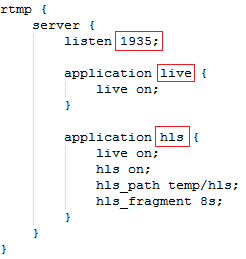

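1. RTMP port configuration

The configuration file is /conf/nginx.conf.

RTMP listens on port 1935, with two applications enabled: live and hls.

So the streaming server URL can be written as rtmp://(server IP address):1935/live/xxx or rtmp://(server IP address):1935/hls/xxx

For example, the one used above: rtmp://192.168.1.104:1935/live/12345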
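
The URL structure above (host, port, application, stream name) can be checked in code before it is handed to the SDK. This is a minimal sketch using java.net.URI; the `RtmpUrl` class is hypothetical, and it assumes exactly one application segment and one stream segment, as in the example URL.

```java
import java.net.URI;

/** Hypothetical parser for URLs of the form rtmp://host[:port]/app/stream. */
final class RtmpUrl {
    final String host;
    final int port;
    final String app;
    final String stream;

    private RtmpUrl(String host, int port, String app, String stream) {
        this.host = host;
        this.port = port;
        this.app = app;
        this.stream = stream;
    }

    /** Parses the URL or throws IllegalArgumentException if it does not have the expected shape. */
    static RtmpUrl parse(String url) {
        URI uri = URI.create(url);
        if (!"rtmp".equalsIgnoreCase(uri.getScheme()) || uri.getHost() == null) {
            throw new IllegalArgumentException("not an rtmp:// URL: " + url);
        }
        // "/live/12345" splits into "", "live", "12345"
        String[] parts = uri.getPath().split("/");
        if (parts.length != 3 || parts[1].isEmpty() || parts[2].isEmpty()) {
            throw new IllegalArgumentException("expected /app/stream in: " + url);
        }
        int port = uri.getPort() == -1 ? 1935 : uri.getPort(); // 1935 is the default RTMP port
        return new RtmpUrl(uri.getHost(), port, parts[1], parts[2]);
    }

    public static void main(String[] args) {
        RtmpUrl u = parse("rtmp://192.168.1.104:1935/live/12345");
        System.out.println(u.host + " " + u.port + " " + u.app + " " + u.stream);
    }
}
```

A check like this could run when the start button is pressed, so that a malformed address is rejected before the sender thread starts retrying the connection.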

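HTTP listens on port 8080:

- :8080/stat shows the stream status

- :8080/index.html is a test page for live playback and live publishing

- :8080/vod.html is a test page for RTMP and HLS video on demand

2. Starting the nginx service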

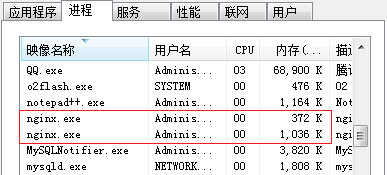

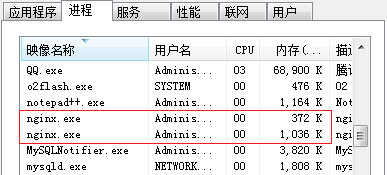

Double-click nginx.exe, or run nginx.exe from a command prompt, to start the nginx service:

1) Open Task Manager; the nginx.exe process should be listed.

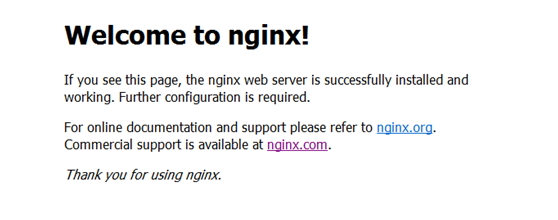

2) Open http://localhost:8080 in a browser; the following page appears:

If this page is displayed, the server started successfully.

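Part 3: Playing the live stream

Broadcaster screen:

As mentioned above, any player that supports the RTMP streaming protocol can watch the live stream. Two examples:

(1) VLC, a player for Windows

(2) ijkplayer, a player for Android

For ijkplayer usage, see the article "Android ijkplayer的使用解析" (an analysis of using ijkplayer on Android).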

private void initPlayer() {
    player = new PlayerManager(this);
    player.setFullScreenOnly(true);
    player.setScaleType(PlayerManager.SCALETYPE_FILLPARENT);
    player.playInFullScreen(true);
    player.setPlayerStateListener(this);
    player.play("rtmp://192.168.1.104:1935/live/12345");
}
