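1. Swapping seats

Xiaomei is an information technology teacher at a middle school. She has a table named seat that stores each student's name and the corresponding seat id.

The values in the id column are consecutive and increasing.

Xiaomei wants to swap the seats of every two adjacent students.

Can you write a SQL query that outputs the result she wants?

Example: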

+---------+---------+
|   id    | student |
+---------+---------+
|    1    | Abbot   |
|    2    | Doris   |
|    3    | Emerson |
|    4    | Green   |
|    5    | Jeames  |
+---------+---------+

For the input table above, the output should be:

+---------+---------+
|   id    | student |
+---------+---------+
|    1    | Doris   |
|    2    | Abbot   |
|    3    | Green   |
|    4    | Emerson |
|    5    | Jeames  |
+---------+---------+

Note:

If the number of students is odd, the last student keeps their seat.

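select (case
          when id % 2 != 0 and id != counts then id + 1  -- odd id, not the last row: take the next seat
          when id % 2 != 0 and id = counts then id       -- odd id on the last row: keep the seat
          else id - 1                                     -- even id: take the previous seat
        end) as id,
       student
from seat, (select count(*) as counts from seat) as seat_counts
order by id;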

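Alternatively, with the window functions lead and lag (Hive syntax):

select id_re,
       student
from (select id,
             student,
             -- odd id: use the next id, or keep its own id when there is no next row;
             -- even id: use the previous id
             if(id % 2 = 1,
                nvl(lead(id, 1) over (order by id), id),
                lag(id, 1) over (order by id)) as id_re
      from zhongdeng1) t1
order by id_re asc;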
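
To make the CASE logic easy to try out, here is a minimal sketch that runs the first query against an in-memory SQLite database from Python. The helper name swap_seats and the output alias new_id are illustrative assumptions, not part of the original exercise; the seat table layout follows the example above.

```python
import sqlite3

def swap_seats(students):
    """Return (id, student) rows with every adjacent pair of seats swapped."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE seat (id INTEGER PRIMARY KEY, student TEXT)")
    # Seat ids are consecutive and start at 1, as in the exercise.
    conn.executemany("INSERT INTO seat VALUES (?, ?)", enumerate(students, start=1))
    rows = conn.execute(
        """
        SELECT CASE
                 WHEN id % 2 != 0 AND id != counts THEN id + 1
                 WHEN id % 2 != 0 AND id = counts THEN id
                 ELSE id - 1
               END AS new_id,
               student
        FROM seat, (SELECT COUNT(*) AS counts FROM seat) AS seat_counts
        ORDER BY new_id
        """
    ).fetchall()
    conn.close()
    return rows

for row in swap_seats(["Abbot", "Doris", "Emerson", "Green", "Jeames"]):
    print(row)
```

With an odd number of students the last row falls into the second WHEN branch and keeps its id, which is the rule stated in the note above.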

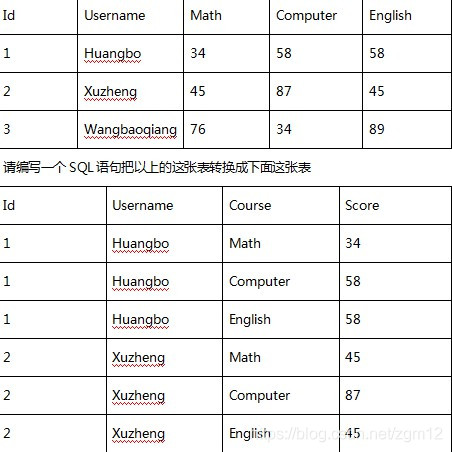

2. Converting between rows and columns

(1) Columns to rows

select id, username, 'Math' as Course, math as Score from t
union all
select id, username, 'Computer' as Course, computer as Score from t
union all
select id, username, 'English' as Course, english as Score from t
order by Id, Course;

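(2) Rows to columns

select id, username,
       sum(case when course = 'Math' then score else 0 end) as Math,
       sum(case when course = 'Computer' then score else 0 end) as Computer,
       sum(case when course = 'English' then score else 0 end) as English
from t
group by id, username;  -- username must be in the group by because it is selected without an aggregate

-- each case expression can also be written with if(), for example:
-- sum(if(course = 'Math', Score, 0)) as Math, ...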
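
The two conversions are inverses of each other, which the following sketch checks by round-tripping a small table through SQLite from Python. The table names wide and scores_long and the two sample students are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE wide (id INTEGER, username TEXT, math INTEGER, computer INTEGER, english INTEGER)"
)
wide_rows = [(1, "Ann", 90, 80, 70), (2, "Bob", 60, 75, 85)]  # hypothetical sample data
conn.executemany("INSERT INTO wide VALUES (?, ?, ?, ?, ?)", wide_rows)

# Columns to rows: one UNION ALL branch per score column.
conn.execute(
    """
    CREATE TABLE scores_long AS
    SELECT id, username, 'Math' AS course, math AS score FROM wide
    UNION ALL
    SELECT id, username, 'Computer', computer FROM wide
    UNION ALL
    SELECT id, username, 'English', english FROM wide
    """
)
long_rows = conn.execute("SELECT * FROM scores_long ORDER BY id, course").fetchall()

# Rows to columns: one conditional SUM per course.
pivoted = conn.execute(
    """
    SELECT id, username,
           SUM(CASE WHEN course = 'Math' THEN score ELSE 0 END) AS math,
           SUM(CASE WHEN course = 'Computer' THEN score ELSE 0 END) AS computer,
           SUM(CASE WHEN course = 'English' THEN score ELSE 0 END) AS english
    FROM scores_long
    GROUP BY id, username
    ORDER BY id
    """
).fetchall()
conn.close()
print(pivoted)
```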

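3. Life stages (row/column conversion)

- There is a user life-stage table LifeStage with a unique user ID field UID and a life-stage field stage. The stage field holds the user's life-stage tags joined with English commas, for example "planning to buy a house,already owns a car", and the content differs from user to user. Use Hive SQL to count the number of users in each life stage.

select stage_someone, count(distinct uid) as uids
from lifeStage
lateral view explode(split(stage, ',')) lifeStage_tmp as stage_someone
group by stage_someone;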

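- Same data scenario as the previous question, but now each row of LifeStage stores a single life stage for a user. For example, one row has UID 43 and ustage "planning to buy a car", and another row has UID 43 and ustage "already owns a house". Produce consolidated rows such as UID 43 with stage "planning to buy a car,already owns a house", and give the Hive SQL statement.

select uid,
       concat_ws(",", collect_set(ustage)) as stage
from Lifestage
group by uid;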
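
For readers without a Hive cluster at hand, the two queries can be mimicked in plain Python. This is only a sketch of the logic: users_per_stage plays the role of explode plus count(distinct), and stages_per_user plays the role of collect_set plus concat_ws. Both function names and the sample tags are illustrative. Note that Hive does not promise any particular element order for collect_set, whereas this sketch keeps first-seen order.

```python
from collections import defaultdict

def users_per_stage(rows):
    """rows: iterable of (uid, 'tag1,tag2,...'); returns {tag: number of distinct users}."""
    users = defaultdict(set)
    for uid, stage in rows:
        for tag in stage.split(","):   # explode(split(stage, ','))
            users[tag].add(uid)        # count(distinct uid)
    return {tag: len(uids) for tag, uids in users.items()}

def stages_per_user(rows):
    """rows: iterable of (uid, tag); returns {uid: 'tag1,tag2,...'} without duplicates."""
    tags = defaultdict(list)
    for uid, tag in rows:
        if tag not in tags[uid]:       # collect_set drops duplicates
            tags[uid].append(tag)
    return {uid: ",".join(t) for uid, t in tags.items()}

print(users_per_stage([(1, "plan_house,has_car"), (2, "has_car")]))
print(stages_per_user([(43, "plan_car"), (43, "has_house"), (43, "plan_car")]))
```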

4. Longest run of consecutive login days

Given the following login records (user id, login date):

1 2019-04-30

1 2019-05-01

1 2019-05-02

1 2019-05-03

1 2019-05-04

1 2019-05-05

1 2019-05-07

1 2019-05-08

2 2019-05-06

2 2019-05-07

2 2019-05-08

2 2019-05-25

2 2019-05-26

2 2019-05-27

2 2019-05-28

2 2019-05-29

2 2019-05-30

2 2019-05-31

2 2019-06-01

2 2019-06-02

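Find the longest run of consecutive login days for each user.

select uid, max(num)
from (select uid, count(*) as num
      from (select uid, dt,
                   -- within one run of consecutive days, date minus row number is a constant,
                   -- so flag identifies the run (assumes at most one row per user per day)
                   date_sub(dt, row_number() over (partition by uid order by dt)) as flag
            from landlog) t
      group by uid, flag) t2
group by uid;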
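
The "date minus row number" trick can be checked outside Hive with a few lines of Python. The sketch below is an illustration under the same assumption as the query (one login per user per day; duplicates are removed by the set), and the function name longest_streaks is made up.

```python
from collections import Counter, defaultdict
from datetime import date, timedelta

def longest_streaks(logins):
    """logins: iterable of (uid, 'YYYY-MM-DD'); returns {uid: longest consecutive-day run}."""
    days_by_user = defaultdict(set)
    for uid, dt in logins:
        days_by_user[uid].add(date.fromisoformat(dt))
    result = {}
    for uid, days in days_by_user.items():
        # Same idea as date_sub(dt, row_number()): the difference is constant inside one run.
        flags = Counter(
            day - timedelta(days=rn) for rn, day in enumerate(sorted(days), start=1)
        )
        result[uid] = max(flags.values())
    return result

sample = [(1, "2019-04-30"), (1, "2019-05-01"), (1, "2019-05-02"), (1, "2019-05-04")]
print(longest_streaks(sample))
```

Applied to the full login list above, this approach gives 6 days for user 1 (2019-04-30 to 2019-05-05) and 9 days for user 2 (2019-05-25 to 2019-06-02).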

A similar problem:

id1 id2

2014 1

2015 1

2016 1

2017 0

2018 0

2019 1

2020 1

2021 1

2022 0

2023 0

How can it be turned into the following, where id3 numbers the rows inside each run of equal id2 values?

id1 id2 id3

2014 1 1

2015 1 2

2016 1 3

2017 0 1

2018 0 2

2019 1 1

2020 1 2

2021 1 3

2022 0 1

2023 0 2

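select id1, id2,
       -- partition by id2 as well: two runs with different id2 values can share the same flag
       row_number() over (partition by id2, flag order by id1) as id3
from (select t1.id1, t1.id2, t1.dr,
             t1.id1 - t1.dr as flag  -- constant within one run of consecutive id1 values
      from (select id1, id2,
                   dense_rank() over (partition by id2 order by id1) as dr
            from m4) t1) t2
order by id1;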
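
The same rank-difference idea can be traced step by step in Python. This sketch assumes, like the query, that id1 values are unique consecutive integers; number_runs is an illustrative name. The third example row set in the usage shows why the island key needs id2 in addition to flag.

```python
from collections import defaultdict

def number_runs(rows):
    """rows: iterable of (id1, id2); returns (id1, id2, id3) with id3 restarting at each run."""
    rank = defaultdict(int)      # running rank of id1 within each id2 value
    position = defaultdict(int)  # running row number within each (id2, flag) island
    out = []
    for id1, id2 in sorted(rows):
        rank[id2] += 1
        flag = id1 - rank[id2]   # constant while id1 keeps increasing by 1 for the same id2
        position[(id2, flag)] += 1
        out.append((id1, id2, position[(id2, flag)]))
    return out

print(number_runs([(2014, 1), (2015, 1), (2016, 1), (2017, 0), (2018, 0)]))
# Here 2015 (id2=0) and 2016 (id2=1) both get flag 2014, so flag alone is not a safe key.
print(number_runs([(2014, 1), (2015, 0), (2016, 1)]))
```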

5. Cascading (cumulative) sum

Exercise:

Given the following visitor access-count table access:

visitor month visits

name dt times

A 2015-01 5

A 2015-01 15

B 2015-01 5

A 2015-01 8

B 2015-01 25

A 2015-01 5

A 2015-02 4

A 2015-02 6

B 2015-02 10

B 2015-02 5

…… …… ……

Required output report: t_access_times_accumulate

visitor month monthly_total cumulative_total

A 2015-01 33 33

A 2015-02 10 43

……. ……. ……. …….

B 2015-01 30 30

B 2015-02 15 45

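select name, dt, st,
       -- running total of the monthly sums, per visitor, in month order
       sum(st) over (partition by name order by dt) as sst
from (select name, dt, sum(times) as st  -- monthly total per visitor
      from access a
      group by name, dt) t;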
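
The two-step shape of this query (aggregate per month first, then accumulate per visitor) can be reproduced with itertools.accumulate. The following is a sketch for checking the expected numbers; the function name is an assumption.

```python
from collections import defaultdict
from itertools import accumulate

def monthly_and_running_totals(rows):
    """rows: iterable of (name, month, times); returns (name, month, monthly, cumulative) rows."""
    monthly = defaultdict(int)
    for name, month, times in rows:          # inner query: sum(times) group by name, dt
        monthly[(name, month)] += times
    by_name = defaultdict(list)
    for (name, month), total in sorted(monthly.items()):
        by_name[name].append((month, total))
    report = []
    for name, months in by_name.items():     # outer query: sum(st) over (partition by name order by dt)
        running = accumulate(total for _, total in months)
        for (month, total), cumulative in zip(months, running):
            report.append((name, month, total, cumulative))
    return report

access = [
    ("A", "2015-01", 5), ("A", "2015-01", 15), ("B", "2015-01", 5), ("A", "2015-01", 8),
    ("B", "2015-01", 25), ("A", "2015-01", 5), ("A", "2015-02", 4), ("A", "2015-02", 6),
    ("B", "2015-02", 10), ("B", "2015-02", 5),
]
for row in monthly_and_running_totals(access):
    print(row)
```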

6. Finding the blacklist

Given the following data:

userid url timestamp

1 www.baidu.com 2019-05-24 08:30:23:019

2 www.sina.com.cn 2019-05-24 08:31:23:026

1 www.taobao.com 2019-05-24 08:31:24:002

…

1 wwww 2019-05-24 08:35:45:001 100

1 222 2019-05-24 08:36:12:004 102

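Find the users who made at least 100 visits within a 5-minute window.

-- A window function cannot appear directly in WHERE, so lag() is computed in a subquery.
-- substr(..., 1, 19) assumes the 'yyyy-MM-dd HH:mm:ss:SSS' layout shown above and drops the milliseconds.
select distinct userid
from (select userid,
             unix_timestamp(substr(`timestamp`, 1, 19)) as ts,
             -- time of the same user's visit 99 rows earlier; NULL when fewer than 100 visits so far
             lag(unix_timestamp(substr(`timestamp`, 1, 19)), 99)
               over (partition by userid order by `timestamp`) as ts_99_back
      from t) x
where ts - ts_99_back <= 5 * 60;  -- unix_timestamp returns seconds, so 5 minutes = 300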
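
The lag-based check compares each visit with the one 99 rows earlier: if those two are at most 5 minutes apart, the 100 visits between them all fall inside one 5-minute window. A small Python sketch of that comparison follows; the function name and the threshold/window parameters are illustrative, and the timestamp format is assumed to match the sample rows.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def blacklist(events, threshold=100, window=timedelta(minutes=5)):
    """events: iterable of (userid, 'YYYY-MM-DD HH:MM:SS:mmm'); returns the set of flagged users."""
    times = defaultdict(list)
    for userid, ts in events:
        times[userid].append(datetime.strptime(ts, "%Y-%m-%d %H:%M:%S:%f"))
    flagged = set()
    for userid, stamps in times.items():
        stamps.sort()
        # Compare each visit with the one (threshold - 1) rows earlier, like lag(ts, 99).
        for i in range(threshold - 1, len(stamps)):
            if stamps[i] - stamps[i - threshold + 1] <= window:
                flagged.add(userid)
                break
    return flagged

events = [
    (1, "2019-05-24 08:30:23:019"), (1, "2019-05-24 08:31:24:002"), (1, "2019-05-24 08:35:00:000"),
    (2, "2019-05-24 08:00:00:000"), (2, "2019-05-24 08:04:00:000"), (2, "2019-05-24 08:10:00:000"),
]
# A threshold of 3 keeps the demo data short; the exercise itself uses 100.
print(blacklist(events, threshold=3))
```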

7. SQL for the top 3 in each subject

cid sid score count

01 03 80 0 1

01 01 80 0 1

01 05 76 2 3

02 01 90 0

02 07 89 1

02 05 87 2

03 01 99 0

03 07 98 1

03 02 80 2

03 03 80 2

04 07 60 0

04 01 50 1

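select a.c_id, a.s_id, a.s_score,
       count(distinct b.s_id)  -- number of students in the same subject with a strictly higher score
from Score a
left join Score b on a.c_id = b.c_id and a.s_score < b.s_score
group by a.c_id, a.s_id, a.s_score
having count(distinct b.s_id) < 3  -- fewer than 3 students score higher, so this row is in the top 3
order by a.c_id, a.s_score desc;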
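
The self-join can be tried directly in SQLite, which accepts the non-equi join condition. The sketch below loads subjects 01 and 03 from the intermediate join listing in this section (leaving out the lowest scorer of each) and runs the query from Python; the extra a.s_id sort key and the alias higher are additions to make the output order deterministic and readable.

```python
import sqlite3

SCORES = [
    ("01", "03", 80), ("01", "01", 80), ("01", "05", 76), ("01", "02", 70), ("01", "04", 50),
    ("03", "01", 99), ("03", "07", 98), ("03", "02", 80), ("03", "03", 80), ("03", "06", 34),
]

def top3_per_course(scores):
    """Return (c_id, s_id, s_score, higher) for rows with fewer than 3 higher scores in the course."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Score (c_id TEXT, s_id TEXT, s_score INTEGER)")
    conn.executemany("INSERT INTO Score VALUES (?, ?, ?)", scores)
    rows = conn.execute(
        """
        SELECT a.c_id, a.s_id, a.s_score, COUNT(DISTINCT b.s_id) AS higher
        FROM Score a
        LEFT JOIN Score b ON a.c_id = b.c_id AND a.s_score < b.s_score
        GROUP BY a.c_id, a.s_id, a.s_score
        HAVING COUNT(DISTINCT b.s_id) < 3
        ORDER BY a.c_id, a.s_score DESC, a.s_id
        """
    ).fetchall()
    conn.close()
    return rows

for row in top3_per_course(SCORES):
    print(row)
```

Because ties share the same count of higher scores, a subject can return more than three rows, as subject 03 does here with two students at 80.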

SQL to inspect the intermediate join result:

select a.c_id, a.s_id, a.s_score, b.s_id, b.s_score
from Score a
left join Score b on a.c_id = b.c_id and a.s_score < b.s_score
group by a.c_id, a.s_id, a.s_score, b.s_id, b.s_score
order by a.c_id, a.s_score desc;

Intermediate join result (columns: a.c_id, a.s_id, a.s_score, b.s_id, b.s_score):

Blank cells are NULL.

01 03 80

01 01 80

01 05 76 03 80

01 05 76 01 80

01 02 70 03 80

01 02 70 01 80

01 02 70 05 76

01 04 50 02 70

01 04 50 05 76

01 04 50 01 80

01 04 50 03 80

01 06 31 01 80

01 06 31 03 80

01 06 31 05 76

01 06 31 02 70

01 06 31 04 50

02 01 90

02 07 89 01 90

02 05 87 07 89

02 05 87 01 90

02 03 80 05 87

02 03 80 01 90

02 03 80 07 89

02 02 60 01 90

02 02 60 05 87

02 02 60 03 80

02 02 60 07 89

02 04 30 02 60

02 04 30 05 87

02 04 30 01 90

02 04 30 03 80

02 04 30 07 89

03 01 99

03 07 98 01 99

03 02 80 01 99

03 03 80 01 99

03 02 80 07 98

03 03 80 07 98

03 06 34 02 80

03 06 34 07 98

03 06 34 01 99

03 06 34 03 80

03 04 20 01 99

03 04 20 03 80

03 04 20 07 98

03 04 20 02 80

03 04 20 06 34

04 07 60

04 01 50 07 60

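PS: the query above works in MySQL, but in Hive (at least in older versions) a join does not support non-equi conditions in the ON clause, so the inequality would have to move into a WHERE clause:

left join Score b on a.c_id=b.c_id
where a.s_score<b.s_score

However, this does not produce the required result. The WHERE filter runs after the join and discards every row that fails a.s_score<b.s_score, so the top scorer of each subject, who has no higher score to match, disappears from the output instead of being kept with a NULL on the b side.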

8. Finding the positions of the digit 1

Source data:

id1

1011

0101

Required output:

id1

1,3,4

2,4

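-- Rewritten with posexplode, which returns each character together with its 0-based position,
-- instead of numbering the exploded rows with row_number(), whose order is not guaranteed.
-- Assumes id1 is stored as a string (so leading zeros survive) and that id1 values are distinct.
select concat_ws(',', collect_list(cast(pos + 1 as string))) as positions
from m2
lateral view posexplode(split(id1, '')) chars as pos, ch
where ch = '1'
group by id1;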
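
The expected output is easy to confirm with a one-line Python equivalent: enumerate the characters starting at 1 and keep the positions whose character is '1'. The function name positions_of_one is illustrative.

```python
def positions_of_one(bits):
    """Return the 1-based positions of '1' in a digit string, joined with commas."""
    return ",".join(str(pos) for pos, ch in enumerate(bits, start=1) if ch == "1")

for value in ["1011", "0101"]:
    print(value, "->", positions_of_one(value))
```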

9. Store sales

Given the following sales table:

store_sale.store store_sale.prod store_sale.sale

1001 A 600.0

1001 B 700.0

1002 A 300.0

1002 B 200.0

1003 A 100.0

1003 B 800.0

Produce the following result:

store prod sale storesale store_num sale_num

1001 A 600.0 1300.0 2 3

1001 B 700.0 1300.0 1 2

1002 A 300.0 500.0 1 4

1002 B 200.0 500.0 2 5

1003 A 100.0 900.0 2 6

1003 B 800.0 900.0 1 1

select store, prod, sale,
       sum(sale) over (partition by store) as storesale,                       -- total sales of the store
       row_number() over (partition by store order by sale desc) as store_num, -- rank within the store
       row_number() over (order by sale desc) as sale_num                      -- rank across all rows
from store_sale
order by store, prod;
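
To see where each of the three window columns comes from, the sketch below recomputes them in plain Python for the sample table. It is an illustration only: store_report is a made-up name, and ties in sale are not handled specially (row_number in SQL also breaks ties arbitrarily).

```python
def store_report(rows):
    """rows: list of (store, prod, sale); returns rows extended with storesale, store_num, sale_num."""
    store_total = {}
    for store, _, sale in rows:                      # sum(sale) over (partition by store)
        store_total[store] = store_total.get(store, 0.0) + sale
    by_sale = sorted(rows, key=lambda r: r[2], reverse=True)
    sale_num = {}                                    # row_number() over (order by sale desc)
    store_num = {}                                   # row_number() over (partition by store order by sale desc)
    seen_in_store = {}
    for n, (store, prod, _) in enumerate(by_sale, start=1):
        sale_num[(store, prod)] = n
        seen_in_store[store] = seen_in_store.get(store, 0) + 1
        store_num[(store, prod)] = seen_in_store[store]
    return [
        (s, p, sale, store_total[s], store_num[(s, p)], sale_num[(s, p)])
        for s, p, sale in sorted(rows)               # order by store, prod
    ]

store_sale = [
    (1001, "A", 600.0), (1001, "B", 700.0), (1002, "A", 300.0),
    (1002, "B", 200.0), (1003, "A", 100.0), (1003, "B", 800.0),
]
for row in store_report(store_sale):
    print(row)
```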

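10. Converting department names

-- users whose dpt_id has no match in dpt are reported under a default department name
select a.user_id, a.dpt_id, nvl(b.dpt_name, 'Other departments') as dpt_name
from user a left join dpt b on a.dpt_id = b.dpt_id;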
