
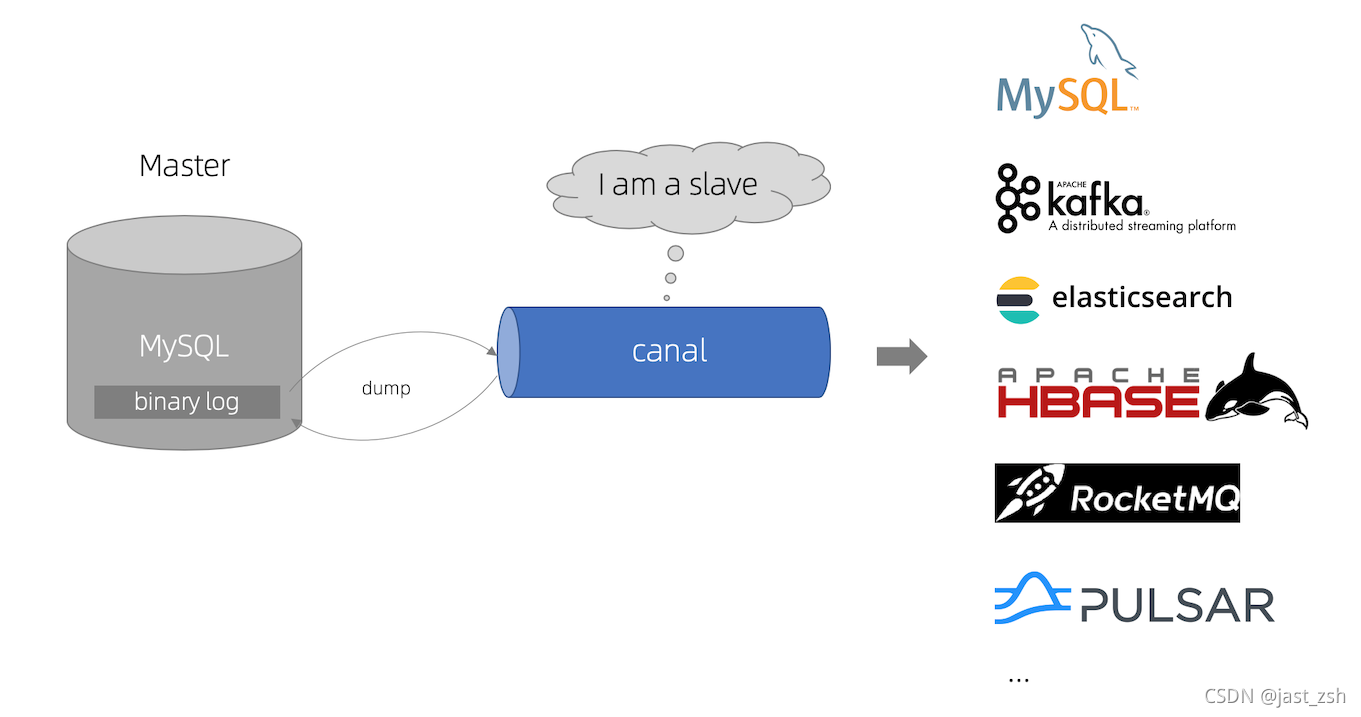

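I. Introduction to Canal

In its early days, Alibaba ran data centers in both Hangzhou and the United States and needed to synchronize data between them. This was originally implemented mainly with business triggers that captured incremental changes. Starting in 2010, teams gradually moved to parsing database logs to obtain incremental changes for synchronization, which gave rise to a large number of incremental database subscription and consumption use cases.

Use cases built on log-based incremental subscription and consumption include:

- Database mirroring

- Real-time database backup

- Index building and real-time maintenance (split heterogeneous indexes, inverted indexes, and so on)

- Business cache refresh

- Incremental data processing with business logic

Canal currently supports the following source MySQL versions: 5.1.x, 5.5.x, 5.6.x, 5.7.x, and 8.0.x.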

工作原理

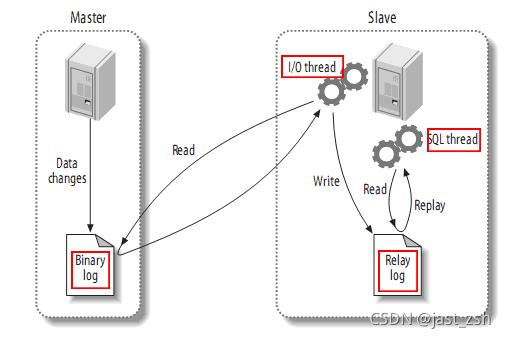

- MySQL master 将数据变更写入二进制日志( binary log, 其中记录叫做二进制日志事件binary log events,可以通过 show binlog events 进行查看)

- MySQL slave 将 master 的 binary log events 拷贝到它的中继日志(relay log)

- MySQL slave 重放 relay log 中事件,将数据变更反映它自己的数据

canal 工作原理

- canal 模拟 MySQL slave 的交互协议,伪装自己为 MySQL slave ,向 - MySQL master 发送dump 协议

- MySQL master 收到 dump 请求,开始推送 binary log 给 slave (即 canal )

- canal 解析 binary log 对象(原始为 byte 流)

GitHub : https://github.com/alibaba/canal

II. Download

Download page: https://github.com/alibaba/canal/tags

This guide uses v1.1.5; click that release to download it.

Netdisk mirror:

Link: https://pan.baidu.com/s/1VjIzpb79d05CET5xEnwdEQ

Extraction code: h0bk

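III. Installation and usage

MySQL preparation

- For a self-hosted MySQL, first enable binlog writing and set binlog-format to ROW. Configure my.cnf as follows:

[mysqld]

log-bin=mysql-bin # enable binlog

binlog-format=ROW # use ROW format

server_id=1 # required for MySQL replication; must not clash with canal's slaveId

- Grant the MySQL account that canal connects with the privileges of a MySQL slave. If the account already exists, just run the grant: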

CREATE USER canal IDENTIFIED BY 'canal';

GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%';

-- GRANT ALL PRIVILEGES ON *.* TO 'canal'@'%' ;

FLUSH PRIVILEGES;
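Before starting canal, it can help to confirm that the three my.cnf settings above are really present. The following is a minimal sketch that scans my.cnf-style text for them. The class `MyCnfCheck` and its methods are hypothetical helpers written for this article, not part of canal or MySQL, and the parser only understands simple `key=value` lines with `#` comments.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

/** Hypothetical helper: checks my.cnf-style text for the binlog settings canal needs. */
final class MyCnfCheck {

    /** Parses simple "key=value" lines; '#' starts a comment, '-' and '_' in keys are treated alike. */
    static Map<String, String> parse(String cnf) {
        Map<String, String> settings = new HashMap<>();
        for (String line : cnf.split("\\R")) {
            int hash = line.indexOf('#');
            if (hash >= 0) {
                line = line.substring(0, hash);
            }
            int eq = line.indexOf('=');
            if (eq < 0) {
                continue; // section headers such as [mysqld], or blank lines
            }
            String key = line.substring(0, eq).trim().replace('-', '_').toLowerCase(Locale.ROOT);
            settings.put(key, line.substring(eq + 1).trim());
        }
        return settings;
    }

    /** Returns a list of problems; an empty list means the three settings look fine. */
    static List<String> problems(String cnf) {
        Map<String, String> settings = parse(cnf);
        List<String> problems = new ArrayList<>();
        if (!settings.containsKey("log_bin")) {
            problems.add("log-bin is not set");
        }
        if (!"ROW".equalsIgnoreCase(settings.get("binlog_format"))) {
            problems.add("binlog-format is not ROW");
        }
        if (!settings.containsKey("server_id")) {
            problems.add("server_id is not set");
        }
        return problems;
    }

    public static void main(String[] args) {
        String cnf = "[mysqld]\nlog-bin=mysql-bin # enable binlog\nbinlog-format=ROW\nserver_id=1\n";
        System.out.println(problems(cnf)); // prints []
    }
}
```

The sketch only inspects the file text. What the running server actually uses can differ (for example when a setting comes from another file or a server default), so treat an empty result as a hint rather than proof.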

Installing canal

Extract the canal deployer package:

tar -zxvf canal.deployer-1.1.5.tar.gz

After extraction the directory layout looks like this:

drwxr-xr-x 2 root root 76 Sep 18 16:58 bin

drwxr-xr-x 5 root root 123 Sep 18 16:58 conf

drwxr-xr-x 2 root root 4096 Sep 18 16:58 lib

drwxrwxrwx 2 root root 6 Apr 19 16:15 logs

drwxrwxrwx 2 root root 177 Apr 19 16:15 plugin

Configuration changes

- Edit conf/canal.properties

#################################################

######### common argument #############

#################################################

# tcp bind ip

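# local IP that canal server binds to; if unset, a local IP is chosen automatically at startup (default: none)

canal.ip =

# register ip to zookeeper

# IP of the host running canal-server; optional, a local IP is bound automatically if unset

canal.register.ip =

# port canal-server listens on (TCP mode only; in non-TCP modes port 11111 is not opened)

canal.port = 11111

# port canal-server listens on for metrics pull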

canal.metrics.pull.port = 11112

# canal instance user/passwd

# canal.user = canal

# canal.passwd = E3619321C1A937C46A0D8BD1DAC39F93B27D4458

# canal admin config

#canal.admin.manager = 127.0.0.1:8089

canal.admin.port = 11110

canal.admin.user = admin

canal.admin.passwd = 4ACFE3202A5FF5CF467898FC58AAB1D615029441

# admin auto register

#canal.admin.register.auto = true

#canal.admin.register.cluster =

#canal.admin.register.name =

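# ZooKeeper cluster that canal server connects to; required for coordination in cluster mode, optional in standalone mode

canal.zkServers =

# flush data to zk: how often canal persists data to ZooKeeper, in milliseconds

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, rocketMQ, rabbitMQ: the mode canal-server runs in. tcp serves clients directly without middleware; kafka and the MQ options deliver through a message queue

canal.serverMode = tcp

# flush meta cursor/parse position to file; directory where this data is stored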

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size, default 1kb (the settings below are system parameters such as memory and network)

canal.instance.memory.buffer.memunit = 1024

## memory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecting config: heartbeat check settings, used for HA

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config: specifies which SQL statements are filtered out

canal.instance.filter.druid.ddl = true

# whether to ignore DCL query statements such as grant/create user; default false

canal.instance.filter.query.dcl = false

# whether to ignore DML query statements such as insert/update/delete (in MySQL 5.6, ROW mode can still contain statement-mode query records); default false

canal.instance.filter.query.dml = false

# whether to ignore DDL query statements such as create table/alter table/drop table/rename table/create index/drop index

# (the DDL types currently supported are mainly table-level operations; create databases/trigger/procedure are classified as DCL for now); default false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

canal.instance.filter.dml.insert = false

canal.instance.filter.dml.update = false

canal.instance.filter.dml.delete = false

# binlog format/image check; use ROW format. Non-ROW formats do not raise an error, but no data is synchronized

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config; on a single-CPU machine change the true below to false

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

# table meta tsdb info

canal.instance.tsdb.enable = true

canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}

canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;

canal.instance.tsdb.dbUsername = canal

canal.instance.tsdb.dbPassword = canal

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

#################################################

######### destinations #############

#################################################

# instances created by canal-server; list the instance names here, for example test1,test2, separated by commas

canal.destinations = example

# conf root dir

canal.conf.dir = ../conf

# auto scan instance dir add/remove and start/stop instance

canal.auto.scan = true

canal.auto.scan.interval = 5

# set this value to 'true' means that when binlog pos not found, skip to latest.

# WARN: pls keep 'false' in production env, or if you know what you want.

canal.auto.reset.latest.pos.mode = false

canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml

#canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml

canal.instance.global.mode = spring

canal.instance.global.lazy = false

canal.instance.global.manager.address = ${canal.admin.manager}

#canal.instance.global.spring.xml = classpath:spring/memory-instance.xml

canal.instance.global.spring.xml = classpath:spring/file-instance.xml

#canal.instance.global.spring.xml = classpath:spring/default-instance.xml

##################################################

######### MQ Properties #############

##################################################

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

canal.aliyun.uid=

canal.mq.flatMessage = true

canal.mq.canalBatchSize = 50

canal.mq.canalGetTimeout = 100

# Set this value to "cloud", if you want open message trace feature in aliyun.

canal.mq.accessChannel = local

canal.mq.database.hash = true

canal.mq.send.thread.size = 30

canal.mq.build.thread.size = 8

##################################################

######### Kafka #############

##################################################

kafka.bootstrap.servers = 127.0.0.1:9092

kafka.acks = all

kafka.compression.type = none

kafka.batch.size = 16384

kafka.linger.ms = 1

kafka.max.request.size = 1048576

kafka.buffer.memory = 33554432

kafka.max.in.flight.requests.per.connection = 1

kafka.retries = 0

kafka.kerberos.enable = false

kafka.kerberos.krb5.file = "../conf/kerberos/krb5.conf"

kafka.kerberos.jaas.file = "../conf/kerberos/jaas.conf"

##################################################

######### RocketMQ #############

##################################################

rocketmq.producer.group = test

rocketmq.enable.message.trace = false

rocketmq.customized.trace.topic =

rocketmq.namespace =

rocketmq.namesrv.addr = 127.0.0.1:9876

rocketmq.retry.times.when.send.failed = 0

rocketmq.vip.channel.enabled = false

rocketmq.tag =

##################################################

######### RabbitMQ #############

##################################################

rabbitmq.host =

rabbitmq.virtual.host =

rabbitmq.exchange =

rabbitmq.username =

rabbitmq.password =

rabbitmq.deliveryMode =
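Two of the memory store settings above work together: the comment on `canal.instance.memory.buffer.size` asks for a power of two, and the buffer size multiplied by `canal.instance.memory.buffer.memunit` gives the memory budget of the store. Below is a minimal sketch that loads those two keys with `java.util.Properties` and checks them. `MemoryStoreCheck` is a hypothetical helper, not a canal class, and the fallback values are simply the ones shown in this file.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

/** Hypothetical helper: sanity-checks the memory store settings from canal.properties. */
final class MemoryStoreCheck {

    static boolean isPowerOfTwo(long n) {
        return n > 0 && (n & (n - 1)) == 0;
    }

    /** Loads properties from text, the same way a .properties file would be read. */
    static Properties load(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    /** Returns buffer.size * buffer.memunit in bytes; rejects a size that is not a power of two. */
    static long maxBufferBytes(Properties props) {
        long size = Long.parseLong(props.getProperty("canal.instance.memory.buffer.size", "16384").trim());
        long unit = Long.parseLong(props.getProperty("canal.instance.memory.buffer.memunit", "1024").trim());
        if (!isPowerOfTwo(size)) {
            throw new IllegalArgumentException("buffer.size must be a power of two, got " + size);
        }
        return size * unit;
    }

    public static void main(String[] args) {
        Properties props = load(
                "canal.instance.memory.buffer.size = 16384\n"
              + "canal.instance.memory.buffer.memunit = 1024\n");
        System.out.println(maxBufferBytes(props)); // prints 16777216 (16 MiB)
    }
}
```

With the values in this file the budget is 16384 x 1024 bytes, that is 16 MiB per instance.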

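- Edit the example instance configuration

After declaring an instance in conf/canal.properties, create a directory named after that instance alongside the root configuration file and add an instance.properties file inside it. (example is the demo instance shipped with canal.)

Its contents are as follows: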

#################################################

## mysql serverId , v1.0.26+ will autoGen

## since v1.0.26 the slaveId is generated automatically, so it does not need to be configured

# canal.instance.mysql.slaveId=0

# enable gtid use true/false

canal.instance.gtidon=false

# position info

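# database address

canal.instance.master.address=127.0.0.1:3306

# binlog file name

canal.instance.master.journal.name=

# binlog offset to start from when connecting to the MySQL master

canal.instance.master.position=

# binlog timestamp to start from when connecting to the MySQL master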

canal.instance.master.timestamp=

canal.instance.master.gtid=

# rds oss binlog

canal.instance.rds.accesskey=

canal.instance.rds.secretkey=

canal.instance.rds.instanceId=

# table meta tsdb info

canal.instance.tsdb.enable=true

#canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb

#canal.instance.tsdb.dbUsername=canal

#canal.instance.tsdb.dbPassword=canal

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# username/password

canal.instance.dbUsername=canal

canal.instance.dbPassword=canal

# canal.instance.connectionCharset: the database charset expressed as a Java charset name, for example UTF-8, GBK, ISO-8859-1

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table regex

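# tables to watch; regular expressions are supported

# tables whose changes are parsed, as Perl regular expressions; separate multiple expressions with commas (,) and escape with a double backslash (\\)

# common examples:

# 1. all tables: .* or .*\\..*

# 2. all tables in the canal schema: canal\\..*

# 3. tables in canal whose names start with canal: canal\\.canal.*

# 4. a single table in the canal schema: canal.test1

# 5. several rules combined: canal\\..*,mysql.test1,mysql.test2 (comma-separated)

# this is an important parameter: the schema/table whitelist. For example, to receive only incremental data of the user table in the test schema, write test.user

canal.instance.filter.regex=.*\\..*

# table black regex

# tables to ignore; regular expressions are supported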

canal.instance.filter.black.regex=mysql\\.slave_.*

# table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch

# table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2)

#canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch

# mq config

canal.mq.topic=example

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

#canal.mq.partitionsNum=3

#canal.mq.partitionHash=test.table:id^name,.*\\..*

#canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6

#################################################
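The whitelist and blacklist expressions in instance.properties are comma-separated regular expressions that are matched against names of the form schema.table. The sketch below approximates that behaviour with `java.util.regex`. It is an assumption-laden illustration: `TableFilterSketch` is a hypothetical class, canal ships its own filter implementation, and details such as case handling may differ from what is shown here. Note that the doubled backslash in the properties file and the doubled backslash in a Java string literal both produce the single-backslash regex `\.`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical sketch of whitelist/blacklist table filtering; not canal's own filter class. */
final class TableFilterSketch {

    private final List<Pattern> patterns = new ArrayList<>();

    /** Takes a comma-separated list of regular expressions, as in canal.instance.filter.regex. */
    TableFilterSketch(String commaSeparatedRegex) {
        for (String part : commaSeparatedRegex.split(",")) {
            String regex = part.trim();
            if (!regex.isEmpty()) {
                patterns.add(Pattern.compile(regex));
            }
        }
    }

    /** True if any expression matches the whole "schema.table" name. */
    boolean matches(String schema, String table) {
        String fullName = schema + "." + table;
        for (Pattern pattern : patterns) {
            if (pattern.matcher(fullName).matches()) {
                return true;
            }
        }
        return false;
    }

    /** A table is synced when the whitelist matches it and the blacklist does not. */
    static boolean isSynced(TableFilterSketch white, TableFilterSketch black, String schema, String table) {
        return white.matches(schema, table) && !black.matches(schema, table);
    }

    public static void main(String[] args) {
        TableFilterSketch white = new TableFilterSketch(".*\\..*");
        TableFilterSketch black = new TableFilterSketch("mysql\\.slave_.*");
        System.out.println(isSynced(white, black, "test", "user"));               // prints true
        System.out.println(isSynced(white, black, "mysql", "slave_master_info")); // prints false
    }
}
```

The two expressions used in `main` are the ones configured above, and they correspond to the `init table filter` and `init table black filter` lines that appear in the instance log.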

Start

sh bin/startup.sh

Check the server log

# tail -f logs/canal/canal.log

2021-09-19 09:38:26.746 [main] INFO com.alibaba.otter.canal.deployer.CanalLauncher - ## set default uncaught exception handler

2021-09-19 09:38:26.793 [main] INFO com.alibaba.otter.canal.deployer.CanalLauncher - ## load canal configurations

2021-09-19 09:38:26.812 [main] INFO com.alibaba.otter.canal.deployer.CanalStarter - ## start the canal server.

2021-09-19 09:38:26.874 [main] INFO com.alibaba.otter.canal.deployer.CanalController - ## start the canal server[192.168.168.2(192.168.168.2):11111]

2021-09-19 09:38:28.240 [main] INFO com.alibaba.otter.canal.deployer.CanalStarter - ## the canal server is running now ......

Check the instance log

# tail -f logs/example/example.log

2021-09-19 09:38:28.191 [main] INFO c.a.otter.canal.instance.spring.CanalInstanceWithSpring - start CannalInstance for 1-example

2021-09-19 09:38:28.202 [main] WARN c.a.o.canal.parse.inbound.mysql.dbsync.LogEventConvert - --> init table filter : ^.*\..*$

2021-09-19 09:38:28.202 [main] WARN c.a.o.canal.parse.inbound.mysql.dbsync.LogEventConvert - --> init table black filter : ^mysql\.slave_.*$

2021-09-19 09:38:28.207 [main] INFO c.a.otter.canal.instance.core.AbstractCanalInstance - start successful....

Stop the service

sh bin/stop.sh

Using canal-client

- Maven dependency

<dependency>
    <groupId>com.alibaba.otter</groupId>
    <artifactId>canal.client</artifactId>
    <version>1.1.0</version>
</dependency>

- ClientSample.java

import java.net.InetSocketAddress;
import java.util.List;

import com.alibaba.otter.canal.client.CanalConnector;
import com.alibaba.otter.canal.client.CanalConnectors;
import com.alibaba.otter.canal.common.utils.AddressUtils;
import com.alibaba.otter.canal.protocol.CanalEntry.Column;
import com.alibaba.otter.canal.protocol.CanalEntry.Entry;
import com.alibaba.otter.canal.protocol.CanalEntry.EntryType;
import com.alibaba.otter.canal.protocol.CanalEntry.EventType;
import com.alibaba.otter.canal.protocol.CanalEntry.RowChange;
import com.alibaba.otter.canal.protocol.CanalEntry.RowData;
import com.alibaba.otter.canal.protocol.Message;

/**
 * @author Jast
 * @description
 * @date 2021-09-19 09:43
 */
public class ClientSample {

    public static void main(String[] args) {
        // Create the connection. To connect to a canal server on the local host instead:
        // CanalConnector connector = CanalConnectors.newSingleConnector(
        //         new InetSocketAddress(AddressUtils.getHostIp(), 11111), "example", "", "");
        CanalConnector connector = CanalConnectors.newSingleConnector(
                new InetSocketAddress("192.168.168.2", 11111), "example", "", "");
        int batchSize = 1000;
        int emptyCount = 0;
        try {
            connector.connect();
            connector.subscribe(".*\\..*");
            connector.rollback();
            int totalEmptyCount = 120;
            while (emptyCount < totalEmptyCount) {
                // Fetch up to batchSize entries without acknowledging them
                Message message = connector.getWithoutAck(batchSize);
                long batchId = message.getId();
                int size = message.getEntries().size();
                if (batchId == -1 || size == 0) {
                    emptyCount++;
                    System.out.println("empty count : " + emptyCount);
                    try {
                        Thread.sleep(1000);
                    } catch (InterruptedException e) {
                        // ignored: keep polling
                    }
                } else {
                    emptyCount = 0;
                    // System.out.printf("message[batchId=%s,size=%s] \n", batchId, size);
                    printEntry(message.getEntries());
                }

                connector.ack(batchId); // acknowledge the batch
                // connector.rollback(batchId); // on processing failure, roll the batch back
            }

            System.out.println("empty too many times, exit");
        } finally {
            connector.disconnect();
        }
    }

    private static void printEntry(List<Entry> entries) {
        for (Entry entry : entries) {
            // Skip transaction begin/end markers; only row changes are printed
            if (entry.getEntryType() == EntryType.TRANSACTIONBEGIN || entry.getEntryType() == EntryType.TRANSACTIONEND) {
                continue;
            }

            RowChange rowChange;
            try {
                rowChange = RowChange.parseFrom(entry.getStoreValue());
            } catch (Exception e) {
                throw new RuntimeException("ERROR ## parser of eromanga-event has an error , data:" + entry.toString(),
                        e);
            }

            EventType eventType = rowChange.getEventType();
            System.out.println(String.format("================> binlog[%s:%s] , name[%s,%s] , eventType : %s",
                    entry.getHeader().getLogfileName(), entry.getHeader().getLogfileOffset(),
                    entry.getHeader().getSchemaName(), entry.getHeader().getTableName(),
                    eventType));

            for (RowData rowData : rowChange.getRowDatasList()) {
                if (eventType == EventType.DELETE) {
                    printColumn(rowData.getBeforeColumnsList());
                } else if (eventType == EventType.INSERT) {
                    printColumn(rowData.getAfterColumnsList());
                } else {
                    System.out.println("-------> before");
                    printColumn(rowData.getBeforeColumnsList());
                    System.out.println("-------> after");
                    printColumn(rowData.getAfterColumnsList());
                }
            }
        }
    }

    private static void printColumn(List<Column> columns) {
        for (Column column : columns) {
            System.out.println(column.getName() + " : " + column.getValue() + " update=" + column.getUpdated());
        }
    }
}

Database operations are now printed to the console:

================> binlog[mysql-bin.000003:834] , name[mysql,test] , eventType : CREATE
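ClientSample acknowledges every batch and leaves the rollback call commented out. A consumer that must not lose changes would usually acknowledge only after its handler succeeds and roll back otherwise, so the same batch is delivered again. The sketch below illustrates that control flow without depending on the canal client library. `BatchSource`, `Batch`, and `FakeSource` are hypothetical stand-ins that mirror only the three connector calls used in the sample (getWithoutAck, ack, rollback); they are not canal types.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical sketch of "ack on success, rollback on failure"; not tied to the canal client API. */
final class AckRollbackSketch {

    /** Stand-in for the three connector calls used in ClientSample. */
    interface BatchSource {
        Batch getWithoutAck();
        void ack(long batchId);
        void rollback(long batchId);
    }

    static final class Batch {
        final long id;
        final List<String> rows;

        Batch(long id, List<String> rows) {
            this.id = id;
            this.rows = rows;
        }
    }

    /** Handles one batch. Returns true if it was processed and acknowledged. */
    static boolean consumeOnce(BatchSource source, Consumer<List<String>> handler) {
        Batch batch = source.getWithoutAck();
        if (batch.id == -1 || batch.rows.isEmpty()) {
            return false; // nothing to process
        }
        try {
            handler.accept(batch.rows);
            source.ack(batch.id);      // success: the source may discard the batch
            return true;
        } catch (RuntimeException e) {
            source.rollback(batch.id); // failure: the batch will be delivered again
            return false;
        }
    }

    /** In-memory fake used only to exercise the sketch. */
    static final class FakeSource implements BatchSource {
        private final List<String> pending;
        private long nextId = 1;
        final List<String> log = new ArrayList<>();

        FakeSource(List<String> rows) {
            this.pending = new ArrayList<>(rows);
        }

        @Override
        public Batch getWithoutAck() {
            if (pending.isEmpty()) {
                return new Batch(-1, Collections.<String>emptyList());
            }
            return new Batch(nextId++, new ArrayList<>(pending));
        }

        @Override
        public void ack(long batchId) {
            log.add("ack " + batchId);
            pending.clear();
        }

        @Override
        public void rollback(long batchId) {
            log.add("rollback " + batchId);
        }
    }

    public static void main(String[] args) {
        FakeSource source = new FakeSource(Collections.singletonList("row1"));
        consumeOnce(source, rows -> { throw new IllegalStateException("handler failed"); });
        consumeOnce(source, rows -> System.out.println("processed " + rows));
        System.out.println(source.log); // prints [rollback 1, ack 2]
    }
}
```

In a real client the handler would be the equivalent of printEntry, and the two branches would call connector.ack(batchId) and connector.rollback(batchId) respectively.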

Canal Adapter

- Extract the archive

mkdir canal-adapter && tar -zxvf canal.adapter-1.1.5.tar.gz -C canal-adapter

Syncing data to HBase

- 1. Edit the launcher configuration: {canal-adapter}/conf/application.yml

server:
  port: 8081
logging:
  level:
    com.alibaba.otter.canal.client.adapter: DEBUG
    com.alibaba.otter.canal.client.adapter.hbase: DEBUG
spring:
  jackson:
    date-format: yyyy-MM-dd HH:mm:ss
    time-zone: GMT+8
    default-property-inclusion: non_null

canal.conf:
  # tcp kafka rocketMQ rabbitMQ: the mode canal-server runs in. tcp serves clients directly without middleware; kafka and the MQ options deliver through a message queue
  mode: tcp
  # flatMessage: true
  zookeeperHosts:
  syncBatchSize: 1
  retries: 0
  timeout: 1000
  accessKey:
  secretKey:
  consumerProperties:
    # canal tcp consumer: address and port of canal-server
    canal.tcp.server.host: 127.0.0.1:11111
    canal.tcp.zookeeper.hosts: 127.0.0.1:2181
    canal.tcp.batch.size: 1
    canal.tcp.username:
    canal.tcp.password:
  srcDataSources: # source data sources: where data is read from
    defaultDS: # a unique name; the ES configuration refers to it
      url: jdbc:mysql://127.0.0.1:3306/test2?useUnicode=true
      username: root
      password: *****
  canalAdapters:
  - instance: example # canal instance name or MQ topic name; must match the instance configured in canal
    groups:
    - groupId: g1
      outerAdapters:
      - name: logger
#      - name: rdb
#        key: mysql1
#        properties:
#          jdbc.driverClassName: com.mysql.jdbc.Driver
#          jdbc.url: jdbc:mysql://127.0.0.1:3306/mytest2?useUnicode=true
#          jdbc.username: root
#          jdbc.password: 121212
#      - name: rdb
#        key: oracle1
#        properties:
#          jdbc.driverClassName: oracle.jdbc.OracleDriver
#          jdbc.url: jdbc:oracle:thin:@localhost:49161:XE
#          jdbc.username: mytest
#          jdbc.password: m121212
#      - name: rdb
#        key: postgres1
#        properties:
#          jdbc.driverClassName: org.postgresql.Driver
#          jdbc.url: jdbc:postgresql://localhost:5432/postgres
#          jdbc.username: postgres
#          jdbc.password: 121212
#          threads: 1
#          commitSize: 3000
      - name: hbase # name of the subdirectory under the conf directory
        properties:
          hbase.zookeeper.quorum: sangfor.abdi.node3,sangfor.abdi.node2,sangfor.abdi.node1
          hbase.zookeeper.property.clientPort: 2181
          zookeeper.znode.parent: /hbase-unsecure # ZooKeeper znode under which HBase keeps its metadata
#      - name: es7
#        hosts: 127.0.0.1:9300 # 127.0.0.1:9200 for rest mode
#        properties:
#          mode: transport # or rest
#          # security.auth: test:123456 # only used for rest mode
#          cluster.name: my_application
#      - name: kudu
#        key: kudu
#        properties:
#          kudu.master.address: 127.0.0.1 # ',' split multi address

Note: the adapter automatically loads every configuration file ending in .yml under conf/hbase.

- 2. HBase table mapping file

Edit conf/hbase/mytest_person.yml:

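dataSourceKey: defaultDS # matches an entry under srcDataSources in application.yml
destination: example # the canal instance in tcp mode, or the topic in MQ mode
groupId: # !!! Leave this empty when syncing to HBase. It corresponds to the groupId in MQ mode; only data for the matching groupId is synced
hbaseMapping: # single-table mapping from MySQL to HBase
  mode: STRING # storage type in HBase; everything is stored as String by default. Options: PHOENIX, NATIVE, STRING
               # NATIVE: based on Java types; PHOENIX: values are converted to the corresponding Phoenix types
  destination: example # canal destination or MQ topic name
  database: mytest # database/schema name
  table: person # table name
  hbaseTable: MYTEST.PERSON # HBase table name
  family: CF # default column family for all columns
  uppercaseQualifier: true # convert column names to upper case; default true
  commitBatch: 3000 # batch commit size, used during ETL
  #rowKey: id,type # a composite rowKey cannot be combined with a rowKey declared in columns
                   # composite rowKey parts are joined with '|'
  columns: # column mapping; if omitted, all columns are mapped automatically
           # and the first column becomes the rowKey. HBase column names follow the MySQL column names
    id: ROWKEY
    name: CF:NAME
    email: EMAIL # the column family can be omitted when it is the default CF
    type: # can be left empty when the HBase and MySQL column names are the same
    c_time:
    birthday: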

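Note: if type conversion is needed, use the following form:

...
  columns:
    id: ROWKEY$STRING
    ...
    type: TYPE$BYTE
    ...

Type conversion covers two families, Java types and Phoenix types, defined as follows:

# Java type conversion, for mode: NATIVE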

$DEFAULT

$STRING

$INTEGER

$LONG

$SHORT

$BOOLEAN

$FLOAT

$DOUBLE

$BIGDECIMAL

$DATE

$BYTE

$BYTES

# Phoenix type conversion, for mode: PHOENIX

$DEFAULT maps to Phoenix VARCHAR

$UNSIGNED_INT maps to Phoenix UNSIGNED_INT, 4 bytes

$UNSIGNED_LONG maps to Phoenix UNSIGNED_LONG, 8 bytes

$UNSIGNED_TINYINT maps to Phoenix UNSIGNED_TINYINT, 1 byte

$UNSIGNED_SMALLINT maps to Phoenix UNSIGNED_SMALLINT, 2 bytes

$UNSIGNED_FLOAT maps to Phoenix UNSIGNED_FLOAT, 4 bytes

$UNSIGNED_DOUBLE maps to Phoenix UNSIGNED_DOUBLE, 8 bytes

$INTEGER maps to Phoenix INTEGER, 4 bytes

$BIGINT maps to Phoenix BIGINT, 8 bytes

$TINYINT maps to Phoenix TINYINT, 1 byte

$SMALLINT maps to Phoenix SMALLINT, 2 bytes

$FLOAT maps to Phoenix FLOAT, 4 bytes

$DOUBLE maps to Phoenix DOUBLE, 8 bytes

$BOOLEAN maps to Phoenix BOOLEAN, 1 byte

$TIME maps to Phoenix TIME, 8 bytes

$DATE maps to Phoenix DATE, 8 bytes

$TIMESTAMP maps to Phoenix TIMESTAMP, 12 bytes

$UNSIGNED_TIME maps to Phoenix UNSIGNED_TIME, 8 bytes

$UNSIGNED_DATE maps to Phoenix UNSIGNED_DATE, 8 bytes

$UNSIGNED_TIMESTAMP maps to Phoenix UNSIGNED_TIMESTAMP, 12 bytes

$VARCHAR maps to Phoenix VARCHAR, variable length

$VARBINARY maps to Phoenix VARBINARY, variable length

$DECIMAL maps to Phoenix DECIMAL, variable length

If no type is configured, values are converted using the default mapping for the native Java object type.
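The column mapping values described above pack up to three pieces of information into one string: an optional column family, a qualifier (or the keyword ROWKEY), and an optional `$TYPE` suffix. The sketch below shows one way to read that syntax. It is an interpretation of the rules stated in this section, not the adapter's own parser, and `HbaseColumnMappingSketch` and `Target` are hypothetical names.

```java
import java.util.Locale;

/** Hypothetical reader for mapping values such as "CF:NAME", "EMAIL", "" or "ROWKEY$STRING". */
final class HbaseColumnMappingSketch {

    static final class Target {
        final boolean rowKey;
        final String family;    // null for the row key
        final String qualifier; // null for the row key
        final String type;      // null when no $TYPE suffix is given

        Target(boolean rowKey, String family, String qualifier, String type) {
            this.rowKey = rowKey;
            this.family = family;
            this.qualifier = qualifier;
            this.type = type;
        }

        @Override
        public String toString() {
            String base = rowKey ? "ROWKEY" : family + ":" + qualifier;
            return type == null ? base : base + "$" + type;
        }
    }

    static Target parse(String mysqlColumn, String mapping, String defaultFamily, boolean uppercaseQualifier) {
        String value = mapping == null ? "" : mapping.trim();

        // Optional type suffix after '$'
        String type = null;
        int dollar = value.indexOf('$');
        if (dollar >= 0) {
            type = value.substring(dollar + 1);
            value = value.substring(0, dollar);
        }

        if (value.equalsIgnoreCase("ROWKEY")) {
            return new Target(true, null, null, type);
        }

        // Optional "family:" prefix; otherwise the default family applies
        String family = defaultFamily;
        String qualifier = value;
        int colon = value.indexOf(':');
        if (colon >= 0) {
            family = value.substring(0, colon);
            qualifier = value.substring(colon + 1);
        }

        // An empty mapping means "same name as the MySQL column"
        if (qualifier.isEmpty()) {
            qualifier = uppercaseQualifier ? mysqlColumn.toUpperCase(Locale.ROOT) : mysqlColumn;
        }
        return new Target(false, family, qualifier, type);
    }

    public static void main(String[] args) {
        System.out.println(parse("id", "ROWKEY$STRING", "CF", true)); // prints ROWKEY$STRING
        System.out.println(parse("name", "CF:NAME", "CF", true));     // prints CF:NAME
        System.out.println(parse("email", "EMAIL", "CF", true));      // prints CF:EMAIL
        System.out.println(parse("c_time", "", "CF", true));          // prints CF:C_TIME
        System.out.println(parse("type", "TYPE$BYTE", "CF", true));   // prints CF:TYPE$BYTE
    }
}
```

Reading the mapping file this way makes it easier to predict which HBase cell each MySQL column lands in, for example that `c_time` with an empty mapping ends up in CF:C_TIME when uppercaseQualifier is true.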

- 3. Start the service

Start: bin/startup.sh

Stop: bin/stop.sh

Restart: bin/restart.sh

Log file: logs/adapter/adapter.log

- 4. Verify the service

Insert data into MySQL:

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

The log shows that the inserted rows were captured:

2021-09-20 12:35:09.682 [pool-1-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":[{"id":"2286ed67-19cc-11ec-bbe0-708cb6f5eaa6","name":"2286ed83-19cc-11ec-bbe0-708cb6f5eaa6","age":2,"age_2":null,"message":null,"insert_time":1632112508000}],"database":"test2","destination":"example","es":1632112508000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["id"],"sql":"","table":"testsync","ts":1632112509680,"type":"INSERT"}

2021-09-20 12:35:09.689 [pool-1-thread-1] DEBUG c.a.o.c.client.adapter.hbase.service.HbaseSyncService - DML: {"data":[{"id":"2286ed67-19cc-11ec-bbe0-708cb6f5eaa6","name":"2286ed83-19cc-11ec-bbe0-708cb6f5eaa6","age":2,"age_2":null,"message":null,"insert_time":1632112508000}],"database":"test2","destination":"example","es":1632112508000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["id"],"sql":"","table":"testsync","ts":1632112509680,"type":"INSERT"}
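The `es`, `ts`, and `insert_time` fields in these log entries are epoch timestamps in milliseconds. As far as I understand, `es` is the time the change was executed according to the binlog and `ts` is the time the message was built on the canal side, so their difference is a rough measure of sync delay; treat that reading as an assumption. The sketch below converts the values from the log above into GMT+8, the time zone configured in application.yml. `DmlTimestamps` is a hypothetical helper name.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

/** Hypothetical helper: converts the epoch-millisecond fields of a DML log entry. */
final class DmlTimestamps {

    private static final DateTimeFormatter FORMAT = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss.SSS");

    /** Formats epoch milliseconds at the given UTC offset. */
    static String format(long epochMillis, ZoneOffset offset) {
        return Instant.ofEpochMilli(epochMillis).atOffset(offset).format(FORMAT);
    }

    /** Milliseconds between the binlog execution time (es) and the message build time (ts). */
    static long delayMillis(long es, long ts) {
        return ts - es;
    }

    public static void main(String[] args) {
        ZoneOffset gmt8 = ZoneOffset.ofHours(8);
        System.out.println(format(1632112508000L, gmt8));                // prints 2021-09-20 12:35:08.000
        System.out.println(format(1632112509680L, gmt8));                // prints 2021-09-20 12:35:09.680
        System.out.println(delayMillis(1632112508000L, 1632112509680L)); // prints 1680
    }
}
```

The second value lines up with the 12:35:09.68x timestamps at the start of the two log lines, and the first matches the insert_time of the row.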

Checking the HBase table confirms that the write succeeded:

hbase(main):036:0> scan 'testsync',{LIMIT=>1}

ROW COLUMN+CELL

226ba6e8-19cc-11ec-bbe0-708c column=CF:AGE, timestamp=2021-09-20T12:35:08.548, value=2

b6f5eaa6

226ba6e8-19cc-11ec-bbe0-708c column=CF:INSERT_TIME, timestamp=2021-09-20T12:35:08.548, value=2021-09-20 12:35:08.0

b6f5eaa6

226ba6e8-19cc-11ec-bbe0-708c column=CF:NAME, timestamp=2021-09-20T12:35:08.548, value=226ba718-19cc-11ec-bbe0-708c

b6f5eaa6 b6f5eaa6

1 row(s)

Took 0.0347 seconds

PS: I was stuck at this step for a long time: the log printed the data, but nothing was actually written to HBase. For the fix, see: https://blog.csdn.net/zhangshenghang/article/details/120411341

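Syncing data to ElasticSearch

Building on the HBase configuration above, we modify it directly so that data is synced to ElasticSearch as well.

- 1. Edit the launcher configuration: {canal-adapter}/conf/application.yml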

server:
  port: 8081
logging:
  level:
    com.alibaba.otter.canal.client.adapter: DEBUG
    com.alibaba.otter.canal.client.adapter.hbase: DEBUG
spring:
  jackson:
    date-format: yyyy-MM-dd HH:mm:ss
    time-zone: GMT+8
    default-property-inclusion: non_null

canal.conf:
  # tcp kafka rocketMQ rabbitMQ: the mode canal-server runs in. tcp serves clients directly without middleware; kafka and the MQ options deliver through a message queue
  mode: tcp
  # flatMessage: true
  zookeeperHosts:
  syncBatchSize: 1
  retries: 0
  timeout: 1000
  accessKey:
  secretKey:
  consumerProperties:
    # canal tcp consumer: address and port of canal-server
    canal.tcp.server.host: 127.0.0.1:11111
    canal.tcp.zookeeper.hosts: 127.0.0.1:2181
    canal.tcp.batch.size: 1
    canal.tcp.username:
    canal.tcp.password:
  srcDataSources: # source data sources: where data is read from
    defaultDS: # a unique name; the ES configuration refers to it
      url: jdbc:mysql://127.0.0.1:3306/test2?useUnicode=true
      username: root
      password: *****
  canalAdapters:
  - instance: example # canal instance name or MQ topic name; must match the instance configured in canal
    groups:
    - groupId: g1
      outerAdapters:
      - name: logger
#      - name: rdb
#        key: mysql1
#        properties:
#          jdbc.driverClassName: com.mysql.jdbc.Driver
#          jdbc.url: jdbc:mysql://127.0.0.1:3306/mytest2?useUnicode=true
#          jdbc.username: root
#          jdbc.password: 121212
#      - name: rdb
#        key: oracle1
#        properties:
#          jdbc.driverClassName: oracle.jdbc.OracleDriver
#          jdbc.url: jdbc:oracle:thin:@localhost:49161:XE
#          jdbc.username: mytest
#          jdbc.password: m121212
#      - name: rdb
#        key: postgres1
#        properties:
#          jdbc.driverClassName: org.postgresql.Driver
#          jdbc.url: jdbc:postgresql://localhost:5432/postgres
#          jdbc.username: postgres
#          jdbc.password: 121212
#          threads: 1
#          commitSize: 3000
      - name: hbase
        properties:
          hbase.zookeeper.quorum: sangfor.abdi.node3,sangfor.abdi.node2,sangfor.abdi.node1
          hbase.zookeeper.property.clientPort: 2181
          zookeeper.znode.parent: /hbase-unsecure
      - name: es7 # name of the subdirectory under the conf directory
        hosts: 192.168.168.2:9300 # 127.0.0.1:9200 for rest mode
        properties:
          mode: transport # or rest
          # security.auth: test:123456 # only used for rest mode
          cluster.name: my_application
#      - name: kudu
#        key: kudu
#        properties:
#          kudu.master.address: 127.0.0.1 # ',' split multi address

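- 2. ElasticSearch table mapping file

# data source; must match a name configured under srcDataSources in the adapter's application.yml
dataSourceKey: defaultDS
# name of an instance configured in canal-server; different instances serve different business needs
destination: example
# group ID; leave it empty in tcp mode, otherwise data may not be received
groupId:
# ES mapping
esMapping:
  # ES index name
  _index: testsync2
  # unique identifier of the ES document, usually the primary key column of the table
  _id: _id
#  upsert: true
#  pk: id
  # SQL that maps each table column to its field name in the index; names must be unique
  sql: "select a.id as _id, a.name,a.age,a.age_2,a.message,a.insert_time from testsync as a"
#  objFields:
#    _labels: array:;
#  etlCondition: "where a.c_time>={}"
  commitBatch: 10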

- 3. Restart the service

bin/restart.sh

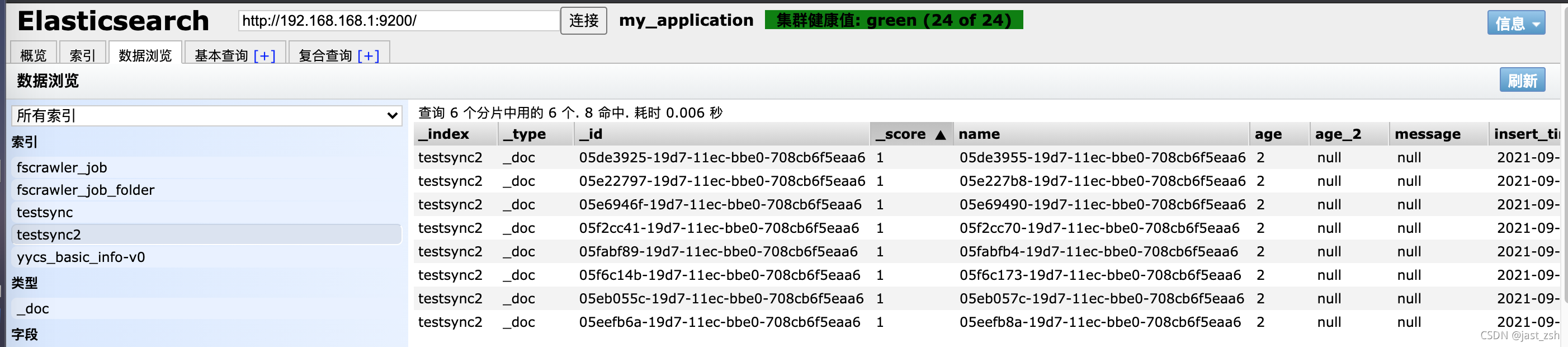

Insert data:

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

INSERT INTO testsync(id,name,age,insert_time) values(UUID(),UUID(),2,now());

Check the adapter log:

2021-09-20 13:53:07.279 [pool-1-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":[{"id":"05fabf89-19d7-11ec-bbe0-708cb6f5eaa6","name":"05fabfb4-19d7-11ec-bbe0-708cb6f5eaa6","age":2,"age_2":null,"message":null,"insert_time":1632117185000}],"database":"test2","destination":"example","es":1632117185000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["id"],"sql":"","table":"testsync","ts":1632117187278,"type":"INSERT"}

2021-09-20 13:53:07.286 [pool-1-thread-1] DEBUG c.a.o.c.client.adapter.hbase.service.HbaseSyncService - DML: {"data":[{"id":"05fabf89-19d7-11ec-bbe0-708cb6f5eaa6","name":"05fabfb4-19d7-11ec-bbe0-708cb6f5eaa6","age":2,"age_2":null,"message":null,"insert_time":1632117185000}],"database":"test2","destination":"example","es":1632117185000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["id"],"sql":"","table":"testsync","ts":1632117187278,"type":"INSERT"}

2021-09-20 13:53:07.287 [pool-1-thread-1] DEBUG c.a.o.canal.client.adapter.es.core.service.ESSyncService - DML: {"data":[{"id":"05fabf89-19d7-11ec-bbe0-708cb6f5eaa6","name":"05fabfb4-19d7-11ec-bbe0-708cb6f5eaa6","age":2,"age_2":null,"message":null,"insert_time":1632117185000}],"database":"test2","destination":"example","es":1632117185000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["id"],"sql":"","table":"testsync","ts":1632117187278,"type":"INSERT"}

Affected indexes: testsync2

Check the data in ElasticSearch

At this point, data is being written to both ElasticSearch and HBase successfully.
