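MongoDB Auto-Sharding solves the problems of storing massive amounts of data and scaling out dynamically. On its own, however, it still falls short of the high reliability and high availability that a real production environment requires.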

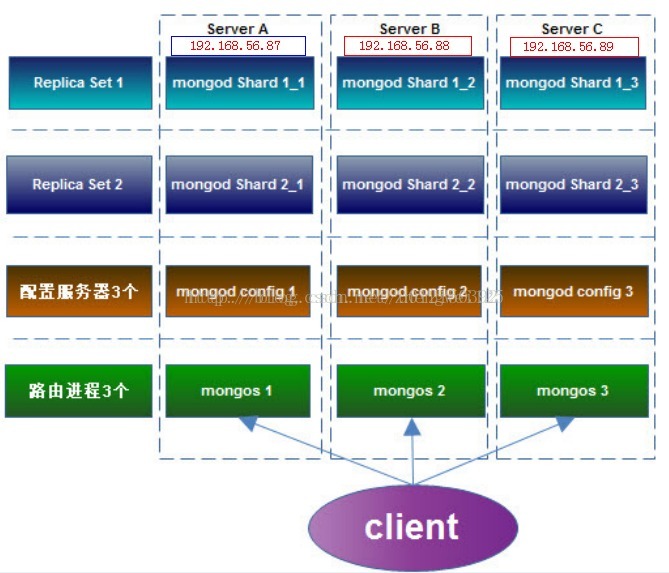

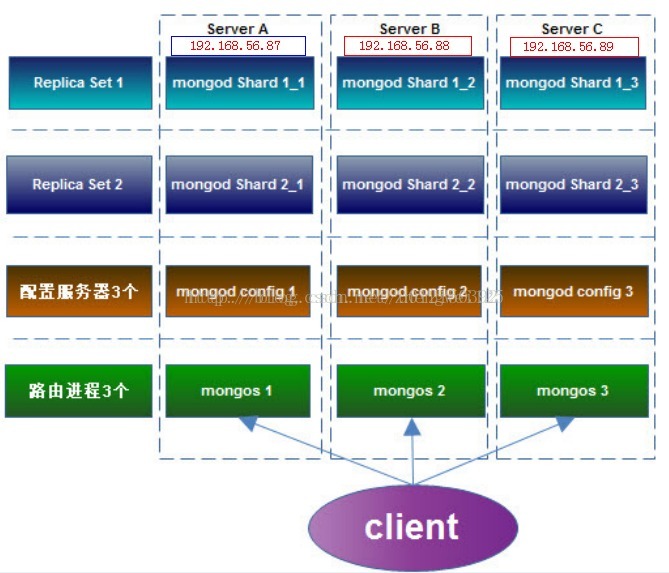

That is where the "Replica Sets + Sharding" solution comes in:

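Shard: use replica sets, so that every data node has a backup and can fail over and recover automatically.

Config: use 3 config servers to guarantee the integrity of the cluster metadata.

Route: use 3 router processes (mongos) to balance the load and improve client access performance.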

Below we set up a Replica Sets + Sharding environment. The architecture is shown in the following diagrams:

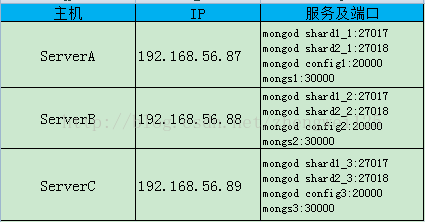

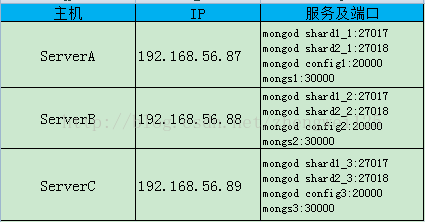

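The following ports are used, identically on every server:

shard1 replica set member (mongod): 27017

shard2 replica set member (mongod): 27018

config server (mongod --configsvr): 20000

router (mongos): 30000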

1. Create the data directories

On Server A:

[root@node1 /]# mkdir -p /data/shard1_1

[root@node1 /]# mkdir -p /data/shard2_1

[root@node1 /]# mkdir -p /data/config

On Server B:

[root@node1 /]# mkdir -p /data/shard1_2

[root@node1 /]# mkdir -p /data/shard2_2

[root@node1 /]# mkdir -p /data/config

On Server C:

[root@node1 /]# mkdir -p /data/shard1_3

[root@node1 /]# mkdir -p /data/shard2_3

[root@node1 /]# mkdir -p /data/config

[root@node1 /]# chmod -R 755 /data

2. Configure the replica sets

2.1 Configure the replica set used by shard1

On Server A, start the shard1 replica set member:

./mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data/shard1_1 --logpath /data/shard1_1/shard1_1.log --logappend --fork

On Server B, start the shard1 replica set member:

./mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data/shard1_2 --logpath /data/shard1_2/shard1_2.log --logappend --fork

On Server C, start the shard1 replica set member:

./mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data/shard1_3 --logpath /data/shard1_3/shard1_3.log --logappend --fork

2.2 Use mongo to connect to the mongod on port 27017 of any one of the machines and initialize the replica set "shard1":

[root@node1 bin]# ./mongo --port 27017

MongoDB shell version: 1.8.1

connecting to: 127.0.0.1:27017/test

> config = {_id: 'shard1', members: [
    {_id: 0, host: '192.168.56.87:27017'},
    {_id: 1, host: '192.168.56.88:27017'},
    {_id: 2, host: '192.168.56.89:27017'}
] }

> rs.initiate(config)

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}
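Typing the member list by hand makes it easy to repeat a member or leave a host out, and rs.initiate() will then either reject the config or build the set with the wrong members. A minimal sketch of a helper that generates the config document from a host list, assuming (as in this setup) that all members of one set listen on the same port. The name buildReplSetConfig is hypothetical, not a mongo shell built-in; the code is plain ES5 so it can be pasted into the 3.0 mongo shell.

```javascript
// Hypothetical helper, not a mongo shell built-in. Plain ES5, so it can be
// pasted into the 3.0 mongo shell as well as run under Node.js.
// Builds a replica set config document whose members get sequential,
// unique _ids (0, 1, 2, ...) and "host:port" addresses.
function buildReplSetConfig(setName, hosts, port) {
  var members = [];
  var seen = {};
  for (var i = 0; i < hosts.length; i++) {
    // Reject a repeated host instead of silently adding it twice.
    if (seen[hosts[i]]) {
      throw new Error('duplicate host: ' + hosts[i]);
    }
    seen[hosts[i]] = true;
    members.push({ _id: i, host: hosts[i] + ':' + port });
  }
  return { _id: setName, members: members };
}

// Usage in the mongo shell, connected to port 27017 (shard1):
// rs.initiate(buildReplSetConfig('shard1',
//     ['192.168.56.87', '192.168.56.88', '192.168.56.89'], 27017));
```

The same call with 'shard2' and port 27018 produces the shard2 config used in section 2.4.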

2.3 Configure the replica set used by shard2

On Server A, start the shard2 replica set member:

./mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data/shard2_1 --logpath /data/shard2_1/shard2_1.log --logappend --fork

On Server B, start the shard2 replica set member:

./mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data/shard2_2 --logpath /data/shard2_2/shard2_2.log --logappend --fork

On Server C, start the shard2 replica set member:

./mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data/shard2_3 --logpath /data/shard2_3/shard2_3.log --logappend --fork

2.4 Use mongo to connect to the mongod on port 27018 of any one of the machines and initialize the replica set "shard2":

[root@node1 bin]# ./mongo --port 27018

MongoDB shell version: 3.0.2

connecting to: 127.0.0.1:27018/test

> config = {_id: 'shard2', members: [
    {_id: 0, host: '192.168.56.87:27018'},
    {_id: 1, host: '192.168.56.88:27018'},
    {_id: 2, host: '192.168.56.89:27018'}
] }

> rs.initiate(config)

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}
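The reply above only says that the config was saved; the set still needs some time to elect a primary. Before adding it as a shard, one way to confirm it is up is to inspect rs.status(). A minimal sketch, assuming the members[].stateStr and members[].health fields of the rs.status() output; the name isReplSetReady is hypothetical.

```javascript
// Hypothetical helper, not a mongo shell built-in (plain ES5).
// Given the document returned by rs.status(), reports whether the set is
// ready: the expected number of members, all healthy, exactly one PRIMARY
// and every other member SECONDARY.
function isReplSetReady(status, expectedMembers) {
  if (!status || status.ok !== 1 || !status.members) {
    return false;
  }
  if (status.members.length !== expectedMembers) {
    return false;
  }
  var primaries = 0;
  for (var i = 0; i < status.members.length; i++) {
    var m = status.members[i];
    if (m.health !== 1) {
      return false; // member is unreachable from this node
    }
    if (m.stateStr === 'PRIMARY') {
      primaries++;
    } else if (m.stateStr !== 'SECONDARY') {
      return false; // e.g. still STARTUP2 or RECOVERING
    }
  }
  return primaries === 1;
}

// Usage in the mongo shell, connected to port 27018 (shard2):
// isReplSetReady(rs.status(), 3);
```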

3. Configure the three config servers

Run the following on Servers A, B and C to start the config servers:

./mongod --configsvr --dbpath /data/config --port 20000 --logpath /data/config/config.log --logappend --fork

4. Configure the three router processes (mongos) and point them at the config servers

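Run the following on Servers A, B and C to start mongos. The option --chunkSize 1 sets the chunk size to 1 MB instead of the default 64 MB, so that chunks split and migrate even with the small amount of test data used here:

./mongos --configdb 192.168.56.87:20000,192.168.56.88:20000,192.168.56.89:20000 --port 30000 --chunkSize 1 --logpath /data/mongos.log --logappend --fork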
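Every mongos should be started with the same config server list. A minimal sketch that builds the --configdb value once, so the three hosts cannot drift apart between servers (for example when generating a deployment script). It assumes the MongoDB 3.0 mirrored config server model, in which mongos takes either one or three config servers; the name buildConfigDb is hypothetical, and the function only builds a string, it does not start anything.

```javascript
// Hypothetical helper, not part of MongoDB (plain ES5).
// Builds the comma-separated value for mongos --configdb. Assumes the
// MongoDB 3.0 mirrored config server model, in which mongos takes
// either one or three config server addresses.
function buildConfigDb(hosts, port) {
  if (hosts.length !== 1 && hosts.length !== 3) {
    throw new Error('expected 1 or 3 config servers, got ' + hosts.length);
  }
  var addrs = [];
  for (var i = 0; i < hosts.length; i++) {
    addrs.push(hosts[i] + ':' + port);
  }
  return addrs.join(',');
}

// buildConfigDb(['192.168.56.87', '192.168.56.88', '192.168.56.89'], 20000)
// yields the same string as the --configdb argument in the mongos command.
```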

5. Configure the sharded cluster

Connect to the mongos process on port 30000 of any one of the machines, switch to the admin database and add the two replica sets as shards:

[root@node1 bin]# ./mongo --port 30000
MongoDB shell version: 3.0.2
connecting to: 127.0.0.1:30000/test
> use admin
switched to db admin
> db.runCommand({addshard:"shard1/192.168.56.87:27017,192.168.56.88:27017,192.168.56.89:27017"});
{ "shardAdded" : "shard1", "ok" : 1 }
> db.runCommand({addshard:"shard2/192.168.56.87:27018,192.168.56.88:27018,192.168.56.89:27018"});
{ "shardAdded" : "shard2", "ok" : 1 }

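Enable sharding on the test database and then on the test.users collection, using _id as the shard key (these commands are also run in the admin database):

> db.runCommand({ enablesharding:"test" })
> db.runCommand({ shardcollection: "test.users", key: { _id:1 }})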
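The two commands have to succeed in order, because shardcollection fails if sharding has not been enabled on the database first. A minimal sketch that runs both and stops at the first reply whose ok field is not 1; the name enableAndShard is hypothetical, and run stands for any function that sends a command to the admin database. The shell helpers sh.enableSharding("test") and sh.shardCollection("test.users", { _id: 1 }) perform the same two steps.

```javascript
// Hypothetical helper, not a mongo shell built-in (plain ES5).
// Runs enablesharding for the database part of `ns`, then shardcollection
// for `ns` with the given shard key. `run` is any function that sends one
// command document to the admin database and returns its reply.
// Stops after the first reply whose ok field is not 1.
function enableAndShard(run, ns, key) {
  var dot = ns.indexOf('.');
  if (dot <= 0) {
    throw new Error('expected a "db.collection" namespace, got ' + ns);
  }
  var cmds = [
    { enablesharding: ns.substring(0, dot) },
    { shardcollection: ns, key: key }
  ];
  var replies = [];
  for (var i = 0; i < cmds.length; i++) {
    var reply = run(cmds[i]);
    replies.push(reply);
    if (!reply || reply.ok !== 1) {
      break; // reply.errmsg usually explains the failure
    }
  }
  return replies;
}

// Usage in the mongo shell, connected to mongos:
// enableAndShard(function (cmd) {
//   return db.getSiblingDB('admin').runCommand(cmd);
// }, 'test.users', { _id: 1 });
```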

6. Verify that sharding works

Connect to the mongos process on port 30000 of any one of the machines and switch to the test database to add some test data:

>use test

mongos> db.users.stats()   // check the status: no data yet, and the collection sits on shard1 only

{

"sharded" : true,

"paddingFactorNote" : "paddingFactor is unused and unmaintained in 3.0. It remains hard coded to 1.0 for compatibility only.",

"userFlags" : 1,

"capped" : false,

"ns" : "test.users",

"count" : 0,

"numExtents" : 1,

"size" : 0,

"storageSize" : 8192,

"totalIndexSize" : 8176,

"indexSizes" : {

"_id_" : 8176

},

"avgObjSize" : 0,

"nindexes" : 1,

"nchunks" : 1,

"shards" : {

"shard1" : {

"ns" : "test.users",

"count" : 0,

"size" : 0,

"numExtents" : 1,

"storageSize" : 8192,

"lastExtentSize" : 8192,

"paddingFactor" : 1,

"paddingFactorNote" : "paddingFactor is unused and unmaintained in 3.0. It remains hard coded to 1.0 for compatibility only.",

"userFlags" : 1,

"capped" : false,

"nindexes" : 1,

"totalIndexSize" : 8176,

"indexSizes" : {

"_id_" : 8176

},

"ok" : 1,

"$gleStats" : {

"lastOpTime" : Timestamp(0, 0),

"electionId" : ObjectId("5551b2d34c58971a07cbc15d")

}

}

},

"ok" : 1

}

Insert 200,000 documents and check again:

for(var i=1;i<=200000;i++) db.users.insert({id:i,addr_1:"Beijing",addr_2:"Shanghai"});
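The loop above issues one insert per document. The mongo shell's insert() also accepts an array of documents, so a variant that sends the same 200,000 documents in batches needs far fewer round trips. A minimal sketch; insertInBatches is a hypothetical name and the batch size of 1000 in the usage line is an arbitrary choice.

```javascript
// Hypothetical helper, not a mongo shell built-in (plain ES5).
// Inserts documents shaped like those in the loop above (id, addr_1, addr_2)
// with ids 1..total, sending one coll.insert(array) call per batch instead
// of one call per document. Returns the number of insert calls made.
function insertInBatches(coll, total, batchSize) {
  if (batchSize < 1) {
    throw new Error('batchSize must be at least 1');
  }
  var batch = [];
  var calls = 0;
  for (var i = 1; i <= total; i++) {
    batch.push({ id: i, addr_1: 'Beijing', addr_2: 'Shanghai' });
    // Flush when the batch is full or after the last document.
    if (batch.length === batchSize || i === total) {
      coll.insert(batch);
      calls++;
      batch = [];
    }
  }
  return calls;
}

// Usage in the mongo shell, connected to mongos after "use test":
// insertInBatches(db.users, 200000, 1000);
```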

mongos> db.users.stats()

{

"sharded" : true,

"paddingFactorNote" : "paddingFactor is unused and unmaintained in 3.0. It remains hard coded to 1.0 for compatibility only.",

"userFlags" : 1,

"capped" : false,

"ns" : "test.users",

"count" : 200000,

"numExtents" : 14,

"size" : 22400000,

"storageSize" : 45015040,

"totalIndexSize" : 6532624,

"indexSizes" : {

"_id_" : 6532624

},

"avgObjSize" : 112,

"nindexes" : 1,

"nchunks" : 41,

"shards" : {

"shard1" : {

"ns" : "test.users",

"count" : 95171,

"size" : 10659152,

"avgObjSize" : 112,

"numExtents" : 7,

"storageSize" : 22507520,

"lastExtentSize" : 11325440,

"paddingFactor" : 1,

"paddingFactorNote" : "paddingFactor is unused and unmaintained in 3.0. It remains hard coded to 1.0 for compatibility only.",

"userFlags" : 1,

"capped" : false,

"nindexes" : 1,

"totalIndexSize" : 3106880,

"indexSizes" : {

"_id_" : 3106880

},

"ok" : 1,

"$gleStats" : {

"lastOpTime" : Timestamp(0, 0),

"electionId" : ObjectId("5551b2d34c58971a07cbc15d")

}

},

"shard2" : {

"ns" : "test.users",

"count" : 104829,

"size" : 11740848,

"avgObjSize" : 112,

"numExtents" : 7,

"storageSize" : 22507520,

"lastExtentSize" : 11325440,

"paddingFactor" : 1,

"paddingFactorNote" : "paddingFactor is unused and unmaintained in 3.0. It remains hard coded to 1.0 for compatibility only.",

"userFlags" : 1,

"capped" : false,

"nindexes" : 1,

"totalIndexSize" : 3425744,

"indexSizes" : {

"_id_" : 3425744

},

"ok" : 1,

"$gleStats" : {

"lastOpTime" : Timestamp(0, 0),

"electionId" : ObjectId("5551b61a6b688ae8f59852a0")

}

}

},

"ok" : 1

}

Both shard1 and shard2 now hold data, so the sharded cluster is working.

Check how many documents shard1 holds:

[root@node1 bin]# ./mongo 192.168.56.87:27017

MongoDB shell version: 3.0.2

connecting to: 27017

shard1:PRIMARY> use test

switched to db test

shard1:PRIMARY> db.users.count()

95171 -- documents on shard1

Check how many documents shard2 holds:

connecting to: 192.168.56.87:27018/test

Server has startup warnings:

shard2:PRIMARY> use test

switched to db test

shard2:PRIMARY> db.users.count()

104829 -- documents on shard2

95171 + 104829 = 200000, so the data has been split between shard1 and shard2.
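The same check can be done from mongos in one step by reading the per-shard counts out of db.users.stats(). A minimal sketch, assuming the top-level count field and the shards.<name>.count fields shown in the stats output above; shardCountSummary is a hypothetical name. The shell's built-in db.users.getShardDistribution() prints a similar per-shard breakdown. With the numbers from this run, the sketch reports a total of 200000 and shares of about 47.6% (shard1) and 52.4% (shard2).

```javascript
// Hypothetical helper, not a mongo shell built-in (plain ES5).
// Sums the per-shard "count" values from db.collection.stats() run through
// mongos, checks the sum against the collection-level count and reports
// each shard's share of the total.
function shardCountSummary(stats) {
  var total = 0;
  var name;
  for (name in stats.shards) {
    total += stats.shards[name].count;
  }
  var shares = {};
  for (name in stats.shards) {
    shares[name] = total === 0 ? 0 : stats.shards[name].count / total;
  }
  return { total: total, matchesCollection: total === stats.count, shares: shares };
}

// Usage in the mongo shell, connected to mongos after "use test":
// shardCountSummary(db.users.stats());
```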
