Problem Description

Most of us know that in the game called DotA (Defense of the Ancients), Pudge is a strong hero in the early game. As the game approaches its end, however, Pudge is no longer a strong hero.

So Pudge’s teammates give him a new assignment—Eat the Trees!

The trees are in a rectangle N * M cells in size and each of the cells either has exactly one tree or has nothing at all. And what Pudge needs to do is to eat all trees that are in the cells.

There are several rules Pudge must follow:

I. Pudge must eat the trees by choosing a circuit, and he will then eat all trees that are on the chosen circuit.

II. A cell that does not contain a tree is unreachable, i.e. every cell that the chosen circuit passes through must contain a tree, and once the circuit is chosen, the trees in the cells on the circuit disappear.

III. Pudge may choose one or more circuits to eat the trees.

Now Pudge has a question: how many ways are there to eat the trees?

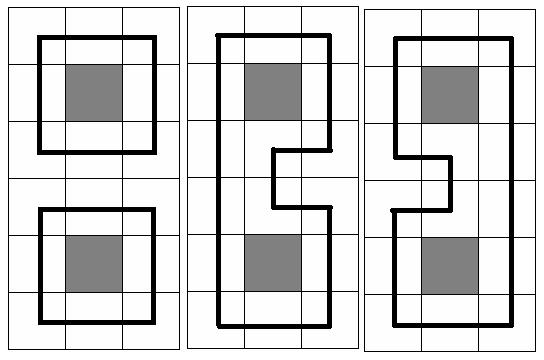

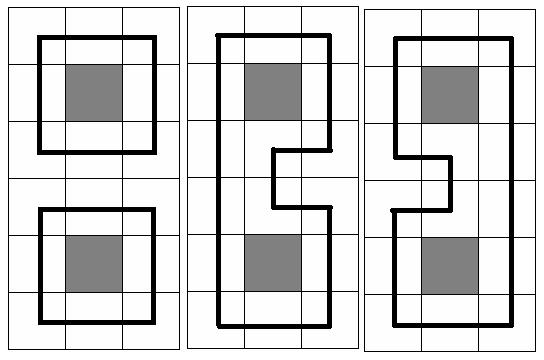

In the picture, three samples are given for N = 6 and M = 3 (a gray square means there is no tree in the cell, and the bold black line marks the chosen circuit(s)).

Input

The input consists of several test cases. The first line of the input is the number of the cases. There are no more than 10 cases.

For each case, the first line contains the integer numbers N and M, 1<=N, M<=11. Each of the next N lines contains M numbers (either 0 or 1) separated by a space. Number 0 means a cell which has no trees and number 1 means a cell that has exactly one tree.

Output

For each case, you should print the desired number of ways in one line. It is guaranteed that it does not exceed 2^63 - 1. Use the format in the sample.

Sample Input

2
6 3
1 1 1
1 0 1
1 1 1
1 1 1
1 0 1
1 1 1
2 4
1 1 1 1
1 1 1 1

Sample Output

Case 1: There are 3 ways to eat the trees.
Case 2: There are 2 ways to eat the trees.

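This is a plug DP problem. It brought back the dread I felt when studying game theory, QAQ.

Let f[i][j][S] be the number of ways such that, after cell (i,j) has been processed, the set of plugs crossing the contour line is S, QWQ.

The row change is f[i][0][S<<1]=f[i-1][m][S]: the states reached at the end of the previous row are enumerated to initialise the current row. Only states S with bit m clear (no plug sticking out past the right border) are carried over, and the shift leaves bit 0, the left plug of the first cell, empty.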
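The row change can be looked at in isolation. The following is a minimal sketch, assuming the states of one layer are stored in a vector of size 1<<(m+1); the helper name `shiftRow` is hypothetical and does not appear in the original code.

```cpp
#include <vector>

// Hypothetical helper: carry the states at the end of one row over to the
// start of the next row. endOfRow must have 1 << (m + 1) entries.
std::vector<long long> shiftRow(const std::vector<long long>& endOfRow, int m) {
    std::vector<long long> startOfRow(endOfRow.size(), 0);
    int half = 1 << m;  // masks below this value have bit m clear
    for (int s = 0; s < half; ++s) {
        // Down plugs of row i-1 become up plugs of row i, one bit higher.
        startOfRow[s << 1] = endOfRow[s];
    }
    return startOfRow;
}
```

States with bit m set are never read, so any count stored there is dropped: a plug cannot leave the grid through the right border.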

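For a cell with a tree, write x=1<<(j-1) and y=1<<j for the two bits that touch cell (i,j). Then f[i][j][k]+=f[i][j-1][k^x^y] always applies: flipping both bits covers a cell that opens two new plugs, closes two incoming plugs, or moves a single plug to the other bit.

f[i][j][k]+=f[i][j-1][k] applies in addition when exactly one of the two bits is set in k: the single plug keeps its bit, so the state is inherited from the previous cell.

Everything above is for cells that are not obstacles; obstacles are handled next.

An obstacle cell (i,j) can carry no plug, so when neither bit is set the state is inherited from the previous cell, f[i][j][S]=f[i][j-1][S]; every other state gets 0.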
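To make the case analysis concrete, here is a small sketch that lists which states at (i,j-1) contribute to state k at (i,j). The function `predecessors` and its parameters are assumptions made for illustration, not part of the original solution.

```cpp
#include <vector>

// Hypothetical helper: states at (i, j-1) whose counts are added into
// state k at (i, j). Columns are numbered from 1, as in the post.
std::vector<int> predecessors(int k, int j, bool hasTree) {
    int x = 1 << (j - 1);
    int y = 1 << j;
    bool hx = (k & x) != 0;
    bool hy = (k & y) != 0;
    std::vector<int> prev;
    if (hasTree) {
        prev.push_back(k ^ x ^ y);        // flip both bits: always allowed
        if (hx != hy) prev.push_back(k);  // exactly one bit set: plug keeps its bit
    } else if (!hx && !hy) {
        prev.push_back(k);                // obstacle: no plug may touch the cell
    }
    return prev;
}
```

For j = 1 and a tree cell, state 1 is fed by states 2 and 1, state 3 only by state 0, and state 0 only by state 3. For an obstacle, state 0 is fed by state 0 and every state with one of the two bits set has no predecessor.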

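The answer is f[n][m][0]: after cell (n,m) has been processed, no plug may remain (because the contour line has moved down past the whole grid).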

code:

#include <cctype>
#include <cstdio>
#include <cstring>

typedef long long ll;

// Fast integer reader; accepts an optional leading '-'.
template<typename _t>
inline _t read(){
    _t x=0,f=1;
    char ch=getchar();
    for(;!isdigit(ch);ch=getchar())if(ch=='-')f=-f;
    for(;isdigit(ch);ch=getchar())x=x*10+(ch^48);
    return x*f;
}

int n,m,a[13][13],Tcase,num;
ll f[13][13][1<<13];  // f[i][j][S]: ways with plug set S after cell (i,j)

void Dp(){
    memset(f,0,sizeof f);
    f[0][m][0]=1;
    int full = 1<<(m+1);  // m+1 plug bits on the contour line
    for(int i=1;i<=n;i++){
        // Row change: keep only states with bit m clear, shifted by one.
        for(int j=0;j<(full>>1);j++)
            f[i][0][j<<1]=f[i-1][m][j];
        for(int j=1;j<=m;j++)
            for(int k=0;k<full;k++){
                int x = 1<<(j-1);
                int y = 1<<(j);
                if(a[i][j]){
                    // Tree: flipping both bits is always a valid predecessor.
                    f[i][j][k]+=f[i][j-1][k^x^y];
                    if((k&x)&&(k&y))continue;
                    if(!(k&x)&&!(k&y))continue;
                    // Exactly one bit set: the plug may also keep its bit.
                    f[i][j][k]+=f[i][j-1][k];
                }
                else{
                    // Obstacle: only states without a plug on either bit survive.
                    if(!(k&x)&&!(k&y))f[i][j][k]+=f[i][j-1][k];
                }
            }
    }
}

int main(){
    Tcase=read<int>();
    while(Tcase--){
        n=read<int>(),m=read<int>();
        for(int i=1;i<=n;i++)
            for(int j=1;j<=m;j++)
                a[i][j]=read<int>();
        Dp();
        printf("Case %d: There are %lld ways to eat the trees.\n",++num,f[n][m][0]);
    }
    return 0;
}
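The table f[13][13][1<<13] in the code above takes roughly 11 MB, although each layer f[i][j] only reads the layer just before it. A possible variant, sketched here under the assumption that the grid is passed in as a non-empty 0-indexed vector of rows, keeps just two layers. The function name `countWays` is hypothetical; the recurrence is the same one described in the post.

```cpp
#include <algorithm>
#include <vector>

// Sketch of the same plug DP with two rolling layers instead of a full table.
// grid[i][j] is 1 for a tree and 0 for an empty cell (0-indexed, non-empty).
long long countWays(const std::vector<std::vector<int>>& grid) {
    int n = static_cast<int>(grid.size());
    int m = static_cast<int>(grid[0].size());
    int full = 1 << (m + 1);
    std::vector<long long> cur(full, 0), nxt(full, 0);
    cur[0] = 1;  // before the first row there are no plugs
    for (int i = 0; i < n; ++i) {
        // Row change: keep states with bit m clear and shift them by one bit.
        std::fill(nxt.begin(), nxt.end(), 0);
        for (int s = 0; s < (full >> 1); ++s) nxt[s << 1] = cur[s];
        cur.swap(nxt);
        for (int j = 1; j <= m; ++j) {
            int x = 1 << (j - 1);
            int y = 1 << j;
            std::fill(nxt.begin(), nxt.end(), 0);
            for (int k = 0; k < full; ++k) {
                bool hx = (k & x) != 0;
                bool hy = (k & y) != 0;
                if (grid[i][j - 1]) {
                    nxt[k] += cur[k ^ x ^ y];      // flip both bits
                    if (hx != hy) nxt[k] += cur[k];  // single plug keeps its bit
                } else if (!hx && !hy) {
                    nxt[k] = cur[k];               // obstacle: no plugs allowed
                }
            }
            cur.swap(nxt);
        }
    }
    return cur[0];  // no plug may remain after the last cell
}
```

Called on the two grids from the sample input, this returns 3 and 2, matching the sample output. With M at most 11, each layer holds at most 4096 counters.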
