Series preface

References:

- RNNLM - Recurrent Neural Network Language Modeling Toolkit

- Recurrent Neural Network Based Language Model

- Extensions of Recurrent Neural Network Language Model

- Strategies for Training Large Scale Neural Network Language Models

- Statistical Language Models Based on Neural Networks

- A Guide to Recurrent Neural Networks and Backpropagation

- A Neural Probabilistic Language Model

- Learning Long-Term Dependencies with Gradient Descent is Difficult

- Can Artificial Neural Networks Learn Language Models?

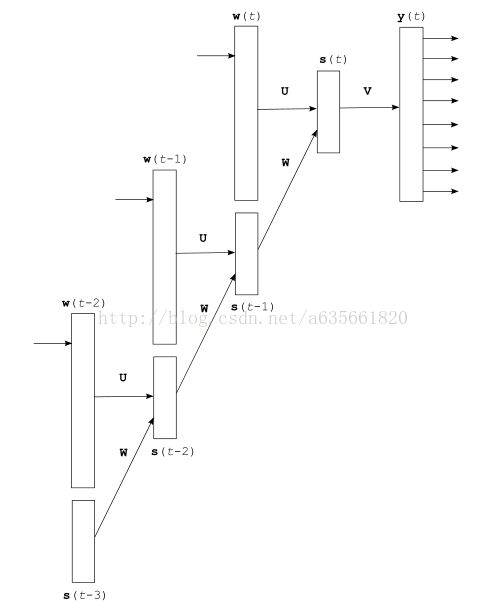

前一篇是网络的前向计算,这篇是网络的学习算法,学习算法我在rnnlm原理及BPTT数学推导中介绍了。学习算法主要更新的地方在网络中的权值,这个最终版本的网络的权值大体可以分为三个部分来看:第一个是网络中类似输入到隐层的权值,隐层到输出层的权值。第二个是网络中ME的部分,即输入层到输出层的权值部分。第三个来看是BPTT的部分。我先把整个网络的ME+Rnn图放上来,然后再贴带注释的源码,结构图如下:

下面代码还是分成两部分来看,一部分是更新非BPTT部分,一个是更新BPTT部分,如下:

//Backpropagate the error and update the network weights

void CRnnLM::learnNet(int last_word, int word)

{

//word is the word to be predicted; last_word is the word currently at the input layer

int a, b, c, t, step;

real beta2, beta3;

//alpha is the learning rate, initially 0.1; beta is initially 0.0000001

beta2=beta*alpha;

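//I do not fully understand the original comment on the next line; explanations are welcome. In the code, beta3 is the decay coefficient applied to the direct (ME) weights syn_d, and for now it simply equals beta2

beta3=beta2*1; //beta3 can be possibly larger than beta2, as that is useful on small datasets (if the final model is to be interpolated with backoff model) - todo in the future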

if (word==-1) return;

//compute error vectors: the output-layer errors, restricted to the words in the class that word belongs to

for (c=0; c<class_cn[vocab[word].class_index]; c++) {

a=class_words[vocab[word].class_index][c];

neu2[a].er=(0-neu2[a].ac);

}

neu2[word].er=(1-neu2[word].ac); //word part

//flush error

for (a=0; a<layer1_size; a++) neu1[a].er=0;

for (a=0; a<layerc_size; a++) neuc[a].er=0;

//Compute the error vector for the class part of the output layer

for (a=vocab_size; a<layer2_size; a++) {

neu2[a].er=(0-neu2[a].ac);

}

neu2[vocab[word].class_index+vocab_size].er=(1-neu2[vocab[word].class_index+vocab_size].ac); //class part

//Compute the indices of the features in syn_d, as in the forward computation; this is for the word part of ME

if (direct_size>0) { //learn direct connections between words

if (word!=-1) {

unsigned long long hash[MAX_NGRAM_ORDER];

for (a=0; a<direct_order; a++) hash[a]=0;

for (a=0; a<direct_order; a++) {

b=0;

if (a>0) if (history[a-1]==-1) break;

hash[a]=PRIMES[0]*PRIMES[1]*(unsigned long long)(vocab[word].class_index+1);

for (b=1; b<=a; b++) hash[a]+=PRIMES[(a*PRIMES[b]+b)%PRIMES_SIZE]*(unsigned long long)(history[b-1]+1);

hash[a]=(hash[a]%(direct_size/2))+(direct_size)/2;

}

//Update the ME weights; this part is for the words

for (c=0; c<class_cn[vocab[word].class_index]; c++) {

a=class_words[vocab[word].class_index][c];

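//The update rule is easy to derive with gradient ascent, and it is the same as for the RNN

//See my derivation post for details. The difference here is that the weights are stored in a one-dimensional array, so the code looks slightly different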

for (b=0; b<direct_order; b++) if (hash[b]) {

syn_d[hash[b]]+=alpha*neu2[a].er - syn_d[hash[b]]*beta3;

hash[b]++;

hash[b]=hash[b]%direct_size;

} else break;

}

}

}

//Compute the n-gram features, this time for the classes

//learn direct connections to classes

if (direct_size>0) { //learn direct connections between words and classes

unsigned long long hash[MAX_NGRAM_ORDER];

for (a=0; a<direct_order; a++) hash[a]=0;

for (a=0; a<direct_order; a++) {

b=0;

if (a>0) if (history[a-1]==-1) break;

hash[a]=PRIMES[0]*PRIMES[1];

for (b=1; b<=a; b++) hash[a]+=PRIMES[(a*PRIMES[b]+b)%PRIMES_SIZE]*(unsigned long long)(history[b-1]+1);

hash[a]=hash[a]%(direct_size/2);

}

//As above, update the ME weights, this time for the classes

for (a=vocab_size; a<layer2_size; a++) {

for (b=0; b<direct_order; b++) if (hash[b]) {

syn_d[hash[b]]+=alpha*neu2[a].er - syn_d[hash[b]]*beta3;

hash[b]++;

} else break;

}

}

//

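//With a compression layer: update sync and syn1

if (layerc_size>0) {

//Propagate the output-layer error to the compression layer, i.e. compute V × eo^T (see the diagram for the notation)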

matrixXvector(neuc, neu2, sync, layerc_size, class_words[vocab[word].class_index][0], class_words[vocab[word].class_index][0]+class_cn[vocab[word].class_index], 0, layerc_size, 1);

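//It is easier to think of the one-dimensional weight array as a two-dimensional weight matrix

//Note that t locates a row of the parameter matrix, and the index a below is the column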

t=class_words[vocab[word].class_index][0]*layerc_size;

for (c=0; c<class_cn[vocab[word].class_index]; c++) {

b=class_words[vocab[word].class_index][c];

//For the update rule, see the RNN derivation in my other post

//L2 regularization is applied only once every 10 training steps

if ((counter%10)==0) //regularization is done every 10. step

for (a=0; a<layerc_size; a++) sync[a+t].weight+=alpha*neu2[b].er*neuc[a].ac - sync[a+t].weight*beta2;

else

for (a=0; a<layerc_size; a++) sync[a+t].weight+=alpha*neu2[b].er*neuc[a].ac;

//Move down one row in the parameter matrix

t+=layerc_size;

}

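//Propagate the output-layer error to the compression layer, i.e. compute V × eo^T (see the diagram for the notation)

//This time from the class part of the output layer to the compression layer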

matrixXvector(neuc, neu2, sync, layerc_size, vocab_size, layer2_size, 0, layerc_size, 1); //propagates errors 2->c for classes

//Again this locates a row of the weight matrix; a below is the column

c=vocab_size*layerc_size;

//Same update rule as above, applied to a different part of the weight array

for (b=vocab_size; b<layer2_size; b++) {

if ((counter%10)==0) {

for (a=0; a<layerc_size; a++) sync[a+c].weight+=alpha*neu2[b].er*neuc[a].ac - sync[a+c].weight*beta2; //weight c->2 update

}

else {

for (a=0; a<layerc_size; a++) sync[a+c].weight+=alpha*neu2[b].er*neuc[a].ac; //weight c->2 update

}

//Next row

c+=layerc_size;

}

//This is the error vector, e_hj(t) in the paper

for (a=0; a<layerc_size; a++) neuc[a].er=neuc[a].er*neuc[a].ac*(1-neuc[a].ac); //error derivation at compression layer

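//Same idea below: pass the error further down by computing syn1 (viewed as 2-D) × ec^T, where ec is the compression-layer error vector

matrixXvector(neu1, neuc, syn1, layer1_size, 0, layerc_size, 0, layer1_size, 1); //propagates errors c->1

//Update syn1; see the corresponding formula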

for (b=0; b<layerc_size; b++) {

for (a=0; a<layer1_size; a++) syn1[a+b*layer1_size].weight+=alpha*neuc[b].er*neu1[a].ac; //weight 1->c update

}

}

else //No compression layer: update syn1

{

//Same as the case above, only without the compression layer

matrixXvector(neu1, neu2, syn1, layer1_size, class_words[vocab[word].class_index][0], class_words[vocab[word].class_index][0]+class_cn[vocab[word].class_index], 0, layer1_size, 1);

t=class_words[vocab[word].class_index][0]*layer1_size;

for (c=0; c<class_cn[vocab[word].class_index]; c++) {

b=class_words[vocab[word].class_index][c];

if ((counter%10)==0) //regularization is done every 10. step

for (a=0; a<layer1_size; a++) syn1[a+t].weight+=alpha*neu2[b].er*neu1[a].ac - syn1[a+t].weight*beta2;

else

for (a=0; a<layer1_size; a++) syn1[a+t].weight+=alpha*neu2[b].er*neu1[a].ac;

t+=layer1_size;

}

//

matrixXvector(neu1, neu2, syn1, layer1_size, vocab_size, layer2_size, 0, layer1_size, 1); //propagates errors 2->1 for classes

c=vocab_size*layer1_size;

for (b=vocab_size; b<layer2_size; b++) {

if ((counter%10)==0) { //regularization is done every 10. step

for (a=0; a<layer1_size; a++) syn1[a+c].weight+=alpha*neu2[b].er*neu1[a].ac - syn1[a+c].weight*beta2; //weight 1->2 update

}

else {

for (a=0; a<layer1_size; a++) syn1[a+c].weight+=alpha*neu2[b].er*neu1[a].ac; //weight 1->2 update

}

c+=layer1_size;

}

}

//

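//At this point the weights down to the hidden layer have all been updated

///

//This is the plain RNN case: from time t the network is unrolled back only to s(t-1)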

if (bptt<=1) { //bptt==1 -> normal BP

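//Compute the hidden-layer error, i.e. eh(t)

for (a=0; a<layer1_size; a++) neu1[a].er=neu1[a].er*neu1[a].ac*(1-neu1[a].ac); //error derivation at layer 1

//This part updates the weights between the word part of the input layer and the hidden layer

//Note that the word part uses 1-of-V coding,

//so the update loop looks a bit different. All updates below apply L2 regularization only once every 10 training steps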

a=last_word;

if (a!=-1) {

if ((counter%10)==0)

for (b=0; b<layer1_size; b++) syn0[a+b*layer0_size].weight+=alpha*neu1[b].er*neu0[a].ac - syn0[a+b*layer0_size].weight*beta2;

else

//We can view these weights as a matrix

//b is the row and a is the column. The full update without L2 regularization would be:

/*for (b=0; b<layer1_size; b++) {

for (k=0; k<vocab_size; k++) {

syn0[k+b*layer0_size].weight+=alpha*neu1[b].er*neu0[k].ac;

}

}*/

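//But neu0[k].ac==1 only when k==a, so to speed up the loop the update reduces to the single line below

//The code further down follows the same reasoning. In fact neu0[a].ac could be dropped here, since its value is 1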

for (b=0; b<layer1_size; b++) syn0[a+b*layer0_size].weight+=alpha*neu1[b].er*neu0[a].ac;

}

//This part updates the weights between s(t-1) and the hidden layer

if ((counter%10)==0) {

for (b=0; b<layer1_size; b++) for (a=layer0_size-layer1_size; a<layer0_size; a++) syn0[a+b*layer0_size].weight+=alpha*neu1[b].er*neu0[a].ac - syn0[a+b*layer0_size].weight*beta2;

}

else {

for (b=0; b<layer1_size; b++) for (a=layer0_size-layer1_size; a<layer0_size; a++) syn0[a+b*layer0_size].weight+=alpha*neu1[b].er*neu0[a].ac;

}

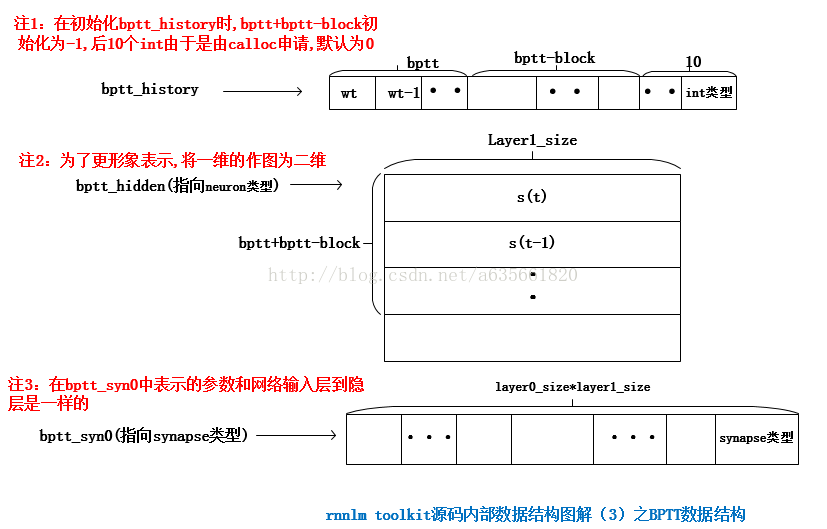

}下面是更新BPTT的部分, 还是先把BPTT算法部分的数据结构图上来,这样更方便对照:
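The non-BPTT branch above updates only one column of the word part of `syn0`, because the input word is 1-of-V coded. A small sketch can make that shortcut concrete by comparing the full loop with the single-column version. The function names `dense_update` and `sparse_update` are hypothetical, and a flat `std::vector<double>` stands in for the `syn0` array, using the same `k + b*layer0_size` indexing as the listing.

```cpp
#include <cstddef>
#include <vector>

// Full update over every word column: w[k + b*layer0_size] += alpha*er[b]*x[k].
// `x` holds the activations of the word part of the input layer.
void dense_update(std::vector<double>& w, std::size_t layer0_size,
                  const std::vector<double>& er, const std::vector<double>& x,
                  double alpha) {
    for (std::size_t b = 0; b < er.size(); ++b)
        for (std::size_t k = 0; k < x.size(); ++k)
            w[k + b * layer0_size] += alpha * er[b] * x[k];
}

// Shortcut for a one-hot input: only column `word` has x[word] == 1, so all
// other columns would receive a zero update and can be skipped.
void sparse_update(std::vector<double>& w, std::size_t layer0_size,
                   const std::vector<double>& er, std::size_t word,
                   double alpha) {
    for (std::size_t b = 0; b < er.size(); ++b)
        w[word + b * layer0_size] += alpha * er[b];
}
```

With a one-hot `x`, both functions leave `w` in the same state, but the sparse version touches `layer1_size` weights instead of `layer1_size * vocab_size`. The BPTT branch uses the same shortcut when it writes into `bptt_syn0`.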

else //BPTT

{

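//In the BPTT part the weight changes are kept in bptt_syn0 instead of being written to syn0 directly

//The neuron values are stored in bptt_hidden

//Copy the hidden-layer neurons into bptt_hidden; this is a backup of neu1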

for (b=0; b<layer1_size; b++) bptt_hidden[b].ac=neu1[b].ac;

for (b=0; b<layer1_size; b++) bptt_hidden[b].er=neu1[b].er;

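//The first condition, on bptt_block, throttles BPTT: we cannot run the deep BPTT update after every single training word,

//because that would make each training step too expensive

//word==0 means the current sentence has ended

//Alternatively, when the independent switch is non-zero and the next word is the end of a sentence, BPTT is run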

if (((counter%bptt_block)==0) || (independent && (word==0))) {

//step is the number of time steps unrolled back from the current time t

for (step=0; step<bptt+bptt_block-2; step++) {

//Compute the hidden-layer error vector, i.e. eh(t)

for (a=0; a<layer1_size; a++) neu1[a].er=neu1[a].er*neu1[a].ac*(1-neu1[a].ac); //error derivation at layer 1

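//This updates the weights of the vocab_size part of the input layer

//bptt_history stores, from index 0 onward, wt, wt-1, wt-2, ...

//For example, when step == 0 this is equivalent to ordinary backpropagation, and bptt_history[step] is the vocab index of the current input word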

a=bptt_history[step];

if (a!=-1)

for (b=0; b<layer1_size; b++) {

//The word part of the input layer is 1-of-V coded, so neu0[a].ac==1 and the factor is omitted here

//When step == 0, bptt_syn0 plays the role of the original syn0

bptt_syn0[a+b*layer0_size].weight+=alpha*neu1[b].er;

}

//Prepare to pass the error from the hidden layer to the state layer

for (a=layer0_size-layer1_size; a<layer0_size; a++) neu0[a].er=0;

//Propagate the error from the hidden layer to the state layer

matrixXvector(neu0, neu1, syn0, layer0_size, 0, layer1_size, layer0_size-layer1_size, layer0_size, 1); //propagates errors 1->0

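//Update the weights between the state layer and the hidden layer

//The error is propagated first because the weights change once they are updated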

for (b=0; b<layer1_size; b++) for (a=layer0_size-layer1_size; a<layer0_size; a++) {

//neu0[a].er += neu1[b].er * syn0[a+b*layer0_size].weight;

bptt_syn0[a+b*layer0_size].weight+=alpha*neu1[b].er*neu0[a].ac;

}

//todo: as I understand it, this copies s(t-1) from the paper into s(t), ready for the next iteration

for (a=0; a<layer1_size; a++) { //propagate error from time T-n to T-n-1

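//The error of s(t-1) comes from two sources (clearer in the diagram): 1. the error passed back from s(t); 2. the error passed down from the output layer at time t-1. Thanks to reader qq_16478429 for pointing this out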

neu1[a].er=neu0[a+layer0_size-layer1_size].er + bptt_hidden[(step+1)*layer1_size+a].er;

}

//Copy s(t-2) from the history into s(t-1)

if (step<bptt+bptt_block-3)

for (a=0; a<layer1_size; a++) {

neu1[a].ac=bptt_hidden[(step+1)*layer1_size+a].ac;

neu0[a+layer0_size-layer1_size].ac=bptt_hidden[(step+2)*layer1_size+a].ac;

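}

Here I have to interrupt the code once more and walk the two key loops above through increasing values of step. Assuming the current time is t, the loops unroll as follows: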

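step = 0:

After the first for loop:

neu1.er = neu0.er + bptt_hidden.er -> s(t) = s(t-1) + s(t-1)

After the second for loop:

neu1.ac = bptt_hidden.ac -> s(t) = s(t-1)

neu0.ac = bptt_hidden.ac -> s(t-1) = s(t-2)

step = 1:

After the first for loop:

neu1.er = neu0.er + bptt_hidden.er -> s(t-1) = s(t-2) + s(t-2)

After the second for loop:

neu1.ac = bptt_hidden.ac -> s(t-1) = s(t-2)

neu0.ac = bptt_hidden.ac -> s(t-2) = s(t-3)

The steps listed above give a rough idea of the principle. Note that the s(t-1) held in neu0 and the s(t-1) held in bptt_hidden are different: the er of the former is passed back from the hidden layer, while the er of the latter was passed down at time t-1 from the layer above (the compression layer, or the output layer when there is no compression layer).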
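The walk-through can also be condensed into a recursion over τ = step. This is a restatement of the two loops rather than a formula quoted from the papers, and the symbols are chosen here for illustration only: e(t−τ) is `neu1.er` at the start of iteration τ, s(t−τ) is `neu1.ac`, W is the recurrent block of `syn0` (columns `layer0_size-layer1_size` to `layer0_size`), and e_out(t−τ−1) is the error stored in `bptt_hidden[(τ+1)*layer1_size+a].er`. Any clipping that `matrixXvector` may apply internally is not shown.

```latex
\begin{aligned}
\delta(t-\tau) &= e(t-\tau) \odot s(t-\tau) \odot \bigl(1 - s(t-\tau)\bigr) \\
e(t-\tau-1) &= W^{\top}\,\delta(t-\tau) + e_{\mathrm{out}}(t-\tau-1)
\end{aligned}
```

The first line is the "error derivation at layer 1" statement at the top of the loop. The second line is the first for loop of the walk-through: the error arriving from the later time step plus the error that the upper layer delivered at the earlier time step.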

Now back to the interrupted code:

}

//Clear the er values stored in the history

for (a=0; a<(bptt+bptt_block)*layer1_size; a++) {

bptt_hidden[a].er=0;

}

//Restore neu1 here, since neu1 was reused repeatedly inside the loop

for (b=0; b<layer1_size; b++) neu1[b].ac=bptt_hidden[b].ac; //restore hidden layer after bptt

//Apply the weight changes accumulated during BPTT to syn0

for (b=0; b<layer1_size; b++) { //copy temporary syn0

//BPTT is done: add the changes accumulated in bptt_syn0 to syn0; this is the state-layer part

if ((counter%10)==0) {

for (a=layer0_size-layer1_size; a<layer0_size; a++) {

syn0[a+b*layer0_size].weight+=bptt_syn0[a+b*layer0_size].weight - syn0[a+b*layer0_size].weight*beta2;

bptt_syn0[a+b*layer0_size].weight=0;

}

}

else {

for (a=layer0_size-layer1_size; a<layer0_size; a++) {

syn0[a+b*layer0_size].weight+=bptt_syn0[a+b*layer0_size].weight;

bptt_syn0[a+b*layer0_size].weight=0;

}

}

//BPTT is done: add the changes accumulated in bptt_syn0 to syn0; this is the word part

if ((counter%10)==0) {

for (step=0; step<bptt+bptt_block-2; step++) if (bptt_history[step]!=-1) {

syn0[bptt_history[step]+b*layer0_size].weight+=bptt_syn0[bptt_history[step]+b*layer0_size].weight - syn0[bptt_history[step]+b*layer0_size].weight*beta2;

bptt_syn0[bptt_history[step]+b*layer0_size].weight=0;

}

}

else {

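//The word part of the input is 1-of-V coded, so only some of the weights have changed

//Writing it this way is faster and avoids scanning entries that did not change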

for (step=0; step<bptt+bptt_block-2; step++) if (bptt_history[step]!=-1) {

syn0[bptt_history[step]+b*layer0_size].weight+=bptt_syn0[bptt_history[step]+b*layer0_size].weight;

bptt_syn0[bptt_history[step]+b*layer0_size].weight=0;

}

}

}

}

}

}
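The index computation for the direct (ME) connections is easy to lose among the updates, so here it is isolated for the class part. This is a sketch under stated assumptions, not the toolkit's code: the function name `class_feature_hashes` is hypothetical, the prime table `kPrimes` is a short illustrative stand-in for rnnlm's `PRIMES` array, and 64-bit arithmetic is used throughout. `history[0]` is taken to be the most recent word and `history` must hold at least `order - 1` entries.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Illustrative table only; the PRIMES array in rnnlm is different and longer.
static const std::uint64_t kPrimes[] = {2, 3, 5, 7, 11, 13, 17, 19};
static const std::size_t kPrimesSize = sizeof(kPrimes) / sizeof(kPrimes[0]);

// One hash per n-gram order a = 0..order-1, following the loop in the listing.
// Requires order <= kPrimesSize. Entries left at 0 mean "no feature", which
// is what the `if (hash[b]) ... else break` test in the update loop checks.
std::vector<std::uint64_t> class_feature_hashes(const std::vector<int>& history,
                                                int order,
                                                std::uint64_t direct_size) {
    std::vector<std::uint64_t> hash(static_cast<std::size_t>(order), 0);
    for (int a = 0; a < order; ++a) {
        if (a > 0 && history[a - 1] == -1) break;  // context runs out here
        hash[a] = kPrimes[0] * kPrimes[1];
        for (int b = 1; b <= a; ++b)
            hash[a] += kPrimes[(a * kPrimes[b] + b) % kPrimesSize] *
                       static_cast<std::uint64_t>(history[b - 1] + 1);
        hash[a] %= direct_size / 2;  // class features use the lower half
    }
    return hash;
}
```

The word part in the listing differs in two places: the seed is multiplied by `vocab[word].class_index+1`, and `direct_size/2` is added after the modulo, so word features land in the upper half of `syn_d`.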

//Copy the ac values of the hidden-layer neurons into the last layer1_size slots of the input layer

void CRnnLM::copyHiddenLayerToInput()

{

int a;

for (a=0; a<layer1_size; a++) {

neu0[a+layer0_size-layer1_size].ac=neu1[a].ac;

}

}
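One helper the listing relies on without showing it is `matrixXvector` with its last argument set to 1, which carries errors from one layer down to the layer below. The sketch below is a simplified stand-in based on the commented-out line `neu0[a].er += neu1[b].er * syn0[a+b*layer0_size].weight` in the BPTT loop. The names `Neuron` and `propagate_error` are hypothetical, weights are plain `double` values instead of synapse structs, and any gradient clipping done by the real function is left out.

```cpp
#include <cstddef>
#include <vector>

// Minimal neuron with an activation and an error term, as in the listing.
struct Neuron {
    double ac = 0.0;
    double er = 0.0;
};

// For every source neuron b in [from, to), add its error, weighted by
// weights[a + b*width], to destination neuron a in [from2, to2).
// This is the transposed product: dest.er += W^T * src.er.
void propagate_error(std::vector<Neuron>& dest, const std::vector<Neuron>& src,
                     const std::vector<double>& weights, std::size_t width,
                     std::size_t from, std::size_t to,
                     std::size_t from2, std::size_t to2) {
    for (std::size_t b = from; b < to; ++b)
        for (std::size_t a = from2; a < to2; ++a)
            dest[a].er += src[b].er * weights[a + b * width];
}
```

Because the errors are accumulated with `+=`, the caller has to zero the destination errors first, which is what the "flush error" loops at the top of `learnNet` and the `neu0[a].er=0` loop inside BPTT do.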

