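- Understand the concepts behind the basic website metrics

- PV (page views): the number of pages viewed, reported per day, per week, or per month. Every time a user opens a page, one view is recorded.

- UV (unique visitors): the number of distinct visitors, identified by userID or by a cookie (a cookie only identifies the visitor until its expiration time).

- VV (visit views): the number of visits made by visitors.

- IP: the number of distinct IP addresses.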
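To make the four definitions concrete, here is a minimal plain-Java sketch that computes them over a handful of in-memory records. The `Hit` class, its `sessionId` field (used here as a stand-in for "one visit"), and the sample values are assumptions made for illustration; they are not part of the original log format.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

class WebMetricsSketch {

    // Hypothetical, simplified log record: one entry per page open.
    static class Hit {
        final String userId;    // visitor identity (userID or cookie value)
        final String sessionId; // assumed: one value per visit
        final String ip;        // client IP address

        Hit(String userId, String sessionId, String ip) {
            this.userId = userId;
            this.sessionId = sessionId;
            this.ip = ip;
        }
    }

    // PV: every page open counts once.
    static long pv(List<Hit> hits) {
        return hits.size();
    }

    // UV: number of distinct visitors.
    static long uv(List<Hit> hits) {
        Set<String> users = new HashSet<>();
        for (Hit hit : hits) {
            users.add(hit.userId);
        }
        return users.size();
    }

    // VV: number of visits, approximated here by distinct session ids.
    static long vv(List<Hit> hits) {
        Set<String> sessions = new HashSet<>();
        for (Hit hit : hits) {
            sessions.add(hit.sessionId);
        }
        return sessions.size();
    }

    // IP: number of distinct IP addresses.
    static long ip(List<Hit> hits) {
        Set<String> ips = new HashSet<>();
        for (Hit hit : hits) {
            ips.add(hit.ip);
        }
        return ips.size();
    }

    // Made-up sample data: user u1 visits twice, user u2 once.
    static List<Hit> sample() {
        return Arrays.asList(
                new Hit("u1", "s1", "10.0.0.1"),
                new Hit("u1", "s1", "10.0.0.1"),
                new Hit("u1", "s2", "10.0.0.2"),
                new Hit("u2", "s3", "10.0.0.2"));
    }

    public static void main(String[] args) {
        List<Hit> hits = sample();
        System.out.println("PV=" + pv(hits) + " UV=" + uv(hits)
                + " VV=" + vv(hits) + " IP=" + ip(hits));
    }
}
```

With the sample data this prints `PV=4 UV=2 VV=3 IP=2`: four page opens, two users, three visits, two addresses.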

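- PV: page views, reported per day, per week, or per month

Analyze the requirement, then write the PV program following the MapReduce programming template.

The full code: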

```java
package com.ibeifeng.bigdata.senior.hadoop.mapreduce;

import java.io.IOException;

import org.apache.commons.lang.StringUtils;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

/**
 * @author beifeng
 */
public class WebPvMapReduce extends Configured implements Tool {

    // step 1 : Mapper Class
    public static class WebPvMapper extends
            Mapper<LongWritable, Text, IntWritable, IntWritable> {

        // every valid record contributes one page view
        private IntWritable mapOutputValue = new IntWritable(1);
        private IntWritable mapOutputKey = new IntWritable();

        @Override
        protected void setup(Context context) throws IOException,
                InterruptedException {
            // TODO Auto-generated method stub
        }

        @Override
        public void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            // line value
            String lineValue = value.toString();

            // split
            String[] values = lineValue.split("\t");
            if (values.length < 30) {
                // Counter
                context.getCounter("WEBPVMAPPRE_COUNTERS",
                        "LENGTH_LT_30_COUNTER").increment(1L);
                return;
            }

            // url
            String urlValue = values[1];
            if (StringUtils.isBlank(urlValue)) {
                // Counter
                context.getCounter("WEBPVMAPPRE_COUNTERS",
                        "URL_BLANK_COUNTER").increment(1L);
                return;
            }

            // provinceId
            String provinceIdValue = values[23];
            if (StringUtils.isBlank(provinceIdValue)) {
                // Counter
                context.getCounter("WEBPVMAPPRE_COUNTERS",
                        "PROVINCEID_BLANK_COUNTER").increment(1L);
                return;
            }

            Integer provinceId = Integer.MAX_VALUE;
            try {
                provinceId = Integer.valueOf(provinceIdValue);
            } catch (Exception e) {
                // Counter
                context.getCounter("WEBPVMAPPRE_COUNTERS",
                        "PROVINCEID_NOT_NUMBER_COUNTER").increment(1L);
                return;
            }

            // map output key
            mapOutputKey.set(provinceId);
            context.write(mapOutputKey, mapOutputValue);
        }

        @Override
        protected void cleanup(Context context) throws IOException,
                InterruptedException {
            // TODO Auto-generated method stub
        }
    }

    // step 2 : Reducer Class
    public static class WebPvReducer extends
            Reducer<IntWritable, IntWritable, IntWritable, IntWritable> {

        private IntWritable outputValue = new IntWritable();

        @Override
        protected void setup(Context context) throws IOException,
                InterruptedException {
            // TODO Auto-generated method stub
        }

        @Override
        protected void reduce(IntWritable key, Iterable<IntWritable> values,
                Context context) throws IOException, InterruptedException {
            // temp
            int sum = 0;

            // iterator
            for (IntWritable value : values) {
                // total
                sum += value.get();
            }

            // set
            outputValue.set(sum);

            // output
            context.write(key, outputValue);
        }

        @Override
        protected void cleanup(Context context) throws IOException,
                InterruptedException {
            // TODO Auto-generated method stub
        }
    }

    /**
     * step 3 : Driver
     *
     * @param args input path and output path
     * @return 0 if the job succeeded, 1 otherwise
     * @throws Exception
     */
    public int run(String[] args) throws Exception {
        Configuration configuration = this.getConf();

        Job job = Job.getInstance(configuration,
                this.getClass().getSimpleName());
        job.setJarByClass(WebPvMapReduce.class);

        // set job
        // input
        Path inpath = new Path(args[0]);
        FileInputFormat.addInputPath(job, inpath);

        // output
        Path outpath = new Path(args[1]);
        FileOutputFormat.setOutputPath(job, outpath);

        // Mapper
        job.setMapperClass(WebPvMapper.class);
        // TODO
        job.setMapOutputKeyClass(IntWritable.class);
        job.setMapOutputValueClass(IntWritable.class);

        // =================shuffle==================
        // 1.partition
        // job.setPartitionerClass(cls);
        // 2.sort
        // job.setSortComparatorClass(cls);
        // 3.combiner
        job.setCombinerClass(WebPvReducer.class);
        // 4.group
        // job.setGroupingComparatorClass(cls);
        // =================shuffle==================

        // Reducer
        job.setReducerClass(WebPvReducer.class);
        // TODO
        job.setOutputKeyClass(IntWritable.class);
        job.setOutputValueClass(IntWritable.class);

        // submit job
        boolean isSuccess = job.waitForCompletion(true);

        return isSuccess ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();

        // pass two arguments to set the paths
        args = new String[] {
                // argument 1: input path
                "hdfs://hadoop-senior01.ibeifeng.com:8020/user/beifeng/webpv/input",
                // argument 2: output path
                "hdfs://hadoop-senior01.ibeifeng.com:8020/user/beifeng/output7" };

        // run job
        int status = ToolRunner.run(configuration, new WebPvMapReduce(), args);

        // exit program
        System.exit(status);
    }
}
```

Run:

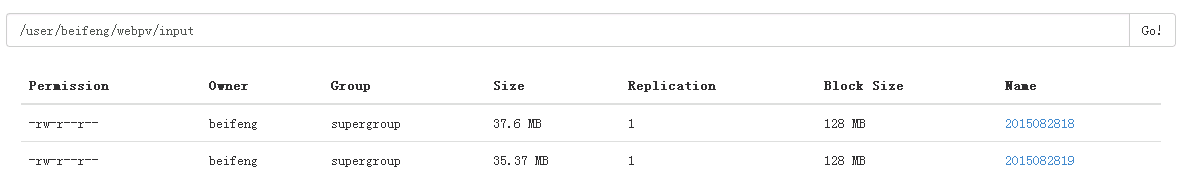

- Create the directory:

```
bin/hdfs dfs -mkdir -p webpv/input
```

Upload both files:

```
bin/hdfs dfs -put /opt/datas/2015082818 /user/beifeng/webpv/input
bin/hdfs dfs -put /opt/datas/2015082819 /user/beifeng/webpv/input
```

Both files are read in a single job run, with the combiner enabled:

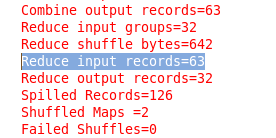

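```java
// 3.combiner
job.setCombinerClass(WebPvReducer.class);
```

Using the reducer as a combiner pre-aggregates each map task's output, so fewer records are shuffled to the reduce phase, which improves performance. It does not change the number of reduce tasks.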
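The effect of the combiner can be illustrated without a cluster. The following plain-Java sketch simulates two map splits that emit `(provinceId, 1)` pairs and compares how many pairs reach the reduce side with and without a per-split pre-aggregation step. The class name, method names, and sample province ids are hypothetical; this is a toy model of the idea, not how Hadoop implements the shuffle.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class CombinerSketch {

    // "Map" phase for one split: emit one (provinceId, 1) pair per record.
    static List<int[]> mapSplit(int[] provinceIds) {
        List<int[]> pairs = new ArrayList<>();
        for (int id : provinceIds) {
            pairs.add(new int[] { id, 1 });
        }
        return pairs;
    }

    // Sum the values per key. Summation is associative and commutative,
    // so the same logic can serve as combiner and as reducer.
    static Map<Integer, Integer> sumByKey(List<int[]> pairs) {
        Map<Integer, Integer> sums = new TreeMap<>();
        for (int[] pair : pairs) {
            sums.merge(pair[0], pair[1], Integer::sum);
        }
        return sums;
    }

    // Collect the pairs that would be sent to the reduce side.
    static List<int[]> shuffle(int[][] splits, boolean useCombiner) {
        List<int[]> shuffled = new ArrayList<>();
        for (int[] split : splits) {
            List<int[]> mapOutput = mapSplit(split);
            if (useCombiner) {
                // Pre-aggregate locally: one pair per distinct key per split.
                List<int[]> combined = new ArrayList<>();
                for (Map.Entry<Integer, Integer> e : sumByKey(mapOutput).entrySet()) {
                    combined.add(new int[] { e.getKey(), e.getValue() });
                }
                mapOutput = combined;
            }
            shuffled.addAll(mapOutput);
        }
        return shuffled;
    }

    public static void main(String[] args) {
        // Made-up province ids for two input splits.
        int[][] splits = { { 1, 1, 2, 1 }, { 2, 2, 1 } };

        List<int[]> plain = shuffle(splits, false);
        List<int[]> combined = shuffle(splits, true);

        System.out.println("pairs shuffled without combiner: " + plain.size());
        System.out.println("pairs shuffled with combiner:    " + combined.size());
        System.out.println("final counts: " + sumByKey(combined));
    }
}
```

For this sample, 7 pairs are shuffled without the combiner and 4 with it, while the final per-province sums are identical. Reusing the reducer as the combiner is only safe when the operation is associative and commutative, as summation is here.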


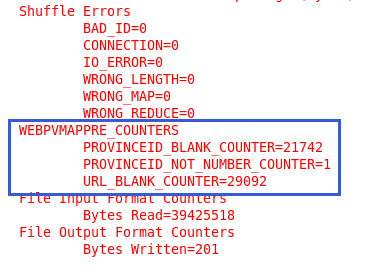

Custom counters for debugging the MapReduce job

Add a Counter before each `return` in the mapper, as follows:

```java
// Counter
context.getCounter("WEBPVMAPPRE_COUNTERS", "PROVINCEID_NOT_NUMBER_COUNTER").increment(1L);
```

The result shows:

- and so on
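The counting-before-return pattern can be tried out locally before submitting a job. The sketch below mirrors the mapper's validation steps in plain Java, with a `Map<String, Long>` standing in for Hadoop's counters. The class `CounterSketch`, the `line` helper, and the sample URL are hypothetical, and `trim().isEmpty()` is used as an approximate stand-in for `StringUtils.isBlank`.

```java
import java.util.Map;
import java.util.TreeMap;

class CounterSketch {

    // Stand-in for Hadoop counters: counter name -> number of increments.
    final Map<String, Long> counters = new TreeMap<>();

    void increment(String name) {
        counters.merge(name, 1L, Long::sum);
    }

    // Mirrors the mapper's checks; returns null when the record is skipped.
    Integer parseProvinceId(String lineValue) {
        String[] values = lineValue.split("\t");
        if (values.length < 30) {
            increment("LENGTH_LT_30_COUNTER");
            return null;
        }
        if (values[1].trim().isEmpty()) {
            increment("URL_BLANK_COUNTER");
            return null;
        }
        String provinceIdValue = values[23];
        if (provinceIdValue.trim().isEmpty()) {
            increment("PROVINCEID_BLANK_COUNTER");
            return null;
        }
        try {
            return Integer.valueOf(provinceIdValue);
        } catch (NumberFormatException e) {
            increment("PROVINCEID_NOT_NUMBER_COUNTER");
            return null;
        }
    }

    // Hypothetical helper: builds a 30-field tab-separated test line with
    // the url in field 1 and the province id in field 23.
    static String line(String url, String provinceId) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < 30; i++) {
            if (i > 0) {
                sb.append('\t');
            }
            sb.append(i == 1 ? url : i == 23 ? provinceId : "x");
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        CounterSketch sketch = new CounterSketch();
        sketch.parseProvinceId(line("http://example.com/", "12")); // valid
        sketch.parseProvinceId("too\tshort");                      // too few fields
        sketch.parseProvinceId(line("", "12"));                    // blank url
        sketch.parseProvinceId(line("http://example.com/", "abc")); // not a number
        System.out.println(sketch.counters);
    }
}
```

Each skipped record increments exactly one counter, so the totals show why records were dropped, which is the same information the job's counter output provides after a real run.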

