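I previously wrote two articles: one on a serial C++ implementation of the KNN algorithm, and one on computing the Euclidean distance between vectors with CUDA. This article is essentially a simple combination of the two, so it may help to read them first.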

1. Generating the dataset

We need to generate N samples with D dimensions each. Every sample carries a class label determined by the sign of its first component: Positive or Negative.

#!/usr/bin/python

import os
import random
import sys

filename = "input.txt"

# Remove any stale output file before regenerating it.
if os.path.exists(filename):
    print("%s exists and del" % filename)
    os.remove(filename)

fout = open(filename, "w")

for i in range(0, int(sys.argv[1])):  # number of samples (str to int)
    x = []
    for j in range(0, int(sys.argv[2])):  # number of dimensions
        # generate random data and keep 4 digits after the decimal point
        x.append("%.4f" % random.uniform(-1, 1))
        fout.write("%s\t" % x[j])
    # fout.write(x) : TypeError: expected a character buffer object
    # The label follows the sign of the first component.
    if x[0][0] == '-':
        fout.write(" Negative" + "\n")
    else:
        fout.write(" Positive" + "\n")

fout.close()

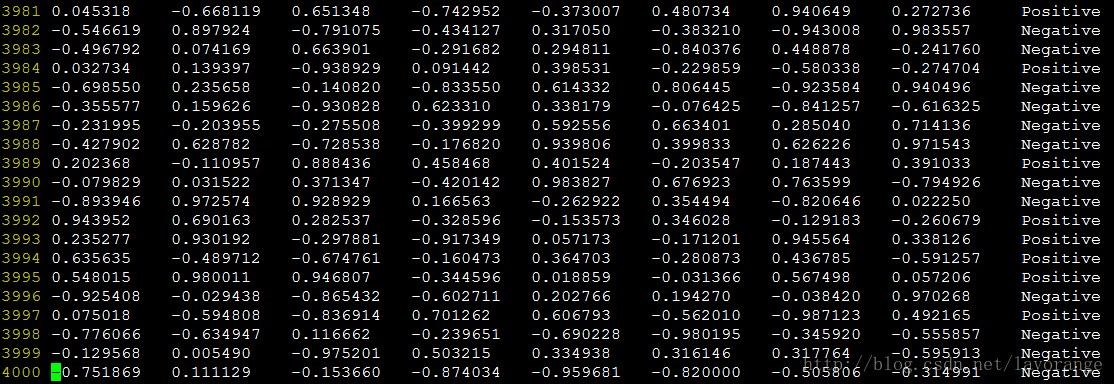

Run the script to generate 4000 samples with 8 dimensions each:

This produces the file "input.txt".
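Since both C++ programs below read this file with plain stream extraction, it helps to spell out the line format: D whitespace-separated numbers followed by one label word. The following is a minimal sketch of a reader for a single line. The names `Sample`, `parse_sample`, and `expected_label` are hypothetical helpers introduced here for illustration and do not appear in the original programs.

```cpp
#include <cassert>
#include <cmath>
#include <sstream>
#include <string>
#include <vector>

// One parsed record: D feature values followed by a class label.
struct Sample {
    std::vector<double> features;
    std::string label;
};

// Parse one whitespace-separated line such as
// "0.1234\t-0.5678\t Positive" into `dims` features and a label.
// Returns false if the line has fewer than `dims` numbers or no label.
bool parse_sample(const std::string& line, int dims, Sample& out) {
    assert(dims > 0);
    std::istringstream in(line);
    out.features.assign(dims, 0.0);
    for (int j = 0; j < dims; ++j) {
        if (!(in >> out.features[j])) return false;
    }
    return static_cast<bool>(in >> out.label);
}

// Label rule of the generator: a leading '-' on the first value means
// Negative. signbit is used so that a value printed as "-0.0000" is
// treated the same way the generator treats it.
std::string expected_label(const Sample& s) {
    return std::signbit(s.features[0]) ? "Negative" : "Positive";
}
```

A check like this can be run over the whole file to confirm that every stored label matches the sign of the first column before handing the data to the classifier.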

2. Serial code

This code is the same as in the earlier article. We use 400 samples as test data and the remaining 3600 as training data.

KNN_2.cc:

#include<iostream>
#include<map>
#include<string>
#include<vector>
#include<stdio.h>
#include<cmath>
#include<cstdlib>
#include<algorithm>
#include<fstream>

using namespace std;

typedef string tLabel;
typedef double tData;
typedef pair<int,double> PAIR;

const int MaxColLen = 10;
const int MaxRowLen = 10000;

ifstream fin;

class KNN
{
private:
    tData dataSet[MaxRowLen][MaxColLen];   //all samples read from the file
    tLabel labels[MaxRowLen];              //label of each sample
    tData testData[MaxColLen];             //the sample currently being classified
    int rowLen;
    int colLen;
    int k;
    int test_data_num;
    map<int,double> map_index_dis;         //training index -> distance
    map<tLabel,int> map_label_freq;        //label -> votes among the k nearest
    double get_distance(tData *d1,tData *d2);

public:
    KNN(int k , int rowLen , int colLen , char *filename);
    void get_all_distance();
    tLabel get_max_freq_label();
    void auto_norm_data();
    void get_error_rate();

    struct CmpByValue
    {
        bool operator() (const PAIR& lhs,const PAIR& rhs)
        {
            return lhs.second < rhs.second;
        }
    };

    ~KNN();
};

KNN::~KNN()
{
    fin.close();
    map_index_dis.clear();
    map_label_freq.clear();
}

KNN::KNN(int k , int row ,int col , char *filename)
{
    this->rowLen = row;
    this->colLen = col;
    this->k = k;
    test_data_num = 0;

    //the data is stored in fixed-size arrays, so reject anything larger
    if( row>MaxRowLen || col>MaxColLen )
    {
        cout<<"row or col exceeds the array limits"<<endl;
        exit(1);
    }

    fin.open(filename);
    if( !fin )
    {
        cout<<"can not open the file"<<endl;
        exit(1);
    }

    //read data from file
    for(int i=0;i<rowLen;i++)
    {
        for(int j=0;j<colLen;j++)
        {
            fin>>dataSet[i][j];
        }
        fin>>labels[i];
    }
}

void KNN:: get_error_rate()
{
    int i,j,count = 0;
    tLabel label;

    cout<<"please input the number of test data : "<<endl;
    cin>>test_data_num;

    //the first test_data_num rows are the test samples
    for(i=0;i<test_data_num;i++)
    {
        for(j=0;j<colLen;j++)
        {
            testData[j] = dataSet[i][j];
        }
        get_all_distance();
        label = get_max_freq_label();
        if( label!=labels[i] )
            count++;
        map_index_dis.clear();
        map_label_freq.clear();
    }

    cout<<"the error rate is = "<<(double)count/(double)test_data_num<<endl;
}

double KNN:: get_distance(tData *d1,tData *d2)
{
    double sum = 0;
    for(int i=0;i<colLen;i++)
    {
        sum += pow( (d1[i]-d2[i]) , 2 );
    }
    //cout<<"the sum is = "<<sum<<endl;
    return sqrt(sum);
}

//get distance between testData and all dataSet
void KNN:: get_all_distance()
{
    double distance;
    int i;
    //rows from test_data_num onward are the training samples
    for(i=test_data_num;i<rowLen;i++)
    {
        distance = get_distance(dataSet[i],testData);
        map_index_dis[i] = distance;
    }
}

tLabel KNN:: get_max_freq_label()
{
    //sort the (index, distance) pairs by distance, nearest first
    vector<PAIR> vec_index_dis( map_index_dis.begin(),map_index_dis.end() );
    sort(vec_index_dis.begin(),vec_index_dis.end(),CmpByValue());

    //count the labels of the k nearest training samples
    for(int i=0;i<k;i++)
    {
        /*
        cout<<"the index = "<<vec_index_dis[i].first<<" the distance = "<<vec_index_dis[i].second<<" the label = "<<labels[ vec_index_dis[i].first ]<<" the coordinate ( ";
        int j;
        for(j=0;j<colLen-1;j++)
        {
            cout<<dataSet[ vec_index_dis[i].first ][j]<<",";
        }
        cout<<dataSet[ vec_index_dis[i].first ][j]<<" )"<<endl;
        */
        map_label_freq[ labels[ vec_index_dis[i].first ] ]++;
    }

    //pick the label with the most votes
    map<tLabel,int>::const_iterator map_it = map_label_freq.begin();
    tLabel label;
    int max_freq = 0;
    while( map_it != map_label_freq.end() )
    {
        if( map_it->second > max_freq )
        {
            max_freq = map_it->second;
            label = map_it->first;
        }
        map_it++;
    }
    //cout<<"The test data belongs to the "<<label<<" label"<<endl;
    return label;
}

void KNN::auto_norm_data()
{
    //fixed-size arrays: variable-length arrays are not standard C++
    tData maxa[MaxColLen] ;
    tData mina[MaxColLen] ;
    tData range[MaxColLen] ;
    int i,j;

    //seed min and max of every column from the first two rows
    for(i=0;i<colLen;i++)
    {
        maxa[i] = max(dataSet[0][i],dataSet[1][i]);
        mina[i] = min(dataSet[0][i],dataSet[1][i]);
    }

    for(i=2;i<rowLen;i++)
    {
        for(j=0;j<colLen;j++)
        {
            if( dataSet[i][j]>maxa[j] )
            {
                maxa[j] = dataSet[i][j];
            }
            else if( dataSet[i][j]<mina[j] )
            {
                mina[j] = dataSet[i][j];
            }
        }
    }

    for(i=0;i<colLen;i++)
    {
        range[i] = maxa[i] - mina[i] ;
    }

    //normalize the whole data set to [0,1]
    //testData is not touched here: it is still unset at this point and is
    //later copied from the already normalized dataSet in get_error_rate()
    for(i=0;i<rowLen;i++)
    {
        for(j=0;j<colLen;j++)
        {
            dataSet[i][j] = ( dataSet[i][j] - mina[j] )/range[j];
        }
    }
}

int main(int argc , char** argv)
{
    int k,row,col;
    char *filename;

    if( argc!=5 )
    {
        cout<<"The input should be like this : ./a.out k row col filename"<<endl;
        exit(1);
    }

    k = atoi(argv[1]);
    row = atoi(argv[2]);
    col = atoi(argv[3]);
    filename = argv[4];

    KNN knn(k,row,col,filename);
    knn.auto_norm_data();
    knn.get_error_rate();

    return 0;
}

target:
	g++ KNN_2.cc
	./a.out 7 4000 8 input.txt

cu:
	nvcc KNN.cu
	./a.out 7 4000 8 input.txt

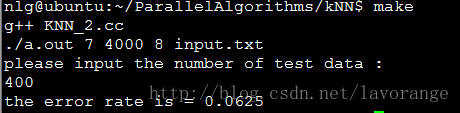

Output:

3. Parallel implementation

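The parallel version parallelizes the computation of the distances from each test sample to the N training samples. Computed serially, this costs O(N*D) per test sample; computed in parallel with one thread per training sample, it costs O(D), where D is the dimensionality of the data.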
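To make the complexity argument concrete, here is a minimal host-side sketch of the serial computation. The outer loop runs N times and each iteration does O(D) work independently of the others, which is why each iteration can be handed to its own GPU thread. The function names and the flat row-major layout are assumptions made for this illustration. The sketch returns squared distances, as the CUDA kernel in KNN.cu does; skipping the square root does not change which neighbours are nearest.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Squared Euclidean distance between two D-dimensional points: O(D).
double squared_distance(const double* a, const double* b, int dims) {
    double sum = 0.0;
    for (int i = 0; i < dims; ++i) {
        const double diff = a[i] - b[i];
        sum += diff * diff;
    }
    return sum;
}

// Serial reference: N independent O(D) computations, O(N*D) in total.
// `train` holds N rows of `dims` values, stored row-major.
std::vector<double> all_squared_distances(const std::vector<double>& train,
                                          const std::vector<double>& test,
                                          int dims) {
    assert(dims > 0 && train.size() % dims == 0);
    assert(test.size() == static_cast<std::size_t>(dims));
    const int n = static_cast<int>(train.size() / dims);
    std::vector<double> dist(n);
    for (int row = 0; row < n; ++row) {  // one iteration per training sample
        dist[row] = squared_distance(&train[row * dims], test.data(), dims);
    }
    return dist;
}
```

Note that O(D) is the cost of the distance step only; sorting the N distances and voting still happen on the host in the program below.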

KNN.cu:

#include<iostream>
#include<map>
#include<string>
#include<vector>
#include<stdio.h>
#include<cmath>
#include<cstdlib>
#include<algorithm>
#include<fstream>

using namespace std;

typedef string tLabel;
typedef float tData;
typedef pair<int,double> PAIR;

const int MaxColLen = 10;
const int MaxRowLen = 10010;
const int test_data_num = 400;

ifstream fin;

class KNN
{
private:
    tData dataSet[MaxRowLen][MaxColLen];   //all samples read from the file
    tLabel labels[MaxRowLen];              //label of each sample
    tData testData[MaxColLen];             //the sample currently being classified
    tData trainingData[3600][8];           //statically sized copy of the training rows
    int rowLen;
    int colLen;
    int k;
    map<int,double> map_index_dis;         //training index -> distance
    map<tLabel,int> map_label_freq;        //label -> votes among the k nearest
    double get_distance(tData *d1,tData *d2);

public:
    KNN(int k , int rowLen , int colLen , char *filename);
    void get_all_distance();
    tLabel get_max_freq_label();
    void auto_norm_data();
    void get_error_rate();
    void get_training_data();

    struct CmpByValue
    {
        bool operator() (const PAIR& lhs,const PAIR& rhs)
        {
            return lhs.second < rhs.second;
        }
    };

    ~KNN();
};

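KNN::~KNN()
{
    fin.close();
    map_index_dis.clear();
    map_label_freq.clear();
}

KNN::KNN(int k , int row ,int col , char *filename)
{
    this->rowLen = row;
    this->colLen = col;
    this->k = k;

    //trainingData is declared as [3600][8], so larger inputs would overflow it
    if( row>MaxRowLen || col>8 || row-test_data_num>3600 )
    {
        cout<<"row or col exceeds the array limits"<<endl;
        exit(1);
    }

    fin.open(filename);
    if( !fin )
    {
        cout<<"can not open the file"<<endl;
        exit(1);
    }

    //read data from file
    for(int i=0;i<rowLen;i++)
    {
        for(int j=0;j<colLen;j++)
        {
            fin>>dataSet[i][j];
        }
        fin>>labels[i];
    }
}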

//copy the training rows (everything after the test rows) into trainingData
void KNN:: get_training_data()
{
    for(int i=test_data_num;i<rowLen;i++)
    {
        for(int j=0;j<colLen;j++)
        {
            trainingData[i-test_data_num][j] = dataSet[i][j];
        }
    }
}

void KNN:: get_error_rate()
{
    int i,j,count = 0;
    tLabel label;

    cout<<"the test data number is : "<<test_data_num<<endl;
    get_training_data();

    //get testing data and calculate
    for(i=0;i<test_data_num;i++)
    {
        for(j=0;j<colLen;j++)
        {
            testData[j] = dataSet[i][j];
        }
        get_all_distance();
        label = get_max_freq_label();
        if( label!=labels[i] )
            count++;
        map_index_dis.clear();
        map_label_freq.clear();
    }

    cout<<"the error rate is = "<<(double)count/(double)test_data_num<<endl;
}

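//global function: one block (with a single thread) per training sample
__global__ void cal_dis(tData *train_data,tData *test_data,tData* dis,int pitch,int N , int D)
{
    int tid = blockIdx.x;
    if(tid<N)
    {
        tData temp = 0;
        tData sum = 0;
        //row tid of the pitched array starts tid*pitch bytes after the base
        tData *row = (tData*)( (char*)train_data+tid*pitch );
        for(int i=0;i<D;i++)
        {
            temp = row[i] - test_data[i];
            sum += temp * temp;
        }
        //squared distance: sqrt is omitted because it does not change the ranking
        dis[tid] = sum;
    }
}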

//Parallel calculate the distance
void KNN:: get_all_distance()
{
    int height = rowLen - test_data_num;
    tData *distance = new tData[height];
    tData *d_train_data,*d_test_data,*d_dis;
    size_t pitch_d ;
    //host pitch is the real row size of trainingData in bytes
    size_t pitch_h = sizeof(trainingData[0]);

    //allocate memory on GPU
    cudaMallocPitch( &d_train_data,&pitch_d,colLen*sizeof(tData),height);
    cudaMalloc( &d_test_data,colLen*sizeof(tData) );
    cudaMalloc( &d_dis, height*sizeof(tData) );

    //the training array is pitched, so it is cleared with cudaMemset2D
    cudaMemset2D( d_train_data,pitch_d,0,colLen*sizeof(tData),height );
    cudaMemset( d_test_data,0,colLen*sizeof(tData) );
    cudaMemset( d_dis , 0 , height*sizeof(tData) );

    //copy training and testing data from host to device
    cudaMemcpy2D( d_train_data,pitch_d,trainingData,pitch_h,colLen*sizeof(tData),height,cudaMemcpyHostToDevice);
    cudaMemcpy( d_test_data,testData,colLen*sizeof(tData),cudaMemcpyHostToDevice);

    //calculate the distance
    cal_dis<<<height,1>>>( d_train_data,d_test_data,d_dis,pitch_d,height,colLen );

    //copy distance data from device to host
    cudaMemcpy( distance,d_dis,height*sizeof(tData),cudaMemcpyDeviceToHost);

    int i;
    for( i=0;i<rowLen-test_data_num;i++ )
    {
        map_index_dis[i+test_data_num] = distance[i];
    }

    //release the buffers; without this every call leaks host and device memory
    cudaFree(d_train_data);
    cudaFree(d_test_data);
    cudaFree(d_dis);
    delete[] distance;
}

tLabel KNN:: get_max_freq_label()
{
    //sort the (index, distance) pairs by distance, nearest first
    vector<PAIR> vec_index_dis( map_index_dis.begin(),map_index_dis.end() );
    sort(vec_index_dis.begin(),vec_index_dis.end(),CmpByValue());

    //count the labels of the k nearest training samples
    for(int i=0;i<k;i++)
    {
        /*
        cout<<"the index = "<<vec_index_dis[i].first<<" the distance = "<<vec_index_dis[i].second<<" the label = "<<labels[ vec_index_dis[i].first ]<<" the coordinate ( ";
        int j;
        for(j=0;j<colLen-1;j++)
        {
            cout<<dataSet[ vec_index_dis[i].first ][j]<<",";
        }
        cout<<dataSet[ vec_index_dis[i].first ][j]<<" )"<<endl;
        */
        map_label_freq[ labels[ vec_index_dis[i].first ] ]++;
    }

    //pick the label with the most votes
    map<tLabel,int>::const_iterator map_it = map_label_freq.begin();
    tLabel label;
    int max_freq = 0;
    while( map_it != map_label_freq.end() )
    {
        if( map_it->second > max_freq )
        {
            max_freq = map_it->second;
            label = map_it->first;
        }
        map_it++;
    }
    cout<<"The test data belongs to the "<<label<<" label"<<endl;
    return label;
}

void KNN::auto_norm_data()
{
    //fixed-size arrays: variable-length arrays are not standard C++
    tData maxa[MaxColLen] ;
    tData mina[MaxColLen] ;
    tData range[MaxColLen] ;
    int i,j;

    //seed min and max of every column from the first two rows
    for(i=0;i<colLen;i++)
    {
        maxa[i] = max(dataSet[0][i],dataSet[1][i]);
        mina[i] = min(dataSet[0][i],dataSet[1][i]);
    }

    for(i=2;i<rowLen;i++)
    {
        for(j=0;j<colLen;j++)
        {
            if( dataSet[i][j]>maxa[j] )
            {
                maxa[j] = dataSet[i][j];
            }
            else if( dataSet[i][j]<mina[j] )
            {
                mina[j] = dataSet[i][j];
            }
        }
    }

    for(i=0;i<colLen;i++)
    {
        range[i] = maxa[i] - mina[i] ;
    }

    //normalize the whole data set to [0,1]
    //testData is not touched here: it is still unset at this point and is
    //later copied from the already normalized dataSet in get_error_rate()
    for(i=0;i<rowLen;i++)
    {
        for(j=0;j<colLen;j++)
        {
            dataSet[i][j] = ( dataSet[i][j] - mina[j] )/range[j];
        }
    }
}

int main(int argc , char** argv)
{
    int k,row,col;
    char *filename;

    if( argc!=5 )
    {
        cout<<"The input should be like this : ./a.out k row col filename"<<endl;
        exit(1);
    }

    k = atoi(argv[1]);
    row = atoi(argv[2]);
    col = atoi(argv[3]);
    filename = argv[4];

    KNN knn(k,row,col,filename);
    knn.auto_norm_data();
    knn.get_error_rate();

    return 0;
}

Output:

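Because of the memory allocation issue (mentioned in the earlier article), the training data trainingData has to be statically allocated, which is not very convenient.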
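The earlier article is not reproduced here, so the exact constraint is an assumption on my part: cudaMemcpy2D needs a contiguous host buffer whose rows are a fixed number of bytes apart. Under that assumption, one way to avoid the hard-coded `[3600][8]` array is to pack the training rows into a single `std::vector`. The sketch below shows only the host-side packing; `pack_rows` and `packed_pitch_bytes` are hypothetical helper names, not part of the original program.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Copy rows [first_row, last_row) of a row-major table into one contiguous
// buffer holding exactly `cols` values per row. `src_stride` is the number
// of elements between consecutive source rows (MaxColLen for dataSet).
std::vector<float> pack_rows(const float* src, std::size_t src_stride,
                             std::size_t first_row, std::size_t last_row,
                             std::size_t cols) {
    assert(first_row <= last_row && cols <= src_stride);
    std::vector<float> packed((last_row - first_row) * cols);
    for (std::size_t r = first_row; r < last_row; ++r) {
        for (std::size_t c = 0; c < cols; ++c) {
            packed[(r - first_row) * cols + c] = src[r * src_stride + c];
        }
    }
    return packed;
}

// Host pitch in bytes of the packed buffer: rows are back to back.
std::size_t packed_pitch_bytes(std::size_t cols) {
    return cols * sizeof(float);
}
```

With such a buffer, `packed.data()` and `packed_pitch_bytes(colLen)` would take the place of `trainingData` and `pitch_h` in the cudaMemcpy2D call. Since cudaMemcpy2D takes the source pitch as a separate argument, it may also be possible to copy straight from `dataSet` with a pitch of `MaxColLen*sizeof(tData)`, though I have not tested that against this program.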

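As you can see, with identical test and training sets, the two versions produce exactly the same result. Because the dataset is small, I did not compare running times. One possible improvement is to load all of testData into GPU memory at once instead of one sample at a time; this reduces the number of times the training data is copied to GPU memory and improves efficiency.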
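As a rough illustration of that improvement, the host-side reference below computes every test-to-training distance in one pass and stores the results in a single M x N table. It is a plain C++ sketch rather than a CUDA kernel. In a batched GPU version, the assumption is that both inputs would be uploaded once and each (test, training) pair would be assigned to one thread, with this function serving as a check on the kernel's output. The name `batched_squared_distances` and the flat layout are choices made for this example.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// out[t * n_train + r] is the squared distance between test row t and
// training row r. Both inputs are row-major with `dims` values per row.
std::vector<float> batched_squared_distances(const std::vector<float>& train,
                                             const std::vector<float>& tests,
                                             std::size_t dims) {
    assert(dims > 0 && train.size() % dims == 0 && tests.size() % dims == 0);
    const std::size_t n_train = train.size() / dims;
    const std::size_t n_test = tests.size() / dims;
    std::vector<float> out(n_test * n_train);
    for (std::size_t t = 0; t < n_test; ++t) {
        for (std::size_t r = 0; r < n_train; ++r) {
            float sum = 0.0f;
            for (std::size_t i = 0; i < dims; ++i) {
                const float diff = train[r * dims + i] - tests[t * dims + i];
                sum += diff * diff;
            }
            out[t * n_train + r] = sum;
        }
    }
    return out;
}
```

For the sizes used in this article (400 test samples, 3600 training samples), the table holds 400 * 3600 floats, about 5.8 MB, so it fits comfortably in memory on most GPUs.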

Author:忆之独秀

Email:leaguenew@qq.com

Please cite the source when reposting: http://blog.csdn.net/lavorange/article/details/42172451
