Introduction to Caffe Parameters

solver.prototxt

<pre><code>net: "models/bvlc_alexnet/train_val.prototxt"
test_iter: 1000       # number of test batches run in each test pass
test_interval: 1000   # run a test pass every test_interval training iterations
base_lr: 0.01         # initial learning rate
lr_policy: "step"     # drop the learning rate by a factor of gamma every stepsize iterations
gamma: 0.1
stepsize: 100000      # every stepsize iterations, multiply the learning rate by gamma
display: 20           # print the loss every display iterations
max_iter: 450000      # maximum number of training iterations
momentum: 0.9
weight_decay: 0.0005
snapshot: 10000       # save a snapshot every snapshot iterations
snapshot_prefix: "models/bvlc_reference_caffenet/caffenet_train"
solver_mode: GPU      # run in GPU mode</code></pre>
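As a minimal sketch of what the "step" policy does with the values above: the learning rate at a given iteration is base_lr * gamma^floor(iter / stepsize). The function name `step_learning_rate` is made up for illustration; it is not a Caffe API.

```python
def step_learning_rate(iteration, base_lr=0.01, gamma=0.1, stepsize=100000):
    """Learning rate under lr_policy "step" (hypothetical helper, not part of Caffe)."""
    # Integer division counts how many full stepsize blocks have passed.
    return base_lr * gamma ** (iteration // stepsize)


# Print the rate just before and after each drop, using the solver values above.
for it in (0, 99999, 100000, 250000, 449999):
    print(it, step_learning_rate(it))
```

With max_iter 450000 and stepsize 100000, the rate is dropped four times during training.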

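- test_iter

The number of iterations (batches) run in each test pass, chosen so that test_iter * batch_size (of the test set) = size of the test set. The test batch_size is set in the network prototxt file.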

- test_interval

During training, a test pass is run every test_interval iterations.

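- momentum

Inspired by Newton's first law: the basic idea is to add "inertia" to the optimization, so that SGD keeps moving and learns faster where the error surface has flat regions. With momentum coefficient $m$ and learning rate $\eta$, the update keeps a velocity $v_i$ for each weight:

$$v_i \leftarrow m\,v_i - \eta\,\frac{\partial E}{\partial w_i}, \qquad w_i \leftarrow w_i + v_i$$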
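The momentum update can be sketched in the usual two-step form (update a velocity, then the weight). This is a scalar illustration with made-up names, not Caffe's solver code. On a constant gradient the step size grows toward lr / (1 - momentum), which is the "inertia" effect described above.

```python
def momentum_sgd_step(w, v, grad, lr=0.01, momentum=0.9):
    """One SGD-with-momentum step for a single scalar weight (illustrative only)."""
    v_new = momentum * v - lr * grad  # the velocity remembers previous updates
    return w + v_new, v_new


# Constant gradient, as on a flat, gently sloped region of the error surface.
w, v = 0.0, 0.0
for _ in range(200):
    w, v = momentum_sgd_step(w, v, grad=1.0)
print(v)  # approaches -lr / (1 - momentum)
```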

train_val.prototxt

<pre><code>layer {  # data layer
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  include {
    phase: TRAIN  # this layer is included only in the training phase
  }
  transform_param {  # data preprocessing
    mirror: true  # whether to mirror the images
    crop_size: 227
    # subtract the mean file
    mean_file: "data/ilsvrc12/imagenet_mean.binaryproto"
  }
  data_param {  # where the data comes from
    source: "examples/imagenet/ilsvrc12_train_lmdb"
    batch_size: 256
    backend: LMDB
  }
}</code></pre>

<pre><code>layer {
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  include {
    phase: TEST  # test phase
  }
  transform_param {
    mirror: false  # whether to mirror the images
    crop_size: 227
    # subtract the mean file
    mean_file: "data/ilsvrc12/imagenet_mean.binaryproto"
  }
  data_param {
    source: "examples/imagenet/ilsvrc12_val_lmdb"
    batch_size: 50
    backend: LMDB
  }
}</code></pre>

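- lr_mult

The learning-rate multiplier: the effective learning rate of a parameter is lr_mult times base_lr from solver.prototxt.

If a layer has two lr_mult entries, the first applies to the weights and the second to the bias.

The bias learning rate is usually set to twice the weight learning rate.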

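- decay_mult

The weight-decay multiplier. To avoid over-fitting, a regularization term is added to the cost function, which changes the update to

$$w_i \leftarrow w_i - \eta\,\frac{\partial E}{\partial w_i} - \eta\lambda w_i$$

where $\lambda$ is the decay coefficient (weight_decay from solver.prototxt times decay_mult).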
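To make the roles of lr_mult and decay_mult concrete, here is a sketch of a single plain-SGD step (no momentum) for one scalar parameter. The function name and arguments are illustrative assumptions, not Caffe's API. It follows the update w ← w − η ∂E/∂w − ηλw with η = base_lr * lr_mult and λ = weight_decay * decay_mult.

```python
def sgd_step_with_decay(w, grad, base_lr, weight_decay, lr_mult=1.0, decay_mult=1.0):
    """One SGD step with per-parameter multipliers (illustrative sketch)."""
    lr = base_lr * lr_mult              # effective learning rate for this parameter
    decay = weight_decay * decay_mult   # effective decay coefficient for this parameter
    return w - lr * grad - lr * decay * w


# A weight (lr_mult 1, decay_mult 1) and a bias (lr_mult 2, decay_mult 0),
# using base_lr and weight_decay from the solver shown earlier.
print(sgd_step_with_decay(1.0, 0.5, base_lr=0.01, weight_decay=0.0005))
print(sgd_step_with_decay(1.0, 0.5, base_lr=0.01, weight_decay=0.0005,
                          lr_mult=2.0, decay_mult=0.0))
```

Setting decay_mult to 0 for the bias, as the second call does, switches regularization off for that parameter only.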

- num_output

The number of convolution kernels (filters).

- kernel_size

The size of the convolution kernel.

If the kernel's height and width differ, set them separately with kernel_h and kernel_w.

- stride

The stride of the convolution kernel, default 1. It can also be set with stride_h and stride_w.

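- pad

Padding of the input borders. The default is 0 (no padding).

Padding is symmetric (left/right and top/bottom). For example, with a 5*5 kernel and pad set to 2, each of the four edges is padded by 2 pixels, so the width and height each grow by 4 pixels and the feature map after convolution (with stride 1) is the same size as the input.

It can also be set separately with pad_h and pad_w.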

- weight_filler

Weight initialization. The default is "constant" with all values 0.

In practice the "xavier" algorithm is often used; "gaussian" is another option.

<pre><code>weight_filler {
  type: "gaussian"
  std: 0.01
}</code></pre>

Bias initialization (bias_filler). It is usually set to "constant" with all values 0.

<pre><code>bias_filler {
  type: "constant"
  value: 0
}</code></pre>

- bias_term

Whether to enable the bias term. The default is true (enabled).

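- group

The number of groups, default 1. If it is greater than 1, the connectivity of each filter is restricted to a subset of the input.

Grouped convolution reduces the number of parameters in the network; whether it has any other benefit is not clear to me.

Normally every input is connected to every kernel, but with grouping each input is connected to only a subset of the kernels.

For example, if we group by image channels, the i-th output group can only be connected to the i-th input group.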
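The parameter saving from grouping can be checked with a quick count. This is a sketch with a hypothetical helper name; the layer sizes (96 input channels, 256 filters, 5x5 kernels) are borrowed from an AlexNet-style second convolution layer purely as an illustration and do not come from this post.

```python
def conv_weight_count(c_in, c_out, kernel_h, kernel_w, group=1):
    """Number of filter weights (biases excluded) in a grouped convolution."""
    if c_in % group or c_out % group:
        raise ValueError("group must divide both c_in and c_out")
    # Each filter only sees the c_in / group input channels of its own group.
    return c_out * (c_in // group) * kernel_h * kernel_w


print(conv_weight_count(96, 256, 5, 5, group=1))
print(conv_weight_count(96, 256, 5, 5, group=2))
```

With group set to g, the weight count is divided by g.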

- pool

The pooling method, default MAX. The methods currently available are MAX, AVE, and STOCHASTIC.

- dropout_ratio

The probability with which each input value is dropped (set to zero).
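As a rough illustration of dropout_ratio, the sketch below zeroes each value with that probability during training and rescales the survivors by 1 / (1 - dropout_ratio) so that the expected value is unchanged. The rescaling ("inverted dropout") and the function name are assumptions made for this example; check the Dropout layer source for the exact behaviour of your Caffe version.

```python
import random


def dropout(values, dropout_ratio=0.5, rng=None):
    """Training-time dropout on a list of floats (illustrative sketch)."""
    if not 0.0 <= dropout_ratio < 1.0:
        raise ValueError("dropout_ratio must be in [0, 1)")
    if rng is None:
        rng = random.Random()
    scale = 1.0 / (1.0 - dropout_ratio)  # keeps the expected activation unchanged
    return [0.0 if rng.random() < dropout_ratio else v * scale for v in values]


# A fixed seed makes the run repeatable.
print(dropout([1.0, 1.0, 1.0, 1.0], dropout_ratio=0.5, rng=random.Random(0)))
```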

(The following covers the old Caffe prototxt format; source: http://demo.netfoucs.com/danieljianfeng/article/details/42929283)

1. Vision Layers

1.1 Convolution

Type: CONVOLUTION

Example:

<pre><code>layers {
  name: "conv1"
  type: CONVOLUTION
  bottom: "data"
  top: "conv1"
  blobs_lr: 1          # learning rate multiplier for the filters
  blobs_lr: 2          # learning rate multiplier for the biases
  weight_decay: 1      # weight decay multiplier for the filters
  weight_decay: 0      # weight decay multiplier for the biases
  convolution_param {
    num_output: 96     # learn 96 filters
    kernel_size: 11    # each filter is 11x11
    stride: 4          # step 4 pixels between each filter application
    weight_filler {
      type: "gaussian" # initialize the filters from a Gaussian
      std: 0.01        # distribution with stdev 0.01 (default mean: 0)
    }
    bias_filler {
      type: "constant" # initialize the biases to zero (0)
      value: 0
    }
  }
}</code></pre>

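blobs_lr: learning-rate multipliers. In the example above, the weights use the same learning rate that the solver supplies at run time, and the biases use twice that rate.

weight_decay: weight-decay multipliers. In the example above, the multiplier is 1 for the filters and 0 for the biases.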

Important parameters of the convolution layer

Required parameters:

num_output (c_o): the number of filters

kernel_size (or kernel_h and kernel_w): the size of each filter

Optional parameters:

weight_filler [default type: 'constant' value: 0]: how the weights are initialized

bias_filler: how the biases are initialized

bias_term [default true]: whether to enable the bias term

pad (or pad_h and pad_w) [default 0]: the number of pixels added to each side of the input

stride (or stride_h and stride_w) [default 1]: the stride of the filter

group (g) [default 1]: If g > 1, we restrict the connectivity of each filter to a subset of the input. Specifically, the input and output channels are separated into g groups, and the ith output group channels will be only connected to the ith input group channels.

Size change through the convolution:

Input: n * c_i * h_i * w_i

Output: n * c_o * h_o * w_o, where h_o = (h_i + 2 * pad_h - kernel_h) / stride_h + 1, and w_o is computed in the same way.
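The output-size formula is easy to check numerically. Here is a small sketch (the helper name is made up) that uses integer division for the "/" in the formula, applied to the conv1 settings from the example above (kernel 11, stride 4, no padding) on the 227-pixel crop used in train_val.prototxt.

```python
def conv_output_size(size_in, kernel, pad=0, stride=1):
    """Output height or width: (size_in + 2 * pad - kernel) / stride + 1."""
    return (size_in + 2 * pad - kernel) // stride + 1


# conv1 on a 227 x 227 crop: kernel 11, stride 4, pad 0.
print(conv_output_size(227, kernel=11, pad=0, stride=4))

# A 5 x 5 kernel with pad 2 and stride 1 keeps the size unchanged.
print(conv_output_size(32, kernel=5, pad=2, stride=1))
```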

1.2 Pooling

Type: POOLING

Example:

<pre><code>layers {
  name: "pool1"
  type: POOLING
  bottom: "conv1"
  top: "pool1"
  pooling_param {
    pool: MAX
    kernel_size: 3  # pool over a 3x3 region
    stride: 2       # step two pixels (in the bottom blob) between pooling regions
  }
}</code></pre>

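Important parameters of the pooling layer

Required parameters:

kernel_size (or kernel_h and kernel_w): the size of the pooling window

Optional parameters:

pool [default MAX]: the pooling method; currently MAX, AVE, and STOCHASTIC are available

pad (or pad_h and pad_w) [default 0]: the number of pixels added to each side of the input

stride (or stride_h and stride_w) [default 1]: the stride of the pooling window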

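Size change through the pooling:

Input: n * c_i * h_i * w_i

Output: n * c_o * h_o * w_o (with c_o = c_i, since pooling does not change the number of channels), where h_o = (h_i + 2 * pad_h - kernel_h) / stride_h + 1, and w_o is computed in the same way.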

1.3 Local Response Normalization (LRN)

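Type: LRN

Local Response Normalization normalizes over a local input region (an activation a is scaled by a normalization weight, the denominator, to produce the new activation b). It comes in two forms: in one the region spans adjacent channels (cross channel LRN), in the other it is a spatial region within a single channel (within channel LRN).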

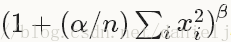

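Formula: each input is divided by $\left(1 + (\alpha/n)\sum_i x_i^2\right)^{\beta}$, where $n$ is the size of the local region and the sum runs over that region.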

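Optional parameters:

local_size [default 5]: for cross channel LRN, the number of neighboring channels to sum over; for within channel LRN, the side length of the spatial region to sum over

alpha [default 1]: the scaling parameter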

beta [default 0.75 in caffe.proto; the old tutorial lists 5]: the exponent

norm_region [default ACROSS_CHANNELS]: which LRN form to use, ACROSS_CHANNELS or WITHIN_CHANNEL
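A sketch of the cross-channel form for the channel values at one spatial position, assuming the normalization documented for Caffe's LRN layer: each input is divided by (1 + (alpha / n) * sum of squares over the local window)^beta, with n = local_size. The function name, the clipping of the window at the channel boundaries, and the default beta of 0.75 are choices made for this illustration.

```python
def lrn_across_channels(x, local_size=5, alpha=1.0, beta=0.75):
    """Cross-channel LRN for the channel values at one pixel (illustrative sketch)."""
    half = local_size // 2
    out = []
    for c in range(len(x)):
        # Window of neighboring channels centered on c, clipped at the boundaries.
        lo, hi = max(0, c - half), min(len(x), c + half + 1)
        sq_sum = sum(v * v for v in x[lo:hi])
        out.append(x[c] / (1.0 + alpha / local_size * sq_sum) ** beta)
    return out


print(lrn_across_channels([1.0, 2.0, 3.0, 4.0, 5.0, 6.0]))
```

A channel with large neighbors is scaled down more strongly than one with small neighbors.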

2. Loss Layers

Deep learning is driven by minimizing a loss between the output and the target.

2.1 Softmax

Type: SOFTMAX_LOSS

2.2 Sum-of-Squares / Euclidean

Type: EUCLIDEAN_LOSS

2.3 Hinge / Margin

Type: HINGE_LOSS

Example:

<pre><code># L1 Norm
layers {
  name: "loss"
  type: HINGE_LOSS
  bottom: "pred"
  bottom: "label"
}

# L2 Norm
layers {
  name: "loss"
  type: HINGE_LOSS
  bottom: "pred"
  bottom: "label"
  top: "loss"
  hinge_loss_param {
    norm: L2
  }
}</code></pre>

Optional parameters:

norm [default L1]: selects the L1 or L2 norm

Input:

n * c * h * w Predictions

n * 1 * 1 * 1 Labels

Output:

1 * 1 * 1 * 1 Computed Loss

2.4 Sigmoid Cross-Entropy

Type: SIGMOID_CROSS_ENTROPY_LOSS

2.5 Infogain

Type: INFOGAIN_LOSS

2.6 Accuracy and Top-k

Type: ACCURACY

Computes the accuracy of the output with respect to the target. It is not actually a loss and has no backward step.

3. Activation / Neuron Layers

In general, activation layers are element-wise operations whose input and output have the same size; in most cases they are simply a nonlinear function.

3.1 ReLU / Rectified-Linear and Leaky-ReLU

Type: RELU

Example:

<pre><code>layers {
  name: "relu1"
  type: RELU
  bottom: "conv1"
  top: "conv1"
}</code></pre>

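Optional parameters:

negative_slope [default 0]: the slope applied to inputs below zero; a negative input x produces negative_slope * x.

ReLU is currently the most widely used activation function, mainly because it converges faster while achieving comparable results.

The standard ReLU function is max(x, 0). In general, the layer outputs x when x > 0 and negative_slope * x when x <= 0. The RELU layer supports in-place computation: the top and bottom blobs can be the same, which saves memory.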

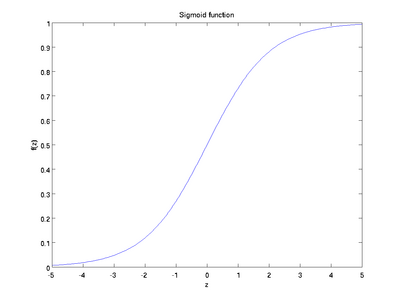
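The effect of negative_slope can be written in a couple of lines. This is a scalar sketch with an illustrative function name: with the default slope of 0 it is the standard ReLU, and with a small positive slope it becomes a leaky ReLU.

```python
def relu(x, negative_slope=0.0):
    """ReLU with an optional leak for negative inputs (illustrative sketch)."""
    return x if x > 0 else negative_slope * x


for value in (-2.0, 0.0, 3.0):
    print(value, relu(value), relu(value, negative_slope=0.1))
```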

3.2 Sigmoid

Type: SIGMOID

Example:

<pre><code>layers {
  name: "encode1neuron"
  bottom: "encode1"
  top: "encode1neuron"
  type: SIGMOID
}</code></pre>

The SIGMOID layer computes sigmoid(x) for each input x; the function is plotted below.

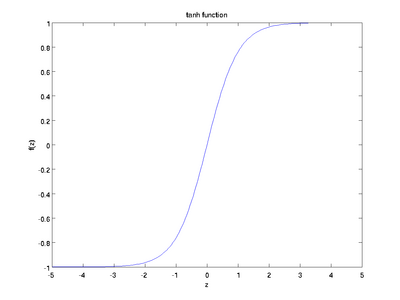

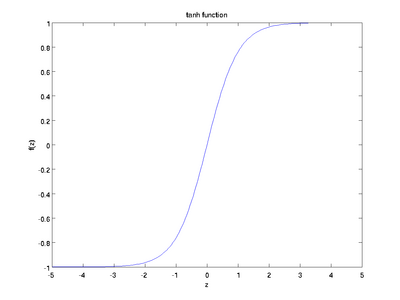

3.3 TanH / Hyperbolic Tangent

Type: TANH

Example:

<pre><code>layers {
  name: "encode1neuron"
  bottom: "encode1"
  top: "encode1neuron"
  type: TANH
}</code></pre>

The TANH layer computes tanh(x) for each input x.

3.4 Absolute Value

Type: ABSVAL

Example:

<pre><code>layers {
  name: "layer"
  bottom: "in"
  top: "out"
  type: ABSVAL
}</code></pre>

The ABSVAL layer computes abs(x) for each input x.

3.5 Power

Type: POWER

Example:

<pre><code>layers {
  name: "layer"
  bottom: "in"
  top: "out"
  type: POWER
  power_param {
    power: 1
    scale: 1
    shift: 0
  }
}</code></pre>

Optional parameters:

power [default 1]

scale [default 1]

shift [default 0]

The POWER layer computes (shift + scale * x) ^ power for each input x.

3.6 BNLL

Type: BNLL

Example:

<pre><code>layers {
  name: "layer"
  bottom: "in"
  top: "out"
  type: BNLL
}</code></pre>

The BNLL (binomial normal log likelihood) layer computes log(1 + exp(x)) for each input x.
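The POWER and BNLL formulas can be sketched as scalar functions (the names are illustrative, not Caffe APIs). For BNLL, evaluating log(1 + exp(x)) directly overflows for large x, so this sketch rewrites it as x + log(1 + exp(-x)) when x is positive; the rewrite is mathematically equivalent and is a choice made here for numerical safety.

```python
import math


def power_layer(x, power=1.0, scale=1.0, shift=0.0):
    """(shift + scale * x) ^ power, with the defaults acting as the identity."""
    return (shift + scale * x) ** power


def bnll(x):
    """log(1 + exp(x)), computed without overflow for large positive x."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


print(power_layer(2.0, power=2.0, scale=3.0, shift=1.0))
print(bnll(0.0), bnll(1000.0))
```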

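4. Data Layers

Data enters Caffe through data layers, which sit at the bottom of the network. Data can come from efficient databases (LevelDB or LMDB) or directly from memory. When efficiency is not critical, data can be read from disk as HDF5 files or common image formats.

4.1 Database

Type: DATA

Required parameters:

source: the name of the directory containing the database

batch_size: the number of inputs to process at one time

Optional parameters:

rand_skip: skip up to this many inputs at the start; useful for asynchronous stochastic gradient descent (SGD)

backend [default LEVELDB]: choose LEVELDB or LMDB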

4.2 In-Memory

Type: MEMORY_DATA

Required parameters:

batch_size, channels, height, width: specify the size of the data read from memory

The memory data layer reads data directly from memory, without copying it. In order to use it, one must call MemoryDataLayer::Reset (from C++) or Net.set_input_arrays (from Python) in order to specify a source of contiguous data (as 4D row major array), which is read one batch-sized chunk at a time.

4.3 HDF5 Input

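Type: HDF5_DATA

Required parameters:

source: the name of the file to read from

batch_size: the number of inputs to process at one time

4.4 HDF5 Output

Type: HDF5_OUTPUT

Required parameters:

file_name: the name of the output file

The HDF5 output layer works differently from the other layers in this section: it writes its input blobs to disk.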

4.5 Images

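Type: IMAGE_DATA

Required parameters:

source: the name of a text file in which each line gives an image filename and its label

batch_size: the number of images in a batch

Optional parameters:

rand_skip: skip up to this many inputs at the start; useful for asynchronous stochastic gradient descent (SGD)

shuffle [default false]

new_height, new_width: resize all images to this size

4.6 Windows

Type: WINDOW_DATA

4.7 Dummy

Type: DUMMY_DATA

The DUMMY_DATA layer is used for development and debugging. See DummyDataParameter for its parameters.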

5. Common Layers

5.1 Inner Product (fully connected layer)

Type: INNER_PRODUCT

Example:

<pre><code>layers {
  name: "fc8"
  type: INNER_PRODUCT
  blobs_lr: 1      # learning rate multiplier for the filters
  blobs_lr: 2      # learning rate multiplier for the biases
  weight_decay: 1  # weight decay multiplier for the filters
  weight_decay: 0  # weight decay multiplier for the biases
  inner_product_param {
    num_output: 1000
    weight_filler {
      type: "gaussian"
      std: 0.01
    }
    bias_filler {
      type: "constant"
      value: 0
    }
  }
  bottom: "fc7"
  top: "fc8"
}</code></pre>

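Required parameters:

num_output (c_o): the number of filters (outputs)

Optional parameters:

weight_filler [default type: 'constant' value: 0]: how the weights are initialized

bias_filler: how the biases are initialized

bias_term [default true]: whether to enable the bias term

Size change through the inner product layer:

Input: n * c_i * h_i * w_i

Output: n * c_o * 1 * 1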
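The shape rule and the resulting parameter count can be sketched as follows. The helper names are hypothetical, and the 4096-dimensional input used for fc8 is an assumption taken from AlexNet-style networks; the example above does not state the size of fc7.

```python
def inner_product_output_shape(n, c_in, h_in, w_in, num_output):
    """n * c_i * h_i * w_i  ->  n * c_o * 1 * 1."""
    return (n, num_output, 1, 1)


def inner_product_param_count(c_in, h_in, w_in, num_output, bias_term=True):
    """Every output connects to every input value, plus one bias per output."""
    weights = num_output * c_in * h_in * w_in
    return weights + (num_output if bias_term else 0)


# fc8 with num_output 1000 on an assumed 4096 x 1 x 1 input and a batch of 50.
print(inner_product_output_shape(50, 4096, 1, 1, num_output=1000))
print(inner_product_param_count(4096, 1, 1, num_output=1000))
```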

5.2 Splitting

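Type: SPLIT

The SPLIT layer splits one input blob into multiple output blobs. It is used when a blob needs to be fed into multiple output layers.

5.3 Flattening

Type: FLATTEN

The FLATTEN layer turns an input of size n * c * h * w into a simple vector of size n * (c*h*w) * 1 * 1.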

5.4 Concatenation

Type: CONCAT

Example:

<pre><code>layers {
  name: "concat"
  bottom: "in1"
  bottom: "in2"
  top: "out"
  type: CONCAT
  concat_param {
    concat_dim: 1
  }
}</code></pre>

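Optional parameters:

concat_dim [default 1]: 0 concatenates along num, 1 concatenates along channels

Size change through the concatenation layer:

Input: blobs 1 to K, each of size n_i * c_i * h * w

Output:

If concat_dim = 0: (n_1 + n_2 + ... + n_K) * c_1 * h * w; all inputs must have the same c_i.

If concat_dim = 1: n_1 * (c_1 + c_2 + ... + c_K) * h * w; all inputs must have the same n_i.

The CONCAT layer joins multiple blobs into a single blob.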
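The two shape rules can be expressed as a small shape checker. This is a sketch: the function name and the tuple representation of blob shapes are choices made for illustration.

```python
def concat_shape(shapes, concat_dim=1):
    """Output shape of concatenating 4-D blobs (n, c, h, w) along num or channels."""
    if concat_dim not in (0, 1):
        raise ValueError("concat_dim must be 0 (num) or 1 (channels)")
    first = shapes[0]
    for shape in shapes[1:]:
        for axis in range(4):
            # Every axis except the concatenation axis must match across inputs.
            if axis != concat_dim and shape[axis] != first[axis]:
                raise ValueError("shapes differ on axis %d" % axis)
    out = list(first)
    out[concat_dim] = sum(shape[concat_dim] for shape in shapes)
    return tuple(out)


print(concat_shape([(2, 3, 8, 8), (2, 5, 8, 8)], concat_dim=1))
print(concat_shape([(2, 3, 8, 8), (4, 3, 8, 8)], concat_dim=0))
```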

5.5 Slicing

The SLICE layer is a utility layer that slices an input layer to multiple output layers along a given dimension (currently num or channel only) with given slice indices.

5.6 Elementwise Operations

Type: ELTWISE

5.7 Argmax

Type: ARGMAX

5.8 Softmax

Type: SOFTMAX

5.9 Mean-Variance Normalization

Type: MVN
