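Logistic regression fits a regression model of the classification boundary to the existing data and uses that model to classify new samples. This exercise covers the gradient ascent and stochastic gradient ascent algorithms, and finally applies Logistic regression to predict the mortality of horses with colic. For reference, see the exercise [Exercise 3] Logistic Regression and Newton’s Method.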

Experiment basics

Gradient ascent for Logistic regression

```python
#!/usr/bin/env python
# coding=utf-8

from numpy import *

# loadDataSet: read testSet.txt; each line holds two features and a 0/1 label
def loadDataSet():
    dataMat = []; labelMat = []
    with open('testSet.txt') as fr:
        for line in fr:
            lineArr = line.strip().split()
            # prepend a constant 1.0 so that weights[0] acts as the intercept
            dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])
            labelMat.append(int(lineArr[2]))
    return dataMat, labelMat

# sigmoid function (named sigmod in this code)
def sigmod(inX):
    return 1.0 / (1 + exp(-inX))
```
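For large negative inputs, `exp(-inX)` in `sigmod` overflows to `inf`. The result still comes out as 0, but NumPy emits a `RuntimeWarning`. If that matters (for example with unscaled features), a common workaround is to evaluate the sigmoid through `exp(-|z|)`, which never overflows. The following is a sketch only; `stable_sigmoid` is a hypothetical name and not part of the original code.

```python
import numpy as np

def stable_sigmoid(z):
    """Sigmoid that only ever calls exp on a non-positive argument."""
    z = np.asarray(z, dtype=float)
    e = np.exp(-np.abs(z))  # always in (0, 1], so it cannot overflow
    # z >= 0: 1 / (1 + e^{-z});  z < 0: e^{z} / (1 + e^{z})
    return np.where(z >= 0, 1.0 / (1.0 + e), e / (1.0 + e))

print(stable_sigmoid([-1000.0, 0.0, 1000.0]))
```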

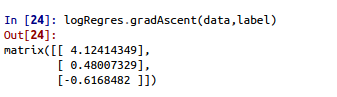
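The update line in `gradAscent` comes from maximizing the log-likelihood of the labels. Let X be the design matrix (one row per sample, with a first column of ones), y ∈ {0, 1} the labels, and σ the sigmoid. Under the standard Bernoulli model for the labels, the derivation is:

$$
\begin{aligned}
h_i &= \sigma(\mathbf{w}^\top \mathbf{x}_i), \qquad \sigma(z) = \frac{1}{1 + e^{-z}}, \qquad \sigma'(z) = \sigma(z)\bigl(1 - \sigma(z)\bigr) \\
\ell(\mathbf{w}) &= \sum_{i=1}^{m} \Bigl[ y_i \ln h_i + (1 - y_i) \ln (1 - h_i) \Bigr] \\
\nabla_{\mathbf{w}} \ell(\mathbf{w}) &= \sum_{i=1}^{m} (y_i - h_i)\, \mathbf{x}_i = X^\top (\mathbf{y} - \mathbf{h}) \\
\mathbf{w} &\leftarrow \mathbf{w} + \alpha\, X^\top (\mathbf{y} - \mathbf{h})
\end{aligned}
$$

In the code, `error` corresponds to y − h and `alpha` to α. The stochastic variants replace the sum over all m samples with a single term (y_i − h_i) x_i per update.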

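```python
# Batch gradient ascent: every update uses the whole training set
def gradAscent(dataMatIn, classLabels):
    dataMatrix = mat(dataMatIn)               # m x n feature matrix
    labelMat = mat(classLabels).transpose()   # m x 1 column of labels
    m, n = shape(dataMatrix)
    alpha = 0.001                             # step size
    maxCycles = 500                           # number of iterations
    weights = ones((n, 1))
    for k in range(maxCycles):
        h = sigmod(dataMatrix * weights)      # m x 1 predicted probabilities
        error = (labelMat - h)                # m x 1 residuals
        # step along the gradient of the log-likelihood, X^T (y - h)
        weights = weights + alpha * dataMatrix.transpose() * error
    return weights
```

Analyzing the data: plotting the decision boundary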

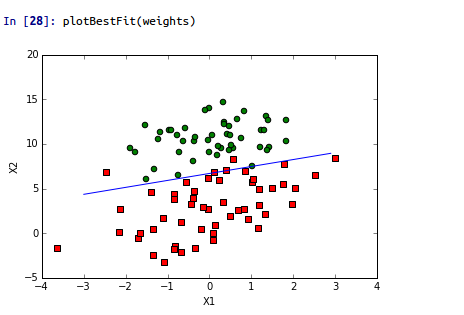
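`plotBestFit` draws the line along which the classifier is exactly undecided. Because `loadDataSet` prepends the constant feature x0 = 1, and σ(z) = 0.5 exactly when z = 0, the boundary (and the `y` value computed in the code) is given below. This assumes w2 ≠ 0:

$$
\sigma(w_0 + w_1 x_1 + w_2 x_2) = 0.5 \iff w_0 + w_1 x_1 + w_2 x_2 = 0 \iff x_2 = \frac{-w_0 - w_1 x_1}{w_2}
$$

Points with w0 + w1 x1 + w2 x2 > 0 fall on the class-1 side, and points with a negative value fall on the class-0 side. This matches the 0.5 threshold used in `classifyVector`.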

```python
# Plot the data set together with the fitted decision boundary
def plotBestFit(weights):
    import matplotlib.pyplot as plt
    # gradAscent returns an n x 1 matrix; flatten it so that weights[k] is a scalar
    weights = asarray(weights).flatten()
    dataMat, labelMat = loadDataSet()
    dataArr = array(dataMat)
    n = shape(dataArr)[0]
    xcord1 = []; ycord1 = []
    xcord2 = []; ycord2 = []
    for i in range(n):
        if int(labelMat[i]) == 1:
            xcord1.append(dataArr[i, 1]); ycord1.append(dataArr[i, 2])
        else:
            xcord2.append(dataArr[i, 1]); ycord2.append(dataArr[i, 2])
    fig = plt.figure()
    ax = fig.add_subplot(111)
    ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')
    ax.scatter(xcord2, ycord2, s=30, c='green')
    x = arange(-3.0, 3.0, 0.1)
    # boundary where w0 + w1*x1 + w2*x2 = 0, solved for x2
    y = (-weights[0] - weights[1] * x) / weights[2]
    ax.plot(x, y)
    plt.xlabel('X1'); plt.ylabel('X2')
    plt.show()
```

Training the algorithm
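`testSet.txt` is not included here. The following self-contained sketch therefore tries the improved stochastic scheme on synthetic data: alpha is adjusted on every iteration using the same schedule as `stocGradAscent1`, and samples are picked in random order. The two-blob data generator, the seeds, and the function name `sga_sketch` are all hypothetical. Instead of deleting entries from an index list, the sketch shuffles the indices with a NumPy permutation, so each sample is visited exactly once per pass.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sga_sketch(X, y, num_iter=150, seed=0):
    """Stochastic gradient ascent with a decaying step size.

    X: (m, n) array whose first column is all ones; y: (m,) array of 0/1 labels.
    """
    rng = np.random.default_rng(seed)
    m, n = X.shape
    w = np.ones(n)
    for j in range(num_iter):
        # visit every sample once per pass, in a fresh random order
        for i, k in enumerate(rng.permutation(m)):
            alpha = 4 / (1.0 + j + i) + 0.01  # same schedule as stocGradAscent1
            h = sigmoid(X[k] @ w)             # predicted probability for sample k
            w = w + alpha * (y[k] - h) * X[k]
    return w

# Hypothetical synthetic data: label 1 centered at (1, 1), label 0 at (-1, -1)
rng = np.random.default_rng(42)
pos = rng.normal(loc=1.0, scale=0.5, size=(50, 2))
neg = rng.normal(loc=-1.0, scale=0.5, size=(50, 2))
X = np.hstack([np.ones((100, 1)), np.vstack([pos, neg])])
y = np.concatenate([np.ones(50), np.zeros(50)])

w = sga_sketch(X, y)
accuracy = np.mean((sigmoid(X @ w) > 0.5) == y)
print("weights:", w, "training accuracy:", accuracy)
```

The resulting weights can be passed to `plotBestFit`-style plotting code to check the boundary visually.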

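```python
# Stochastic gradient ascent: update the weights using one sample at a time
def stocGradAscent0(dataMatrix, classLabels):
    m, n = shape(dataMatrix)
    alpha = 0.01
    weights = ones(n)
    for i in range(m):
        h = sigmod(sum(dataMatrix[i] * weights))
        error = classLabels[i] - h
        weights = weights + alpha * error * dataMatrix[i]
    return weights

# Improved stochastic gradient ascent:
# 1. alpha is adjusted on every iteration
# 2. samples are chosen at random (each one once per pass)
def stocGradAscent1(dataMatrix, classLabels, numIter=150):
    m, n = shape(dataMatrix)
    weights = ones(n)
    for j in range(numIter):
        dataIndex = list(range(m))  # indices not yet used in this pass
        for i in range(m):
            # alpha shrinks over time but never reaches 0
            alpha = 4 / (1.0 + j + i) + 0.01
            randIndex = int(random.uniform(0, len(dataIndex)))
            sampleIndex = dataIndex[randIndex]
            h = sigmod(sum(dataMatrix[sampleIndex] * weights))
            error = classLabels[sampleIndex] - h
            weights = weights + alpha * error * dataMatrix[sampleIndex]
            del dataIndex[randIndex]
    return weights

# Classify a feature vector: 1.0 if the predicted probability exceeds 0.5
def classifyVector(inX, weights):
    prob = sigmod(sum(inX * weights))
    if prob > 0.5:
        return 1.0
    else:
        return 0.0
```

Predicting mortality of horses with colic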

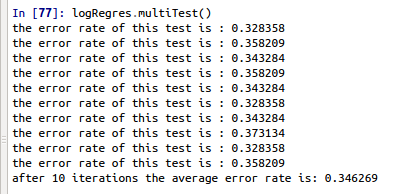

```python
# Train on horseColicTraining.txt, then report the error rate on horseColicTest.txt
def colicTest():
    trainingSet = []; trainingLabels = []
    with open('horseColicTraining.txt') as frTrain:
        for line in frTrain:
            currline = line.strip().split('\t')
            # the first 21 columns are features; column 21 is the label
            lineArr = [float(currline[i]) for i in range(21)]
            trainingSet.append(lineArr)
            trainingLabels.append(float(currline[21]))
    trainWeights = stocGradAscent1(array(trainingSet), trainingLabels, 500)
    errorCount = 0; numTestVec = 0.0
    with open('horseColicTest.txt') as frTest:
        for line in frTest:
            numTestVec += 1.0
            currline = line.strip().split('\t')
            lineArr = [float(currline[i]) for i in range(21)]
            # int(float(...)) accepts labels written as "1" or "1.000000"
            if int(classifyVector(array(lineArr), trainWeights)) != int(float(currline[21])):
                errorCount += 1
    errorRate = float(errorCount) / numTestVec
    print("the error rate of this test is: %f" % errorRate)
    return errorRate

# Run colicTest several times and average; results vary because stocGradAscent1 is random
def multiTest():
    numTests = 10; errorSum = 0.0
    for k in range(numTests):
        errorSum += colicTest()
    print("after %d iterations the average error rate is: %f" % (numTests, errorSum / float(numTests)))
```

Summary

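The goal of Logistic regression is to find the best-fit parameters for the nonlinear sigmoid function. The most commonly used optimization algorithm for this is gradient ascent. Stochastic gradient ascent simplifies it by updating the weights with one sample at a time instead of the whole data set.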

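An important problem in machine learning is how to handle missing data.

Some possible approaches:

- Fill missing values with the mean of the available values for that feature

- Fill them with a special value, such as -1

- Ignore samples that have missing values

- Fill missing values with the mean of similar samples

- Use another algorithm to predict the missing values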
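The first approach, filling with the mean of the available values, can be sketched with NumPy. The sketch assumes that missing entries are stored as NaN; `fill_with_column_mean` is a hypothetical helper, and the small array is made up for illustration.

```python
import numpy as np

def fill_with_column_mean(data):
    """Return a copy of data with each NaN replaced by its column's mean over non-missing values."""
    filled = np.array(data, dtype=float)       # copy, so the input is left untouched
    col_means = np.nanmean(filled, axis=0)     # column means that ignore NaN entries
    rows, cols = np.nonzero(np.isnan(filled))  # positions of the missing values
    filled[rows, cols] = col_means[cols]
    return filled

data = [[1.0, np.nan],
        [3.0, 4.0],
        [np.nan, 8.0]]
print(fill_with_column_mean(data))
```

A column that is entirely NaN has no mean, so `np.nanmean` warns and returns NaN for it. For the second approach, `np.nan_to_num(data, nan=-1.0)` substitutes a fixed value instead.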
