1、创建一个maven项目,项目的相关信息如下:

<groupId>cn.toto.spark</groupId>

<artifactId>bigdata</artifactId>

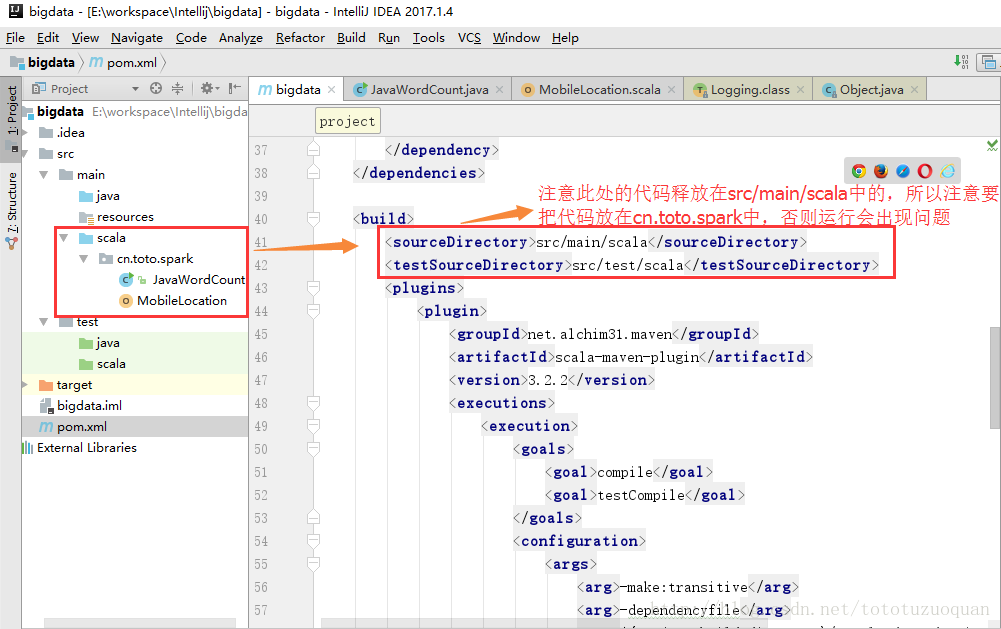

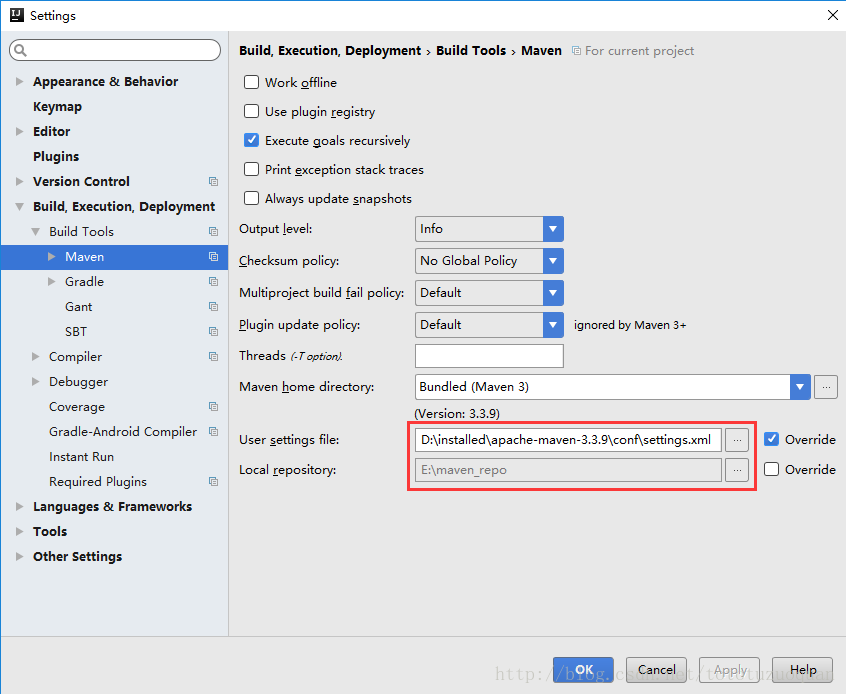

<version>1.0-SNAPSHOT</version>2、修改Maven仓库的位置配置:

3、首先要编写Maven的Pom文件

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>cn.toto.spark</groupId>
    <artifactId>bigdata</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <maven.compiler.source>1.7</maven.compiler.source>
        <maven.compiler.target>1.7</maven.compiler.target>
        <encoding>UTF-8</encoding>
        <scala.version>2.10.6</scala.version>
        <spark.version>1.6.2</spark.version>
        <hadoop.version>2.6.4</hadoop.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-library</artifactId>
            <version>${scala.version}</version>
        </dependency>
        <!-- The _2.10 suffix is the Scala binary version; it must match scala.version (2.10.x) -->
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.10</artifactId>
            <version>${spark.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>${hadoop.version}</version>
        </dependency>
    </dependencies>

    <build>
        <sourceDirectory>src/main/scala</sourceDirectory>
        <testSourceDirectory>src/test/scala</testSourceDirectory>
        <plugins>
            <!-- Compiles the Scala sources -->
            <plugin>
                <groupId>net.alchim31.maven</groupId>
                <artifactId>scala-maven-plugin</artifactId>
                <version>3.2.2</version>
                <executions>
                    <execution>
                        <goals>
                            <goal>compile</goal>
                            <goal>testCompile</goal>
                        </goals>
                        <configuration>
                            <args>
                                <arg>-make:transitive</arg>
                                <arg>-dependencyfile</arg>
                                <arg>${project.build.directory}/.scala_dependencies</arg>
                            </args>
                        </configuration>
                    </execution>
                </executions>
            </plugin>
            <!-- Packages the project and its dependencies into a single jar during "mvn package" -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-shade-plugin</artifactId>
                <version>2.4.3</version>
                <executions>
                    <execution>
                        <phase>package</phase>
                        <goals>
                            <goal>shade</goal>
                        </goals>
                        <configuration>
                            <filters>
                                <filter>
                                    <artifact>*:*</artifact>
                                    <!-- Drop signature files copied from signed dependency jars;
                                         left in the merged jar they cause "Invalid signature file digest" errors -->
                                    <excludes>
                                        <exclude>META-INF/*.SF</exclude>
                                        <exclude>META-INF/*.DSA</exclude>
                                        <exclude>META-INF/*.RSA</exclude>
                                    </excludes>
                                </filter>
                            </filters>
                        </configuration>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>
```

4. Write the Java code:

```java
package cn.toto.spark;

import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.FlatMapFunction;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.api.java.function.PairFunction;
import scala.Tuple2;

import java.util.Arrays;

/**
 * Created by toto on 2017/7/6.
 */
public class JavaWordCount {

    public static void main(String[] args) {
        SparkConf conf = new SparkConf().setAppName("JavaWordCount");
        // Create the Java SparkContext
        JavaSparkContext jsc = new JavaSparkContext(conf);
        // Read the input data (args[0])
        JavaRDD<String> lines = jsc.textFile(args[0]);
        // Split each line into words
        // (in Spark 1.x FlatMapFunction.call returns an Iterable; Spark 2.x changed it to an Iterator)
        JavaRDD<String> words = lines.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public Iterable<String> call(String line) throws Exception {
                return Arrays.asList(line.split(" "));
            }
        });
        // Emit a count of 1 for every word
        JavaPairRDD<String, Integer> wordAndOne = words.mapToPair(new PairFunction<String, String, Integer>() {
            @Override
            public Tuple2<String, Integer> call(String word) throws Exception {
                return new Tuple2<String, Integer>(word, 1);
            }
        });
        // Group by word and sum the counts
        JavaPairRDD<String, Integer> result = wordAndOne.reduceByKey(new Function2<Integer, Integer, Integer>() {
            @Override
            public Integer call(Integer i1, Integer i2) throws Exception {
                return i1 + i2;
            }
        });
        // Swap each pair to (count, word) so that the count becomes the key
        JavaPairRDD<Integer, String> swappedPair = result.mapToPair(new PairFunction<Tuple2<String, Integer>, Integer, String>() {
            @Override
            public Tuple2<Integer, String> call(Tuple2<String, Integer> tp) throws Exception {
                return new Tuple2<Integer, String>(tp._2(), tp._1());
            }
        });
        // Sort by count in descending order, then swap back to (word, count)
        JavaPairRDD<String, Integer> finalResult = swappedPair.sortByKey(false).mapToPair(new PairFunction<Tuple2<Integer, String>, String, Integer>() {
            @Override
            public Tuple2<String, Integer> call(Tuple2<Integer, String> tp) throws Exception {
                return tp.swap();
            }
        });
        // Save the result to the output directory (args[1])
        finalResult.saveAsTextFile(args[1]);
        jsc.stop();
    }
}
```

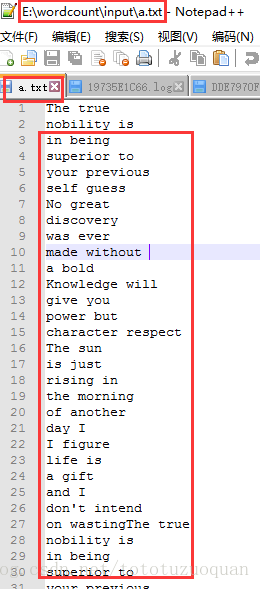
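To check the logic without a cluster, here is a minimal plain-Java sketch (Java 8+, no Spark dependency) that mirrors the steps of `JavaWordCount` on an in-memory list of lines. The class name `LocalWordCount` and the sample lines are hypothetical; a `HashMap` stands in for the distributed `reduceByKey`, and a single descending sort on the count stands in for the swap / `sortByKey(false)` / swap sequence.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class LocalWordCount {

    // Hypothetical in-memory stand-in for the RDD pipeline in JavaWordCount
    public static List<Map.Entry<String, Integer>> wordCount(List<String> lines) {
        Map<String, Integer> counts = new HashMap<>();
        for (String line : lines) {
            // flatMap: split each line on single spaces, exactly like line.split(" ")
            for (String word : line.split(" ")) {
                // mapToPair (word, 1) and reduceByKey (i1 + i2) merged into one step
                counts.merge(word, 1, Integer::sum);
            }
        }
        // swap / sortByKey(false) / swap collapsed into one descending sort on the count
        List<Map.Entry<String, Integer>> result = new ArrayList<>(counts.entrySet());
        result.sort((a, b) -> Integer.compare(b.getValue(), a.getValue()));
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical sample input, not the data used in this article
        List<String> lines = Arrays.asList("hello tom", "hello jerry", "hello tom");
        for (Map.Entry<String, Integer> e : wordCount(lines)) {
            // Same "(word,count)" format that saveAsTextFile produces for a Tuple2
            System.out.println("(" + e.getKey() + "," + e.getValue() + ")");
        }
    }
}
```

With the sample lines this prints `(hello,3)`, `(tom,2)` and `(jerry,1)`, one per line. Like the Spark program, splitting on a single space turns an empty line or two consecutive spaces into an empty-string "word"; splitting on a whitespace regex and dropping empty tokens would avoid that, but the counts would then differ from the original program's.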

5. Prepare the data

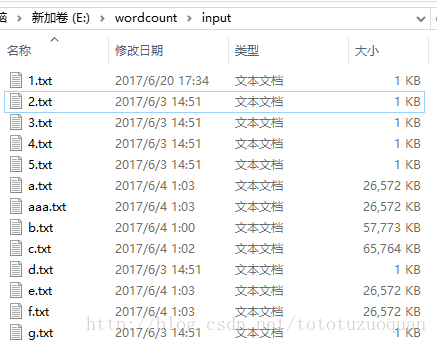

The input data is placed in `E:\wordcount\input`.

The contents of the input file:

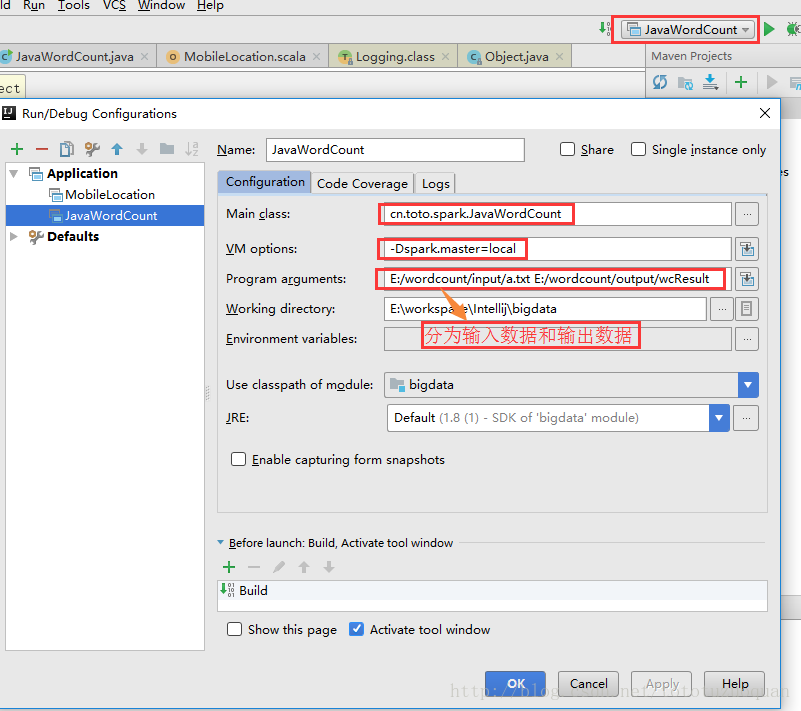
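Because `args[0]` points at a directory, `textFile` reads every file inside it. The plain-Java sketch below (hypothetical class `InputDirReader`, Java 8+) approximates that: it reads the lines of each regular file directly inside the directory and, like Hadoop's default input filter, skips names starting with `_` or `.`. It only illustrates which files end up in the input; it does not reproduce Hadoop's input splitting, glob patterns or compression handling.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

public class InputDirReader {

    // Reads all lines of the regular files directly inside dir, skipping names
    // that start with "_" or "." (for example _SUCCESS or hidden .crc files)
    public static List<String> readInputLines(Path dir) throws IOException {
        List<Path> files;
        try (Stream<Path> entries = Files.list(dir)) {
            files = entries
                    .filter(Files::isRegularFile)
                    .filter(p -> {
                        String name = p.getFileName().toString();
                        return !name.startsWith("_") && !name.startsWith(".");
                    })
                    .sorted() // deterministic order for this sketch only
                    .collect(Collectors.toList());
        }
        List<String> lines = new ArrayList<>();
        for (Path file : files) {
            lines.addAll(Files.readAllLines(file, StandardCharsets.UTF_8));
        }
        return lines;
    }

    public static void main(String[] args) throws IOException {
        // Falls back to the article's input directory when no argument is given
        Path dir = Paths.get(args.length > 0 ? args[0] : "E:\\wordcount\\input");
        for (String line : readInputLines(dir)) {
            System.out.println(line);
        }
    }
}
```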

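6. Pass the program arguments through the IDE's run configuration (`args[0]` is the input path, `args[1]` the output directory):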

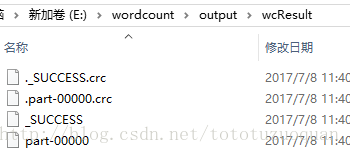

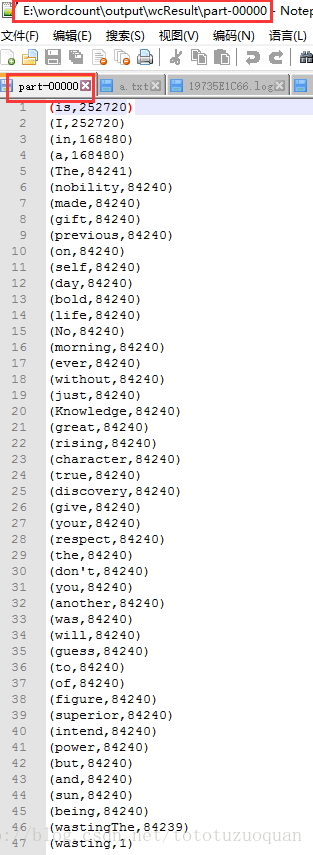
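Two things commonly break a run started from the IDE: `saveAsTextFile` fails if the output directory in `args[1]` already exists, and because `JavaWordCount` never calls `setMaster`, the master URL has to come from outside, for example the VM option `-Dspark.master=local[*]` (a `new SparkConf()` picks up `spark.*` system properties). The hypothetical helper below is a pre-flight check one could call at the top of `main`; it only inspects local paths through `java.io.File`, so it says nothing about `hdfs://` outputs.

```java
import java.io.File;

public class WordCountArgsCheck {

    // Returns null when the arguments look usable, otherwise a message describing the problem
    public static String check(String[] args) {
        if (args.length < 2) {
            return "usage: JavaWordCount <input path> <output directory>";
        }
        // saveAsTextFile refuses to overwrite an existing output directory
        if (new File(args[1]).exists()) {
            return "output directory already exists: " + args[1];
        }
        return null;
    }

    public static void main(String[] args) {
        String problem = check(args);
        System.out.println(problem == null ? "arguments OK" : problem);
    }
}
```

For example, with the hypothetical arguments `E:\wordcount\input E:\wordcount\output`, the check passes on the first run and reports the existing output directory on the next run unless it has been deleted in between.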

7. Run results:
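`saveAsTextFile` writes a directory rather than a single file: one `part-NNNNN` file per partition plus an empty `_SUCCESS` marker. Each line is the `toString` of one element, which for a `Tuple2` looks like `(hello,3)`. To post-process the result outside Spark, a hypothetical parser such as the one below could turn those lines back into word/count pairs. It splits at the last comma, because a "word" produced by splitting on spaces may itself contain commas.

```java
import java.util.AbstractMap;
import java.util.Map;

public class OutputLineParser {

    // Parses one output line of the form "(word,count)" into a word/count pair
    public static Map.Entry<String, Integer> parse(String line) {
        String trimmed = line.trim(); // also drops a trailing '\r' on Windows
        if (!trimmed.startsWith("(") || !trimmed.endsWith(")")) {
            throw new IllegalArgumentException("not a (word,count) line: " + line);
        }
        String body = trimmed.substring(1, trimmed.length() - 1);
        // Split at the LAST comma: the word itself may contain commas
        int comma = body.lastIndexOf(',');
        if (comma < 0) {
            throw new IllegalArgumentException("no comma in line: " + line);
        }
        String word = body.substring(0, comma);
        int count = Integer.parseInt(body.substring(comma + 1));
        return new AbstractMap.SimpleEntry<>(word, count);
    }

    public static void main(String[] args) {
        Map.Entry<String, Integer> e = parse("(hello,3)");
        System.out.println(e.getKey() + " -> " + e.getValue()); // prints "hello -> 3"
    }
}
```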

8. WordCount in Scala

The word count code is as follows:

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

/**
 * Created by ZhaoXing on 2016/6/30.
 */
object ScalaWordCount {

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("ScalaWordCount")
    // SparkContext: the entry point and a very important object
    val sc = new SparkContext(conf)
    // textFile creates two RDDs: HadoopRDD[LongWritable, Text] -> MapPartitionsRDD[String]
    val lines: RDD[String] = sc.textFile(args(0))
    // flatMap creates a MapPartitionsRDD[String]
    val words: RDD[String] = lines.flatMap(_.split(" "))
    // map creates a MapPartitionsRDD[(String, Int)]
    val wordAndOne: RDD[(String, Int)] = words.map((_, 1))
    // reduceByKey shuffles the pairs (ShuffledRDD) and sums the 1s for each word
    val counts: RDD[(String, Int)] = wordAndOne.reduceByKey(_ + _)
    // Sort by count in descending order
    val sortedCounts: RDD[(String, Int)] = counts.sortBy(_._2, false)
    // Save to HDFS; saving sortedCounts instead of counts gives output ordered like JavaWordCount
    //sortedCounts.saveAsTextFile(args(1))
    counts.saveAsTextFile(args(1))
    // Release the SparkContext
    sc.stop()
  }
}
```
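The two programs sort the counts differently: `JavaWordCount` swaps each pair to `(count, word)`, calls `sortByKey(false)` and swaps back, while `ScalaWordCount` calls `sortBy(_._2, false)` directly. The plain-Java sketch below (hypothetical class `SortByCountSketch`, Java 8+, no Spark) imitates both on an in-memory map to show that they give the same descending order of counts. In Spark, words with equal counts may still come out in a different order, since neither approach defines a tie-breaker. Note also that, as written, `ScalaWordCount` saves the unsorted `counts`; the sorted order only reaches the output if `sortedCounts` is saved instead.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class SortByCountSketch {

    // JavaWordCount style: (word, count) -> (count, word) -> sort by key descending -> (word, count)
    public static List<Map.Entry<String, Integer>> swapSortSwap(Map<String, Integer> counts) {
        List<Map.Entry<Integer, String>> swapped = new ArrayList<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            swapped.add(new AbstractMap.SimpleEntry<>(e.getValue(), e.getKey()));
        }
        swapped.sort(Map.Entry.<Integer, String>comparingByKey().reversed());
        List<Map.Entry<String, Integer>> result = new ArrayList<>();
        for (Map.Entry<Integer, String> e : swapped) {
            result.add(new AbstractMap.SimpleEntry<>(e.getValue(), e.getKey()));
        }
        return result;
    }

    // ScalaWordCount style: sortBy(_._2, false), i.e. sort directly by the count, descending
    public static List<Map.Entry<String, Integer>> sortByCount(Map<String, Integer> counts) {
        List<Map.Entry<String, Integer>> result = new ArrayList<>(counts.entrySet());
        result.sort(Map.Entry.<String, Integer>comparingByValue().reversed());
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical counts, not results from this article
        Map<String, Integer> counts = new HashMap<>();
        counts.put("hello", 3);
        counts.put("tom", 2);
        counts.put("jerry", 1);
        System.out.println(swapSortSwap(counts)); // [hello=3, tom=2, jerry=1]
        System.out.println(sortByCount(counts));  // [hello=3, tom=2, jerry=1]
    }
}
```

The direct sort avoids two extra passes over the data, which is one reason `sortBy` reads more naturally than the swap-sort-swap pattern.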
