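The system consists of four main modules: image preprocessing, single-can localization, character-block localization, and character recognition.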

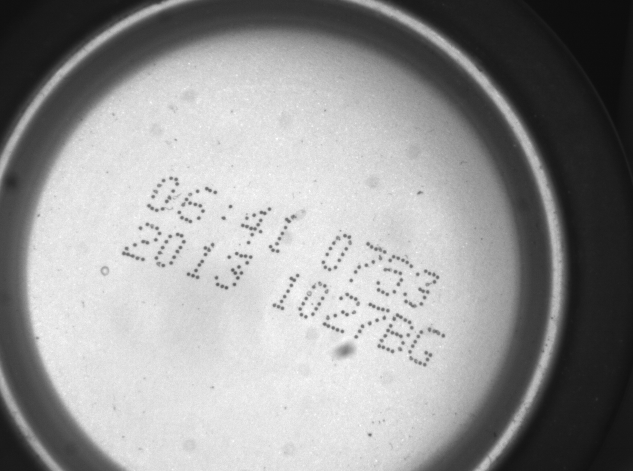

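1. Image preprocessing: compensate for uneven illumination, and use techniques such as histogram stretching to select the brightness range of interest so that the brightness distribution of the character region stands out. (Note: because the system has to run in real time, preprocessing is kept to a minimum.)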
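As a rough illustration of histogram stretching, here is a minimal sketch that linearly maps a chosen gray-level band onto the full 0 to 255 range. The function name `stretchContrast` and the band limits `lo` and `hi` are hypothetical; the original project does not say how it picks the band.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

// Linearly stretch the gray levels in [lo, hi] to the full [0, 255] range.
// Pixels darker than lo clamp to 0, pixels brighter than hi clamp to 255.
std::vector<std::uint8_t> stretchContrast(const std::vector<std::uint8_t>& gray,
                                          std::uint8_t lo, std::uint8_t hi)
{
    if (hi <= lo) {
        return gray;  // degenerate band: nothing to stretch
    }
    const int range = static_cast<int>(hi) - static_cast<int>(lo);
    std::vector<std::uint8_t> out(gray.size());
    for (std::size_t i = 0; i < gray.size(); ++i) {
        const int v = (static_cast<int>(gray[i]) - lo) * 255 / range;
        out[i] = static_cast<std::uint8_t>(std::min(255, std::max(0, v)));
    }
    return out;
}
```

A single pass like this is cheap, which fits the real-time constraint mentioned above.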

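2. Single-can localization: when one image contains several cans, the first step is to isolate a single can. Based on the can's shape, this can be done either by shape matching against the can outline, or by circle detection, for example a Hough circle transform or a wavelet-based circle detector.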

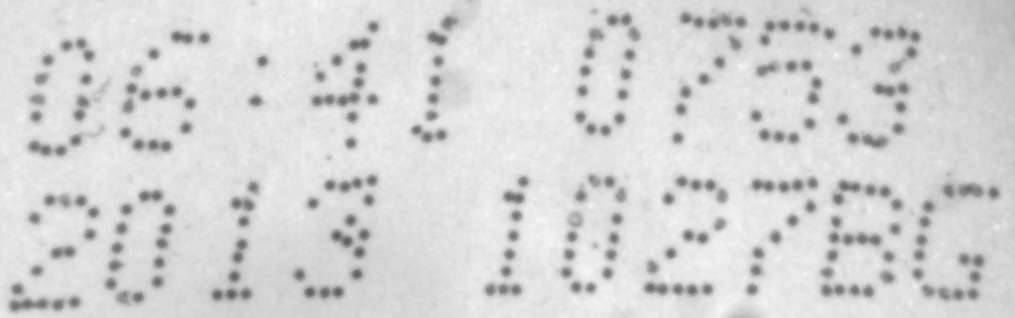

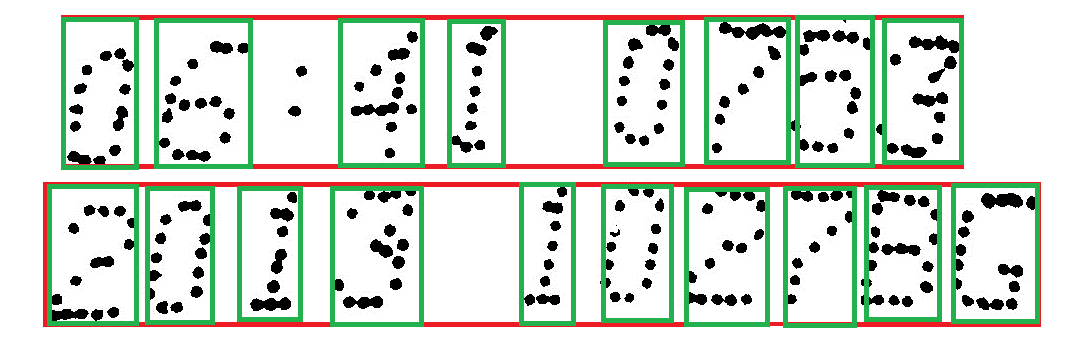
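To make the Hough circle idea concrete, here is a simplified sketch that assumes the can radius is already known, so only the centre has to be voted on. The names `Circle` and `houghCircleFixedRadius` are hypothetical, and a production system would more likely rely on a library implementation that also searches over the radius.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Circle { int cx; int cy; int votes; };

// Hough voting for circle centres of a known radius.
// edge is a row-major binary edge map (non-zero = edge pixel), width x height.
Circle houghCircleFixedRadius(const std::vector<unsigned char>& edge,
                              int width, int height, int radius)
{
    const double kPi = 3.14159265358979323846;
    std::vector<int> acc(static_cast<std::size_t>(width) * height, 0);

    // Every edge pixel votes for all centres lying `radius` away from it.
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            if (edge[y * width + x] == 0) continue;
            for (int deg = 0; deg < 360; ++deg) {
                const double t = deg * kPi / 180.0;
                const int cx = static_cast<int>(std::lround(x - radius * std::cos(t)));
                const int cy = static_cast<int>(std::lround(y - radius * std::sin(t)));
                if (cx >= 0 && cx < width && cy >= 0 && cy < height) {
                    ++acc[cy * width + cx];
                }
            }
        }
    }

    // The accumulator cell with the most votes is the most likely centre.
    Circle best = {0, 0, 0};
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            if (acc[y * width + x] > best.votes) {
                best.cx = x;
                best.cy = y;
                best.votes = acc[y * width + x];
            }
        }
    }
    return best;
}
```

With several cans in view, one would keep every accumulator peak above a vote threshold rather than only the single best one.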

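3. Character-block localization: because the character block can appear at any rotation, a morphological approach is used. The character region is first dilated so that the characters merge into a single connected blob. Contours are then detected and filtered by aspect ratio and area to find the position of the character block. Finally, a rectangle is fitted to the block's contour, and its tilt angle is used to deskew the region, which yields a horizontally aligned character block.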
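The dilate-then-filter part of this step can be sketched as follows. This is a simplified stand-in, not the project's code: it uses a 3x3 dilation and 4-connected blob labelling with axis-aligned bounding boxes, whereas the text fits a rotated rectangle to the contour and deskews by its angle, which is omitted here. `Box`, `dilate3x3`, `findCandidateBlocks`, and the thresholds are hypothetical names and parameters.

```cpp
#include <algorithm>
#include <queue>
#include <utility>
#include <vector>

struct Box { int x0, y0, x1, y1, area; };  // inclusive bounds; area = pixel count

// Binary 3x3 dilation, repeated `iterations` times, so nearby characters merge.
std::vector<unsigned char> dilate3x3(std::vector<unsigned char> img,
                                     int w, int h, int iterations)
{
    for (int it = 0; it < iterations; ++it) {
        std::vector<unsigned char> out(img.size(), 0);
        for (int y = 0; y < h; ++y)
            for (int x = 0; x < w; ++x) {
                if (!img[y * w + x]) continue;
                for (int dy = -1; dy <= 1; ++dy)
                    for (int dx = -1; dx <= 1; ++dx) {
                        const int nx = x + dx, ny = y + dy;
                        if (nx >= 0 && nx < w && ny >= 0 && ny < h) out[ny * w + nx] = 1;
                    }
            }
        img.swap(out);
    }
    return img;
}

// Label 4-connected blobs with a BFS and keep those whose pixel area and
// width/height ratio fall inside the given limits.
std::vector<Box> findCandidateBlocks(const std::vector<unsigned char>& bin, int w, int h,
                                     int minArea, double minAspect, double maxAspect)
{
    const int dx[4] = {1, -1, 0, 0};
    const int dy[4] = {0, 0, 1, -1};
    std::vector<unsigned char> seen(bin.size(), 0);
    std::vector<Box> result;
    for (int sy = 0; sy < h; ++sy)
        for (int sx = 0; sx < w; ++sx) {
            if (!bin[sy * w + sx] || seen[sy * w + sx]) continue;
            Box b = {sx, sy, sx, sy, 0};
            std::queue<std::pair<int, int> > q;
            q.push(std::make_pair(sx, sy));
            seen[sy * w + sx] = 1;
            while (!q.empty()) {
                const std::pair<int, int> p = q.front();
                q.pop();
                ++b.area;
                b.x0 = std::min(b.x0, p.first);  b.x1 = std::max(b.x1, p.first);
                b.y0 = std::min(b.y0, p.second); b.y1 = std::max(b.y1, p.second);
                for (int d = 0; d < 4; ++d) {
                    const int nx = p.first + dx[d], ny = p.second + dy[d];
                    if (nx < 0 || nx >= w || ny < 0 || ny >= h) continue;
                    if (!bin[ny * w + nx] || seen[ny * w + nx]) continue;
                    seen[ny * w + nx] = 1;
                    q.push(std::make_pair(nx, ny));
                }
            }
            const double aspect = static_cast<double>(b.x1 - b.x0 + 1) / (b.y1 - b.y0 + 1);
            if (b.area >= minArea && aspect >= minAspect && aspect <= maxAspect)
                result.push_back(b);
        }
    return result;
}
```

The area filter discards isolated noise, while the aspect-ratio filter keeps only blobs that are elongated like a line of text.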

4. Character recognition: this covers segmentation of individual characters, sample selection and processing, training, and recognition.

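(1) Single-character segmentation: working on the deskewed image from step 3, first project the foreground pixels onto the vertical axis (per-row sums) to split the character region into text lines. Then, for each line, project onto the horizontal axis (per-column sums) and, because the character width is fixed, cut the line into individual characters.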
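The projection-based split can be sketched like this, assuming a binary image where non-zero means foreground. All function names and the `charWidth` parameter are hypothetical; the original post does not show its segmentation code.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Count foreground pixels in each row (used to find text lines).
std::vector<int> rowProfile(const std::vector<unsigned char>& bin, int w, int h)
{
    std::vector<int> profile(h, 0);
    for (int y = 0; y < h; ++y)
        for (int x = 0; x < w; ++x)
            if (bin[y * w + x]) ++profile[y];
    return profile;
}

// Count foreground pixels in each column, restricted to rows [y0, y1).
std::vector<int> columnProfile(const std::vector<unsigned char>& bin, int w, int y0, int y1)
{
    std::vector<int> profile(w, 0);
    for (int y = y0; y < y1; ++y)
        for (int x = 0; x < w; ++x)
            if (bin[y * w + x]) ++profile[x];
    return profile;
}

// Return the half-open [start, end) runs where the profile is non-zero.
std::vector<std::pair<int, int> > nonEmptyRuns(const std::vector<int>& profile)
{
    std::vector<std::pair<int, int> > runs;
    int start = -1;
    const int n = static_cast<int>(profile.size());
    for (int i = 0; i < n; ++i) {
        if (profile[i] > 0 && start < 0) start = i;
        if (profile[i] == 0 && start >= 0) {
            runs.push_back(std::make_pair(start, i));
            start = -1;
        }
    }
    if (start >= 0) runs.push_back(std::make_pair(start, n));
    return runs;
}

// Cut the horizontal extent [x0, x1) of a line into cells of fixed width.
std::vector<std::pair<int, int> > splitFixedWidth(int x0, int x1, int charWidth)
{
    std::vector<std::pair<int, int> > cells;
    for (int x = x0; x + charWidth <= x1; x += charWidth)
        cells.push_back(std::make_pair(x, x + charWidth));
    return cells;
}
```

Cutting by a fixed width rather than by gaps in the column profile keeps touching characters apart, at the cost of needing a reliable width estimate.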

(2) Sort the individual characters into classes and apply initial preprocessing to the samples: enhancement and normalization.

All samples are normalized to 28×28 pixels. (Note that the input layer constructed in the code below is 29×29.)
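Size normalization could be done with something as simple as nearest-neighbour resampling. The sketch below is an assumption about how that might look; the post does not say which interpolation the project uses, and `resizeNearest` is a hypothetical name.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Resample a row-major grayscale image to dstW x dstH with nearest-neighbour
// sampling: each destination pixel copies the source pixel it maps onto.
std::vector<unsigned char> resizeNearest(const std::vector<unsigned char>& src,
                                         int srcW, int srcH, int dstW, int dstH)
{
    assert(srcW > 0 && srcH > 0 && dstW > 0 && dstH > 0);
    assert(src.size() == static_cast<std::size_t>(srcW) * srcH);
    std::vector<unsigned char> dst(static_cast<std::size_t>(dstW) * dstH);
    for (int y = 0; y < dstH; ++y) {
        const int sy = y * srcH / dstH;  // source row for this destination row
        for (int x = 0; x < dstW; ++x) {
            const int sx = x * srcW / dstW;  // source column
            dst[y * dstW + x] = src[sy * srcW + sx];
        }
    }
    return dst;
}
```

Calling `resizeNearest(sample, w, h, 28, 28)` on each segmented character would give every sample the same 28×28 footprint.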

(3) Train a convolutional neural network (CNN) on the characters.

The convolutional structure used in this project is as follows:

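The first layer has a single feature map, which is the input image itself. In each subsequent layer, every feature map holds a set of distinct kernels (2D arrays of weights), one for each feature map in the previous layer. All kernels in a layer have the same size, which is a design parameter. The pixel values of a feature map are computed by convolving each of its kernels with the corresponding feature map of the previous layer. The number of feature maps in the last layer equals the number of output classes. For 0-9 digit recognition, for example, the output layer has 10 feature maps, and the feature map with the highest pixel value gives the result.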
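To illustrate how one feature map is built from the previous layer, here is a minimal sketch. It assumes square maps, a sliding step between kernel positions, and no padding, and it returns the weighted sums before any activation function is applied. `Map` and `convolveFeatureMap` are hypothetical names, not taken from the project's `cnn.h`. As in most CNN code, the kernel is applied without flipping, so strictly speaking this is a cross-correlation.

```cpp
#include <cstddef>
#include <vector>

typedef std::vector<double> Map;  // row-major square feature map

// Compute one output feature map.
// prev:    the feature maps of the previous layer, each prevSize x prevSize
// kernels: one kernelSize x kernelSize kernel per previous feature map
// step:    distance between neighbouring kernel positions
Map convolveFeatureMap(const std::vector<Map>& prev, int prevSize,
                       const std::vector<Map>& kernels, int kernelSize,
                       double bias, int step)
{
    const int outSize = (prevSize - kernelSize) / step + 1;
    Map out(static_cast<std::size_t>(outSize) * outSize, bias);  // start from the bias
    for (std::size_t k = 0; k < prev.size(); ++k) {
        for (int oy = 0; oy < outSize; ++oy) {
            for (int ox = 0; ox < outSize; ++ox) {
                double sum = 0.0;
                for (int ky = 0; ky < kernelSize; ++ky)
                    for (int kx = 0; kx < kernelSize; ++kx)
                        sum += prev[k][(oy * step + ky) * prevSize + (ox * step + kx)]
                             * kernels[k][ky * kernelSize + kx];
                out[oy * outSize + ox] += sum;  // accumulate over previous maps
            }
        }
    }
    return out;
}
```

Each output pixel therefore sums contributions from every feature map of the previous layer, which is why a feature map needs one kernel per previous map.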

#include "cnn.h"

#include <stdlib.h>

#include <stdio.h>

#include <time.h> /* needed for time(), which is used to seed srand() */

CCNN::CCNN(void)

{

ConstructNN();

}

CCNN::~CCNN(void)

{

DeleteNN();

}

#ifndef max

#define max(a,b) (((a) > (b)) ? (a) : (b))

#endif

////////////////////////////////////

void CCNN::ConstructNN()

////////////////////////////////////

{

int i;

m_nLayer = 5;

m_Layer = new Layer[m_nLayer];

m_Layer[0].pLayerPrev = NULL;

for(i=1; i<m_nLayer; i++) m_Layer[i].pLayerPrev = &m_Layer[i-1];

m_Layer[0].Construct ( INPUT_LAYER, 1, 29, 0, 0 );

m_Layer[1].Construct ( CONVOLUTIONAL, 6, 13, 5, 2 );

m_Layer[2].Construct ( CONVOLUTIONAL, 50, 5, 5, 2 );

m_Layer[3].Construct ( FULLY_CONNECTED, 100, 1, 5, 1 );

m_Layer[4].Construct ( FULLY_CONNECTED, 10, 1, 1, 1 );

}
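The sizes passed to `Construct` above can be checked with a little arithmetic. This assumes the arguments are (layer type, number of feature maps, feature-map side length, kernel size, sampling step); `cnn.h` is not shown here, so that reading is a guess. `featureMapSize` is a hypothetical helper, not part of the project.

```cpp
#include <cassert>

// Side length of the map produced by sliding a kernelSize x kernelSize window
// over an inputSize x inputSize map with the given step and no padding.
int featureMapSize(int inputSize, int kernelSize, int step)
{
    assert(step > 0 && kernelSize > 0 && inputSize >= kernelSize);
    return (inputSize - kernelSize) / step + 1;
}
```

Under that reading, a 29×29 input with a 5×5 kernel and step 2 gives 13×13 maps, 13×13 gives 5×5, and a 5×5 kernel over a 5×5 map with step 1 gives 1×1, which matches the values in `ConstructNN`.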

///////////////////////////////

void CCNN::DeleteNN()

///////////////////////////////

{

//SaveWeights("weights_updated.txt");

for(int i=0; i<m_nLayer; i++) m_Layer[i].Delete();

}

//////////////////////////////////////////////

void CCNN::LoadWeightsRandom()

/////////////////////////////////////////////

{

int i, j, k, m;

srand((unsigned)time(0));

for ( i=1; i<m_nLayer; i++ )

{

for( j=0; j<m_Layer[i].m_nFeatureMap; j++ )

{

m_Layer[i].m_FeatureMap[j].bias = 0.05 * RANDOM_PLUS_MINUS_ONE;

for(k=0; k<m_Layer[i].pLayerPrev->m_nFeatureMap; k++)

for(m=0; m < m_Layer[i].m_KernelSize * m_Layer[i].m_KernelSize; m++)

m_Layer[i].m_FeatureMap[j].kernel[k][m] = 0.05 * RANDOM_PLUS_MINUS_ONE;

}

}

}

//////////////////////////////////////////////

void CCNN::LoadWeights(char *FileName)

/////////////////////////////////////////////

{

int i, j, k, m, n;

FILE *f;

if((f = fopen(FileName, "r")) == NULL) return;

for ( i=1; i<m_nLayer; i++ )

{

for( j=0; j<m_Layer[i].m_nFeatureMap; j++ )

{

fscanf(f, "%lg ", &m_Layer[i].m_FeatureMap[j].bias);

for(k=0; k<m_Layer[i].pLayerPrev->m_nFeatureMap; k++)

for(m=0; m < m_Layer[i].m_KernelSize * m_Layer[i].m_KernelSize; m++)

fscanf(f, "%lg ", &m_Layer[i].m_FeatureMap[j].kernel[k][m]);

}

}

fclose(f);

}

//////////////////////////////////////////////

void CCNN::SaveWeights(char *FileName)

/////////////////////////////////////////////

{

int i, j, k, m;

FILE *f;

if((f = fopen(FileName, "w")) == NULL) return;

for ( i=1; i<m_nLayer; i++ )

{

for( j=0; j<m_Layer[i].m_nFeatureMap; j++ )

{

fprintf(f, "%lg ", m_Layer[i].m_FeatureMap[j].bias);

for(k=0; k<m_Layer[i].pLayerPrev->m_nFeatureMap; k++)

for(m=0; m < m_Layer[i].m_KernelSize * m_Layer[i].m_KernelSize; m++)

{

fprintf(f, "%lg ", m_Layer[i].m_FeatureMap[j].kernel[k][m]);

}

}

}

fclose(f);

}

//////////////////////////////////////////////////////////////////////////

int CCNN::Calculate(double *input, double *output)

//////////////////////////////////////////////////////////////////////////

{

int i, j;

//copy input to layer 0

for(i=0; i<m_Layer[0].m_nFeatureMap; i++)

for(j=0; j < m_Layer[0].m_FeatureSize * m_Layer[0].m_FeatureSize; j++)

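m_Layer[0].m_FeatureMap[i].value[j] = input[i * m_Layer[0].m_FeatureSize * m_Layer[0].m_FeatureSize + j]; //index by map i; same result as before for the single input map used here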

//forward propagation

//calculate values of neurons in each layer

for(i=1; i<m_nLayer; i++)

{

//initialization of feature maps to ZERO

for(j=0; j<m_Layer[i].m_nFeatureMap; j++)

m_Layer[i].m_FeatureMap[j].Clear();

//forward propagation from layer[i-1] to layer[i]

m_Layer[i].Calculate();

}

//copy last layer values to output

for(i=0; i<m_Layer[m_nLayer-1].m_nFeatureMap; i++)

output[i] = m_Layer[m_nLayer-1].m_FeatureMap[i].value[0];

///================================

/*FILE *f;

char fileName[100];

for(i=0; i<m_nLayer; i++)

{

sprintf(fileName, "layer0%d.txt", i);

f = fopen(fileName, "w");

for(j=0; j<m_Layer[i].m_nFeatureMap; j++)

{

for(k=0; k<m_Layer[i].m_FeatureSize * m_Layer[i].m_FeatureSize; k++)

{

if(k%m_Layer[i].m_FeatureSize == 0) fprintf(f, "\n");

fprintf(f, "%10.7lg\t", m_Layer[i].m_FeatureMap[j].value[k]);

}

fprintf(f, "\n\n\n");

}

fclose(f);

}*/

///==================================

//get index of highest scoring output feature

j = 0;

for(i=1; i<m_Layer[m_nLayer-1].m_nFeatureMap; i++)

if(output[i] > output[j]) j = i;

return j;

}

///////////////////////////////////////////////////////////

void CCNN::BackPropagate(double *desiredOutput, double eta)

///////////////////////////////////////////////////////////

{

int i ;

//derivative of the error in last layer

//calculated as difference between actual and desired output (eq. 2)

for(i=0; i<m_Layer[m_nLayer-1].m_nFeatureMap; i++)

{

m_Layer[m_nLayer-1].m_FeatureMap[i].dError[0] =

m_Layer[m_nLayer-1].m_FeatureMap[i].value[0] - desiredOutput[i];

}

double mse=0.0;

for ( i=0; i<m_Layer[m_nLayer-1].m_nFeatureMap; i++ ) //use the output layer size instead of a hard-coded 10

{

mse += m_Layer[m_nLayer-1].m_FeatureMap[i].dError[0] * m_Layer[m_nLayer-1].m_FeatureMap[i].dError[0];

}

//backpropagate through rest of the layers

for(i=m_nLayer-1; i>0; i--)

{

m_Layer[i].BackPropagate(1, eta);

}

///================================

//for debugging: write dError for each feature map in each layer to a file

/*

FILE *f;

char fileName[100];

for(i=0; i<m_nLayer; i++)

{

sprintf(fileName, "backlx0%d.txt", i);

f = fopen(fileName, "w");

for(j=0; j<m_Layer[i].m_nFeatureMap; j++)

{

for(k=0; k<m_Layer[i].m_FeatureSize * m_Layer[i].m_FeatureSize; k++)

{

if(k%m_Layer[i].m_FeatureSize == 0) fprintf(f, "\n");

fprintf(f, "%10.7lg\n", m_Layer[i].m_FeatureMap[j].dError[k]);

}

fprintf(f, "\n\n\n");

}

fclose(f);

}

//---------------------------------

//for debugging: write dErr_wrtw for each feature map in each layer to a file

for(i=1; i<m_nLayer; i++)

{

sprintf(fileName, "backlw0%d.txt", i);

f = fopen(fileName, "w");

for(j=0; j<m_Layer[i].m_nFeatureMap; j++)

{

fprintf(f, "%10.7lg\n", m_Layer[i].m_FeatureMap[j].dErr_wrtb);

for(k=0; k<m_Layer[i].pLayerPrev->m_nFeatureMap; k++)

{

for(int m=0; m < m_Layer[i].m_KernelSize * m_Layer[i].m_KernelSize; m++)

{

fprintf(f, "%10.7lg\n", m_Layer[i].m_FeatureMap[j].dErr_wrtw[k][m]);

}

fprintf(f, "\n\n\n");

}

}

fclose(f);

}

///==================================

*/

int t=0;

}

////////////////////////////////////////////////

void CCNN::CalculateHessian( )

////////////////////////////////////////////////

{

int i, j, k ;

//2nd derivative of the error wrt Xn in last layer

//it is always 1

//Xn is the output after applying SIGMOID

for(i=0; i<m_Layer[m_

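This article describes a character recognition system for can bottoms in four steps: image preprocessing, can localization, character-block localization, and character recognition. It combines shape matching, circle detection, morphological operations, and a CNN, with the aim of high-accuracy character recognition.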
