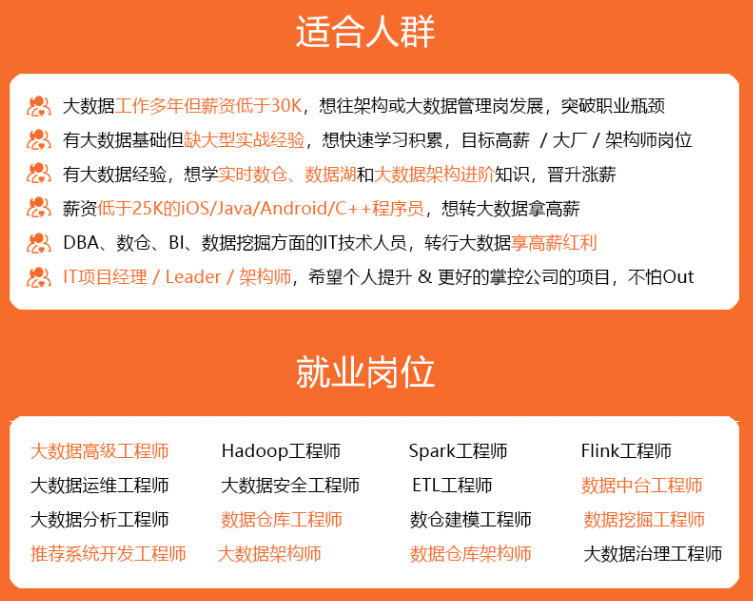

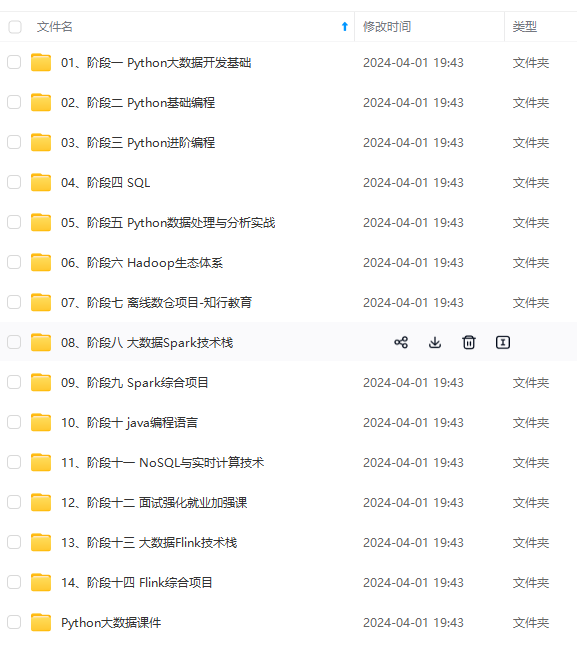

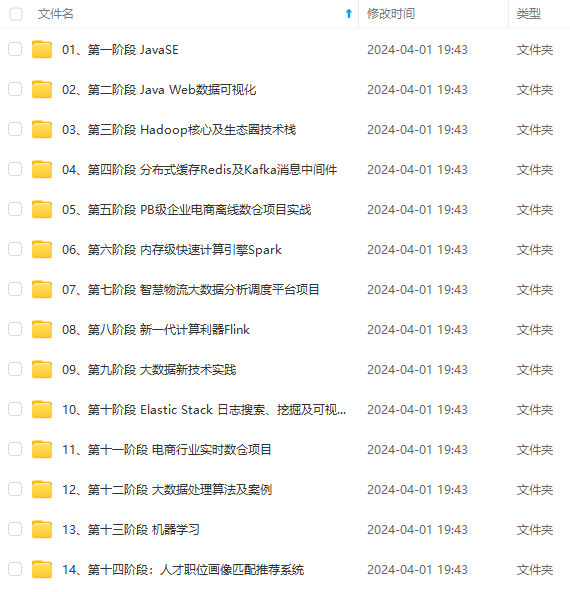

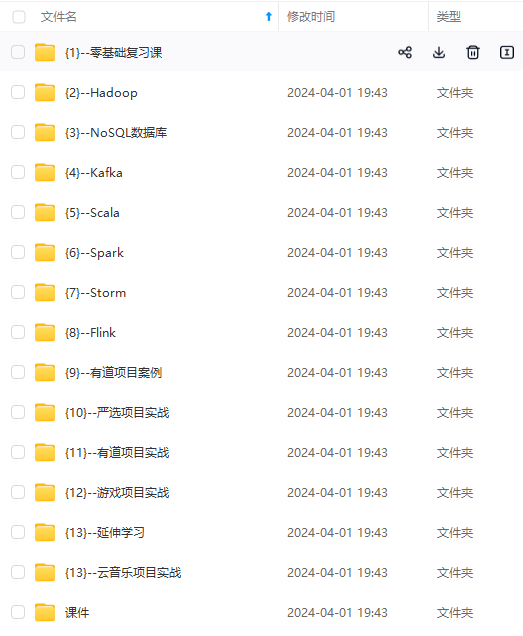

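When looking for the dataset, I verified that the copies hosted on Baidu PaddlePaddle and on GitHub are identical.

Downloading from GitHub is slow, so you can use wget to download directly from the Baidu PaddlePaddle dataset URL instead, which is very fast.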
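
If you want to repeat that check after downloading from a mirror, one option is to compare file digests. The following is a minimal sketch using only the standard library; the function names are hypothetical and the file paths are whatever you downloaded.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_content(path_a, path_b):
    """True when both files contain identical bytes (compared via their digests)."""
    return sha256_of_file(path_a) == sha256_of_file(path_b)
```

Reading in chunks matters here because the imaging volumes can be large; loading a whole file into memory just to hash it is unnecessary.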

The raw data is organized as shown below. nnUNet requires a structured dataset, so a simple conversion is needed before use.

    root@worker04:~/data# tree data/KiTS19/origin
    data/KiTS19/origin
    |-- case_00000
    |   |-- imaging.nii.gz
    |   `-- segmentation.nii.gz
    |-- case_00001
    |   |-- imaging.nii.gz
    |   `-- segmentation.nii.gz
    |-- case_00002
    |   |-- imaging.nii.gz
    |   `-- segmentation.nii.gz
    |-- case_00003
    |   |-- imaging.nii.gz
    |   `-- segmentation.nii.gz
    ......
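
Before converting, it can be worth confirming that every case folder really contains the files you expect. This is a small sketch, not part of nnUNet; `find_incomplete_cases` and its `required` parameter are hypothetical names introduced for illustration.

```python
import os


def find_incomplete_cases(origin_dir, required=("imaging.nii.gz",)):
    """Return {case_name: [missing files]} for case_* folders lacking a required file."""
    problems = {}
    for name in sorted(os.listdir(origin_dir)):
        case_dir = os.path.join(origin_dir, name)
        # Only look at directories that follow the case_XXXXX naming scheme
        if not (name.startswith("case_") and os.path.isdir(case_dir)):
            continue
        missing = [f for f in required
                   if not os.path.isfile(os.path.join(case_dir, f))]
        if missing:
            problems[name] = missing
    return problems
```

Calling it with `required=("imaging.nii.gz", "segmentation.nii.gz")` is stricter. Note that the conversion script in this post only copies segmentation.nii.gz for cases below 210, so later cases may legitimately show up as missing a segmentation.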

Below is the code I adapted from dataset_conversion/Task040_KiTS.py in nnunet.

    import os
    import json
    import shutil


    def save_json(obj, file, indent=4, sort_keys=True):
        with open(file, 'w') as f:
            json.dump(obj, f, sort_keys=sort_keys, indent=indent)


    def maybe_mkdir_p(directory):
        directory = os.path.abspath(directory)
        splits = directory.split("/")[1:]
        for i in range(0, len(splits)):
            if not os.path.isdir(os.path.join("/", *splits[:i+1])):
                try:
                    os.mkdir(os.path.join("/", *splits[:i+1]))
                except FileExistsError:
                    # this can sometimes happen when two jobs try to create the same directory at the same time,
                    # especially on network drives.
                    print("WARNING: Folder %s already existed and does not need to be created" % directory)


    def subdirs(folder, join=True, prefix=None, suffix=None, sort=True):
        if join:
            l = os.path.join
        else:
            l = lambda x, y: y
        res = [l(folder, i) for i in os.listdir(folder) if os.path.isdir(os.path.join(folder, i))
               and (prefix is None or i.startswith(prefix))
               and (suffix is None or i.endswith(suffix))]
        if sort:
            res.sort()
        return res


    base = "/root/data/data/KiTS19/origin"
    out = "/root/data/nnUNet_raw_data_base/nnUNet_raw_data/Task040_KiTS"
    cases = subdirs(base, join=False)

    maybe_mkdir_p(out)
    maybe_mkdir_p(os.path.join(out, "imagesTr"))
    maybe_mkdir_p(os.path.join(out, "imagesTs"))
    maybe_mkdir_p(os.path.join(out, "labelsTr"))

    for c in cases:
        case_id = int(c.split("_")[-1])
        if case_id < 210:
            shutil.copy(os.path.join(base, c, "imaging.nii.gz"), os.path.join(out, "imagesTr", c + "_0000.nii.gz"))
            shutil.copy(os.path.join(base, c, "segmentation.nii.gz"), os.path.join(out, "labelsTr", c + ".nii.gz"))
        else:
            shutil.copy(os.path.join(base, c, "imaging.nii.gz"), os.path.join(out, "imagesTs", c + "_0000.nii.gz"))
        print(case_id, ' done!')

    json_dict = {}
    json_dict['name'] = "KiTS"
    json_dict['description'] = "kidney and kidney tumor segmentation"
    json_dict['tensorImageSize'] = "4D"
    json_dict['reference'] = "KiTS data for nnunet"
    json_dict['licence'] = ""
    json_dict['release'] = "0.0"
    json_dict['modality'] = {
        "0": "CT",
    }
    json_dict['labels'] = {
        "0": "background",
        "1": "Kidney",
        "2": "Tumor"
    }
    json_dict['numTraining'] = 210
    json_dict['numTest'] = 90
    json_dict['training'] = [{'image': "./imagesTr/%s.nii.gz" % i, "label": "./labelsTr/%s.nii.gz" % i} for i in
                             cases[:210]]
    json_dict['test'] = ["./imagesTs/%s.nii.gz" % i for i in
                         cases[210:]]

    save_json(json_dict, os.path.join(out, "dataset.json"))

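This only copies and renames the dataset; the raw data itself is not modified.

After running the code, the organized dataset has the following structure: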

    nnUNet_raw_data_base/nnUNet_raw_data/Task040_KiTS
    ├── dataset.json
    ├── imagesTr
    │   ├── case_00000_0000.nii.gz
    │   ├── case_00001_0000.nii.gz
    │   ├── ...
    ├── imagesTs
    │   ├── case_00210_0000.nii.gz
    │   ├── case_00211_0000.nii.gz
    │   ├── ...
    ├── labelsTr
    │   ├── case_00000.nii.gz
    │   ├── case_00001.nii.gz
    │   ├── ...

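The dataset.json file records the training images, the training labels, the test images, and related information.

The preprocessing stage reads images according to dataset.json, so if you want to exclude a case, just edit dataset.json.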

    {
        "description": "kidney and kidney tumor segmentation",
        "labels": {
            "0": "background",
            "1": "Kidney",
            "2": "Tumor"
        },
        "licence": "",
        "modality": {
            "0": "CT"
        },
        "name": "KiTS",
        "numTest": 90,
        "numTraining": 210,
        "reference": "KiTS data for nnunet",
        "release": "0.0",
        "tensorImageSize": "4D",
        "test": [
            "./imagesTs/case_00210.nii.gz",
            "./imagesTs/case_00211.nii.gz",
            ......
        ],
        "training": [
            {
                "image": "./imagesTr/case_00000.nii.gz",
                "label": "./labelsTr/case_00000.nii.gz"
            },
            {
                "image": "./imagesTr/case_00001.nii.gz",
                "label": "./labelsTr/case_00001.nii.gz"
            },
            ......
        ]
    }
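
Excluding a case by hand works, but it is easy to forget to update numTraining. As a rough sketch (the helper names `drop_training_case` and `drop_case_from_file` are hypothetical, and keeping numTraining in sync is my own choice rather than something nnUNet is known to require), the edit could be scripted like this:

```python
import json


def drop_training_case(dataset, case_id):
    """Return a copy of a dataset.json dict without the given training case."""
    kept = [entry for entry in dataset["training"]
            if not entry["image"].endswith("/%s.nii.gz" % case_id)]
    updated = dict(dataset)          # shallow copy; the input dict is left untouched
    updated["training"] = kept
    updated["numTraining"] = len(kept)
    return updated


def drop_case_from_file(json_path, case_id):
    """Apply drop_training_case to a dataset.json on disk and write it back."""
    with open(json_path) as f:
        dataset = json.load(f)
    updated = drop_training_case(dataset, case_id)
    with open(json_path, "w") as f:
        json.dump(updated, f, indent=4, sort_keys=True)
    return updated
```

For example, `drop_case_from_file(os.path.join(out, "dataset.json"), "case_00015")` would remove that one entry; the image and label files themselves stay where they are.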

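Prepare three folders in advance to hold the dataset, the preprocessed data, and the training results, and set the corresponding environment variables. See my first blog post for the details.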
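
As a reminder of what that setup involves: as far as I know, nnUNet v1 looks for the variables nnUNet_raw_data_base, nnUNet_preprocessed, and RESULTS_FOLDER. The sketch below creates the three folders and sets the variables for the current Python process; the function name and the folder layout under `root` are assumptions chosen to match the paths used in this post.

```python
import os


def configure_nnunet_dirs(root):
    """Create the three nnUNet folders under `root` and export their environment variables."""
    paths = {
        "nnUNet_raw_data_base": os.path.join(root, "nnUNet_raw_data_base"),
        "nnUNet_preprocessed": os.path.join(root, "nnUNet_preprocessed"),
        "RESULTS_FOLDER": os.path.join(root, "nnUNet_trained_models"),
    }
    for name, path in paths.items():
        os.makedirs(path, exist_ok=True)
        os.environ[name] = path  # visible to this process and its children only
    return paths
```

Because `os.environ` changes do not outlive the process, a permanent setup would normally put the equivalent export lines in the shell profile instead.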

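2. Data preprocessing

nnUNet reads the modality, voxel spacing, and intensity distribution of the CT images and automatically performs resampling, cropping, and normalization.

Automated method configuration for image segmentation in nnUNet (https://www.nature.com/articles/s41592-020-01008-z)

Resampling

CT images acquired at different times and on different scanners have different spatial resolutions. The purpose of resampling is to bring all cases to the same spatial resolution (voxel spacing).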
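
The relationship between spacing and image size is simple arithmetic: the physical extent (voxel count times spacing) stays roughly constant, so a finer target spacing means more voxels. A minimal sketch, with a hypothetical function name and made-up example numbers:

```python
def resampled_size(size, spacing, new_spacing):
    """Voxel counts that keep the physical extent (size * spacing) roughly unchanged."""
    return [int(round(n * s / t)) for n, s, t in zip(size, spacing, new_spacing)]


# Hypothetical volume: 100 x 512 x 512 voxels at 5.0 x 0.81 x 0.81 spacing
print(resampled_size([100, 512, 512], [5.0, 0.81, 0.81], [3.22, 1.62, 1.62]))
```

Halving the spacing along an axis doubles the voxel count along it, which is why an aggressive target spacing can make resampled volumes very large.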

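nnUNet's preprocessing includes its own resampling, but after trying it twice I was not happy with the result: the resampled images were too large. So I wrote my own resampling following the method in the winning paper, resampling every case to a voxel spacing of 3.22 x 1.62 x 1.62.

The paper also mentions label errors in case15 and case37. I originally planned to drop them, but when I later checked the official KiTS19 GitHub repository, the organizers had already corrected them.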

    import numpy as np
    import SimpleITK as sitk


    def transform(image, newSpacing, resamplemethod=sitk.sitkNearestNeighbor):
        # Create the resampling filter
        resample = sitk.ResampleImageFilter()
        # Original volume size in voxels
        originSize = image.GetSize()
        # Original voxel spacing
        originSpacing = image.GetSpacing()
        # New size that preserves the physical extent along each axis
        newSize = [
            int(np.round(originSize[0] * originSpacing[0] / newSpacing[0])),
            int(np.round(originSize[1] * originSpacing[1] / newSpacing[1])),
            int(np.round(originSize[2] * originSpacing[2] / newSpacing[2]))
        ]
        print('current size:', newSize)

        # Spacing along x, y, z (3 values)
        # The sampling grid of the output space is specified with the spacing along each dimension and the origin.
        resample.SetOutputSpacing(newSpacing)
        # Keep the original origin
        resample.SetOutputOrigin(image.GetOrigin())
        # Keep the original direction
        resample.SetOutputDirection(image.GetDirection())
        resample.SetSize(newSize)
        # Set the interpolation method
        resample.SetInterpolator(resamplemethod)
        # Set the transform (a default-constructed Euler3DTransform is the identity)
        resample.SetTransform(sitk.Euler3DTransform())
        # Default pixel value (left disabled): resample.SetDefaultPixelValue(image.GetPixelIDValue())
        return resample.Execute(image)

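Pay attention to the interpolation method used for resampling. I tried several of the interpolators that ship with SimpleITK (linear, cubic, and B-spline) and found that B-spline gave the best results.

So the images are resampled with sitk.sitkBSpline and the segmentations with sitk.sitkNearestNeighbor.

If you are interested, try other interpolation methods yourself, or use another package such as scipy for resampling.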
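
The reason the segmentations need nearest-neighbor interpolation can be seen on a toy 1D example. The sketch below is plain Python with hypothetical helper names; it is not how SimpleITK interpolates internally, only an illustration using the label values from dataset.json (0 background, 1 Kidney, 2 Tumor).

```python
def upsample_linear(values, factor):
    """Linearly interpolate a 1D sequence, adding factor-1 samples between neighbours."""
    out = []
    for a, b in zip(values, values[1:]):
        for k in range(factor):
            out.append(a + (b - a) * k / factor)
    out.append(values[-1])
    return out


def upsample_nearest(values, factor):
    """Same output grid, but each sample copies the closest original value."""
    n_out = (len(values) - 1) * factor + 1
    return [values[int(i / factor + 0.5)] for i in range(n_out)]


labels = [0, 0, 2, 2]               # background next to tumor
print(upsample_linear(labels, 2))   # contains 1.0 at the boundary
print(upsample_nearest(labels, 2))  # contains only 0 and 2
```

Linear interpolation produces the value 1 on the boundary between background and tumor, which would be read as a kidney voxel that never existed. Nearest-neighbor can only emit labels already present in the input.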

    import os

    # Resample the training images (each file is overwritten in place)
    data_path = "/root/data/nnUNet_raw_data_base/nnUNet_raw_data/Task040_KiTS/imagesTr"
    for path in sorted(os.listdir(data_path)):
        print(path)
        img_path = os.path.join(data_path, path)
        img_itk = sitk.ReadImage(img_path)
        print('origin size:', img_itk.GetSize())
        new_itk = transform(img_itk, [3.22, 1.62, 1.62], sitk.sitkBSpline)  # sitk.sitkLinear
        sitk.WriteImage(new_itk, img_path)
    print('images are resampled!')
    print('-' * 20)

    # Resample the training labels with the default nearest-neighbor interpolation
    label_path = "/root/data/nnUNet_raw_data_base/nnUNet_raw_data/Task040_KiTS/labelsTr"
    for path in sorted(os.listdir(label_path)):
        print(path)
        img_path = os.path.join(label_path, path)
        img_itk = sitk.ReadImage(img_path)
        print('origin size:', img_itk.GetSize())
        new_itk = transform(img_itk, [3.22, 1.62, 1.62])
        sitk.WriteImage(new_itk, img_path)
    print('labels are resampled!')

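Now for nnUNet's own data preprocessing.

Run the command:

    python nnunet/experiment_planning/nnUNet_plan_and_preprocess.py -t 40 --verify_dataset_integrity

I won't go into verify_dataset_integrity here. It mainly validates the dataset structure, and it is best to include it the first time you run preprocessing.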
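
To give a feel for the kind of structural check involved, here is a much-reduced sketch that only confirms the files listed in dataset.json exist on disk. It is not nnUNet's implementation, which checks considerably more; `missing_training_files` is a hypothetical helper, and the `_0000` suffix follows the naming used by the conversion script in this post.

```python
import os


def missing_training_files(task_dir, dataset, num_modalities=1):
    """List files referenced by dataset["training"] that are absent under task_dir."""
    missing = []
    for entry in dataset["training"]:
        # "./imagesTr/case_00000.nii.gz" -> "case_00000"
        case = os.path.basename(entry["image"])[:-len(".nii.gz")]
        for mod in range(num_modalities):
            image = os.path.join(task_dir, "imagesTr", "%s_%04d.nii.gz" % (case, mod))
            if not os.path.isfile(image):
                missing.append(image)
        label = os.path.join(task_dir, "labelsTr", os.path.basename(entry["label"]))
        if not os.path.isfile(label):
            missing.append(label)
    return missing
```

An empty return value means every training image and label named in dataset.json was found.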

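Cropping

Cropping removes the black borders, reducing the edge regions whose voxel value is 0, while keeping the spatial resolution and other metadata unchanged.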

    def crop(task_string, override=False, num_threads=default_num_threads):
        # Output directory: '/root/data/nnUNet_raw_data_base/nnUNet_cropped_data/Task040_KiTS'
        cropped_out_dir = join(nnUNet_cropped_data, task_string)
        maybe_mkdir_p(cropped_out_dir)

        if override and isdir(cropped_out_dir):
            shutil.rmtree(cropped_out_dir)
            maybe_mkdir_p(cropped_out_dir)

        splitted_4d_output_dir_task = join(nnUNet_raw_data, task_string)
        lists, _ = create_lists_from_splitted_dataset(splitted_4d_output_dir_task)  # build the list of cases to crop

        imgcrop = ImageCropper(num_threads, cropped_out_dir)
        imgcrop.run_cropping(lists, overwrite_existing=override)
        shutil.copy(join(nnUNet_raw_data, task_string, "dataset.json"), cropped_out_dir)

create_lists_from_splitted_dataset collects the file paths of all training images. lists has 210 elements, each containing the image and label paths for one case.

    def create_lists_from_splitted_dataset(base_folder_splitted):
        lists = []

        json_file = join(base_folder_splitted, "dataset.json")
        with open(json_file) as jsn:
            d = json.load(jsn)
            training_files = d['training']
        num_modalities = len(d['modality'].keys())
        for tr in training_files:
            cur_pat = []
            for mod in range(num_modalities):
                cur_pat.append(join(base_folder_splitted, "imagesTr", tr['image'].split("/")[-1][:-7] +
                                    "_%04.0d.nii.gz" % mod))
            cur_pat.append(join(base_folder_splitted, "labelsTr", tr['label'].split("/")[-1]))
            lists.append(cur_pat)
        return lists, {int(i): d['modality'][str(i)] for i in d['modality'].keys()}

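The key part is these two calls:

    imgcrop = ImageCropper(num_threads, cropped_out_dir)
    imgcrop.run_cropping(lists, overwrite_existing=override)

ImageCropper is a class with 10 methods.

The two that matter are crop and run_cropping:

- crop: crops to the nonzero region and returns data, seg, properties

- run_cropping: performs the cropping, saves the result as an .npz file (containing data and seg), and saves properties such as size, spacing, origin, classes, and size_after_cropping in a .pkl file.

When I ran the code, however, the size was the same before and after cropping, probably because the images have hardly any black border.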

    # During cropping, seg is not None
    def crop(data, properties, seg=None):
        shape_before = data.shape  # original shape
        data, seg, bbox = crop_to_nonzero(data, seg, nonzero_label=-1)  # cropped result
        shape_after = data.shape  # shape after cropping
        print("before crop:", shape_before, "after crop:", shape_after, "spacing:",
              np.array(properties["original_spacing"]), "\n")

        properties["crop_bbox"] = bbox
        properties['classes'] = np.unique(seg)
        seg[seg < -1] = 0
        properties["size_after_cropping"] = data[0].shape
        return data, seg, properties
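
The idea of cropping to the nonzero region can be illustrated with a few lines of NumPy. This is a simplified sketch for a single 3D array, not nnUNet's crop_to_nonzero; the function name `crop_to_nonzero_bbox` is hypothetical.

```python
import numpy as np


def crop_to_nonzero_bbox(volume):
    """Crop a 3D array to the tightest box containing all nonzero voxels.

    Returns (cropped, bbox), where bbox is [[lo, hi], ...] per axis with hi exclusive.
    """
    nonzero = np.argwhere(volume != 0)
    if nonzero.size == 0:
        # Nothing to anchor a box on: return the volume unchanged
        return volume, [[0, s] for s in volume.shape]
    lo = nonzero.min(axis=0)
    hi = nonzero.max(axis=0) + 1
    bbox = [[int(a), int(b)] for a, b in zip(lo, hi)]
    slices = tuple(slice(a, b) for a, b in bbox)
    return volume[slices], bbox
```

A volume whose border voxels are not stored as exactly 0 comes back with its shape unchanged, which may be what happened with the CT data above.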

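Dataset analysis

This step collects the image information produced by the cropping step (size, voxel spacing, intensity distribution) so that a suitable training plan can be made for the current task.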

    # '/root/data/nnUNet_raw_data_base/nnUNet_cropped_data/Task040_KiTS'
    cropped_out_dir = os.path.join(nnUNet_cropped_data, t)
    # '/root/data/nnUNet_preprocessed/Task040_KiTS'
    preprocessing_output_dir_this_task = os.path.join(preprocessing_output_dir, t)

    # we need to figure out if we need the intensity properties. We collect them only if one of the modalities is CT
    dataset_json = load_json(join(cropped_out_dir, 'dataset.json'))
    modalities = list(dataset_json["modality"].values())
    collect_intensityproperties = True if (("CT" in modalities) or ("ct" in modalities)) else False
    dataset_analyzer = DatasetAnalyzer(cropped_out_dir, overwrite=False, num_processes=tf)  # this class creates the fingerprint
    _ = dataset_analyzer.analyze_dataset(collect_intensityproperties)  # this will write output files that will be used by the ExperimentPlanner

    maybe_mkdir_p(preprocessing_output_dir_this_task)
    shutil.copy(join(cropped_out_dir, "dataset_properties.pkl"), preprocessing_output_dir_this_task)
    shutil.copy(join(nnUNet_raw_data, t, "dataset.json"), preprocessing_output_dir_this_task)
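
The intensity properties are collected only for CT because they drive CT normalization. As I understand the nnUNet paper, CT intensities are clipped to the 0.5 and 99.5 percentiles of the foreground voxels and then standardized with the foreground mean and standard deviation. The sketch below illustrates that scheme with NumPy; the function names and dictionary keys are hypothetical, and this is not the DatasetAnalyzer code.

```python
import numpy as np


def foreground_intensity_stats(images, labels):
    """Pool voxels where label > 0 over all cases and summarise their intensities."""
    voxels = np.concatenate([img[lab > 0] for img, lab in zip(images, labels)])
    return {
        "mean": float(voxels.mean()),
        "sd": float(voxels.std()),
        "percentile_00_5": float(np.percentile(voxels, 0.5)),
        "percentile_99_5": float(np.percentile(voxels, 99.5)),
    }


def normalize_ct(image, stats):
    """Clip to the foreground percentile range, then z-score with foreground mean/sd."""
    clipped = np.clip(image, stats["percentile_00_5"], stats["percentile_99_5"])
    return (clipped - stats["mean"]) / stats["sd"]
```

Using dataset-wide statistics rather than per-image ones keeps Hounsfield units comparable across cases, which is the usual argument for treating CT differently from other modalities.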

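The resulting dataset_properties.pkl looks like this:

Creating the data fingerprint

Based on the dataset information gathered in the previous step, a suitable training plan is made for each of the different training tasks.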

    if planner_3d is not None:
        if args.overwrite_plans is not None:
            assert args.overwrite_plans_identifier is not None, "You need to specify -overwrite_plans_identifier"
            exp_planner = planner_3d(cropped_out_dir, preprocessing_output_dir_this_task, args.overwrite_plans,
                                     args.overwrite_plans_identifier)
        else:
            exp_planner = planner_3d(cropped_out_dir, preprocessing_output_dir_this_task)
        exp_planner.plan_experiment()
        if not dont_run_preprocessing:  # double negative, yooo
            exp_planner.run_preprocessing(threads)
    if planner_2d is not None:
        exp_planner = planner_2d(cropped_out_dir, preprocessing_output_dir_this_task)
        exp_planner.plan_experiment()
        if not dont_run_preprocessing:  # double negative, yooo
            exp_planner.run_preprocessing(threads)

Once preprocessing finishes, the results are as follows.

Under the nnUNet_preprocessed folder:

    |-- Task040_KiTS
        |-- dataset.json
        |-- dataset_properties.pkl
        |-- gt_segmentations
        |-- nnUNetData_plans_v2.1_2D_stage0
        |-- nnUNetData_plans_v2.1_stage0
        |-- nnUNetPlansv2.1_plans_2D.pkl
        |-- nnUNetPlansv2.1_plans_3D.pkl
        `-- splits_final.pkl

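All of these generated files can be opened and inspected to get a feel for the preprocessing and the data fingerprint.

- dataset.json was produced in the data acquisition stage

- dataset_properties.pkl holds the data properties: size, spacing, origin, classes, size_after_cropping, and so on

- gt_segmentations holds the segmentation labels

- nnUNetData_plans_v2.1_2D_stage0 and nnUNetData_plans_v2.1_stage0 are the preprocessed datasets

- splits_final.pkl is the five-fold cross-validation split: 210 patients in total, 42 per fold

- nnUNetPlansv2.1_plans*.pkl are the training plans, which can be edited by following edit_plans_files.md in the official documentation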
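
To make the "210 patients, 42 per fold" arithmetic concrete, here is a small standard-library sketch of a five-fold split. It is an illustration only: the shuffling strategy, the seed, and the function name are assumptions, and it will not reproduce the exact folds stored in splits_final.pkl.

```python
import random


def five_fold_splits(case_ids, n_folds=5, seed=12345):
    """Shuffle case ids once and return a list of {'train': [...], 'val': [...]} dicts."""
    ids = sorted(case_ids)
    random.Random(seed).shuffle(ids)
    # Deal the shuffled ids round-robin into n_folds validation groups
    folds = [ids[i::n_folds] for i in range(n_folds)]
    splits = []
    for i, val in enumerate(folds):
        train = [c for j, fold in enumerate(folds) if j != i for c in fold]
        splits.append({"train": sorted(train), "val": sorted(val)})
    return splits


cases = ["case_%05d" % i for i in range(210)]
splits = five_fold_splits(cases)
print(len(splits), len(splits[0]["train"]), len(splits[0]["val"]))
```

Each case lands in exactly one validation fold, so every fold trains on 168 cases and validates on the remaining 42.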

Taking nnUNetPlansv2.1_plans_3D.pkl as an example:
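
As far as I know, the .pkl files here are ordinary Python pickles, so a few lines are enough to look inside one. The sketch below deliberately assumes nothing about the keys a plans file contains; `summarize_pickle` is a hypothetical helper. Only unpickle files you trust, since loading a pickle can execute arbitrary code, and note that NumPy likely needs to be installed if the file stores arrays.

```python
import pickle


def summarize_pickle(path):
    """Load a pickle file and describe its top-level structure without assuming a schema."""
    with open(path, "rb") as f:
        obj = pickle.load(f)
    if isinstance(obj, dict):
        # Map each top-level key to the type name of its value
        return {str(k): type(v).__name__ for k, v in obj.items()}
    return {"<root>": type(obj).__name__}
```

Printing the result for a plans file gives a quick overview of which entries exist before you decide what to edit.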

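3. Model training

A single command starts training. For the execution flow and hyperparameter tuning, see my blog post "nnUnet code walkthrough: model training".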

    python nnunet/run/run_training.py CONFIGURATION TRAINER_CLASS_NAME TASK_NAME_OR_ID FOLD  # format
    python nnunet/run/run_training.py 3d_fullres nnUNetTrainerV2 40 1
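
Since the split is five-fold, the same command is typically repeated once per fold. A small sketch that builds those command lines, assuming folds are numbered 0 to 4 (the helper name is hypothetical, and nothing is executed here):

```python
import shlex


def training_commands(configuration, trainer, task, folds=range(5)):
    """Build one run_training.py argument list per fold, in the format shown above."""
    return [
        ["python", "nnunet/run/run_training.py",
         configuration, trainer, str(task), str(fold)]
        for fold in folds
    ]


for cmd in training_commands("3d_fullres", "nnUNetTrainerV2", 40):
    print(shlex.join(cmd))
```

Each argument list can be passed to `subprocess.run(cmd, check=True)` to launch the folds one after another.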

Once training starts, the training logs and results are written under nnUNet_trained_models/nnUNet/3d_fullres/Task040_KiTS:

    UNetTrainer__nnUNetPlansv2.1
    ├── fold_1
    │   ├── debug.json
    │   ├── model_best.model
    │   ├── model_best.model.pkl
    │   ├── model_final_checkpoint.model
    │   ├── model_final_checkpoint.model.pkl
    │   ├── postprocessing.json
    │   ├── progress.png
    │   ├── training_log_2022_5_4_12_06_14.txt
    │   ├── training_log_2022_5_5_10_30_05.txt
    │   ├── validation_raw
    │   └── validation_raw_postprocessed

Loss curve during training, together with the Dice curve computed online

Here I would like to add a note on nnUNet's evaluation metrics.
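
For reference, the Dice coefficient for one label is 2|P∩T| / (|P| + |T|), where P and T are the predicted and ground-truth voxel sets. The sketch below computes that textbook definition per label with NumPy; it is not necessarily how nnUNet aggregates its reported numbers, and the function name is hypothetical.

```python
import numpy as np


def dice_per_class(pred, target, labels=(1, 2)):
    """Dice = 2|P∩T| / (|P| + |T|) for each label; NaN when a label is absent from both."""
    scores = {}
    for label in labels:
        p = pred == label
        t = target == label
        denom = int(p.sum()) + int(t.sum())
        scores[label] = 2.0 * int((p & t).sum()) / denom if denom else float("nan")
    return scores
```

With the labels in this post, label 1 gives the kidney Dice and label 2 the tumor Dice; a score of 1.0 means the prediction and the ground truth overlap perfectly for that label.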
