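Gaozhu followed the FastAPI deployment tutorial for GLM-4-9B-Chat from the open-source project self-llm and ran into two main problems, which are recorded here.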

First: TypeError

Full error message

TypeError: transformers.generation.utils.GenerationMixin.generate() argument after ** must be a mapping, not Tensor

Solution

Upgrade transformers: pip install transformers==4.41.2 (thanks to the expert who answered this in the issue).
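Before restarting the service, it can help to confirm that the environment really picked up the pinned version. The snippet below is a minimal sketch that uses only the Python standard library; the helper names (parse_version, installed_version, matches_pin) are made up for illustration and are not part of transformers or the tutorial.

```python
from importlib import metadata
from typing import Optional, Tuple

REQUIRED = "4.41.2"  # the version pinned in the fix above


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '4.41.2' into (4, 41, 2); non-numeric parts are skipped."""
    return tuple(int(part) for part in text.split(".") if part.isdigit())


def installed_version(package: str) -> Optional[str]:
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def matches_pin(installed: Optional[str], required: str = REQUIRED) -> bool:
    """True only when the installed version equals the pinned one."""
    if installed is None:
        return False
    return parse_version(installed) == parse_version(required)


if __name__ == "__main__":
    found = installed_version("transformers")
    print(f"transformers installed: {found}, matches pin: {matches_pin(found)}")
```

If the check reports a mismatch, the pip command was probably run in a different environment from the one that serves the API.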

Second: RuntimeError

Full error message

RuntimeError: Expected all tensors to be on the same device, but found at least two devices, cuda:1 and cuda:0! (when checking argument for argument tensors in method wrapper_CUDA_cat)

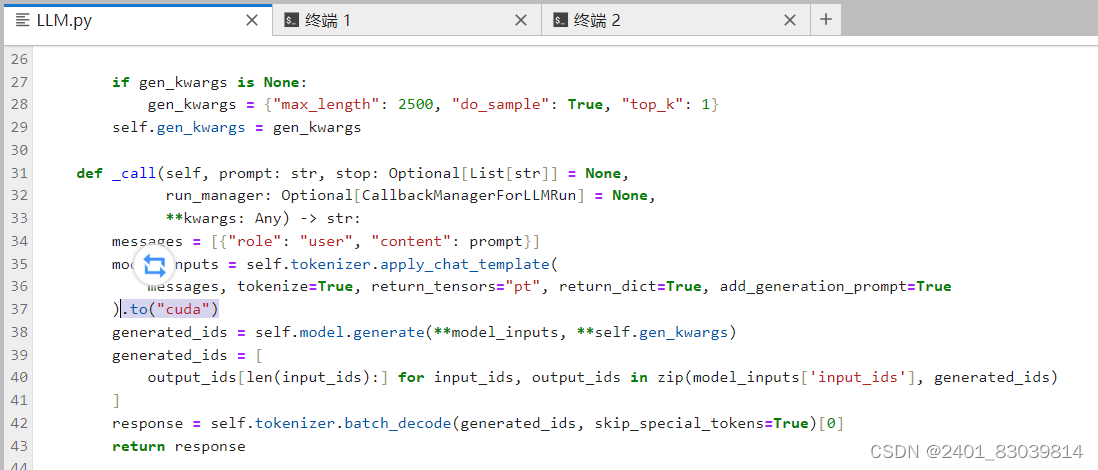

Solution

Add .to("cuda") in LLM.py, so that the tensors involved in the call end up on the same device.
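The note above does not say which line of LLM.py gets the .to("cuda") call, so the following is only a sketch of the general idea: move every tensor-like input to one device before handing it to the model. The helper name move_to_device is hypothetical. It relies only on the value having a .to(device) method, which PyTorch tensors do.

```python
from typing import Any, Dict


def move_to_device(inputs: Dict[str, Any], device: str) -> Dict[str, Any]:
    """Return a copy of `inputs` with every tensor-like value moved to `device`.

    Values without a `.to` method (ints, strings, ...) are passed through unchanged.
    """
    return {
        name: value.to(device) if hasattr(value, "to") else value
        for name, value in inputs.items()
    }
```

With PyTorch installed, usage might look like inputs = move_to_device(dict(inputs), "cuda") just before calling the model, where inputs is assumed to be the tokenizer output. Which variable actually needs moving depends on the code in LLM.py.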
