==> start-all.sh 会在 hadoop1机器上启动master进程,在slaves文件配置的所有hostname的机器上启动worker进程

Spark WordCount统计

val file = spark.sparkContext.textFile(“file:///home/hadoop/data/wc.txt”)

val wordCounts = file.flatMap(line => line.split(",")).map((word => (word, 1))).reduceByKey(\_ + \_)

wordCounts.collect

#### 本地IDE

>

> A master URL must be set in your configuration

>

>

>

点击edit configuration,在左侧点击该项目。在右侧VM options中输入“-Dspark.master=local”,指示本程序本地单线程运行,再次运行即可。

package org.example

import org.apache.spark.sql.SQLContext

import org.apache.spark.{SparkConf, SparkContext}

object SQLContextAPP {

def main(args: Array[String]): Unit = {

//1创建相应的Spark

val sparkConf = new SparkConf()

sparkConf.setAppName(“SQLContextAPP”)

val sc = new SparkContext(sparkConf)

val sqlContext = new SQLContext(sc)

//2数据处理

val people = sqlContext.read.format("json").load("people.json")

people.printSchema()

people.show()

//3关闭资源

sc.stop()

}

}

root

|-- age: long (nullable = true)

|-- name: string (nullable = true)

…

| age| name|

±—±------+

|null|Michael|

| 30| Andy|

| 19| Justin|

±—±------+

>

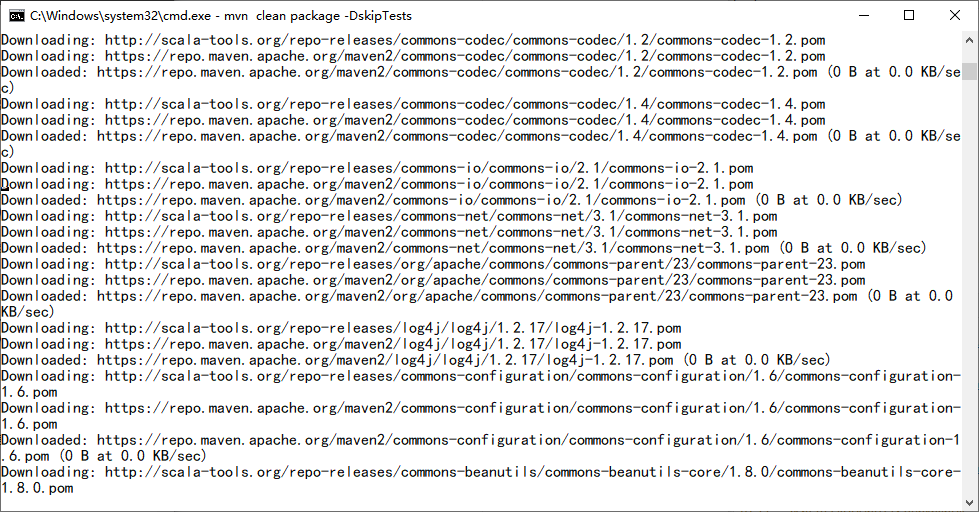

> 配置maven环境变量cmd控制台提示:mvn不是内部或外部命令,也不是可运行的程序或批处理文件

>

>

>

首先maven环境变量:

变量名:MAVEN_HOME

变量值:E:\apache-maven-3.2.3

变量名:Path

变量值:;%MAVEN_HOME%\bin

然后到项目的目录下直接执行

`C:\Users\jacksun\IdeaProjects\SqarkSQL\ mvn clean package -DskipTests`

在集群上测试

spark-submit

–name SQLContextApp

–class org.example.SQLContextApp

–master local[2]

/home/hadoop/lib/sql-1.0.jar

/home/hadoop/app/spark-2.1.0-bin-2.6.0-cdh5.7.0/examples/src/main/resources/people.json

#### HiveContextAPP

注意:

1)To use a HiveContext, you do not need to have an existing Hive setup

2)hive-site.xml

package org.example

import org.apache.spark.{SparkConf, SparkContext}

import org.apache.spark.sql.SQLContext

import org.apache.spark.sql.hive.HiveContext

object HiveContextAPP {

def main(args: Array[String]): Unit = {

//1创建相应的Spark

val path =args(0)

val sparkConf = new SparkConf()

//测试和生产中AppName和Master是通过脚本执行的

//sparkConf.setAppName("HiveContextAPP").setMaster("local[2]")

val sc = new SparkContext(sparkConf)

val hiveContext = new HiveContext(sc)

//2数据处理

hiveContext.table("emp").show

//3关闭资源

sc.stop()

}

}

spark-submit

–name HiveContextApp

–class org.example.HiveContextApp

–master local[2]

/home/hadoop/lib/sql-1.0.jar

–jars /home/hadoop/software/mysql-connector-java-5.1.27-bin.jar

#### SparkSessinon

package org.example

import org.apache.spark.sql.SparkSession

object SparkSessionApp {

def main(args: Array[String]) {

val spark = SparkSession.builder().appName("SparkSessionApp")

.master("local[2]").getOrCreate()

val people = spark.read.json("people.json")

people.show()

spark.stop()

}

}

#### Spark Shell

* 启动hive

[hadoop@hadoop001 bin]$ pwd

/home/hadoop/app/hive-1.1.0-cdh5.7.0/bin

[hadoop@hadoop001 bin]$ hive

ls: cannot access /home/hadoop/app/spark-2.1.0-bin-2.6.0-cdh5.7.0/lib/spark-assembly-*.jar: No such file or directory

which: no hbase in (/home/hadoop/app/spark-2.1.0-bin-2.6.0-cdh5.7.0/bin:/home/hadoop/app/scala-2.11.8/bin:/home/hadoop/app/hive-1.1.0-cdh5.7.0/bin:/home/hadoop/app/hadoop-2.6.0-cdh5.7.0/bin:/home/hadoop/app/apache-maven-3.3.9/bin:/home/hadoop/app/jdk1.7.0_51/bin:/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/sbin)

Logging initialized using configuration in jar:file:/home/hadoop/app/hive-1.1.0-cdh5.7.0/lib/hive-common-1.1.0-cdh5.7.0.jar!/hive-log4j.properties

WARNING: Hive CLI is deprecated and migration to Beeline is recommended.

hive>

* 拷贝

`[hadoop@hadoop001 conf]$ cp hive-site.xml ~/app/spark-2.1.0-bin-2.6.0-cdh5.7.0/conf/`

* 启动Spark

`spark-shell --master local[2] --jars /home/hadoop/software/mysql-connector-java-5.1.27-bin.jar`

scala> spark.sql(“show tables”).show

±-------±-----------±----------+

|database| tableName|isTemporary|

±-------±-----------±----------+

| default| dept| false|

| default| emp| false|

| default|hive_table_1| false|

| default|hive_table_2| false|

| default| t| false|

±-------±-----------±----------+

hive> show tables;

OK

dept

emp

hive_wordcount

scala> spark.sql(“select * from emp e join dept d on e.deptno=d.deptno”).show

±----±-----±--------±—±---------±-----±-----±-----±-----±---------±-------+

|empno| ename| job| mgr| hiredate| sal| comm|deptno|deptno| dname| loc|

±----±-----±--------±—±---------±-----±-----±-----±-----±---------±-------+

| 7369| SMITH| CLERK|7902|1980-12-17| 800.0| null| 20| 20| RESEARCH| DALLAS|

| 7499| ALLEN| SALESMAN|7698| 1981-2-20|1600.0| 300.0| 30| 30| SALES| CHICAGO|

| 7521| WARD| SALESMAN|7698| 1981-2-22|1250.0| 500.0| 30| 30| SALES| CHICAGO|

| 7566| JONES| MANAGER|7839| 1981-4-2|2975.0| null| 20| 20| RESEARCH| DALLAS|

| 7654|MARTIN| SALESMAN|7698| 1981-9-28|1250.0|1400.0| 30| 30| SALES| CHICAGO|

| 7698| BLAKE| MANAGER|7839| 1981-5-1|2850.0| null| 30| 30| SALES| CHICAGO|

| 7782| CLARK| MANAGER|7839| 1981-6-9|2450.0| null| 10| 10|ACCOUNTING|NEW YORK|

| 7788| SCOTT| ANALYST|7566| 1987-4-19|3000.0| null| 20| 20| RESEARCH| DALLAS|

| 7839| KING|PRESIDENT|null|1981-11-17|5000.0| null| 10| 10|ACCOUNTING|NEW YORK|

| 7844|TURNER| SALESMAN|7698| 1981-9-8|1500.0| 0.0| 30| 30| SALES| CHICAGO|

| 7876| ADAMS| CLERK|7788| 1987-5-23|1100.0| null| 20| 20| RESEARCH| DALLAS|

| 7900| JAMES| CLERK|7698| 1981-12-3| 950.0| null| 30| 30| SALES| CHICAGO|

| 7902| FORD| ANALYST|7566| 1981-12-3|3000.0| null| 20| 20| RESEARCH| DALLAS|

| 7934|MILLER| CLERK|7782| 1982-1-23|1300.0| null| 10| 10|ACCOUNTING|NEW YORK|

±----±-----±--------±—±---------±-----±-----±-----±-----±---------±-------+

hive> select * from emp e join dept d on e.deptno=d.deptno

> ;

Query ID = hadoop_20201020054545_f7fbda3e-439e-409e-b2ce-3c553d969ed4

Total jobs = 1

20/10/20 05:48:46 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

Execution log at: /tmp/hadoop/hadoop_20201020054545_f7fbda3e-439e-409e-b2ce-3c553d969ed4.log

2020-10-20 05:48:49 Starting to launch local task to process map join; maximum memory = 477102080

2020-10-20 05:48:51 Dump the side-table for tag: 1 with group count: 4 into file: file:/tmp/hadoop/5c8577b3-c00d-4ece-9899-c0e3de66f2f2/hive_2020-10-20_05-48-27_437_556791932773953494-1/-local-10003/HashTable-Stage-3/MapJoin-mapfile01–.hashtable

2020-10-20 05:48:51 Uploaded 1 File to: file:/tmp/hadoop/5c8577b3-c00d-4ece-9899-c0e3de66f2f2/hive_2020-10-20_05-48-27_437_556791932773953494-1/-local-10003/HashTable-Stage-3/MapJoin-mapfile01–.hashtable (404 bytes)

2020-10-20 05:48:51 End of local task; Time Taken: 2.691 sec.

Execution completed successfully

MapredLocal task succeeded

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there’s no reduce operator

Starting Job = job_1602849227137_0002, Tracking URL = http://hadoop001:8088/proxy/application_1602849227137_0002/

Kill Command = /home/hadoop/app/hadoop-2.6.0-cdh5.7.0/bin/hadoop job -kill job_1602849227137_0002

Hadoop job information for Stage-3: number of mappers: 1; number of reducers: 0

2020-10-20 05:49:13,663 Stage-3 map = 0%, reduce = 0%

2020-10-20 05:49:36,950 Stage-3 map = 100%, reduce = 0%, Cumulative CPU 13.08 sec

MapReduce Total cumulative CPU time: 13 seconds 80 msec

Ended Job = job_1602849227137_0002

MapReduce Jobs Launched:

Stage-Stage-3: Map: 1 Cumulative CPU: 13.08 sec HDFS Read: 7639 HDFS Write: 927 SUCCESS

Total MapReduce CPU Time Spent: 13 seconds 80 msec

OK

7369 SMITH CLERK 7902 1980-12-17 800.0 NULL 20 20 RESEARCH DALLAS

7499 ALLEN SALESMAN 7698 1981-2-20 1600.0 300.0 30 30 SALES CHICAGO

7521 WARD SALESMAN 7698 1981-2-22 1250.0 500.0 30 30 SALES CHICAGO

7566 JONES MANAGER 7839 1981-4-2 2975.0 NULL 20 20 RESEARCH DALLAS

7654 MARTIN SALESMAN 7698 1981-9-28 1250.0 1400.0 30 30 SALES CHICAGO

7698 BLAKE MANAGER 7839 1981-5-1 2850.0 NULL 30 30 SALES CHICAGO

7782 CLARK MANAGER 7839 1981-6-9 2450.0 NULL 10 10 ACCOUNTING NEW YORK

7788 SCOTT ANALYST 7566 1987-4-19 3000.0 NULL 20 20 RESEARCH DALLAS

7839 KING PRESIDENT NULL 1981-11-17 5000.0 NULL 10 10 ACCOUNTINGNEW YORK

7844 TURNER SALESMAN 7698 1981-9-8 1500.0 0.0 30 30 SALES CHICAGO

7876 ADAMS CLERK 7788 1987-5-23 1100.0 NULL 20 20 RESEARCH DALLAS

7900 JAMES CLERK 7698 1981-12-3 950.0 NULL 30 30 SALES CHICAGO

7902 FORD ANALYST 7566 1981-12-3 3000.0 NULL 20 20 RESEARCH DALLAS

7934 MILLER CLERK 7782 1982-1-23 1300.0 NULL 10 10 ACCOUNTING NEW YORK

Time taken: 71.998 seconds, Fetched: 14 row(s)

hive>

SPARK SQL 基本秒出结果,hive比较耗时

* hive-site.xml

删除警告

#### Spark Sql

20/10/20 06:20:09 INFO DAGScheduler: Job 1 finished: processCmd at CliDriver.java:376, took 0.261151 s

7369 SMITH CLERK 7902 1980-12-17 800.0 NULL 20 20 RESEARCH DALLAS

7499 ALLEN SALESMAN 7698 1981-2-20 1600.0 300.0 30 30 SALES CHICAGO

7521 WARD SALESMAN 7698 1981-2-22 1250.0 500.0 30 30 SALES CHICAGO

7566 JONES MANAGER 7839 1981-4-2 2975.0 NULL 20 20 RESEARCH DALLAS

7654 MARTIN SALESMAN 7698 1981-9-28 1250.0 1400.0 30 30 SALES CHICAGO

7698 BLAKE MANAGER 7839 1981-5-1 2850.0 NULL 30 30 SALES CHICAGO

7782 CLARK MANAGER 7839 1981-6-9 2450.0 NULL 10 10 ACCOUNTING NEW YORK

7788 SCOTT ANALYST 7566 1987-4-19 3000.0 NULL 20 20 RESEARCH DALLAS

7839 KING PRESIDENT NULL 1981-11-17 5000.0 NULL 10 10 ACCOUNTINGNEW YORK

7844 TURNER SALESMAN 7698 1981-9-8 1500.0 0.0 30 30 SALES CHICAGO

7876 ADAMS CLERK 7788 1987-5-23 1100.0 NULL 20 20 RESEARCH DALLAS

7900 JAMES CLERK 7698 1981-12-3 950.0 NULL 30 30 SALES CHICAGO

7902 FORD ANALYST 7566 1981-12-3 3000.0 NULL 20 20 RESEARCH DALLAS

7934 MILLER CLERK 7782 1982-1-23 1300.0 NULL 10 10 ACCOUNTING NEW YORK

Time taken: 13.625 seconds, Fetched 14 row(s)

20/10/20 06:20:09 INFO CliDriver: Time taken: 13.625 seconds, Fetched 14 row(s)

`explain extended select a.key*(2+3), b.value from t a join t b on a.key = b.key and a.key > 3;`

== Parsed Logical Plan ==

'Project [unresolvedalias(('a.key * (2 + 3)), None), 'b.value]

± 'Join Inner, (('a.key = 'b.key) && ('a.key > 3))

:- 'UnresolvedRelation t, a

± 'UnresolvedRelation t, b

== Analyzed Logical Plan ==

(CAST(key AS DOUBLE) * CAST((2 + 3) AS DOUBLE)): double, value: string

Project [(cast(key#321 as double) * cast((2 + 3) as double)) AS (CAST(key AS DOUBLE) * CAST((2 + 3) AS DOUBLE))#325, value#324]

± Join Inner, ((key#321 = key#323) && (cast(key#321 as double) > cast(3 as double)))

:- SubqueryAlias a

: ± MetastoreRelation default, t

± SubqueryAlias b

± MetastoreRelation default, t

== Optimized Logical Plan ==

Project [(cast(key#321 as double) * 5.0) AS (CAST(key AS DOUBLE) * CAST((2 + 3) AS DOUBLE))#325, value#324]

± Join Inner, (key#321 = key#323)

:- Project [key#321]

: ± Filter (isnotnull(key#321) && (cast(key#321 as double) > 3.0))

: ± MetastoreRelation default, t

± Filter (isnotnull(key#323) && (cast(key#323 as double) > 3.0))

± MetastoreRelation default, t

== Physical Plan ==

*Project [(cast(key#321 as double) * 5.0) AS (CAST(key AS DOUBLE) * CAST((2 + 3) AS DOUBLE))#325, value#324]

± *SortMergeJoin [key#321], [key#323], Inner

:- *Sort [key#321 ASC NULLS FIRST], false, 0

: ± Exchange hashpartitioning(key#321, 200)

: ± *Filter (isnotnull(key#321) && (cast(key#321 as double) > 3.0))

: ± HiveTableScan [key#321], MetastoreRelation default, t

± *Sort [key#323 ASC NULLS FIRST], false, 0

± Exchange hashpartitioning(key#323, 200)

± *Filter (isnotnull(key#323) && (cast(key#323 as double) > 3.0))

± HiveTableScan [key#323, value#324], MetastoreRelation default, t

#### thriftserver/beeline的使用

>

> spark下的sbin

>

>

>

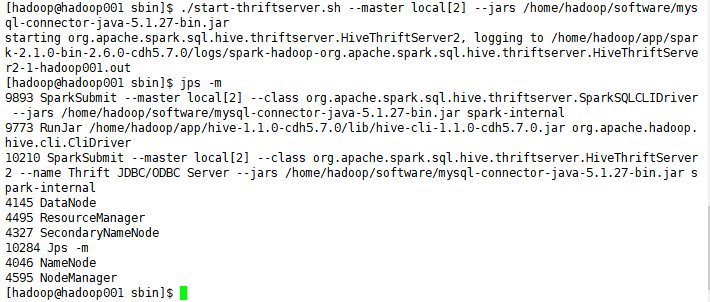

1. 启动thriftserver:

`./start-thriftserver.sh --master local[2] --jars /home/hadoop/software/mysql-connector-java-5.1.27-bin.jar`

默认端口是10000 ,可以修改

./start-thriftserver.sh

–master local[2]

–jars ~/software/mysql-connector-java-5.1.27-bin.jar

–hiveconf hive.server2.thrift.port=14000

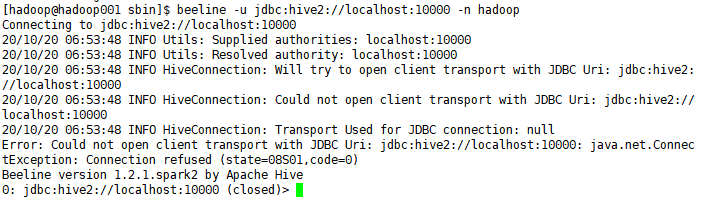

2)启动beeline

`beeline -u jdbc:hive2://localhost:10000 -n hadoop`

`beeline -u jdbc:hive2://localhost:14000 -n hadoop`

thriftserver和普通的spark-shell/spark-sql有什么区别?

1)spark-shell、spark-sql都是一个spark application;

2)thriftserver

* 不管你启动多少个客户端(beeline/code),永远都是一个spark application

* 解决了一个数据共享的问题,多个客户端可以共享数据;

#### jdbc

注意事项:在使用jdbc开发时,一定要先启动thriftserver

Exception in thread “main” java.sql.SQLException:

Could not open client transport with JDBC Uri: jdbc:hive2://hadoop001:14000:

java.net.ConnectException: Connection refused

最后

不知道你们用的什么环境,我一般都是用的Python3.6环境和pycharm解释器,没有软件,或者没有资料,没人解答问题,都可以免费领取(包括今天的代码),过几天我还会做个视频教程出来,有需要也可以领取~

给大家准备的学习资料包括但不限于:

Python 环境、pycharm编辑器/永久激活/翻译插件

python 零基础视频教程

Python 界面开发实战教程

Python 爬虫实战教程

Python 数据分析实战教程

python 游戏开发实战教程

Python 电子书100本

Python 学习路线规划

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

3251

3251

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?