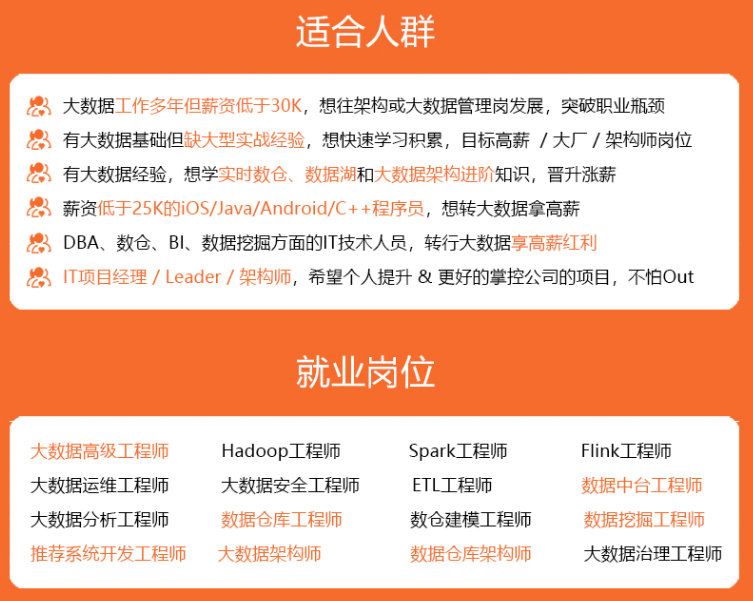

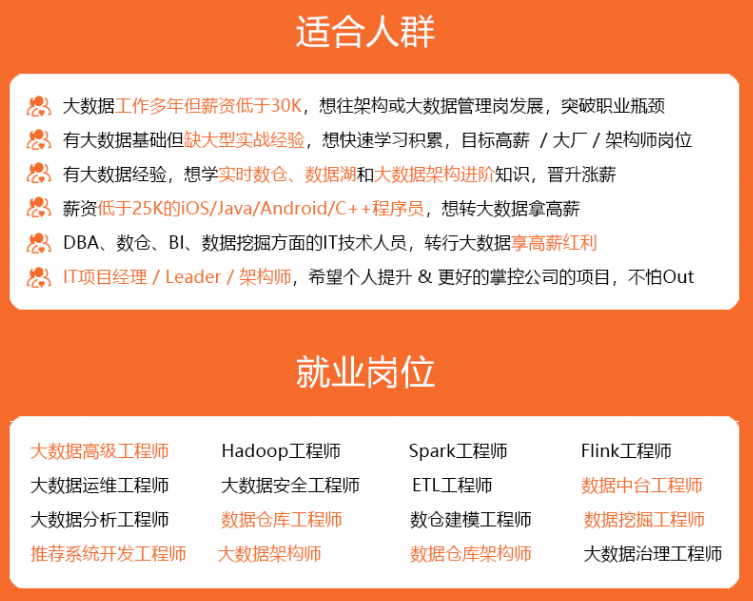

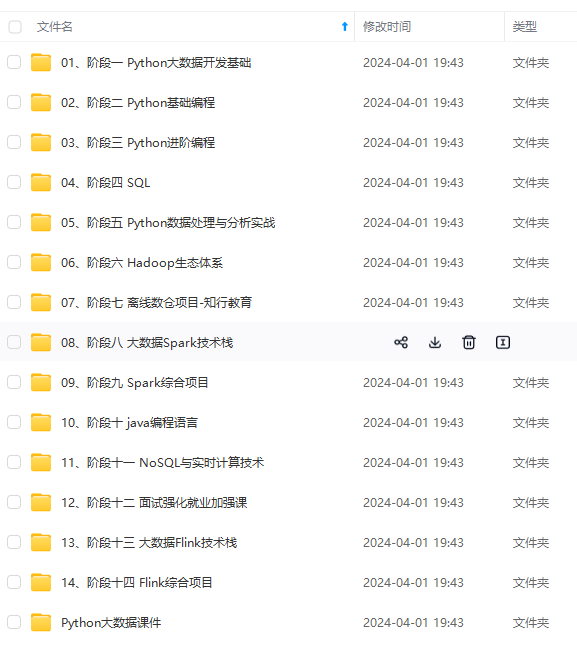

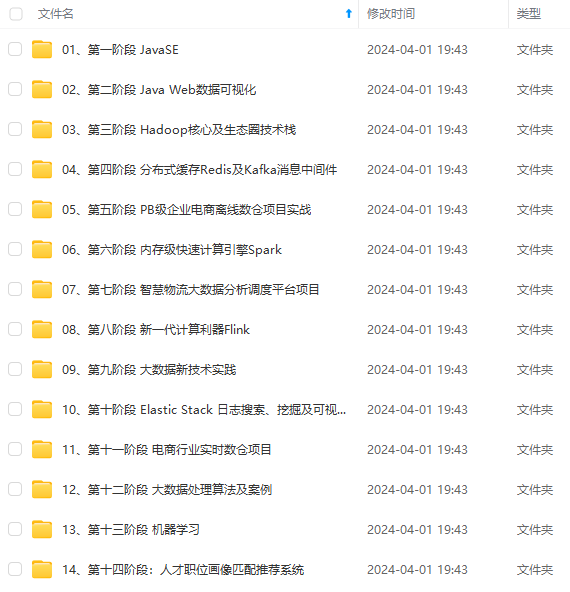

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

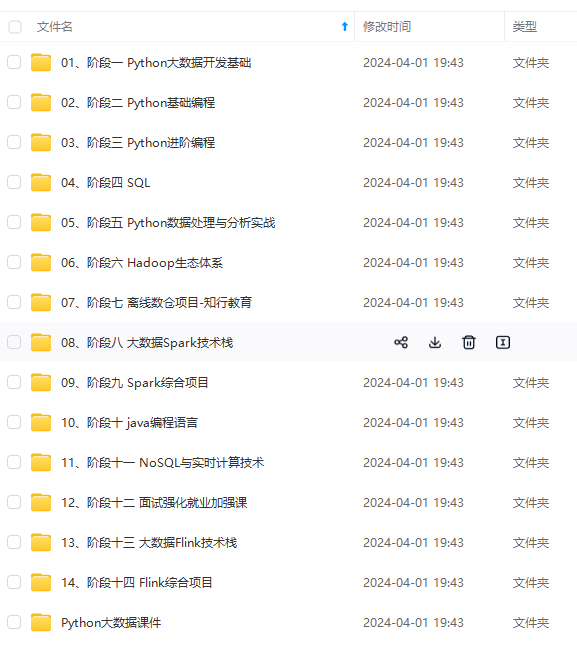

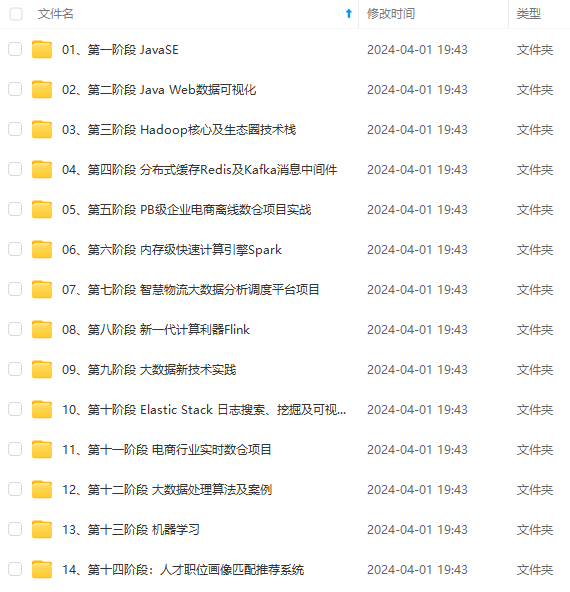

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

            Properties properties = new Properties();
            // bootstrap.servers: Kafka cluster addresses, in the form host1:port1,host2:port2,...
            properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");
            // key.serializer: how message keys are serialized
            properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());
            // value.serializer: how message values are serialized
            properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

            KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

            // 0: asynchronous send (fire-and-forget, the result is ignored)
            for (int i = 0; i < 10; i++) {
                String data = "async :" + i;
                // send the message
                producer.send(new ProducerRecord<>("demo-topic", data));
            }

            // 1: synchronous send; get() blocks until the result is returned
            for (int i = 0; i < 10; i++) {
                String data = "sync : " + i;
                try {
                    // send the message
                    Future<RecordMetadata> send = producer.send(new ProducerRecord<>("demo-topic", data));
                    RecordMetadata recordMetadata = send.get();
                    System.out.println(recordMetadata);
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }

            // 2: asynchronous send with a completion callback
            for (int i = 0; i < 10; i++) {
                String data = "callback : " + i;
                // send the message
                producer.send(new ProducerRecord<>("demo-topic", data), new Callback() {
                    @Override
                    public void onCompletion(RecordMetadata metadata, Exception exception) {
                        // invoked once the send has completed or failed
                        if (exception != null) {
                            exception.printStackTrace();
                        } else {
                            System.out.println(metadata);
                        }
                    }
                });
            }

            // close() flushes any records that are still buffered
            producer.close();
        }
    }
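The three sending styles in the demo above (fire-and-forget, blocking on `get()`, and a completion callback) are not specific to Kafka; they apply to any future-based API. As a rough illustration only, the Python sketch below mimics them with the standard library's `concurrent.futures`. Here `fake_send` is a hypothetical stand-in for `producer.send()`, the returned dictionary is an invented placeholder for record metadata, and no Kafka broker is involved.

```python
from concurrent.futures import ThreadPoolExecutor


def fake_send(executor, topic, value):
    """Hypothetical stand-in for producer.send(): returns a Future immediately."""
    def deliver():
        # Pretend the broker acknowledged the record.
        return {"topic": topic, "value": value}
    return executor.submit(deliver)


def send_all_three_ways():
    results = {"sync": [], "callback": [], "errors": []}

    def on_done(future):
        # Mirrors onCompletion(metadata, exception) in the Java demo.
        exc = future.exception()
        if exc is not None:
            results["errors"].append(exc)
        else:
            results["callback"].append(future.result()["value"])

    with ThreadPoolExecutor(max_workers=1) as executor:
        # 0: fire-and-forget, the returned Future is ignored
        fake_send(executor, "demo-topic", "async : 0")

        # 1: synchronous, result() blocks like Future.get() in Java
        metadata = fake_send(executor, "demo-topic", "sync : 0").result()
        results["sync"].append(metadata["value"])

        # 2: asynchronous with a callback that runs when the send completes
        fake_send(executor, "demo-topic", "callback : 0").add_done_callback(on_done)

    # Leaving the with-block waits for pending work, much like producer.close().
    return results
```

The trade-off is the usual one: blocking on every result is simplest to reason about but limits throughput to one in-flight message, while callbacks keep the pipeline full at the cost of handling errors out of line.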

Consumer demo code:

    package kafka;

    import org.apache.kafka.clients.consumer.ConsumerConfig;
    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;
    import org.apache.kafka.common.serialization.StringDeserializer;

    import java.time.Duration;
    import java.util.Arrays;
    import java.util.Properties;

    public class Consumer {

        public static void main(String[] args) {
            Properties properties = new Properties();
            // bootstrap.servers: Kafka cluster addresses, in the form host1:port1,host2:port2,...
            properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");
            // key.deserializer: how message keys are deserialized
            properties.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
            // value.deserializer: how message values are deserialized
            properties.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
            // group.id: consumer group id
            properties.put(ConsumerConfig.GROUP_ID_CONFIG, "demo-group");
            // enable.auto.commit: commit offsets automatically
            properties.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, true);
            // auto.offset.reset: where to start when the group has no valid committed offset
            properties.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(properties);
            String[] topics = new String[]{"demo-topic"};
            consumer.subscribe(Arrays.asList(topics));

            // poll forever and print every record received
            while (true) {
                ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println(record);
                }
            }
        }
    }
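The `auto.offset.reset` setting in the consumer demo only matters when the group has no usable committed offset for a partition. The small Python function below is a sketch of that decision, not Kafka client code: `resolve_start_offset` and its parameters are hypothetical names, and the log boundaries are passed in as plain integers.

```python
def resolve_start_offset(committed, policy, log_start, log_end):
    """Return the offset a consumer would start reading from for one partition.

    committed: last committed offset for the group, or None if there is none
    policy:    value of auto.offset.reset ("earliest", "latest" or "none")
    log_start: first offset still retained in the partition
    log_end:   offset that the next produced record will receive
    """
    # A committed offset that still exists on the broker always wins.
    if committed is not None and log_start <= committed <= log_end:
        return committed
    # Otherwise fall back to the reset policy.
    if policy == "earliest":
        return log_start
    if policy == "latest":
        return log_end
    raise LookupError("no valid committed offset and auto.offset.reset is 'none'")
```

With `"earliest"`, as in the demo, a brand-new group such as `demo-group` reads the topic from the oldest retained record; on later runs the committed offset takes over and the setting has no effect.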

librdkafka examples:

https://github.com/edenhill/librdkafka/tree/master/examples

A Python Kafka client that may come in handy:

https://github.com/Parsely/pykafka

Install the pykafka client module

$ pip install pykafka

Initialize the client object

    from pykafka import KafkaClient

    client = KafkaClient(hosts="127.0.0.1:9092,127.0.0.1:9093,...")
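A malformed `hosts` string is an easy mistake to make, so it can be worth validating the comma-separated `host:port` list before handing it to the client. The helper below is a hypothetical sketch and not part of pykafka; it assumes plain hostnames or IPv4 addresses and does not handle IPv6 literals.

```python
def parse_hosts(hosts):
    """Split "host1:port1,host2:port2" into a list of (host, port) tuples."""
    brokers = []
    for entry in hosts.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate a trailing comma
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError("expected host:port, got %r" % entry)
        brokers.append((host, int(port)))
    return brokers
```

For example, `parse_hosts("127.0.0.1:9092,127.0.0.1:9093")` yields two `(host, port)` tuples, while an entry without a port raises `ValueError` before any connection attempt is made.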

TLS (encrypted connection)

    from pykafka import KafkaClient, SslConfig

    config = SslConfig(cafile='/your/ca.cert',
                       certfile='/your/client.cert',  # optional
                       keyfile='/your/client.key',  # optional
                       password='unlock my client key please')  # optional
    # replace <port> with the port of the broker's TLS listener
    client = KafkaClient(hosts="127.0.0.1:<port>,...",
                         ssl_config=config)

List the available topics and select one

    print(client.topics)
    topic = client.topics['my.test']

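Send messages to the topic. This producer is synchronous: each message must be acknowledged before the next one is sent.

    with topic.get_sync_producer() as producer:
        for i in range(4):
            # pykafka expects bytes payloads under Python 3
            producer.produce(('test message ' + str(i ** 2)).encode('utf-8'))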

For higher throughput, asynchronous sending is the recommended mode for producers; produce() returns immediately after it is called.

    import queue

    with topic.get_producer(delivery_reports=True) as producer:
        count = 0
        while True:
            count += 1
            # pykafka expects bytes for both the message and the partition key under Python 3
            producer.produce(b'test msg', partition_key='{}'.format(count).encode('utf-8'))
            if count % 10 ** 5 == 0:  # adjust this or bring lots of RAM
                # drain every delivery report that is currently available
                while True:
                    try:
                        msg, exc = producer.get_delivery_report(block=False)
                        if exc is not None:
                            print('Failed to deliver msg {}: {}'.format(
                                msg.partition_key, repr(exc)))
                        else:
                            print('Successfully delivered msg {}'.format(
                                msg.partition_key))
                    except queue.Empty:
                        break
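The inner loop above is a non-blocking drain: it keeps pulling delivery reports until the queue reports that it is empty, then returns to producing. The same pattern can be tried without a broker using the standard library's `queue.Queue`. In this sketch `drain_reports` is a hypothetical helper, and the queue of `(message, exception)` pairs only stands in for whatever pykafka uses internally.

```python
import queue


def drain_reports(reports):
    """Collect every (message, exception) pair currently queued, without blocking."""
    delivered, failed = [], []
    while True:
        try:
            msg, exc = reports.get(block=False)
        except queue.Empty:
            break  # nothing left right now; go back to producing
        if exc is not None:
            failed.append((msg, exc))
        else:
            delivered.append(msg)
    return delivered, failed
```

Draining in batches rather than after every message keeps the producer loop fast, but undelivered reports accumulate in memory in the meantime, which is why the batch size in the loop above is worth tuning.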
