既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

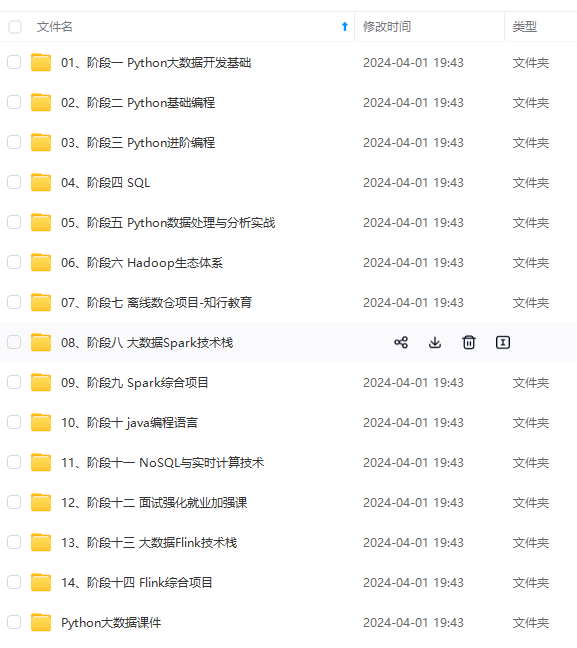

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

-p 8080:8080

-p 16010:16010

-p 4000:4000

-p 3000:3000

centos:7 /usr/sbin/init

The ports are mapped as follows (host:container):

2222:22# SSH

3306:3306 #MySQL

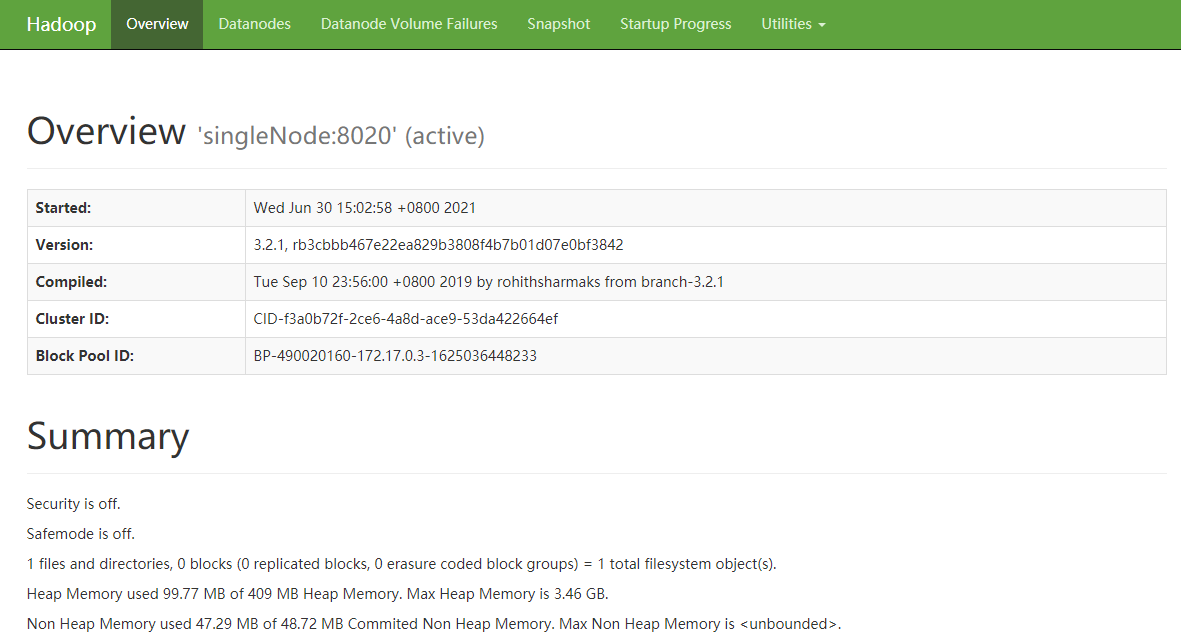

8020:8020 # HDFS RPC

9870:9870 # HDFS web UI

19888:19888 # Yarn job history

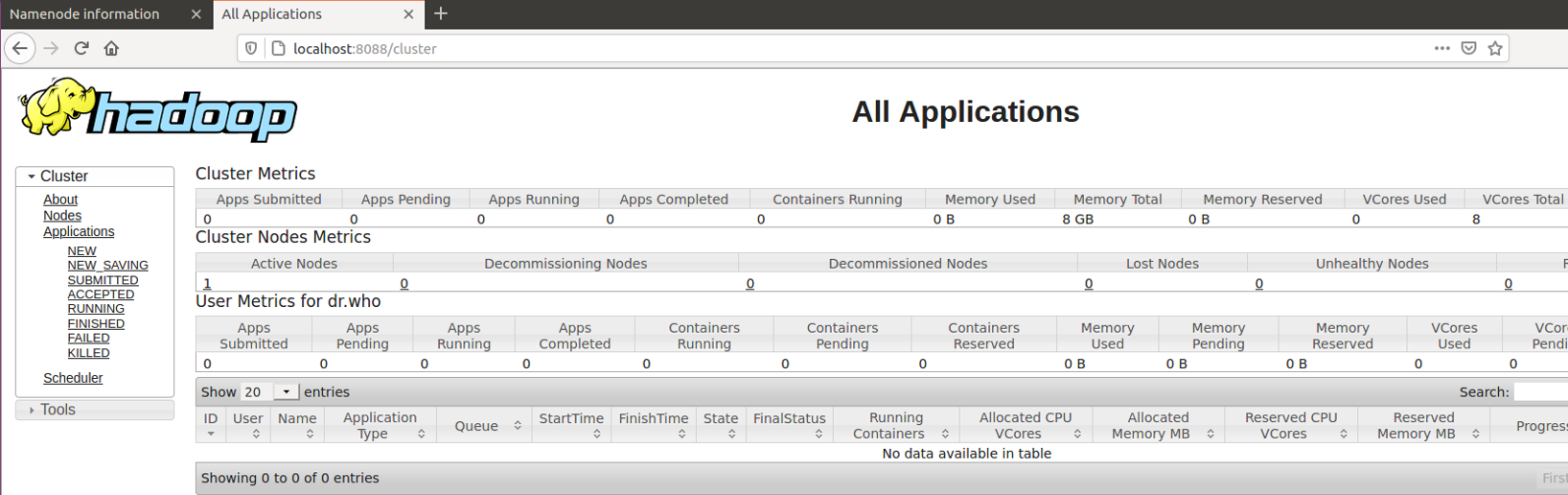

8088:8088 # Yarn web UI

9083:9083 # Hive metastore

10000:10000 # HiveServer2

2181:2181 # zk

9092:9092 # kafka

8091:8091 # flink
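As a rough sketch of how these mappings fit into the container start command, the snippet below builds the `-p` flags from the list above and prints the resulting `docker run` line without executing it. The container name `singleNode` matches section 2.3; the `-d` and `--privileged` flags are assumptions, because the start of the original command is not shown here. The helper name `build_port_flags` is made up for this example, and only the ports explained above are included.

```shell
# Sketch: assemble port-mapping flags for `docker run` and print the command.
# Nothing is executed; review the output before running it yourself.

# host:container pairs taken from the list above
PORTS="2222:22 3306:3306 8020:8020 9870:9870 19888:19888 8088:8088 9083:9083 10000:10000 2181:2181 9092:9092 8091:8091"

# build_port_flags MAPPING...: print "-p a:b -p c:d ..." for the given mappings
build_port_flags() {
  flags=""
  for mapping in "$@"; do
    flags="$flags -p $mapping"
  done
  # strip the leading space
  printf '%s\n' "${flags# }"
}

# $PORTS is left unquoted on purpose so that it splits into separate arguments.
# -d and --privileged are assumptions, not taken from the original command.
DOCKER_CMD="docker run -d --privileged --name singleNode $(build_port_flags $PORTS) centos:7 /usr/sbin/init"
echo "$DOCKER_CMD"
```

Printing the command first makes it easy to spot a host port that is already in use before the container is created.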

#### 2.3 Enter the container

docker exec -it singleNode /bin/bash

### 3. Environment Preparation

#### 3.1 Install required packages

yum clean all

yum -y install unzip bzip2-devel vim bashname

yum install kde-l10n-Chinese -y

yum install glibc-common -y

localedef -c -f UTF-8 -i zh_CN zh_CN.utf8

echo 'LANG=zh_CN.UTF-8' >> /etc/locale.conf

echo 'export LC_ALL=zh_CN.UTF-8' >> ~/.bashrc

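#### 3.2 Configure passwordless SSH login

Set the root password (enter it twice when prompted):

passwd root

Install the SSH packages:

yum install -y openssh openssh-server openssh-clients openssl openssl-devel

Generate a key pair with an empty passphrase:

ssh-keygen -t rsa -f ~/.ssh/id_rsa -P ''

Enable passwordless login by appending the public key to authorized_keys (alternatively, use ssh-copy-id):

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Start the SSH service:

systemctl start sshd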

#### 3.3 Set the time zone

cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

#### 3.4 Disable the firewall

yum -y install firewalld

systemctl stop firewalld

systemctl disable firewalld

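#### 3.5 Time synchronization, static IP, and host mapping

* Because this course builds the environment in Docker, a static IP and host mapping do not need to be configured.

* Because this course sets up a single-node, pseudo-distributed cluster, time synchronization can also be skipped.

* When building a fully distributed, multi-node cluster on physical machines, all three must be configured.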

### 4. MySQL Installation

#### 4.1 Upload and unpack the installation bundle

cd /opt/software/

tar xvf MySQL-5.5.40-1.linux2.6.x86_64.rpm-bundle.tar

#### 4.2 Install required dependencies

yum -y install libaio perl

#### 4.3 Install the server and the client

rpm -ivh MySQL-server-5.5.40-1.linux2.6.x86_64.rpm

rpm -ivh MySQL-client-5.5.40-1.linux2.6.x86_64.rpm

#### 4.4 Start and configure MySQL

Option 1

Start the service:

systemctl start mysql

Set the MySQL root password:

/usr/bin/mysqladmin -u root password 'root'

Log in and allow root to connect from any host:

mysql -uroot -proot

update mysql.user set host='%' where host='localhost';

delete from mysql.user where host<>'%' or user='';

flush privileges;

Option 2

Start the service:

systemctl start mysql

Run the MySQL initialization script:

/usr/bin/mysql_secure_installation

Answer the prompts as follows:

* Press Enter once, then type the same new password twice to set it.
* Remove anonymous users? [Y/n]: n
* Disallow root login remotely? [Y/n]: y
* Remove test database and access to it? [Y/n]: n
* Reload privilege tables now? [Y/n]: y

Log in and allow root to connect from any host:

mysql -uroot -proot

update mysql.user set host='%' where host='localhost';

delete from mysql.user where host<>'%' or user='';

flush privileges;

### 5. JDK Installation

#### 5.1 Upload and unpack

tar zxvf /opt/software/jdk-8u171-linux-x64.tar.gz -C /opt/install/

ln -s /opt/install/jdk1.8.0_171 /opt/install/java

#### 5.2 Configure environment variables

The environment variables go in ~/.bashrc. Open it (vi ~/.bashrc) and add:

`export JAVA_HOME=/opt/install/java`

`export PATH=$JAVA_HOME/bin:$PATH`

Then reload it:

source ~/.bashrc
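Several later steps also append lines to ~/.bashrc, so re-running them can leave duplicate exports. Below is a minimal sketch of an idempotent append. The helper name `append_once` and the `PROFILE` variable are hypothetical and not part of the original tutorial; the demo writes to a scratch file rather than the real ~/.bashrc.

```shell
# append_once FILE LINE: append LINE to FILE only if it is not already present.
append_once() {
  file=$1
  line=$2
  touch "$file"
  # -x: whole-line match, -F: fixed string (no regex), -q: quiet
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Demo against a scratch file; set PROFILE=~/.bashrc for real use.
PROFILE=${PROFILE:-$(mktemp)}
append_once "$PROFILE" 'export JAVA_HOME=/opt/install/java'
append_once "$PROFILE" 'export PATH=$JAVA_HOME/bin:$PATH'
append_once "$PROFILE" 'export JAVA_HOME=/opt/install/java'   # no-op the second time
cat "$PROFILE"
```

The single quotes matter: they keep `$JAVA_HOME` and `$PATH` unexpanded so that the literal text lands in the file.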

#### 5.3 Check the version

java -version

### 6. Hadoop Installation

#### 6.1 Upload and unpack

tar zxvf hadoop-3.2.1.tar.gz -C /opt/install/

ln -s /opt/install/hadoop-3.2.1/ /opt/install/hadoop

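#### 6.2 Edit the configuration

Change to the configuration directory:

cd /opt/install/hadoop/etc/hadoop/

##### 6.2.1 Configure core-site.xml

Open the file (vi core-site.xml) and set the following properties inside `<configuration>`, each as a `<property>` element with a `<name>` and a `<value>`:

* fs.defaultFS = hdfs://singleNode:8020
* hadoop.tmp.dir = /opt/install/hadoop/data
* hadoop.proxyuser.root.hosts = (value missing in the source)
* hadoop.proxyuser.root.groups = (value missing in the source)
* hadoop.http.staticuser.user = root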
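For reference, the properties above would look roughly like this as XML. The sketch writes the file into a scratch directory instead of over the real configuration; `CONF_DIR` is a name chosen for this example. The `*` values for the two `hadoop.proxyuser.root.*` properties are an assumption: the source lost those values, and `*` (allow all) is only a common choice.

```shell
# Sketch: write a core-site.xml with the properties listed above.
CONF_DIR=${CONF_DIR:-./conf-sketch}
mkdir -p "$CONF_DIR"

# The quoted 'EOF' keeps the shell from expanding anything inside the heredoc.
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://singleNode:8020</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/install/hadoop/data</value>
  </property>
  <!-- Assumption: "*" is not from the source, which lost this value -->
  <property>
    <name>hadoop.proxyuser.root.hosts</name>
    <value>*</value>
  </property>
  <!-- Assumption: "*" is not from the source, which lost this value -->
  <property>
    <name>hadoop.proxyuser.root.groups</name>
    <value>*</value>
  </property>
  <property>
    <name>hadoop.http.staticuser.user</name>
    <value>root</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/core-site.xml"
```

The other `*-site.xml` files in this section follow the same `<property>` layout.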

##### 6.2.2 Configure hdfs-site.xml

Open the file (vi hdfs-site.xml) and set the following properties inside `<configuration>`:

* dfs.replication = 1
* dfs.namenode.secondary.http-address = singleNode:9868

##### 6.2.3 Configure mapred-site.xml

Open the file (vi mapred-site.xml) and set the following properties inside `<configuration>`:

* mapreduce.framework.name = yarn
* mapreduce.jobhistory.address = singleNode:10020
* mapreduce.jobhistory.webapp.address = singleNode:19888

##### 6.2.4 Configure yarn-site.xml

Open the file (vi yarn-site.xml) and set the following properties inside `<configuration>`:

* yarn.nodemanager.aux-services = mapreduce_shuffle
* yarn.resourcemanager.hostname = singleNode
* yarn.nodemanager.env-whitelist = JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME
* yarn.scheduler.minimum-allocation-mb = 512
* yarn.scheduler.maximum-allocation-mb = 4096
* yarn.nodemanager.resource.memory-mb = 4096
* yarn.nodemanager.pmem-check-enabled = false
* yarn.nodemanager.vmem-check-enabled = false
* yarn.log-aggregation-enable = true
* yarn.log.server.url = `http://${yarn.timeline-service.webapp.address}/applicationhistory/logs`
* yarn.log-aggregation.retain-seconds = 604800
* yarn.timeline-service.enabled = true
* yarn.timeline-service.hostname = `${yarn.resourcemanager.hostname}`
* yarn.timeline-service.http-cross-origin.enabled = true
* yarn.resourcemanager.system-metrics-publisher.enabled = true
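With this many properties, a typo in one name is easy to miss. The sketch below is one way to read a single value back out of a Hadoop-style XML file using only awk. The helper name `get_prop` and the `CONF_DIR` scratch directory are made up for this example, and the parser assumes each `<name>` and `<value>` element sits on its own line; it is not a general XML parser.

```shell
# get_prop FILE NAME: print the <value> that follows <name>NAME</name>.
# Assumes one <name> or <value> element per line.
get_prop() {
  awk -v want="$2" '
    {
      if (index($0, "<name>" want "</name>")) found = 1
      if (found && match($0, /<value>[^<]*<\/value>/)) {
        # drop the 7-character "<value>" prefix and the 8-character "</value>" suffix
        print substr($0, RSTART + 7, RLENGTH - 15)
        exit
      }
    }
  ' "$1"
}

# Demo on a scratch file holding two of the properties listed above.
CONF_DIR=${CONF_DIR:-./conf-sketch}
mkdir -p "$CONF_DIR"
cat > "$CONF_DIR/yarn-site.xml" <<'EOF'
<configuration>
  <property>
    <name>yarn.log.server.url</name>
    <value>http://${yarn.timeline-service.webapp.address}/applicationhistory/logs</value>
  </property>
  <property>
    <name>yarn.timeline-service.hostname</name>
    <value>${yarn.resourcemanager.hostname}</value>
  </property>
</configuration>
EOF

get_prop "$CONF_DIR/yarn-site.xml" yarn.timeline-service.hostname
```

On a machine where Hadoop is already on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the effective value of a key and is the more reliable check.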

##### 6.2.5 Configure hadoop-env.sh

Open the file (vi hadoop-env.sh) and add:

export JAVA_HOME=/opt/install/java

##### 6.2.6 Configure mapred-env.sh

Open the file (vi mapred-env.sh) and add:

export JAVA_HOME=/opt/install/java

##### 6.2.7 Configure yarn-env.sh

Open the file (vi yarn-env.sh) and add:

export JAVA_HOME=/opt/install/java

##### 6.2.8 Configure workers

In Hadoop 3.x the worker list lives in the file named workers. Open it (vi workers) and make its only line the host name:

singleNode

#### 6.3 Add variables

Add the following to ~/.bashrc (vi ~/.bashrc), then reload it with source ~/.bashrc:

`export HADOOP_HOME=/opt/install/hadoop`

`export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop`

`export PATH=$HADOOP_HOME/bin:$PATH`

In both `$HADOOP_HOME/sbin/start-dfs.sh` and `$HADOOP_HOME/sbin/stop-dfs.sh`, add the following variables near the top of the script:

HDFS_NAMENODE_USER=root

HDFS_DATANODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

YARN_RESOURCEMANAGER_USER=root

YARN_NODEMANAGER_USER=root

In both `$HADOOP_HOME/sbin/start-yarn.sh` and `$HADOOP_HOME/sbin/stop-yarn.sh`, add:

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

#### 6.4 Format HDFS

hdfs namenode -format
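Formatting is meant to be done once, because running it again reinitializes the NameNode metadata. The sketch below guards against an accidental second format by checking for the VERSION file that a formatted NameNode directory contains. It assumes `dfs.namenode.name.dir` is left at its default under `hadoop.tmp.dir`, which with the settings above is /opt/install/hadoop/data/dfs/name. The helper name `name_dir_formatted` and the `NAME_DIR` variable are hypothetical.

```shell
# name_dir_formatted DIR: succeed if DIR already holds NameNode metadata.
name_dir_formatted() {
  [ -f "$1/current/VERSION" ]
}

# Assumption: default name directory under hadoop.tmp.dir
NAME_DIR=${NAME_DIR:-/opt/install/hadoop/data/dfs/name}

if name_dir_formatted "$NAME_DIR"; then
  echo "NameNode directory already formatted, skipping: $NAME_DIR"
else
  echo "Not formatted yet; run: hdfs namenode -format"
fi
```

The script only prints what it would do, so it is safe to run before deciding whether to format.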

#### 6.5 Start the Hadoop services

Start HDFS:

`$HADOOP_HOME/sbin/start-dfs.sh`

Start YARN:

`$HADOOP_HOME/sbin/start-yarn.sh`

Start the history server:

mapred --daemon start historyserver

#### 6.6 Check the web UIs

Open port 9870 in a browser for the HDFS web UI.

Open port 8088 in a browser for the YARN web UI.

### 7. Hive Installation

#### 7.1 Upload and unpack

tar zxvf /opt/software/apache-hive-3.1.2-bin.tar.gz -C /opt/install/

ln -s /opt/install/apache-hive-3.1.2-bin/ /opt/install/hive

#### 7.2 Edit the configuration

Change to the configuration directory:

cd /opt/install/hive/conf/

##### 7.2.1 Edit hive-site.xml

Create the file from the template and open it:

cp hive-default.xml.template hive-site.xml

vi hive-site.xml

Set the following properties inside `<configuration>` (inside the XML file, write each & in the JDBC URL as `&amp;`):

* javax.jdo.option.ConnectionURL = jdbc:mysql://singleNode:3306/metastore?createDatabaseIfNotExist=true&useUnicode=true&characterEncoding=UTF-8
* javax.jdo.option.ConnectionDriverName = com.mysql.jdbc.Driver
* javax.jdo.option.ConnectionUserName = root
* javax.jdo.option.ConnectionPassword = root
* hive.metastore.warehouse.dir = /user/hive/warehouse
* hive.metastore.schema.verification = false
* hive.metastore.uris = thrift://singleNode:9083
* hive.server2.thrift.port = 10000
* hive.server2.thrift.bind.host = singleNode
* hive.metastore.event.db.notification.api.auth = false

##### 7.2.2 Edit hive-env.sh

Create the file from the template and open it:

cp hive-env.sh.template hive-env.sh

vi hive-env.sh

Add:

HADOOP_HOME=/opt/install/hadoop

#### 7.3 Add the JDBC driver jar

cp /opt/software/mysql-connector-java-5.1.31.jar /opt/install/hive/lib/

#### 7.4 Add environment variables

Add the following to ~/.bashrc (vi ~/.bashrc):

`export HIVE_HOME=/opt/install/hive`

`export PATH=$HIVE_HOME/bin:$PATH`

#### 7.5 Start the services

Initialize the metastore schema:

schematool -dbType mysql -initSchema

Start the HiveServer2 service:

nohup hive --service hiveserver2 &

If you hit the error Exception in thread "main" java.lang.NoSuchMethodError, the cause is a jar conflict: Hive ships an older guava than Hadoop does. Remove the lower version from Hive and copy in the one from Hadoop:

rm -rf /opt/install/hive/lib/guava-19.0.jar

cp /opt/install/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar /opt/install/hive/lib/
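The guava fix above hard-codes both version numbers. A slightly more defensive sketch is shown below: it refuses to delete anything unless the Hadoop directory really contains a guava jar. The function name `replace_guava` is hypothetical and not part of Hive or Hadoop.

```shell
# replace_guava HIVE_LIB HADOOP_LIB:
# replace every guava-*.jar in HIVE_LIB with the guava jar(s) from HADOOP_LIB.
replace_guava() {
  hive_lib=$1
  hadoop_lib=$2
  # Reuse the positional parameters to hold the glob matches.
  set -- "$hadoop_lib"/guava-*.jar
  # If the glob matched nothing, $1 is the literal pattern and -f fails.
  [ -f "$1" ] || { echo "no guava jar found in $hadoop_lib" >&2; return 1; }
  rm -f "$hive_lib"/guava-*.jar
  cp "$@" "$hive_lib"/
}

# Usage with the paths from this tutorial (not run automatically):
# replace_guava /opt/install/hive/lib /opt/install/hadoop/share/hadoop/common/lib
```

Checking the source directory first means a mistyped path leaves Hive's existing jar in place instead of removing it with no replacement.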

#### 7.6 Check with jps
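One way to read the `jps` output is to compare it against the process names you expect. The sketch below does that for any list of names. The helper name `missing_daemons` is hypothetical, and the PIDs in the sample are invented for illustration. After the steps above, the names to look for would typically be NameNode, DataNode, SecondaryNameNode, ResourceManager, NodeManager, JobHistoryServer, and RunJar (HiveServer2 appears in `jps` as RunJar).

```shell
# missing_daemons NAME...: read `jps` output on stdin and print each
# expected NAME that does not appear in it.
missing_daemons() {
  # jps prints "<pid> <name>" per line; keep only the names
  running=$(awk '{print $2}')
  for name in "$@"; do
    printf '%s\n' "$running" | grep -qxF -- "$name" || printf '%s\n' "$name"
  done
}

# Demo with canned output (PIDs are made up); SecondaryNameNode is absent.
SAMPLE='1201 NameNode
1342 DataNode
1620 ResourceManager
2045 Jps'
printf '%s\n' "$SAMPLE" | missing_daemons NameNode DataNode SecondaryNameNode ResourceManager
```

On the container itself the call would be `jps | missing_daemons NameNode DataNode SecondaryNameNode ResourceManager NodeManager JobHistoryServer RunJar`; empty output means every expected process was found.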

### 8. Sqoop Installation

#### 8.1 Upload and unpack
