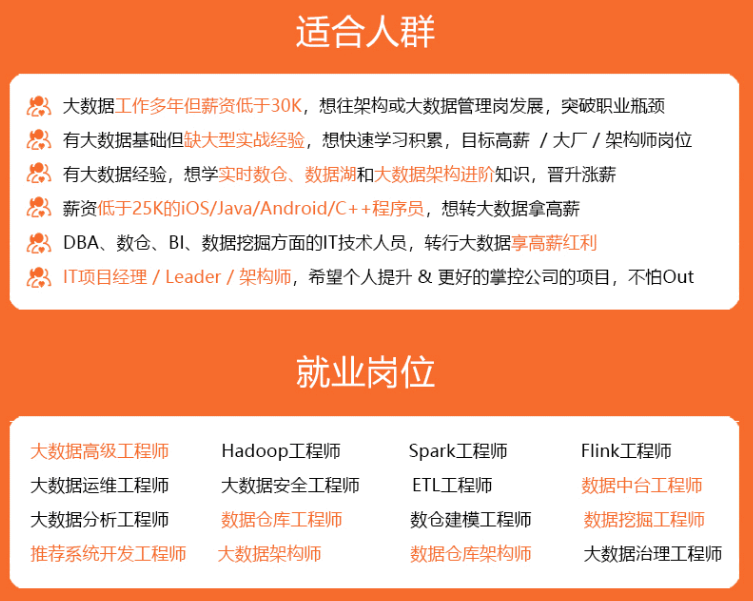

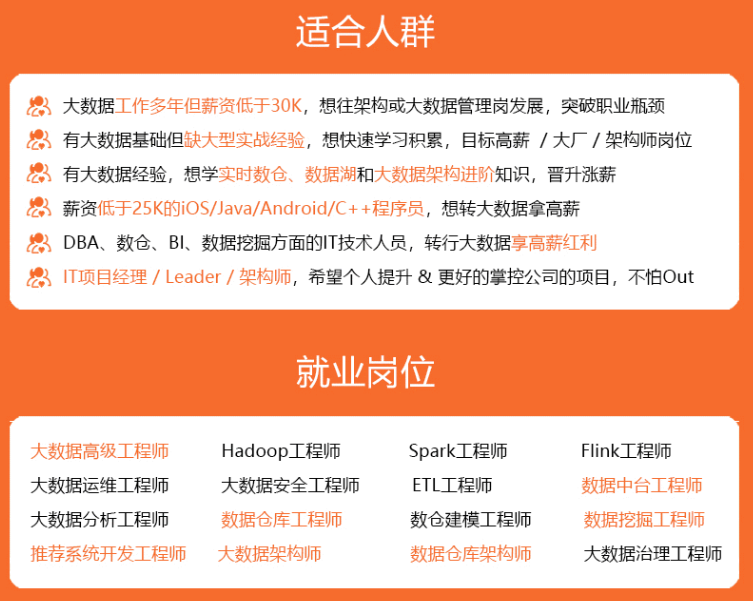

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

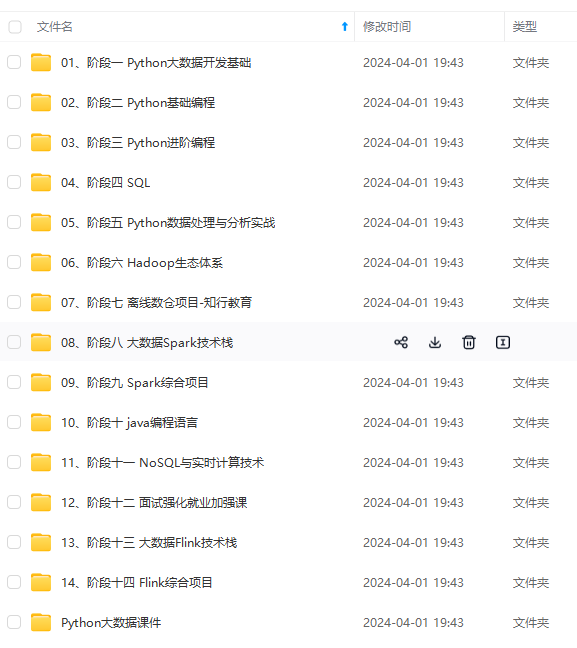

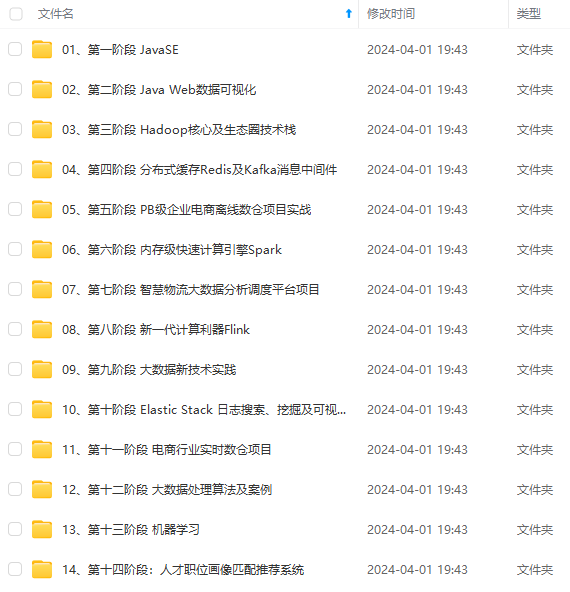

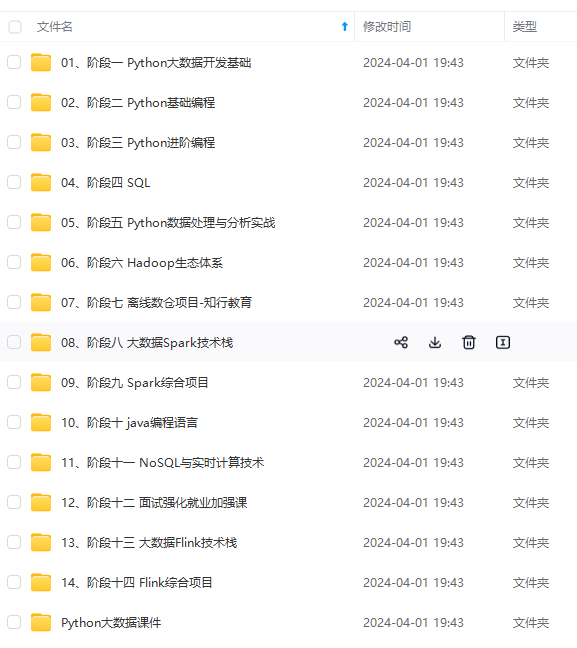

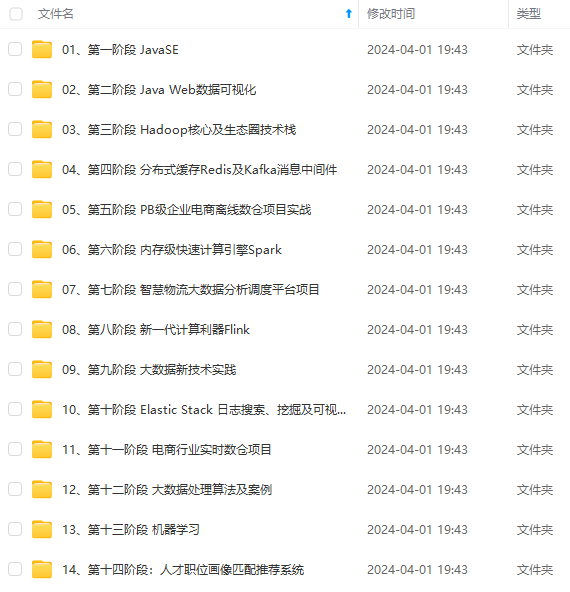

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

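2. Download link

3. Official documentation

GettingStarted - Apache Hive - Apache Software Foundation

4. Passwordless SSH configuration

Big Data Basics: Passwordless SSH Login (qq262593421's blog, CSDN)

5. ZooKeeper installation

High-Availability Big Data: Installing and Configuring ZooKeeper 3.4.5 (qq262593421's blog, CSDN)

6. Hadoop installation

https://blog.csdn.net/qq262593421/article/details/106956480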

II. Extract Hive

1. Extract the archive

cd /usr/local/hadoop

tar zxpf apache-hive-2.3.2-bin.tar.gz

2. Create a symbolic link

ln -s /usr/local/hadoop/apache-hive-2.3.2-bin /usr/local/hadoop/hive
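
The symlink keeps `HIVE_HOME` stable across upgrades, since only the link has to be repointed. A plain `ln -s` fails once the link already exists, so a repeatable variant can help. The following is a minimal sketch, assuming a GNU or BSD `ln` that supports `-n`; the function name `link_hive` is hypothetical and not part of the original steps.

```shell
# Point <base>/hive at a versioned Hive directory under <base>.
# -s: symbolic link, -f: replace an existing link,
# -n: treat an existing link to a directory as a file instead of following it.
link_hive() {
  base=$1      # e.g. /usr/local/hadoop
  version=$2   # e.g. apache-hive-2.3.2-bin
  if [ ! -d "$base/$version" ]; then
    echo "not a directory: $base/$version" >&2
    return 1
  fi
  ln -sfn "$base/$version" "$base/hive"
}

# Usage: link_hive /usr/local/hadoop apache-hive-2.3.2-bin
```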

III. Environment variables

1. Hive environment variables

vim /etc/profile

export HIVE_HOME=/usr/local/hadoop/hive

export PATH=$PATH:$HIVE_HOME/bin

2. Apply the changes immediately

source /etc/profile
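
Each time `/etc/profile` is sourced, an unconditional export appends the Hive `bin` directory to `PATH` again. A minimal sketch of a guarded variant, assuming a POSIX shell; the helper name `append_path` is hypothetical.

```shell
# Append a directory to PATH only when it is not already present,
# so sourcing the profile repeatedly does not grow PATH.
append_path() {
  case ":$PATH:" in
    *":$1:"*) ;;              # already on PATH, nothing to do
    *) PATH="$PATH:$1" ;;
  esac
}

export HIVE_HOME=/usr/local/hadoop/hive
append_path "$HIVE_HOME/bin"
export PATH
```

These lines could replace the two `export` lines in `/etc/profile`.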

IV. Hive configuration

cd $HIVE_HOME/conf

touch hive-env.sh hive-site.xml

chmod +x hive-env.sh

1. hive-env.sh

export HADOOP_HEAPSIZE=4096

export JAVA_HOME=/usr/java/jdk1.8

export HADOOP_HOME=/usr/local/hadoop/hadoop

export HIVE_HOME=/usr/local/hadoop/hive

export HIVE_CONF_DIR=/usr/local/hadoop/hive/conf

export HBASE_HOME=/usr/local/hadoop/hbase

export SPARK_HOME=/usr/local/hadoop/spark

export ZOO_HOME=/usr/local/hadoop/zookeeper
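
The file above exports several `*_HOME` paths, and some of them (HBase, Spark) may not exist on every machine. A minimal sketch of a check that reports the missing ones; the function name `check_dirs` is hypothetical.

```shell
# Print each argument that is not an existing directory.
# Returns non-zero if at least one is missing.
check_dirs() {
  missing=0
  for dir in "$@"; do
    if [ ! -d "$dir" ]; then
      echo "missing directory: $dir" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage, with the paths from hive-env.sh:
# check_dirs /usr/java/jdk1.8 /usr/local/hadoop/hadoop /usr/local/hadoop/hive \
#            /usr/local/hadoop/hbase /usr/local/hadoop/spark /usr/local/hadoop/zookeeper
```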

2. hive-site.xml

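<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>hive.metastore.warehouse.dir</name>
    <value>/user/hive/warehouse</value>
    <description>location of default database for the warehouse</description>
  </property>
  <property>
    <name>hive.exec.local.scratchdir</name>
    <value>/usr/local/hadoop/hive/tmp</value>
    <description>Local scratch space for Hive jobs</description>
  </property>
  <property>
    <name>hive.downloaded.resources.dir</name>
    <value>/usr/local/hadoop/hive/tmp/resources</value>
    <description>Temporary local directory for added resources in the remote file system.</description>
  </property>
  <property>
    <name>hive.querylog.location</name>
    <value>/user/hadoop/hive/logs</value>
    <description>Location of Hive run time structured log file</description>
  </property>
  <property>
    <name>hive.metastore.schema.verification</name>
    <value>false</value>
    <description>
      Enforce metastore schema version consistency.
      True: Verify that the version information stored in the metastore is compatible with the one from the Hive jars. Also disable automatic
      schema migration attempt. Users are required to manually migrate schema after Hive upgrade which ensures
      proper metastore schema migration. (Default)
      False: Warn if the version information stored in the metastore doesn't match the one from the Hive jars.
    </description>
  </property>
  <property>
    <name>hive.metastore.db.type</name>
    <value>mysql</value>
    <description>
      Expects one of [derby, oracle, mysql, mssql, postgres].
      Type of database used by the metastore. Information schema &amp; JDBCStorageHandler depend on it.
    </description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://hadoop001:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionDriverName</name>
    <value>com.mysql.jdbc.Driver</value>
    <description>Driver class name for a JDBC metastore</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionUserName</name>
    <value>hive</value>
    <description>Username to use against metastore database</description>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionPassword</name>
    <value>hive</value>
    <description>Password to use against metastore database</description>
  </property>
  <property>
    <name>datanucleus.schema.autoCreateAll</name>
    <value>true</value>
    <description>Auto creates necessary schema on a startup if one doesn't exist. Set this to false after creating it once. To enable auto create also set hive.metastore.schema.verification=false. Auto creation is not recommended for production use cases, run schematool command instead.</description>
  </property>
  <property>
    <name>hive.server2.thrift.bind.host</name>
    <value>hadoop001</value>
    <description>Bind host on which to run the HiveServer2 Thrift service.</description>
  </property>
  <property>
    <name>hive.server2.thrift.port</name>
    <value>10000</value>
    <description>Port number of HiveServer2 Thrift interface when hive.server2.transport.mode is 'binary'.</description>
  </property>
  <property>
    <name>hive.metastore.uris</name>
    <value/>
    <description>Thrift URI for the remote metastore. Used by metastore client to connect to remote metastore.</description>
  </property>
  <property>
    <name>hive.server2.logging.operation.log.location</name>
    <value>/usr/local/hadoop/hive/tmp/operation_logs</value>
    <description>Top level directory where operation logs are stored if logging functionality is enabled</description>
  </property>
  <property>
    <name>hive.server2.webui.host</name>
    <value>hadoop001</value>
    <description>The host address the HiveServer2 WebUI will listen on</description>
  </property>
  <property>
    <name>hive.server2.webui.port</name>
    <value>10002</value>
    <description>The port the HiveServer2 WebUI will listen on. This can be set to 0 or a negative integer to disable the web UI</description>
  </property>
  <property>
    <name>hive.server2.webui.max.threads</name>
    <value>50</value>
    <description>The max HiveServer2 WebUI threads</description>
  </property>
  <property>
    <name>hive.server2.webui.use.ssl</name>
    <value>false</value>
    <description>Set this to true for using SSL encryption for HiveServer2 WebUI.</description>
  </property>
  <property>
    <name>hive.server2.thrift.client.user</name>
    <value>root</value>
    <description>Username to use against thrift client</description>
  </property>
  <property>
    <name>hive.server2.thrift.client.password</name>
    <value>root</value>
    <description>Password to use against thrift client</description>
  </property>
</configuration>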
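
The configuration above points `hive.exec.local.scratchdir`, `hive.downloaded.resources.dir` and `hive.server2.logging.operation.log.location` at local directories under `/usr/local/hadoop/hive/tmp`. Creating them up front avoids relying on the Hive process having permission to create them. A minimal sketch; the function name `make_hive_local_dirs` is hypothetical.

```shell
# Create the local directories that hive-site.xml points at, relative to a Hive home.
# mkdir -p also creates the shared parent <hive_home>/tmp and is a no-op if they exist.
make_hive_local_dirs() {
  hive_home=$1   # e.g. /usr/local/hadoop/hive
  mkdir -p "$hive_home/tmp/resources" "$hive_home/tmp/operation_logs"
}

# Usage: make_hive_local_dirs /usr/local/hadoop/hive
```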

V. Initialize Hive

1. Copy the MySQL JDBC driver into Hive's lib directory

cd $HIVE_HOME/lib

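Download only the driver that matches your MySQL server version.

# MySQL 5.x: Connector/J 5.1 (driver class com.mysql.jdbc.Driver, as set in hive-site.xml)

wget https://repo1.maven.org/maven2/mysql/mysql-connector-java/5.1.47/mysql-connector-java-5.1.47.jar

# MySQL 8.x: Connector/J 8.0 (its driver class is com.mysql.cj.jdbc.Driver; the old class name still loads but is deprecated)

wget https://repo1.maven.org/maven2/mysql/mysql-connector-java/8.0.16/mysql-connector-java-8.0.16.jar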
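
With more than one Connector/J version in `$HIVE_HOME/lib`, it can become unclear which driver the JVM loads. A minimal sketch of a check that lists the MySQL driver jars and fails unless exactly one is present; the function name `check_single_mysql_driver` is hypothetical, and the jar name pattern is taken from the two download URLs.

```shell
# List MySQL Connector/J jars in a lib directory; succeed only if exactly one is found.
check_single_mysql_driver() {
  lib_dir=$1
  count=0
  for jar in "$lib_dir"/mysql-connector-java-*.jar; do
    [ -e "$jar" ] || continue   # the glob matched nothing
    echo "$jar"
    count=$((count + 1))
  done
  [ "$count" -eq 1 ]
}

# Usage: check_single_mysql_driver "$HIVE_HOME/lib"
```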

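2. Create a MySQL user and grant privileges

-- Create the hive user with password hive

CREATE USER 'hive'@'%' IDENTIFIED BY 'hive';

-- Grant the hive user all privileges (MySQL 8.0 no longer accepts IDENTIFIED BY inside GRANT; drop that clause there)

GRANT ALL PRIVILEGES ON *.* TO 'hive'@'%' IDENTIFIED BY 'hive' WITH GRANT OPTION;

-- Reload the privilege tables

FLUSH PRIVILEGES;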

3. Start the ZooKeeper and Hadoop clusters

zkServer.sh start

hdfs --daemon start zkfc

start-all.sh
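
After the start commands return, `jps` shows which Java daemons are actually running. A minimal sketch of a helper that reads `jps` output and prints the expected daemons that are absent; the function name `missing_daemons` is hypothetical, and which daemons belong on a given node depends on your cluster layout.

```shell
# Read `jps` output ("<pid> <MainClass>" per line) on stdin and print every
# expected daemon name that is absent. Returns non-zero if any is missing.
missing_daemons() {
  running=$(cat)
  status=0
  for name in "$@"; do
    # Compare against the second column only, matching the whole name.
    if ! printf '%s\n' "$running" | awk '{print $2}' | grep -qx "$name"; then
      echo "$name"
      status=1
    fi
  done
  return "$status"
}

# Usage on a node expected to run ZooKeeper plus the HDFS and YARN master daemons:
# jps | missing_daemons QuorumPeerMain NameNode DFSZKFailoverController ResourceManager
```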

4. Create the Hive directories in HDFS and set permissions

hadoop fs -mkdir -p /tmp

hadoop fs -mkdir -p /user/hive/warehouse

hadoop fs -chmod g+w /tmp

hadoop fs -chmod g+w /user/hive/warehouse
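
The four commands can be wrapped so they are safe to re-run and stop at the first failure. A minimal sketch, assuming `hadoop` is on `PATH` and HDFS is running; the function name `init_hive_hdfs_dirs` is hypothetical.

```shell
# Create the HDFS directories Hive expects and make them group-writable.
# -p creates missing parents (/user, /user/hive) and does not fail if the directory exists.
init_hive_hdfs_dirs() {
  for dir in /tmp /user/hive/warehouse; do
    hadoop fs -mkdir -p "$dir" || return 1
    hadoop fs -chmod g+w "$dir" || return 1
  done
}

# Usage: init_hive_hdfs_dirs
```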
