10003,ww,19,61,star

##### 2.1.4 Produce data to the province topic

```bash
kafka-console-producer.sh --broker-list 192.168.110.101:9092 --topic province
```

##### 2.1.5 Produce data

```
11,北京
61,陕西
```

#### 2.2 StarRocks preparation

##### 2.2.1 Create the primary key table s\_province

```sql
create database starrocks;

use starrocks;

CREATE TABLE IF NOT EXISTS starrocks.s_province (
    uid int(10) NOT NULL COMMENT "",
    p_id int(2) NOT NULL COMMENT "",
    p_name varchar(30) NULL COMMENT ""
)
PRIMARY KEY(uid)
DISTRIBUTED BY HASH(uid) BUCKETS 1
PROPERTIES (
    "replication_num" = "1",
    -- primary key tables only
    "enable_persistent_index" = "true"
);
```
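A StarRocks primary key table keeps one row per key: when a loaded row has a `uid` that already exists, the new row replaces the old one. The sketch below models only that upsert behaviour with a Python dict; it ignores partial updates, deletes and storage details, `load_rows` is a hypothetical helper, and the uid `10001` is a made-up sample value.

```python
from typing import Dict, Iterable, Tuple

Row = Tuple[int, int, str]  # (uid, p_id, p_name)


def load_rows(table: Dict[int, Row], rows: Iterable[Row]) -> Dict[int, Row]:
    """Apply rows in arrival order; a later row with the same uid overwrites the earlier one."""
    for row in rows:
        uid = row[0]
        table[uid] = row  # upsert keyed on the primary key column
    return table


s_province: Dict[int, Row] = {}
load_rows(s_province, [(10003, 61, "陕西"), (10001, 11, "北京")])
load_rows(s_province, [(10003, 11, "北京")])  # same uid: the newer row wins
print(sorted(s_province.values()))
```

This is why several behaviour records for the same `uid` collapse into a single row in `s_province`.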

#### 2.3 Flink preparation

##### 2.3.1 Start Flink

```bash
./start-cluster.sh
```

##### 2.3.2 Start the SQL client

```bash
./sql-client.sh embedded
```

##### 2.3.3 Run Flink SQL to create the upstream and downstream mapping tables

1. Source: create the Flink mapping table kafka\_source\_behavior for Kafka

```sql
CREATE TABLE kafka_source_behavior (
    uuid int,
    name string,
    age int,
    province_id int,
    behavior string
) WITH (
    'connector' = 'kafka',
    'topic' = 'behavior',
    'properties.bootstrap.servers' = '192.168.110.101:9092',
    'properties.group.id' = 'source_behavior',
    'scan.startup.mode' = 'earliest-offset',
    'format' = 'csv'
);
```
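With `'format' = 'csv'`, each Kafka message is split on commas and the fields are assigned to the table columns by position, in the order they are declared. The Python sketch below mimics that positional mapping for the sample record `10003,ww,19,61,star`; `parse_behavior` is a hypothetical helper and does not reproduce the Flink CSV format's full behaviour (quoting options, null literals, error handling).

```python
import csv
import io
from typing import NamedTuple


class Behavior(NamedTuple):
    # Same column order as the kafka_source_behavior DDL
    uuid: int
    name: str
    age: int
    province_id: int
    behavior: str


def parse_behavior(message: str) -> Behavior:
    """Split one CSV message and map its fields to columns by position."""
    fields = next(csv.reader(io.StringIO(message)))
    if len(fields) != len(Behavior._fields):
        raise ValueError(f"expected {len(Behavior._fields)} fields, got {len(fields)}")
    uuid, name, age, province_id, behavior = fields
    return Behavior(int(uuid), name, int(age), int(province_id), behavior)


record = parse_behavior("10003,ww,19,61,star")
print(record)
```

Because the mapping is positional, the order of values produced to the topic must match the column order in the DDL.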

2. Create the mapping table kafka\_source\_province

```sql
CREATE TABLE kafka_source_province (
    pid int,
    p_name string
) WITH (
    'connector' = 'kafka',
    'topic' = 'province',
    'properties.bootstrap.servers' = '192.168.110.101:9092',
    'properties.group.id' = 'source_province',
    'scan.startup.mode' = 'earliest-offset',
    'format' = 'csv'
);
```

3. Sink: create the Flink mapping table sink\_province for StarRocks

```sql
CREATE TABLE sink_province (
    uid INT,
    p_id INT,
    p_name STRING,
    PRIMARY KEY (uid) NOT ENFORCED
) WITH (
    'connector' = 'starrocks',
    'jdbc-url' = 'jdbc:mysql://192.168.110.101:9030',
    'load-url' = '192.168.110.101:8030',
    'database-name' = 'starrocks',
    'table-name' = 's_province',
    'username' = 'root',
    'password' = 'root',
    'sink.buffer-flush.interval-ms' = '5000',
    'sink.properties.column_separator' = '\x01',
    'sink.properties.row_delimiter' = '\x02'
);
```
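The StarRocks connector loads data through Stream Load, and the `sink.properties.column_separator` / `sink.properties.row_delimiter` options control how each CSV row is serialized. Using the non-printing characters `\x01` and `\x02` instead of `,` and `\n` means a comma or line break inside a value such as `p_name` cannot break the row structure. The sketch below only illustrates that idea; `to_stream_load_payload` and `parse_payload` are hypothetical helpers, not the connector's code, and the sample names are made up.

```python
from typing import Iterable, List, Sequence

COLUMN_SEPARATOR = "\x01"  # matches 'sink.properties.column_separator'
ROW_DELIMITER = "\x02"     # matches 'sink.properties.row_delimiter'


def to_stream_load_payload(rows: Iterable[Sequence[object]]) -> str:
    """Join column values with the column separator and rows with the row delimiter."""
    lines: List[str] = []
    for row in rows:
        lines.append(COLUMN_SEPARATOR.join(str(v) for v in row))
    return ROW_DELIMITER.join(lines)


def parse_payload(payload: str) -> List[List[str]]:
    """Reverse the serialization to check that every row survives intact."""
    return [line.split(COLUMN_SEPARATOR) for line in payload.split(ROW_DELIMITER)]


rows = [(10003, 61, "Shaanxi, China"), (10001, 11, "Beijing\nCity")]
payload = to_stream_load_payload(rows)
print(parse_payload(payload))
```

With `,` and `\n` as separators, both sample rows above would be split incorrectly.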

##### 2.3.4 Run the synchronization job

Run the following Flink SQL to start the synchronization job:

```sql
insert into sink_province
select b.uuid as uid, b.province_id as p_id, p.p_name
from kafka_source_behavior b
join kafka_source_province p on b.province_id = p.pid;
```
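Because both sources are unbounded Kafka streams, this `JOIN` is a regular streaming join: Flink keeps the rows seen so far from each side in state and emits a result whenever a new row on either side finds a match on the other, so a behaviour record that arrives before its province is still joined later. The Python sketch below models that idea in memory; it is a conceptual simplification (no state TTL, no retractions), and `RegularJoin` is a hypothetical class, not Flink's operator. In a real job this state grows over time unless `table.exec.state.ttl` is configured.

```python
from collections import defaultdict
from typing import DefaultDict, List, Tuple

Behavior = Tuple[int, str, int, int, str]  # (uuid, name, age, province_id, behavior)
Province = Tuple[int, str]                # (pid, p_name)
Output = Tuple[int, int, str]             # (uid, p_id, p_name)


class RegularJoin:
    """Inner join on province_id = pid that keeps both inputs in memory."""

    def __init__(self) -> None:
        self.left: DefaultDict[int, List[Behavior]] = defaultdict(list)
        self.right: DefaultDict[int, List[Province]] = defaultdict(list)

    def on_behavior(self, b: Behavior) -> List[Output]:
        self.left[b[3]].append(b)  # remember the row for future province rows
        return [(b[0], b[3], p[1]) for p in self.right[b[3]]]

    def on_province(self, p: Province) -> List[Output]:
        self.right[p[0]].append(p)  # remember the row for future behavior rows
        return [(b[0], b[3], p[1]) for b in self.left[p[0]]]


join = RegularJoin()
emitted: List[Output] = []
emitted += join.on_behavior((10003, "ww", 19, 61, "star"))  # no province 61 yet
emitted += join.on_province((11, "北京"))                    # no behavior with 11 yet
emitted += join.on_province((61, "陕西"))                    # matches the buffered behavior
print(emitted)
```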

#### 2.4 Check the data in StarRocks

```bash
mysql -h192.168.110.101 -P9030 -uroot -proot
```

```sql
use starrocks;

select * from s_province;
```

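### 3 Read MySQL data with Flink JDBC and write it to StarRocks

Reading MySQL data through Flink JDBC is rarely used for real-time scenarios: in JDBC mode, Flink only reads the data that is in the MySQL table at the moment the statement is executed, so this approach is better suited to offline (batch) scenarios. For example, if the MySQL data needs complex processing, you can run scheduled jobs in Flink to fetch and cleanse it, and then write the result to StarRocks.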

#### 3.1 MySQL preparation

##### 3.1.1 Create table s\_user in MySQL

```sql
use ODS;

CREATE TABLE s_user (
    id INT(11) NOT NULL,
    name VARCHAR(32) DEFAULT NULL,
    p_id INT(2) DEFAULT NULL,
    PRIMARY KEY (id)
);
```

##### 3.1.2 Insert data

```sql
insert into s_user values (10086, 'lm', 61), (10010, 'ls', 11), (10000, 'll', 61);
```

#### 3.2 StarRocks preparation

##### 3.2.1 Create table s\_user in StarRocks

```sql
use starrocks;

CREATE TABLE IF NOT EXISTS starrocks.s_user (
    id int(10) NOT NULL COMMENT "",
    name varchar(20) NOT NULL COMMENT "",
    p_id INT(2) NULL COMMENT ""
)
PRIMARY KEY(id)
DISTRIBUTED BY HASH(id) BUCKETS 1
PROPERTIES (
    "replication_num" = "1",
    -- primary key tables only
    "enable_persistent_index" = "true"
);
```

#### 3.3 Create the Flink mapping tables

##### 3.3.1 Start Flink (skip if the service is still running)

```bash
./start-cluster.sh
```

##### 3.3.2 Start the SQL client

```bash
./sql-client.sh embedded
```

##### 3.3.3 Source: create the mapping table source\_mysql\_suser for MySQL

```sql
CREATE TABLE source_mysql_suser (
    id INT,
    name STRING,
    p_id INT,
    PRIMARY KEY (id) NOT ENFORCED
) WITH (
    'connector' = 'jdbc',
    'url' = 'jdbc:mysql://192.168.110.102:3306/ODS',
    'table-name' = 's_user',
    'username' = 'root',
    'password' = 'root'
);
```

##### 3.3.4 Sink: create the mapping table sink\_starrocks\_suser for StarRocks

```sql
CREATE TABLE sink_starrocks_suser (
    id INT,
    name STRING,
    p_id INT,
    PRIMARY KEY (id) NOT ENFORCED
) WITH (
    'connector' = 'starrocks',
    'jdbc-url' = 'jdbc:mysql://192.168.110.101:9030',
    'load-url' = '192.168.110.101:8030',
    'database-name' = 'starrocks',
    'table-name' = 's_user',
    'username' = 'root',
    'password' = 'root',
    'sink.buffer-flush.interval-ms' = '5000',
    'sink.properties.column_separator' = '\x01',
    'sink.properties.row_delimiter' = '\x02'
);
```

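##### 3.3.5 Cleanse the data in Flink and write it to StarRocks

This example only applies a simple WHERE filter; real business logic may involve complex multi-table joins.

```sql
insert into sink_starrocks_suser select id, name, p_id from source_mysql_suser where p_id = 61;
```

Once the data has been written to StarRocks, the Flink job finishes. If rows in the MySQL s\_user table are inserted, deleted, or updated afterwards, Flink does not detect the changes.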
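The bounded behaviour can be reproduced with Python's built-in `sqlite3` standing in for both MySQL and StarRocks: the source is read once, filtered with `p_id = 61`, and written to the target; a later insert into the source is never picked up because nothing reads it again. This is only an analogy for the JDBC source's one-time scan. SQLite, the table names `src_user`/`dst_user`, and the extra row `10099` are assumptions for illustration; the other rows are the samples inserted in 3.1.2.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()
# Hypothetical stand-ins for ODS.s_user (MySQL) and starrocks.s_user
cur.execute("CREATE TABLE src_user (id INTEGER PRIMARY KEY, name TEXT, p_id INTEGER)")
cur.execute("CREATE TABLE dst_user (id INTEGER PRIMARY KEY, name TEXT, p_id INTEGER)")
cur.executemany(
    "INSERT INTO src_user VALUES (?, ?, ?)",
    [(10086, "lm", 61), (10010, "ls", 11), (10000, "ll", 61)],
)

# One-time scan plus filter, like the bounded JDBC job
snapshot = cur.execute("SELECT id, name, p_id FROM src_user WHERE p_id = 61").fetchall()
cur.executemany("INSERT INTO dst_user VALUES (?, ?, ?)", snapshot)

# A change made after the job has finished is not propagated
cur.execute("INSERT INTO src_user VALUES (10099, 'new', 61)")
print(cur.execute("SELECT id FROM dst_user ORDER BY id").fetchall())
```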

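### 4 Read StarRocks data with Flink and write it to MySQL

This section reuses the s\_user table in MySQL and the s\_user table in StarRocks, but reverses the flow: data is read from StarRocks and written to another business database, in this case MySQL.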

#### 4.1 Create the Flink mapping tables

##### 4.1.1 Start Flink (skip if the service is still running)

```bash
./start-cluster.sh
```

##### 4.1.2 Start the SQL client

```bash
./sql-client.sh embedded
```

##### 4.1.3 Source: create the StarRocks mapping table source\_starrocks\_suser

```sql
CREATE TABLE source_starrocks_suser (
    id INT,
    name STRING,
    p_id INT
) WITH (
    'connector' = 'starrocks',
    'scan-url' = '192.168.110.101:8030',
    'jdbc-url' = 'jdbc:mysql://192.168.110.101:9030',
    'database-name' = 'starrocks',
    'table-name' = 's_user',
    'username' = 'root',
    'password' = 'root'
);
```

##### 4.1.4 Sink: create the mapping table sink\_mysql\_suser for MySQL

```sql
CREATE TABLE sink_mysql_suser (
    id INT,
    name STRING,
    p_id INT,
    PRIMARY KEY (id) NOT ENFORCED
) WITH (
    'connector' = 'jdbc',
    'url' = 'jdbc:mysql://192.168.110.102:3306/ODS',
    'table-name' = 's_user',
    'username' = 'root',
    'password' = 'root'
);
```
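Because `sink_mysql_suser` declares `PRIMARY KEY (id) NOT ENFORCED`, the Flink JDBC sink writes in upsert mode (for MySQL it issues `INSERT ... ON DUPLICATE KEY UPDATE`); without a primary key it would only append rows. The sketch below shows the same upsert effect with SQLite's `ON CONFLICT ... DO UPDATE` clause, chosen only because SQLite ships with Python; it is an analogy for the statement the connector issues, not the connector's code. `upsert_user` is a hypothetical helper and the updated name `lm2` is a made-up value.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE s_user (id INTEGER PRIMARY KEY, name TEXT, p_id INTEGER)")

# SQLite equivalent of MySQL's INSERT ... ON DUPLICATE KEY UPDATE
UPSERT_SQL = (
    "INSERT INTO s_user (id, name, p_id) VALUES (?, ?, ?) "
    "ON CONFLICT(id) DO UPDATE SET name = excluded.name, p_id = excluded.p_id"
)


def upsert_user(row):
    """Insert a row, or overwrite the existing row with the same id."""
    conn.execute(UPSERT_SQL, row)


upsert_user((10086, "lm", 61))
upsert_user((10000, "ll", 61))
upsert_user((10086, "lm2", 61))  # same key: updates instead of raising a duplicate-key error
print(conn.execute("SELECT * FROM s_user ORDER BY id").fetchall())
```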

#### 4.2 MySQL preparation

##### 4.2.1 Clear the MySQL s\_user table to prepare for the new import

```sql
use ODS;

truncate table s_user;
```

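#### 4.3 Run the import job in Flink

The operation is kept simple here; in practice you might group or join data from several StarRocks tables before importing it.

```sql
insert into sink_mysql_suser select id, name, p_id from source_starrocks_suser;
```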

#### 4.4 Check the data in MySQL

```sql
select * from s_user;
```

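### 5 Sync MySQL data to StarRocks with Flink CDC

* When Flink reads MySQL data over JDBC, the import is a one-time operation. To have Flink detect changes in the MySQL source and synchronize them in near real time, you need Flink CDC.

* CDC stands for Change Data Capture: it captures the change records of a source database and replicates them to one or more destinations (sinks). Put simply, when data in the source changes, CDC makes the data in the target change accordingly (DML operations only).

* The demonstration again uses the s\_user tables in MySQL and StarRocks from the previous sections.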
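Flink represents CDC data as a changelog in which each row carries a kind: `+I` (insert), `-U` (update before), `+U` (update after) and `-D` (delete). A sink backed by a primary key table can apply these by key. The Python sketch below replays a small hypothetical changelog against an in-memory table keyed on `id`; `apply_changelog` is an illustrative helper, not the StarRocks connector's implementation, and the SQL in the comments only indicates which kind of MySQL change would produce each entry.

```python
from typing import Dict, Iterable, Tuple

Row = Tuple[int, str, int]  # (id, name, p_id)


def apply_changelog(table: Dict[int, Row], changes: Iterable[Tuple[str, Row]]) -> Dict[int, Row]:
    """Apply Flink-style row kinds to a table keyed on the first column."""
    for kind, row in changes:
        key = row[0]
        if kind in ("+I", "+U"):
            table[key] = row        # new row or new version of a row
        elif kind in ("-U", "-D"):
            table.pop(key, None)    # old version or deleted row is removed
        else:
            raise ValueError(f"unknown row kind: {kind}")
    return table


changes = [
    ("+I", (10086, "lm", 61)),
    ("+I", (10010, "ls", 11)),
    ("-U", (10086, "lm", 61)),  # e.g. UPDATE s_user SET p_id = 11 WHERE id = 10086
    ("+U", (10086, "lm", 11)),
    ("-D", (10010, "ls", 11)),  # e.g. DELETE FROM s_user WHERE id = 10010
]
print(apply_changelog({}, changes))
```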

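#### 5.1 MySQL preparation

##### 5.1.1 Enable binlog in MySQL (ROW format)

```bash
vi /etc/my.cnf
```

Add the following under the `[mysqld]` section:

```ini
[mysqld]
log-bin=mysql-bin    # enable binlog
binlog-format=ROW    # use the ROW format
server_id=1          # server ID, required for MySQL replication
```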
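Before restarting MySQL, it can help to check that these settings are present. The Python sketch below parses `my.cnf`-style text with the standard `configparser` module; `check_binlog_config` is a hypothetical helper whose rules (binlog enabled, `binlog_format` set to `ROW`, non-zero `server_id`) mirror the settings above. It treats `-` and `_` in option names as equivalent, as MySQL option files do. After the restart, `SHOW VARIABLES LIKE 'binlog_format';` in the MySQL client shows the effective value.

```python
import configparser
from typing import List


def check_binlog_config(text: str) -> List[str]:
    """Return the problems found in the [mysqld] section (an empty list means OK)."""
    parser = configparser.ConfigParser(
        inline_comment_prefixes=("#",), allow_no_value=True, strict=False, interpolation=None
    )
    parser.read_string(text)
    if not parser.has_section("mysqld"):
        return ["missing [mysqld] section"]
    # MySQL treats '-' and '_' in option names as equivalent
    options = {k.replace("-", "_"): v for k, v in parser.items("mysqld")}
    problems: List[str] = []
    if "log_bin" not in options:
        problems.append("log_bin is not set")
    fmt = options.get("binlog_format")
    if fmt is None or fmt.upper() != "ROW":
        problems.append(f"binlog_format should be ROW, got {fmt!r}")
    server_id = options.get("server_id")
    if server_id is None or not server_id.isdigit() or int(server_id) == 0:
        problems.append(f"server_id should be a non-zero integer, got {server_id!r}")
    return problems


sample = """
[mysqld]
log-bin=mysql-bin    # enable binlog
binlog-format=ROW    # use the ROW format
server_id=1          # server ID
"""
print(check_binlog_config(sample))
```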

##### 5.1.2 Restart the MySQL service

```bash
systemctl restart mysqld
```

#### 5.2 StarRocks preparation

##### 5.2.1 Clear the data in the StarRocks s\_user table

```bash
mysql -h192.168.110.101 -P9030 -uroot -proot
```

```sql
use starrocks;

truncate table s_user;
```

#### 5.3 Flink preparation

##### 5.3.1 Start Flink (skip if the service is still running)

```bash
./start-cluster.sh
```

##### 5.3.2 Start the SQL client

```bash
./sql-client.sh embedded
```

##### 5.3.3 Source: create the MySQL mapping table cdc\_mysql\_suser

```sql
CREATE TABLE cdc_mysql_suser (
    id INT,
    name STRING,
    p_id INT
) WITH (
    'connector' = 'mysql-cdc',
    'hostname' = '192.168.110.102',
    'port' = '3306',
    'username' = 'root',
    'password' = 'root',
    'database-name' = 'ODS',
    'scan.incremental.snapshot.enabled' = 'false',
    'table-name' = 's_user'
);
```

##### 5.3.4 Sink: create the StarRocks mapping table cdc\_starrocks\_suser

```sql
CREATE TABLE cdc_starrocks_suser (
    id INT,
    name STRING,
    p_id INT,
    PRIMARY KEY (id) NOT ENFORCED
) WITH (
    'connector' = 'starrocks',
    'jdbc-url' = 'jdbc:mysql://192.168.110.101:9030',
    'load-url' = '192.168.110.101:8030',
    'database-name' = 'starrocks',
    'table-name' = 's_user',
    'username' = 'root',
    'password' = 'root',
    'sink.buffer-flush.interval-ms' = '5000',
    'sink.properties.column_separator' = '\x01',
    'sink.properties.row_delimiter' = '\x02'
);
```

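#### 5.4 Run the synchronization job

```sql
insert into cdc_starrocks_suser select id, name, p_id from cdc_mysql_suser;
```

In the CDC scenario, the synchronization job keeps running after the Flink SQL statement is submitted. When data in MySQL changes, Flink picks up the change quickly and applies it to StarRocks.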
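How soon a MySQL change becomes visible in StarRocks also depends on the sink buffer: with `'sink.buffer-flush.interval-ms' = '5000'`, rows are collected and sent to StarRocks in batches roughly every 5 seconds. The Python sketch below models only that time-based trigger, using an injected clock so it runs deterministically; the real connector has other flush triggers (for example buffer size limits) and its exact behaviour depends on the connector version and delivery semantics. `IntervalBuffer` is a hypothetical class.

```python
from typing import Callable, List, Tuple

Row = Tuple[int, str, int]  # (id, name, p_id)


class IntervalBuffer:
    """Collect rows and hand them to flush_fn once interval_ms has elapsed."""

    def __init__(
        self,
        interval_ms: int,
        flush_fn: Callable[[List[Row]], None],
        now_ms: Callable[[], int],
    ) -> None:
        self.interval_ms = interval_ms
        self.flush_fn = flush_fn
        self.now_ms = now_ms
        self.rows: List[Row] = []
        self.last_flush = now_ms()

    def add(self, row: Row) -> None:
        self.rows.append(row)
        self.maybe_flush()

    def maybe_flush(self) -> None:
        # Called on every add and by a periodic timer in a real sink
        if self.rows and self.now_ms() - self.last_flush >= self.interval_ms:
            self.flush_fn(self.rows)
            self.rows = []
            self.last_flush = self.now_ms()


clock = [0]  # simulated time in milliseconds
batches: List[List[Row]] = []
buf = IntervalBuffer(5000, batches.append, lambda: clock[0])

buf.add((10086, "lm", 61))
clock[0] = 1000
buf.add((10000, "ll", 61))  # still within 5 s: buffered, not sent
clock[0] = 5200
buf.maybe_flush()           # timer tick after 5 s sends both rows in one batch
print(batches)
```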

#### 5.5 Observe the data

##### 5.5.1 Observe the data in MySQL

```bash
mysql -uroot -proot
```

```sql
use ODS;

select * from s_user;
```


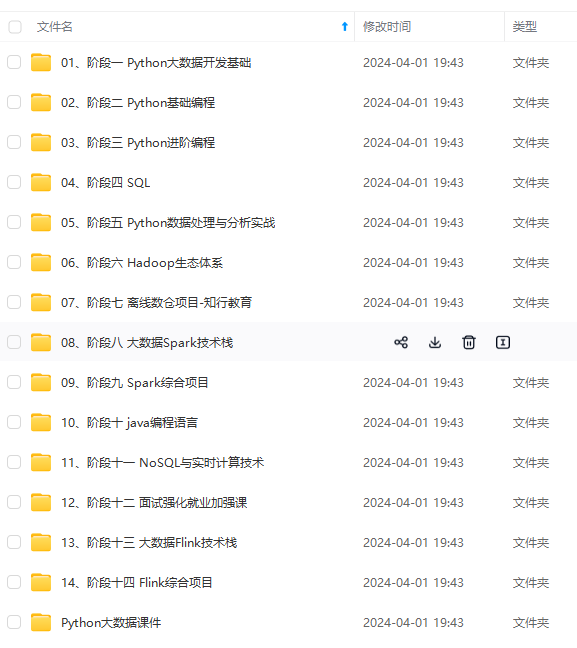

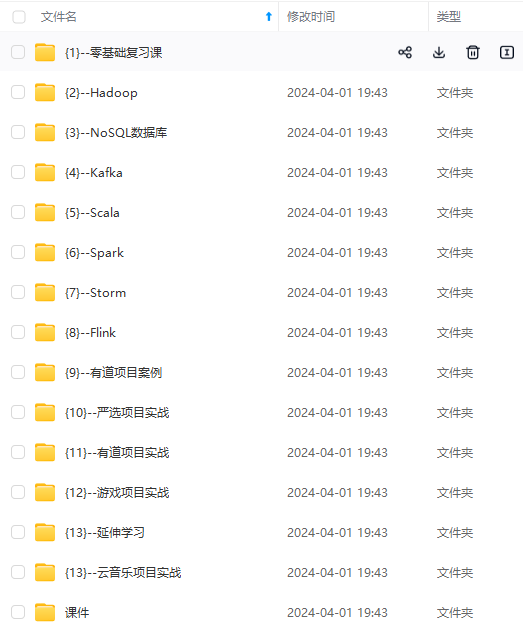
