
<!--
/**
 * Licensed to the Apache Software Foundation (ASF) under one
 * or more contributor license agreements. See the NOTICE file
 * distributed with this work for additional information
 * regarding copyright ownership. The ASF licenses this file
 * to you under the Apache License, Version 2.0 (the
 * "License"); you may not use this file except in compliance
 * with the License. You may obtain a copy of the License at
 *
 *     http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing, software
 * distributed under the License is distributed on an "AS IS" BASIS,
 * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
 * See the License for the specific language governing permissions and
 * limitations under the License.
 */
-->

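<configuration>
  <property>
    <name>hbase.master</name>
    <value>60000</value>
  </property>
  <property>
    <name>hbase.tmp.dir</name>
    <value>/data/cluster/hbase/tmp</value>
  </property>
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://ns1/hbase</value>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
  </property>
  <property>
    <name>hbase.zookeeper.property.clientPort</name>
    <value>2181</value>
  </property>
  <property>
    <name>hbase.zookeeper.quorum</name>
    <value>hadoop001,hadoop002,hadoop003</value>
  </property>
  <property>
    <name>hbase.zookeeper.property.dataDir</name>
    <value>/usr/local/zookeeper/data</value>
  </property>
  <property>
    <name>dfs.datanode.max.transfer.threads</name>
    <value>4096</value>
  </property>
  <property>
    <name>hbase.master</name>
    <value>hadoop1</value>
  </property>
  <property>
    <name>hbase.unsafe.stream.capability.enforce</name>
    <value>false</value>
  </property>
  <property>
    <name>hfile.format.version</name>
    <value>3</value>
  </property>
  <property>
    <name>hbase.superuser</name>
    <value>hbase,admin,root,hdfs,zookeeper,hive,hadoop,hue,impala,spark,kylin</value>
  </property>
  <property>
    <name>hbase.table.sanity.checks</name>
    <value>false</value>
  </property>
  <property>
    <name>hbase.coprocessor.abortonerror</name>
    <value>false</value>
  </property>
  <property>
    <name>hfile.block.cache.size</name>
    <description>Fraction of the heap used by the StoreFile read cache (block cache). This value directly affects read performance, and for reads larger is better. If writes are far fewer than reads, 0.4-0.5 is fine; if reads and writes are balanced, use about 0.3; if writes outnumber reads, just keep the default.</description>
  </property>
  <property>
    <name>hbase.wal.provider</name>
    <value>multiwal</value>
    <description>MultiWAL: with a single WAL per RegionServer, writes to HDFS must be sequential, so WAL writes are serialized and become a performance bottleneck. MultiWAL lets a RegionServer write to several WALs in parallel over multiple pipelines in the underlying HDFS. This raises total throughput but does not raise the throughput of a single Region.</description>
  </property>
  <property>
    <name>hbase.regionserver.wal.codec</name>
    <value>org.apache.hadoop.hbase.regionserver.wal.IndexedWALEditCodec</value>
  </property>
  <property>
    <name>phoenix.schema.isNamespaceMappingEnabled</name>
    <value>true</value>
  </property>
  <property>
    <name>phoenix.schema.mapSystemTablesToNamespace</name>
    <value>true</value>
  </property>
  <property>
    <name>phoenix.functions.allowUserDefinedFunctions</name>
    <value>true</value>
    <description>enable UDF functions</description>
  </property>
</configuration>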
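
The sizing advice for `hfile.block.cache.size` can be checked with a little arithmetic. The sketch below is illustrative only and rests on several assumptions. It assumes the RegionServer heap equals the `HBASE_HEAPSIZE=4G` set in hbase-env.sh, and that `hbase.regionserver.global.memstore.size` is left at its default of 0.4. It also relies on the HBase rule that the block cache and memstore fractions together may not exceed 0.8 of the heap. The function names `block_cache_mb` and `heap_share_ok` are hypothetical helpers, not part of HBase.

```shell
#!/usr/bin/env bash
# Sketch: estimate the block cache size and check the combined heap share.
# Fractions are written as integer percentages so plain shell arithmetic is enough.

# block_cache_mb HEAP_MB CACHE_PCT -> prints the block cache size in MB (rounded down)
block_cache_mb() {
  echo $(( $1 * $2 / 100 ))
}

# heap_share_ok CACHE_PCT MEMSTORE_PCT -> succeeds when the sum is at most 80
heap_share_ok() {
  [ $(( $1 + $2 )) -le 80 ]
}

heap_mb=4096      # HBASE_HEAPSIZE=4G, assuming the RegionServer runs with this heap
memstore_pct=40   # assumption: hbase.regionserver.global.memstore.size left at 0.4

for cache_pct in 30 40 50; do
  size=$(block_cache_mb "$heap_mb" "$cache_pct")
  if heap_share_ok "$cache_pct" "$memstore_pct"; then
    echo "cache ${cache_pct}% = ${size} MB: ok"
  else
    echo "cache ${cache_pct}% = ${size} MB: lower the memstore share first"
  fi
done
```

Under these assumptions, raising the block cache toward 0.5 only works if the memstore share is reduced at the same time, which fits the advice to do so only for read-heavy workloads.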

3. hbase-env.sh configuration

#!/usr/bin/env bash

export HBASE_OPTS="-XX:+UseConcMarkSweepGC -verbose:gc -XX:+PrintGCDetails -XX:+PrintGCTimeStamps -Xloggc:/usr/local/hadoop/hbase/logs/jvm-gc-hbase.log"

export JAVA_HOME=/usr/java/jdk1.8

export HBASE_HEAPSIZE=4G

export HADOOP_HOME=/usr/local/hadoop/hadoop

export HBASE_HOME=/usr/local/hadoop/hbase

export HBASE_CLASSPATH=/usr/local/hadoop/hadoop/etc/hadoop

export HBASE_MANAGES_ZK=false

export HBASE_PID_DIR=/var/hadoop/pids

4. backup-masters configuration

When HBase starts, the nodes listed in backup-masters are brought up as standby HMasters.

hadoop001

hadoop002
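
The backup-masters file is plain text with one hostname per line. A minimal sketch of generating it follows. The helper name `write_backup_masters` is hypothetical, and a scratch directory stands in for the real conf directory so the snippet is safe to run anywhere.

```shell
#!/usr/bin/env bash
# write_backup_masters CONF_DIR HOST... -> writes one hostname per line
write_backup_masters() {
  conf_dir=$1
  shift
  printf '%s\n' "$@" > "${conf_dir}/backup-masters"
}

# A scratch directory stands in for the real conf directory in this sketch.
demo_conf=$(mktemp -d)
write_backup_masters "$demo_conf" hadoop001 hadoop002
cat "${demo_conf}/backup-masters"
```

On a real cluster, with the `HBASE_HOME` from hbase-env.sh, the target would presumably be `/usr/local/hadoop/hbase/conf/backup-masters`. `start-hbase.sh` reads that file when launching the backup masters.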

IV. Environment variable configuration

1. HBase environment variables
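
A minimal sketch of what these variables might look like, reusing the `HBASE_HOME` path from hbase-env.sh. Which file they go in is an assumption: `/etc/profile` for all users or `~/.bashrc` for a single user are both common choices.

```shell
# Assumed to be appended to /etc/profile (or a user's ~/.bashrc)
export HBASE_HOME=/usr/local/hadoop/hbase
export PATH="$PATH:$HBASE_HOME/bin"
```

After reloading the file (for example with `source /etc/profile`), running `hbase version` is a quick way to confirm that the `hbase` command resolves from the new `PATH`.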

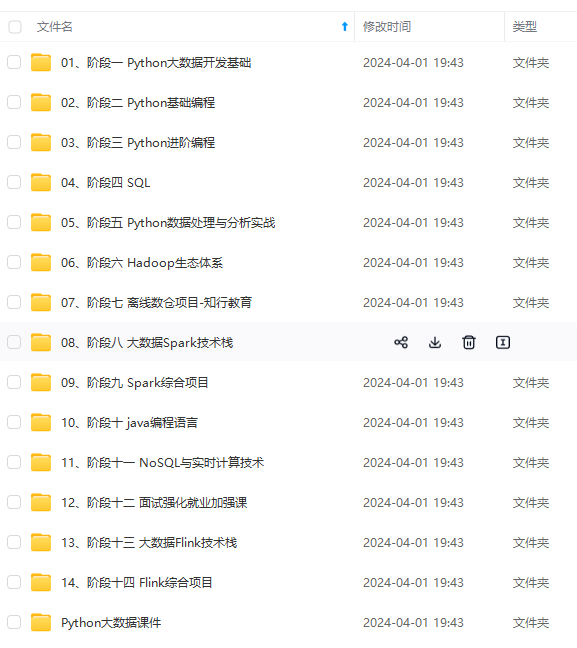

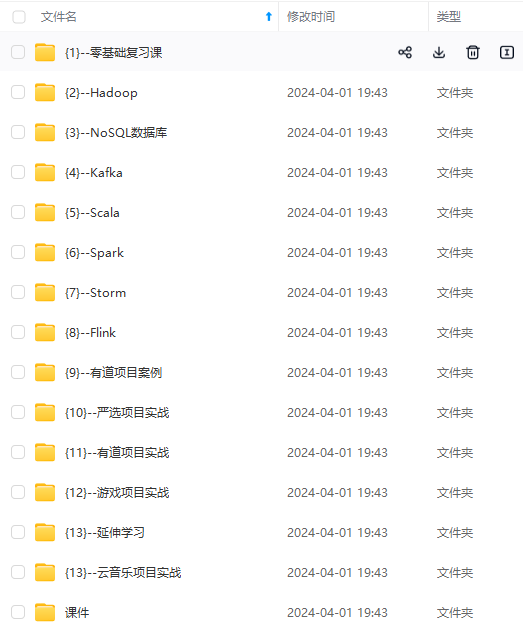

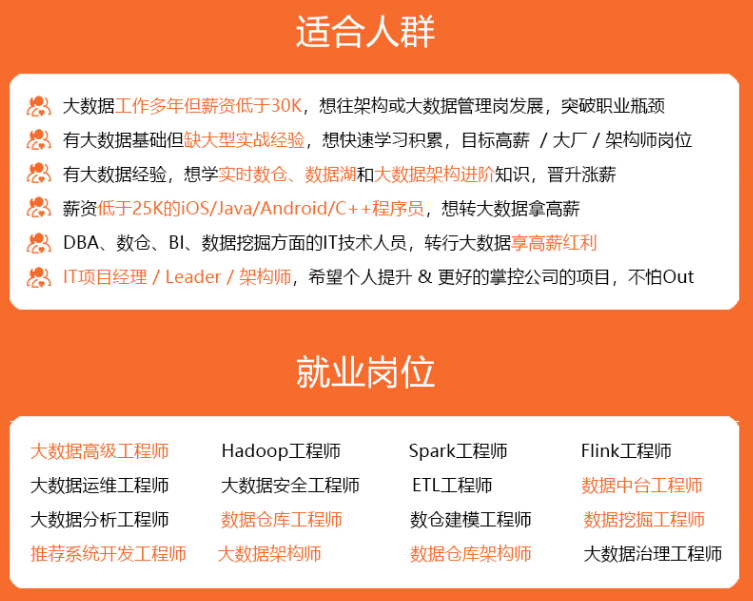

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

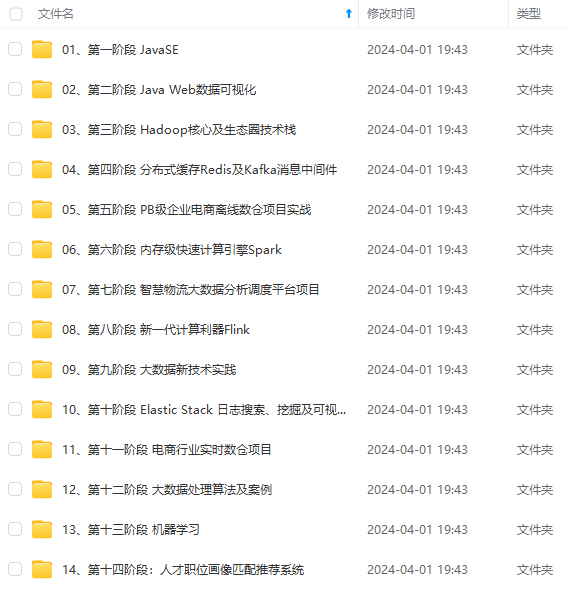

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

p/pids

4、backup-masters配置

启动hbase时会将配置的backup-masters节点作为备用HMaster

hadoop001

hadoop002

四、环境变量配置

1、HBase环境变量配置

[外链图片转存中…(img-njn6isrY-1715043783694)]

[外链图片转存中…(img-yzJsn0nt-1715043783695)]

[外链图片转存中…(img-QTkkvluh-1715043783695)]

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

1429

1429

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?