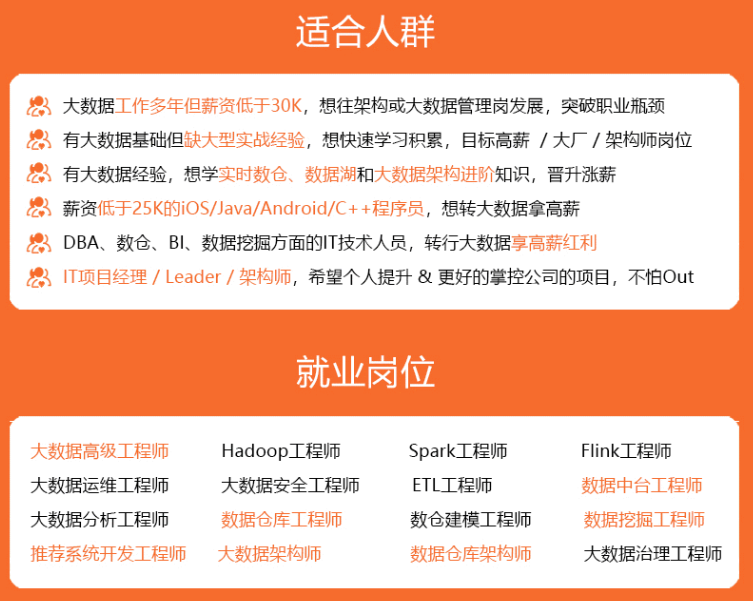

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

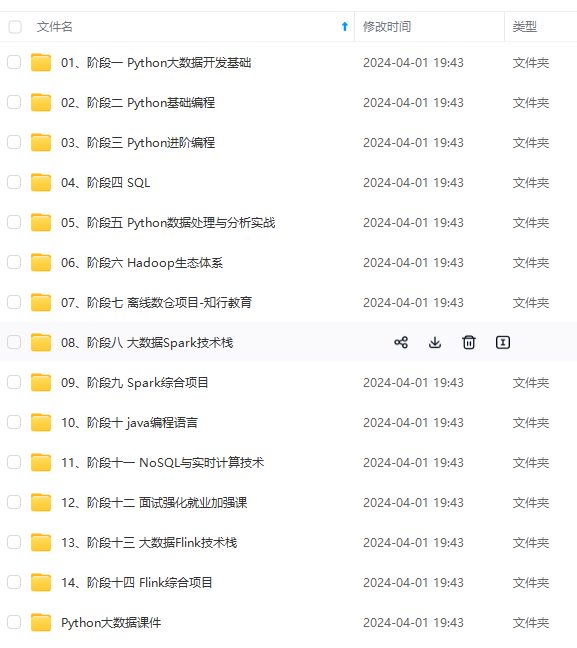

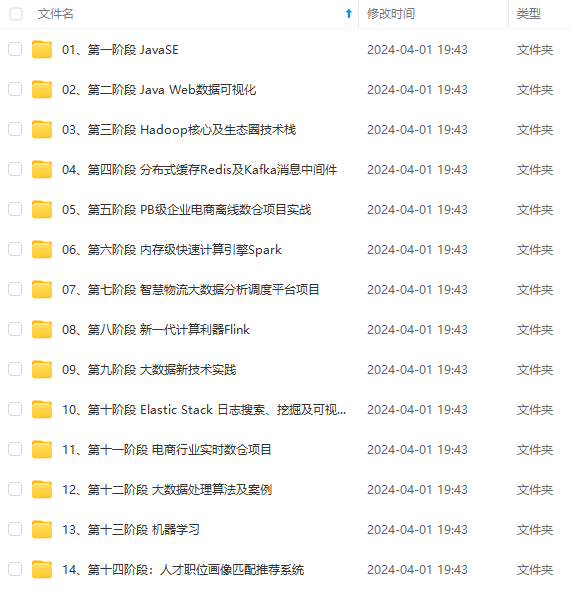

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

        public static final String broker_list = "ip(replace with your own):9092";
        public static final String topic = "test";

        public static void main(String[] args) {
            JProducer jproducer = new JProducer();
            jproducer.start();
        }

        @Override
        public void run() {
            try {
                producer();
            } catch (InterruptedException e) {
                throw new RuntimeException(e);
            }
        }

        /**
         * Produce records to Kafka in batches.
         */
        @Scheduled(initialDelayString = "${kf.flink.init}", fixedDelayString = "${kf.flink.fixRate}")
        private void producer() throws InterruptedException {
            log.info("Starting scheduled task");
            Properties props = config(); // Kafka connection settings
            Date date = new Date();
            SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
            String dateString = simpleDateFormat.format(date);
            // try-with-resources closes the producer exactly once, when the loop ends or sleep() is interrupted
            try (Producer<String, String> producer = new KafkaProducer<>(props)) {
                // "i < Integer.MAX_VALUE" lets the loop terminate; "i <= Integer.MAX_VALUE" is always true
                for (int i = 1; i < Integer.MAX_VALUE; i++) {
                    // The date value is quoted so that the payload is valid JSON
                    String json = "{\"id\":" + i + ",\"ip\":\"192.168.0." + i + "\",\"date\":\"" + dateString + "\"}";
                    String k = "record " + i + "=" + json;
                    sleep(300);
                    if (i % 10 == 0) {
                        sleep(1000); // extra pause after every 10th record
                    }
                    producer.send(new ProducerRecord<String, String>(topic, k, json));
                }
            }
        }

        /**
         * Kafka connection settings.
         *
         * @return the producer properties
         */
        private Properties config() {
            Properties props = new Properties();
            props.put("bootstrap.servers", broker_list);
            props.put("acks", "1");
            props.put("retries", 0);
            props.put("batch.size", 16384);
            props.put("linger.ms", 1);
            props.put("buffer.memory", 33554432);
            props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            return props;
        }
    }
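
The producer assembles each JSON payload by string concatenation, which makes quoting mistakes easy to miss. As a minimal sketch, the same record shape could be built in one small helper. `RecordJson` and its `build` method are hypothetical names that are not part of the original project, and the sketch assumes the `id`, `ip`, and `date` fields shown above, with `date` written as a quoted string.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

/** Hypothetical helper (not in the original project) that builds one record payload. */
final class RecordJson {
    // Same pattern the producer uses for its date field
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    private RecordJson() {
    }

    /** Builds {"id":..,"ip":"..","date":".."} with both string fields quoted. */
    static String build(int id, String ip, LocalDateTime time) {
        return "{\"id\":" + id
                + ",\"ip\":\"" + ip + "\""
                + ",\"date\":\"" + FORMAT.format(time) + "\"}";
    }

    public static void main(String[] args) {
        // prints {"id":1,"ip":"192.168.0.1","date":"2024-01-01 08:30:00"}
        System.out.println(build(1, "192.168.0.1", LocalDateTime.of(2024, 1, 1, 8, 30, 0)));
    }
}
```

This sketch does not escape special characters inside `ip`; for arbitrary input a JSON library would be the safer choice.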

#### 2.2 Create the Flink program

Flink.class

    import java.util.Properties;

    import org.apache.flink.api.common.functions.MapFunction;
    import org.apache.flink.api.common.serialization.SimpleStringSchema;
    import org.apache.flink.streaming.api.TimeCharacteristic;
    import org.apache.flink.streaming.api.datastream.DataStream;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

    public class Flink {
        private static final String topic = "test";
        public static final String broker_list = "ip(replace with your own):9092";

        public static void main(String[] args) {
            final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            env.enableCheckpointing(1000); // checkpoint every 1000 ms
            env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

            // Read data from the Kafka topic, starting from the earliest offset
            DataStream<String> transaction = env.addSource(
                    new FlinkKafkaConsumer<String>(topic, new SimpleStringSchema(), props()).setStartFromEarliest());

            // Pass each record through unchanged and print it
            transaction.rebalance().map(new MapFunction<String, String>() {
                private static final long serialVersionUID = 1L;

                @Override
                public String map(String value) {
                    System.out.println("ok");
                    return value;
                }
            }).print();

            try {
                env.execute();
            } catch (Exception ex) {
                ex.printStackTrace();
            }
        }

        public static Properties props() {
            Properties props = new Properties();
            props.put("bootstrap.servers", broker_list);
            // ZooKeeper address; only the legacy Kafka 0.8 connector reads this property
            props.put("zookeeper.connect", "192.168.47.130:2182");
            props.put("group.id", "kv_flink");
            props.put("enable.auto.commit", "true");
            props.put("auto.commit.interval.ms", "1000");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            return props;
        }
    }
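
The job switches to event time, but the code shown does not read a timestamp from the records. As a rough sketch, the `date` field written by the producer could be turned into epoch milliseconds like this; a timestamp assigner would need such a value. `RecordTimestamp` and `epochMillis` are hypothetical names, the UTC time zone is an assumption, and the regular expression stands in for a proper JSON parser. It accepts the date with or without surrounding quotes.

```java
import java.time.LocalDateTime;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper (not in the original project) that reads the record's date field. */
final class RecordTimestamp {
    // Matches "date":"yyyy-MM-dd HH:mm:ss", with the surrounding quotes optional
    private static final Pattern DATE_FIELD =
            Pattern.compile("\"date\":\"?(\\d{4}-\\d{2}-\\d{2} \\d{2}:\\d{2}:\\d{2})\"?");
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    private RecordTimestamp() {
    }

    /** Returns the date field as epoch milliseconds, interpreting it as UTC (an assumption). */
    static long epochMillis(String json) {
        Matcher matcher = DATE_FIELD.matcher(json);
        if (!matcher.find()) {
            throw new IllegalArgumentException("no date field in record: " + json);
        }
        return LocalDateTime.parse(matcher.group(1), FORMAT)
                .toInstant(ZoneOffset.UTC)
                .toEpochMilli();
    }

    public static void main(String[] args) {
        String record = "{\"id\":1,\"ip\":\"192.168.0.1\",\"date\":\"1970-01-01 00:00:01\"}";
        System.out.println(epochMillis(record)); // prints 1000
    }
}
```

Because the producer formats the date once before its loop, every record carries the same timestamp, so this value only becomes useful for windowing if the producer stamps each record individually.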

### 3. Package the project and upload it to HDFS

    hdfs dfs -put xxx.jar /root/xxx.jar

### 4. Run the Flink program
