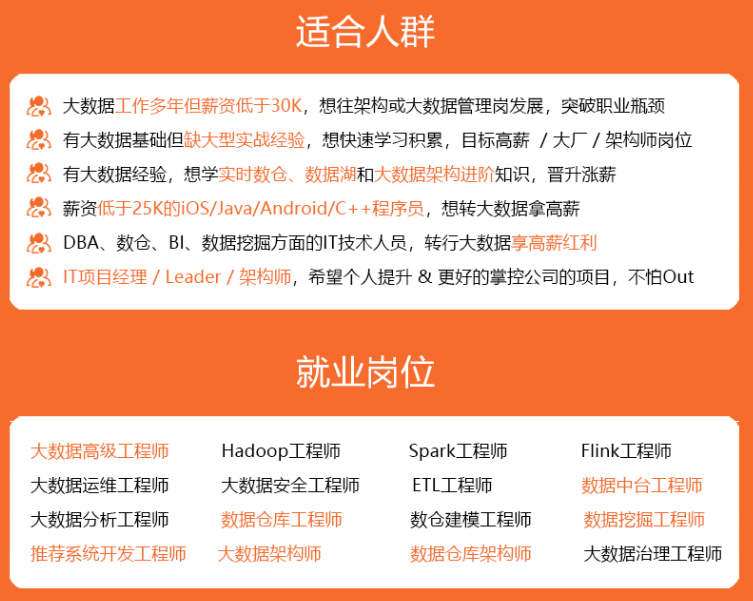

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

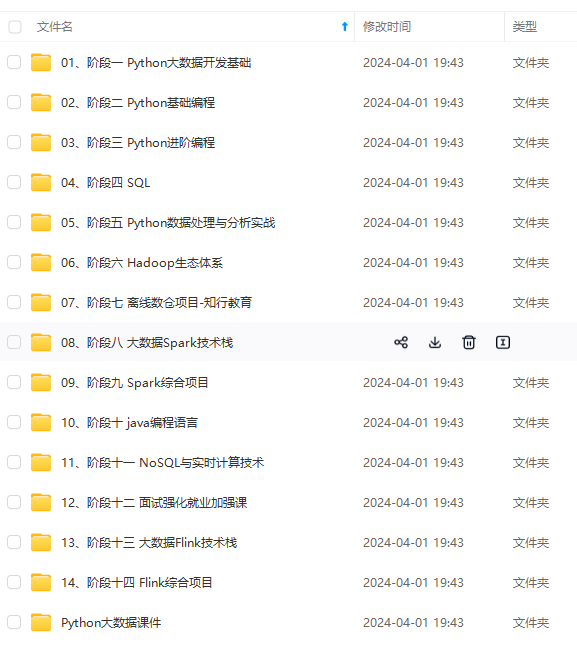

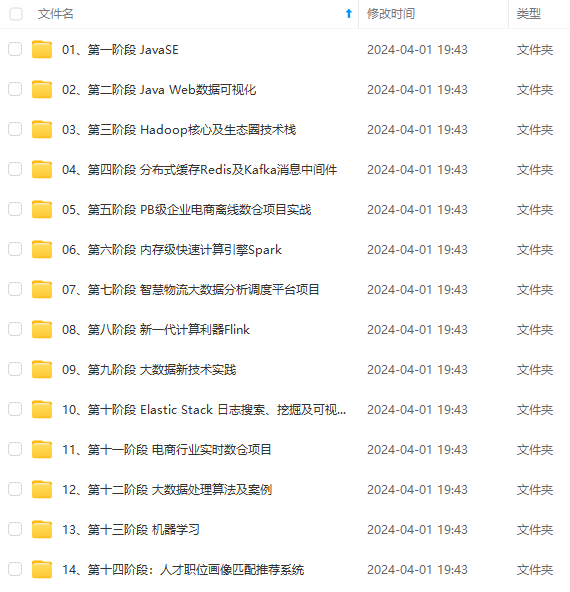

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

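<?xml version="1.0"?>

<property>

<name>yarn.log.server.url</name>

<value>https://bigdata0.example.com:19890/jobhistory/logs</value>

</property>

<property>

<name>yarn.acl.enable</name>

<value>false</value>

<description>Enable ACLs? Defaults to false.</description>

</property>

<property>

<name>yarn.admin.acl</name>

<value>*</value>

<description>ACL to set admins on the cluster. ACLs are of the form comma-separated-users, a space, then comma-separated-groups. Defaults to the special value of * which means anyone. The special value of just a space means no one has access.</description>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

<description>Configuration to enable or disable log aggregation</description>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/tmp/logs</value>

<description>HDFS directory where application logs are moved when an application completes (used when log aggregation is enabled).</description>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>bigdata0.example.com</value>

</property>

<property>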

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

<description></description>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/data/nm-local</value>

<description>Comma-separated list of paths on the local filesystem where

intermediate data is written.

</description>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/data/nm-log</value>

<description>Comma-separated list of paths on the local filesystem where logs are

written.

</description>

</property>

<property>

<name>yarn.nodemanager.log.retain-seconds</name>

<value>10800</value>

<description>Default time (in seconds) to retain log files on the NodeManager. Only

applicable if log-aggregation is disabled.

</description>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

<description>Shuffle service that needs to be set for Map Reduce applications.

</description>

</property>

<!-- To enable SSL -->

<property>

<name>yarn.http.policy</name>

<value>HTTPS_ONLY</value>

</property>

<property>

<name>yarn.nodemanager.linux-container-executor.group</name>

<value>hadoop</value>

</property>

<!-- Enabling this may require compiling ContainerExecutor on the local machine. -->

<!-- The environment can be checked with the following commands: -->

<!-- hadoop checknative -a -->

<!-- ldd $HADOOP_HOME/bin/container-executor -->

<!--

<property>

<name>yarn.nodemanager.container-executor.class</name>

<value>org.apache.hadoop.yarn.server.nodemanager.LinuxContainerExecutor</value>

</property>

<property>

<name>yarn.nodemanager.linux-container-executor.path</name>

<value>/bigdata/hadoop-3.3.2/bin/container-executor</value>

</property>

-->

<!-- Kerberos-related configuration follows -->

<!-- ResourceManager security configs -->

<property>

<name>yarn.resourcemanager.principal</name>

<value>rm/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>yarn.resourcemanager.keytab</name>

<value>/etc/security/keytabs/rm.service.keytab</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.delegation-token-auth-filter.enabled</name>

<value>true</value>

</property>

<!-- NodeManager security configs -->

<property>

<name>yarn.nodemanager.principal</name>

<value>nm/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>yarn.nodemanager.keytab</name>

<value>/etc/security/keytabs/nm.service.keytab</value>

</property>

<!-- TimeLine security configs -->

<property>

<name>yarn.timeline-service.principal</name>

<value>tl/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>yarn.timeline-service.keytab</name>

<value>/etc/security/keytabs/tl.service.keytab</value>

</property>

<property>

<name>yarn.timeline-service.http-authentication.type</name>

<value>kerberos</value>

</property>

<property>

<name>yarn.timeline-service.http-authentication.kerberos.principal</name>

<value>HTTP/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>yarn.timeline-service.http-authentication.kerberos.keytab</name>

<value>/etc/security/keytabs/spnego.service.keytab</value>

</property>

###### mapred-site.xml

<property>

<name>mapreduce.jobhistory.http.policy</name>

<value>HTTPS_ONLY</value>

</property>

<!-- Kerberos-related configuration follows -->

<property>

<name>mapreduce.jobhistory.keytab</name>

<value>/etc/security/keytabs/jhs.service.keytab</value>

</property>

<property>

<name>mapreduce.jobhistory.principal</name>

<value>jhs/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.spnego-principal</name>

<value>HTTP/_HOST@EXAMPLE.COM</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.spnego-keytab-file</name>

<value>/etc/security/keytabs/spnego.service.keytab</value>

</property>

##### Create the HTTPS certificate

openssl req -new -x509 -keyout bd_ca_key -out bd_ca_cert -days 9999 -subj '/C=CN/ST=beijing/L=beijing/O=test/OU=test/CN=test'

scp -r /etc/security/cdh.https bigdata1:/etc/security/

scp -r /etc/security/cdh.https bigdata2:/etc/security/

[root@bigdata0 cdh.https]# openssl req -new -x509 -keyout bd_ca_key -out bd_ca_cert -days 9999 -subj '/C=CN/ST=beijing/L=beijing/O=test/OU=test/CN=test'

Generating a 2048 bit RSA private key

…+++

…+++

writing new private key to 'bd_ca_key'

Enter PEM pass phrase:

Verifying - Enter PEM pass phrase:

[root@bigdata0 cdh.https]#

[root@bigdata0 cdh.https]# ll

total 8

-rw-r--r--. 1 root root 1298 Oct 5 17:14 bd_ca_cert

-rw-r--r--. 1 root root 1834 Oct 5 17:14 bd_ca_key

[root@bigdata0 cdh.https]# scp -r /etc/security/cdh.https bigdata1:/etc/security/

bd_ca_key 100% 1834 913.9KB/s 00:00

bd_ca_cert 100% 1298 1.3MB/s 00:00

[root@bigdata0 cdh.https]# scp -r /etc/security/cdh.https bigdata2:/etc/security/

bd_ca_key 100% 1834 1.7MB/s 00:00

bd_ca_cert 100% 1298 1.3MB/s 00:00

[root@bigdata0 cdh.https]#

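Run the following on each of the three nodes in turn.

cd /etc/security/cdh.https

Enter 123456 wherever a password is requested (chosen for convenience; substitute your own if you have password requirements).

1. Enter and confirm the password: 123456. On success, this command produces the keystore file.

keytool -keystore keystore -alias localhost -validity 9999 -genkey -keyalg RSA -keysize 2048 -dname "CN=test, OU=test, O=test, L=beijing, ST=beijing, C=CN"

2. Enter and confirm the password: 123456. When asked whether to trust the certificate, enter yes. On success, this command produces the truststore file.

keytool -keystore truststore -alias CARoot -import -file bd_ca_cert

3. Enter and confirm the password: 123456. On success, this command produces the cert file.

keytool -certreq -alias localhost -keystore keystore -file cert

4. On success, this command produces the cert_signed file.

openssl x509 -req -CA bd_ca_cert -CAkey bd_ca_key -in cert -out cert_signed -days 9999 -CAcreateserial -passin pass:123456

5. Enter and confirm the password: 123456. When asked whether to trust the certificate, enter yes. On success, this command updates the keystore file.

keytool -keystore keystore -alias CARoot -import -file bd_ca_cert

6. Enter and confirm the password: 123456.

keytool -keystore keystore -alias localhost -import -file cert_signed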

The final result:

-rw-r--r-- 1 root root 1294 Sep 26 11:31 bd_ca_cert

-rw-r--r-- 1 root root 17 Sep 26 11:36 bd_ca_cert.srl

-rw-r--r-- 1 root root 1834 Sep 26 11:31 bd_ca_key

-rw-r--r-- 1 root root 1081 Sep 26 11:36 cert

-rw-r--r-- 1 root root 1176 Sep 26 11:36 cert_signed

-rw-r--r-- 1 root root 4055 Sep 26 11:37 keystore

-rw-r--r-- 1 root root 978 Sep 26 11:35 truststore
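The six interactive steps can also be scripted. The following is a minimal sketch rather than the procedure the author ran: it assumes the same file names, aliases, and demo password 123456, supplies the password through keytool's `-storepass` and `-keypass` options, and answers the trust prompt with `-noprompt`. The names `PASS`, `DRY_RUN`, `run`, and `make_node_keystore` are hypothetical and introduced only for illustration. By default the commands are printed instead of executed.

```shell
#!/usr/bin/env bash
# Sketch: non-interactive equivalent of steps 1-6.
# Meant to be run inside /etc/security/cdh.https on each node.
# PASS, DRY_RUN, run and make_node_keystore are illustrative names (assumptions).
PASS="${PASS:-123456}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = execute them

run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '%s\n' "$*"    # print the command line instead of running it
  else
    "$@"
  fi
}

make_node_keystore() {
  # 1. Generate the node's key pair inside the keystore.
  run keytool -keystore keystore -alias localhost -validity 9999 -genkey \
      -keyalg RSA -keysize 2048 -storepass "$PASS" -keypass "$PASS" \
      -dname "CN=test, OU=test, O=test, L=beijing, ST=beijing, C=CN"
  # 2. Import the CA certificate into the truststore.
  run keytool -keystore truststore -alias CARoot -import -file bd_ca_cert \
      -storepass "$PASS" -noprompt
  # 3. Create a certificate signing request for the node key.
  run keytool -certreq -alias localhost -keystore keystore -file cert \
      -storepass "$PASS"
  # 4. Sign the request with the CA key.
  run openssl x509 -req -CA bd_ca_cert -CAkey bd_ca_key -in cert \
      -out cert_signed -days 9999 -CAcreateserial -passin "pass:$PASS"
  # 5. Import the CA certificate into the keystore.
  run keytool -keystore keystore -alias CARoot -import -file bd_ca_cert \
      -storepass "$PASS" -noprompt
  # 6. Import the signed node certificate into the keystore.
  run keytool -keystore keystore -alias localhost -import -file cert_signed \
      -storepass "$PASS" -noprompt
}
```

Calling `make_node_keystore` prints the six commands for review; `DRY_RUN=0 make_node_keystore` executes them. Passing passwords on the command line exposes them in the process list, so this form only suits a test cluster like the one described here.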

Configure ssl-server.xml and ssl-client.xml

ssl-server.xml

<property>

<name>ssl.server.truststore.password</name>

<value>123456</value>

<description>Optional. Default value is "".

</description>

</property>

<property>

<name>ssl.server.truststore.type</name>

<value>jks</value>

<description>Optional. The keystore file format, default value is "jks".

</description>

</property>

<property>

<name>ssl.server.truststore.reload.interval</name>

<value>10000</value>

<description>Truststore reload check interval, in milliseconds.

Default value is 10000 (10 seconds).

</description>

</property>

<property>

<name>ssl.server.keystore.location</name>

<value>/etc/security/cdh.https/keystore</value>

<description>Keystore to be used by NN and DN. Must be specified.

</description>

</property>

<property>

<name>ssl.server.keystore.password</name>

<value>123456</value>

<description>Must be specified.

</description>

</property>

<property>

<name>ssl.server.keystore.keypassword</name>

<value>123456</value>

<description>Must be specified.

</description>

</property>

<property>

<name>ssl.server.keystore.type</name>

<value>jks</value>

<description>Optional. The keystore file format, default value is "jks".

</description>

</property>

<property>

<name>ssl.server.exclude.cipher.list</name>

<value>TLS_ECDHE_RSA_WITH_RC4_128_SHA,SSL_DHE_RSA_EXPORT_WITH_DES40_CBC_SHA,

SSL_RSA_WITH_DES_CBC_SHA,SSL_DHE_RSA_WITH_DES_CBC_SHA,

SSL_RSA_EXPORT_WITH_RC4_40_MD5,SSL_RSA_EXPORT_WITH_DES40_CBC_SHA,

SSL_RSA_WITH_RC4_128_MD5</value>

<description>Optional. The weak security cipher suites that you want excluded

from SSL communication.</description>

</property>

ssl-client.xml

<property>

<name>ssl.client.truststore.password</name>

<value>123456</value>

<description>Optional. Default value is "".

</description>

</property>

<property>

<name>ssl.client.truststore.type</name>

<value>jks</value>

<description>Optional. The keystore file format, default value is "jks".

</description>

</property>

<property>

<name>ssl.client.truststore.reload.interval</name>

<value>10000</value>

<description>Truststore reload check interval, in milliseconds.

Default value is 10000 (10 seconds).

</description>

</property>

<property>

<name>ssl.client.keystore.location</name>

<value>/etc/security/cdh.https/keystore</value>

<description>Keystore to be used by clients like distcp. Must be

specified.

</description>

</property>

<property>

<name>ssl.client.keystore.password</name>

<value>123456</value>

<description>Optional. Default value is "".

</description>

</property>

<property>

<name>ssl.client.keystore.keypassword</name>

<value>123456</value>

<description>Optional. Default value is "".

</description>

</property>

<property>

<name>ssl.client.keystore.type</name>

<value>jks</value>

<description>Optional. The keystore file format, default value is "jks".

</description>

</property>

Distribute the configuration

scp $HADOOP_HOME/etc/hadoop/* bigdata1:/bigdata/hadoop-3.3.2/etc/hadoop/

scp $HADOOP_HOME/etc/hadoop/* bigdata2:/bigdata/hadoop-3.3.2/etc/hadoop/

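### 4. Start the services

P.S. Once the NameNode has been initialized, you can simply run sbin/start-all.sh.

### 5. Some errors

#### 5.1 Kerberos-related errors

##### Cannot reach the realm

Cannot contact any KDC for realm

This usually means the network is unreachable.

1. The firewall may not have been turned off: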

[root@bigdata0 bigdata]# kinit krbtest/admin@EXAMPLE.COM

kinit: Cannot contact any KDC for realm 'EXAMPLE.COM' while getting initial credentials

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]# systemctl stop firewalld.service

[root@bigdata0 bigdata]# systemctl disable firewalld.service

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@bigdata0 bigdata]# kinit krbtest/admin@EXAMPLE.COM

Password for krbtest/admin@EXAMPLE.COM:

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]#

[root@bigdata0 bigdata]# klist

Ticket cache: FILE:/tmp/krb5cc_0

Default principal: krbtest/admin@EXAMPLE.COM

Valid starting Expires Service principal

2023-10-05T16:19:58 2023-10-06T16:19:58 krbtgt/EXAMPLE.COM@EXAMPLE.COM

[root@bigdata0 bigdata]#

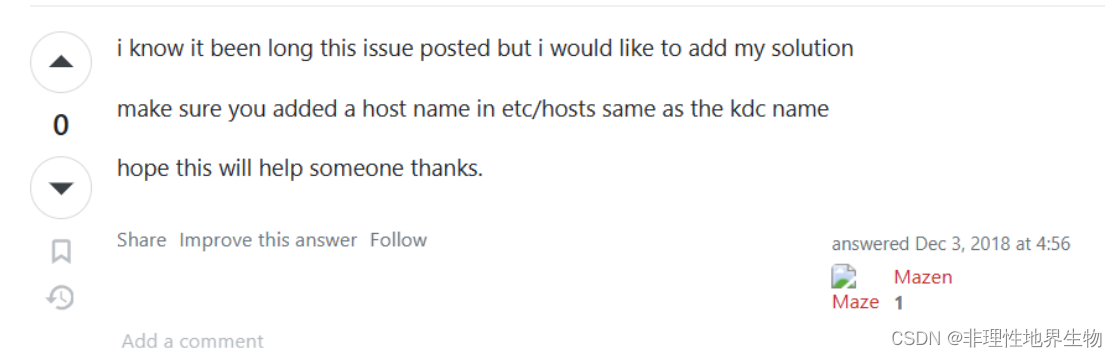

2. The KDC may not be configured in hosts:

Edit /etc/hosts and add a mapping from the KDC's IP address to its hostname:

{kdc ip} {kdc}

192.168.50.10 bigdata3.example.com
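To confirm that the mapping is in place, the hosts file can be checked directly. The helper below is an illustrative sketch; the function name `hosts_has_entry` is hypothetical, and it takes the file path as an argument so that it can be tried on a copy first.

```shell
# hosts_has_entry FILE IP HOSTNAME
# Succeeds when FILE has a non-comment line whose first field is IP
# and which lists HOSTNAME among the names that follow.
hosts_has_entry() {
  awk -v ip="$2" -v host="$3" '
    /^[[:space:]]*#/ { next }            # skip comment lines
    $1 == ip {
      for (i = 2; i <= NF; i++) {
        if ($i ~ /^#/) break             # rest of the line is a comment
        if ($i == host) found = 1
      }
    }
    END { exit(found ? 0 : 1) }
  ' "$1"
}
```

For example, `hosts_has_entry /etc/hosts 192.168.50.10 bigdata3.example.com && echo "KDC mapping present"` prints the message only when the entry exists.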

<https://serverfault.com/questions/612869/kinit-cannot-contact-any-kdc-for-realm-ubuntu-while-getting-initial-credentia>

##### kinit resolves the wrong library dependency

kinit: relocation error: kinit: symbol krb5\_get\_init\_creds\_opt\_set\_pac\_request, version krb5\_3\_MIT not defined in file libkrb5.so.3 with link time reference

Running `ldd $(which kinit)` shows that kinit is linked against a libkrb5.so.3 that is not the one under /lib64. Running `export LD_LIBRARY_PATH=/lib64:${LD_LIBRARY_PATH}` fixes this.
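The check and the workaround can be sketched as follows. `prepend_lib64` is a hypothetical helper introduced for illustration; it only computes the new search path, so nothing changes until the value is exported.

```shell
# prepend_lib64 CURRENT_VALUE
# Print a library search path with /lib64 placed first.
# CURRENT_VALUE is the existing LD_LIBRARY_PATH and may be empty.
prepend_lib64() {
  if [ -n "$1" ]; then
    printf '/lib64:%s\n' "$1"
  else
    printf '/lib64\n'
  fi
}

# Show which libkrb5 kinit currently resolves to (skipped if a tool is missing).
if command -v kinit >/dev/null 2>&1 && command -v ldd >/dev/null 2>&1; then
  ldd "$(command -v kinit)" | grep libkrb5 || true
fi
```

To apply it in the current shell, run `export LD_LIBRARY_PATH="$(prepend_lib64 "${LD_LIBRARY_PATH:-}")"`. Handling the empty case separately avoids a trailing colon, which the dynamic loader would treat as the current directory.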

<https://community.broadcom.com/communities/community-home/digestviewer/viewthread?MID=797304#:~:text=To%20solve%20this%20error%2C%20the%20only%20workaround%20found,to%20use%20system%20libraries%20%24%20export%20LD_LIBRARY_PATH%3D%2Flib64%3A%24%20%7BLD_LIBRARY_PATH%7D>

---

##### Invalid ccache ID

kinit: Invalid Uid in persistent keyring name while getting default ccache

Change the value of default\_ccache\_name in /etc/krb5.conf so that it points to a local file.
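As an illustration, the sketch below rewrites an existing `default_ccache_name` line to a file-based cache. The target value `FILE:/tmp/krb5cc_%{uid}` is an assumption (it is consistent with the `FILE:/tmp/krb5cc_0` cache shown in the earlier `klist` output for uid 0), and `set_file_ccache` is a hypothetical helper name. If the file has no such line, it is left unchanged.

```shell
# set_file_ccache FILE
# Replace the value of any default_ccache_name line in FILE with a FILE: cache.
# The result is written to a temporary file first, so a failed sed run
# leaves FILE untouched.
set_file_ccache() {
  tmp="$(mktemp)" || return 1
  sed 's|^\([[:space:]]*default_ccache_name[[:space:]]*=\).*|\1 FILE:/tmp/krb5cc_%{uid}|' \
    "$1" > "$tmp" && cat "$tmp" > "$1"
  status=$?
  rm -f "$tmp"
  return "$status"
}
```

It is safer to try this on a copy first, for example `cp /etc/krb5.conf /tmp/krb5.conf.test && set_file_ccache /tmp/krb5.conf.test`, and compare the result before touching the real file.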

<https://unix.stackexchange.com/questions/712857/kinit-invalid-uid-in-persistent-keyring-name-while-getting-default-ccache-while#:~:text=If%20running%20unset%20KRB5CCNAME%20did%20not%20resolve%20it%2C,of%20%22default_ccache_name%22%20in%20%2Fetc%2Fkrb5.conf%20to%20a%20local%20file%3A>

##### KrbException: Message stream modified (41)

This is reportedly related to the JRE version; removing the `renew_lifetime = xxx` line from the krb5.conf configuration file resolves it.
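A cautious way to make that change is to generate a filtered copy and review it before replacing the original. The sketch below assumes the setting appears on its own line; `drop_renew_lifetime` is a hypothetical helper name.

```shell
# drop_renew_lifetime FILE
# Print FILE without any renew_lifetime setting; FILE itself is not modified.
drop_renew_lifetime() {
  grep -v '^[[:space:]]*renew_lifetime[[:space:]]*=' "$1"
}
```

For example, `drop_renew_lifetime /etc/krb5.conf > /tmp/krb5.conf.new` writes the filtered version to a new file that can be compared with `diff` before it is copied into place.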

#### 5.2 Hadoop 相关错误

哪个节点的哪个服务有错误,可以在对应日志(或manager日志)中查看是否有异常信息。比如一些 so 文件找不到,不上即可。

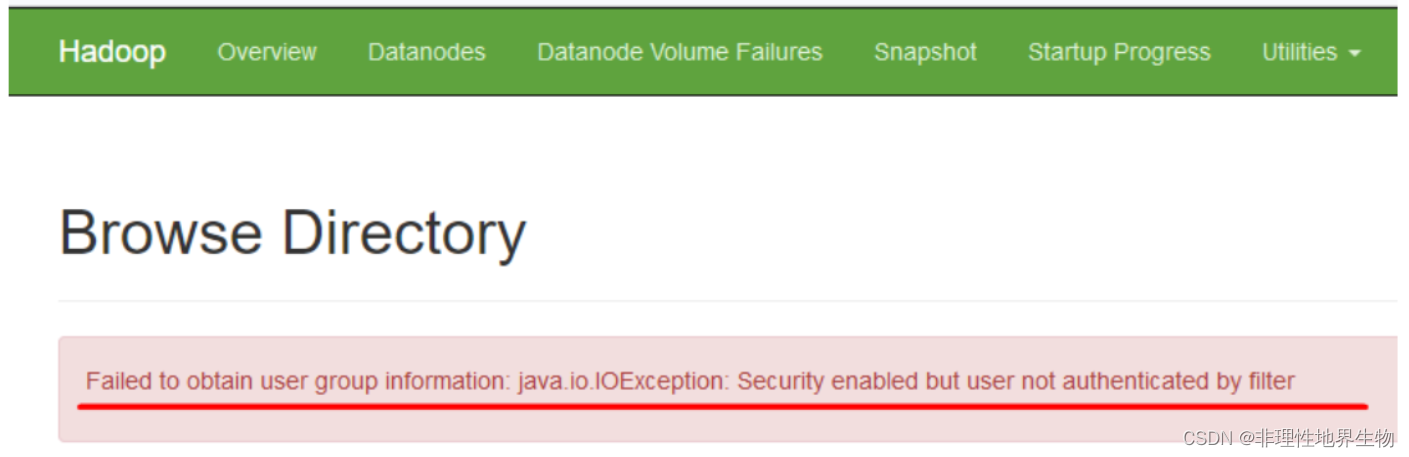

hdfs web 上报错 Failed to obtain user group information: java.io.IOException: Security enabled but user not authenticated by filter

<https://issues.apache.org/jira/browse/HDFS-16441>

### 6. Submit Spark on YARN

1. Submit directly:

Command:

bin/spark-submit \
--master yarn \
--deploy-mode cluster \
--class org.apache.spark.examples.SparkPi \
examples/jars/spark-examples_2.12-3.3.0.jar

[root@bigdata3 spark-3.3.0-bin-hadoop3]# bin/spark-submit --master yarn --deploy-mode cluster --class org.apache.spark.examples.SparkPi examples/jars/spark-examples\_2.12-3.3.0.jar

23/10/12 13:53:05 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/10/12 13:53:05 INFO DefaultNoHARMFailoverProxyProvider: Connecting to ResourceManager at bigdata0.example.com/192.168.50.7:8032

23/10/12 13:53:06 WARN Client: Exception encountered while connecting to the server

org.apache.hadoop.security.AccessControlException: Client cannot authenticate via:[TOKEN, KERBEROS]

at org.apache.hadoop.security.SaslRpcClient.selectSaslClient(SaslRpcClient.java:179)

at org.apache.hadoop.security.SaslRpcClient.saslConnect(SaslRpcClient.java:392)

at org.apache.hadoop.ipc.Client$Connection.setupSaslConnection(Client.java:623)

at org.apache.hadoop.ipc.Client$Connection.access$2300(Client.java:414)

at org.apache.hadoop.ipc.Client$Connection$2.run(Client.java:843)

at org.apache.hadoop.ipc.Client$Connection$2.run(Client.java:839)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1878)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:839)

at org.apache.hadoop.ipc.Client$Connection.access$3800(Client.java:414)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1677)

at org.apache.hadoop.ipc.Client.call(Client.java:1502)

at org.apache.hadoop.ipc.Client.call(Client.java:1455)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:242)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:129)

at com.sun.proxy.$Proxy24.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.api.impl.pb.client.ApplicationClientProtocolPBClientImpl.getNewApplication(ApplicationClientProtocolPBClientImpl.java:286)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359)

at com.sun.proxy.$Proxy25.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.getNewApplication(YarnClientImpl.java:284)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.createApplication(YarnClientImpl.java:292)

at org.apache.spark.deploy.yarn.Client.submitApplication(Client.scala:200)

at org.apache.spark.deploy.yarn.Client.run(Client.scala:1327)

at org.apache.spark.deploy.yarn.YarnClusterApplication.start(Client.scala:1764)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:958)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1046)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1055)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Exception in thread "main" java.io.IOException: DestHost:destPort bigdata0.example.com:8032 , LocalHost:localPort bigdata3.example.com/192.168.50.10:0. Failed on local exception: java.io.IOException: org.apache.hadoop.security.AccessControlException: Client cannot authenticate via:[TOKEN, KERBEROS]

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:913)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:888)

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1616)

at org.apache.hadoop.ipc.Client.call(Client.java:1558)

at org.apache.hadoop.ipc.Client.call(Client.java:1455)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:242)

at org.apache.hadoop.ipc.ProtobufRpcEngine2$Invoker.invoke(ProtobufRpcEngine2.java:129)

at com.sun.proxy.$Proxy24.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.api.impl.pb.client.ApplicationClientProtocolPBClientImpl.getNewApplication(ApplicationClientProtocolPBClientImpl.java:286)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359)

at com.sun.proxy.$Proxy25.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.getNewApplication(YarnClientImpl.java:284)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.createApplication(YarnClientImpl.java:292)

at org.apache.spark.deploy.yarn.Client.submitApplication(Client.scala:200)

at org.apache.spark.deploy.yarn.Client.run(Client.scala:1327)

at org.apache.spark.deploy.yarn.YarnClusterApplication.start(Client.scala:1764)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:958)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1046)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1055)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

Caused by: java.io.IOException: org.apache.hadoop.security.AccessControlException: Client cannot authenticate via:[TOKEN, KERBEROS]

at org.apache.hadoop.ipc.Client$Connection$1.run(Client.java:798)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1878)

at org.apache.hadoop.ipc.Client$Connection.handleSaslConnectionFailure(Client.java:752)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:856)

at org.apache.hadoop.ipc.Client$Connection.access$3800(Client.java:414)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1677)

at org.apache.hadoop.ipc.Client.call(Client.java:1502)

... 27 more

Caused by: org.apache.hadoop.security.AccessControlException: Client cannot authenticate via:[TOKEN, KERBEROS]

at org.apache.hadoop.security.SaslRpcClient.selectSaslClient(SaslRpcClient.java:179)

at org.apache.hadoop.security.SaslRpcClient.saslConnect(SaslRpcClient.java:392)

at org.apache.hadoop.ipc.Client$Connection.setupSaslConnection(Client.java:623)

at org.apache.hadoop.ipc.Client$Connection.access$2300(Client.java:414)

at org.apache.hadoop.ipc.Client$Connection$2.run(Client.java:843)

at org.apache.hadoop.ipc.Client$Connection$2.run(Client.java:839)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1878)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:839)

... 30 more

23/10/12 13:53:06 INFO ShutdownHookManager: Shutdown hook called

23/10/12 13:53:06 INFO ShutdownHookManager: Deleting directory /tmp/spark-b9b19f53-4839-4cf0-82d0-065f4956f830

2. Submit with the spark.kerberos.keytab and spark.kerberos.principal parameters added.

On the submitting machine, create a Kerberos principal to submit with:

[root@bigdata3 spark-3.3.0-bin-hadoop3]# kadmin.local

Authenticating as principal root/admin@EXAMPLE.COM with password.

kadmin.local:

kadmin.local: addprinc -randkey nn/bigdata3.example.com@EXAMPLE.COM

WARNING: no policy specified for nn/bigdata3.example.com@EXAMPLE.COM; defaulting to no policy

Principal "nn/bigdata3.example.com@EXAMPLE.COM" created.

kadmin.local: ktadd -k /etc/security/keytabs/nn.service.keytab nn/bigdata3.example.com@EXAMPLE.COM

Submit command:

bin/spark-submit \
--master yarn \
--deploy-mode cluster \
--conf spark.kerberos.keytab=/etc/security/keytabs/nn.service.keytab \
--conf spark.kerberos.principal=nn/bigdata3.example.com@EXAMPLE.COM \
--class org.apache.spark.examples.SparkPi \
examples/jars/spark-examples_2.12-3.3.0.jar

Result:

[root@bigdata3 spark-3.3.0-bin-hadoop3]# bin/spark-submit --master yarn --deploy-mode cluster --conf spark.kerberos.keytab=/etc/security/keytabs/nn.service.keytab --conf spark.kerberos.principal=nn/bigdata3.example.com@EXAMPLE.COM --class org.apache.spark.examples.SparkPi examples/jars/spark-examples\_2.12-3.3.0.jar

23/10/12 13:53:30 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/10/12 13:53:31 INFO Client: Kerberos credentials: principal = nn/bigdata3.example.com@EXAMPLE.COM, keytab = /etc/security/keytabs/nn.service.keytab

23/10/12 13:53:31 INFO DefaultNoHARMFailoverProxyProvider: Connecting to ResourceManager at bigdata0.example.com/192.168.50.7:8032

23/10/12 13:53:32 INFO Configuration: resource-types.xml not found

23/10/12 13:53:32 INFO ResourceUtils: Unable to find 'resource-types.xml'.

23/10/12 13:53:32 INFO Client: Verifying our application has not requested more than the maximum memory capability of the cluster (8192 MB per container)

23/10/12 13:53:32 INFO Client: Will allocate AM container, with 1408 MB memory including 384 MB overhead

23/10/12 13:53:32 INFO Client: Setting up container launch context for our AM

23/10/12 13:53:32 INFO Client: Setting up the launch environment for our AM container

23/10/12 13:53:32 INFO Client: Preparing resources for our AM container

23/10/12 13:53:32 INFO Client: To enable the AM to login from keytab, credentials are being copied over to the AM via the YARN Secure Distributed Cache.

23/10/12 13:53:32 INFO Client: Uploading resource file:/etc/security/keytabs/nn.service.keytab -> hdfs://bigdata0.example.com:8020/user/hdfs/.sparkStaging/application_1697089814250_0001/nn.service.keytab

23/10/12 13:53:34 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

23/10/12 13:53:44 INFO Client: Uploading resource file:/tmp/spark-83cea039-6e72-4958-b097-4537a008e792/__spark_libs__1911433509069709237.zip -> hdfs://bigdata0.example.com:8020/user/hdfs/.sparkStaging/application_1697089814250_0001/__spark_libs__1911433509069709237.zip

23/10/12 13:54:16 INFO Client: Uploading resource file:/bigdata/spark-3.3.0-bin-hadoop3/examples/jars/spark-examples_2.12-3.3.0.jar -> hdfs://bigdata0.example.com:8020/user/hdfs/.sparkStaging/application_1697089814250_0001/spark-examples_2.12-3.3.0.jar

23/10/12 13:54:16 INFO Client: Uploading resource file:/tmp/spark-83cea039-6e72-4958-b097-4537a008e792/__spark_conf__8266642042189366964.zip -> hdfs://bigdata0.example.com:8020/user/hdfs/.sparkStaging/application_1697089814250_0001/__spark_conf__.zip

23/10/12 13:54:17 INFO SecurityManager: Changing view acls to: root,hdfs

23/10/12 13:54:17 INFO SecurityManager: Changing modify acls to: root,hdfs

23/10/12 13:54:17 INFO SecurityManager: Changing view acls groups to:

23/10/12 13:54:17 INFO SecurityManager: Changing modify acls groups to:

23/10/12 13:54:17 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(root, hdfs); groups with view permissions: Set(); users with modify permissions: Set(root, hdfs); groups with modify permissions: Set()

23/10/12 13:54:17 INFO HadoopDelegationTokenManager: Attempting to login to KDC using principal: nn/bigdata3.example.com@EXAMPLE.COM

23/10/12 13:54:17 INFO HadoopDelegationTokenManager: Successfully logged into KDC.

23/10/12 13:54:17 INFO HiveConf: Found configuration file null

23/10/12 13:54:17 INFO HadoopFSDelegationTokenProvider: getting token for: DFS[DFSClient[clientName=DFSClient_NONMAPREDUCE_1847188333_1, ugi=nn/bigdata3.example.com@EXAMPLE.COM (auth:KERBEROS)]] with renewer rm/bigdata0.example.com@EXAMPLE.COM

23/10/12 13:54:17 INFO DFSClient: Created token for hdfs: HDFS_DELEGATION_TOKEN owner=nn/bigdata3.example.com@EXAMPLE.COM, renewer=yarn, realUser=, issueDate=1697090057412, maxDate=1697694857412, sequenceNumber=7, masterKeyId=8 on 192.168.50.7:8020

23/10/12 13:54:17 INFO HadoopFSDelegationTokenProvider: getting token for: DFS[DFSClient[clientName=DFSClient_NONMAPREDUCE_1847188333_1, ugi=nn/bigdata3.example.com@EXAMPLE.COM (auth:KERBEROS)]] with renewer nn/bigdata3.example.com@EXAMPLE.COM

23/10/12 13:54:17 INFO DFSClient: Created token for hdfs: HDFS_DELEGATION_TOKEN owner=nn/bigdata3.example.com@EXAMPLE.COM, renewer=hdfs, realUser=, issueDate=1697090057438, maxDate=1697694857438, sequenceNumber=8, masterKeyId=8 on 192.168.50.7:8020

23/10/12 13:54:17 INFO HadoopFSDelegationTokenProvider: Renewal interval is 86400032 for token HDFS_DELEGATION_TOKEN

23/10/12 13:54:19 INFO Client: Submitting application application_1697089814250_0001 to ResourceManager

23/10/12 13:54:21 INFO YarnClientImpl: Submitted application application_1697089814250_0001

23/10/12 13:54:22 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:22 INFO Client:

client token: Token { kind: YARN_CLIENT_TOKEN, service: }

diagnostics: N/A

ApplicationMaster host: N/A

ApplicationMaster RPC port: -1

queue: root.hdfs

start time: 1697090060093

final status: UNDEFINED

tracking URL: https://bigdata0.example.com:8090/proxy/application_1697089814250_0001/

user: hdfs

23/10/12 13:54:23 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:24 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:25 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:26 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:27 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:28 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:29 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:30 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:31 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:32 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:33 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:34 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:35 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:37 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:38 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:39 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:40 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:41 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:42 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:43 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:45 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:46 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:47 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:48 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:50 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:51 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:52 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:53 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:54 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:55 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:56 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:57 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:58 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:54:59 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:01 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:02 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:03 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:04 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:05 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:06 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:07 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:08 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:09 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:10 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:11 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:12 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:13 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:14 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:15 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:16 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:17 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:18 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:19 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:20 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:22 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:23 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:24 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:25 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:26 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:27 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:28 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)

23/10/12 13:55:29 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:29 INFO Client:

client token: Token { kind: YARN_CLIENT_TOKEN, service: }

diagnostics: N/A

ApplicationMaster host: bigdata0.example.com

ApplicationMaster RPC port: 35967

queue: root.hdfs

start time: 1697090060093

final status: UNDEFINED

tracking URL: https://bigdata0.example.com:8090/proxy/application_1697089814250_0001/

user: hdfs

23/10/12 13:55:30 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:31 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:32 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:33 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:34 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:35 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:36 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:37 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:38 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:39 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:40 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:41 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:42 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)

23/10/12 13:55:43 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)
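
In the portion of the client log shown here, the application is reported as ACCEPTED from 13:54:42 and first reported as RUNNING at 13:55:29. The following is a minimal sketch, not part of the original setup, for measuring that wait from saved client output. It assumes the timestamp layout seen above (`yy/MM/dd HH:mm:ss`); the helper names `first_seen_states` and `seconds_until_running` are hypothetical, introduced only for illustration.

```python
import re
from datetime import datetime

# Matches Spark YARN client lines such as:
# 23/10/12 13:54:42 INFO Client: Application report for application_..._0001 (state: ACCEPTED)
REPORT_RE = re.compile(
    r"^(\d{2}/\d{2}/\d{2} \d{2}:\d{2}:\d{2}) INFO Client: "
    r"Application report for (application_\d+_\d+) \(state: (\w+)\)"
)


def first_seen_states(lines):
    """Map each reported state to the timestamp of its first report."""
    first = {}
    for line in lines:
        match = REPORT_RE.match(line.strip())
        if not match:
            continue  # skip blank lines and the multi-line report details
        timestamp = datetime.strptime(match.group(1), "%y/%m/%d %H:%M:%S")
        first.setdefault(match.group(3), timestamp)
    return first


def seconds_until_running(lines):
    """Seconds between the first ACCEPTED and first RUNNING report, or None."""
    first = first_seen_states(lines)
    if "ACCEPTED" not in first or "RUNNING" not in first:
        return None
    return (first["RUNNING"] - first["ACCEPTED"]).total_seconds()


# Three lines copied from the log above.
sample = [
    "23/10/12 13:54:42 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)",
    "23/10/12 13:55:28 INFO Client: Application report for application_1697089814250_0001 (state: ACCEPTED)",
    "23/10/12 13:55:29 INFO Client: Application report for application_1697089814250_0001 (state: RUNNING)",
]
print(seconds_until_running(sample))  # 47.0
```

A long stretch in ACCEPTED usually means the application is waiting for the scheduler to allocate the ApplicationMaster container, so queue capacity and NodeManager resources are reasonable things to check first.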

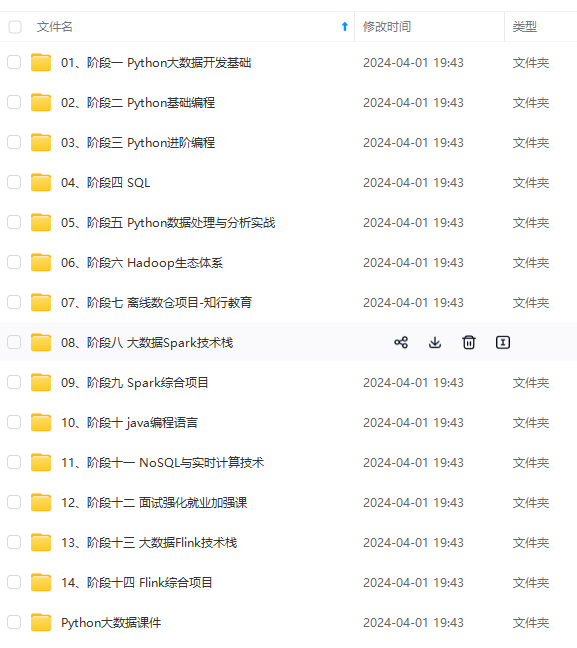

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新


[外链图片转存中…(img-WRysuk6V-1715034011697)]

[外链图片转存中…(img-qJfGzlLz-1715034011697)]

[外链图片转存中…(img-kE7V7ygH-1715034011698)]

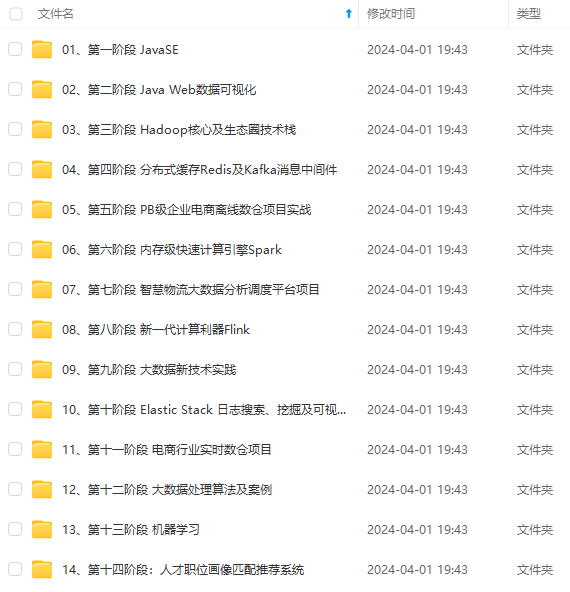

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上大数据知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

5534

5534

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?