But what good is a deep learning REST API server unless we know its capabilities and limitations?

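In stress_test.py, we stress test our server. We accomplish this by kicking off 500 concurrent threads that send our image to the server for classification in parallel. I recommend running it against localhost on the server first, and then running it from an off-site client.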

========================================================================

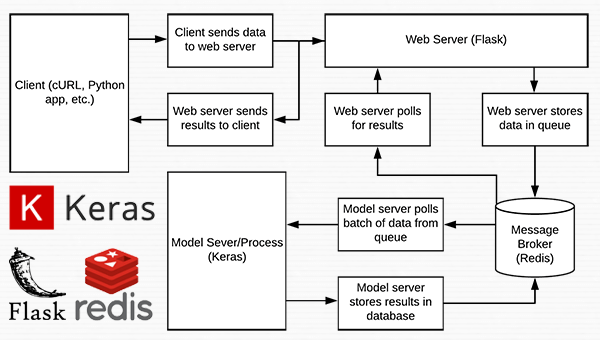

Figure 1: Data flow diagram of a deep learning REST API server built with Python, Keras, Redis, and Flask.

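Nearly every line of code used in this project comes from our previous post on building a scalable deep learning REST API. The only change is that we've moved some of the code into separate files to facilitate scalability in a production environment.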
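To make the data flow in Figure 1 concrete, here is a minimal in-process sketch of the same request/response protocol. It is an illustration under stated assumptions, not the project's code: a `collections.deque` stands in for the Redis list named by `IMAGE_QUEUE`, a plain `dict` stands in for Redis key/value storage, and the function names `web_server_enqueue`, `model_server_step`, and `web_server_poll` are hypothetical.

```python
import json
import uuid
from collections import deque

# Hypothetical in-memory stand-ins for the two Redis structures the design uses
queue = deque()   # plays the role of the Redis list IMAGE_QUEUE
results = {}      # plays the role of Redis string keys (db.set / db.get)

def web_server_enqueue(image_payload):
    """Web server side: tag the request with a UUID and push it on the queue."""
    k = str(uuid.uuid4())
    queue.append(json.dumps({"id": k, "image": image_payload}))
    return k

def model_server_step(batch_size, classify):
    """Model server side: take up to batch_size jobs, classify, store by ID."""
    # Like LRANGE IMAGE_QUEUE 0 batch_size-1: peek at the head of the queue
    jobs = [json.loads(item) for item in list(queue)[:batch_size]]
    for job in jobs:
        # Like db.set(imageID, ...): results are keyed by the request's ID
        results[job["id"]] = json.dumps(classify(job["image"]))
    # Like LTRIM IMAGE_QUEUE n -1: drop the processed jobs from the head
    for _ in jobs:
        queue.popleft()
    return len(jobs)

def web_server_poll(k):
    """Web server side: fetch and delete the result once it exists."""
    output = results.pop(k, None)
    return None if output is None else json.loads(output)
```

For example, if three requests are enqueued and one model-server step runs with a batch size of 2, only the first two IDs have results waiting; the third is picked up on the next step, while the web server keeps polling.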

================================================================

```python
# initialize Redis connection settings
REDIS_HOST = "localhost"
REDIS_PORT = 6379
REDIS_DB = 0

# initialize constants used to control image spatial dimensions and
# data type
IMAGE_WIDTH = 224
IMAGE_HEIGHT = 224
IMAGE_CHANS = 3
IMAGE_DTYPE = "float32"

# initialize constants used for server queuing
IMAGE_QUEUE = "image_queue"
BATCH_SIZE = 32
SERVER_SLEEP = 0.25
CLIENT_SLEEP = 0.25
```

In settings.py you can change the parameters for the server connection, the image dimensions and data type, and server queuing.
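Because every queued request carries a full preprocessed image, these settings directly determine how much data passes through Redis. A back-of-the-envelope calculation, assuming 4 bytes per `float32` value and ignoring the small JSON wrapper and UUID that run_web_server.py adds:

```python
# Values copied from settings.py
IMAGE_WIDTH = 224
IMAGE_HEIGHT = 224
IMAGE_CHANS = 3
BYTES_PER_FLOAT32 = 4  # because IMAGE_DTYPE = "float32"
BATCH_SIZE = 32

def raw_image_bytes(w=IMAGE_WIDTH, h=IMAGE_HEIGHT, c=IMAGE_CHANS,
                    itemsize=BYTES_PER_FLOAT32):
    """Size of one preprocessed image as a raw NumPy buffer, in bytes."""
    return w * h * c * itemsize

def base64_length(n_bytes):
    """Length of base64 text for n_bytes: 4 chars per 3-byte group, padded."""
    return 4 * ((n_bytes + 2) // 3)

raw = raw_image_bytes()
encoded = base64_length(raw)
print(raw, encoded, BATCH_SIZE * encoded)
# 602112 802816 25690112
```

So each request stores roughly 0.8 MB of base64 text, and a full batch of 32 is about 25.7 MB; the figure scales with BATCH_SIZE and with the number of pixels per image.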

```python
# import the necessary packages
import numpy as np
import base64
import sys

def base64_encode_image(a):
    # base64 encode the input NumPy array
    return base64.b64encode(a).decode("utf-8")

def base64_decode_image(a, dtype, shape):
    # if this is Python 3, we need the extra step of encoding the
    # serialized NumPy string as a byte object
    if sys.version_info.major == 3:
        a = bytes(a, encoding="utf-8")

    # convert the string to a NumPy array using the supplied data
    # type and target shape (b64decode replaces base64.decodestring,
    # which was removed in Python 3.9)
    a = np.frombuffer(base64.b64decode(a), dtype=dtype)
    a = a.reshape(shape)

    # return the decoded image
    return a
```

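The helpers.py file contains two functions: one for base64 encoding and one for decoding.

Encoding is necessary so that we can serialize and store our images in Redis. Likewise, decoding is necessary so that the model server can deserialize each image back into a NumPy array before classification.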
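The base64 text produced by `b64encode` carries no shape or dtype information, which is why the decoder must be told `IMAGE_DTYPE` and the target shape. The round trip below is a minimal, Python 3 only sketch of the two helpers (decoding with `base64.b64decode`), using a tiny stand-in array instead of a real 224 x 224 x 3 image and a made-up request ID:

```python
import base64
import json

import numpy as np

def base64_encode_image(a):
    # Base64-encode the raw bytes of a C-contiguous NumPy array
    return base64.b64encode(a).decode("utf-8")

def base64_decode_image(a, dtype, shape):
    # Turn the base64 string back into an array of the given dtype/shape
    buf = base64.b64decode(a.encode("utf-8"))
    return np.frombuffer(buf, dtype=dtype).reshape(shape)

# Stand-in for a preprocessed (1, H, W, C) image; small dimensions keep
# the example fast (the real app uses 224 x 224 x 3)
image = np.arange(2 * 3 * 3, dtype="float32").reshape((1, 2, 3, 3))
image = image.copy(order="C")  # same step run_web_server.py performs

# Serialize exactly the way the web server queues a job, then decode it
payload = json.dumps({"id": "example-id", "image": base64_encode_image(image)})
job = json.loads(payload)
restored = base64_decode_image(job["image"], "float32", (1, 2, 3, 3))
print(np.array_equal(image, restored))  # True
```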

====================================================================

```python
# import the necessary packages
from tensorflow.keras.preprocessing.image import img_to_array
from tensorflow.keras.applications.resnet50 import preprocess_input
from PIL import Image
import numpy as np
import settings
import helpers
import flask
import redis
import uuid
import time
import json
import io

# initialize our Flask application and Redis server
app = flask.Flask(__name__)
db = redis.StrictRedis(host=settings.REDIS_HOST,
    port=settings.REDIS_PORT, db=settings.REDIS_DB)

def prepare_image(image, target):
    # if the image mode is not RGB, convert it
    if image.mode != "RGB":
        image = image.convert("RGB")

    # resize the input image and preprocess it
    image = image.resize(target)
    image = img_to_array(image)
    image = np.expand_dims(image, axis=0)
    image = preprocess_input(image)

    # return the processed image
    return image

@app.route("/")
def homepage():
    return "Welcome to the PyImageSearch Keras REST API!"

@app.route("/predict", methods=["POST"])
def predict():
    # initialize the data dictionary that will be returned from the
    # view
    data = {"success": False}

    # ensure an image was properly uploaded to our endpoint
    if flask.request.method == "POST":
        if flask.request.files.get("image"):
            # read the image in PIL format and prepare it for
            # classification
            image = flask.request.files["image"].read()
            image = Image.open(io.BytesIO(image))
            image = prepare_image(image,
                (settings.IMAGE_WIDTH, settings.IMAGE_HEIGHT))

            # ensure our NumPy array is C-contiguous as well,
            # otherwise we won't be able to serialize it
            image = image.copy(order="C")

            # generate an ID for the classification then add the
            # classification ID + image to the queue
            k = str(uuid.uuid4())
            image = helpers.base64_encode_image(image)
            d = {"id": k, "image": image}
            db.rpush(settings.IMAGE_QUEUE, json.dumps(d))

            # keep looping until our model server returns the output
            # predictions
            while True:
                # attempt to grab the output predictions
                output = db.get(k)

                # check to see if our model has classified the input
                # image
                if output is not None:
                    # add the output predictions to our data
                    # dictionary so we can return it to the client
                    output = output.decode("utf-8")
                    data["predictions"] = json.loads(output)

                    # delete the result from the database and break
                    # from the polling loop
                    db.delete(k)
                    break

                # sleep for a small amount to give the model a chance
                # to classify the input image
                time.sleep(settings.CLIENT_SLEEP)

            # indicate that the request was a success
            data["success"] = True

    # return the data dictionary as a JSON response
    return flask.jsonify(data)

# for debugging purposes, it's helpful to start the Flask testing
# server (don't use this for production)
if __name__ == "__main__":
    print("* Starting web service...")
    app.run()
```

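In run_web_server.py, you'll find predict, the function associated with our REST API's /predict endpoint.

The predict function pushes the encoded image into the Redis queue and then continually loops, polling until it obtains the prediction data from the model server. We then JSON-encode the data and instruct Flask to send it back to the client.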
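As written, the polling loop in predict has no upper bound: if the model server is down, the request waits forever. One possible hardening, not part of the original code, is to give up after a deadline. The sketch below assumes a hypothetical `poll_for_result` helper that accepts any `db.get`-like callable (returning `None` while the key is missing), so it can be tried without Redis:

```python
import time

def poll_for_result(get, key, sleep=0.25, timeout=10.0):
    """Call get(key) until it returns something other than None.

    get     -- any callable with db.get-like semantics
    sleep   -- pause between attempts, like settings.CLIENT_SLEEP
    timeout -- hypothetical upper bound on the total wait, in seconds
    Returns the stored value, or None if the deadline passes first.
    """
    deadline = time.monotonic() + timeout
    while True:
        output = get(key)
        if output is not None:
            return output
        # Give up instead of looping forever when no result arrives
        if time.monotonic() >= deadline:
            return None
        time.sleep(sleep)
```

Inside predict, you could call it as `poll_for_result(db.get, k, settings.CLIENT_SLEEP, timeout)`, where the timeout value is a new, hypothetical setting, and leave `data["success"]` as `False` when it returns `None`; the `db.delete(k)` cleanup stays the same.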

====================================================================

```python
# import the necessary packages
from tensorflow.keras.applications import ResNet50
from tensorflow.keras.applications.resnet50 import decode_predictions
import numpy as np
import settings
import helpers
import redis
import time
import json

# connect to Redis server
db = redis.StrictRedis(host=settings.REDIS_HOST,
    port=settings.REDIS_PORT, db=settings.REDIS_DB)

def classify_process():
    # load the pre-trained Keras model (here we are using a model
    # pre-trained on ImageNet and provided by Keras, but you can
    # substitute in your own networks just as easily)
    print("* Loading model...")
    model = ResNet50(weights="imagenet")
    print("* Model loaded")

    # continually poll for new images to classify
    while True:
        # attempt to grab a batch of images from the database, then
        # initialize the image IDs and batch of images themselves
        queue = db.lrange(settings.IMAGE_QUEUE, 0,
            settings.BATCH_SIZE - 1)
        imageIDs = []
        batch = None

        # loop over the queue
        for q in queue:
            # deserialize the object and obtain the input image
            q = json.loads(q.decode("utf-8"))
            image = helpers.base64_decode_image(q["image"],
                settings.IMAGE_DTYPE,
                (1, settings.IMAGE_HEIGHT, settings.IMAGE_WIDTH,
                    settings.IMAGE_CHANS))

            # check to see if the batch list is None
            if batch is None:
                batch = image

            # otherwise, stack the data
            else:
                batch = np.vstack([batch, image])

            # update the list of image IDs
            imageIDs.append(q["id"])

        # check to see if we need to process the batch
        if len(imageIDs) > 0:
            # classify the batch
            print("* Batch size: {}".format(batch.shape))
            preds = model.predict(batch)
            results = decode_predictions(preds)

            # loop over the image IDs and their corresponding set of
            # results from our model
            for (imageID, resultSet) in zip(imageIDs, results):
                # initialize the list of output predictions
                output = []

                # loop over the results and add them to the list of
                # output predictions
                for (imagenetID, label, prob) in resultSet:
                    r = {"label": label, "probability": float(prob)}
                    output.append(r)

                # store the output predictions in the database, using
                # the image ID as the key so we can fetch the results
                db.set(imageID, json.dumps(output))

            # remove the set of images from our queue
            db.ltrim(settings.IMAGE_QUEUE, len(imageIDs), -1)

        # sleep for a small amount
        time.sleep(settings.SERVER_SLEEP)

# if this is the main thread of execution start the model server
# process
if __name__ == "__main__":
    classify_process()
```

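The run_model_server.py file contains our classify_process function. This function loads our model and then runs predictions on batches of images. This process is best executed on a GPU, but a CPU can also be used.

In this example, for simplicity, we use ResNet50 pre-trained on the ImageNet dataset. You can modify classify_process to use your own deep learning models.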
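The batching in classify_process relies on two Redis list commands: `LRANGE key 0 BATCH_SIZE-1` reads up to BATCH_SIZE items from the head of the queue (both indices are inclusive), and `LTRIM key n -1` afterwards keeps only the items after the first n. The sketch below mimics both with Python list slicing and uses small 8 x 8 x 3 arrays as stand-ins for the decoded (1, 224, 224, 3) images; the 40 pending requests are invented for illustration:

```python
import numpy as np

BATCH_SIZE = 32           # settings.BATCH_SIZE
H, W, C = 8, 8, 3         # small stand-ins for 224 x 224 x 3

# Pretend 40 requests are waiting, each holding one (1, H, W, C) image
queue = [np.full((1, H, W, C), i, dtype="float32") for i in range(40)]

def take_batch(items, batch_size):
    """Mimic db.lrange(key, 0, batch_size - 1); Redis's stop index is inclusive."""
    return items[0:batch_size]

def stack_batch(images):
    """Stack (1, H, W, C) arrays into one (N, H, W, C) batch in a single call."""
    return np.vstack(images)

pending = take_batch(queue, BATCH_SIZE)
batch = stack_batch(pending)
print(batch.shape)        # (32, 8, 8, 3)

# Mimic db.ltrim(key, len(imageIDs), -1): keep everything after the batch
queue = queue[len(pending):]
print(len(queue))         # 8
```

This sketch calls np.vstack once on a list of arrays; the loop in classify_process instead re-stacks the growing batch on every iteration, which yields the same array but copies it repeatedly.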

==================================================================

```python
# add our app to the system path
import sys
sys.path.insert(0, "/var/www/html/keras-complete-rest-api")

# import the application and away we go...
from run_web_server import app as application
```

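The keras_rest_api_app.wsgi file is a new component of our deep learning REST API. This WSGI configuration file adds our server directory to the system path and imports the web app to kick off all the action. We point to this file in our Apache server settings file, /etc/apache2/sites-available/000-default.conf, which we'll cover later in this blog post.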
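Why does the WSGI file end with `app as application`? A Flask app object is itself a WSGI callable (it accepts `environ` and `start_response`), and Apache's mod_wsgi looks for a module-level callable named `application` by default. The sketch below uses only the standard library's `wsgiref` and a hand-written stand-in for the Flask app (not the real run_web_server.py) to show what the server does with that name:

```python
from wsgiref.util import setup_testing_defaults

# Stand-in for the Flask app: any WSGI callable has this signature
def app(environ, start_response):
    body = b"Welcome to the PyImageSearch Keras REST API!"
    start_response("200 OK", [("Content-Type", "text/plain"),
                              ("Content-Length", str(len(body)))])
    return [body]

# mod_wsgi looks up a module-level callable named "application" by default
application = app

# Call it the way a WSGI server would, with a minimal test environ
environ = {}
setup_testing_defaults(environ)
captured = {}

def start_response(status, headers):
    # Record what the app reports so we can inspect it afterwards
    captured["status"] = status
    captured["headers"] = headers

body = b"".join(application(environ, start_response))
print(captured["status"], body.decode())
# 200 OK Welcome to the PyImageSearch Keras REST API!
```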

===============================================================

```python
# import the necessary packages
from threading import Thread
import requests
import time

# initialize the Keras REST API endpoint URL along with the input
# image path
KERAS_REST_API_URL = "http://localhost/predict"
IMAGE_PATH = "jemma.png"

# initialize the number of requests for the stress test along with
# the sleep amount between requests
NUM_REQUESTS = 500
SLEEP_COUNT = 0.05

def call_predict_endpoint(n):
    # load the input image and construct the payload for the request
    with open(IMAGE_PATH, "rb") as f:
        image = f.read()
    payload = {"image": image}

    # submit the request
    r = requests.post(KERAS_REST_API_URL, files=payload).json()

    # ensure the request was successful
    if r["success"]:
        print("[INFO] thread {} OK".format(n))

    # otherwise, the request failed
    else:
        print("[INFO] thread {} FAILED".format(n))

# loop over the number of threads
for i in range(0, NUM_REQUESTS):
    # start a new thread to call the API
    t = Thread(target=call_predict_endpoint, args=(i,))
    t.daemon = True
    t.start()
    time.sleep(SLEEP_COUNT)

# insert a long sleep so we can wait until the server is finished
# processing the images
time.sleep(300)
```

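Our stress_test.py script will help us test the server and determine its limitations. I always recommend stress testing your deep learning REST API server so that you know if (and, more importantly, when) you need to add more GPUs, CPUs, or RAM. This script kicks off NUM_REQUESTS threads, each of which POSTs to the /predict endpoint; from there, it's up to our Flask web app to handle the load.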
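Printing OK or FAILED per thread and then sleeping a fixed 300 seconds makes the results hard to summarize. A possible variant, sketched here as an assumption rather than the original script, uses `concurrent.futures.ThreadPoolExecutor` to wait exactly as long as the requests take and to tally the outcomes. The hypothetical `run_stress_test` accepts any callable that returns `True` on success, so it can be exercised without a server:

```python
import time
from concurrent.futures import ThreadPoolExecutor

def _safe(call):
    """Wrap call so that any exception counts as a failed request."""
    def wrapped(n):
        try:
            return bool(call(n))
        except Exception:
            return False  # network errors, bad JSON, etc.
    return wrapped

def run_stress_test(call, num_requests=500, max_workers=500):
    """Run call(0..num_requests-1) concurrently and tally the results.

    Returns (successes, failures, elapsed_seconds).
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() blocks until every request has finished
        outcomes = list(pool.map(_safe(call), range(num_requests)))
    elapsed = time.perf_counter() - start
    ok = sum(outcomes)
    return ok, num_requests - ok, elapsed
```

Against the real API, `call` would wrap the `requests.post` call from stress_test.py and return `r["success"]`. Unlike the original loop, this version does not stagger thread start-up by SLEEP_COUNT, so the requests reach the server almost simultaneously.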

====================================================================

Redis is an efficient in-memory database that will act as our queue/message broker. Obtaining and installing Redis is very easy:

```shell
$ wget http://download.redis.io/redis-stable.tar.gz
$ tar xvzf redis-stable.tar.gz
$ cd redis-stable
$ make
$ sudo make install
```
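After installing, start the server (for example by running `redis-server`) and confirm it is reachable before launching the web and model servers. The sketch below is one dependency-free way to check: it sends the `PING` command in Redis's RESP wire format over a plain socket and looks for the `+PONG` reply. The function name `redis_ping` is hypothetical; its defaults match REDIS_HOST and REDIS_PORT in settings.py.

```python
import socket

def redis_ping(host="localhost", port=6379, timeout=1.0):
    """Return True if a Redis server at host:port answers PING with +PONG.

    The command is encoded as a RESP array of one bulk string ("PING"),
    so no Redis client library is needed.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(b"*1\r\n$4\r\nPING\r\n")
            reply = sock.recv(64)
    except OSError:
        # Connection refused, host unreachable, or timed out
        return False
    return reply.startswith(b"+PONG")
```

From the shell, `redis-cli ping` performs the same check.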

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

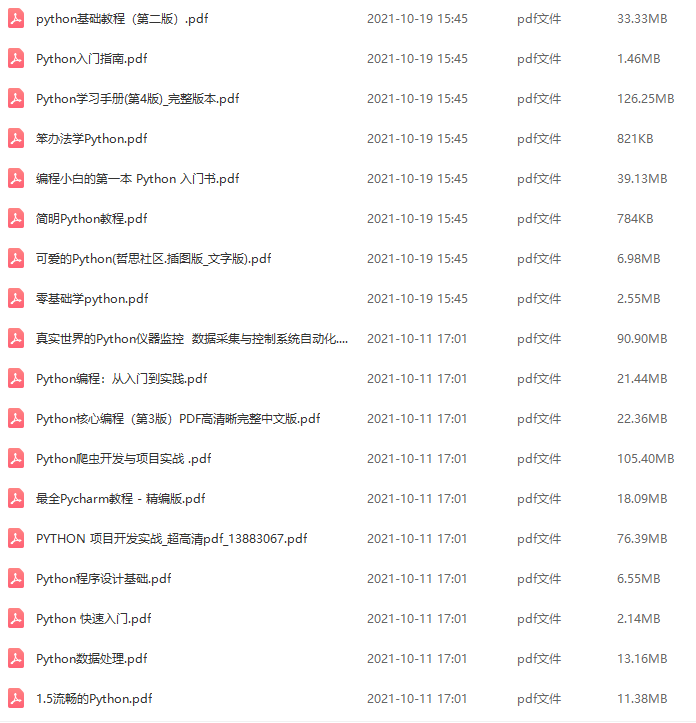

因此收集整理了一份《2024年Python开发全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

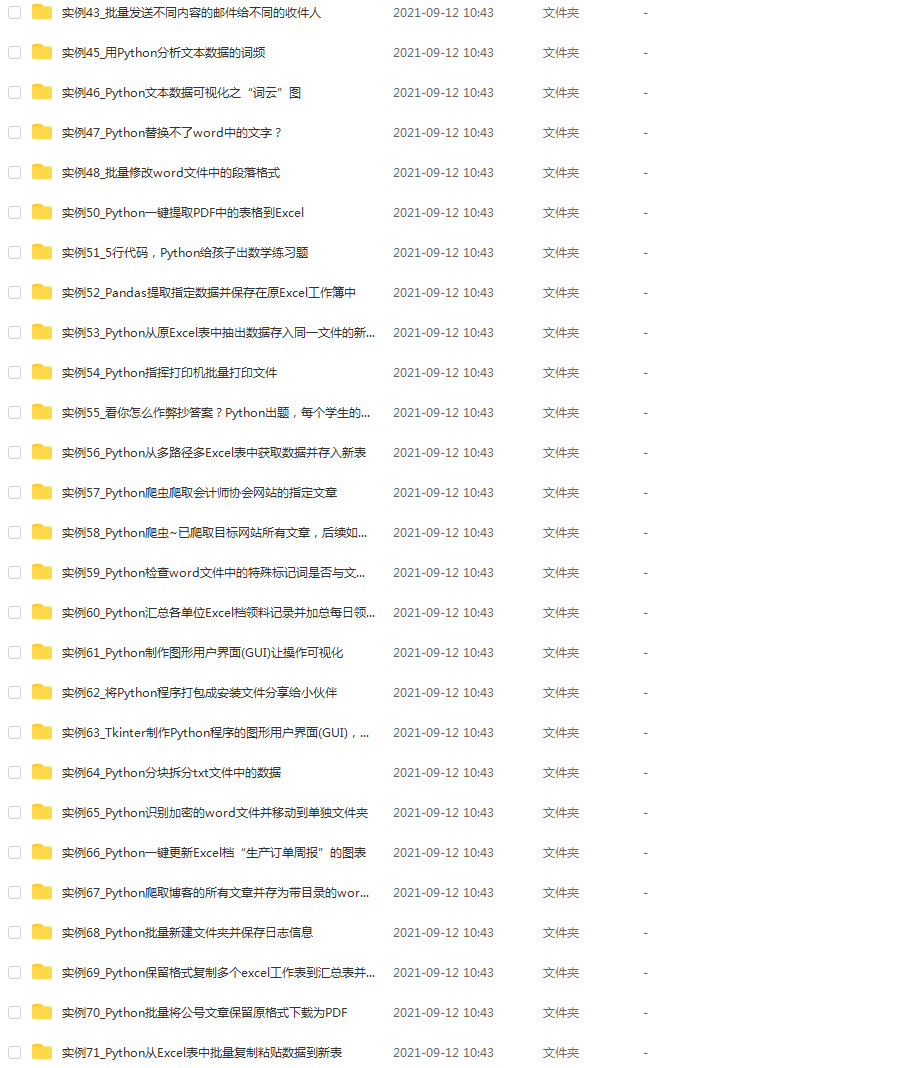

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上Python开发知识点,真正体系化!

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)

感谢每一个认真阅读我文章的人,看着粉丝一路的上涨和关注,礼尚往来总是要有的:

① 2000多本Python电子书(主流和经典的书籍应该都有了)

② Python标准库资料(最全中文版)

③ 项目源码(四五十个有趣且经典的练手项目及源码)

④ Python基础入门、爬虫、web开发、大数据分析方面的视频(适合小白学习)

⑤ Python学习路线图(告别不入流的学习)

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)**

[外链图片转存中…(img-VVuMBJtp-1712791515985)]

感谢每一个认真阅读我文章的人,看着粉丝一路的上涨和关注,礼尚往来总是要有的:

① 2000多本Python电子书(主流和经典的书籍应该都有了)

② Python标准库资料(最全中文版)

③ 项目源码(四五十个有趣且经典的练手项目及源码)

④ Python基础入门、爬虫、web开发、大数据分析方面的视频(适合小白学习)

⑤ Python学习路线图(告别不入流的学习)

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

[外链图片转存中…(img-sEtMXp5V-1712791515986)]

2057

2057

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?