先自我介绍一下,小编浙江大学毕业,去过华为、字节跳动等大厂,目前阿里P7

深知大多数程序员,想要提升技能,往往是自己摸索成长,但自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

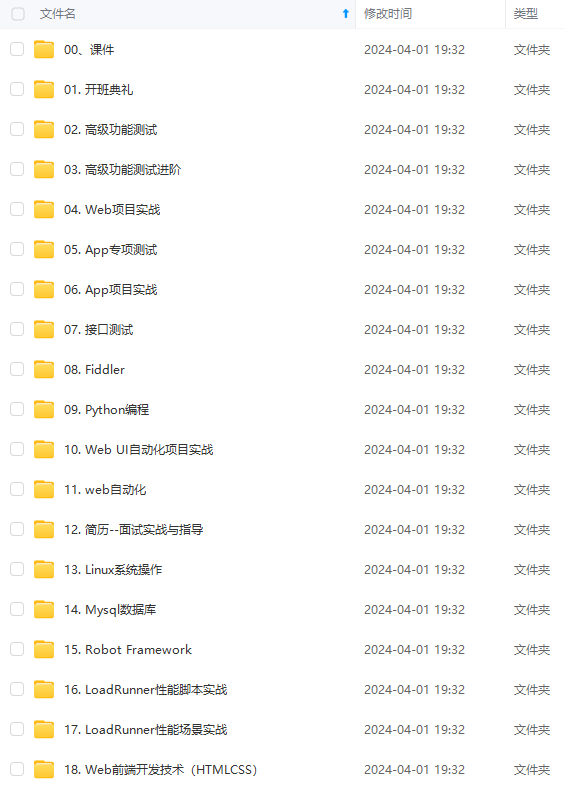

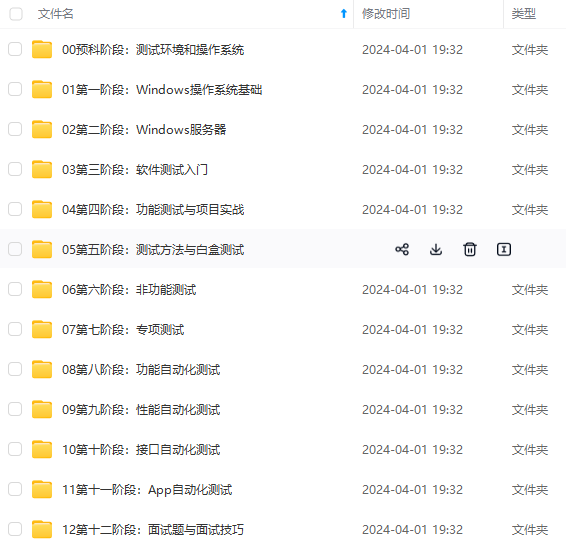

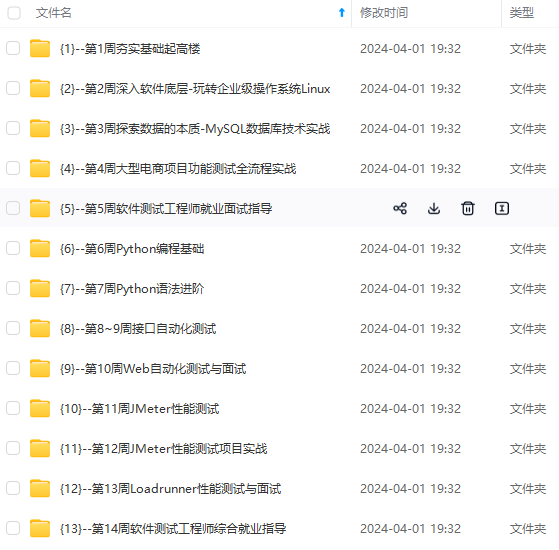

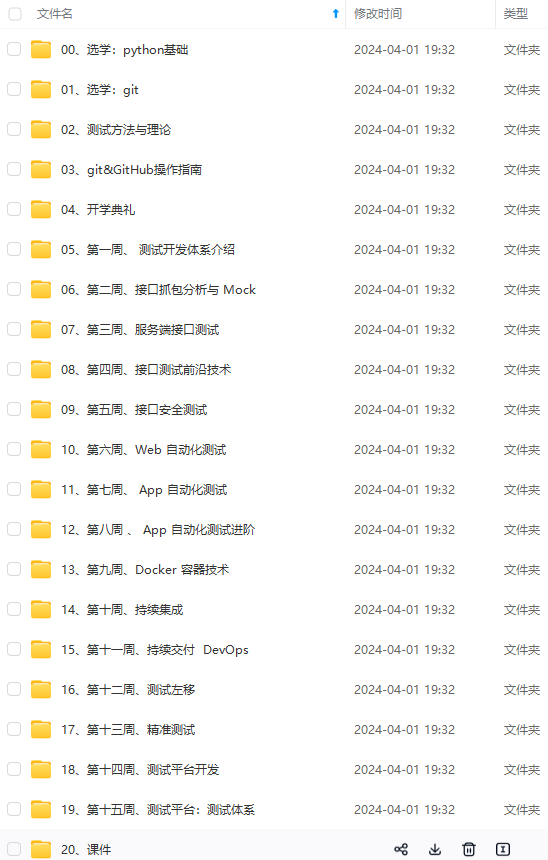

因此收集整理了一份《2024年最新软件测试全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上软件测试知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

如果你需要这些资料,可以添加V获取:vip1024b (备注软件测试)

正文

Import aliases into ES

elasticdump \
  --input=./alias.json \
  --output=http://es.com:9200 \
  --type=alias

Backup templates to a file

elasticdump \
  --input=http://es.com:9200/template-filter \
  --output=templates.json \
  --type=template

Import templates into ES

elasticdump \
  --input=./templates.json \
  --output=http://es.com:9200 \
  --type=template

Split files into multiple parts

elasticdump \
  --input=http://production.es.com:9200/my_index \
  --output=/data/my_index.json \
  --fileSize=10mb

Import data from S3 into ES (using s3urls)

elasticdump \
  --s3AccessKeyId "${access_key_id}" \
  --s3SecretAccessKey "${access_key_secret}" \
  --input "s3://${bucket_name}/${file_name}.json" \
  --output=http://production.es.com:9200/my_index

Export ES data to S3 (using s3urls)

elasticdump \
  --s3AccessKeyId "${access_key_id}" \
  --s3SecretAccessKey "${access_key_secret}" \
  --input=http://production.es.com:9200/my_index \
  --output "s3://${bucket_name}/${file_name}.json"

Import data from MINIO (s3 compatible) into ES (using s3urls)

elasticdump \
  --s3AccessKeyId "${access_key_id}" \
  --s3SecretAccessKey "${access_key_secret}" \
  --input "s3://${bucket_name}/${file_name}.json" \
  --output=http://production.es.com:9200/my_index \
  --s3ForcePathStyle true \
  --s3Endpoint https://production.minio.co

Export ES data to MINIO (s3 compatible) (using s3urls)

elasticdump \
  --s3AccessKeyId "${access_key_id}" \
  --s3SecretAccessKey "${access_key_secret}" \
  --input=http://production.es.com:9200/my_index \
  --output "s3://${bucket_name}/${file_name}.json" \
  --s3ForcePathStyle true \
  --s3Endpoint https://production.minio.co
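The S3 and MinIO commands above read the credentials and object location from shell variables. As a minimal sketch, assuming those variables are named exactly as in the examples, the helpers below check that the credentials are set and build the s3:// URL before elasticdump is called. The function names s3_creds_ready and s3_url are hypothetical and not part of elasticdump.

```shell
# s3_creds_ready: succeed only if both credential variables are set and non-empty.
# The variable names follow the examples above.
s3_creds_ready() {
  [ -n "${access_key_id:-}" ] && [ -n "${access_key_secret:-}" ]
}

# s3_url BUCKET FILE: build the s3:// URL in the form passed to --input / --output.
s3_url() {
  printf 's3://%s/%s.json\n' "$1" "$2"
}

# Example with made-up bucket and file names.
s3_url my-bucket my_index_dump
```

A script could call `s3_creds_ready || exit 1` first, so that a missing credential stops the run before any request is sent.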

Import data from CSV file into ES (using csvurls)

The csv:// prefix must be included so that the file is parsed as CSV; the general form is "csv://${file_path}.csv". --csvSkipRows skips parsed rows (this does not include the headers row), and the default --csvDelimiter is ','.

elasticdump \
  --input "csv:///data/cars.csv" \
  --output=http://production.es.com:9200/my_index \
  --csvSkipRows 1 \
  --csvDelimiter ";"

2.2 Using multielasticdump

Back up ES indices and all their types to the es_backup folder

multielasticdump \
  --direction=dump \
  --match='^.*$' \
  --input=http://production.es.com:9200 \
  --output=/tmp/es_backup

Only back up ES indices whose names end with the suffix -index (match regex).

Only the index data will be backed up. All other types are ignored.

NB: analyzer & alias types are ignored by default

multielasticdump \
  --direction=dump \
  --match='^.*-index$' \
  --input=http://production.es.com:9200 \
  --ignoreType='mapping,settings,template' \
  --output=/tmp/es_backup
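Before running a dump with --match, it can help to preview which index names a pattern would select. The sketch below is only an approximation: it filters a list of names with grep -E (POSIX extended regex), which agrees with simple patterns such as the ones above, while multielasticdump evaluates the pattern itself. The function name preview_match and the sample index names are hypothetical.

```shell
# preview_match PATTERN: read index names on stdin, print those the pattern selects.
# grep -E stands in for multielasticdump's own regex matching.
preview_match() {
  grep -E -- "$1" || true   # an empty result is not an error for a preview
}

# Sample index names, made up for illustration.
printf '%s\n' logs-index users-index metrics .kibana | preview_match '^.*-index$'
```

On a real cluster the list of names could be fed in from the `_cat/indices?h=index` endpoint instead of the printf line.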

Common parameters:

--direction: dump (export) or load (import)

--ignoreType: types to skip: data, mapping, analyzer, alias, settings, template

--includeType: types to include: data, mapping, analyzer, alias, settings, template

--prefix: adds a prefix to the index name, e.g. es6-${index}

--suffix: adds a suffix to the index name, e.g. ${index}-backup-2018-03-13

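3. Hands-on

Source ES address: http://192.168.1.140:9200

Source ES index name: source_index

Target ES address: http://192.168.1.141:9200

Target ES index name: target_index

3.1 Migration

3.1.1 Online migration

Sync the data directly between the two ES clusters.

- Single index

elasticdump \
  --input=http://192.168.1.140:9200/source_index \
  --output=http://192.168.1.141:9200/target_index \
  --type=mapping

elasticdump \
  --input=http://192.168.1.140:9200/source_index \
  --output=http://192.168.1.141:9200/target_index \
  --type=data \
  --limit=2000

--limit sets the number of objects moved per batch operation (default 100). For large data volumes, raising it speeds up the migration.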
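After an online migration it is worth checking that the target holds as many documents as the source. The sketch below assumes both clusters expose the standard Elasticsearch `_count` endpoint and that curl and sed are available; the function names extract_count, doc_count, and counts_match are hypothetical helpers, not part of elasticdump. Counts on the target may lag briefly until the index refreshes.

```shell
# extract_count JSON: pull the numeric "count" field out of a _count response body.
extract_count() {
  printf '%s' "$1" | sed -n 's/.*"count"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}

# doc_count ES_URL INDEX: ask Elasticsearch how many documents the index holds.
doc_count() {
  extract_count "$(curl -s "$1/$2/_count")"
}

# counts_match SRC_URL SRC_INDEX DST_URL DST_INDEX: succeed only when both counts agree.
counts_match() {
  local src dst
  src=$(doc_count "$1" "$2")
  dst=$(doc_count "$3" "$4")
  echo "source=${src} target=${dst}"
  [ -n "$src" ] && [ "$src" = "$dst" ]
}

# Example call (commented out because it needs both clusters to be reachable):
# counts_match http://192.168.1.140:9200 source_index http://192.168.1.141:9200 target_index
```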

3.1.2 Offline migration

- Single index

Export the source ES index to JSON files, then import them into the target ES.

Export

elasticdump \
  --input=http://192.168.1.140:9200/source_index \
  --output=/data/source_index_mapping.json \
  --type=mapping

elasticdump \
  --input=http://192.168.1.140:9200/source_index \
  --output=/data/source_index.json \
  --type=data \
  --limit=2000

Import

elasticdump \
  --input=/data/source_index_mapping.json \
  --output=http://192.168.1.141:9200/source_index \
  --type=mapping

elasticdump \
  --input=/data/source_index.json \
  --output=http://192.168.1.141:9200/source_index \
  --type=data \
  --limit=2000

- All indices

Export

multielasticdump \
  --direction=dump \
  --match='^.*$' \
  --input=http://192.168.1.140:9200 \
  --output=/tmp/es_backup \
  --includeType='data,mapping' \
  --limit=2000

Import

multielasticdump \
  --direction=load \
  --match='^.*$' \
  --input=/tmp/es_backup \
  --output=http://192.168.1.141:9200 \
  --includeType='data,mapping' \
  --limit=2000

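3.2 Backup

3.2.1 Single index

Back up the ES index as a gz file to reduce storage pressure. Here --output=$ writes the dump to stdout so that it can be piped into gzip.

elasticdump \
  --input=http://192.168.1.140:9200/source_index \
  --output=$ \
  --limit=2000 \
  | gzip > /data/source_index.json.gz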
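A compressed backup is only useful if it can be read back, so a quick integrity check after the dump is cheap insurance. The sketch below assumes the data dump holds one JSON document per line; check_backup is a hypothetical helper name, and the demonstration uses a throwaway file rather than a real dump.

```shell
# check_backup FILE: verify the gzip stream, then print how many lines it holds.
check_backup() {
  gzip -t "$1" || return 1            # fails if the archive is truncated or corrupt
  gzip -cd "$1" | wc -l | tr -d ' '   # line count, with any padding from wc removed
}

# Self-contained demonstration with three fake documents.
demo=$(mktemp)
printf '%s\n' '{"_id":"1"}' '{"_id":"2"}' '{"_id":"3"}' | gzip > "${demo}.gz"
check_backup "${demo}.gz"
rm -f "${demo}" "${demo}.gz"
```

For the backup above the call would be `check_backup /data/source_index.json.gz`, and the printed number can be compared with the document count of source_index.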

4. Scripts

- Single-index online migration

#!/bin/bash
echo -n "Source ES address: "
read source_es
echo -n "Target ES address: "
read target_es
echo -n "Source index name: "
read source_index
echo -n "Target index name: "
read target_index

DUMP_HOME=/root/node_modules/elasticdump/bin

${DUMP_HOME}/elasticdump --input=${source_es}/${source_index} --output=${target_es}/${target_index} --type=mapping
${DUMP_HOME}/elasticdump --input=${source_es}/${source_index} --output=${target_es}/${target_index} --type=data --limit=2000
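The migration script passes whatever is typed at the prompts straight to elasticdump, so an empty answer or a missing http:// scheme only surfaces as a failed request. As a minimal sketch, the two hypothetical helpers below (require_url and require_name are not part of the original script) reject obviously bad input before any dump starts.

```shell
# require_url NAME VALUE: succeed only if VALUE looks like an http(s) URL.
require_url() {
  case "$2" in
    http://*|https://*) return 0 ;;
    *) echo "invalid ${1}: '${2}' (expected http://host:port)" >&2; return 1 ;;
  esac
}

# require_name NAME VALUE: succeed only if VALUE is non-empty
# and contains no whitespace or slash.
require_name() {
  case "$2" in
    ""|*[[:space:]/]*) echo "invalid ${1}: '${2}'" >&2; return 1 ;;
    *) return 0 ;;
  esac
}

# Usage inside the script, after the read prompts:
# require_url "source ES address" "$source_es" || exit 1
# require_name "source index name" "$source_index" || exit 1
```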

- Offline single-index backup

#!/bin/bash
source_es=http://192.168.1.140:9200
target_index=tspa-template-question-answer
data_dir=/opt/es_backup
DUMP_HOME=/root/node_modules/elasticdump/bin

if [ ! -d "${data_dir}" ]; then
  mkdir -p "${data_dir}"
fi

${DUMP_HOME}/elasticdump --input=${source_es}/${target_index} --output=${data_dir}/${target_index}_mapping.json --type=mapping
${DUMP_HOME}/elasticdump --input=${source_es}/${target_index} --output=${data_dir}/${target_index}.json --type=data --limit=2000

zip -jqrm ${data_dir}/$(date '+%Y%m%d-%H%M').zip ${data_dir}/*.json
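The final zip step of the backup script stores the files without their directory paths (-j), runs quietly (-q), and removes the original .json files once they are archived (-m). If a dump produced no .json files, zip has nothing to archive and exits with an error. The sketch below, under the assumption that the archive name keeps the same timestamp format, separates the naming from a guard that checks for files first; archive_path and has_json are hypothetical helper names.

```shell
# archive_path DIR: print DIR/<timestamp>.zip using the script's date format.
archive_path() {
  printf '%s/%s.zip\n' "${1%/}" "$(date '+%Y%m%d-%H%M')"
}

# has_json DIR: succeed only if DIR contains at least one .json file to archive.
has_json() {
  local f
  for f in "${1%/}"/*.json; do
    [ -e "$f" ] && return 0
  done
  return 1
}

# Usage sketch (commented out because -m deletes the archived files):
# has_json "$data_dir" && zip -jqm "$(archive_path "$data_dir")" "$data_dir"/*.json
```

The sketch drops -r because the glob only names plain files, so there is nothing to recurse into.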

- Offline single-index restore

#!/bin/bash
echo -n "Target ES address: "
read target_es
echo -n "Source index name: "
read source_index
echo -n "Mapping file name: "
read map_file
echo -n "Data file name: "
read data_file

DUMP_HOME=/root/node_modules/elasticdump/bin

${DUMP_HOME}/elasticdump --input=${map_file} --output=${target_es}/${source_index} --type=mapping
${DUMP_HOME}/elasticdump --input=${data_file} --output=${target_es}/${source_index} --type=data --limit=2000
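The restore script expects the mapping and data files to exist already as plain JSON. When they come from the timestamped zip archives written by the backup script, the newest archive has to be found and unpacked first. A minimal sketch, assuming archive names follow the %Y%m%d-%H%M format so that they sort chronologically; latest_archive is a hypothetical helper name.

```shell
# latest_archive DIR: print the newest <timestamp>.zip in DIR, or fail if there is none.
latest_archive() {
  local newest="" f
  for f in "${1%/}"/*.zip; do
    [ -e "$f" ] || continue
    newest="$f"   # globs expand in sorted order, so the last hit is the newest
  done
  [ -n "$newest" ] && printf '%s\n' "$newest"
}

# Usage sketch (commented out because it writes files):
# unzip -o "$(latest_archive /opt/es_backup)" -d /opt/es_backup
```

After unpacking, the extracted mapping and data file paths can be entered at the prompts of the restore script.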

- Offline full backup of all indices

#!/bin/bash
source_es=http://192.168.1.140:9200
data_dir=/opt/es_backup
DUMP_HOME=/root/node_modules/elasticdump/bin

if [ ! -d "${data_dir}" ]; then
  mkdir -p "${data_dir}"
fi

${DUMP_HOME}/multielasticdump --direction=dump --match='^.*$' --input=${source_es} --output=${data_dir} --includeType='data,mapping' --limit=2000

zip -jqrm ${data_dir}/$(date '+%Y%m%d-%H%M').zip ${data_dir}/*.json
