phone_no String

) ENGINE = MergeTree ()

ORDER BY

(appKey, appVersion, deviceId, phone_no);

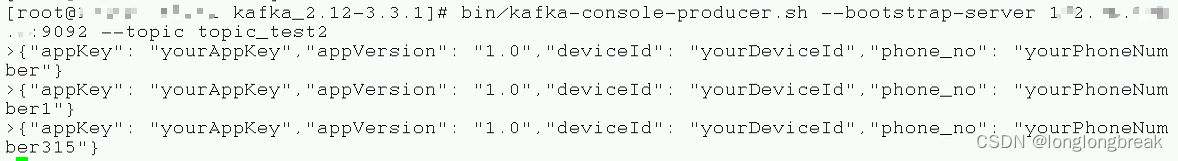

8. Start a Kafka producer, send one message, and then check the corresponding ClickHouse table

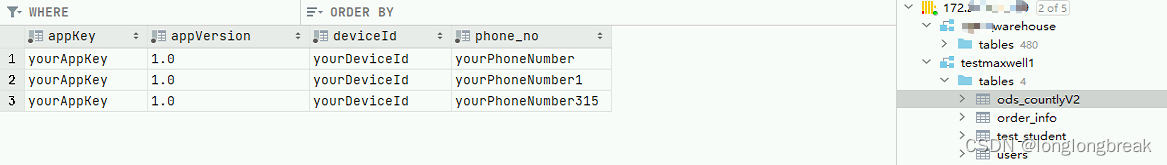
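For step 8, the message sent to `topic_test2` has to be a JSON object carrying the four fields that the job reads (`appKey`, `appVersion`, `deviceId`, `phone_no`). A minimal sketch of building such a test message in plain Java is shown here. The class name `SampleMessage`, the helpers `build` and `escape`, and all field values are made up for illustration; the resulting string can be pasted into whichever Kafka producer you use.

```java
/** Hypothetical helper that builds a test message for the job. */
class SampleMessage {

    /** Escapes backslashes and double quotes (control characters are not handled in this sketch). */
    static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"");
    }

    /** Builds a JSON object with the four fields the Flink job extracts. */
    static String build(String appKey, String appVersion, String deviceId, String phoneNo) {
        return String.format(
            "{\"appKey\":\"%s\",\"appVersion\":\"%s\",\"deviceId\":\"%s\",\"phone_no\":\"%s\"}",
            escape(appKey), escape(appVersion), escape(deviceId), escape(phoneNo));
    }

    public static void main(String[] args) {
        // Placeholder values; replace them with real test data
        System.out.println(build("demo-app", "1.0.0", "device-001", "00000000000"));
    }
}
```

Sending all four values as JSON strings keeps the message in line with the `String` columns of the target table.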

9. Check the data in the ClickHouse table

### Code

1. Main program class

package com.kszx;

import com.alibaba.fastjson.JSON;

import com.kszx.Mail;

import com.kszx.MyClickHouseUtil;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

import java.util.HashMap;

import java.util.Properties;

public class FlinkSinkClickhouse {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.enableCheckpointing(5000);

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

// source

String topic = "topic_test2";

Properties props = new Properties();

// Connection settings for the Kafka cluster

props.setProperty("bootstrap.servers", "172.xx.xxx.x:9092,172.xx.xxx.x:9092,172.xx.xxx.x:9092");

// Define the Flink Kafka consumer

FlinkKafkaConsumer<String> consumer = new FlinkKafkaConsumer<>(topic, new SimpleStringSchema(), props);

// Always consume from the earliest offset; use setStartFromGroupOffsets() instead

// to resume from the committed group offsets (the last start-position call wins)

consumer.setStartFromEarliest();

// Add the source stream

DataStreamSource<String> source = env.addSource(consumer);

// Print the raw messages with the prefix "111" for debugging

source.print("111");

SingleOutputStreamOperator<Mail> dataStream = source.map(new MapFunction<String, Mail>() {

@Override

public Mail map(String value) throws Exception {

// Parse into a JSONObject; getString also converts non-string values (e.g. numbers) to String

com.alibaba.fastjson.JSONObject json = JSON.parseObject(value);

String appKey = json.getString("appKey");

String appVersion = json.getString("appVersion");

String deviceId = json.getString("deviceId");

String phone_no = json.getString("phone_no");

Mail mail = new Mail(appKey, appVersion, deviceId, phone_no);

// System.out.println(mail);

return mail;

}

});

dataStream.print();

// sink

String sql = "INSERT INTO testmaxwell1.ods_countlyV2 (appKey, appVersion, deviceId, phone_no) " +

"VALUES (?, ?, ?, ?)";

MyClickHouseUtil ckSink = new MyClickHouseUtil(sql);

dataStream.addSink(ckSink);

env.execute();

}

}

2. Utility class that writes to ClickHouse

package com.kszx;

import com.kszx.Mail;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.functions.sink.RichSinkFunction;

import ru.yandex.clickhouse.ClickHouseConnection;

import ru.yandex.clickhouse.ClickHouseDataSource;

import ru.yandex.clickhouse.settings.ClickHouseProperties;

import java.sql.PreparedStatement;

public class MyClickHouseUtil extends RichSinkFunction<Mail> {

// Created in open() on the task manager, so they are excluded from serialization

private transient ClickHouseConnection conn;

private transient PreparedStatement preparedStatement;

private final String sql;

public MyClickHouseUtil(String sql) {

this.sql = sql;

}

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

String url = "jdbc:clickhouse://172.xx.xxx.xxx:8123/testmaxwell1";

ClickHouseProperties properties = new ClickHouseProperties();

properties.setUser("default");

properties.setPassword("xxxxxx");

properties.setSessionId("default-session-id2");

ClickHouseDataSource dataSource = new ClickHouseDataSource(url, properties);

// Open one connection per sink subtask and reuse it for every record

conn = dataSource.getConnection();

preparedStatement = conn.prepareStatement(sql);

}

@Override

public void close() throws Exception {

super.close();

if (preparedStatement != null) {

preparedStatement.close();

}

if (conn != null) {

conn.close();

}

}

@Override

public void invoke(Mail mail, Context context) throws Exception {

// Exceptions propagate so that Flink can restart from the last checkpoint

// instead of silently dropping the record

preparedStatement.setString(1, mail.getAppKey());

preparedStatement.setString(2, mail.getAppVersion());

preparedStatement.setString(3, mail.getDeviceId());

preparedStatement.setString(4, mail.getPhone_no());

preparedStatement.execute();

}

}
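The sink above issues one INSERT per `invoke` call. ClickHouse generally copes better with fewer, larger inserts than with many single-row ones, so one possible refinement is to buffer records and flush them in batches. The sketch below shows only the buffering logic in plain Java and is not part of the original code: `BatchBuffer`, `batchSize`, and the flush callback are hypothetical names. In a real sink the callback would presumably call the standard JDBC `addBatch()` / `executeBatch()` methods on the `PreparedStatement`, and `flush()` would also need to be called from `close()`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical helper: collects records and hands them to a callback in batches. */
class BatchBuffer<T> {

    private final int batchSize;
    private final Consumer<List<T>> flusher;
    private final List<T> buffer = new ArrayList<>();

    BatchBuffer(int batchSize, Consumer<List<T>> flusher) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        this.batchSize = batchSize;
        this.flusher = flusher;
    }

    /** Adds one record and flushes once the buffer holds batchSize records. */
    void add(T record) {
        buffer.add(record);
        if (buffer.size() >= batchSize) {
            flush();
        }
    }

    /** Passes a copy of the buffered records to the callback, then empties the buffer. */
    void flush() {
        if (buffer.isEmpty()) {
            return;
        }
        flusher.accept(new ArrayList<>(buffer));
        buffer.clear();
    }

    /** Number of records waiting for the next flush. */
    int pending() {
        return buffer.size();
    }
}
```

Records that are still buffered when the job fails are lost unless the buffer is also flushed when a checkpoint is taken, so this trade-off between throughput and delivery guarantees needs to be weighed for each job.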

3. Table entity class

package com.kszx;

public class Mail {

private String appKey;

private String appVersion;

private String deviceId;

private String phone_no;

public Mail(String appKey, String appVersion, String deviceId, String phone_no) {

this.appKey = appKey;

this.appVersion = appVersion;

this.deviceId = deviceId;

this.phone_no = phone_no;

}

public String getAppKey() {

return appKey;

}

public void setAppKey(String appKey) {

this.appKey = appKey;

}

public String getAppVersion() {

return appVersion;

}

public void setAppVersion(String appVersion) {

this.appVersion = appVersion;

}

public String getDeviceId() {

return deviceId;

}

public void setDeviceId(String deviceId) {

this.deviceId = deviceId;

}

public String getPhone_no() {

return phone_no;

}

public void setPhone_no(String phone_no) {

this.phone_no = phone_no;

}

@Override

public String toString() {

return "Mail{" +

"appKey='" + appKey + '\'' +

", appVersion='" + appVersion + '\'' +

", deviceId='" + deviceId + '\'' +

", phone_no='" + phone_no + '\'' +

'}';

}

public static Mail of(String appKey, String appVersion, String deviceId, String phone_no)

{

return new Mail(appKey, appVersion, deviceId, phone_no);

}

}

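4. pom dependencies (Note: when the packaged jar runs on the server, its log4j dependency can conflict with the log4j jars in Flink's lib directory. If running the jar on the server fails with a dependency conflict, it is best to exclude the log4j dependency declared in the project and keep the ones shipped in Flink's own lib directory.)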

<modelVersion>4.0.0</modelVersion>

<groupId>com.kszx</groupId>

<artifactId>flink1kc</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<maven.compiler.source>8</maven.compiler.source>

<maven.compiler.target>8</maven.compiler.target>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.11.1</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.11</artifactId>

<version>1.11.1</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka_2.11</artifactId>

<version>1.11.1</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_2.11</artifactId>

<version>1.11.1</version>
