网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

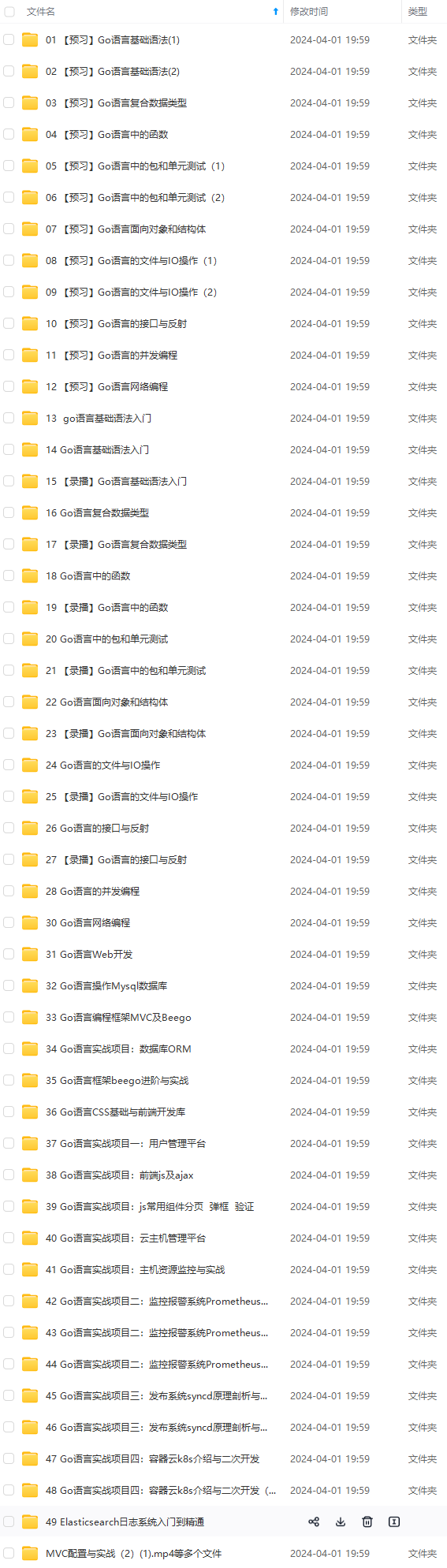

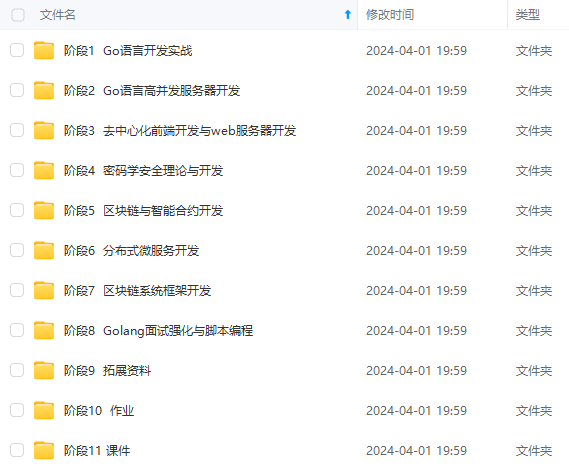

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

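With a global proxy enabled, opening gcr.io/google-containers in a browser shows the following:

Create a new repository

Here we use Alibaba Cloud's container registry as the example.

The Alibaba Cloud registry requires you to register and log in. The console address is:

https://cr.console.aliyun.com/cn-hangzhou/repositories

Follow these steps:

Create an image repository

Name it and make it public

Choose a local repository

After it is created, click Manage

This gives us the public address of the image repository: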

registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s

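Login command

docker login --username=xxx registry.cn-qingdao.aliyuncs.com

The login password is set on the console home page.

First log in to the registry by running the login command:

docker login --username=xxx registry.cn-qingdao.aliyuncs.com

Enter the password when prompted.

A successful login prints: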

[root@k8s ~]# docker login --username=xxx registry.cn-qingdao.aliyuncs.com

Password:

Login Succeeded

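Getting the version numbers

The image versions required by each Kubernetes release are listed in the "Running kubeadm without an internet connection" section of the kubeadm Setup Tool Reference Guide, so adjust the parameters of the scripts in this article to match the version you actually install. Remember to replace the registry address with your own. Note that k8s here is a namespace you created, not a repository name.

For Kubernetes 1.11, the required image versions can be listed with:

kubeadm config images list

The output is: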

k8s.gcr.io/kube-apiserver-amd64:v1.11.2

k8s.gcr.io/kube-controller-manager-amd64:v1.11.2

k8s.gcr.io/kube-scheduler-amd64:v1.11.2

k8s.gcr.io/kube-proxy-amd64:v1.11.2

k8s.gcr.io/pause:3.1

k8s.gcr.io/etcd-amd64:3.2.18

k8s.gcr.io/coredns:1.1.3
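The listing above can also be turned into mirror addresses mechanically. The following is a minimal sketch, assuming the example registry address used in this article; `to_mirror` is a hypothetical helper name, and the sample input is copied from the listing rather than read from a live `kubeadm` call.

```shell
#!/bin/bash
# Rewrite each image reference read from stdin so that it points at a mirror.
# MIRROR is the example address from this article; replace it with your own.
MIRROR=registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s

to_mirror() {
  while IFS= read -r ref; do
    [ -n "$ref" ] || continue                 # skip empty lines
    printf '%s/%s\n' "$MIRROR" "${ref##*/}"   # keep only "name:tag" after the last slash
  done
}

# Sample input taken from the listing above. On a real host, pipe in
# the output of: kubeadm config images list
printf '%s\n' k8s.gcr.io/pause:3.1 k8s.gcr.io/coredns:1.1.3 | to_mirror
```

Each output line is the address under which the corresponding image would be stored in the mirror.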

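Preparing the image registry

Method 1: with an overseas server node, write a script that pulls and pushes the images

Create the script on the overseas server node:

vi push.sh

Adjust the script to match our registry address and the required image versions: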

#!/bin/bash
# Pull the kubeadm images from gcr.io, retag them, and push them to our own registry.
set -o errexit
set -o nounset
set -o pipefail

KUBE_VERSION=v1.11.2
KUBE_PAUSE_VERSION=3.1
ETCD_VERSION=3.2.18
DNS_VERSION=1.1.3

GCR_URL=gcr.io/google-containers
ALIYUN_URL=registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s

images=(kube-proxy-amd64:${KUBE_VERSION}
kube-scheduler-amd64:${KUBE_VERSION}
kube-controller-manager-amd64:${KUBE_VERSION}
kube-apiserver-amd64:${KUBE_VERSION}
pause:${KUBE_PAUSE_VERSION}
etcd-amd64:${ETCD_VERSION}
coredns:${DNS_VERSION})

for imageName in "${images[@]}"
do
  docker pull "$GCR_URL/$imageName"
  docker tag "$GCR_URL/$imageName" "$ALIYUN_URL/$imageName"
  docker push "$ALIYUN_URL/$imageName"
  # Remove the local copy of the retagged image once it has been pushed.
  docker rmi "$ALIYUN_URL/$imageName"
done

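Run push.sh (the script uses bash arrays, so invoke it with bash):

bash push.sh

Once the images have been pulled and pushed, our registry registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s contains the corresponding images.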

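Method 2: use a mirror registry that someone else maintains inside China

Without an overseas server node we cannot freely build images for whichever version we need. We can only look for registries that others have already prepared and check which versions they carry and whether they are still being updated.

A well-maintained, continuously updated Kubernetes mirror at the time of writing is the one by anjia0532 (安家):

Google Container Registry (gcr.io) mirror usable in China (long-term maintained)

anjia0532's GitHub

Image directory

In the image directory we can see that the v1.11.2 images are already available.

The image directory https://hub.docker.com/u/anjia0532/ plays the same role as our own registry registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s; it is used below.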

Pulling the images

Create the pull script on the master node:

vi pull.sh

Adjust the script to your own registry address:

#!/bin/bash
# Pull the images from our own registry and retag them with the names kubeadm expects.
KUBE_VERSION=v1.11.2
KUBE_PAUSE_VERSION=3.1
ETCD_VERSION=3.2.18
DNS_VERSION=1.1.3

username=registry.cn-qingdao.aliyuncs.com/joe-k8s/k8s

images=(kube-proxy-amd64:${KUBE_VERSION}
kube-scheduler-amd64:${KUBE_VERSION}
kube-controller-manager-amd64:${KUBE_VERSION}
kube-apiserver-amd64:${KUBE_VERSION}
pause:${KUBE_PAUSE_VERSION}
etcd-amd64:${ETCD_VERSION}
coredns:${DNS_VERSION}
)

for image in "${images[@]}"
do
  docker pull "${username}/${image}"
  docker tag "${username}/${image}" "k8s.gcr.io/${image}"
  #docker tag "${username}/${image}" "gcr.io/google_containers/${image}"
  docker rmi "${username}/${image}"
done

unset ARCH version images username

Run it:

bash pull.sh

Note: anjia0532's repository uses renamed images, so the pull.sh script for it looks like this:

#!/bin/bash
# Pull the images from the anjia0532 mirror and retag them under k8s.gcr.io.
KUBE_VERSION=v1.11.2
KUBE_PAUSE_VERSION=3.1
ETCD_VERSION=3.2.18
DNS_VERSION=1.1.3

username=anjia0532

images=(google-containers.kube-proxy-amd64:${KUBE_VERSION}
google-containers.kube-scheduler-amd64:${KUBE_VERSION}
google-containers.kube-controller-manager-amd64:${KUBE_VERSION}
google-containers.kube-apiserver-amd64:${KUBE_VERSION}
pause:${KUBE_PAUSE_VERSION}
etcd-amd64:${ETCD_VERSION}
coredns:${DNS_VERSION}
)

for image in "${images[@]}"
do
  docker pull "${username}/${image}"
  docker tag "${username}/${image}" "k8s.gcr.io/${image}"
  #docker tag "${username}/${image}" "gcr.io/google_containers/${image}"
  docker rmi "${username}/${image}"
done

unset ARCH version images username

Checking the images

Run:

docker images

The required images are now present:

[root@k8s ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/google-containers.kube-apiserver-amd64 v1.11.2 821507941e9c 6 days ago 187 MB

k8s.gcr.io/google-containers.kube-proxy-amd64 v1.11.2 46a3cd725628 6 days ago 97.8 MB

k8s.gcr.io/google-containers.kube-controller-manager-amd64 v1.11.2 38521457c799 6 days ago 155 MB

k8s.gcr.io/google-containers.kube-scheduler-amd64 v1.11.2 37a1403e6c1a 6 days ago 56.8 MB

k8s.gcr.io/coredns 1.1.3 b3b94275d97c 2 months ago 45.6 MB

k8s.gcr.io/etcd-amd64 3.2.18 b8df3b177be2 4 months ago 219 MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 7 months ago 742 kB

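Note: the prefix here must match the prefix printed by kubeadm config images list; otherwise init will not recognize the local images and will still try to download them.

In our case the prefix is k8s.gcr.io, so the script retags each pulled image with:

docker tag ${username}/${image} k8s.gcr.io/${image}

If you used the anjia0532 images, the names need one more adjustment: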

docker tag k8s.gcr.io/google-containers.kube-apiserver-amd64:v1.11.2 k8s.gcr.io/kube-apiserver-amd64:v1.11.2

docker tag k8s.gcr.io/google-containers.kube-controller-manager-amd64:v1.11.2 k8s.gcr.io/kube-controller-manager-amd64:v1.11.2

docker tag k8s.gcr.io/google-containers.kube-scheduler-amd64:v1.11.2 k8s.gcr.io/kube-scheduler-amd64:v1.11.2

docker tag k8s.gcr.io/google-containers.kube-proxy-amd64:v1.11.2 k8s.gcr.io/kube-proxy-amd64:v1.11.2
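The four commands above all follow the same pattern: drop the `google-containers.` prefix from the image name. A minimal sketch of that rule as a loop is shown here; `canonical_ref` is a hypothetical helper name, and the loop only prints the `docker tag` commands (a dry run) rather than executing them.

```shell
#!/bin/bash
# Strip the "google-containers." prefix that the anjia0532 mirror adds to image names.
canonical_ref() {
  printf '%s\n' "$1" | sed 's/google-containers\.//'
}

for ref in \
  k8s.gcr.io/google-containers.kube-apiserver-amd64:v1.11.2 \
  k8s.gcr.io/google-containers.kube-controller-manager-amd64:v1.11.2 \
  k8s.gcr.io/google-containers.kube-scheduler-amd64:v1.11.2 \
  k8s.gcr.io/google-containers.kube-proxy-amd64:v1.11.2
do
  # Dry run: print the command instead of running it.
  echo "docker tag $ref $(canonical_ref "$ref")"
done
```

Removing the `echo` wrapper would run the retagging for real; names without the prefix pass through unchanged.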

The prepared images are shown below; their names and versions match the output of kubeadm config images list.

[root@k8s ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-controller-manager-amd64 v1.11.2 38521457c799 6 days ago 155 MB

k8s.gcr.io/kube-apiserver-amd64 v1.11.2 821507941e9c 6 days ago 187 MB

k8s.gcr.io/kube-proxy-amd64 v1.11.2 46a3cd725628 6 days ago 97.8 MB

k8s.gcr.io/kube-scheduler-amd64 v1.11.2 37a1403e6c1a 6 days ago 56.8 MB

k8s.gcr.io/coredns 1.1.3 b3b94275d97c 2 months ago 45.6 MB

k8s.gcr.io/etcd-amd64 3.2.18 b8df3b177be2 4 months ago 219 MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 7 months ago 742 kB

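Initializing the cluster with kubeadm init (run only on the master node)

Before initializing, confirm that kubelet starts correctly and that settings such as cgroup-driver match.

Next, initialize the cluster with kubeadm, using the host k8s as the master node.

Make sure the http_proxy and https_proxy variables are not set.

Run the following command on k8s: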

kubeadm init --kubernetes-version=v1.11.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.11.90 --token-ttl 0

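--kubernetes-version matches the version reported when the packages were installed earlier: kubectl.x86_64 0:1.11.2-0.

Because we use flannel as the Pod network add-on, the command specifies --pod-network-cidr=10.244.0.0/16.

For some network solutions, the Kubernetes master can also allocate a network range (CIDR) to each node; this includes some cloud providers and flannel. The subnet given by --pod-network-cidr is split up and handed out to the nodes. The range should be at least a /16 so that the controller-manager can assign a /24 subnet to every node in the cluster. If you deploy flannel from its kube-flannel.yml manifest, use --pod-network-cidr=10.244.0.0/16. Most CNI-based network solutions do not need this parameter.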
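The arithmetic behind the /16 recommendation can be checked quickly. This is a small sketch, with `subnet_count` as a hypothetical helper name: a pod network with prefix length P holds 2^(N-P) node subnets of prefix length N.

```shell
#!/bin/bash
# Number of node subnets of prefix length $2 that fit in a pod network of prefix length $1.
subnet_count() {
  echo $(( 1 << ($2 - $1) ))
}

# A /16 pod network split into one /24 per node.
subnet_count 16 24
```

With 10.244.0.0/16 and one /24 per node this leaves room for 256 node subnets; a /20 pod network would only hold 16.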

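--apiserver-advertise-address is the address the apiserver advertises; normally this is the master's IP address.

After initialization, kubeadm prints a token that nodes use to join the cluster. By default the token is valid for 24 hours; once it expires it can no longer be used and a new one has to be generated, which is inconvenient. Setting --token-ttl to 0 here means the token never expires.

How to create a new token after expiry is described at the end of the article.

Possible problem: stuck at preflight/images

During init, Kubernetes automatically downloads the required Docker images from Google's servers.

kubeadm init first checks the proxy settings to determine how it will reach kube-apiserver over HTTPS; if a proxy is configured, it prints a warning.

If it stays stuck at preflight/images: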

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

go back to the previous section and prepare the images first.

Possible problem: uses proxy

If the following warnings appear:

[WARNING HTTPProxy]: Connection to "https://192.168.11.90" uses proxy "http://127.0.0.1:8118". If that is not intended, adjust your proxy settings

[WARNING HTTPProxyCIDR]: connection to "10.96.0.0/12" uses proxy "http://127.0.0.1:8118". This may lead to malfunctional cluster setup. Make sure that Pod and Services IP ranges specified correctly as exceptions in proxy configuration

[WARNING HTTPProxyCIDR]: connection to "10.244.0.0/16" uses proxy "http://127.0.0.1:8118". This may lead to malfunctional cluster setup. Make sure that Pod and Services IP ranges specified correctly as exceptions in proxy configuration

then set no_proxy.

Run:

vi /etc/profile

Append at the end:

export no_proxy='anjia0532,127.0.0.1,192.168.11.90,k8s.gcr.io,10.96.0.0/12,10.244.0.0/16'

Apply the change with:

source /etc/profile
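When the exception list grows (for example after adding worker nodes), it can be easier to build the value from separate entries. This is a minimal sketch; `join_no_proxy` is a hypothetical helper name, the entries reuse the addresses from this article, and note that support for CIDR entries in no_proxy varies between tools.

```shell
#!/bin/bash
# Join all arguments with commas to form a no_proxy value.
join_no_proxy() {
  out=
  for item in "$@"; do
    out=${out:+$out,}$item   # add a comma only between entries
  done
  printf '%s\n' "$out"
}

no_proxy=$(join_no_proxy 127.0.0.1 localhost 192.168.11.90 k8s.gcr.io 10.96.0.0/12 10.244.0.0/16)
export no_proxy
echo "$no_proxy"
```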

For other init errors, open a second console and follow the kubelet log:

journalctl -f -u kubelet.service

This shows exactly where it is stuck.

Possible problem: failed to read kubelet config file "/var/lib/kubelet/config.yaml"

8月 14 20:44:42 k8s systemd[1]: kubelet.service holdoff time over, scheduling restart.

8月 14 20:44:42 k8s systemd[1]: Started kubelet: The Kubernetes Node Agent.

8月 14 20:44:42 k8s systemd[1]: Starting kubelet: The Kubernetes Node Agent...

8月 14 20:44:42 k8s kubelet[5471]: F0814 20:44:42.477878 5471 server.go:190] failed to load Kubelet config file /var/lib/kubelet/config.yaml, error failed to read kubelet config file "/var/lib/kubelet/config.yaml", error: open /var/lib/kubelet/config.yaml: no such file or directory

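Cause

A key file is missing. This usually happens when systemctl start kubelet is run before kubeadm init.

Solution

Run kubeadm init successfully first.

Possible problem: cni config uninitialized

KubeletNotReady runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

Cause

kubelet is configured with network-plugin=cni, but no CNI plugin has been installed yet, so the node status is NotReady. If you do not want to see this error, or do not need networking, edit the kubelet configuration and remove network-plugin=cni.

Solution

vi /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

Delete $KUBELET_NETWORK_ARGS from the last line.

In version 1.11.2 these flags live in /var/lib/kubelet/kubeadm-flags.env instead.

Check the file with: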

[root@k8s ~]# cat /var/lib/kubelet/kubeadm-flags.env

KUBELET_KUBEADM_ARGS=--cgroup-driver=systemd --cni-bin-dir=/opt/cni/bin --cni-conf-dir=/etc/cni/net.d --network-plugin=cni
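Removing the flag by hand works, but the edit can also be expressed as a one-line filter. The sketch below is an illustration only: `drop_network_plugin` is a hypothetical helper name, and it operates on a sample line rather than on the real file. Back up /var/lib/kubelet/kubeadm-flags.env before applying anything like this to it.

```shell
#!/bin/bash
# Remove the --network-plugin=... flag from a KUBELET_KUBEADM_ARGS line read on stdin.
drop_network_plugin() {
  sed -E 's/ ?--network-plugin=[^ ]*//'
}

# Sample line copied from the file content shown above.
echo 'KUBELET_KUBEADM_ARGS=--cgroup-driver=systemd --cni-bin-dir=/opt/cni/bin --cni-conf-dir=/etc/cni/net.d --network-plugin=cni' | drop_network_plugin
```

The other flags on the line are left untouched.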

Restart kubelet

systemctl enable kubelet && systemctl start kubelet

Initialize again

kubeadm reset

kubeadm init --kubernetes-version=v1.11.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.11.90 --token-ttl 0

A successful initialization prints:

[root@k8s ~]# kubeadm init --kubernetes-version=v1.11.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.11.90 --token-ttl 0

[init] using Kubernetes version: v1.11.2

[preflight] running pre-flight checks

I0815 16:24:34.651252 21183 kernel_validator.go:81] Validating kernel version

I0815 16:24:34.651356 21183 kernel_validator.go:96] Validating kernel config

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[preflight] Activating the kubelet service

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [k8s kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.11.90]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated sa key and public key.

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Generated etcd/ca certificate and key.

[certificates] Generated etcd/server certificate and key.

[certificates] etcd/server serving cert is signed for DNS names [k8s localhost] and IPs [127.0.0.1 ::1]

[certificates] Generated etcd/peer certificate and key.

[certificates] etcd/peer serving cert is signed for DNS names [k8s localhost] and IPs [192.168.11.90 127.0.0.1 ::1]

[certificates] Generated etcd/healthcheck-client certificate and key.

[certificates] Generated apiserver-etcd-client certificate and key.

[certificates] valid certificates and keys now exist in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/scheduler.conf"

[controlplane] wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests"

[init] this might take a minute or longer if the control plane images have to be pulled

[apiclient] All control plane components are healthy after 40.004083 seconds

[uploadconfig] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.11" in namespace kube-system with the configuration for the kubelets in the cluster

[markmaster] Marking the node k8s as master by adding the label "node-role.kubernetes.io/master=''"

[markmaster] Marking the node k8s as master by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "k8s" as an annotation

[bootstraptoken] using token: hu2clf.898he8fnu64w3fur

[bootstraptoken] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join 192.168.11.90:6443 --token hu2clf.898he8fnu64w3fur --discovery-token-ca-cert-hash sha256:2a196bbd77e4152a700d294a666e9d97336d0f7097f55e19a651c19e03d340a4

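The listing above is the complete output of a successful initialization.

The key points are:

Record the generated token; it is needed later when adding nodes to the cluster with kubeadm join.

The commands below configure kubectl access to the cluster for a regular user. The master node also uses kubectl to access the cluster, so run them there as well: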

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Finally, the output gives the command for joining nodes to the cluster:

kubeadm join 192.168.11.90:6443 --token hu2clf.898he8fnu64w3fur --discovery-token-ca-cert-hash sha256:2a196bbd77e4152a700d294a666e9d97336d0f7097f55e19a651c19e03d340a4

Check the cluster status:

kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health": "true"}

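Confirm that every component is Healthy.

Possible problem: net/http: TLS handshake timeout

If errors report that port 6443 on the master cannot be reached (timeouts or connection refused), check the network. The usual cause is that http_proxy and https_proxy were set to get through the firewall, which breaks access to the host itself and to the internal network.

Add a no_proxy setting to /etc/profile and retry, or run init again.

As follows:

vi /etc/profile

Append at the end:

export no_proxy='anjia0532,127.0.0.1,192.168.11.90,192.168.11.91,192.168.11.92,k8s.gcr.io,10.96.0.0/12,10.244.0.0/16,localhost'

Save and exit.

Apply the change:

source /etc/profile

If that still does not help, run init again. init checks the network for us, and an init run without warnings generally means the network is fine.

Run:

kubeadm reset

kubeadm init

If cluster initialization runs into problems, clean up with the following commands and then initialize again: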

kubeadm reset

ifconfig cni0 down

ip link delete cni0

ifconfig flannel.1 down

ip link delete flannel.1

rm -rf /var/lib/cni/

Installing the Pod network (run only on the master node)

Next, install the flannel network add-on:

mkdir -p ~/k8s/

cd ~/k8s

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

kubectl apply -f kube-flannel.yml

The output is:

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

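The flannel image version used by kube-flannel.yml can be checked with:

cat kube-flannel.yml|grep quay.io/coreos/flannel

If a node has more than one network interface, kube-flannel.yml currently needs the --iface argument to name the interface on the cluster's internal network; otherwise DNS resolution may fail. Download kube-flannel.yml locally and add --iface= followed by the interface name to the flanneld arguments: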

containers:
- name: kube-flannel
  image: quay.io/coreos/flannel:v0.10.0-amd64
  command:
  - /opt/bin/flanneld
  args:
  - --ip-masq
  - --kube-subnet-mgr
  - --iface=eth1

Use kubectl get pod --all-namespaces -o wide to confirm that all Pods are in the Running state.

[root@k8s k8s]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

kube-system coredns-78fcdf6894-jf5tn 1/1 Running 0 6m 10.244.0.3 k8s <none>

kube-system coredns-78fcdf6894-ljmmh 1/1 Running 0 6m 10.244.0.2 k8s <none>

kube-system etcd-k8s 1/1 Running 0 5m 192.168.11.90 k8s <none>

kube-system kube-apiserver-k8s 1/1 Running 0 5m 192.168.11.90 k8s <none>

kube-system kube-controller-manager-k8s 1/1 Running 0 5m 192.168.11.90 k8s <none>

kube-system kube-flannel-ds-amd64-fvpj7 1/1 Running 0 4m 192.168.11.90 k8s <none>

kube-system kube-proxy-c8rrg 1/1 Running 0 6m 192.168.11.90 k8s <none>

kube-system kube-scheduler-k8s 1/1 Running 0 5m 192.168.11.90 k8s <none>

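Letting the master node take workload (run only on the master node)

For safety, a cluster initialized with kubeadm does not schedule Pods on the master node; that is, the master node takes no workload.

Since this is a test environment, the following command lets the master node take workload.

k8s is the hostname of the master node.

To allow Pods to be scheduled on the master node: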

kubectl taint nodes --all node-role.kubernetes.io/master-

The output is:

node "k8s" untainted

If it prints error: taint "node-role.kubernetes.io/master:" not found, ignore it.

To stop scheduling Pods on the master again:

kubectl taint nodes k8s node-role.kubernetes.io/master=true:NoSchedule

Testing DNS (run only on the master node)

Run:

kubectl run curl --image=radial/busyboxplus:curl -i --tty

The output is:

If you don't see a command prompt, try pressing enter.

[ root@curl-87b54756-vsf2s:/ ]$

Inside the container, run:

nslookup kubernetes.default

Confirm that name resolution works. The output is:

[ root@curl-87b54756-vsf2s:/ ]$ nslookup kubernetes.default

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes.default

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

Type

exit;

to leave the container.

Adding nodes to the Kubernetes cluster (run only on worker nodes)

Next we add the host k8s1 to the cluster by running the join command from earlier on k8s1:

kubeadm join 192.168.11.90:6443 --token hu2clf.898he8fnu64w3fur --discovery-token-ca-cert-hash sha256:2a196bbd77e4152a700d294a666e9d97336d0f7097f55e19a651c19e03d340a4

A successful join prints:

[root@k8s1 ~]# kubeadm join 192.168.11.90:6443 --token hu2clf.898he8fnu64w3fur --discovery-token-ca-cert-hash sha256:2a196bbd77e4152a700d294a666e9d97336d0f7097f55e19a651c19e03d340a4

[preflight] running pre-flight checks

[WARNING RequiredIPVSKernelModulesAvailable]: the IPVS proxier will not be used, because the following required kernel modules are not loaded: [ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh] or no builtin kernel ipvs support: map[ip_vs:{} ip_vs_rr:{} ip_vs_wrr:{} ip_vs_sh:{} nf_conntrack_ipv4:{}]

you can solve this problem with following methods:

1. Run 'modprobe -- ' to load missing kernel modules;

2. Provide the missing builtin kernel ipvs support

I0815 16:53:55.675009 2758 kernel_validator.go:81] Validating kernel version

I0815 16:53:55.675090 2758 kernel_validator.go:96] Validating kernel config

[discovery] Trying to connect to API Server "192.168.11.90:6443"

[discovery] Created cluster-info discovery client, requesting info from "https://192.168.11.90:6443"

[discovery] Requesting info from "https://192.168.11.90:6443" again to validate TLS against the pinned public key

[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.11.90:6443"

[discovery] Successfully established connection with API Server "192.168.11.90:6443"

[kubelet] Downloading configuration for the kubelet from the "kubelet-config-1.11" ConfigMap in the kube-system namespace

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[preflight] Activating the kubelet service

[tlsbootstrap] Waiting for the kubelet to perform the TLS Bootstrap...

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "k8s1" as an annotation

This node has joined the cluster:

* Certificate signing request was sent to master and a response

was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

Now list the nodes in the cluster from the master node:

[root@k8s k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s Ready master 30m v1.11.2

k8s1 NotReady <none> 1m v1.11.2

k8s2 NotReady <none> 1m v1.11.2

[root@k8s k8s]#

If a worker node is NotReady, check for errors with:

systemctl status kubelet.service

Check whether the Pods are in the Running state:

[root@k8s kubernetes]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

default curl-87b54756-vsf2s 1/1 Running 1 1h 10.244.0.4 k8s <none>

kube-system coredns-78fcdf6894-jf5tn 1/1 Running 0 2h 10.244.0.3 k8s <none>

kube-system coredns-78fcdf6894-ljmmh 1/1 Running 0 2h 10.244.0.2 k8s <none>

kube-system etcd-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-apiserver-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-controller-manager-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-flannel-ds-amd64-8p2px 0/1 Init:0/1 0 1h 192.168.11.91 k8s1 <none>

kube-system kube-flannel-ds-amd64-fvpj7 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-flannel-ds-amd64-p6g4w 0/1 Init:0/1 0 1h 192.168.11.92 k8s2 <none>

kube-system kube-proxy-c8rrg 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-proxy-hp4lj 0/1 ContainerCreating 0 1h 192.168.11.92 k8s2 <none>

kube-system kube-proxy-tn2fl 0/1 ContainerCreating 0 1h 192.168.11.91 k8s1 <none>

kube-system kube-scheduler-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

Pods stuck in ContainerCreating or Init:0/1 usually mean that the worker node cannot fetch the images either; go back to the image preparation section.
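In a long listing it helps to filter out the Pods that are already Running. A minimal sketch with awk follows; `not_running` is a hypothetical helper name, and the sample rows are abbreviated from the listing above instead of coming from a live cluster.

```shell
#!/bin/bash
# Print "namespace/name status" for every pod whose STATUS column is not Running.
# Expects the table printed by: kubectl get pod --all-namespaces -o wide
not_running() {
  awk 'NR > 1 && $4 != "Running" { print $1 "/" $2, $4 }'
}

# Sample rows (abbreviated) in place of real kubectl output.
not_running <<'EOF'
NAMESPACE     NAME                          READY   STATUS              RESTARTS   AGE
kube-system   coredns-78fcdf6894-jf5tn      1/1     Running             0          2h
kube-system   kube-flannel-ds-amd64-8p2px   0/1     Init:0/1            0          1h
kube-system   kube-proxy-hp4lj              0/1     ContainerCreating   0          1h
EOF
```

On a real master the same filter can be fed directly: `kubectl get pod --all-namespaces -o wide | not_running`.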

Once the images are in place, the Pods usually reach Running quickly:

[root@k8s kubernetes]# kubectl get pod --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

default curl-87b54756-vsf2s 1/1 Running 1 1h 10.244.0.4 k8s <none>

kube-system coredns-78fcdf6894-jf5tn 1/1 Running 0 2h 10.244.0.3 k8s <none>

kube-system coredns-78fcdf6894-ljmmh 1/1 Running 0 2h 10.244.0.2 k8s <none>

kube-system etcd-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-apiserver-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-controller-manager-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-flannel-ds-amd64-8p2px 1/1 Running 0 1h 192.168.11.91 k8s1 <none>

kube-system kube-flannel-ds-amd64-fvpj7 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-flannel-ds-amd64-p6g4w 1/1 Running 0 1h 192.168.11.92 k8s2 <none>

kube-system kube-proxy-c8rrg 1/1 Running 0 2h 192.168.11.90 k8s <none>

kube-system kube-proxy-hp4lj 1/1 Running 0 1h 192.168.11.92 k8s2 <none>

kube-system kube-proxy-tn2fl 1/1 Running 0 1h 192.168.11.91 k8s1 <none>

kube-system kube-scheduler-k8s 1/1 Running 0 2h 192.168.11.90 k8s <none>

The nodes are now Ready as well:

[root@k8s kubernetes]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s Ready master 2h v1.11.2

k8s1 Ready <none> 1h v1.11.2

k8s2 Ready <none> 1h v1.11.2

[root@k8s kubernetes]#

If a worker node also needs kubectl, copy the master's admin.conf file to it and then run:

mkdir -p $HOME/.kube

sudo cp -i admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Removing a node from the cluster

To remove the node k8s2 from the cluster, run the following commands.

On the master node:

kubectl drain k8s2 --delete-local-data --force --ignore-daemonsets

kubectl delete node k8s2

On k8s2:

kubeadm reset

ifconfig cni0 down

ip link delete cni0

ifconfig flannel.1 down

ip link delete flannel.1

rm -rf /var/lib/cni/

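At this point the Kubernetes cluster is installed.

Usually we would also install the dashboard for monitoring.

See the article:

k8s: detailed installation steps for dashboard v1.8.3

Installing directly with yum on CentOS

Add a repo to the yum sources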

[virt7-docker-common-release]

name=virt7-docker-common-release

baseurl=http://cbs.centos.org/repos/virt7-docker-common-release/x86_64/os/

gpgcheck=0

Install docker, kubernetes, etcd, and flannel in one step

yum -y install --enablerepo=virt7-docker-common-release kubernetes etcd flannel

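After installation, a series of configuration files has to be edited.

This repo is pointless on CentOS 7.3, because the extras repository of the official CentOS sources already contains Kubernetes 1.5.2; on CentOS 7.3, Kubernetes 1.5.2 is downloaded and installed automatically (as of March 30, 2017). On CentOS 7.2 the repo does make a difference, but unfortunately it installs Kubernetes 1.1. We want the current latest release, Kubernetes 1.6, and we are on CentOS 7.2, so we do not install with yum (the highest Kubernetes version the yum sources currently provide is 1.5.2).

Installing from binaries

With this method every component has to be installed individually.

First decide which components and services go on which node.

The master/node host needs the following services: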

kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy etcd flannel kubectl docker

A node needs the following services:

node kubectl kube-proxy flannel docker

Install Docker

yum localinstall ./docker-engine*

This uses the CentOS extras repo to download Docker.

Disable the firewall and SELinux

The official site recommends this; I simply uninstalled iptables-services and firewalld.

setenforce 0

systemctl disable iptables-services firewalld

systemctl stop iptables-services firewalld

Install etcd

Download the binaries

DOWNLOAD_URL=https://storage.googleapis.com/etcd # etcd download location

ETCD_VER=v3.1.5 # etcd version

wget ${DOWNLOAD_URL}/${ETCD_VER}/etcd-${ETCD_VER}-linux-amd64.tar.gz

tar xvf etcd-${ETCD_VER}-linux-amd64.tar.gz

Deploy the files

Write the following into /etc/etcd/etcd.conf:

# [member]
ETCD_NAME=default
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
# ETCD_WAL_DIR=""
# ETCD_SNAPSHOT_COUNT="10000"
# ETCD_HEARTBEAT_INTERVAL="100"
# ETCD_ELECTION_TIMEOUT="1000"
# ETCD_LISTEN_PEER_URLS="http://localhost:2380"
ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"
# ETCD_MAX_SNAPSHOTS="5"
# ETCD_MAX_WALS="5"
# ETCD_CORS=""
#
# [cluster]
# ETCD_INITIAL_ADVERTISE_PEER_URLS="http://localhost:2380"
# if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."
# ETCD_INITIAL_CLUSTER="default=http://localhost:2380"
# ETCD_INITIAL_CLUSTER_STATE="new"
# ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_ADVERTISE_CLIENT_URLS="http://0.0.0.0:2379"
# ETCD_DISCOVERY=""
# ETCD_DISCOVERY_SRV=""
# ETCD_DISCOVERY_FALLBACK="proxy"
# ETCD_DISCOVERY_PROXY=""
#
# [proxy]
# ETCD_PROXY="off"
# ETCD_PROXY_FAILURE_WAIT="5000"
# ETCD_PROXY_REFRESH_INTERVAL="30000"
# ETCD_PROXY_DIAL_TIMEOUT="1000"
# ETCD_PROXY_WRITE_TIMEOUT="5000"
# ETCD_PROXY_READ_TIMEOUT="0"
#
# [security]
# ETCD_CERT_FILE=""
# ETCD_KEY_FILE=""
# ETCD_CLIENT_CERT_AUTH="false"
# ETCD_TRUSTED_CA_FILE=""
# ETCD_PEER_CERT_FILE=""
# ETCD_PEER_KEY_FILE=""
# ETCD_PEER_CLIENT_CERT_AUTH="false"
# ETCD_PEER_TRUSTED_CA_FILE=""
# [logging]
# ETCD_DEBUG="false"
# examples for -log-package-levels etcdserver=WARNING,security=DEBUG
# ETCD_LOG_PACKAGE_LEVELS=""

Put etcd and etcdctl under /usr/bin/ and write the following into /usr/lib/systemd/system/etcd.service:

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=-/etc/etcd/etcd.conf

User=etcd

# set GOMAXPROCS to number of processors

ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /usr/bin/etcd --name=\"${ETCD_NAME}\" --data-dir=\"${ETCD_DATA_DIR}\" --listen-client-urls=\"${ETCD_LISTEN_CLIENT_URLS}\""

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Start and verify

systemctl start etcd

systemctl enable etcd

systemctl status etcd

etcdctl ls

Cluster

Deploying a multi-node cluster is also fairly simple: change etcd.conf and add the corresponding settings to etcd.service.
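One of the settings that changes for a multi-node cluster is ETCD_INITIAL_CLUSTER, which lists every member as name=peer-URL. The sketch below shows how such a value could be assembled. It rests on several assumptions: `initial_cluster` is a hypothetical helper, the member names etcd0 to etcd2 are made up, the IPs reuse the three hosts from this article, and 2380 is the peer port from the commented defaults in etcd.conf.

```shell
#!/bin/bash
# Build an ETCD_INITIAL_CLUSTER value from "name=ip" arguments.
initial_cluster() {
  out=
  for member in "$@"; do
    name=${member%%=*}   # text before the first "="
    ip=${member#*=}      # text after the first "="
    out=${out:+$out,}$name=http://$ip:2380
  done
  printf '%s\n' "$out"
}

# Hypothetical member names; the IPs are the three hosts used in this article.
echo "ETCD_INITIAL_CLUSTER=\"$(initial_cluster etcd0=192.168.11.90 etcd1=192.168.11.91 etcd2=192.168.11.92)\""
```

Each member would additionally need its own ETCD_NAME and peer URL settings, which this sketch does not cover.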

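Install flannel

It can be installed directly with yum install flannel.

The network configuration is fairly involved; I will cover it in a later article.

Install Kubernetes

Following 《Kubernetes权威指南(第二版)》 (The Definitive Guide to Kubernetes, 2nd edition), install from the binaries in the GitHub release.

Run the following commands to install.

wget https://github.com/kubernetes/kubernetes/releases/download/v1.6.0/kubernetes.tar.gz

tar xzf kubernetes.tar.gz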

cd kubernetes

./cluster/get-kube-binaries.sh

cd server

tar xvf kubernetes-server-linux-amd64.tar.gz

rm -f *_tag *.tar

chmod 755 *

mv * /usr/bin

Download kubernetes-server-linux-amd64.tar.gz from:

https://storage.googleapis.com/kubernetes-release/release/v1.6.0

The extracted binaries are:

cloud-controller-manager

hyperkube

kubeadm

kube-aggregator

kube-apiserver

kube-controller-manager

kubectl

kubefed

kubelet

kube-proxy

kube-scheduler

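The directory cluster/juju/layers/kubernetes-master/templates contains templates for the service and environment-variable configuration files; they were originally written for installing with juju.

Configuring the master node

The Kubernetes components to configure on the master node are: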

kube-apiserver

kube-controller-manager

kube-scheduler

kube-proxy

kubectl

Configuring kube-apiserver

Write the file /usr/lib/systemd/system/kube-apiserver.service. For the format, see the reference on service configuration files in CentOS.

[Unit]

Description=Kubernetes API Service

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

After=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/apiserver

ExecStart=/usr/bin/kube-apiserver \
    $KUBE_LOGTOSTDERR \
    $KUBE_LOG_LEVEL \
    $KUBE_ETCD_SERVERS \
    $KUBE_API_ADDRESS \
    $KUBE_API_PORT \
    $KUBELET_PORT \
    $KUBE_ALLOW_PRIV \
    $KUBE_SERVICE_ADDRESSES \
    $KUBE_ADMISSION_CONTROL \
    $KUBE_API_ARGS

Restart=on-failure

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Create the Kubernetes configuration directory /etc/kubernetes.

Add the config file.

###

# kubernetes system config

#

# The following values are used to configure various aspects of all

# kubernetes services, including

#

# kube-apiserver.service

# kube-controller-manager.service

# kube-scheduler.service

# kubelet.service

# kube-proxy.service

# logging to stderr means we get it in the systemd journal

KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug

KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver

KUBE_MASTER="--master=http://sz-pg-oam-docker-test-001.tendcloud.com:8080"

Add the apiserver configuration file.

###

## kubernetes system config

##

## The following values are used to configure the kube-apiserver

##

#

## The address on the local server to listen to.

KUBE_API_ADDRESS="--address=sz-pg-oam-docker-test-001.tendcloud.com"

#

## The port on the local server to listen on.

KUBE_API_PORT="--port=8080"

#

## Port minions listen on

KUBELET_PORT="--kubelet-port=10250"

#

## Comma separated list of nodes in the etcd cluster

KUBE_ETCD_SERVERS="--etcd-servers=http://127.0.0.1:2379"

#

## Address range to use for services

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"

#

## default admission control policies

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

#

## Add your own!

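#KUBE_API_ARGS=""

The --admission-control parameter configures Kubernetes's security mechanisms, which are plugins that perform admission control on the API server. We did not enable ServiceAccount at first, to keep communication inside the cluster simple, with no authentication required. Add it if you need stronger authentication and authorization.

Configuring kube-controller-manager

Write the file /usr/lib/systemd/system/kube-controller.service.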

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/controller-manager

ExecStart=/usr/bin/kube-controller-manager \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_CONTROLLER_MANAGER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Add the controller-manager configuration file under /etc/kubernetes.

###

# The following values are used to configure the kubernetes controller-manager

# defaults from config and apiserver should be adequate

# Add your own!

KUBE_CONTROLLER_MANAGER_ARGS=""

Configuring kube-scheduler

Write the file /usr/lib/systemd/system/kube-scheduler.service.

[Unit]

Description=Kubernetes Scheduler Plugin

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/scheduler

ExecStart=/usr/bin/kube-scheduler \

$KUBE_LOGTOSTDERR \

$KUBE_LOG_LEVEL \

$KUBE_MASTER \

$KUBE_SCHEDULER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Add the scheduler file under /etc/kubernetes.

###

# kubernetes scheduler config

# default config should be adequate

# Add your own!

KUBE_SCHEDULER_ARGS=""

Configuring kube-proxy

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

_MANAGER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

在/etc/kubernetes目录下添加controller-manager配置文件。

The following values are used to configure the kubernetes controller-manager

defaults from config and apiserver should be adequate

Add your own!

KUBE_CONTROLLER_MANAGER_ARGS=“”

### 配置kube-scheduler

编写/usr/lib/systemd/system/kube-scheduler.service文件。

[Unit]

Description=Kubernetes Scheduler Plugin

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/scheduler

ExecStart=/usr/bin/kube-scheduler

$KUBE_LOGTOSTDERR

$KUBE_LOG_LEVEL

$KUBE_MASTER

$KUBE_SCHEDULER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

在/etc/kubernetes目录下添加scheduler文件。

kubernetes scheduler config

default config should be adequate

Add your own!

KUBE_SCHEDULER_ARGS=“”

### 配置kube-proxy

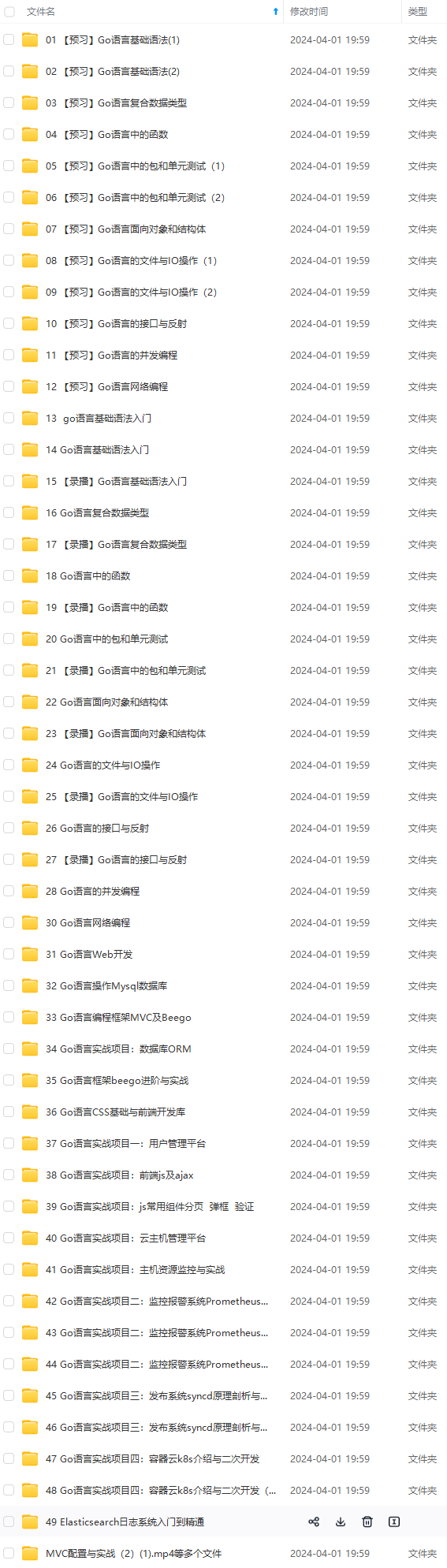

[外链图片转存中...(img-bLITH1wC-1715517586163)]

[外链图片转存中...(img-RFrMMUmA-1715517586164)]

**网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。**

**[需要这份系统化的资料的朋友,可以添加戳这里获取](https://bbs.csdn.net/topics/618658159)**

**一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!**

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?