    import numpy as np
    import torch
    from torch.utils.data.sampler import SubsetRandomSampler

    # train_data and num_workers are assumed to be defined earlier

    # fractions of the dataset held out for validation and for testing
    valid_size = 0.2
    test_size = 0.1

    # shuffle the sample indices, then cut them into validation, test and training parts
    num_train = len(train_data)
    indices = list(range(num_train))
    np.random.shuffle(indices)
    valid_split = int(np.floor(valid_size * num_train))
    test_split = int(np.floor((valid_size + test_size) * num_train))
    valid_idx, test_idx, train_idx = indices[:valid_split], indices[valid_split:test_split], indices[test_split:]
    print(len(valid_idx), len(test_idx), len(train_idx))

    # define samplers that draw batches from each index subset
    train_sampler = SubsetRandomSampler(train_idx)
    valid_sampler = SubsetRandomSampler(valid_idx)
    test_sampler = SubsetRandomSampler(test_idx)

    # prepare data loaders (combine dataset and sampler)
    train_loader = torch.utils.data.DataLoader(train_data, batch_size=32, sampler=train_sampler, num_workers=num_workers)
    valid_loader = torch.utils.data.DataLoader(train_data, batch_size=32, sampler=valid_sampler, num_workers=num_workers)
    test_loader = torch.utils.data.DataLoader(train_data, batch_size=32, sampler=test_sampler, num_workers=num_workers)

Output:

    5511 2756 19291
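
The split arithmetic can be checked in isolation. The following is a minimal sketch, not the article's code: the helper name `split_indices`, the `seed` argument and the total of 27558 samples (the sum of the three printed sizes) are assumptions made for illustration.

```python
import numpy as np

def split_indices(num_samples, valid_size=0.2, test_size=0.1, seed=0):
    """Shuffle range(num_samples) and cut it into validation, test and training parts."""
    rng = np.random.default_rng(seed)          # seeded so the split is reproducible
    indices = rng.permutation(num_samples)
    valid_split = int(np.floor(valid_size * num_samples))
    test_split = int(np.floor((valid_size + test_size) * num_samples))
    valid_idx = indices[:valid_split]
    test_idx = indices[valid_split:test_split]
    train_idx = indices[test_split:]
    return valid_idx, test_idx, train_idx

# 27558 is the total implied by the three sizes printed above
valid_idx, test_idx, train_idx = split_indices(27558)
sizes = (len(valid_idx), len(test_idx), len(train_idx))
print(sizes)  # (5511, 2756, 19291)
```

Because the three slices are taken from one shuffled permutation, no sample can end up in more than one subset.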

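### Model training workflow

1. Load the pretrained model

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

    # pretrained=True will download a pretrained network for us
    # (torchvision 0.13+ prefers weights=models.DenseNet121_Weights.DEFAULT)
    model = models.densenet121(pretrained=True)
    # model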

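PyTorch, like almost every other deep learning framework, uses CUDA to compute forward and backward passes efficiently on the GPU.

In PyTorch, model.cuda() (or model.to(device)) moves the model's parameters and buffers into GPU memory, and model.cpu() moves them back.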
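
As a small illustration of moving a model and its inputs between devices, here is a sketch that runs with or without a GPU. The tiny `nn.Linear` model and the random batch are stand-ins chosen for the example, not the article's network or data.

```python
import torch
from torch import nn

# Pick the GPU when one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# A tiny stand-in model (an assumption for illustration, not the article's DenseNet).
model = nn.Linear(4, 2)
model.to(device)                    # moves parameters in place and returns the module

# Tensors are not moved in place: .to() returns a new tensor that must be reassigned.
batch = torch.randn(3, 4)
batch = batch.to(device)

output = model(batch)               # both operands now live on the same device
param_device = next(model.parameters()).device.type
print(param_device, output.device.type)
```

Writing `.to(device)` everywhere, rather than `.cuda()`, keeps the same script usable on machines without a GPU.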

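    import os
    import tensorwatch as tw

    # make the Graphviz binaries visible on PATH (Windows install location)
    os.environ["PATH"] += os.pathsep + "C:/Program Files (x86)/Graphviz2.38/bin"

    # draw the network for a single 3x224x224 input
    tw.draw_model(model, [1, 3, 224, 224])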

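**Visualizing the PyTorch network structure**

Required libraries:

* graphviz

* tensorwatch

The draw\_model function takes three arguments: the model, input\_shape, and orientation, which can be 'LR' or 'TB' for a left-to-right or top-to-bottom layout.

**Counting network parameters**

The model\_stats method reports the parameter statistics of each layer.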

    tw.draw_model(model, [1, 3, 224, 224], orientation='LR')

    tw.model_stats(model, [1, 3, 224, 224])
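
Parameter counts can also be cross-checked with plain PyTorch, without tensorwatch. This is a rough sketch under assumptions: `count_parameters` is a hypothetical helper, and the small `nn.Sequential` network stands in for the real model.

```python
import torch
from torch import nn

def count_parameters(module):
    """Return (total, trainable) parameter counts for a module."""
    total = sum(p.numel() for p in module.parameters())
    trainable = sum(p.numel() for p in module.parameters() if p.requires_grad)
    return total, trainable

# Stand-in network for illustration; with the article's model you would pass `model` instead.
net = nn.Sequential(
    nn.Linear(1024, 460),
    nn.ReLU(),
    nn.Linear(460, 2),
)

per_layer = {name: count_parameters(layer)[0] for name, layer in net.named_children()}
total, trainable = count_parameters(net)
print(per_layer)          # {'0': 471500, '1': 0, '2': 922}
print(total, trainable)   # 472422 472422
```

A linear layer with `n` inputs and `m` outputs holds `n * m` weights plus `m` biases, which is where 1024 * 460 + 460 = 471500 comes from.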

2. Freeze the convolutional layers and replace the fully connected layer with a custom classifier

    # Freeze the model parameters, then define the fully connected network to attach
    # to the model, the loss function and the optimizer.
    # After that, move the model to the GPU.
    for param in model.parameters():
        param.requires_grad = False  # note the spelling: requires_grad, not require_grad

    fc = nn.Sequential(
        nn.Linear(1024, 460),
        nn.ReLU(),
        nn.Dropout(0.4),
        nn.Linear(460, 2),
        nn.LogSoftmax(dim=1)
    )
    model.classifier = fc
    criterion = nn.NLLLoss()

    # only the classifier's parameters are handed to the optimizer, so only they are updated
    optimizer = torch.optim.Adam(model.classifier.parameters(), lr=0.003)
    model.to(device)

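Freezing the model parameters keeps the pretrained weights of the convolutional layers, which then act as a fixed feature extractor.

Next we define our fully connected network. Its input size must match the number of features produced by the convolutional part, which is 1024 in the example code.

We also choose the activation function and add dropout, which randomly switches off neurons in a layer during training so that the remaining nodes are forced to share information, and this helps to avoid overfitting.

Once the custom fully connected network is defined, it replaces the fully connected classifier of the pretrained model.

Finally we define the loss function and the optimizer, and move the model to the GPU so that it is ready for training.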
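
Freezing only works if the attribute is spelled `requires_grad`; a misspelling is accepted silently and freezes nothing. The sketch below shows this on a small stand-in network. `TinyNet` and its layer sizes are assumptions made to keep the example light, not the pretrained DenseNet.

```python
from torch import nn

# Stand-in for a pretrained network: a "features" part and a "classifier" head.
# The layer sizes are arbitrary assumptions chosen to keep the example small.
class TinyNet(nn.Module):
    def __init__(self):
        super().__init__()
        self.features = nn.Sequential(nn.Linear(8, 16), nn.ReLU())
        self.classifier = nn.Linear(16, 4)

    def forward(self, x):
        return self.classifier(self.features(x))

model = TinyNet()

# A misspelled attribute just creates a new, unused Python attribute and freezes nothing.
for param in model.parameters():
    param.require_grad = False
still_trainable = sum(p.requires_grad for p in model.parameters())

# The correct attribute is requires_grad.
for param in model.parameters():
    param.requires_grad = False

# A freshly created head has requires_grad=True by default, so only it will train.
model.classifier = nn.Sequential(nn.Linear(16, 2), nn.LogSoftmax(dim=1))
trainable_names = [n for n, p in model.named_parameters() if p.requires_grad]
print(still_trainable, trainable_names)
```

Listing the trainable parameter names after the swap is a cheap sanity check that only the new classifier will receive updates.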

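3. Train the custom classifier for the specific task

During training we iterate over the DataLoader in every epoch. For each batch, the loss is computed with the criterion (the loss function).

loss.backward() computes the gradients of the loss with respect to the model parameters.

optimizer.zero\_grad() clears any accumulated gradients. PyTorch adds each new gradient to the one already stored, so they have to be reset before every backward pass.

optimizer.step() updates the model parameters using Adam, a variant of stochastic gradient descent that combines momentum with per-parameter adaptive learning rates.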
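
The order of these calls can be sketched as a single training step. Everything here is a toy stand-in (random inputs, a one-layer model, the `train_step` helper) assumed for illustration only; the loss function and optimizer mirror the ones named in the text.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Toy stand-ins (assumptions, not the article's data): 8 samples, 4 features, 2 classes.
inputs = torch.randn(8, 4)
labels = torch.tensor([0, 1, 0, 1, 0, 1, 0, 1])

model = nn.Sequential(nn.Linear(4, 2), nn.LogSoftmax(dim=1))
criterion = nn.NLLLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.003)

def train_step(batch_inputs, batch_labels):
    """Run one optimisation step and return the batch loss as a float."""
    optimizer.zero_grad()                     # clear gradients left from the previous step
    logps = model(batch_inputs)               # forward pass: log-probabilities
    loss = criterion(logps, batch_labels)     # negative log-likelihood of the true classes
    loss.backward()                           # gradients of the loss w.r.t. the parameters
    optimizer.step()                          # Adam update using those gradients
    return loss.item()

weights_before = model[0].weight.detach().clone()
first_loss = train_step(inputs, labels)
weights_after = model[0].weight.detach().clone()
print(first_loss)
```

Calling `zero_grad()` at the start of the step or just before `backward()` is equivalent, as long as it happens once per step.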

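To prevent overfitting we use a powerful technique called early stopping. The idea is simple: stop training when performance on the validation set starts to degrade. The loop below uses a simple form of it: it runs a fixed number of epochs and saves the weights only when the validation loss improves, so the checkpoint on disk is always the best one seen.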
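
A stricter variant actually interrupts training after several epochs without improvement. The sketch below is one possible way to do that, not the article's method: the `EarlyStopping` class, its `patience` parameter and the loss values are all hypothetical.

```python
class EarlyStopping:
    """Track the best validation loss and signal when to stop.

    `patience` (a hypothetical parameter, not from the article) is the number of
    consecutive epochs without improvement that we tolerate before stopping.
    """

    def __init__(self, patience=2):
        self.patience = patience
        self.best_loss = float("inf")
        self.bad_epochs = 0

    def step(self, valid_loss):
        """Record one epoch; return True if training should stop."""
        if valid_loss < self.best_loss:
            self.best_loss = valid_loss   # improvement: a checkpoint would be saved here
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses: they improve for three epochs, then get worse.
losses = [0.60, 0.45, 0.40, 0.42, 0.47, 0.50]
stopper = EarlyStopping(patience=2)
stopped_at = None
for epoch, loss in enumerate(losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch
        break

print(stopped_at, stopper.best_loss)  # 5 0.4
```

A patience above 1 avoids stopping on a single noisy epoch, at the cost of a few extra epochs of training.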

    import time

    epochs = 10
    # lowest validation loss seen so far; start at infinity so the first epoch is always saved
    valid_loss_min = np.inf
    torch.backends.cudnn.benchmark = True
    model.to(device)

    for epoch in range(epochs):
        start = time.time()
        # scheduler.step()
        model.train()
        train_loss = 0.0
        valid_loss = 0.0

        for index, (inputs, labels) in enumerate(train_loader):
            # move input and label tensors to the selected device
            inputs, labels = inputs.to(device), labels.to(device)
            logps = model(inputs)
            loss = criterion(logps, labels)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            train_loss += loss.item()

        # evaluate on the validation set without tracking gradients
        model.eval()
        accuracy = 0
        with torch.no_grad():
            for inputs, labels in valid_loader:
                inputs, labels = inputs.to(device), labels.to(device)
                logps = model(inputs)
                batch_loss = criterion(logps, labels)
                valid_loss += batch_loss.item()

                # calculate accuracy
                ps = torch.exp(logps)
                top_p, top_class = ps.topk(1, dim=1)
                equals = top_class == labels.view(*top_class.shape)
                accuracy += torch.mean(equals.type(torch.FloatTensor)).item()

        # calculate average losses
        train_loss = train_loss / len(train_loader)
        valid_loss = valid_loss / len(valid_loader)
        valid_accuracy = accuracy / len(valid_loader)

        # print training/validation statistics
        print('Epoch: {} \tTraining Loss: {:.6f} \tValidation Loss: {:.6f} \tValidation Accuracy: {:.6f}'.format(
            epoch + 1, train_loss, valid_loss, valid_accuracy))

        # save the weights whenever the validation loss improves
        if valid_loss <= valid_loss_min:
            print('Validation loss decreased ({:.6f} --> {:.6f}). Saving model ...'.format(
                valid_loss_min,
                valid_loss))
            model_save_name = "Malaria.pt"
            path = f"/content/drive/My Drive/{model_save_name}"
            torch.save(model.state_dict(), path)
            valid_loss_min = valid_loss

        print(f"Time per epoch: {(time.time() - start):.3f} seconds")
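
The accuracy computation inside the validation pass can be tried on a handful of values. This is a sketch with made-up probabilities and labels, assumed only for illustration.

```python
import torch

# Made-up log-probabilities for 4 samples and 2 classes.
logps = torch.log(torch.tensor([[0.9, 0.1],
                                [0.2, 0.8],
                                [0.6, 0.4],
                                [0.3, 0.7]]))
labels = torch.tensor([0, 1, 1, 1])

ps = torch.exp(logps)                                  # back to probabilities
top_p, top_class = ps.topk(1, dim=1)                   # best probability and its class, shape (4, 1)
equals = top_class == labels.view(*top_class.shape)    # reshape labels to (4, 1) before comparing
batch_accuracy = equals.float().mean().item()
print(batch_accuracy)  # 0.75
```

The `view` call matters: comparing a `(4, 1)` tensor with a `(4,)` tensor would broadcast to a `(4, 4)` result and give a meaningless accuracy.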

Load the saved model from disk for testing

    # load from the same path the training loop saved to
    model.load_state_dict(torch.load(path))
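
A save and load round trip with `state_dict` can be sketched as follows. The one-layer model, the temporary directory and the file name `checkpoint.pt` are assumptions for illustration; `map_location` is included because a checkpoint written on a GPU machine is often read on a CPU-only one.

```python
import os
import tempfile

import torch
from torch import nn

torch.manual_seed(0)
trained = nn.Linear(4, 2)            # stand-in for the trained model

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "checkpoint.pt")    # hypothetical file name
    torch.save(trained.state_dict(), path)            # saves only the tensors, not the class

    # Loading needs a model of the same architecture to receive the weights.
    restored = nn.Linear(4, 2)
    # map_location="cpu" lets a checkpoint saved on a GPU load on a CPU-only machine.
    restored.load_state_dict(torch.load(path, map_location="cpu"))

restored.eval()                      # switch dropout/batch-norm layers to inference mode
same_weights = all(
    torch.equal(a, b)
    for a, b in zip(trained.state_dict().values(), restored.state_dict().values())
)
print(same_weights)  # True
```

Because only the tensors are stored, the classifier must be rebuilt with the same layer sizes before `load_state_dict` is called.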

    def test(model, criterion):
        # monitor test loss and accuracy
        test_loss = 0.
        correct = 0.
        total = 0.

        model.eval()  # make sure dropout is disabled while testing
        with torch.no_grad():
            for batch_idx, (data, target) in enumerate(test_loader):
                # move to the same device as the model
                data, target = data.to(device), target.to(device)
                # forward pass: compute predicted outputs by passing inputs to the model
                output = model(data)
                # calculate the loss
                loss = criterion(output, target)
                # update the running average of the test loss
                test_loss = test_loss + ((1 / (batch_idx + 1)) * (loss.item() - test_loss))
                # convert output log-probabilities to the predicted class
                pred = output.max(1, keepdim=True)[1]
                # compare predictions to the true labels
                correct += pred.eq(target.view_as(pred)).sum().item()
                total += data.size(0)

        print('Test Loss: {:.6f}\n'.format(test_loss))
        print('\nTest Accuracy: %2d%% (%2d/%2d)' % (
            100. * correct / total, correct, total))

    test(model, criterion)
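
The running average used for the test loss is the incremental form of an ordinary mean. A minimal sketch with made-up batch losses (the `running_mean` helper is hypothetical) shows that both give the same number:

```python
def running_mean(values):
    """Average `values` one at a time without storing their sum."""
    mean = 0.0
    for batch_idx, value in enumerate(values):
        # same update rule as the test loop: move the mean toward the new value
        mean = mean + (1 / (batch_idx + 1)) * (value - mean)
    return mean

batch_losses = [0.9, 0.7, 0.4, 0.6]   # made-up per-batch losses
incremental = running_mean(batch_losses)
direct = sum(batch_losses) / len(batch_losses)
print(incremental, direct)
```

Note that this averages per-batch losses, so a smaller final batch counts as much as a full one; for an exact per-sample average, each batch loss would need to be weighted by its batch size.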

**Visualizing the results**

    def load_input_image(img_path):
        image = Image.open(img_path)
        prediction_transform = transforms.Compose([transforms.Resize(size=(224, 224)),

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上C C++开发知识点,真正体系化!

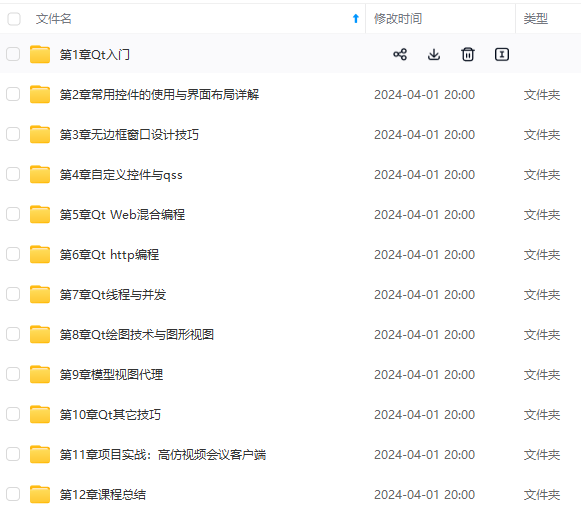

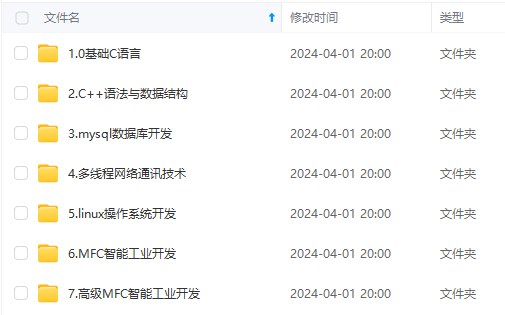

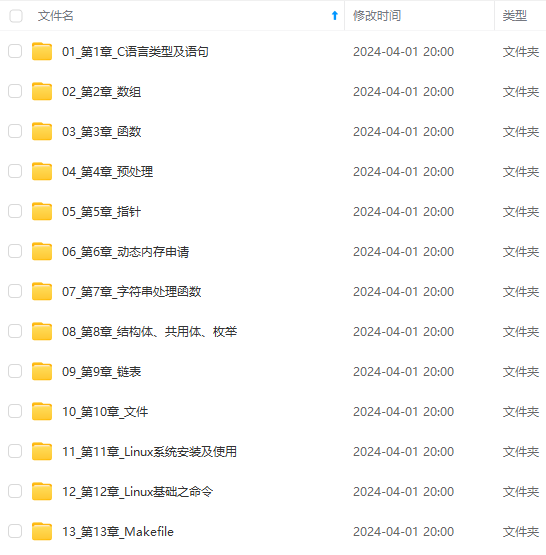

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

g-MmbRAmyX-1715566670383)]

[外链图片转存中…(img-D8sCnGNI-1715566670383)]

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,涵盖了95%以上C C++开发知识点,真正体系化!

由于文件比较多,这里只是将部分目录截图出来,全套包含大厂面经、学习笔记、源码讲义、实战项目、大纲路线、讲解视频,并且后续会持续更新

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?