Environment:

Three machines (CentOS 6.5):

Master 192.168.203.148

Slave1 192.168.203.149

Slave2 192.168.203.150
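
The Spark configuration further down refers to these machines by name, so each node has to resolve Master, Slave1, and Slave2. A minimal sketch, assuming name resolution is done through /etc/hosts (the original post does not say how); `HOSTS_FILE` is a hypothetical variable that defaults to a temporary file so the snippet can be tried safely:

```shell
# Assumption: hostnames are resolved via /etc/hosts on every node.
# HOSTS_FILE is a hypothetical variable; on a real node these lines would be
# appended to /etc/hosts as root instead of written to a temp file.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

cat > "${HOSTS_FILE}" <<'EOF'
192.168.203.148 Master
192.168.203.149 Slave1
192.168.203.150 Slave2
EOF

# Show the generated entries for review.
cat "${HOSTS_FILE}"
```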

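Step 1: Environment

Spark configuration:

Installing Spark is straightforward and well covered by online tutorials, so only the configuration is described here:

The main setting is the worker list in ${SPARK_HOME}/conf/slaves: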

Master

Slave1

Slave2

The environment variables go in ${SPARK_HOME}/conf/spark-env.sh:

export JAVA_HOME=/usr/local/java/jdk1.8.0_65

export SCALA_HOME=/usr/local/scala/scala-2.10.5

export SPARK_MASTER_IP=192.168.203.148

export SPARK_WORKER_MEMORY=1g

export HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop
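
The two configuration files can be written in one pass. This is only a sketch using the values listed above; `CONF_DIR` is a hypothetical variable that defaults to a temporary directory, and on a real node it would point at ${SPARK_HOME}/conf. The same files would then need to be present on all three machines.

```shell
# CONF_DIR is an assumption for illustration; set it to ${SPARK_HOME}/conf
# on a real node. It defaults to a temp directory so nothing is overwritten.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Worker list: one hostname per line.
printf '%s\n' Master Slave1 Slave2 > "${CONF_DIR}/slaves"

# Environment settings for the Spark daemons (values copied from the post).
cat > "${CONF_DIR}/spark-env.sh" <<'EOF'
export JAVA_HOME=/usr/local/java/jdk1.8.0_65
export SCALA_HOME=/usr/local/scala/scala-2.10.5
export SPARK_MASTER_IP=192.168.203.148
export SPARK_WORKER_MEMORY=1g
export HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop
EOF

echo "Wrote slaves and spark-env.sh to ${CONF_DIR}"
```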

ZooKeeper setup

Kafka setup:

See the posts on _zhenwei's blog:

http://blog.csdn.net/wang_zhenwei/article/details/48346327

http://blog.csdn.net/wang_zhenwei/article/details/48357131

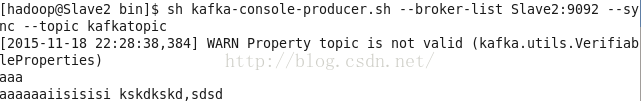

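Once installation is complete, the messages sent look like this:

Step 2: Download the jars

Spark Streaming depends on additional jars to read from Kafka, so the following also need to be downloaded: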

spark-streaming-kafka_2.11-0.8.2.2.jar

Download: http://mvnrepository.com/artifact/org.apache.kafka/kafka_2.11/0.8.2.2

kafka_2.10-0.8.1.jar,

metrics-core-2.2.0.jar,

zkclient-0.4.jar
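
One common way to make these jars available to a job is the `--jars` option of `spark-submit`, which takes a comma-separated list. The sketch below only builds that list and prints the command instead of running it. `LIB_DIR`, the class name `com.example.KafkaWordCount`, and `app.jar` are hypothetical placeholders, and the master URL assumes the standalone default port 7077; none of these come from the original post.

```shell
# LIB_DIR is an assumed download location for the jars listed above.
LIB_DIR="${LIB_DIR:-/usr/local/spark/lib}"

# Join the jar paths with commas, as --jars expects.
JARS=""
for jar in \
  spark-streaming-kafka_2.11-0.8.2.2.jar \
  kafka_2.10-0.8.1.jar \
  metrics-core-2.2.0.jar \
  zkclient-0.4.jar
do
  JARS="${JARS:+${JARS},}${LIB_DIR}/${jar}"
done

# Print the command for review; the class and application jar are placeholders.
echo "spark-submit --master spark://192.168.203.148:7077 --jars ${JARS} --class com.example.KafkaWordCount app.jar"
```

Printing the command first makes it easy to confirm that every jar path exists before actually submitting.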

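This article describes how to set up a Spark and Kafka environment on three CentOS 6.5 machines, covering environment configuration, downloading the required jars, and creating and running a Scala program. It also discusses the "resources not accepted" error that can appear when submitting a Spark job and how to resolve it, and gives notes on Spark configuration.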
