Scraping the Douban Movie Top250 Ranking with XPath

I. Crawling approach

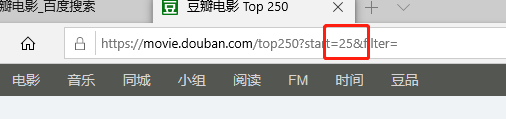

(1) The page URL pattern

This is page 2 of the Douban Movie Top250 list (start=25).

This is page 3 of the Douban Movie Top250 list (start=50).

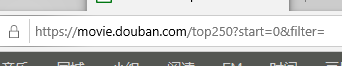

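Although the first page has the URL:

changing **start=()** to 0 also returns the first page. Following this pattern, we can crawl the information on all 10 pages (25 movies per page).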
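The paging rule can be written as a simple formula. Page n, counting from 1, starts at offset 25 * (n - 1), so the 250 movies fit on 250 / 25 = 10 pages. Below is a minimal sketch of that rule. The helper name `page_url` and the constants are illustrative. The URL template matches the one used in the implementation.

```python
# Base URL template; {} is filled with the start offset of each page
AIM_URL = "https://movie.douban.com/top250?start={}&filter="
PER_PAGE = 25   # movies listed on each page
TOTAL = 250     # size of the Top250 list


def page_url(page):
    """Return the URL of page `page` (1-based) of the Top250 list."""
    return AIM_URL.format((page - 1) * PER_PAGE)


page_count = TOTAL // PER_PAGE  # 10 pages
urls = [page_url(p) for p in range(1, page_count + 1)]

for u in urls[:3]:
    print(u)  # start=0, start=25, start=50
```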

(2) Grab the big block first, then the small pieces

This is where the information we need is located.

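First, grab the block of markup that contains each movie's information:

```python
movieItemList = selector.xpath('//div[starts-with(@class,"info")]')
```

Then, inside each block, extract the title, the alternative titles, the link, the cast, the rating and the classic quote: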

```python
title = eachmovie.xpath('div[@class="hd"]/a/span[@class="title"]/text()')
othertitle = eachmovie.xpath('div[@class="hd"]/a/span[@class="other"]/text()')
link = eachmovie.xpath('div[@class="hd"]/a/@href')[0]  # [0] takes the string out of the list ['...']
Actor = eachmovie.xpath('div[@class="bd"]/p[@class=""]/text()')
star = eachmovie.xpath('div[@class="bd"]/div[@class="star"]/span[@class="rating_num"]/text()')[0]
quote = eachmovie.xpath('div[@class="bd"]/p[@class="quote"]/span/text()')
```

II. Implementation
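You can try the "big block first, then small pieces" idea offline with lxml before writing the full crawler. The sketch below runs it on a small hand-written fragment. The fragment is hypothetical: it only imitates the nesting that the XPath expressions above expect and is not copied from Douban. `extract_movies` is an illustrative name. The last two lines show why the per-movie paths have no leading `//`.

```python
import lxml.html

# Hypothetical fragment imitating the nesting the XPath expressions expect
SAMPLE = """
<html><body>
  <div class="item">
    <div class="info">
      <div class="hd">
        <a href="https://example.com/movie/1/"><span class="title">Movie A</span></a>
      </div>
      <div class="bd">
        <div class="star"><span class="rating_num">9.5</span></div>
        <p class="quote"><span>A memorable line.</span></p>
      </div>
    </div>
  </div>
  <div class="item">
    <div class="info">
      <div class="hd">
        <a href="https://example.com/movie/2/"><span class="title">Movie B</span></a>
      </div>
      <div class="bd">
        <div class="star"><span class="rating_num">8.7</span></div>
      </div>
    </div>
  </div>
</body></html>
"""


def extract_movies(source):
    selector = lxml.html.document_fromstring(source)
    # Big: one node per movie
    movies = selector.xpath('//div[starts-with(@class,"info")]')
    rows = []
    for movie in movies:
        # Small: paths without a leading // are resolved inside `movie` only
        title = movie.xpath('div[@class="hd"]/a/span[@class="title"]/text()')
        link = movie.xpath('div[@class="hd"]/a/@href')
        star = movie.xpath('div[@class="bd"]/div[@class="star"]/span[@class="rating_num"]/text()')
        quote = movie.xpath('div[@class="bd"]/p[@class="quote"]/span/text()')
        rows.append({
            'title': ''.join(title),
            'url': link[0],
            'star': star[0],
            'quote': quote[0] if quote else '',  # Movie B has no quote
        })
    return rows


rows = extract_movies(SAMPLE)
print(rows)

# Pitfall: a path starting with // searches the whole document again,
# even when it is evaluated on a single movie's node.
first = lxml.html.document_fromstring(SAMPLE).xpath('//div[starts-with(@class,"info")]')[0]
print(first.xpath('div[@class="hd"]/a/span[@class="title"]/text()'))  # ['Movie A']
print(first.xpath('//span[@class="title"]/text()'))                   # ['Movie A', 'Movie B']
```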

(1) Modules

```python
import requests
import lxml.html
import csv
```

(2) The pages to crawl

The `{}` is a placeholder because each page of the list has a different URL. It is filled in with the `format` method.

```python
aimurl = "https://movie.douban.com/top250?start={}&filter="
```

(3) Getting the page source

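Passing `headers=head` makes the request carry a browser User-Agent string. This is the simplest trick for getting past basic anti-scraping checks.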
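The effect of `headers=head` can be inspected without sending anything. The sketch below prepares two requests with a `requests.Session`, one without custom headers and one with the browser string from `getSource`, and compares their `User-Agent` headers. It assumes the `requests` package is installed. No network call is made.

```python
import requests

# Same browser User-Agent string as in getSource
HEAD = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
                      '(KHTML, like Gecko) Chrome/70.0.3538.102 Safari/537.36 Edge/18.18362'}
URL = "https://movie.douban.com/top250?start=0&filter="

session = requests.Session()

# Prepared but not sent: without custom headers the session's default
# User-Agent identifies the client as python-requests.
plain = session.prepare_request(requests.Request('GET', URL))

# Same request with headers=HEAD: the browser string replaces the default.
disguised = session.prepare_request(requests.Request('GET', URL, headers=HEAD))

print(plain.headers['User-Agent'])      # starts with "python-requests/"
print(disguised.headers['User-Agent'])  # the browser string above
```

The default `python-requests/...` value is easy for a server to recognize as a script. Replacing it is all that `headers=head` does. It does not handle cookies, rate limits or other checks.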

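```python
def getSource(url):
    # Send a browser User-Agent instead of requests' default "python-requests/..."
    head = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.102 Safari/537.36 Edge/18.18362'}
    content = requests.get(url, headers=head, timeout=10)  # fetch the page source
    content.raise_for_status()  # stop on HTTP errors instead of parsing an error page
    content.encoding = "utf-8"  # decode the body as UTF-8
    return content.text  # .text uses the encoding set above; .content would be raw bytes
```

(4) Getting the information for each movie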

The `replace` calls strip the surplus spaces, carriage returns and line breaks from the cast text.
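The sketch below shows what the `replace` chain does to a piece of cast text. It compares the chain with a common alternative, `" ".join(text.split())`. The sample strings are hypothetical, and whether Douban's real text contains non-breaking spaces (`\xa0`) is an assumption here. `str.split()` with no argument splits on every Unicode whitespace character, `\xa0` included. The chain removes only ordinary spaces, `\r` and `\n`.

```python
# Hypothetical text() pieces: line breaks, indentation and (possibly)
# non-breaking spaces around the director / cast / year information.
parts = [
    "\n                            Director: Some Director\xa0\xa0\xa0Starring: Some Actor",
    "\n                            1994\xa0/\xa0USA\xa0/\xa0Drama\n                        ",
]
raw = ''.join(parts)

# The chain used in getEveryItem: removes ordinary spaces, \r and \n only
chained = raw.replace(" ", "").replace("\r", "").replace("\n", "")

# Alternative: split on any whitespace (including \xa0), rejoin with one space
collapsed = " ".join(raw.split())

print(repr(chained))
print(repr(collapsed))  # 'Director: Some Director Starring: Some Actor 1994 / USA / Drama'
```

The chain also glues Latin-script names together ("SomeDirector"). The split-and-join version keeps one space between words. Which one is preferable depends on how the CSV will be used.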

```python
def getEveryItem(source):
    selector = lxml.html.document_fromstring(source)
    # Big: one <div class="info"> block per movie
    movieItemList = selector.xpath('//div[starts-with(@class,"info")]')
    movieList = []
    for eachmovie in movieItemList:
        movieDict = {}
        # Small: relative paths, evaluated inside the current movie block only
        title = eachmovie.xpath('div[@class="hd"]/a/span[@class="title"]/text()')
        print(title)
        othertitle = eachmovie.xpath('div[@class="hd"]/a/span[@class="other"]/text()')
        link = eachmovie.xpath('div[@class="hd"]/a/@href')[0]  # [0] takes the string out of the list ['...']
        Actor = eachmovie.xpath('div[@class="bd"]/p[@class=""]/text()')
        star = eachmovie.xpath('div[@class="bd"]/div[@class="star"]/span[@class="rating_num"]/text()')[0]
        quote = eachmovie.xpath('div[@class="bd"]/p[@class="quote"]/span/text()')
        if quote:  # some movies have no quote; use '' in that case
            quote = quote[0]
        else:
            quote = ''
        # Store the information collected above in a dict
        movieDict['title'] = ''.join(title + othertitle)
        movieDict['url'] = link
        movieDict['Actor'] = ''.join(Actor).replace(" ", "").replace("\r", "").replace("\n", "")
        movieDict['star'] = star
        movieDict['quote'] = quote
        print(movieDict)
        movieList.append(movieDict)
    return movieList
```

(5) Writing the results to a CSV file

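```python
def writeData(movieList):
    # newline='' stops the csv module from producing blank lines between rows on Windows;
    # encoding='utf-8-sig' (UTF-8 with a BOM) helps if the file will be opened in Excel
    with open('movie.csv', 'w', encoding='UTF-8', newline='') as f:
        writer = csv.DictWriter(f, fieldnames=['title', 'url', 'Actor', 'star', 'quote'])
        writer.writeheader()
        for each in movieList:
            print(each)
            writer.writerow(each)
```

(6) Main program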

Because the ranking spans 10 pages, a `for` loop fills each page's start offset into the base URL defined at the beginning:
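Keeping the page parser separate from the loop makes the loop and the CSV step testable without network access. The sketch below is only an illustration. `fake_get_every_item` is a hypothetical stand-in for `getEveryItem(getSource(url))` that returns one dummy row per page. The rows are written to an in-memory `io.StringIO` buffer instead of `movie.csv`.

```python
import csv
import io

AIM_URL = "https://movie.douban.com/top250?start={}&filter="
FIELDNAMES = ['title', 'url', 'Actor', 'star', 'quote']


def fake_get_every_item(page_url):
    """Hypothetical stand-in for getEveryItem(getSource(page_url)):
    returns one dummy row per page so the loop runs offline."""
    return [{'title': 'placeholder', 'url': page_url, 'Actor': '', 'star': '', 'quote': ''}]


def crawl(parse_page, pages=10, per_page=25):
    movie_list = []
    for i in range(pages):
        # start = 0, 25, ..., 225
        movie_list += parse_page(AIM_URL.format(i * per_page))
    return movie_list


def to_csv_text(movie_list):
    buf = io.StringIO()  # in-memory file instead of movie.csv
    writer = csv.DictWriter(buf, fieldnames=FIELDNAMES)
    writer.writeheader()
    writer.writerows(movie_list)
    return buf.getvalue()


movies = crawl(fake_get_every_item)
csv_text = to_csv_text(movies)
print(len(movies))               # 10
print(csv_text.splitlines()[0])  # title,url,Actor,star,quote
```

For a real run, you would pass a function that calls `getSource` and `getEveryItem` in place of the stub.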

```python
if __name__ == "__main__":
    movieList = []
    for i in range(10):  # 10 pages, 25 movies each
        pagelink = aimurl.format(i * 25)  # start = 0, 25, ..., 225
        print(pagelink)
        source = getSource(pagelink)
        movieList += getEveryItem(source)
    print(movieList[:10])
    writeData(movieList)
```

III. Results:
