这里简单做一个wordcount代码,代码如下

scala版本

object WordCount {

def main(args: Array[String]): Unit = {

// 创建SparkConf

val conf = new SparkConf().setAppName("WordCount")

// 创建SparkContext

val sc = new SparkContext(conf)

// 通过SparkContext创建RDD

val lines: RDD[String] = sc.textFile(args(0))

// 切分压平

val words = lines.flatMap(_.split(" "))

// 将单词和1组合

val wordOne: RDD[(String, Int)] = words.map((_, 1))

// 分组聚合

val reduced: RDD[(String, Int)] = wordOne.reduceByKey(_ + _)

// 排序

val sorted: RDD[(String, Int)] = reduced.sortBy(_._2, false)

// 将计算好的数据保存到hdfs中

sorted.saveAsTextFile(args(1))

// 释放资源

sc.stop()

}

}

java版本

/**

* 使用Lambda表达式

*/

public class JavaLambdaWordCount {

public static void main(String[] args) {

// 创建conf

SparkConf conf = new SparkConf().setAppName("JavaLambdaWordCount");

// 创建sparkcontext

JavaSparkContext jsc = new JavaSparkContext(conf);

// 读取文件

JavaRDD<String> lines = jsc.textFile(args[0]);

// 切分压平

JavaRDD<String> words = lines.flatMap(line -> Arrays.stream(line.split(" ")).iterator());

// 单词和1组合

JavaPairRDD<String, Integer> wordAndOne = words.mapToPair(word -> Tuple2.apply(word, 1));

// 分组聚合

JavaPairRDD<String, Integer> reduced = wordAndOne.reduceByKey((x, y) -> x + y);

// 元组内元素位置置换

JavaPairRDD<Integer, String> swaped = reduced.mapToPair(tp -> tp.swap());

// 排序

JavaPairRDD<Integer, String> sorted = swaped.sortByKey(false);

// 元组内元素位置置换

JavaRDD<Tuple2<String, Integer>> result = sorted.map(tp -> tp.swap());

// 将结果集保存到hdfs

result.saveAsTextFile(args[1]);

// 释放资源

jsc.stop();

}

}

/**

* 不使用Lambda表达式

*/

public class JavaWordCount {

public static void main(String[] args) {

SparkConf conf = new SparkConf().setAppName("JavaWordCount");

JavaSparkContext jsc = new JavaSparkContext(conf);

JavaRDD<String> lines = jsc.textFile(args[0]);

JavaRDD<String> words = lines.flatMap(new FlatMapFunction<String, String>() {

@Override

public Iterator<String> call(String s) throws Exception {

String[] fields = s.split(" ");

return Arrays.asList(fields).iterator();

}

});

JavaPairRDD<String, Integer> wordAndOne = words.mapToPair(new PairFunction<String, String, Integer>() {

@Override

public Tuple2<String, Integer> call(String s) throws Exception {

return Tuple2.apply(s, 1);

}

});

JavaPairRDD<String, Integer> reduced = wordAndOne.reduceByKey(new Function2<Integer, Integer, Integer>() {

@Override

public Integer call(Integer integer, Integer integer2) throws Exception {

return integer + integer2;

}

});

JavaPairRDD<Integer, String> swap = reduced.mapToPair(new PairFunction<Tuple2<String, Integer>, Integer, String>() {

@Override

public Tuple2<Integer, String> call(Tuple2<String, Integer> tp) throws Exception {

return tp.swap();

}

});

JavaPairRDD<Integer, String> sorted = swap.sortByKey(false);

JavaPairRDD<String, Integer> result = sorted.mapToPair(new PairFunction<Tuple2<Integer, String>, String, Integer>() {

@Override

public Tuple2<String, Integer> call(Tuple2<Integer, String> tp) throws Exception {

return Tuple2.apply(tp._2, tp._1);

}

});

result.saveAsTextFile(args[1]);

jsc.stop();

}

}

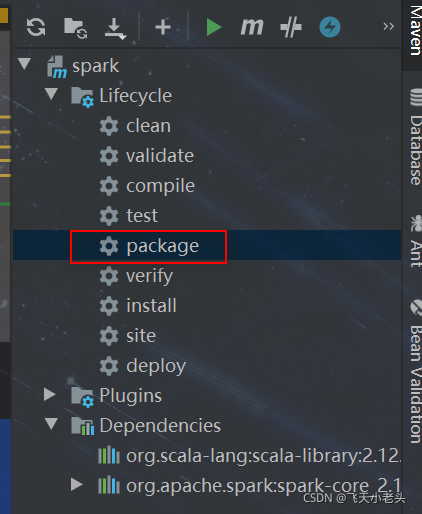

通过maven将代码打成jar包,集群中org.scala-lang和org.apache.spark的jar包资源都是存在的所以不需要将这两个也打进去,可在pom文件中加入scope,这样就不会将这两个的jar包资源打进去,然后双击package即可

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

<scope>provided</scope>

</dependency>

<!-- 导入spark的依赖,core指的是RDD编程API -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.12</artifactId>

<version>${spark.version}</version>

<scope>provided</scope>

</dependency>

.在target中找到刚刚打好的jar包,上传到集群中,执行spark-submit提交任务即可,如下

./bin/spark-submit --master spark://lx01:7077 --executor-memory 512m --total-executor-cores 8 --class cn.jin.spark.WordCount /opt/apps/spark-3.0.0-bin-hadoop2.7/spark-1.0-SNAPSHOT.jar hdfs://lx01:9000/test/wordcount.txt hdfs://lx01:9000/test/out

# --master 是指定将任务提交给spark的哪个master

# --executor-memory 是指分配的executor的内存资源

# --total-executor-cores 是指分配的executor CPU核数

# --class 是指运行的主类名 然后输入jar包的路径即可,最后两个是传入的参数,在这里是从hdfs读取文件的路径和将结果写入hdfs的路径.

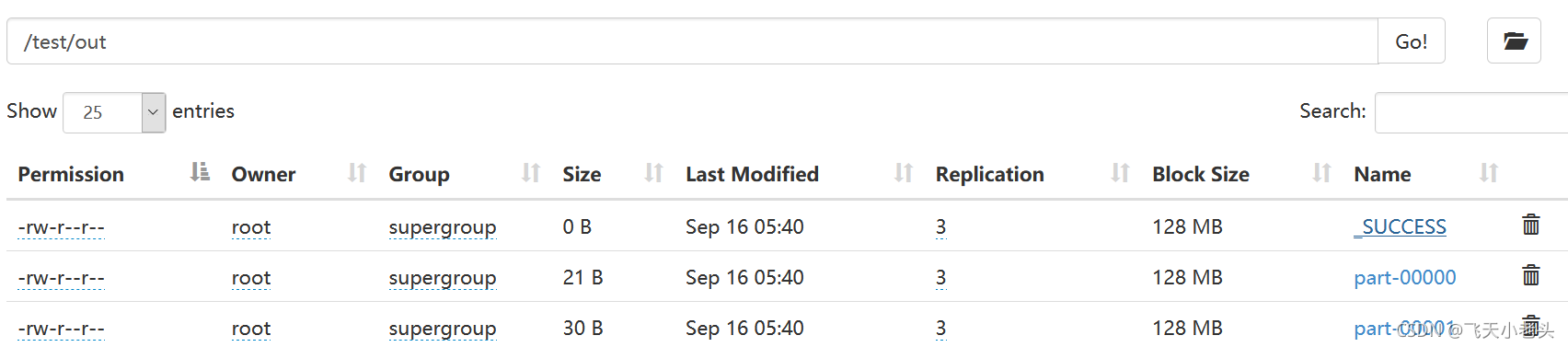

查看结果如下图所示

295

295

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?