#include "stdafx.h"

#include <boost/mpi.hpp>

#include <iostream>

#include <vector>

namespace mpi = boost::mpi;

#define Nelement 17

class cPoint

{

private:

friend class boost::serialization::access;

template<class Archive>

void serialize(Archive &ar, const unsigned int version)

{

ar & x;

ar & y;

ar & z;

ar & m;

}

public:

double x,y,z;

double m;

cPoint(){m=-100;};

cPoint(double xt,double yt,double zt,double mt):x(xt),y(yt),z(zt),m(mt){m=-100;};

};

int main(int argc, char *argv[]) {

mpi::environment env(argc, argv);

mpi::communicator world;

int tag = 99;

std::vector<int> LocNum;

cPoint arr[1000]; //save collected data from all processes

cPoint *pall;

pall = &arr[0]; //initialize pointer

std::vector<cPoint> my_data; //receive data from process 0;

for(int i=0;i<world.size();i++){

if(i == 0) LocNum.push_back(Nelement / world.size() + Nelement % world.size());

else{

LocNum.push_back(Nelement / world.size());}}; // Calculate number of data in each process

if (world.rank() == 0) {

std::vector<cPoint> AllData;

for(int i=0;i<Nelement;i++)AllData.push_back(cPoint(i,i,i,5));

int load = AllData.size() / world.size();

int start = load + AllData.size() % world.size();

for (int i = 1; i < world.size(); ++i){

std::vector<cPoint> to_send (AllData.begin()+start,AllData.begin()+start+load);

world.send(i,tag,to_send); //distribut data to all processes

LocNum.push_back(load);

start+=load;}

for(int i=0;i<load+AllData.size()%world.size();i++){

my_data.push_back(AllData[i]);

my_data[i].m = world.rank()+99; //here can do some computation

}

}

else {

world.recv(0,tag,my_data); //received data from root process

for(int i=0;i<my_data.size();i++){

my_data[i].m = world.rank()+100;} //here can do some computation

}

//void gatherv(const communicator & comm, const std::vector< T > & in_values, T * out_values, const std::vector< int > & sizes, int root);

boost::mpi::gatherv(world,my_data,pall,LocNum,0); //gather all data to root process

if(world.rank() == 0){

for(int i=0;i<Nelement;i++){

std::cout<<"No: "<<i<<'\t'<<arr[i].x<<'\t'<<arr[i].y<<'\t'<<arr[i].z<<'\t'<<arr[i].m<<std::endl;}};

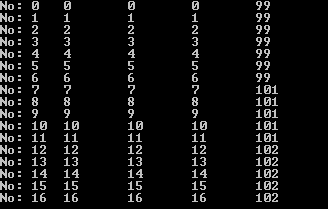

}如果有16组数据用三个processes, 结果如下:

结果正确。

以上程序问题在于新开了数组来存放收集的数据,但应该把数据直接存放在root process上分发之前的vector中。

其实子进程不需要知道收集后数据存放地址的信息,可以在进程上用如下函数模板:

template<typename T>

void gatherv(const communicator & comm, const std::vector< T > & in_values, int root);但在根进程上还要用前面的函数。做修改后,经测试,运行正常。程序如下:

#include "stdafx.h"

#include <boost/mpi.hpp>

#include <iostream>

#include <vector>

namespace mpi = boost::mpi;

#define Nelement 17

class cPoint

{

private:

friend class boost::serialization::access;

template<class Archive>

void serialize(Archive &ar, const unsigned int version)

{

ar & x;

ar & y;

ar & z;

ar & m;

}

public:

double x,y,z;

double m;

cPoint(){m=-100;};

cPoint(double xt,double yt,double zt,double mt):x(xt),y(yt),z(zt),m(mt){m=-100;};

};

int main(int argc, char *argv[]) {

mpi::environment env(argc, argv);

mpi::communicator world;

int tag = 99;

std::vector<int> LocNum;

cPoint arr[1000]; //save collected data from all processes

cPoint *pall;

pall = &arr[0]; //initialize pointer

std::vector<cPoint> my_data; //receive data from process 0;

for(int i=0;i<world.size();i++){

if(i == 0) LocNum.push_back(Nelement / world.size() + Nelement % world.size());

else{

LocNum.push_back(Nelement / world.size());}}; // Calculate number of data in each process

if (world.rank() == 0) {

std::vector<cPoint> AllData;

for(int i=0;i<Nelement;i++)AllData.push_back(cPoint(i,i,i,5));

int load = AllData.size() / world.size();

int start = load + AllData.size() % world.size();

for (int i = 1; i < world.size(); ++i){

std::vector<cPoint> to_send (AllData.begin()+start,AllData.begin()+start+load);

world.send(i,tag,to_send); //distribut data to all processes

LocNum.push_back(load);

start+=load;}

for(int i=0;i<load+AllData.size()%world.size();i++){

my_data.push_back(AllData[i]);

my_data[i].m = world.rank()+99; //here can do some computation

}

**boost::mpi::gatherv(world,my_data,pall,LocNum,0); //gather all data to root** process

}

else {

world.recv(0,tag,my_data); //received data from root process

for(int i=0;i<my_data.size();i++){

my_data[i].m = world.rank()+100;} //here can do some computation

**boost::mpi::gatherv(world,my_data,0); //gather all data to root process**

}

//void gatherv(const communicator & comm, const std::vector< T > & in_values, T * out_values, const std::vector< int > & sizes, int root);

//boost::mpi::gatherv(world,my_data,pall,LocNum,0); //gather all data to root process

if(world.rank() == 0){

for(int i=0;i<Nelement;i++){

std::cout<<"No: "<<i<<'\t'<<arr[i].x<<'\t'<<arr[i].y<<'\t'<<arr[i].z<<'\t'<<arr[i].m<<std::endl;}};

}经过以上修改后,可以看出,其实用来存放搜集后数据的数组不需要在root process之外定义,因此可以把

cPoint arr[1000]; //save collected data from all processes

cPoint *pall;

pall = &arr[0]; //initialize pointer直接移进process 0之内。修改后测试,运行正常。

回到一开始的问题,其实存放搜集后数据的数组可以直接用初始的数组,不需要另开,因此在root process之内,可以直接将指向存数据的pointer处世化为Alldata[0]:

cPoint *pall;

// pall = &arr[0]; //initialize pointer

pall = &AllData[0]; //initialize pointer这样就不需要定义:arr[1000]这个数组了。最后修改后的代码如下:

#include "stdafx.h"

#include <boost/mpi.hpp>

#include <iostream>

#include <vector>

namespace mpi = boost::mpi;

#define Nelement 17

class cPoint

{

private:

friend class boost::serialization::access;

template<class Archive>

void serialize(Archive &ar, const unsigned int version)

{

ar & x;

ar & y;

ar & z;

ar & m;

}

public:

double x,y,z;

double m;

cPoint(){m=-100;};

cPoint(double xt,double yt,double zt,double mt):x(xt),y(yt),z(zt),m(mt){m=-100;};

};

int main(int argc, char *argv[]) {

mpi::environment env(argc, argv);

mpi::communicator world;

int tag = 99;

std::vector<int> LocNum;

std::vector<cPoint> my_data; //receive data from process 0;

for(int i=0;i<world.size();i++){

if(i == 0) LocNum.push_back(Nelement / world.size() + Nelement % world.size());

else{

LocNum.push_back(Nelement / world.size());}}; // Calculate number of data in each process

if (world.rank() == 0) {

std::vector<cPoint> AllData;

for(int i=0;i<Nelement;i++)AllData.push_back(cPoint(i,i,i,5));

cPoint *pall;

pall = &AllData[0]; //initialize pointer

int load = AllData.size() / world.size();

int start = load + AllData.size() % world.size();

for (int i = 1; i < world.size(); ++i){

std::vector<cPoint> to_send (AllData.begin()+start,AllData.begin()+start+load);

world.send(i,tag,to_send); //distribut data to all processes

LocNum.push_back(load);

start+=load;}

for(int i=0;i<load+AllData.size()%world.size();i++){

my_data.push_back(AllData[i]);

my_data[i].m = world.rank()+99; //here can do some computation

}

boost::mpi::gatherv(world,my_data,pall,LocNum,0); //gather all data to root process

for(int i=0;i<Nelement;i++){

// std::cout<<"No: "<<i<<'\t'<<arr[i].x<<'\t'<<arr[i].y<<'\t'<<arr[i].z<<'\t'<<arr[i].m<<std::endl;};

std::cout<<"No: "<<i<<'\t'<<AllData[i].x<<'\t'<<AllData[i].y<<'\t'<<AllData[i].z<<'\t'<<AllData[i].m<<std::endl;};

}

else {

world.recv(0,tag,my_data); //received data from root process

for(int i=0;i<my_data.size();i++){

my_data[i].m = world.rank()+100;} //here can do some computation

boost::mpi::gatherv(world,my_data,0); //gather all data to root process

}

//void gatherv(const communicator & comm, const std::vector< T > & in_values, T * out_values, const std::vector< int > & sizes, int root);

//boost::mpi::gatherv(world,my_data,pall,LocNum,0); //gather all data to root process

}综上,此程序可以将root process上的数据分发到其它进程,然后再搜集回root进程。

3550

3550

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?