res_DeepCrossing Model

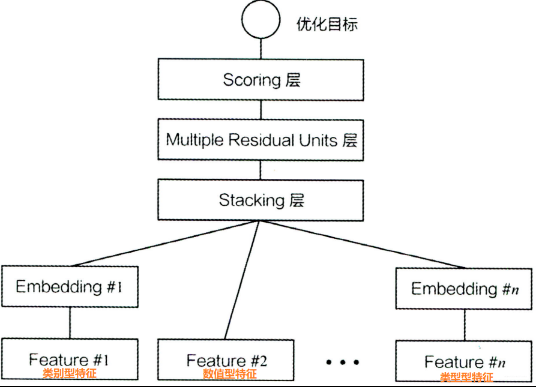

DeepCrossing

4. Code Implementation

# Import packages

import warnings

warnings.filterwarnings("ignore")

import pandas as pd

import numpy as np

import tensorflow as tf

from tensorflow.keras.layers import *

from tensorflow.keras.models import *

from sklearn.preprocessing import MinMaxScaler, LabelEncoder

# from utils import SparseFeat, DenseFeat, VarLenSparseFeat

# from collections import namedtuple

# # Define feature markers with named tuples

# SparseFeat = namedtuple('SparseFeat', ['name', 'vocabulary_size', 'embedding_dim'])

# DenseFeat = namedtuple('DenseFeat', ['name', 'dimension'])

# VarLenSparseFeat = namedtuple('VarLenSparseFeat', ['name', 'vocabulary_size', 'embedding_dim', 'maxlen'])

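1. Read and process the data

- Read the data

- Split the columns into numeric and categorical features

- Numeric features: fill missing values, then apply a log transform (a simple form of normalization)

- Categorical features: label encoding (integer IDs; the Embedding layers consume these later, so no one-hot encoding is needed)

# Read the data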
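The two transforms in the list above can be illustrated on toy values. This is a minimal NumPy-only sketch, not the notebook's own code: `log_transform` and `label_encode` are hypothetical helper names, and the sorted-unique mapping is assumed to mirror what scikit-learn's `LabelEncoder` produces.

```python
import numpy as np

def log_transform(values):
    """Fill NaN with 0, then map x -> log(x + 1) for x > -1 and to -1 otherwise."""
    filled = np.nan_to_num(np.asarray(values, dtype=float), nan=0.0)
    out = np.full_like(filled, -1.0)
    mask = filled > -1
    out[mask] = np.log(filled[mask] + 1)
    return out

def label_encode(values, missing="-1"):
    """Replace missing entries with a placeholder and map each category to an integer ID."""
    cleaned = np.array([missing if v is None else v for v in values], dtype=str)
    classes, codes = np.unique(cleaned, return_inverse=True)  # classes are sorted
    return classes, codes

dense = log_transform([np.nan, 0.0, -1.0, 19.0])
classes, codes = label_encode(["e5ba7672", None, "d4bb7bd8", "e5ba7672"])
print(dense)
print(classes, codes)
```

Note that the missing-value placeholder becomes a category of its own, so it also receives an embedding row later on.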

data = pd.read_csv('./data/criteo_sample.txt')

data.head()

| | label | I1 | I2 | I3 | I4 | I5 | I6 | I7 | I8 | I9 | ... | C17 | C18 | C19 | C20 | C21 | C22 | C23 | C24 | C25 | C26 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0 | NaN | 3 | 260.0 | NaN | 17668.0 | NaN | NaN | 33.0 | NaN | ... | e5ba7672 | 87c6f83c | NaN | NaN | 0429f84b | NaN | 3a171ecb | c0d61a5c | NaN | NaN |
| 1 | 0 | NaN | -1 | 19.0 | 35.0 | 30251.0 | 247.0 | 1.0 | 35.0 | 160.0 | ... | d4bb7bd8 | 6fc84bfb | NaN | NaN | 5155d8a3 | NaN | be7c41b4 | ded4aac9 | NaN | NaN |
| 2 | 0 | 0.0 | 0 | 2.0 | 12.0 | 2013.0 | 164.0 | 6.0 | 35.0 | 523.0 | ... | e5ba7672 | 675c9258 | NaN | NaN | 2e01979f | NaN | bcdee96c | 6d5d1302 | NaN | NaN |
| 3 | 0 | NaN | 13 | 1.0 | 4.0 | 16836.0 | 200.0 | 5.0 | 4.0 | 29.0 | ... | e5ba7672 | 52e44668 | NaN | NaN | e587c466 | NaN | 32c7478e | 3b183c5c | NaN | NaN |
| 4 | 0 | 0.0 | 0 | 104.0 | 27.0 | 1990.0 | 142.0 | 4.0 | 32.0 | 37.0 | ... | e5ba7672 | 25c88e42 | 21ddcdc9 | b1252a9d | 0e8585d2 | NaN | 32c7478e | 0d4a6d1a | 001f3601 | 92c878de |

5 rows × 40 columns

data.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 200 entries, 0 to 199

Data columns (total 40 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 label 200 non-null int64

1 I1 110 non-null float64

2 I2 200 non-null int64

3 I3 166 non-null float64

4 I4 165 non-null float64

5 I5 194 non-null float64

6 I6 149 non-null float64

7 I7 190 non-null float64

8 I8 200 non-null float64

9 I9 190 non-null float64

10 I10 110 non-null float64

11 I11 190 non-null float64

12 I12 43 non-null float64

13 I13 165 non-null float64

14 C1 200 non-null object

15 C2 200 non-null object

16 C3 191 non-null object

17 C4 191 non-null object

18 C5 200 non-null object

19 C6 168 non-null object

20 C7 200 non-null object

21 C8 200 non-null object

22 C9 200 non-null object

23 C10 200 non-null object

24 C11 200 non-null object

25 C12 191 non-null object

26 C13 200 non-null object

27 C14 200 non-null object

28 C15 200 non-null object

29 C16 191 non-null object

30 C17 200 non-null object

31 C18 200 non-null object

32 C19 118 non-null object

33 C20 118 non-null object

34 C21 191 non-null object

35 C22 41 non-null object

36 C23 200 non-null object

37 C24 191 non-null object

38 C25 118 non-null object

39 C26 118 non-null object

dtypes: float64(12), int64(2), object(26)

memory usage: 62.6+ KB

# Split the columns into sparse_features and dense_features

columns = data.columns.values

columns

array(['label', 'I1', 'I2', 'I3', 'I4', 'I5', 'I6', 'I7', 'I8', 'I9',

'I10', 'I11', 'I12', 'I13', 'C1', 'C2', 'C3', 'C4', 'C5', 'C6',

'C7', 'C8', 'C9', 'C10', 'C11', 'C12', 'C13', 'C14', 'C15', 'C16',

'C17', 'C18', 'C19', 'C20', 'C21', 'C22', 'C23', 'C24', 'C25',

'C26'], dtype=object)

dense_features = [feat for feat in columns if 'I' in feat]

sparse_features = [feat for feat in columns if 'C' in feat]

dense_features + sparse_features  # lists can be concatenated with +

['I1',

'I2',

'I3',

'I4',

'I5',

'I6',

'I7',

'I8',

'I9',

'I10',

'I11',

'I12',

'I13',

'C1',

'C2',

'C3',

'C4',

'C5',

'C6',

'C7',

'C8',

'C9',

'C10',

'C11',

'C12',

'C13',

'C14',

'C15',

'C16',

'C17',

'C18',

'C19',

'C20',

'C21',

'C22',

'C23',

'C24',

'C25',

'C26']
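The substring tests `'I' in feat` and `'C' in feat` work here because only the feature columns contain an uppercase `I` or `C`. A slightly stricter variant, sketched below under the assumption that feature names always have the form `I<number>` or `C<number>`, matches the full name instead. `split_features` and the column `CTR_ID` are hypothetical and only serve the illustration.

```python
import re

def split_features(columns):
    """Return (dense, sparse) feature names, matching I<digits> and C<digits> exactly."""
    dense = [c for c in columns if re.fullmatch(r"I\d+", c)]
    sparse = [c for c in columns if re.fullmatch(r"C\d+", c)]
    return dense, sparse

# "CTR_ID" contains both letters and would be picked up by a plain substring test.
cols = ["label", "I1", "I13", "C1", "C26", "CTR_ID"]
dense, sparse = split_features(cols)
print(dense, sparse)
```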

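# Data preprocessing function
def data_process(data_df, dense_features, sparse_features):
    """
    Simple feature processing: fill missing values, transform numeric features, encode categories.

    param data_df: input data as a DataFrame (modified in place)
    param dense_features: list of numeric feature names
    param sparse_features: list of categorical feature names
    """
    data_df[dense_features] = data_df[dense_features].fillna(0.0)  # fill missing values with 0.0
    for f in dense_features:  # log-transform numeric features; values <= -1 are mapped to -1
        data_df[f] = data_df[f].apply(lambda x: np.log(x + 1) if x > -1 else -1)

    # Fill missing categorical values with the placeholder string "-1"
    data_df[sparse_features] = data_df[sparse_features].fillna("-1")
    for f in sparse_features:
        lbe = LabelEncoder()  # label encoding (integer IDs), not one-hot
        data_df[f] = lbe.fit_transform(data_df[f])  # fit_transform takes the whole column

    # Return a copy so that adding columns later does not write into a view of data_df
    return data_df[dense_features + sparse_features].copy()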

train_data = data_process(data, dense_features, sparse_features)

train_data

| | I1 | I2 | I3 | I4 | I5 | I6 | I7 | I8 | I9 | I10 | ... | C17 | C18 | C19 | C20 | C21 | C22 | C23 | C24 | C25 | C26 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0.000000 | 1.386294 | 5.564520 | 0.000000 | 9.779567 | 0.000000 | 0.000000 | 3.526361 | 0.000000 | 0.000000 | ... | 8 | 66 | 0 | 0 | 3 | 0 | 1 | 96 | 0 | 0 |
| 1 | 0.000000 | -1.000000 | 2.995732 | 3.583519 | 10.317318 | 5.513429 | 0.693147 | 3.583519 | 5.081404 | 0.000000 | ... | 7 | 52 | 0 | 0 | 47 | 0 | 7 | 112 | 0 | 0 |
| 2 | 0.000000 | 0.000000 | 1.098612 | 2.564949 | 7.607878 | 5.105945 | 1.945910 | 3.583519 | 6.261492 | 0.000000 | ... | 8 | 49 | 0 | 0 | 25 | 0 | 6 | 53 | 0 | 0 |
| 3 | 0.000000 | 2.639057 | 0.693147 | 1.609438 | 9.731334 | 5.303305 | 1.791759 | 1.609438 | 3.401197 | 0.000000 | ... | 8 | 37 | 0 | 0 | 156 | 0 | 0 | 32 | 0 | 0 |
| 4 | 0.000000 | 0.000000 | 4.653960 | 3.332205 | 7.596392 | 4.962845 | 1.609438 | 3.496508 | 3.637586 | 0.000000 | ... | 8 | 14 | 5 | 3 | 9 | 0 | 0 | 5 | 1 | 47 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 195 | 0.000000 | 0.000000 | 4.736198 | 1.386294 | 8.018625 | 6.356108 | 1.098612 | 1.386294 | 5.370638 | 0.000000 | ... | 0 | 74 | 5 | 1 | 30 | 5 | 0 | 118 | 17 | 48 |
| 196 | 0.000000 | 0.693147 | 0.693147 | 0.693147 | 7.382746 | 2.564949 | 0.693147 | 2.564949 | 2.772589 | 0.000000 | ... | 1 | 25 | 0 | 0 | 138 | 0 | 0 | 68 | 0 | 0 |
| 197 | 0.693147 | 0.000000 | 1.945910 | 1.386294 | 0.000000 | 0.000000 | 2.995732 | 1.386294 | 1.386294 | 0.693147 | ... | 4 | 40 | 17 | 2 | 41 | 0 | 0 | 12 | 16 | 11 |
| 198 | 0.000000 | 3.135494 | 1.945910 | 3.135494 | 5.318120 | 5.036953 | 4.394449 | 2.944439 | 6.232448 | 0.000000 | ... | 4 | 7 | 18 | 1 | 123 | 0 | 0 | 10 | 16 | 49 |
| 199 | 0.693147 | -1.000000 | 0.000000 | 0.000000 | 4.934474 | 0.000000 | 0.693147 | 0.000000 | 0.000000 | 0.693147 | ... | 7 | 72 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

200 rows × 39 columns

train_data['label'] = data['label']

train_data

| | I1 | I2 | I3 | I4 | I5 | I6 | I7 | I8 | I9 | I10 | ... | C18 | C19 | C20 | C21 | C22 | C23 | C24 | C25 | C26 | label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0.000000 | 1.386294 | 5.564520 | 0.000000 | 9.779567 | 0.000000 | 0.000000 | 3.526361 | 0.000000 | 0.000000 | ... | 66 | 0 | 0 | 3 | 0 | 1 | 96 | 0 | 0 | 0 |
| 1 | 0.000000 | -1.000000 | 2.995732 | 3.583519 | 10.317318 | 5.513429 | 0.693147 | 3.583519 | 5.081404 | 0.000000 | ... | 52 | 0 | 0 | 47 | 0 | 7 | 112 | 0 | 0 | 0 |
| 2 | 0.000000 | 0.000000 | 1.098612 | 2.564949 | 7.607878 | 5.105945 | 1.945910 | 3.583519 | 6.261492 | 0.000000 | ... | 49 | 0 | 0 | 25 | 0 | 6 | 53 | 0 | 0 | 0 |
| 3 | 0.000000 | 2.639057 | 0.693147 | 1.609438 | 9.731334 | 5.303305 | 1.791759 | 1.609438 | 3.401197 | 0.000000 | ... | 37 | 0 | 0 | 156 | 0 | 0 | 32 | 0 | 0 | 0 |
| 4 | 0.000000 | 0.000000 | 4.653960 | 3.332205 | 7.596392 | 4.962845 | 1.609438 | 3.496508 | 3.637586 | 0.000000 | ... | 14 | 5 | 3 | 9 | 0 | 0 | 5 | 1 | 47 | 0 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 195 | 0.000000 | 0.000000 | 4.736198 | 1.386294 | 8.018625 | 6.356108 | 1.098612 | 1.386294 | 5.370638 | 0.000000 | ... | 74 | 5 | 1 | 30 | 5 | 0 | 118 | 17 | 48 | 0 |
| 196 | 0.000000 | 0.693147 | 0.693147 | 0.693147 | 7.382746 | 2.564949 | 0.693147 | 2.564949 | 2.772589 | 0.000000 | ... | 25 | 0 | 0 | 138 | 0 | 0 | 68 | 0 | 0 | 1 |
| 197 | 0.693147 | 0.000000 | 1.945910 | 1.386294 | 0.000000 | 0.000000 | 2.995732 | 1.386294 | 1.386294 | 0.693147 | ... | 40 | 17 | 2 | 41 | 0 | 0 | 12 | 16 | 11 | 1 |
| 198 | 0.000000 | 3.135494 | 1.945910 | 3.135494 | 5.318120 | 5.036953 | 4.394449 | 2.944439 | 6.232448 | 0.000000 | ... | 7 | 18 | 1 | 123 | 0 | 0 | 10 | 16 | 49 | 0 |
| 199 | 0.693147 | -1.000000 | 0.000000 | 0.000000 | 4.934474 | 0.000000 | 0.693147 | 0.000000 | 0.000000 | 0.693147 | ... | 72 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

200 rows × 40 columns

train_data.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 200 entries, 0 to 199

Data columns (total 40 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 I1 200 non-null float64

1 I2 200 non-null float64

2 I3 200 non-null float64

3 I4 200 non-null float64

4 I5 200 non-null float64

5 I6 200 non-null float64

6 I7 200 non-null float64

7 I8 200 non-null float64

8 I9 200 non-null float64

9 I10 200 non-null float64

10 I11 200 non-null float64

11 I12 200 non-null float64

12 I13 200 non-null float64

13 C1 200 non-null int32

14 C2 200 non-null int32

15 C3 200 non-null int32

16 C4 200 non-null int32

17 C5 200 non-null int32

18 C6 200 non-null int32

19 C7 200 non-null int32

20 C8 200 non-null int32

21 C9 200 non-null int32

22 C10 200 non-null int32

23 C11 200 non-null int32

24 C12 200 non-null int32

25 C13 200 non-null int32

26 C14 200 non-null int32

27 C15 200 non-null int32

28 C16 200 non-null int32

29 C17 200 non-null int32

30 C18 200 non-null int32

31 C19 200 non-null int32

32 C20 200 non-null int32

33 C21 200 non-null int32

34 C22 200 non-null int32

35 C23 200 non-null int32

36 C24 200 non-null int32

37 C25 200 non-null int32

38 C26 200 non-null int32

39 label 200 non-null int64

dtypes: float64(13), int32(26), int64(1)

memory usage: 42.3 KB

dense_features

['I1',

'I2',

'I3',

'I4',

'I5',

'I6',

'I7',

'I8',

'I9',

'I10',

'I11',

'I12',

'I13']

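2. Building the model

2.1 Numeric features

- Create an input for each numeric feature

- Concatenate the numeric inputs

dense_inputs = []  # holds the Input tensors of the numeric features

for f in dense_features:
    _input = Input([1], name=f)
    dense_inputs.append(_input)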

dense_inputs

[<tf.Tensor 'I1:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I2:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I3:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I4:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I5:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I6:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I7:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I8:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I9:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I10:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I11:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I12:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I13:0' shape=(None, 1) dtype=float32>]

dense_concat_inputs = Concatenate(axis=1)(dense_inputs)

dense_concat_inputs

<tf.Tensor 'concatenate/concat:0' shape=(None, 13) dtype=float32>

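2.2 Categorical features

- Create an input for each categorical feature

- Map each input to an Embedding

- Concatenate the embedded categorical features

sparse_inputs = []  # holds the Input tensors of the categorical features

for f in sparse_features:
    _input = Input([1], name=f)
    sparse_inputs.append(_input)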

sparse_inputs

[<tf.Tensor 'C1:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C2:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C3:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C4:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C5:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C6:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C7:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C8:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C9:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C10:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C11:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C12:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C13:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C14:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C15:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C16:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C17:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C18:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C19:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C20:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C21:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C22:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C23:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C24:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C25:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C26:0' shape=(None, 1) dtype=float32>]

- Embed the categorical features

sparse_1d_embed = []  # holds the flattened embedding of each categorical feature

for i, _input in enumerate(sparse_inputs):
    f = sparse_features[i]  # f: C1 ... C26; sparse_features is a list
    voc_size = train_data[f].nunique()
    _embed = Flatten()(Embedding(voc_size + 1, 4)(_input))
    sparse_1d_embed.append(_embed)

sparse_1d_embed

[<tf.Tensor 'flatten_26/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_27/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_28/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_29/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_30/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_31/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_32/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_33/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_34/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_35/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_36/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_37/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_38/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_39/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_40/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_41/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_42/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_43/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_44/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_45/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_46/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_47/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_48/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_49/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_50/Reshape:0' shape=(None, 4) dtype=float32>,

<tf.Tensor 'flatten_51/Reshape:0' shape=(None, 4) dtype=float32>]
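Conceptually, an embedding layer is a lookup table indexed by the integer category ID, and flattening drops the length-1 axis. The sketch below reproduces only the shapes with plain NumPy; the table is random rather than trained, and `voc_size = 5` is an arbitrary toy value.

```python
import numpy as np

rng = np.random.default_rng(0)
voc_size, dim = 5, 4

# One row per category ID; the extra row mirrors the voc_size + 1 used in the notebook.
table = rng.normal(size=(voc_size + 1, dim))

ids = np.array([[0], [3], [3]])             # shape (B, 1): one encoded category per sample
embedded = table[ids]                       # shape (B, 1, dim): row lookup
flat = embedded.reshape(len(ids), -1)       # shape (B, dim): same effect as flattening

print(embedded.shape, flat.shape)
```

Samples that share a category ID receive the same vector, which is why rows 1 and 2 of `flat` are identical.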

# Concatenate the embedded categorical features

sparse_1d_embed = Concatenate(axis=1)(sparse_1d_embed)  # B x (m*dim), where m is the number of categorical features and dim is the embedding dimension

sparse_1d_embed

<tf.Tensor 'concatenate_1/concat:0' shape=(None, 104) dtype=float32>

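2.3 Concatenate the numeric and categorical features

# Concatenate the dense features with the embedded sparse features

all_inputs = Concatenate(axis=1)([dense_concat_inputs, sparse_1d_embed])  # B x (n + m*dim), where n is the number of numeric features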

all_inputs

<tf.Tensor 'concatenate_2/concat:0' shape=(None, 117) dtype=float32>
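The width of 117 follows from n + m*dim = 13 + 26*4. A small NumPy sketch of the same concatenation, with zero arrays standing in for the real tensors and an arbitrary batch size of 8, makes the arithmetic explicit.

```python
import numpy as np

batch, n_dense, n_sparse, dim = 8, 13, 26, 4

dense_block = np.zeros((batch, n_dense))                       # B x n
embed_blocks = [np.zeros((batch, dim)) for _ in range(n_sparse)]
sparse_block = np.concatenate(embed_blocks, axis=1)            # B x (m*dim)
all_block = np.concatenate([dense_block, sparse_block], axis=1)  # B x (n + m*dim)

print(sparse_block.shape, all_block.shape)
```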

2.4 Residual blocks

# Definition of the DNN residual block
class ResidualBlock(Layer):
    def __init__(self, units):  # units: number of neurons in the hidden layer
        super(ResidualBlock, self).__init__()
        self.units = units

    def build(self, input_shape):
        out_dim = input_shape[-1]
        self.dnn1 = Dense(self.units, activation='relu')
        # The output width must equal the input width so the residual sum is valid
        self.dnn2 = Dense(out_dim, activation='relu')

    def call(self, inputs):
        x = inputs
        x = self.dnn1(x)
        x = self.dnn2(x)
        x = Activation('relu')(x + inputs)  # residual connection
        return x
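To see what one block computes, the forward pass can be written out with plain NumPy. This is only a sketch of the same arithmetic as the layer above (two ReLU layers, add the input back, ReLU again) with random weights; `relu` and `residual_block` are hypothetical helper names.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def residual_block(x, w1, b1, w2, b2):
    """Hidden layer, projection back to the input width, then the residual sum."""
    hidden = relu(x @ w1 + b1)             # B x units
    projected = relu(hidden @ w2 + b2)     # B x in_dim
    return relu(projected + x)             # shapes match, so the sum is valid

rng = np.random.default_rng(0)
batch, in_dim, units = 2, 117, 64
x = rng.normal(size=(batch, in_dim))
w1 = rng.normal(scale=0.1, size=(in_dim, units))
b1 = np.zeros(units)
w2 = rng.normal(scale=0.1, size=(units, in_dim))
b2 = np.zeros(in_dim)

out = residual_block(x, w1, b1, w2, b2)
print(out.shape)
```

Because the output width equals the input width, any number of such blocks can be stacked without extra projection layers.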

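# block_nums is the number of residual blocks
def get_dnn_logits(dnn_inputs, block_nums=3):
    dnn_out = dnn_inputs
    for i in range(block_nums):
        dnn_out = ResidualBlock(64)(dnn_out)

    # Map the DNN output to a probability; because of the sigmoid this is not a raw logit, despite the name
    dnn_logits = Dense(1, activation='sigmoid')(dnn_out)
    return dnn_logits

# Feed the inputs into the DNN; the number of residual blocks is chosen up front
output_layer = get_dnn_logits(all_inputs, block_nums=3)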

output_layer

<tf.Tensor 'dense/Sigmoid:0' shape=(None, 1) dtype=float32>
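The tensor name `dense/Sigmoid` confirms that the final layer already outputs a probability. The relation between a raw logit and that probability is sketched below in plain Python; `sigmoid` and `logit` are illustrative helpers, not part of the notebook.

```python
import math

def sigmoid(z):
    """Map a raw score (logit) to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def logit(p):
    """Inverse of the sigmoid: recover the raw score from a probability."""
    return math.log(p / (1.0 - p))

prob = sigmoid(1.7)
print(sigmoid(0.0), prob, logit(prob))
```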

dense_inputs+sparse_inputs

[<tf.Tensor 'I1:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I2:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I3:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I4:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I5:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I6:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I7:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I8:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I9:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I10:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I11:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I12:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'I13:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C1:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C2:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C3:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C4:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C5:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C6:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C7:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C8:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C9:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C10:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C11:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C12:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C13:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C14:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C15:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C16:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C17:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C18:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C19:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C20:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C21:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C22:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C23:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C24:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C25:0' shape=(None, 1) dtype=float32>,

<tf.Tensor 'C26:0' shape=(None, 1) dtype=float32>]

model = Model(dense_inputs+sparse_inputs, output_layer)

model.summary()

Model: "functional_1"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

C1 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C2 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C3 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C4 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C5 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C6 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C7 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C8 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C9 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C10 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C11 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C12 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C13 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C14 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C15 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C16 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C17 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C18 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C19 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C20 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C21 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C22 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C23 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C24 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C25 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

C26 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

embedding (Embedding) (None, 1, 4) 112 C1[0][0]

__________________________________________________________________________________________________

embedding_1 (Embedding) (None, 1, 4) 372 C2[0][0]

__________________________________________________________________________________________________

embedding_2 (Embedding) (None, 1, 4) 692 C3[0][0]

__________________________________________________________________________________________________

embedding_3 (Embedding) (None, 1, 4) 632 C4[0][0]

__________________________________________________________________________________________________

embedding_4 (Embedding) (None, 1, 4) 52 C5[0][0]

__________________________________________________________________________________________________

embedding_5 (Embedding) (None, 1, 4) 32 C6[0][0]

__________________________________________________________________________________________________

embedding_6 (Embedding) (None, 1, 4) 736 C7[0][0]

__________________________________________________________________________________________________

embedding_7 (Embedding) (None, 1, 4) 80 C8[0][0]

__________________________________________________________________________________________________

embedding_8 (Embedding) (None, 1, 4) 12 C9[0][0]

__________________________________________________________________________________________________

embedding_9 (Embedding) (None, 1, 4) 572 C10[0][0]

__________________________________________________________________________________________________

embedding_10 (Embedding) (None, 1, 4) 696 C11[0][0]

__________________________________________________________________________________________________

embedding_11 (Embedding) (None, 1, 4) 684 C12[0][0]

__________________________________________________________________________________________________

embedding_12 (Embedding) (None, 1, 4) 668 C13[0][0]

__________________________________________________________________________________________________

embedding_13 (Embedding) (None, 1, 4) 60 C14[0][0]

__________________________________________________________________________________________________

embedding_14 (Embedding) (None, 1, 4) 684 C15[0][0]

__________________________________________________________________________________________________

embedding_15 (Embedding) (None, 1, 4) 676 C16[0][0]

__________________________________________________________________________________________________

embedding_16 (Embedding) (None, 1, 4) 40 C17[0][0]

__________________________________________________________________________________________________

embedding_17 (Embedding) (None, 1, 4) 512 C18[0][0]

__________________________________________________________________________________________________

embedding_18 (Embedding) (None, 1, 4) 180 C19[0][0]

__________________________________________________________________________________________________

embedding_19 (Embedding) (None, 1, 4) 20 C20[0][0]

__________________________________________________________________________________________________

embedding_20 (Embedding) (None, 1, 4) 680 C21[0][0]

__________________________________________________________________________________________________

embedding_21 (Embedding) (None, 1, 4) 28 C22[0][0]

__________________________________________________________________________________________________

embedding_22 (Embedding) (None, 1, 4) 44 C23[0][0]

__________________________________________________________________________________________________

embedding_23 (Embedding) (None, 1, 4) 504 C24[0][0]

__________________________________________________________________________________________________

embedding_24 (Embedding) (None, 1, 4) 84 C25[0][0]

__________________________________________________________________________________________________

embedding_25 (Embedding) (None, 1, 4) 364 C26[0][0]

__________________________________________________________________________________________________

I1 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I2 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I3 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I4 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I5 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I6 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I7 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I8 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I9 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I10 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I11 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I12 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

I13 (InputLayer) [(None, 1)] 0

__________________________________________________________________________________________________

flatten (Flatten) (None, 4) 0 embedding[0][0]

__________________________________________________________________________________________________

flatten_1 (Flatten) (None, 4) 0 embedding_1[0][0]

__________________________________________________________________________________________________

flatten_2 (Flatten) (None, 4) 0 embedding_2[0][0]

__________________________________________________________________________________________________

flatten_3 (Flatten) (None, 4) 0 embedding_3[0][0]

__________________________________________________________________________________________________

flatten_4 (Flatten) (None, 4) 0 embedding_4[0][0]

__________________________________________________________________________________________________

flatten_5 (Flatten) (None, 4) 0 embedding_5[0][0]

__________________________________________________________________________________________________

flatten_6 (Flatten) (None, 4) 0 embedding_6[0][0]

__________________________________________________________________________________________________

flatten_7 (Flatten) (None, 4) 0 embedding_7[0][0]

__________________________________________________________________________________________________

flatten_8 (Flatten) (None, 4) 0 embedding_8[0][0]

__________________________________________________________________________________________________

flatten_9 (Flatten) (None, 4) 0 embedding_9[0][0]

__________________________________________________________________________________________________

flatten_10 (Flatten) (None, 4) 0 embedding_10[0][0]

__________________________________________________________________________________________________

flatten_11 (Flatten) (None, 4) 0 embedding_11[0][0]

__________________________________________________________________________________________________

flatten_12 (Flatten) (None, 4) 0 embedding_12[0][0]

__________________________________________________________________________________________________

flatten_13 (Flatten) (None, 4) 0 embedding_13[0][0]

__________________________________________________________________________________________________

flatten_14 (Flatten) (None, 4) 0 embedding_14[0][0]

__________________________________________________________________________________________________

flatten_15 (Flatten) (None, 4) 0 embedding_15[0][0]

__________________________________________________________________________________________________

flatten_16 (Flatten) (None, 4) 0 embedding_16[0][0]

__________________________________________________________________________________________________

flatten_17 (Flatten) (None, 4) 0 embedding_17[0][0]

__________________________________________________________________________________________________

flatten_18 (Flatten) (None, 4) 0 embedding_18[0][0]

__________________________________________________________________________________________________

flatten_19 (Flatten) (None, 4) 0 embedding_19[0][0]

__________________________________________________________________________________________________

flatten_20 (Flatten) (None, 4) 0 embedding_20[0][0]

__________________________________________________________________________________________________

flatten_21 (Flatten) (None, 4) 0 embedding_21[0][0]

__________________________________________________________________________________________________

flatten_22 (Flatten) (None, 4) 0 embedding_22[0][0]

__________________________________________________________________________________________________

flatten_23 (Flatten) (None, 4) 0 embedding_23[0][0]

__________________________________________________________________________________________________

flatten_24 (Flatten) (None, 4) 0 embedding_24[0][0]

__________________________________________________________________________________________________

flatten_25 (Flatten) (None, 4) 0 embedding_25[0][0]

__________________________________________________________________________________________________

concatenate (Concatenate) (None, 13) 0 I1[0][0]

I2[0][0]

I3[0][0]

I4[0][0]

I5[0][0]

I6[0][0]

I7[0][0]

I8[0][0]

I9[0][0]

I10[0][0]

I11[0][0]

I12[0][0]

I13[0][0]

__________________________________________________________________________________________________

concatenate_1 (Concatenate) (None, 104) 0 flatten[0][0]

flatten_1[0][0]

flatten_2[0][0]

flatten_3[0][0]

flatten_4[0][0]

flatten_5[0][0]

flatten_6[0][0]

flatten_7[0][0]

flatten_8[0][0]

flatten_9[0][0]

flatten_10[0][0]

flatten_11[0][0]

flatten_12[0][0]

flatten_13[0][0]

flatten_14[0][0]

flatten_15[0][0]

flatten_16[0][0]

flatten_17[0][0]

flatten_18[0][0]

flatten_19[0][0]

flatten_20[0][0]

flatten_21[0][0]

flatten_22[0][0]

flatten_23[0][0]

flatten_24[0][0]

flatten_25[0][0]

__________________________________________________________________________________________________

concatenate_2 (Concatenate) (None, 117) 0 concatenate[0][0]

concatenate_1[0][0]

__________________________________________________________________________________________________

residual_block (ResidualBlock) (None, 117) 15157 concatenate_2[0][0]

__________________________________________________________________________________________________

residual_block_1 (ResidualBlock (None, 117) 15157 residual_block[0][0]

__________________________________________________________________________________________________

residual_block_2 (ResidualBlock (None, 117) 15157 residual_block_1[0][0]

__________________________________________________________________________________________________

dense (Dense) (None, 1) 118 residual_block_2[0][0]

==================================================================================================

Total params: 54,805

Trainable params: 54,805

Non-trainable params: 0

__________________________________________________________________________________________________
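The parameter counts in the summary above can be checked by hand. The sketch below assumes that each `ResidualBlock` consists of two fully connected layers with biases (117 → hidden → 117) and that the hidden width is 64. Both assumptions are inferred from the reported count of 15157 and are not stated in the summary itself. The helper names are illustrative only.

```python
def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def residual_block_params(dim, hidden):
    """Assumed block layout: Dense(dim -> hidden) followed by Dense(hidden -> dim)."""
    return dense_params(dim, hidden) + dense_params(hidden, dim)


n_dense = 13    # I1..I13, one scalar each (concatenate: 13)
n_sparse = 26   # flatten..flatten_25, one embedding each
emb_dim = 4     # each Flatten output has shape (None, 4)

dim = n_dense + n_sparse * emb_dim          # width of concatenate_2
hidden = 64                                 # assumption inferred from the count
block = residual_block_params(dim, hidden)  # one ResidualBlock
head = dense_params(dim, 1)                 # final Dense(1)

print("concat width:", dim)
print("params per residual block:", block)
print("params in output layer:", head)
print("residual blocks + output layer:", 3 * block + head)
```

Under these assumptions the three blocks and the output layer account for 45,589 of the 54,805 parameters; the remaining 9,216 would then belong to layers listed earlier in the summary, presumably the embeddings.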

model.compile(optimizer="adam",

loss="binary_crossentropy",

metrics=[tf.keras.metrics.AUC(name='auc')])

# Convert the input data into a dict keyed by feature name -- this form works

train_model_input = {name: data[name] for name in dense_features + sparse_features}

# This form does not work: it is a plain list of Series

# train_model_input_1 = [data[name] for name in dense_features + sparse_features]

# type(train_model_input_1)

list

# A list of NumPy arrays works

train_dense_x = [train_data[f].values for f in dense_features]

train_sparse_x = [train_data[f].values for f in sparse_features]

train_dense_x

[array([0. , 0. , 0. , 0. , 0. ,

0. , 0. , 2.99573227, 0. , 1.09861229,

1.09861229, 0. , 0. , 2.19722458, 0. ,

0. , 0. , 0. , 1.79175947, 1.09861229,

0. , 0. , 0. , 0. , 0. ,

2.30258509, 0. , 0. , 2.19722458, 0. ,

0.69314718, 0.69314718, 2.48490665, 2.39789527, 0. ,

0. , 0. , 0. , 0. , 2.39789527,

0. , 0. , 0. , 0.69314718, 1.09861229,

0. , 1.60943791, 1.09861229, 0. , 0. ,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 2.07944154, 0. , 1.79175947, 0. ,

0. , 0. , 0. , 1.09861229, 0. ,

1.60943791, 0. , 0.69314718, 0. , 0.69314718,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 0. , 0. , 0. , 1.38629436,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0.69314718, 1.38629436,

0. , 0. , 0. , 2.19722458, 0. ,

0. , 0. , 0. , 0. , 1.38629436,

0. , 0. , 3.63758616, 1.79175947, 0. ,

0. , 0. , 0. , 1.38629436, 0. ,

0. , 1.09861229, 0.69314718, 0. , 2.07944154,

0. , 0. , 0. , 0. , 1.09861229,

0. , 2.56494936, 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 2.30258509, 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 1.60943791,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 2.19722458, 0. , 0. , 0. ,

0. , 0. , 0. , 0.69314718, 0.69314718,

0.69314718, 0. , 1.09861229, 0.69314718, 0. ,

0. , 1.79175947, 0. , 0. , 0. ,

0. , 1.60943791, 0. , 0. , 1.09861229,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0.69314718]),

array([ 1.38629436, -1. , 0. , 2.63905733, 0. ,

-1. , 5.91620206, 2.39789527, 0. , 2.48490665,

0.69314718, 1.09861229, 1.79175947, -1. , 0. ,

4.41884061, 3.21887582, 4.66343909, 4.4543473 , 1.38629436,

0.69314718, 1.60943791, 0. , 3.78418963, 0.69314718,

0.69314718, 0.69314718, 3.29583687, -1. , 0.69314718,

5.71373281, 0. , 5.52942909, 0.69314718, -1. ,

3.58351894, 0.69314718, 0. , 5.36129217, 2.48490665,

1.79175947, 6.65929392, 5.19295685, 0.69314718, 4.29045944,

4.06044301, 0.69314718, 1.60943791, 1.79175947, 3.91202301,

7.96067261, 4.78749174, 3.25809654, 5.19849703, 1.09861229,

0. , 4.44265126, 0. , 0.69314718, 2.07944154,

4.00733319, 0. , 0.69314718, 1.79175947, 2.99573227,

0. , 1.79175947, 0.69314718, 0.69314718, -1. ,

2.94443898, 1.79175947, 3.33220451, 3.91202301, 2.99573227,

0.69314718, 0.69314718, 4.07753744, 2.48490665, 7.84815309,

3.4339872 , 3.91202301, -1. , 2.07944154, 4.2341065 ,

5.72031178, 1.09861229, 0. , 0. , 3.13549422,

0.69314718, 0.69314718, 1.60943791, 4.17438727, 0. ,

0.69314718, 3.98898405, 0. , 0.69314718, 0.69314718,

0. , 0. , 6.2126061 , 2.07944154, 1.60943791,

1.94591015, 4.06044301, 4.51085951, 3.40119738, -1. ,

1.09861229, 4.36944785, 4.73619845, 0.69314718, 0. ,

0.69314718, 0.69314718, 1.79175947, 1.09861229, 2.19722458,

0. , 0.69314718, 7.52294092, 4.18965474, 5.10594547,

2.39789527, 2.77258872, -1. , 1.38629436, 0. ,

0.69314718, 0.69314718, 5.03043792, 0.69314718, -1. ,

2.7080502 , -1. , 1.09861229, 2.89037176, 0.69314718,

5.21493576, 2.63905733, 0.69314718, 0.69314718, 0.69314718,

1.09861229, 0.69314718, 2.56494936, 0. , -1. ,

5.91620206, 5.47227067, 0. , 1.38629436, 0.69314718,

2.39789527, 1.09861229, 0.69314718, 0.69314718, 0.69314718,

0.69314718, 0.69314718, -1. , 4.31748811, 3.66356165,

0.69314718, 2.7080502 , 2.94443898, 1.09861229, 0. ,

7.98002359, 4.02535169, 2.19722458, 6.20253552, 3.58351894,

2.19722458, 0. , 0. , 5.5174529 , 0. ,

0. , -1. , 3.66356165, 0. , 1.94591015,

3.33220451, 8.00703401, 5.19295685, 0.69314718, 0. ,

0. , 1.09861229, 2.89037176, 1.09861229, 2.99573227,

0. , 0.69314718, 0. , 3.13549422, -1. ]),

array([5.56452041, 2.99573227, 1.09861229, 0.69314718, 4.65396035,

4.15888308, 1.60943791, 3.4339872 , 3.61091791, 2.19722458,

5.25227343, 1.09861229, 0. , 0. , 3.21887582,

3.04452244, 1.38629436, 1.60943791, 3.97029191, 1.60943791,

1.79175947, 0. , 0. , 1.09861229, 1.09861229,

1.09861229, 1.09861229, 2.07944154, 4.11087386, 2.63905733,

4.27666612, 0.69314718, 2.30258509, 1.60943791, 0.69314718,

2.63905733, 2.63905733, 0. , 0. , 1.38629436,

3.13549422, 0.69314718, 4.12713439, 1.79175947, 3.04452244,

4.11087386, 3.40119738, 3.98898405, 1.38629436, 0.69314718,

3.17805383, 1.60943791, 1.79175947, 4.55387689, 0. ,

2.30258509, 0. , 0.69314718, 3.8501476 , 1.60943791,

0.69314718, 3.55534806, 0.69314718, 2.48490665, 0.69314718,

4.88280192, 1.09861229, 1.79175947, 0.69314718, 0. ,

2.30258509, 0. , 0. , 0. , 2.94443898,

1.09861229, 0. , 0. , 2.48490665, 1.09861229,

1.09861229, 0. , 0. , 0. , 0.69314718,

0.69314718, 1.60943791, 1.38629436, 1.79175947, 2.07944154,

0. , 1.60943791, 4.33073334, 1.38629436, 1.09861229,

1.79175947, 2.89037176, 2.07944154, 2.7080502 , 3.25809654,

3.63758616, 1.60943791, 0. , 3.04452244, 0.69314718,

3.97029191, 1.09861229, 0. , 1.60943791, 1.38629436,

1.38629436, 2.19722458, 7.94307272, 3.36729583, 0.69314718,

2.7080502 , 3.33220451, 3.80666249, 3.63758616, 2.19722458,

3.58351894, 2.99573227, 1.60943791, 0. , 3.52636052,

1.79175947, 2.30258509, 0. , 0. , 1.94591015,

2.39789527, 0.69314718, 1.38629436, 2.30258509, 0. ,

1.38629436, 0. , 1.94591015, 3.55534806, 2.07944154,

1.38629436, 1.38629436, 3.25809654, 2.63905733, 1.09861229,

6.4035742 , 3.68887945, 1.60943791, 3.09104245, 4.20469262,

0. , 0.69314718, 1.09861229, 0. , 1.09861229,

0.69314718, 1.09861229, 2.07944154, 1.38629436, 1.38629436,

1.60943791, 1.38629436, 0. , 1.38629436, 2.7080502 ,

0. , 2.77258872, 3.17805383, 4.34380542, 3.40119738,

0. , 2.39789527, 1.94591015, 5.04985601, 0. ,

2.19722458, 0.69314718, 0.69314718, 0.69314718, 1.38629436,

2.39789527, 1.94591015, 0. , 1.94591015, 0. ,

0. , 1.09861229, 1.79175947, 2.07944154, 2.19722458,

1.79175947, 0.69314718, 1.79175947, 3.17805383, 6.28413416,

4.73619845, 0.69314718, 1.94591015, 1.94591015, 0. ]),

array([0. , 3.58351894, 2.56494936, 1.60943791, 3.33220451,

3.71357207, 0.69314718, 2.39789527, 3.13549422, 3.17805383,

3.25809654, 0.69314718, 0. , 0. , 3.61091791,

1.60943791, 1.09861229, 0.69314718, 1.94591015, 0.69314718,

3.61091791, 0. , 0. , 1.38629436, 0.69314718,

1.79175947, 2.83321334, 0.69314718, 2.48490665, 0.69314718,

1.38629436, 0. , 1.79175947, 1.60943791, 3.17805383,

1.79175947, 1.09861229, 0.69314718, 0. , 1.38629436,

1.79175947, 0.69314718, 0. , 2.07944154, 2.48490665,

3.04452244, 3.4339872 , 2.7080502 , 1.79175947, 0.69314718,

0. , 1.60943791, 1.60943791, 2.07944154, 0. ,

0. , 2.07944154, 0. , 1.94591015, 1.38629436,

0.69314718, 1.38629436, 0. , 2.30258509, 0.69314718,

0.69314718, 0.69314718, 2.94443898, 2.94443898, 0. ,

0. , 2.63905733, 0. , 0. , 2.83321334,

1.79175947, 0. , 3.04452244, 1.79175947, 0. ,

2.77258872, 1.38629436, 0. , 3.13549422, 0.69314718,

0. , 2.07944154, 1.09861229, 0.69314718, 2.30258509,

0. , 0.69314718, 3.09104245, 2.07944154, 0. ,

0. , 1.60943791, 2.7080502 , 0.69314718, 2.30258509,

2.39789527, 0. , 0. , 2.19722458, 0.69314718,

2.77258872, 1.94591015, 0. , 1.60943791, 1.09861229,

0. , 0. , 1.79175947, 3.13549422, 3.52636052,

1.38629436, 3.66356165, 1.60943791, 4.47733681, 1.79175947,

1.60943791, 3.04452244, 0. , 2.07944154, 2.56494936,

1.38629436, 0.69314718, 0. , 1.79175947, 1.09861229,

1.94591015, 2.77258872, 1.38629436, 0. , 0. ,

1.09861229, 0. , 1.09861229, 2.48490665, 2.19722458,

1.38629436, 2.39789527, 3.13549422, 1.38629436, 1.09861229,

2.48490665, 1.94591015, 1.09861229, 1.79175947, 3.40119738,

1.38629436, 0.69314718, 1.38629436, 3.21887582, 2.89037176,

0. , 1.38629436, 2.56494936, 0.69314718, 0.69314718,

1.09861229, 2.7080502 , 0. , 1.60943791, 3.8501476 ,

1.09861229, 2.48490665, 0. , 1.60943791, 1.79175947,

0. , 2.56494936, 1.38629436, 1.09861229, 0. ,

2.56494936, 0. , 0. , 0.69314718, 1.09861229,

1.09861229, 1.94591015, 0. , 1.94591015, 0. ,

0. , 0. , 0.69314718, 1.09861229, 1.94591015,

1.94591015, 2.48490665, 2.07944154, 3.04452244, 2.07944154,

1.38629436, 0.69314718, 1.38629436, 3.13549422, 0. ]),

array([ 9.77956697, 10.31731758, 7.60787807, 9.73133412, 7.5963923 ,

7.29369772, 7.48885296, 0.69314718, 8.45212119, 3.4339872 ,

2.19722458, 8.61866616, 9.82146372, 6.5971457 , 8.52178264,

13.13692484, 9.22975077, 7.69666708, 3.61091791, 1.60943791,

12.3872352 , 7.3607399 , 7.28961052, 7.43897159, 7.98616486,

2.94443898, 9.57533065, 8.13534695, 2.48490665, 8.05547514,

5.60211882, 1.09861229, 3.09104245, 0.69314718, 8.06148687,

8.50512061, 10.99986401, 9.7251381 , 7.39817409, 6.93439721,

9.24232342, 6.51767127, 8.10681604, 7.12205988, 1.60943791,

9.38117959, 4.72738782, 7.31322039, 9.76457025, 8.04462628,

10.0683662 , 9.51259081, 0. , 5.02388052, 0. ,

9.79300282, 2.39789527, 8.20740183, 6.95368421, 11.22806607,

0. , 0. , 8.96380024, 3.21887582, 8.91958692,

0. , 7.33106031, 6.16541785, 9.2865604 , 6.27098843,

0. , 9.25607826, 10.23113525, 8.22496748, 5.18738581,

8.79694389, 10.27890574, 9.98322252, 8.37239861, 11.05962948,

7.90581031, 7.45760929, 5.88053299, 3.63758616, 10.10699966,

9.51782507, 7.35819375, 0. , 7.46851327, 5.59842196,

9.57831128, 12.3676216 , 5.50938834, 9.59886276, 8.37054761,

9.37067191, 7.32514896, 8.23004431, 4.77912349, 7.24208236,

2.77258872, 9.35314117, 4.53259949, 1.79175947, 5.60211882,

5.95064255, 7.42892719, 7.28344823, 9.41295463, 5.65599181,

8.53542596, 10.46891499, 1.09861229, 2.48490665, 9.37373392,

8.00936308, 7.31322039, 9.4045905 , 5.25227343, 10.15272761,

5.25227343, 0.69314718, 3.36729583, 9.2444519 , 4.44265126,

9.09537835, 9.93081085, 7.3395377 , 7.56060116, 4.26267988,

9.36443391, 6.30809844, 7.52185925, 8.76904081, 9.93532531,

12.63146129, 0. , 2.19722458, 7.48717369, 8.41227702,

8.66198594, 3.8918203 , 10.58215541, 8.63887971, 7.49331725,

1.09861229, 3.8918203 , 1.79175947, 7.96067261, 7.98650494,

5.88053299, 8.43814998, 9.24261393, 7.52510075, 2.07944154,

8.66250492, 8.12563099, 8.01035959, 4.15888308, 6.33505425,

9.06935268, 5.86363118, 7.24351297, 9.79768249, 8.76826315,

9.58169701, 8.32093497, 10.64601996, 0. , 3.71357207,

3.8918203 , 5.70378247, 1.79175947, 0.69314718, 12.58808146,

10.58009876, 4.53259949, 9.85686753, 11.28503316, 8.05547514,

10.63842426, 6.77193556, 10.67373465, 11.72826263, 5.70711026,

11.63407173, 8.05038445, 7.28961052, 7.97625194, 4.83628191,

8.77369415, 7.65917137, 8.74655735, 5.00394631, 11.03438954,

8.01862547, 7.38274645, 0. , 5.31811999, 4.93447393]),

array([0. , 5.51342875, 5.10594547, 5.30330491, 4.96284463,

4.12713439, 4.18965474, 1.38629436, 5.38449506, 2.48490665,

3.29583687, 0.69314718, 6.13556489, 1.09861229, 6.0799332 ,

0. , 0. , 0. , 3.61091791, 0.69314718,

0. , 0. , 1.60943791, 3.09104245, 3.68887945,

1.79175947, 4.38202663, 4.65396035, 2.07944154, 5.09986643,

2.99573227, 0. , 1.94591015, 0. , 4.99721227,

4.94875989, 5.68017261, 1.09861229, 4.18965474, 1.38629436,

0. , 0. , 0. , 2.63905733, 2.48490665,

3.04452244, 3.4339872 , 3.04452244, 0. , 4.29045944,

0. , 0. , 0. , 3.66356165, 0. ,

4.09434456, 1.94591015, 3.76120012, 4.72738782, 0. ,

0. , 0. , 4.78749174, 2.30258509, 2.30258509,

0.69314718, 1.38629436, 4.15888308, 0. , 2.77258872,

0. , 5.14166356, 0. , 3.04452244, 3.49650756,

4.65396035, 0. , 6.94119006, 4.12713439, 5.98896142,

5.35185813, 3.04452244, 0. , 3.13549422, 3.78418963,

0. , 4.26267988, 0. , 3.63758616, 2.48490665,

5.79605775, 0. , 4.24849524, 3.66356165, 0. ,

6.19644413, 4.47733681, 6.47234629, 0.69314718, 3.68887945,

0. , 0. , 0. , 3.13549422, 5.14166356,

0. , 6.31173481, 0. , 0. , 1.79175947,

0. , 6.74993119, 1.38629436, 3.21887582, 0. ,

2.77258872, 4.30406509, 0. , 4.51085951, 0. ,

4.4543473 , 3.04452244, 0. , 4.21950771, 4.15888308,

4.2341065 , 0. , 4.75359019, 3.13549422, 2.39789527,

0. , 3.21887582, 4.57471098, 4.91998093, 0. ,

0. , 0. , 2.30258509, 3.93182563, 5.22035583,

0. , 2.83321334, 4.20469262, 3.91202301, 1.60943791,

2.48490665, 2.7080502 , 1.38629436, 0. , 4.47733681,

0. , 3.98898405, 6.47543272, 3.61091791, 1.60943791,

5.10594547, 0. , 4.84418709, 0.69314718, 0. ,

2.48490665, 3.93182563, 0. , 3.49650756, 6.79009724,

6.79794041, 4.83628191, 0. , 1.60943791, 1.79175947,

2.89037176, 0. , 1.38629436, 0. , 0. ,

7.50714108, 0. , 2.48490665, 0. , 3.09104245,

4.44265126, 3.4657359 , 6.52356231, 0. , 3.25809654,

7.65302041, 3.87120101, 1.94591015, 1.09861229, 4.81218436,

4.54329478, 4.38202663, 0. , 0. , 0. ,

6.35610766, 2.56494936, 0. , 5.0369526 , 0. ]),

array([0. , 0.69314718, 1.94591015, 1.79175947, 1.60943791,

1.60943791, 2.7080502 , 3.52636052, 2.30258509, 1.09861229,

1.09861229, 3.73766962, 3.17805383, 3.13549422, 3.25809654,

0. , 0. , 0. , 3.4339872 , 1.09861229,

0. , 0. , 1.79175947, 1.94591015, 2.89037176,

2.30258509, 1.09861229, 2.39789527, 2.30258509, 0.69314718,

0.69314718, 1.60943791, 3.55534806, 2.39789527, 4.14313473,

0.69314718, 0. , 0.69314718, 3.21887582, 4.48863637,

0. , 0. , 0. , 2.30258509, 3.21887582,

0.69314718, 3.33220451, 2.48490665, 0. , 1.38629436,

0. , 0. , 0. , 1.09861229, 0. ,

1.09861229, 3.40119738, 1.09861229, 1.79175947, 0. ,

0. , 0. , 0.69314718, 4.7095302 , 2.30258509,

2.7080502 , 2.30258509, 2.77258872, 0. , 2.19722458,

0. , 1.60943791, 0. , 0.69314718, 3.55534806,

0.69314718, 0. , 2.30258509, 1.60943791, 0. ,

1.79175947, 0.69314718, 0. , 1.60943791, 1.60943791,

0.69314718, 1.60943791, 0. , 0.69314718, 2.56494936,

2.77258872, 0. , 0.69314718, 1.60943791, 2.19722458,

2.39789527, 0.69314718, 0. , 1.60943791, 1.79175947,

0. , 0. , 0. , 5.15329159, 0.69314718,

0. , 1.79175947, 0. , 0. , 1.94591015,

1.94591015, 1.09861229, 3.29583687, 1.79175947, 0. ,

1.94591015, 2.7080502 , 0. , 1.38629436, 0. ,

3.78418963, 1.09861229, 0.69314718, 0.69314718, 2.19722458,

1.09861229, 0. , 2.89037176, 3.93182563, 5.5174529 ,

0. , 2.56494936, 2.56494936, 1.09861229, 0. ,

0. , 0. , 0.69314718, 0.69314718, 1.09861229,

0. , 2.48490665, 0.69314718, 1.38629436, 0.69314718,

1.09861229, 2.63905733, 3.25809654, 0. , 4.24849524,

0. , 2.89037176, 2.48490665, 2.39789527, 1.60943791,

1.79175947, 0. , 1.79175947, 3.09104245, 0. ,

0.69314718, 2.19722458, 0.69314718, 2.48490665, 2.56494936,

1.38629436, 1.60943791, 0. , 0.69314718, 0.69314718,

3.04452244, 0. , 3.25809654, 2.19722458, 0. ,

0. , 1.79175947, 2.48490665, 0. , 1.60943791,

0. , 3.63758616, 0. , 0. , 1.09861229,

0. , 0.69314718, 4.26267988, 5.71042702, 1.79175947,

2.99573227, 1.94591015, 0. , 0. , 0. ,

1.09861229, 0.69314718, 2.99573227, 4.39444915, 0.69314718]),

array([3.52636052, 3.58351894, 3.58351894, 1.60943791, 3.49650756,

3.63758616, 3.25809654, 3.87120101, 3.58351894, 2.19722458,

3.33220451, 0.69314718, 1.60943791, 1.09861229, 3.49650756,

1.60943791, 3.49650756, 0.69314718, 3.21887582, 0.69314718,

0. , 2.89037176, 1.38629436, 2.39789527, 1.38629436,

1.79175947, 2.83321334, 1.09861229, 3.4339872 , 0.69314718,

1.94591015, 0. , 1.79175947, 1.60943791, 0. ,

3.13549422, 1.09861229, 0.69314718, 0.69314718, 1.38629436,

1.79175947, 1.60943791, 0.69314718, 2.77258872, 2.7080502 ,

2.99573227, 3.52636052, 2.99573227, 2.19722458, 0.69314718,

1.09861229, 2.07944154, 1.60943791, 3.4339872 , 0. ,

0. , 3.73766962, 3.4339872 , 3.78418963, 1.38629436,

0.69314718, 1.38629436, 2.99573227, 2.30258509, 0.69314718,

2.39789527, 1.09861229, 1.60943791, 0.69314718, 1.09861229,

2.07944154, 2.63905733, 1.38629436, 1.38629436, 3.55534806,

2.89037176, 0. , 0.69314718, 2.7080502 , 2.07944154,

3.78418963, 2.7080502 , 2.39789527, 0.69314718, 2.56494936,

0. , 3.76120012, 1.38629436, 2.19722458, 2.77258872,

0. , 1.38629436, 3.52636052, 2.83321334, 0. ,

2.63905733, 1.79175947, 3.63758616, 0.69314718, 3.49650756,

2.39789527, 0. , 0. , 3.09104245, 2.99573227,

3.09104245, 3.8918203 , 1.94591015, 2.99573227, 2.19722458,

0. , 0. , 3.91202301, 3.13549422, 0.69314718,

1.79175947, 3.58351894, 1.60943791, 3.91202301, 1.38629436,

2.94443898, 2.7080502 , 0. , 2.83321334, 2.99573227,

3.76120012, 2.56494936, 3.04452244, 1.79175947, 0.69314718,

2.39789527, 2.94443898, 1.94591015, 1.94591015, 1.79175947,

1.09861229, 0. , 1.09861229, 3.25809654, 1.60943791,

1.38629436, 2.39789527, 3.36729583, 1.38629436, 1.09861229,

2.48490665, 3.4339872 , 2.99573227, 3.4657359 , 3.58351894,

1.60943791, 2.83321334, 1.38629436, 2.30258509, 2.94443898,

1.94591015, 1.79175947, 3.73766962, 1.09861229, 1.79175947,

1.38629436, 3.58351894, 0. , 2.30258509, 2.30258509,

2.07944154, 3.58351894, 0. , 1.60943791, 1.79175947,

2.39789527, 3.17805383, 2.48490665, 2.07944154, 2.07944154,

2.99573227, 0. , 0. , 0.69314718, 1.38629436,

1.79175947, 3.76120012, 1.09861229, 1.79175947, 3.25809654,

1.09861229, 0. , 1.94591015, 1.38629436, 3.55534806,

2.07944154, 1.09861229, 3.76120012, 3.04452244, 2.07944154,

1.38629436, 2.56494936, 1.38629436, 2.94443898, 0. ]),

array([0. , 5.08140436, 6.26149168, 3.40119738, 3.63758616,

3.8501476 , 6.19440539, 4.84418709, 4.91265489, 3.17805383,

3.25809654, 3.52636052, 5.44673737, 1.09861229, 5.26269019,

1.60943791, 4.02535169, 0.69314718, 5.64190707, 0.69314718,

4.82028157, 4.02535169, 1.60943791, 3.09104245, 6.08221891,

1.79175947, 4.6443909 , 1.94591015, 3.68887945, 3.49650756,

2.99573227, 0. , 1.79175947, 1.60943791, 6.62539237,

4.12713439, 4.47733681, 0.69314718, 4.73619845, 4.88280192,

2.63905733, 1.60943791, 0. , 4.49980967, 4.24849524,

3.04452244, 4.97673374, 4.59511985, 2.19722458, 3.8918203 ,

3.87120101, 3.58351894, 1.60943791, 3.29583687, 0. ,

4.59511985, 5.66642669, 3.63758616, 4.71849887, 1.38629436,

0.69314718, 1.38629436, 3.4339872 , 5.00394631, 2.30258509,

3.71357207, 1.09861229, 6.68959927, 5.64190707, 6.37331979,

2.83321334, 4.57471098, 0. , 3.04452244, 5.30330491,

4.31748811, 0. , 5.02388052, 4.2341065 , 4.81218436,

5.49306144, 2.83321334, 2.48490665, 4.91265489, 4.14313473,

0. , 4.77068462, 2.63905733, 2.48490665, 6.3526294 ,

6.07073773, 0.69314718, 3.52636052, 3.25809654, 0. ,

4.94875989, 2.48490665, 6.07073773, 3.49650756, 3.63758616,

2.39789527, 0.69314718, 0. , 6.34388043, 5.28320373,

3.09104245, 6.02344759, 2.39789527, 4.30406509, 3.4339872 ,

1.38629436, 4.59511985, 4.36944785, 3.13549422, 6.22059017,

4.99043259, 5.59842196, 1.60943791, 4.48863637, 1.79175947,

5.18178355, 3.04452244, 0. , 4.21950771, 2.94443898,

5.12989871, 0. , 5.62401751, 4.59511985, 6.94215671,

1.94591015, 3.04452244, 2.48490665, 4.59511985, 2.19722458,

4.66343909, 0. , 1.09861229, 4.63472899, 5.22035583,

2.30258509, 5.09986643, 4.11087386, 4.09434456, 1.09861229,

2.48490665, 4.2341065 , 4.73619845, 0.69314718, 4.41884061,

1.79175947, 5.6094718 , 4.85203026, 5.170484 , 2.94443898,

5.08140436, 1.60943791, 4.80402104, 4.69134788, 1.38629436,

2.07944154, 3.63758616, 0. , 4.59511985, 6.76041469,

4.07753744, 4.71849887, 0. , 1.60943791, 1.79175947,

4.44265126, 3.29583687, 6.58340922, 3.8286414 , 0. ,

5.7651911 , 0. , 4.49980967, 0. , 3.21887582,

3.91202301, 5.81413053, 3.04452244, 2.99573227, 4.2341065 ,

4.56434819, 0.69314718, 2.83321334, 4.00733319, 4.68213123,

3.63758616, 4.74493213, 0.69314718, 3.04452244, 1.09861229,

5.37063803, 2.77258872, 1.38629436, 6.23244802, 0. ]),

array([0. , 0. , 0. , 0. , 0. ,

0. , 0. , 1.38629436, 0. , 0.69314718,

0.69314718, 0. , 0. , 0.69314718, 0. ,

0. , 0. , 0. , 0.69314718, 0.69314718,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 0. , 0. , 0.69314718, 0. ,

0.69314718, 0.69314718, 0.69314718, 0.69314718, 0. ,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0. , 0. , 0.69314718,

0. , 1.09861229, 0. , 0. , 0. ,

0. , 0. , 0. , 0.69314718, 0. ,

0. , 0.69314718, 0. , 0.69314718, 0. ,

0. , 0. , 0. , 0.69314718, 0. ,

0.69314718, 0. , 0.69314718, 0. , 0.69314718,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0.69314718, 0. ,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0.69314718, 0. ,

0. , 0. , 0. , 0.69314718, 0. ,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0. , 1.38629436, 0. ,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 0.69314718, 0.69314718, 0. , 0.69314718,

0. , 0. , 0. , 0. , 0.69314718,

0. , 1.09861229, 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 1.09861229, 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 0.69314718, 0. , 0. , 0. ,

0. , 0. , 0. , 0.69314718, 0.69314718,

0.69314718, 0. , 0.69314718, 0.69314718, 0. ,

0. , 0.69314718, 0. , 0. , 0. ,

0. , 0.69314718, 0. , 0. , 0.69314718,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0.69314718]),

array([0. , 0.69314718, 1.38629436, 1.09861229, 0.69314718,

0.69314718, 2.07944154, 1.79175947, 0.69314718, 0.69314718,

0.69314718, 1.79175947, 1.09861229, 1.60943791, 2.30258509,

0. , 0. , 0. , 1.79175947, 0.69314718,

0. , 0. , 0.69314718, 0.69314718, 2.07944154,

0.69314718, 0.69314718, 0.69314718, 1.09861229, 0.69314718,

0.69314718, 1.09861229, 1.60943791, 0.69314718, 2.30258509,

0.69314718, 0. , 0.69314718, 1.94591015, 2.77258872,

0. , 0. , 0. , 1.38629436, 2.07944154,

0.69314718, 1.60943791, 1.38629436, 0. , 0.69314718,

0. , 0. , 0. , 0.69314718, 0. ,

0.69314718, 1.60943791, 0.69314718, 0.69314718, 0. ,

0. , 0. , 0.69314718, 2.39789527, 0.69314718,

1.38629436, 0.69314718, 1.60943791, 0. , 1.60943791,

0. , 0.69314718, 0. , 0.69314718, 2.30258509,

0.69314718, 0. , 1.09861229, 1.09861229, 0. ,

1.09861229, 0.69314718, 0. , 1.38629436, 0.69314718,

0.69314718, 0.69314718, 0. , 0.69314718, 2.07944154,

2.30258509, 0. , 0.69314718, 1.38629436, 0.69314718,

0.69314718, 0.69314718, 0. , 0.69314718, 1.09861229,

0. , 0. , 0. , 3.09104245, 0.69314718,

0. , 0.69314718, 0. , 0. , 1.60943791,

1.79175947, 0.69314718, 0.69314718, 1.38629436, 0. ,

1.38629436, 1.60943791, 0. , 1.09861229, 0. ,

1.38629436, 0.69314718, 0.69314718, 0.69314718, 1.09861229,

1.09861229, 0. , 1.79175947, 1.60943791, 3.49650756,

0. , 1.09861229, 0.69314718, 0.69314718, 0. ,

0. , 0. , 0.69314718, 0.69314718, 0.69314718,

0. , 1.38629436, 0. , 0.69314718, 0.69314718,

1.09861229, 1.60943791, 1.09861229, 0. , 1.79175947,

0. , 0.69314718, 1.38629436, 1.09861229, 0.69314718,

1.79175947, 0. , 1.09861229, 2.30258509, 0. ,

0.69314718, 0.69314718, 0.69314718, 2.39789527, 0.69314718,

0.69314718, 0.69314718, 0. , 0.69314718, 0.69314718,

1.09861229, 0. , 1.94591015, 2.07944154, 0. ,

0. , 0.69314718, 1.09861229, 0. , 1.09861229,

0. , 2.83321334, 0. , 0. , 0.69314718,

0. , 0.69314718, 2.39789527, 2.77258872, 1.38629436,

0.69314718, 1.38629436, 0. , 0. , 0. ,

0.69314718, 0.69314718, 2.30258509, 2.48490665, 0.69314718]),

array([0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 0.69314718,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 2.07944154, 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0.69314718, 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 1.09861229, 0. , 0. ,

0. , 0. , 0. , 0. , 1.38629436,

0. , 0. , 0. , 1.09861229, 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ,

0.69314718, 0.69314718, 0. , 0. , 0. ,

0. , 0. , 0. , 0. , 0. ]),

array([0. , 3.58351894, 2.94443898, 1.60943791, 3.33220451,

3.71357207, 3.25809654, 1.09861229, 3.78418963, 2.48490665,

3.25809654, 0.69314718, 0. , 0. , 3.61091791,

1.60943791, 1.09861229, 0.69314718, 1.94591015, 0.69314718,

4.14313473, 0. , 0. , 2.07944154, 0.69314718,

1.79175947, 2.83321334, 0.69314718, 2.07944154, 0.69314718,

2.99573227, 0. , 1.79175947, 0. , 3.68887945,

2.48490665, 1.09861229, 0.69314718, 0. , 1.38629436,

1.79175947, 0.69314718, 0. , 2.07944154, 2.48490665,

3.04452244, 3.4339872 , 2.7080502 , 1.94591015, 0.69314718,

1.09861229, 1.60943791, 0.69314718, 3.25809654, 0. ,

0. , 1.79175947, 0. , 1.94591015, 1.38629436,

0.69314718, 1.38629436, 0. , 2.30258509, 0.69314718,

0. , 0.69314718, 4.15888308, 2.94443898, 0. ,

2.07944154, 2.63905733, 0. , 0. , 2.83321334,

1.79175947, 0. , 3.78418963, 1.79175947, 0.69314718,

2.77258872, 1.38629436, 0. , 3.13549422, 0.69314718,

0. , 3.61091791, 1.09861229, 0.69314718, 2.30258509,

0. , 0.69314718, 3.4657359 , 2.89037176, 0. ,

0.69314718, 1.60943791, 2.7080502 , 0.69314718, 2.39789527,

2.39789527, 0. , 0. , 0. , 0.69314718,

2.77258872, 4.63472899, 1.09861229, 1.60943791, 1.79175947,

0. , 0. , 1.38629436, 3.09104245, 3.52636052,

1.38629436, 3.66356165, 1.60943791, 4.48863637, 1.79175947,

2.19722458, 2.56494936, 0. , 2.07944154, 2.94443898,

1.38629436, 1.60943791, 0. , 1.79175947, 1.09861229,

1.94591015, 2.83321334, 1.38629436, 1.09861229, 0. ,

1.09861229, 0. , 1.09861229, 2.48490665, 3.8501476 ,

1.38629436, 1.94591015, 3.40119738, 1.38629436, 1.09861229,

2.48490665, 1.94591015, 1.09861229, 3.4657359 , 3.49650756,

1.38629436, 0.69314718, 1.38629436, 3.21887582, 1.38629436,

1.79175947, 1.38629436, 2.56494936, 0.69314718, 0.69314718,

1.09861229, 2.94443898, 0. , 1.60943791, 3.8501476 ,

1.09861229, 2.7080502 , 0. , 1.60943791, 1.79175947,

0. , 3.29583687, 1.38629436, 0. , 0. ,

2.56494936, 0. , 0. , 0.69314718, 1.09861229,

1.09861229, 1.94591015, 0. , 1.94591015, 0. ,

0. , 0. , 1.38629436, 1.09861229, 3.21887582,

2.07944154, 2.48490665, 3.58351894, 3.04452244, 2.07944154,

1.38629436, 2.56494936, 0. , 3.13549422, 0. ])]
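The values printed above look like the result of a log transform: 0.69314718 is ln 2, 1.09861229 is ln 3, and the second array (I2) keeps some entries at exactly -1. The sketch below is a reconstruction inferred from those numbers, assuming missing values are filled with 0 and `log(x + 1)` is applied only where `x > -1`. The function name `log_transform` is hypothetical, and the preprocessing code actually used is not shown in this section.

```python
import numpy as np


def log_transform(values):
    """Hypothetical reconstruction: fill NaN with 0, then log(x + 1) where x > -1."""
    x = np.asarray(values, dtype=float)
    x = np.where(np.isnan(x), 0.0, x)    # missing values become 0
    out = np.full_like(x, -1.0)          # entries with x <= -1 stay at -1
    mask = x > -1
    out[mask] = np.log(x[mask] + 1.0)
    return out


# First five raw I2 values as shown by data.head()
raw_i2 = [3, -1, 0, 13, 0]
print(log_transform(raw_i2))
```

The result can be compared with the first five entries of the second array in `train_dense_x`.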

train_model_input

{'I1': 0 0.000000

1 0.000000

2 0.000000

3 0.000000

4 0.000000

...

195 0.000000

196 0.000000

197 0.693147

198 0.000000

199 0.693147

Name: I1, Length: 200, dtype: float64,

'I2': 0 1.386294

1 -1.000000

2 0.000000

3 2.639057

4 0.000000

...

195 0.000000

196 0.693147

197 0.000000

198 3.135494

199 -1.000000

Name: I2, Length: 200, dtype: float64,

'I3': 0 5.564520

1 2.995732

2 1.098612

3 0.693147

4 4.653960

...

195 4.736198

196 0.693147

197 1.945910

198 1.945910

199 0.000000

Name: I3, Length: 200, dtype: float64,

'I4': 0 0.000000

1 3.583519

2 2.564949

3 1.609438

4 3.332205

...

195 1.386294

196 0.693147

197 1.386294

198 3.135494

199 0.000000

Name: I4, Length: 200, dtype: float64,

'I5': 0 9.779567

1 10.317318

2 7.607878

3 9.731334

4 7.596392

...

195 8.018625

196 7.382746

197 0.000000

198 5.318120

199 4.934474

Name: I5, Length: 200, dtype: float64,

'I6': 0 0.000000

1 5.513429

2 5.105945

3 5.303305

4 4.962845

...

195 6.356108

196 2.564949

197 0.000000

198 5.036953

199 0.000000

Name: I6, Length: 200, dtype: float64,

'I7': 0 0.000000

1 0.693147

2 1.945910

3 1.791759

4 1.609438

...

195 1.098612

196 0.693147

197 2.995732

198 4.394449

199 0.693147

Name: I7, Length: 200, dtype: float64,

'I8': 0 3.526361

1 3.583519

2 3.583519

3 1.609438

4 3.496508

...

195 1.386294

196 2.564949

197 1.386294

198 2.944439

199 0.000000

Name: I8, Length: 200, dtype: float64,

'I9': 0 0.000000

1 5.081404

2 6.261492

3 3.401197

4 3.637586

...

195 5.370638

196 2.772589

197 1.386294

198 6.232448

199 0.000000

Name: I9, Length: 200, dtype: float64,

'I10': 0 0.000000

1 0.000000

2 0.000000

3 0.000000

4 0.000000

...

195 0.000000

196 0.000000

197 0.693147

198 0.000000

199 0.693147

Name: I10, Length: 200, dtype: float64,

'I11': 0 0.000000

1 0.693147

2 1.386294

3 1.098612

4 0.693147

...

195 0.693147

196 0.693147

197 2.302585

198 2.484907

199 0.693147

Name: I11, Length: 200, dtype: float64,

'I12': 0 0.0

1 0.0

2 0.0

3 0.0

4 0.0

...

195 0.0

196 0.0

197 0.0

198 0.0

199 0.0

Name: I12, Length: 200, dtype: float64,

'I13': 0 0.000000

1 3.583519

2 2.944439

3 1.609438

4 3.332205

...

195 1.386294

196 2.564949

197 0.000000

198 3.135494

199 0.000000

Name: I13, Length: 200, dtype: float64,

'C1': 0 0

1 11

2 0

3 0

4 0

..

195 0

196 21

197 0

198 0

199 21

Name: C1, Length: 200, dtype: int32,

'C2': 0 4

1 1

2 18

3 45

4 11

..

195 0

196 64

197 5

198 84

199 68

Name: C2, Length: 200, dtype: int32,

'C3': 0 96

1 98

2 39

3 7

4 59

...

195 66

196 44

197 153

198 84

199 0

Name: C3, Length: 200, dtype: int32,

'C4': 0 146

1 98

2 52

3 117

4 77

...

195 107

196 65

197 143

198 41

199 0

Name: C4, Length: 200, dtype: int32,

'C5': 0 1

1 1

2 3

3 1

4 1

..

195 1

196 1

197 1

198 5

199 5

Name: C5, Length: 200, dtype: int32,

'C6': 0 4

1 6

2 4

3 0

4 5

..

195 4

196 6

197 0

198 4

199 0

Name: C6, Length: 200, dtype: int32,

'C7': 0 163

1 179

2 140

3 164

4 18

...

195 10

196 4

197 141

198 27

199 19

Name: C7, Length: 200, dtype: int32,

'C8': 0 1

1 0

2 2

3 1

4 1

..

195 15

196 2

197 1

198 1

199 1

Name: C8, Length: 200, dtype: int32,

'C9': 0 1

1 1

2 1

3 0

4 1

..

195 1

196 1

197 1

198 1

199 1

Name: C9, Length: 200, dtype: int32,

'C10': 0 72

1 89

2 93

3 20

4 45

...

195 11

196 9

197 118

198 96

199 61

Name: C10, Length: 200, dtype: int32,

'C11': 0 117

1 58

2 31

3 61

4 171

...

195 87

196 130

197 30

198 110

199 149

Name: C11, Length: 200, dtype: int32,

'C12': 0 127

1 97

2 122

3 104

4 162

...

195 6

196 96

197 128

198 67

199 0

Name: C12, Length: 200, dtype: int32,

'C13': 0 157

1 79

2 16

3 36

4 96

...

195 73

196 57

197 69

198 63

199 14

Name: C13, Length: 200, dtype: int32,

'C14': 0 7

1 7

2 7

3 1

4 4

..

195 2

196 1

197 13

198 1

199 1

Name: C14, Length: 200, dtype: int32,

'C15': 0 127

1 72

2 129

3 43

4 36

...

195 53

196 106

197 23

198 121

199 24

Name: C15, Length: 200, dtype: int32,

'C16': 0 126

1 26

2 97

3 43

4 121

...

195 49

196 1

197 4

198 75

199 0

Name: C16, Length: 200, dtype: int32,

'C17': 0 8

1 7

2 8

3 8

4 8

..

195 0

196 1

197 4

198 4

199 7

Name: C17, Length: 200, dtype: int32,

'C18': 0 66

1 52

2 49

3 37

4 14

..

195 74

196 25

197 40

198 7

199 72

Name: C18, Length: 200, dtype: int32,

'C19': 0 0

1 0

2 0

3 0

4 5

..

195 5

196 0

197 17

198 18

199 0

Name: C19, Length: 200, dtype: int32,

'C20': 0 0

1 0

2 0

3 0

4 3

..

195 1

196 0

197 2

198 1

199 0

Name: C20, Length: 200, dtype: int32,

'C21': 0 3

1 47

2 25

3 156

4 9

...

195 30

196 138

197 41

198 123

199 0

Name: C21, Length: 200, dtype: int32,

'C22': 0 0

1 0

2 0

3 0

4 0

..

195 5

196 0

197 0

198 0

199 0

Name: C22, Length: 200, dtype: int32,

'C23': 0 1

1 7

2 6

3 0

4 0

..

195 0

196 0

197 0

198 0

199 0

Name: C23, Length: 200, dtype: int32,

'C24': 0 96

1 112

2 53

3 32

4 5

...

195 118

196 68

197 12

198 10

199 0

Name: C24, Length: 200, dtype: int32,

'C25': 0 0

1 0

2 0

3 0

4 1

..

195 17

196 0

197 16

198 16

199 0

Name: C25, Length: 200, dtype: int32,

'C26': 0 0

1 0

2 0

3 0

4 47

..

195 48

196 0

197 11

198 49

199 0

Name: C26, Length: 200, dtype: int32}

from tensorflow.keras.utils import plot_model

plot_model(model, to_file='./DeepCrossing.png',show_shapes=True)

![plot_model output](output_49_0.png)

# Model training

model.fit(train_model_input, train_data['label'].values,

batch_size=64, epochs=5, validation_split=0.2, )

Epoch 1/5

3/3 [==============================] - 0s 77ms/step - loss: 0.4399 - auc: 0.7993 - val_loss: 0.5972 - val_auc: 0.7208

Epoch 2/5

3/3 [==============================] - 0s 26ms/step - loss: 0.4174 - auc: 0.8225 - val_loss: 0.5646 - val_auc: 0.7051

Epoch 3/5

3/3 [==============================] - 0s 20ms/step - loss: 0.4097 - auc: 0.8430 - val_loss: 0.5561 - val_auc: 0.6909

Epoch 4/5

3/3 [==============================] - 0s 19ms/step - loss: 0.4073 - auc: 0.8641 - val_loss: 0.5570 - val_auc: 0.6937

Epoch 5/5

3/3 [==============================] - 0s 19ms/step - loss: 0.3897 - auc: 0.8763 - val_loss: 0.5719 - val_auc: 0.7051

<tensorflow.python.keras.callbacks.History at 0x24a506dff40>
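The `3/3` step count in the log above follows from `batch_size` and `validation_split`. Keras holds out the last fraction of the samples, taken before any shuffling, as validation data and trains on the rest. The arithmetic can be sketched as follows, assuming `train_data` holds all 200 rows of the sample file (the row count is an assumption; only the 200-row sample itself is shown in the post):

```python
import math

n_samples = 200          # assumption: train_data contains all 200 sample rows
validation_split = 0.2
batch_size = 64

# Keras takes the validation set from the END of the arrays, before shuffling.
split_at = int(n_samples * (1 - validation_split))
train_idx = range(0, split_at)
val_idx = range(split_at, n_samples)

# Number of batches per epoch; the last batch may be smaller than batch_size.
steps_per_epoch = math.ceil(len(train_idx) / batch_size)

print(len(train_idx), len(val_idx), steps_per_epoch)
```

With so few validation rows, `val_auc` is noisy, so small epoch-to-epoch differences in the log should not be over-interpreted.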

# Model training, passing the inputs as a list

model.fit(train_dense_x+train_sparse_x, train_data['label'].values,

batch_size=64, epochs=5, validation_split=0.2, )

Epoch 1/5

3/3 [==============================] - 0s 60ms/step - loss: 0.3778 - auc: 0.8881 - val_loss: 0.5980 - val_auc: 0.7066

Epoch 2/5

3/3 [==============================] - 0s 22ms/step - loss: 0.3670 - auc: 0.8998 - val_loss: 0.5927 - val_auc: 0.6909

Epoch 3/5

3/3 [==============================] - 0s 20ms/step - loss: 0.3501 - auc: 0.9107 - val_loss: 0.5797 - val_auc: 0.7009

Epoch 4/5

3/3 [==============================] - 0s 23ms/step - loss: 0.3332 - auc: 0.9247 - val_loss: 0.5725 - val_auc: 0.6994

Epoch 5/5

3/3 [==============================] - 0s 20ms/step - loss: 0.3211 - auc: 0.9316 - val_loss: 0.5785 - val_auc: 0.6923

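2. Model structure and principles

To train end to end, the DeepCrossing model has to solve the following problems inside its network structure:

- After encoding, categorical features are too sparse to feed directly into a neural network, so the sparse feature vectors must be made dense

- How to cross and combine features automatically

- How to reach the optimization objective of the task in the output layer

DeepCrossing assigns a different network layer to each of these problems. The model structure is as follows.

The role of each layer is described below: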

2.1 Embedding Layer

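This layer converts sparse categorical features into dense Embedding vectors whose dimension is much smaller than that of the original sparse feature vector. Embedding is a technique widely used in NLP, for example in Word2Vec and language models, and the idea here is the same. Here Feature #1 is a categorical feature (a sparse vector after one-hot encoding), while Feature #2 is a numeric feature that needs no embedding and goes straight to the Stacking Layer. An Embedding Layer can usually be implemented as a single fully connected layer, and TensorFlow provides a ready-made layer for it.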
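The equivalence between an embedding lookup and a fully connected layer applied to a one-hot vector can be checked with a small NumPy sketch. This is an illustration rather than the Keras code used in this post; the sizes and ids are made up.

```python
import numpy as np

rng = np.random.default_rng(0)

vocab_size, embed_dim = 6, 4      # hypothetical sizes, for illustration only
# Weights of a bias-free fully connected layer: one row per category.
W = rng.normal(size=(vocab_size, embed_dim))

ids = np.array([2, 5, 0])         # label-encoded category ids of 3 samples

# Route 1: one-hot encode, then multiply by the weight matrix.
one_hot = np.eye(vocab_size)[ids]         # shape (3, vocab_size)
dense_via_matmul = one_hot @ W            # shape (3, embed_dim)

# Route 2: pick the rows directly, which is what an embedding lookup does.
dense_via_lookup = W[ids]

print(np.allclose(dense_via_matmul, dense_via_lookup))
```

The lookup avoids materializing the one-hot matrix, which matters when the vocabulary is large.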

2.2 Stacking Layer

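This layer concatenates the Embedding features and the numeric features into a single feature vector that contains all features; it is also commonly called the concatenation layer. In the implementation, all numeric features are concatenated first, then all Embeddings, and finally the two results are concatenated to form the input of the DNN. In TF this is done with the `Concatenate` layer.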
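The three concatenation steps can be sketched with NumPy arrays standing in for the Keras tensors. The feature counts and values below are hypothetical; the point is only how the shapes combine along the last axis.

```python
import numpy as np

batch = 2
# Hypothetical inputs: 3 numeric features, each of shape (batch, 1).
dense_feats = [np.full((batch, 1), v) for v in (0.1, 0.2, 0.3)]
# Hypothetical embeddings: 2 categorical features, each of shape (batch, 4).
embed_feats = [np.ones((batch, 4)), np.zeros((batch, 4))]

dense_block = np.concatenate(dense_feats, axis=1)    # (batch, 3)
embed_block = np.concatenate(embed_feats, axis=1)    # (batch, 8)
# Final stacking: numeric block followed by embedding block.
stacked = np.concatenate([dense_block, embed_block], axis=1)   # (batch, 11)

print(stacked.shape)
```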

2.3 Multiple Residual Units Layer

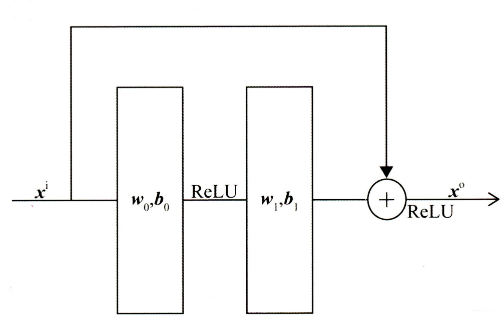

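The main structure of this layer is an MLP, but DeepCrossing connects it with residual connections. Stacking several residual units lets the dimensions of the feature vector cross and combine thoroughly, so the model captures more nonlinear and combined-feature information and gains expressive power. The structure of the residual unit is shown in the figure above.

The Deep Crossing model uses a slightly modified residual unit: it has no convolution kernels and uses two ordinary neural network layers instead. As the figure shows, the residual unit applies two layers of ReLU transformation and then adds the original input features back.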
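A minimal NumPy sketch of one such residual unit follows. It is not the Keras layer from this post: the weights are random, the sizes are hypothetical, and applying the second ReLU after the addition is an assumption about the exact ordering.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def residual_unit(x, W1, b1, W2, b2):
    """Two dense layers with a skip connection around them."""
    hidden = relu(x @ W1 + b1)       # first layer: input size -> hidden size
    projected = hidden @ W2 + b2     # second layer: back to the input size
    # Assumption: the original input is added before the final ReLU.
    return relu(projected + x)

rng = np.random.default_rng(0)
dim, hidden_dim = 11, 32             # hypothetical sizes
x = rng.normal(size=(2, dim))
W1 = rng.normal(scale=0.1, size=(dim, hidden_dim))
b1 = np.zeros(hidden_dim)
W2 = rng.normal(scale=0.1, size=(hidden_dim, dim))
b2 = np.zeros(dim)

out = residual_unit(x, W1, b1, W2, b2)
print(out.shape)                     # same shape as x, so units can be stacked

# With a zeroed second layer the unit reduces to relu(x): the skip path
# carries the input through unchanged.
passthrough = residual_unit(x, W1, b1, np.zeros_like(W2), b2)
```

Because the output has the same width as the input, several units can be chained without any reshaping.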

2.4 Scoring Layer

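This is the output layer, which exists to fit the optimization objective. For CTR prediction, a binary classification problem, Scoring usually uses logistic regression: the model deepens the network by stacking several residual blocks and finally converts the result into a probability.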
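As a rough illustration of the scoring step, the sketch below applies a logistic regression head (one linear layer plus sigmoid) to some made-up feature vectors and evaluates the binary cross-entropy loss. The weights, inputs, and labels are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def binary_cross_entropy(y_true, p, eps=1e-7):
    # Clip to avoid log(0) when a prediction saturates.
    p = np.clip(p, eps, 1.0 - eps)
    return float(np.mean(-(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))))

# Hypothetical outputs of the last residual unit for 2 samples.
features = np.array([[0.5, -1.0, 2.0],
                     [0.0,  0.0, 0.0]])
w = np.array([0.4, 0.3, -0.2])       # hypothetical scoring weights
b = 0.0

probs = sigmoid(features @ w + b)    # click probabilities in (0, 1)
labels = np.array([0.0, 1.0])
loss = binary_cross_entropy(labels, probs)
print(probs, loss)
```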
