1. Goal

Scrape a complete novel and write it to a txt file.

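2. Analysis

1. Analyzing the table-of-contents page: how do we get a clean list of all chapter URLs?

Assume the markup looks like this.

Inspecting the page shows that every link is stored under this tag, and each one sits inside an li:

    <ul class="links" >.........
    <li class=" link">
    <a href="https://xxxxxx.html" target="_blank" title="Chapter 1 xxxx ">Chapter 1 xxxx</a>
    </li>

So how do we collect them?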

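    def getTarget(t):
        """Return the chapter URLs found on the table-of-contents page t."""
        targets = []
        req = requests.get(t, headers=headers, timeout=100).content.decode('utf-8')
        soup = BeautifulSoup(req, "html.parser")
        # every chapter link is an <a> inside <ul class="links">
        links = soup.find('ul', class_='links').find_all('a')
        for link in links:
            # print(link.get('href'))
            targets.append(link.get('href'))
        return targets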

Output: a list containing every chapter link.
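The `href` values collected by `getTarget` are used as-is, which works when the site writes absolute URLs, as in the sample markup. As a hedged sketch, assuming some sites use relative links or repeat a chapter link, a small helper could resolve each `href` against the contents-page URL and drop empty or repeated entries. `normalize_links` is a hypothetical name that does not appear in the original script, and `example.com` is a placeholder.

```python
from urllib.parse import urljoin


def normalize_links(base_url, hrefs):
    """Resolve hrefs against base_url, skipping empty and repeated entries."""
    seen = set()
    result = []
    for href in hrefs:
        if not href:  # <a> tags without an href yield None
            continue
        absolute = urljoin(base_url, href.strip())
        if absolute not in seen:
            seen.add(absolute)
            result.append(absolute)
    return result


if __name__ == "__main__":
    # example.com is a placeholder, not the site from the article
    sample = ["1.html", None, "/book/2.html", "1.html"]
    print(normalize_links("https://example.com/book/", sample))
```

Inside `getTarget`, the last line could then become `return normalize_links(t, targets)`.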

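2. How do we scrape the title and body text of a single chapter?

Use the browser's element inspector to find the tags that hold the title and the body text:

Both are inside the div with class="reader_box", in the p tags under it.

The chapter title is under class="title" inside that div.

The body text is under class="content" inside that div.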

    # Fetch the title and body text of every chapter
    def getContent(urls):
        contents = []
        index = 1
        for url in urls:
            print("Downloading chapter {}...".format(index))
            req = requests.get(url, headers=headers, timeout=100).content.decode('utf-8')
            soup = BeautifulSoup(req, "html.parser")
            t = soup.find('div', class_='reader_box')
            name = t.find('div', class_='title').text
            content = t.find('div', class_='content').text.replace(" ", '\n')
            contents.append(name + '\n' + content)  # chapter title followed by body text
            index += 1
        print("Download finished, writing to the txt file...")
        return contents
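As written, the `replace(" ", '\n')` call in `getContent` turns every space into a line break. A slightly more general cleanup is sketched here. It assumes that paragraphs are separated by line breaks or indented with runs of non-breaking (`\xa0`) or full-width (`\u3000`) spaces, which the article does not confirm. `clean_text` is a hypothetical helper name.

```python
import re


def clean_text(raw):
    """Split raw chapter text into paragraphs, one per line, without blanks."""
    # Treat newlines and runs of 2+ space-like characters as paragraph breaks
    # (assumption: a single space is an ordinary space inside a sentence).
    parts = re.split(r"[\r\n]+|[ \xa0\u3000]{2,}", raw)
    paragraphs = [p.strip(" \xa0\u3000\t") for p in parts]
    return "\n".join(p for p in paragraphs if p)


if __name__ == "__main__":
    print(clean_text("\u3000\u3000First paragraph.\n\n\xa0\xa0Second one."))
```

Whether this fits depends on how the target site lays out its text, so it is worth checking the result on one chapter before running a full download.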

3. How do we write to the txt file?

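    def Download():
        contents = getContent(getTarget(target))
        # utf-8 is set explicitly so Chinese text is written correctly on any platform
        with open("文本.txt", 'a', encoding='utf-8') as f:
            for content in contents:
                f.write(content)
                f.write('\n')
                f.write('********************************')
                f.write('\n')
        # the with block closes the file, so no explicit close() is needed
        print("Done! Look for the novel file in the current directory")

4. Basic anti-anti-scraping measures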

    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) 。。。。。。。",
        "authority": "xxx.com",
    }

Set the request headers and a sleep time between requests.
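The script defines the headers, but the sleep time mentioned here does not appear in its code. As a minimal sketch of one way to add it, assuming a random pause before each request plus a few retries suits the target site (the article does not say what delay it used), the helper below wraps any fetch function. `polite_fetch`, its parameters and the delay range are all hypothetical.

```python
import random
import time


def polite_fetch(fetch, url, retries=3, delay_range=(1.0, 3.0), sleep=time.sleep):
    """Call fetch(url) after a random pause, retrying when it raises."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    last_error = None
    for attempt in range(1, retries + 1):
        # Pause a random time so requests are not sent in a tight loop.
        sleep(random.uniform(*delay_range))
        try:
            return fetch(url)
        except Exception as exc:  # broad on purpose: this is only a sketch
            last_error = exc
            print("attempt {} for {} failed: {}".format(attempt, url, exc))
    raise last_error


if __name__ == "__main__":
    # Stand-in fetch function so the sketch runs without network access.
    page = polite_fetch(lambda u: "<html>ok</html>",
                        "https://example.com/1.html",
                        delay_range=(0.0, 0.1))
    print(page)
```

With the article's code, the `fetch` argument could be `lambda u: requests.get(u, headers=headers, timeout=100).content.decode('utf-8')`.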

4. Full code:

    # -*- coding: utf-8 -*-
    """
    @File : 多章节小说爬取.py
    @author: FxDr
    @Time : 2022/10/30 0:52
    """
    import random

    import requests
    from bs4 import BeautifulSoup

    url = 'https://xxxxx.html'  # URL of the first chapter
    target = 'http://xxxxxxx.html'  # table-of-contents page
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) 。。。。。。",
        "authority": "xxxx.com",
    }


    # Fetch the title and body text of every chapter
    def getContent(urls):
        contents = []
        index = 1
        for url in urls:
            print("Downloading chapter {}...".format(index))
            req = requests.get(url, headers=headers, timeout=100).content.decode('utf-8')
            soup = BeautifulSoup(req, "html.parser")
            t = soup.find('div', class_='reader_box')
            name = t.find('div', class_='title').text
            content = t.find('div', class_='content').text.replace(" ", '\n')
            contents.append(name + '\n' + content)  # chapter title followed by body text
            index += 1
        print("Download finished, writing to the txt file...")
        return contents


    # Collect the chapter URLs from the table-of-contents page
    def getTarget(t):
        targets = []
        req = requests.get(t, headers=headers, timeout=100).content.decode('utf-8')
        soup = BeautifulSoup(req, "html.parser")
        links = soup.find('ul', class_='links').find_all('a')
        for link in links:
            # print(link.get('href'))
            targets.append(link.get('href'))
        return targets


    # Download every chapter and append it to the txt file
    def Download():
        contents = getContent(getTarget(target))
        # utf-8 is set explicitly so Chinese text is written correctly on any platform
        with open("文本.txt", 'a', encoding='utf-8') as f:
            for content in contents:
                f.write(content)
                f.write('\n')
                f.write('********************************')
                f.write('\n')
        print("Done! Look for the novel file in the current directory")


    # print(getContent([url]))
    # print(getTarget(target))
    Download()
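`Download` collects every chapter in memory before writing anything, so a failure late in the run loses all the chapters fetched so far. A possible variation, sketched under the assumption that chapters can be written one at a time, appends each chapter as soon as it is available. `save_chapters` and the `chapters` iterable are hypothetical; in the script, the iterable would come from a generator version of `getContent`.

```python
import os
import tempfile


def save_chapters(chapters, path, separator="*" * 32):
    """Append each chapter to path as it arrives; return how many were written."""
    count = 0
    # utf-8 is set explicitly because the platform default may not encode Chinese text
    with open(path, "a", encoding="utf-8") as f:
        for chapter in chapters:
            f.write(chapter)
            f.write("\n" + separator + "\n")
            f.flush()  # hand the chapter to the OS before the next one is fetched
            count += 1
    return count


if __name__ == "__main__":
    # Demo with made-up chapter text, written to a temporary folder.
    demo = (text for text in ["Chapter 1\nbody one", "Chapter 2\nbody two"])
    demo_path = os.path.join(tempfile.mkdtemp(), "demo.txt")
    print(save_chapters(demo, demo_path), "chapters written to", demo_path)
```

Because the file is opened in append mode, as in the original, running the script twice adds the chapters a second time. Deleting the old file first avoids duplicates.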

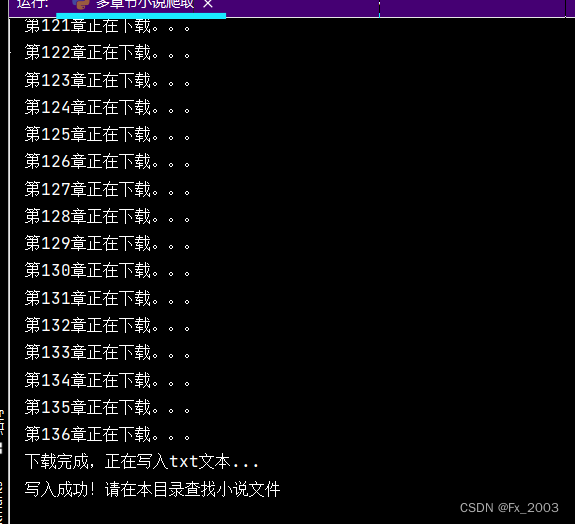

5. Result

The downloaded file is saved in the folder that contains the current .py file:

文本.txt
