celery

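- Celery is a distributed system for processing large volumes of messages; it is an asynchronous task queue focused on real-time processing.

- Celery can run asynchronous tasks, delayed tasks, and periodic (scheduled) tasks.

- Celery architecture: message broker + task execution unit (worker) + task result store (backend).

- Broker: Redis or a dedicated message queue can be used.

- Worker: fetches task messages from the broker, executes the tasks, and hands the results to the backend.

- Backend: stores the task results.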
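The three roles above can be imitated with the standard library alone. The sketch below is not Celery code: `broker`, `backend`, `submit`, and `worker` are hypothetical stand-ins (an in-process queue and a dict), meant only to show how a task travels from the producer through the worker into the result store.

```python
import queue
import threading
import uuid

broker = queue.Queue()   # stand-in for the message broker (for example Redis)
backend = {}             # stand-in for the result backend


def add(x, y):
    return x + y


def submit(func, *args):
    """Producer side: put a task message on the broker and return its id."""
    task_id = str(uuid.uuid4())
    broker.put((task_id, func, args))
    return task_id


def worker():
    """Worker side: take messages off the broker, run them, store the result."""
    while True:
        message = broker.get()
        if message is None:          # sentinel: stop the worker
            break
        task_id, func, args = message
        backend[task_id] = func(*args)


thread = threading.Thread(target=worker)
thread.start()
tid = submit(add, 1, 2)
broker.put(None)                     # stop the worker once the queue is drained
thread.join()
print(backend[tid])                  # prints 3
```

In real Celery the broker and backend live outside the process, so the producer, the worker, and whoever reads the result can all run on different machines.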

Installation

    pip install celery==4.4.6

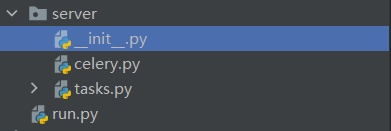

Directory layout

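Starting the worker

- Windows (requires eventlet to be installed):

      celery worker -A server -l info -P eventlet

- Linux:

      celery worker -A server -l info

Options: -A names the module that contains the Celery app, -l sets the log level, and -P selects the worker pool implementation. From Celery 5 on, -A has to come before the subcommand: celery -A server worker -l info.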

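Usage

    # celery.py
    from celery import Celery

    # Redis database 1 carries the task messages, database 2 stores the results
    broker = 'redis://127.0.0.1:6379/1'
    backend = 'redis://127.0.0.1:6379/2'

    # include lists the modules whose tasks the worker should register
    app = Celery(__name__, broker=broker, backend=backend, include=['server.tasks'])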

Asynchronous tasks

    # tasks.py
    from .celery import app

    # define the task
    @app.task
    def add(x, y):
        return x + y

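    # run.py (can be run from anywhere, including from inside another project)
    from server.celery import app
    from server.tasks import add
    from celery.result import AsyncResult

    # delay() returns an AsyncResult; str(tid) is the task id
    tid = add.delay(1, 2)
    print(type(tid))

    res = AsyncResult(str(tid), app=app)
    # successful() is True only once the worker has finished the task,
    # so a check made too early prints nothing; res.get(timeout=10) would block instead
    if res.successful():
        print(res.get())

    >>> <class 'celery.result.AsyncResult'>
    >>> 3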
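Because `successful()` only reports the state at the moment it is called, a caller that needs the value has to wait for it. The sketch below shows one way to poll; `wait_for_result` and `FakeResult` are hypothetical names, and the fake merely imitates the `ready()` and `get()` methods of `AsyncResult` so that the example runs without a broker or a worker.

```python
import time


def wait_for_result(res, timeout=10.0, interval=0.1):
    """Poll until the task is finished, then return its result.

    `res` is any object offering ready() and get(), as AsyncResult does.
    """
    deadline = time.monotonic() + timeout
    while not res.ready():
        if time.monotonic() >= deadline:
            raise TimeoutError("task did not finish in time")
        time.sleep(interval)
    return res.get()


class FakeResult:
    """Hypothetical stand-in for AsyncResult: becomes ready after a delay."""

    def __init__(self, value, ready_after):
        self._value = value
        self._ready_at = time.monotonic() + ready_after

    def ready(self):
        return time.monotonic() >= self._ready_at

    def get(self):
        return self._value


print(wait_for_result(FakeResult(3, ready_after=0.3)))  # prints 3
```

With a real task, `wait_for_result(add.delay(1, 2))` would play the same role; `res.get(timeout=10)` is the built-in blocking alternative.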

Delayed tasks

    # run.py
    from server.celery import app
    from server.tasks import add
    from celery.result import AsyncResult
    from datetime import datetime, timedelta

    # run the task 10 seconds from now (eta is given in UTC)
    eta = datetime.utcnow() + timedelta(seconds=10)
    tid = add.apply_async(args=(200, 50), eta=eta)
    print(type(tid))

    res = AsyncResult(str(tid), app=app)
    # the task has not run yet at this point, so this check normally fails here
    if res.successful():
        print(res.get())
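`datetime.utcnow()` returns a naive datetime and is deprecated as of Python 3.12. A minimal alternative, assuming a timezone-aware value is acceptable for `eta` (Celery's documentation says it is), could look like this; `eta_in` is a hypothetical helper name.

```python
from datetime import datetime, timedelta, timezone


def eta_in(seconds):
    """Return a timezone-aware UTC datetime `seconds` from now."""
    return datetime.now(timezone.utc) + timedelta(seconds=seconds)


eta = eta_in(10)
print(eta.isoformat())
```

When the delay is all that matters, `add.apply_async(args=(200, 50), countdown=10)` expresses the same thing without building a datetime.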


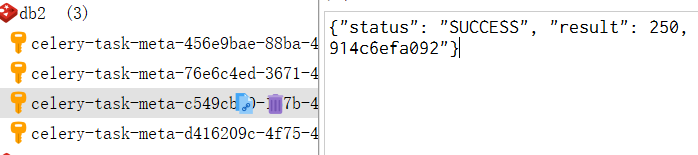

After 10 seconds the result shows up in Redis:

    >>> {"status": "SUCCESS", "result": 250, "traceback": null, "children": [], "date_done": "2022-02-16T02:11:20.155155", "task_id": "b82399bf-d476-47dd-8a8e-0d024600275b"}
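The stored value is plain JSON, so it can be inspected without Celery. The sketch below parses the payload shown above; the `celery-task-meta-` key prefix is the one the Redis result backend uses, while the variable names are only illustrative.

```python
import json

# The payload shown above, as stored by the Redis result backend.
raw = (
    '{"status": "SUCCESS", "result": 250, "traceback": null, "children": [], '
    '"date_done": "2022-02-16T02:11:20.155155", '
    '"task_id": "b82399bf-d476-47dd-8a8e-0d024600275b"}'
)

meta = json.loads(raw)
key = "celery-task-meta-" + meta["task_id"]   # Redis key holding this payload
if meta["status"] == "SUCCESS":
    print(key, "->", meta["result"])
```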

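Periodic tasks

Add the periodic-task configuration to celery.py:

    # celery.py
    from datetime import timedelta

    # use one time zone everywhere
    app.conf.timezone = 'Asia/Shanghai'
    # disable UTC
    app.conf.enable_utc = False

    # periodic task configuration
    app.conf.beat_schedule = {
        'add-task': {
            # the add task is looked up by its registered name
            'task': 'server.tasks.add',
            # submit once every three seconds
            'schedule': timedelta(seconds=3),
            # task arguments
            'args': (200, 50)
        }
    }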

Start beat: open another terminal, and do not shut down the worker.

    celery beat -A server -l info

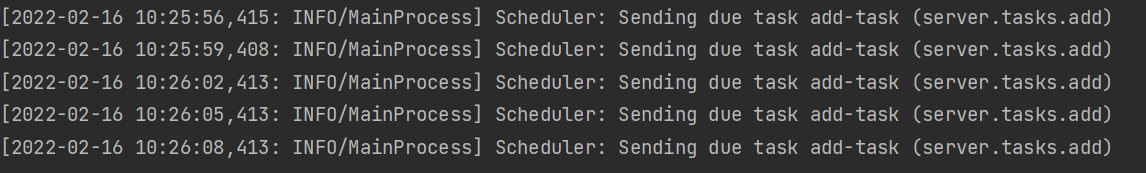

beat submits the task once every three seconds, and each result is written to Redis.
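To make the interval concrete, the sketch below lists when an entry of this shape would fall due. It is a simplification that ignores beat's real bookkeeping; `due_times` and the start time are hypothetical.

```python
from datetime import datetime, timedelta

# Same shape as the beat_schedule entry used in this section.
beat_schedule = {
    'add-task': {
        'task': 'server.tasks.add',
        'schedule': timedelta(seconds=3),
        'args': (200, 50),
    }
}


def due_times(entry, start, count):
    """Return the first `count` moments at which an interval entry falls due."""
    return [start + entry['schedule'] * n for n in range(1, count + 1)]


start = datetime(2022, 2, 16, 10, 0, 0)
for moment in due_times(beat_schedule['add-task'], start, 3):
    print(moment.time(), beat_schedule['add-task']['task'])
```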

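More on scheduling

    from celery.schedules import crontab

    # submit once every Monday at 08:00 (without minute=0 it would fire every minute of that hour)
    'schedule': crontab(minute=0, hour=8, day_of_week=1)

This makes it possible to define jobs such as: every Friday at 23:00, copy the data held in Redis into MySQL.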
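The weekly rules mentioned here can be checked with a small standard-library calculation. The sketch below is not how Celery evaluates crontab entries; `next_weekly_run` is a hypothetical helper that only mirrors crontab's day numbering (0 = Sunday, 1 = Monday, and so on).

```python
from datetime import datetime, timedelta


def next_weekly_run(now, cron_day_of_week, hour, minute=0):
    """Next moment strictly after `now` that matches a weekly crontab-style rule.

    cron_day_of_week uses crontab numbering: 0 = Sunday, 1 = Monday, ... 6 = Saturday.
    """
    python_weekday = (cron_day_of_week - 1) % 7      # datetime uses 0 = Monday
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    candidate += timedelta(days=(python_weekday - now.weekday()) % 7)
    if candidate <= now:                             # already passed this week
        candidate += timedelta(days=7)
    return candidate


now = datetime(2022, 2, 16, 10, 0)        # a Wednesday
print(next_weekly_run(now, 5, 23))        # Friday 23:00 -> 2022-02-18 23:00:00
print(next_weekly_run(now, 1, 8))         # Monday 08:00 -> 2022-02-21 08:00:00
```

In a beat schedule the Friday job would be written as `crontab(minute=0, hour=23, day_of_week=5)`.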

Using Celery with Django

- Django and Celery run as separate processes, so the Celery module has to load the Django environment first:

      from celery import Celery
      import django
      import os

      # point Django at the settings module, then initialize it
      os.environ.setdefault('DJANGO_SETTINGS_MODULE', 'luffyapi.settings.dev')
      django.setup()

      broker = 'redis://127.0.0.1:6379/1'
      backend = 'redis://127.0.0.1:6379/2'
      app = Celery(__name__, broker=broker, backend=backend, include=['celery_task.home_task'])

- Define the asynchronous task:

      @app.task
      def banner_update():
          # imported inside the task so that Django is already set up
          from django.conf import settings
          from django.core.cache import cache
          from home import models
          from home import serializer

          queryset = models.Banner.objects.filter(is_delete=False, is_show=True).order_by('display_order')[:settings.BANNER_COUNT]
          banner_ser = serializer.BannerModelSerializer(instance=queryset, many=True)
          # iterate over the serialized data, not over the serializer itself
          for banner in banner_ser.data:
              banner['img'] = 'http://127.0.0.1:8000' + banner['img']
          cache.set('banner_list', banner_ser.data)
          return True

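- Run the task:

      from django.conf import settings
      from django.core.cache import cache
      from rest_framework.mixins import ListModelMixin
      from rest_framework.response import Response
      from rest_framework.viewsets import GenericViewSet

      # import paths assumed from the include= setting and the task's own imports
      from celery_task.home_task import banner_update
      from home import models, serializer

      class BannerView(GenericViewSet, ListModelMixin):
          # show at most settings.BANNER_COUNT banners (three)
          queryset = models.Banner.objects.filter(is_delete=False, is_show=True).order_by('orders')[:settings.BANNER_COUNT]
          serializer_class = serializer.BannerModelSerializer

          def list(self, request, *args, **kwargs):
              banner_list = cache.get('banner_list')
              if not banner_list:
                  # nothing in the cache: queue a refresh and answer from the database
                  banner_update.delay()
                  response = super().list(request, *args, **kwargs)
                  cache.set('banner_list', response.data)
                  return Response(data=response.data)
              return Response(data=banner_list)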
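Stripped of Django and Celery, the view follows a cache-aside pattern: serve from the cache when possible, otherwise load the data, fill the cache, and request a background refresh. The sketch below replays that flow with hypothetical stand-ins (`cache` is a dict, `request_refresh` replaces `banner_update.delay()`, and the image path is made up).

```python
cache = {}                      # stand-in for django.core.cache.cache
refresh_requests = []           # records the background refreshes that were requested


def load_banners():
    """Stand-in for the database query plus serialization (path is made up)."""
    return [{'img': 'http://127.0.0.1:8000/media/banner1.png'}]


def request_refresh():
    """Stand-in for banner_update.delay(): only notes that a refresh was queued."""
    refresh_requests.append('banner_update')


def list_banners():
    banner_list = cache.get('banner_list')
    if not banner_list:             # cache miss
        request_refresh()
        banner_list = load_banners()
        cache['banner_list'] = banner_list
    return banner_list


first = list_banners()    # miss: loads the data and fills the cache
second = list_banners()   # hit: served straight from the cache
print(first == second, len(refresh_requests))   # prints True 1
```

Only the first call pays for the load; later calls stay cheap until the cached entry is cleared or expires.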
