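Goals:

1. Load a single image, classify it with a model trained in the deep learning framework Caffe, and print its category to the console.

2. Load a folder and classify every image in it, skipping non-image files, and generate a txt file with the same name as each image to store its category.

3. Implement this as a project.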

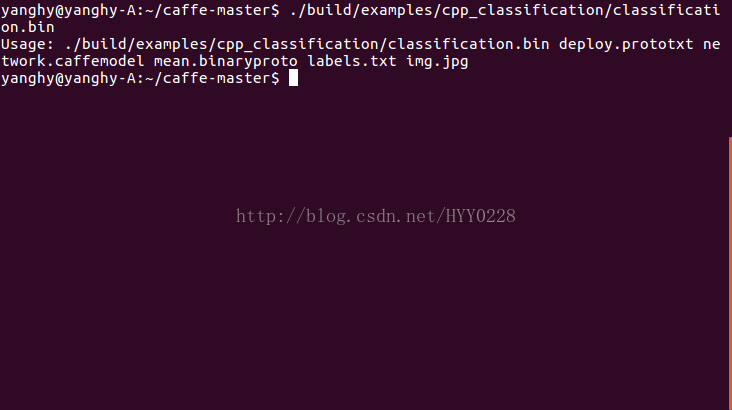

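I. /home/name/caffe-master/examples/cpp_classification/classification.cpp is the example that shows how to call the Caffe framework's interface. It is built into this program: /home/name/caffe-master/build/examples/cpp_classification/classification.bin

Run this program with the network definition, the trained model, the mean file, the label file and an image as arguments to classify a single image. Calls to the C++ classification interface can follow this .cpp file as well.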

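II. Now, following this .cpp file, classify all the jpg images in a local folder. To classify images that come from some other source, only the image-input part needs to change.

A txt file supplies the program's parameters, one per line: folder name, network definition, trained model, mean file, labels.

The modified file is as follows: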

//#define USE_OPENCV

#include <caffe/caffe.hpp>

#ifdef USE_OPENCV

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#endif // USE_OPENCV

#include <algorithm>

#include <iosfwd>

#include <memory>

#include <string>

#include <utility>

#include <vector>

#include <sys/types.h>

#include <sys/stat.h>

#include <dirent.h>

#include <stdio.h>

#include <string.h>

#include <iomanip>

#include <iostream>

#include <fstream>

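// Do not enable the line below! Combined with "using namespace caffe" it can make names such as shared_ptr ambiguous.

//using namespace std;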

#ifdef USE_OPENCV

using namespace caffe; // NOLINT(build/namespaces)

using std::string;

/* Pair (label, confidence) representing a prediction. */

typedef std::pair<string, float> Prediction;

class Classifier {

public:

Classifier(const string& model_file,

const string& trained_file,

const string& mean_file,

const string& label_file);

std::vector<Prediction> Classify(const cv::Mat& img, int N = 5);

private:

void SetMean(const string& mean_file);

std::vector<float> Predict(const cv::Mat& img);

void WrapInputLayer(std::vector<cv::Mat>* input_channels);

void Preprocess(const cv::Mat& img,

std::vector<cv::Mat>* input_channels);

private:

shared_ptr<Net<float> > net_;

cv::Size input_geometry_;

int num_channels_;

cv::Mat mean_;

std::vector<string> labels_;

};

Classifier::Classifier(const string& model_file,

const string& trained_file,

const string& mean_file,

const string& label_file) {

#ifdef CPU_ONLY

Caffe::set_mode(Caffe::CPU);

#else

Caffe::set_mode(Caffe::GPU);

#endif

/* Load the network. */

net_.reset(new Net<float>(model_file, TEST));

net_->CopyTrainedLayersFrom(trained_file);

CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";

CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

Blob<float>* input_layer = net_->input_blobs()[0];

num_channels_ = input_layer->channels();

CHECK(num_channels_ == 3 || num_channels_ == 1)

<< "Input layer should have 1 or 3 channels.";

input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

/* Load the binaryproto mean file. */

SetMean(mean_file);

/* Load labels. */

std::ifstream labels(label_file.c_str());

CHECK(labels) << "Unable to open labels file " << label_file;

string line;

while (std::getline(labels, line))

labels_.push_back(string(line));

Blob<float>* output_layer = net_->output_blobs()[0];

CHECK_EQ(labels_.size(), output_layer->channels())

<< "Number of labels is different from the output layer dimension.";

}

static bool PairCompare(const std::pair<float, int>& lhs,

const std::pair<float, int>& rhs) {

return lhs.first > rhs.first;

}

/* Return the indices of the top N values of vector v. */

static std::vector<int> Argmax(const std::vector<float>& v, int N) {

std::vector<std::pair<float, int> > pairs;

for (size_t i = 0; i < v.size(); ++i)

pairs.push_back(std::make_pair(v[i], i));

std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

std::vector<int> result;

for (int i = 0; i < N; ++i)

result.push_back(pairs[i].second);

return result;

}

/* Return the top N predictions. */

std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {

std::vector<float> output = Predict(img);

N = std::min<int>(labels_.size(), N);

std::vector<int> maxN = Argmax(output, N);

std::vector<Prediction> predictions;

for (int i = 0; i < N; ++i) {

int idx = maxN[i];

predictions.push_back(std::make_pair(labels_[idx], output[idx]));

}

return predictions;

}

/* Load the mean file in binaryproto format. */

void Classifier::SetMean(const string& mean_file) {

BlobProto blob_proto;

ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

/* Convert from BlobProto to Blob<float> */

Blob<float> mean_blob;

mean_blob.FromProto(blob_proto);

CHECK_EQ(mean_blob.channels(), num_channels_)

<< "Number of channels of mean file doesn't match input layer.";

/* The format of the mean file is planar 32-bit float BGR or grayscale. */

std::vector<cv::Mat> channels;

float* data = mean_blob.mutable_cpu_data();

for (int i = 0; i < num_channels_; ++i) {

/* Extract an individual channel. */

cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);

channels.push_back(channel);

data += mean_blob.height() * mean_blob.width();

}

/* Merge the separate channels into a single image. */

cv::Mat mean;

cv::merge(channels, mean);

/* Compute the global mean pixel value and create a mean image

* filled with this value. */

cv::Scalar channel_mean = cv::mean(mean);

mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);

}

std::vector<float> Classifier::Predict(const cv::Mat& img) {

Blob<float>* input_layer = net_->input_blobs()[0];

input_layer->Reshape(1, num_channels_,

input_geometry_.height, input_geometry_.width);

/* Forward dimension change to all layers. */

net_->Reshape();

std::vector<cv::Mat> input_channels;

WrapInputLayer(&input_channels);

Preprocess(img, &input_channels);

net_->Forward();

/* Copy the output layer to a std::vector */

Blob<float>* output_layer = net_->output_blobs()[0];

const float* begin = output_layer->cpu_data();

const float* end = begin + output_layer->channels();

return std::vector<float>(begin, end);

}

/* Wrap the input layer of the network in separate cv::Mat objects

* (one per channel). This way we save one memcpy operation and we

* don't need to rely on cudaMemcpy2D. The last preprocessing

* operation will write the separate channels directly to the input

* layer. */

void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {

Blob<float>* input_layer = net_->input_blobs()[0];

int width = input_layer->width();

int height = input_layer->height();

float* input_data = input_layer->mutable_cpu_data();

for (int i = 0; i < input_layer->channels(); ++i) {

cv::Mat channel(height, width, CV_32FC1, input_data);

input_channels->push_back(channel);

input_data += width * height;

}

}

void Classifier::Preprocess(const cv::Mat& img,

std::vector<cv::Mat>* input_channels) {

/* Convert the input image to the input image format of the network. */

cv::Mat sample;

if (img.channels() == 3 && num_channels_ == 1)

cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);

else if (img.channels() == 4 && num_channels_ == 1)

cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);

else if (img.channels() == 4 && num_channels_ == 3)

cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);

else if (img.channels() == 1 && num_channels_ == 3)

cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);

else

sample = img;

cv::Mat sample_resized;

if (sample.size() != input_geometry_)

cv::resize(sample, sample_resized, input_geometry_);

else

sample_resized = sample;

cv::Mat sample_float;

if (num_channels_ == 3)

sample_resized.convertTo(sample_float, CV_32FC3);

else

sample_resized.convertTo(sample_float, CV_32FC1);

cv::Mat sample_normalized;

cv::subtract(sample_float, mean_, sample_normalized);

/* This operation will write the separate BGR planes directly to the

* input layer of the network because it is wrapped by the cv::Mat

* objects in input_channels. */

cv::split(sample_normalized, *input_channels);

CHECK(reinterpret_cast<float*>(input_channels->at(0).data)

== net_->input_blobs()[0]->cpu_data())

<< "Input channels are not wrapping the input layer of the network.";

}

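// Walk a directory and collect file names, stored relative to the top-level directory.

char filename[256][256];

int len = 0;

int trave_dir(const char* path, int depth, const char* prefix = "")

{

DIR *d; // directory handle

struct dirent *file; // readdir returns each entry in this struct

struct stat sb;

if(!(d = opendir(path)))

{

printf("error opendir %s!!!\n",path);

return -1;

}

while((file = readdir(d)) != NULL)

{

// Skip the current directory ".", the parent directory ".." and hidden files, so the traversal cannot loop forever.

if(strncmp(file->d_name, ".", 1) == 0)

continue;

// stat() needs the full path; the bare entry name would be resolved against the working directory.

string full = string(path) + "/" + file->d_name;

string rel = string(prefix) + file->d_name;

// Recurse into subdirectories, but search at most three levels deep to avoid collecting too many files.

if(stat(full.c_str(), &sb) >= 0 && S_ISDIR(sb.st_mode))

{

if(depth < 3)

trave_dir(full.c_str(), depth + 1, (rel + "/").c_str());

continue;

}

// Save the file name; skip it if the table is full or the name does not fit.

if(len < 256 && rel.size() < 256)

strcpy(filename[len++], rel.c_str());

}

closedir(d);

return 0;

}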

int main(int argc, char** argv) {

::google::InitGoogleLogging(argv[0]);

if(argc!=2){

std::cerr<<"This program takes one argument: a text file containing 5 lines."<<std::endl<<"Line 1 is the folder name (with path); the following lines are the network definition, trained model, mean file and labels."<<std::endl;

return 1;

}

std::ifstream arg_file(argv[1]);

if(!arg_file){

std::cerr<<"Cannot open the parameter file"<<std::endl;

return 1;

}

// Read exactly five lines; looping on eof() could write past the end of the array.

string ar[5];

int n = 0;

while(n < 5 && std::getline(arg_file, ar[n]))

n++;

if(n != 5){

std::cerr<<"The parameter file must contain 5 lines"<<std::endl;

return 1;

}

string dir=ar[0];

string model_file = ar[1];

string trained_file = ar[2];

string mean_file = ar[3];

string label_file = ar[4];

Classifier classifier(model_file, trained_file, mean_file, label_file);

// Walk the folder to collect the file names and their count.

char* p=const_cast<char*>(dir.c_str());

int depth=1;

trave_dir(p,depth);

for(int j=0;j<len;j++){

// Convert the char array to a string.

string filename_str=filename[j];

// Check for a .jpg suffix; names shorter than 4 characters cannot match.

size_t len1=filename_str.length();

if(len1 < 4 || filename_str.compare(len1-4, 4, ".jpg") != 0){

std::cout<<filename[j]<<" is not a jpg image, skipping"<<std::endl;

continue;

}

string file=dir+"/"+filename_str;

std::cout << "---------- Prediction for "

<< filename[j] << " ----------" << std::endl;

cv::Mat img = cv::imread(file, -1);

// Skip files that cannot be decoded instead of aborting the whole run.

if(img.empty()){

std::cerr << "Unable to decode image " << file << std::endl;

continue;

}

std::vector<Prediction> predictions = classifier.Classify(img);

/* Print the top N predictions. */

for (size_t i = 0; i < predictions.size(); ++i) {

Prediction pred = predictions[i];

std::cout << std::fixed << std::setprecision(4) << pred.second << " - \""

<< pred.first << "\"" << std::endl;

}

}

return 0;

}

#else

int main(int argc, char** argv) {

LOG(FATAL) << "This example requires OpenCV; compile with USE_OPENCV.";

}

#endif // USE_OPENCV
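The listing above prints each prediction to the console, but it does not show the second goal: saving the category in a txt file named after the image. One possible way to do that is sketched below. The helper names `HasJpgExtension`, `LabelPathFor` and `WriteLabel` are hypothetical and not part of the original program. Matching ".jpg" and ".jpeg" without regard to case, and writing only a single label, are assumptions of this sketch rather than behavior of the code above.

```cpp
#include <algorithm>
#include <cctype>
#include <fstream>
#include <string>

// Returns true if the name ends in ".jpg" or ".jpeg", ignoring case.
bool HasJpgExtension(const std::string& name) {
  const std::string::size_type dot = name.rfind('.');
  if (dot == std::string::npos) return false;
  std::string ext = name.substr(dot);
  std::transform(ext.begin(), ext.end(), ext.begin(), [](unsigned char c) {
    return static_cast<char>(std::tolower(c));
  });
  return ext == ".jpg" || ext == ".jpeg";
}

// Maps "dir/cat.jpg" to "dir/cat.txt".
std::string LabelPathFor(const std::string& image_path) {
  const std::string::size_type slash = image_path.find_last_of('/');
  const std::string::size_type dot = image_path.rfind('.');
  // Only treat the dot as an extension separator if it is in the file name.
  if (dot == std::string::npos ||
      (slash != std::string::npos && dot < slash)) {
    return image_path + ".txt";
  }
  return image_path.substr(0, dot) + ".txt";
}

// Writes one label into the txt file next to the image.
// Returns false if the file cannot be opened or written.
bool WriteLabel(const std::string& image_path, const std::string& label) {
  std::ofstream out(LabelPathFor(image_path).c_str());
  if (!out) return false;
  out << label << '\n';
  return static_cast<bool>(out);
}
```

Inside the loop in main, a call such as `WriteLabel(file, predictions[0].first)` after `classifier.Classify(img)` would store the top category, provided `predictions` is not empty.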
