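A Flink Window Join uses windows to cut two data streams into bounded chunks. Within each window, the elements of the two streams are inner joined on their key, and only the pairs that match on both sides are emitted. Tumbling, sliding, and session windows are all supported.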

The general API template is:

    stream.join(otherStream)
        .where(<KeySelector>)
        .equalTo(<KeySelector>)
        .window(<WindowAssigner>)
        .apply(<JoinFunction>);

Window Join with a tumbling time window

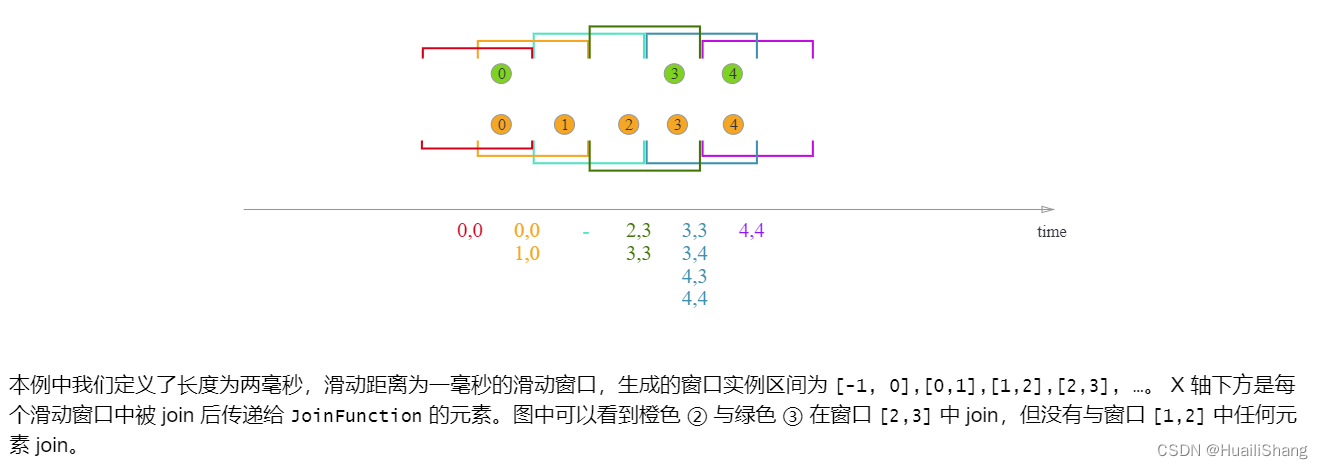

Window Join with a sliding time window

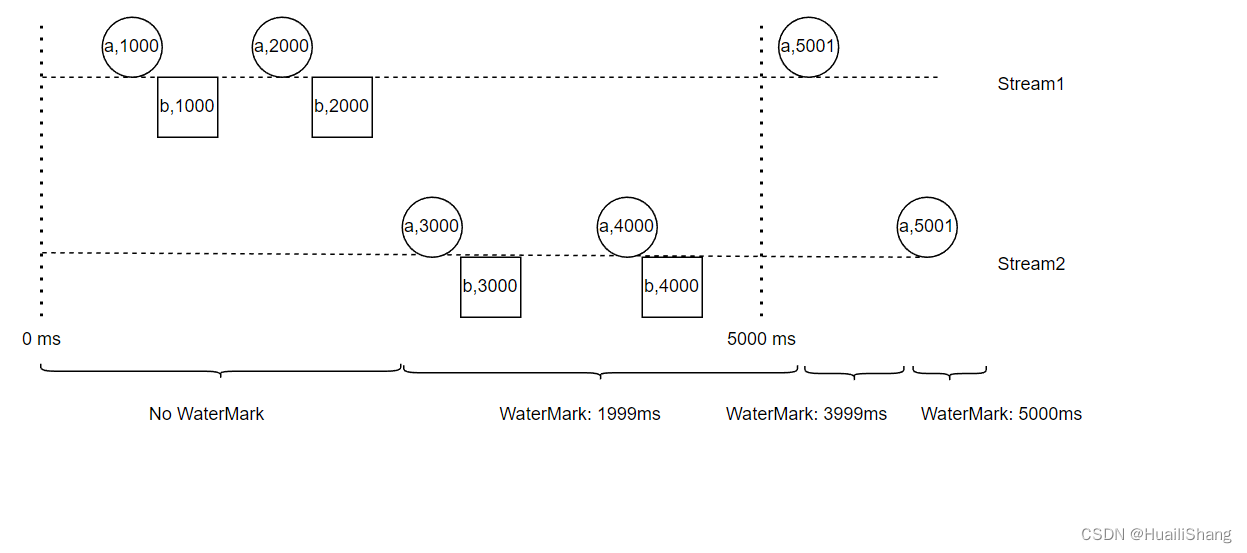
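To make the sliding case more concrete, here is a minimal plain-Java sketch (no Flink dependency) of how an element is assigned to overlapping windows. The class and method names are hypothetical, and the 10-second size with a 5-second slide is an assumed example, not a value from this article. Because windows overlap, the same pair of elements can fall into more than one common window and therefore be emitted more than once.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative sketch only: which sliding windows contain a given timestamp. */
class SlidingWindowAssignSketch {

    /** Start timestamps of all windows [start, start + sizeMs) that contain the timestamp. */
    static List<Long> windowStarts(long timestamp, long sizeMs, long slideMs) {
        List<Long> starts = new ArrayList<>();
        // Latest window start that is not after the timestamp (non-negative timestamps assumed).
        long lastStart = timestamp - (timestamp % slideMs);
        for (long start = lastStart; start > timestamp - sizeMs; start -= slideMs) {
            starts.add(start);
        }
        return starts;
    }

    /** Window starts shared by two timestamps, i.e. windows in which the two elements can join. */
    static List<Long> sharedWindows(long ts1, long ts2, long sizeMs, long slideMs) {
        List<Long> shared = new ArrayList<>(windowStarts(ts1, sizeMs, slideMs));
        shared.retainAll(windowStarts(ts2, sizeMs, slideMs));
        return shared;
    }

    public static void main(String[] args) {
        // Assumed example: 10 s windows sliding every 5 s.
        System.out.println(windowStarts(7000L, 10000L, 5000L));
        System.out.println(sharedWindows(6000L, 8000L, 10000L, 5000L));
    }
}
```

With these assumed parameters, elements at 6000 ms and 8000 ms share two windows, so a join on the same key would produce that pair twice, once per window.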

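Suppose there are two streams whose elements have the form (id, timestamp). We apply a Window Join to them using a tumbling time window with a length of 5 seconds. The corresponding code is at the end of this article.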
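The semantics of this example can be sketched in plain Java without Flink. The snippet below is only an illustration under assumptions: the `Event` class, the sample data, and the method names are hypothetical, timestamps are in milliseconds, and the batch-style nested loop stands in for what Flink does incrementally per key and per window.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative sketch only: inner join of two (id, timestamp) lists per tumbling window. */
class TumblingWindowJoinSketch {

    /** A stream element: (id, timestamp in milliseconds). */
    static final class Event {
        final String id;
        final long timestamp;

        Event(String id, long timestamp) {
            this.id = id;
            this.timestamp = timestamp;
        }

        @Override
        public String toString() {
            return "(" + id + "," + timestamp + ")";
        }
    }

    /** Start of the tumbling window containing the timestamp (non-negative timestamps, no offset). */
    static long windowStart(long timestamp, long sizeMs) {
        return timestamp - (timestamp % sizeMs);
    }

    /** Emits every left/right pair that has the same id and falls into the same window. */
    static List<String> join(List<Event> left, List<Event> right, long sizeMs) {
        List<String> out = new ArrayList<>();
        for (Event l : left) {
            for (Event r : right) {
                boolean sameKey = l.id.equals(r.id);
                boolean sameWindow =
                        windowStart(l.timestamp, sizeMs) == windowStart(r.timestamp, sizeMs);
                if (sameKey && sameWindow) {
                    out.add(l + " => " + r);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<Event> left = new ArrayList<>();
        left.add(new Event("a", 1000L));
        left.add(new Event("b", 2000L));
        left.add(new Event("a", 6000L));

        List<Event> right = new ArrayList<>();
        right.add(new Event("a", 3000L));
        right.add(new Event("b", 7000L));
        right.add(new Event("a", 9000L));

        // 5-second tumbling windows: [0, 5000), [5000, 10000), ...
        for (String pair : join(left, right, 5000L)) {
            System.out.println(pair);
        }
    }
}
```

In this sample data, `b` appears on both sides but in different windows (2000 ms and 7000 ms), so it produces no output. That is the inner-join behavior: an element without a partner in the same window is dropped.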

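The watermark that drives the join is the minimum of the two streams' watermarks. The elements joined within a window are emitted only once this combined watermark reaches the end of the window (more precisely, the window's last timestamp, which is the window end minus 1 ms). A slow stream therefore holds back the output of both.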

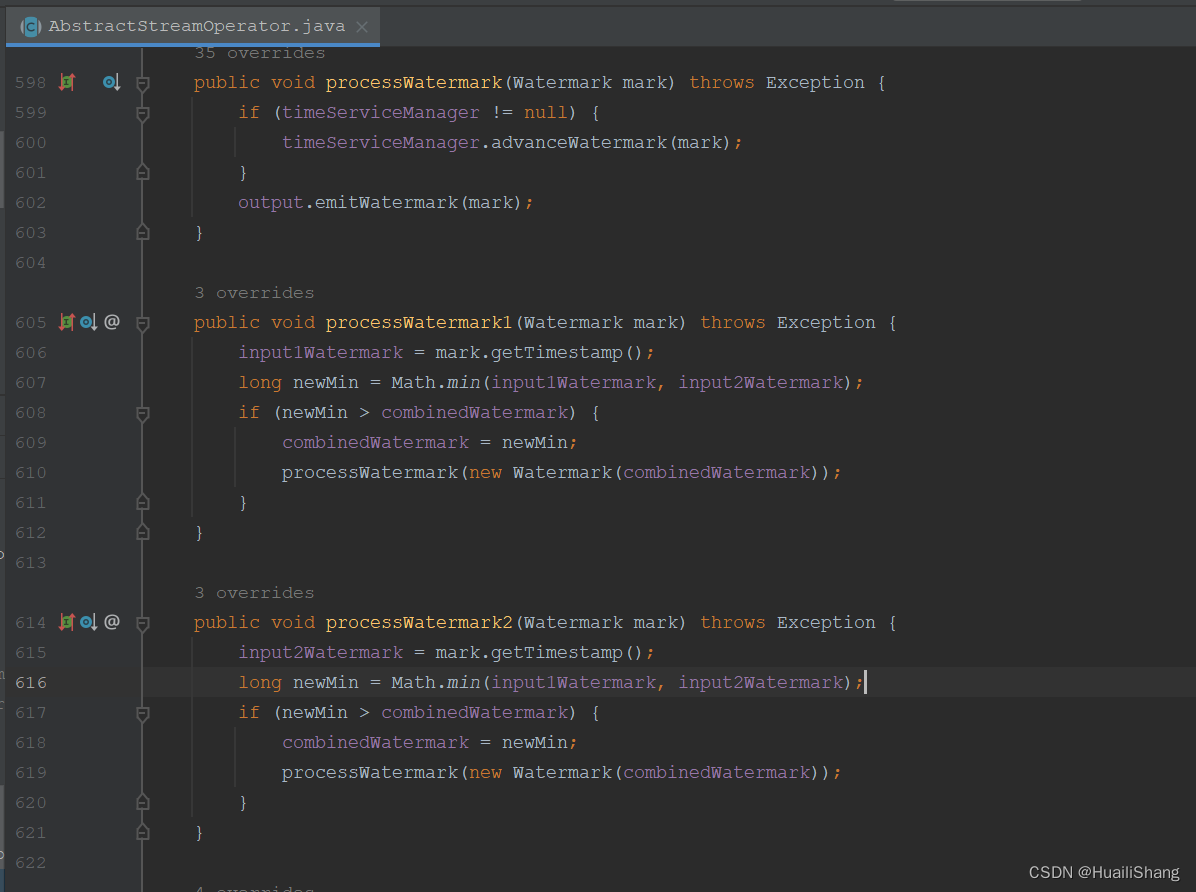
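The firing condition can be worked through with a few lines of plain Java. This is a sketch with hypothetical names, not Flink code. It assumes a bounded-out-of-orderness watermark generator (watermark = largest timestamp seen, minus the allowed delay, minus 1 ms) and an event-time trigger that fires once the watermark reaches the window's last timestamp.

```java
/** Illustrative sketch only: when does a window fire under a combined watermark? */
class WatermarkFiringSketch {

    /** Watermark derived from the largest timestamp seen so far on one stream. */
    static long watermarkFor(long maxTimestampSeen, long outOfOrdernessMs) {
        return maxTimestampSeen - outOfOrdernessMs - 1;
    }

    /** The combined watermark is the minimum of the two inputs. */
    static long combined(long watermark1, long watermark2) {
        return Math.min(watermark1, watermark2);
    }

    /** A window [start, end) fires once the watermark reaches end - 1. */
    static boolean windowFires(long windowEndMs, long watermark) {
        return watermark >= windowEndMs - 1;
    }

    public static void main(String[] args) {
        long wm1 = watermarkFor(6000L, 0L); // stream 1 has already seen timestamp 6000
        long wm2 = watermarkFor(4000L, 0L); // stream 2 has only seen timestamp 4000
        // Window [0, 5000) is held back by the slower stream.
        System.out.println(windowFires(5000L, combined(wm1, wm2))); // false

        wm2 = watermarkFor(5000L, 0L); // stream 2 now sees timestamp 5000
        System.out.println(windowFires(5000L, combined(wm1, wm2))); // true
    }
}
```

With zero allowed delay, the window [0, 5000) fires only after both streams have delivered an element with a timestamp of at least 5000 ms.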

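The watermark-handling methods of the `org.apache.flink.streaming.api.operators.AbstractStreamOperator` class (`processWatermark`, together with `processWatermark1` and `processWatermark2` for two-input operators in the Flink versions this code targets) show that the minimum of the two inputs' watermarks is taken as the effective watermark.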
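The bookkeeping behind this can be sketched as a small stateful class. The code below is a simplified illustration loosely modeled on that idea, not a copy of Flink's source; the class and method names are hypothetical. It keeps the latest watermark per input and advances the combined value only when the minimum of the two increases.

```java
/** Illustrative sketch only: tracking a combined watermark over two inputs. */
class TwoInputWatermarkSketch {

    private long input1Watermark = Long.MIN_VALUE;
    private long input2Watermark = Long.MIN_VALUE;
    private long combinedWatermark = Long.MIN_VALUE;

    /** Records a watermark from input 1; returns true if the combined watermark advanced. */
    boolean onWatermark1(long timestamp) {
        input1Watermark = timestamp;
        return advance();
    }

    /** Records a watermark from input 2; returns true if the combined watermark advanced. */
    boolean onWatermark2(long timestamp) {
        input2Watermark = timestamp;
        return advance();
    }

    /** The combined watermark only moves forward, and only to the minimum of both inputs. */
    private boolean advance() {
        long newMin = Math.min(input1Watermark, input2Watermark);
        if (newMin > combinedWatermark) {
            combinedWatermark = newMin;
            return true;
        }
        return false;
    }

    long currentWatermark() {
        return combinedWatermark;
    }

    public static void main(String[] args) {
        TwoInputWatermarkSketch op = new TwoInputWatermarkSketch();
        System.out.println(op.onWatermark1(5999L)); // false: input 2 has not reported yet
        System.out.println(op.onWatermark2(3999L)); // true: combined watermark becomes 3999
        System.out.println(op.currentWatermark());
    }
}
```

Until both inputs have reported a watermark, the combined value stays at `Long.MIN_VALUE`, which is why an idle or silent second stream prevents any window from firing in this model.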

Appendix: the program for the example in this article

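    import java.time.Duration;
    import java.util.Properties;

    import org.apache.flink.api.common.eventtime.SerializableTimestampAssigner;
    import org.apache.flink.api.common.eventtime.WatermarkStrategy;
    import org.apache.flink.api.common.functions.JoinFunction;
    import org.apache.flink.api.common.functions.MapFunction;
    import org.apache.flink.api.common.restartstrategy.RestartStrategies;
    import org.apache.flink.api.common.serialization.SimpleStringSchema;
    import org.apache.flink.api.java.tuple.Tuple2;
    import org.apache.flink.streaming.api.TimeCharacteristic;
    import org.apache.flink.streaming.api.datastream.DataStream;
    import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;
    import org.apache.flink.streaming.api.windowing.time.Time;
    import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

    public class Test {
        public static void main(String[] args) throws Exception {
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            // Restart at most 3 times, waiting 5000 ms between attempts.
            env.setRestartStrategy(RestartStrategies.fixedDelayRestart(3, 5000));
            // Parallelism 1 keeps the printed output easy to follow.
            env.setParallelism(1);
            // Needed before Flink 1.12; from 1.12 on, event time is the default and this call is deprecated.
            env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

            Properties pros = new Properties();
            pros.setProperty("bootstrap.servers", "xxx"); // placeholder broker address

            // First stream: each Kafka record is a line of the form "id,timestamp".
            // "topicName" is a placeholder; the two streams would normally read different topics.
            DataStream<String> is1 = env.addSource(
                    new FlinkKafkaConsumer<String>("topicName", new SimpleStringSchema(), pros));
            SingleOutputStreamOperator<Tuple2<String, Long>> watermarks1 = is1
                    .map(new MapFunction<String, Tuple2<String, Long>>() {
                        @Override
                        public Tuple2<String, Long> map(String s) throws Exception {
                            String[] split = s.split(",");
                            return Tuple2.of(split[0], Long.parseLong(split[1]));
                        }
                    }).assignTimestampsAndWatermarks(
                            WatermarkStrategy
                                    // Zero allowed out-of-orderness: the watermark closely follows the max timestamp.
                                    .<Tuple2<String, Long>>forBoundedOutOfOrderness(Duration.ofSeconds(0))
                                    .withTimestampAssigner(new SerializableTimestampAssigner<Tuple2<String, Long>>() {
                                        @Override
                                        public long extractTimestamp(Tuple2<String, Long> element, long recordTimestamp) {
                                            return element.f1; // event time is the second field
                                        }
                                    })
                    );
            watermarks1.print("watermark1");

            // Second stream: same parsing and watermark strategy as the first.
            DataStream<String> is2 = env.addSource(
                    new FlinkKafkaConsumer<String>("topicName", new SimpleStringSchema(), pros));
            SingleOutputStreamOperator<Tuple2<String, Long>> watermarks2 = is2
                    .map(new MapFunction<String, Tuple2<String, Long>>() {
                        @Override
                        public Tuple2<String, Long> map(String s) throws Exception {
                            String[] split = s.split(",");
                            return Tuple2.of(split[0], Long.parseLong(split[1]));
                        }
                    }).assignTimestampsAndWatermarks(
                            WatermarkStrategy
                                    .<Tuple2<String, Long>>forBoundedOutOfOrderness(Duration.ofSeconds(0))
                                    .withTimestampAssigner(new SerializableTimestampAssigner<Tuple2<String, Long>>() {
                                        @Override
                                        public long extractTimestamp(Tuple2<String, Long> element, long recordTimestamp) {
                                            return element.f1;
                                        }
                                    })
                    );
            watermarks2.print("watermark2");

            // Inner join on the id field within 5-second tumbling event-time windows.
            watermarks1.join(watermarks2)
                    .where(a -> a.f0)
                    .equalTo(b -> b.f0)
                    .window(TumblingEventTimeWindows.of(Time.seconds(5)))
                    .apply(new JoinFunction<Tuple2<String, Long>, Tuple2<String, Long>, String>() {
                        @Override
                        public String join(Tuple2<String, Long> left, Tuple2<String, Long> right) throws Exception {
                            return left + " => " + right;
                        }
                    })
                    .print();

            env.execute("ConnectStreamViaWindowJoin");
        }
    }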
