共同好友的实现

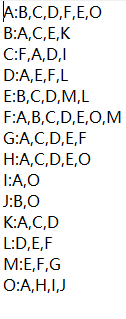

源数据:

目标数据:

利用两个MapReduce来进行实现:

第一部分Step1:

Step1Mapper:

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class Step1Mapper extends Mapper<LongWritable,Text,Text,Text> {

//输入数据如下格式 A(用户):B,C,D,F,E,O (用户的好友)

//把好友当作key,拥有该好友的用户作为value

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String line = value.toString();

//用户与好友列表

String[] split = line.split(":"); //切割单独的用户列表

//好友列表

String[] friendList = split[1].split(","); //切割出好友列表

for (String friend : friendList) {

context.write(new Text(friend),new Text(split[0])); //

}

}

}

Step1Reducer:

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class Step1Reducer extends Reducer<Text,Text,Text,Text>{

//reduce接收到的数据 B [A,E]

// B 是我们的好友 集合里面装的是多个用户

//将数据最终转换成这样的形式进行输出 A-B-E-F-G-H-K- C

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

StringBuffer sb = new StringBuffer();

for (Text value : values) {

sb.append(value.toString()).append("-"); //用-把用户都连接起来

}

context.write(new Text(sb.toString()),key); //输出 用户列表 共同好友

}

}

Step1Main:

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class Step1Main extends Configured implements Tool {

@Override

public int run(String[] args) throws Exception {

Job job = Job.getInstance(super.getConf(), "step1");// 获取任务对象

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job,new Path("file:///F:\\共同好友\\input1")); //输入路径

job.setMapperClass(Step1Mapper.class);

job.setMapOutputKeyClass(Text.class); //设置Mapper类

job.setMapOutputValueClass(Text.class);

job.setReducerClass(Step1Reducer.class);

job.setOutputKeyClass(Text.class); //设置reducer类

job.setOutputValueClass(Text.class);

job.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(job,new Path("file:///F:\\共同好友\\mystep1output")); //输出路径

boolean b = job.waitForCompletion(true);

return b?0:1;

}

public static void main(String[] args) throws Exception {

int run = ToolRunner.run(new Configuration(), new Step1Main(), args);

System.exit(run);

}

}

第二部分:

Step2Mapper:

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

import java.util.Arrays;

public class Step2Mapper extends Mapper<LongWritable,Text,Text,Text>{

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// F-E- M

//用户列表与好友

String[] split = value.toString()

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

248

248

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?