Starting Kafka and Zookeeper

Single-node Kafka

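Create a Kafka node (simply named kafka). This node runs in KRaft mode (`KAFKA_ENABLE_KRAFT=yes`), so it does not need a separate Zookeeper: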

```shell
docker run --net=host -e TZ=Asia/Shanghai -v /etc/localtime:/etc/localtime:ro --name=kafka \
  -e KAFKA_ENABLE_KRAFT=yes \
  -e KAFKA_CFG_PROCESS_ROLES=broker,controller \
  -e KAFKA_CFG_CONTROLLER_LISTENER_NAMES=CONTROLLER \
  -e KAFKA_CFG_LISTENERS=PLAINTEXT://0.0.0.0:9092,CONTROLLER://0.0.0.0:9093 \
  -e KAFKA_CFG_LISTENER_SECURITY_PROTOCOL_MAP=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT \
  -e KAFKA_CFG_CONTROLLER_QUORUM_VOTERS=1@127.0.0.1:9093 \
  -e KAFKA_BROKER_ID=1 \
  -e ALLOW_PLAINTEXT_LISTENER=yes \
  bitnami/kafka:3.3
```

To remove the container if needed:

```shell
docker rm -f kafka
```
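In the run command, `KAFKA_CFG_CONTROLLER_QUORUM_VOTERS` sets Kafka's `controller.quorum.voters` property: a comma-separated list of `{id}@{host}:{port}` entries naming the KRaft controllers, where each id is a node id (for this single node, the `1` matches `KAFKA_BROKER_ID=1`). The minimal Java sketch below parses that format so the pieces are easy to see; `QuorumVoters`, `parse` and `containsNode` are hypothetical helpers for illustration, not Kafka APIs.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: parses a controller.quorum.voters string such as "1@127.0.0.1:9093"
class QuorumVoters {

    // One parsed voter entry: node id, host and controller port
    static final class Voter {
        final int id;
        final String host;
        final int port;

        Voter(int id, String host, int port) {
            this.id = id;
            this.host = host;
            this.port = port;
        }
    }

    // Splits "id@host:port,id@host:port" into Voter objects
    static List<Voter> parse(String voters) {
        List<Voter> result = new ArrayList<>();
        for (String entry : voters.split(",")) {
            String trimmed = entry.trim();
            int at = trimmed.indexOf('@');
            int colon = trimmed.lastIndexOf(':');
            if (at <= 0 || colon <= at + 1 || colon == trimmed.length() - 1) {
                throw new IllegalArgumentException("Expected id@host:port but got: " + trimmed);
            }
            int id = Integer.parseInt(trimmed.substring(0, at));
            String host = trimmed.substring(at + 1, colon);
            int port = Integer.parseInt(trimmed.substring(colon + 1));
            result.add(new Voter(id, host, port));
        }
        return result;
    }

    // True if the given node id appears among the voters
    static boolean containsNode(List<Voter> voters, int nodeId) {
        for (Voter v : voters) {
            if (v.id == nodeId) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        List<Voter> voters = parse("1@127.0.0.1:9093");
        Voter v = voters.get(0);
        System.out.println(v.id + " -> " + v.host + ":" + v.port);
        System.out.println("node 1 is a voter: " + containsNode(voters, 1));
    }
}
```

A multi-controller cluster would simply list more entries, for example `1@host1:9093,2@host2:9093,3@host3:9093`.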

Kafka cluster

First, install Docker and docker-compose by following my other blog post.

Create the YAML configuration file:

```shell
vi docker-compose.yml
```

with the following content:

```yaml
version: "2"

services:
  zookeeper:
    image: docker.io/bitnami/zookeeper:3.8
    ports:
      - "2181"
    environment:
      - ALLOW_ANONYMOUS_LOGIN=yes
    volumes:
      - zookeeper_data:/bitnami/zookeeper
  kafka-0:
    image: docker.io/bitnami/kafka:3.3
    ports:
      - "9092"
    environment:
      - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
      - KAFKA_CFG_BROKER_ID=0
      - ALLOW_PLAINTEXT_LISTENER=yes
    volumes:
      - kafka_0_data:/bitnami/kafka
    depends_on:
      - zookeeper
  kafka-1:
    image: docker.io/bitnami/kafka:3.3
    ports:
      - "9092"
    environment:
      - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
      - KAFKA_CFG_BROKER_ID=1
      - ALLOW_PLAINTEXT_LISTENER=yes
    volumes:
      - kafka_1_data:/bitnami/kafka
    depends_on:
      - zookeeper
  kafka-2:
    image: docker.io/bitnami/kafka:3.3
    ports:
      - "9092"
    environment:
      - KAFKA_CFG_ZOOKEEPER_CONNECT=zookeeper:2181
      - KAFKA_CFG_BROKER_ID=2
      - ALLOW_PLAINTEXT_LISTENER=yes
    volumes:
      - kafka_2_data:/bitnami/kafka
    depends_on:
      - zookeeper

volumes:
  zookeeper_data:
    driver: local
  kafka_0_data:
    driver: local
  kafka_1_data:
    driver: local
  kafka_2_data:
    driver: local
```
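Both the single-node run command and this file configure Kafka purely through environment variables. The Bitnami image documents that any variable beginning with `KAFKA_CFG_` is mapped to the corresponding Kafka property (its README gives `KAFKA_CFG_BACKGROUND_THREADS` to `background.threads`), so `KAFKA_CFG_ZOOKEEPER_CONNECT` here becomes `zookeeper.connect`. The Java sketch below reproduces that naming rule under a simplifying assumption (drop the prefix, lowercase, turn `_` into `.`); `BitnamiEnvMapping` is a hypothetical name, and the image's real scripts may treat edge cases differently.

```java
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical sketch of the KAFKA_CFG_* -> Kafka property naming convention
class BitnamiEnvMapping {

    static final String PREFIX = "KAFKA_CFG_";

    // Converts one env var name to a Kafka property key, or returns null if it lacks the prefix
    static String toPropertyKey(String envName) {
        if (!envName.startsWith(PREFIX)) {
            return null;
        }
        return envName.substring(PREFIX.length())
                .toLowerCase(Locale.ROOT)
                .replace('_', '.');
    }

    // Builds a sorted property map from all KAFKA_CFG_* entries of an environment
    static Map<String, String> toProperties(Map<String, String> env) {
        Map<String, String> props = new TreeMap<>();
        for (Map.Entry<String, String> e : env.entrySet()) {
            String key = toPropertyKey(e.getKey());
            if (key != null) {
                props.put(key, e.getValue());
            }
        }
        return props;
    }

    public static void main(String[] args) {
        Map<String, String> env = new TreeMap<>();
        env.put("KAFKA_CFG_ZOOKEEPER_CONNECT", "zookeeper:2181");
        env.put("KAFKA_CFG_BROKER_ID", "0");
        env.put("ALLOW_PLAINTEXT_LISTENER", "yes"); // image-level flag, not a Kafka property
        // Prints {broker.id=0, zookeeper.connect=zookeeper:2181}
        System.out.println(toProperties(env));
    }
}
```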

Start Zookeeper and the Kafka cluster:

```shell
docker-compose up -d
```

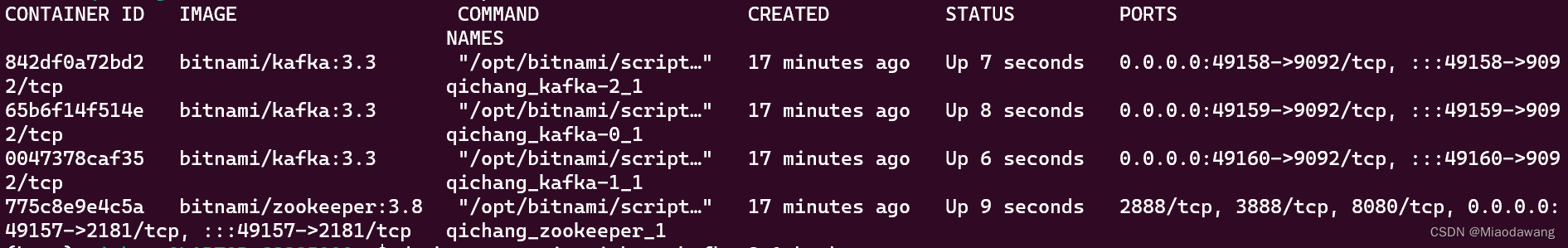

Run `docker ps` to see the running containers.

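Using Kafka commands

Topic commands

Open a shell in one of the Kafka nodes. docker-compose names containers `<project>_<service>_<index>` (docker compose v2 uses hyphens instead), where the project name defaults to the directory holding docker-compose.yml (here `qichang`), so check your actual names with `docker ps`:

```shell
docker exec -it qichang_kafka-2_1 bash
cd /opt/bitnami/kafka
```

From this directory you can run the Kafka scripts just as on a regular Kafka installation.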

- Create a topic named test_first (1 partition, replication factor 3)

```shell
bin/kafka-topics.sh --bootstrap-server localhost:9092 --create --partitions 1 --replication-factor 3 --topic test_first
```

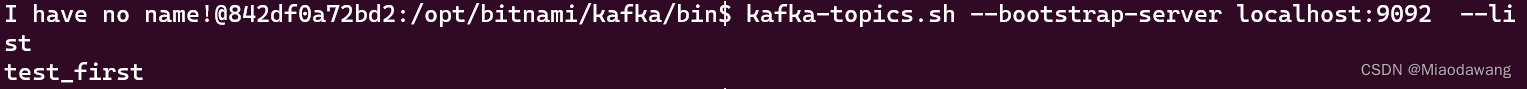

- List all topics

```shell
bin/kafka-topics.sh --bootstrap-server localhost:9092 --list
```

The topic just created appears in the list:

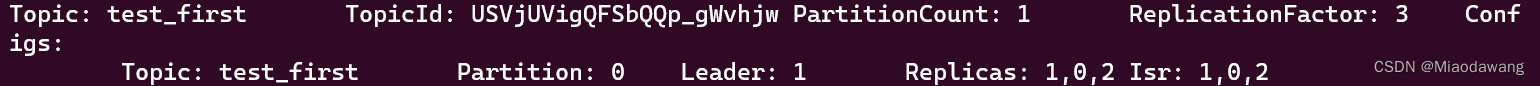

- Show the details of the test_first topic

```shell
bin/kafka-topics.sh --bootstrap-server localhost:9092 --describe --topic test_first
```

- Delete the topic

```shell
bin/kafka-topics.sh --bootstrap-server localhost:9092 --delete --topic test_first
```

List the topics again to verify that it is gone:

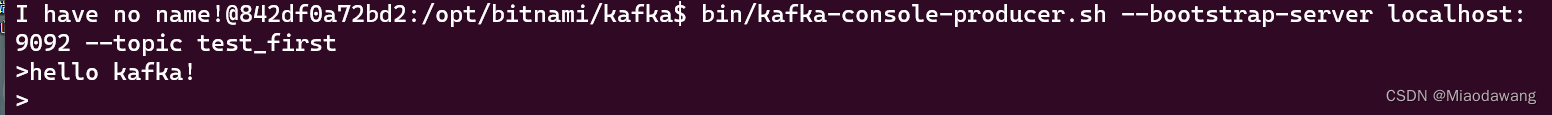
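Creating test_first with replication factor 3 works because the cluster has exactly three brokers (ids 0, 1 and 2); Kafka rejects a replication factor larger than the number of available brokers. The simplified round-robin sketch below illustrates why each replica of a partition lands on a different broker. It is an assumption-laden illustration rather than Kafka's placement code: the real assignment also randomizes the starting broker and the follower shift, and `ReplicaPlacement.assign` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Simplified round-robin replica placement (hypothetical; Kafka's real assignment also randomizes its start)
class ReplicaPlacement {

    // Returns, for each partition, the broker ids holding a replica; the first id is the preferred leader
    static List<List<Integer>> assign(List<Integer> brokers, int partitions, int replicationFactor) {
        if (replicationFactor > brokers.size()) {
            throw new IllegalArgumentException("replication factor " + replicationFactor
                    + " larger than available brokers " + brokers.size());
        }
        List<List<Integer>> assignment = new ArrayList<>();
        for (int p = 0; p < partitions; p++) {
            List<Integer> replicas = new ArrayList<>();
            for (int r = 0; r < replicationFactor; r++) {
                // Consecutive brokers, wrapping around, so no broker holds two copies of one partition
                replicas.add(brokers.get((p + r) % brokers.size()));
            }
            assignment.add(replicas);
        }
        return assignment;
    }

    public static void main(String[] args) {
        List<Integer> brokers = Arrays.asList(0, 1, 2); // KAFKA_CFG_BROKER_ID values from docker-compose.yml
        // test_first: 1 partition, replication factor 3 -> [[0, 1, 2]]
        System.out.println(assign(brokers, 1, 3));
    }
}
```

The `--describe` command shows the real placement Kafka chose for the topic in its `Replicas` column.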

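Producer on the command line

If you deleted test_first in the previous step, create it again first with the create command above.

```shell
bin/kafka-console-producer.sh --bootstrap-server localhost:9092 --topic test_first
```

Type messages at the `>` prompt. Below, the producer on node qichang_kafka-2_1 sends the message "hello kafka!" to the test_first topic: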

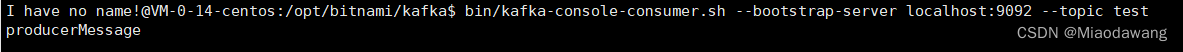

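Consumer on the command line

Switch to node qichang_kafka-0_1 to consume the message:

```shell
docker exec -it qichang_kafka-0_1 bash
cd /opt/bitnami/kafka
bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --from-beginning --topic test_first
```

With `--from-beginning`, the consumer reads all data in the topic, including messages sent before it started.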
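Each message appended to a partition receives an increasing offset. `--from-beginning` makes a fresh console consumer start at the earliest stored offset; without it, the consumer starts at the end of the log and only sees messages produced afterwards. The toy in-memory log below is a hypothetical illustration of those two starting positions (it ignores retention, so "earliest" is always 0), not Kafka code.

```java
import java.util.ArrayList;
import java.util.List;

// Toy single-partition log illustrating offsets (hypothetical, not Kafka's implementation)
class ToyPartitionLog {

    private final List<String> records = new ArrayList<>();

    // Appends a record and returns its offset (0, 1, 2, ...)
    long append(String value) {
        records.add(value);
        return records.size() - 1;
    }

    // Offset a new consumer starts from: earliest (like --from-beginning) or latest (the default)
    long startOffset(boolean fromBeginning) {
        return fromBeginning ? 0 : records.size();
    }

    // Returns every record at or after the given offset
    List<String> readFrom(long offset) {
        return new ArrayList<>(records.subList((int) offset, records.size()));
    }

    public static void main(String[] args) {
        ToyPartitionLog log = new ToyPartitionLog();
        log.append("hello kafka!");              // sent earlier by the console producer
        long early = log.startOffset(true);      // consumer started with --from-beginning
        long late = log.startOffset(false);      // consumer started without it
        log.append("second message");            // produced after both consumers started
        System.out.println(log.readFrom(early)); // [hello kafka!, second message]
        System.out.println(log.readFrom(late));  // [second message]
    }
}
```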

Asynchronous send API

Add the dependency to pom.xml:

```xml
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-clients</artifactId>
    <version>3.0.0</version>
</dependency>
```

```java
package com.kafka;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.Properties;

public class CustomProducer {

    public static void main(String[] args) {
        Properties properties = new Properties();

        // Replace with your own broker's host IP and port
        properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "62.234.136.29:9092");
        // Serialize both keys and values as strings
        properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");
        properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");

        // Create the producer
        KafkaProducer<String, String> kafkaProducer = new KafkaProducer<>(properties);

        // send() is asynchronous: it buffers the record and returns immediately
        kafkaProducer.send(new ProducerRecord<>("test_first", "producerMessage"));

        // close() waits for buffered records to be sent, then releases resources
        kafkaProducer.close();
    }
}
```

Run the consumer command above and the content sent by the program ("producerMessage") shows up in its output.
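The program above never learns whether the send succeeded. In kafka-clients, `send(record)` returns a `Future<RecordMetadata>` as soon as the record is buffered, and the overload `send(record, callback)` invokes `Callback.onCompletion(RecordMetadata metadata, Exception exception)` once the broker acknowledges or rejects it. So that the pattern can be tried without a broker, the sketch below imitates that shape with the JDK's `CompletableFuture`; `ToyAsyncProducer` and its methods are hypothetical stand-ins, not the Kafka API.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.function.BiConsumer;

// Hypothetical stand-in mimicking the shape of KafkaProducer.send(record, callback); no broker involved
class ToyAsyncProducer implements AutoCloseable {

    // Single background thread, playing the role of the producer's I/O thread
    private final ExecutorService io = Executors.newSingleThreadExecutor();
    // Stored values; only accessed from the io thread
    private final List<String> log = new ArrayList<>();

    // Returns immediately; the callback later receives (offset, null) on success or (null, error) on failure.
    // The returned future completes after the callback has run.
    CompletableFuture<Long> send(String value, BiConsumer<Long, Throwable> callback) {
        CompletableFuture<Long> stored = CompletableFuture.supplyAsync(() -> {
            log.add(value);
            return (long) (log.size() - 1);
        }, io);
        return stored.whenComplete(callback);
    }

    // Like KafkaProducer.close(): lets in-flight sends finish, then stops the background thread
    @Override
    public void close() {
        io.shutdown();
        try {
            io.awaitTermination(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    public static void main(String[] args) {
        try (ToyAsyncProducer producer = new ToyAsyncProducer()) {
            producer.send("producerMessage", (offset, error) -> {
                if (error != null) {
                    System.err.println("send failed: " + error);
                } else {
                    System.out.println("acknowledged at offset " + offset);
                }
            });
            // Printed without waiting for the send; may appear before or after the callback's line
            System.out.println("send() has returned");
        }
    }
}
```

With the real client, the same idea is a lambda passed as the second argument of `kafkaProducer.send(...)`, checking `exception` before reading `metadata`.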
