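import numpy as np
import tensorflow as tf
import matplotlib as mpl
import matplotlib.pyplot as plt

HIDDEN_SIZE = 30            # number of hidden units in each LSTM cell
NUM_LAYERS = 2              # number of stacked LSTM layers
TIMESTEPS = 10              # length of each input window
TRAINING_STEPS = 10000      # number of training iterations
BATCH_SIZE = 32             # examples per training batch
TRAINING_EXAMPLES = 10000   # number of training windows
TESTING_EXAMPLES = 1000     # number of test windows
SAMPLE_GAP = 0.01           # nominal sampling interval along the x axis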

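def generate_data(seq):
    # Build (window, next value) pairs: the TIMESTEPS values starting at
    # index i are the input, and the value at index i + TIMESTEPS is the label.
    X = []
    y = []
    for i in range(len(seq) - TIMESTEPS):
        X.append([seq[i: i + TIMESTEPS]])
        y.append([seq[i + TIMESTEPS]])
    return np.array(X, dtype=np.float32), np.array(y, dtype=np.float32)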

def lstm_model(X, y, is_training):
    # Stack NUM_LAYERS LSTM cells into one multi-layer cell.
    cell = tf.nn.rnn_cell.MultiRNNCell([
        tf.nn.rnn_cell.BasicLSTMCell(HIDDEN_SIZE)
        for _ in range(NUM_LAYERS)])

    # outputs has shape [batch_size, time, HIDDEN_SIZE]; keep only the last time step.
    outputs, _ = tf.nn.dynamic_rnn(cell, X, dtype=tf.float32)
    output = outputs[:, -1, :]

    # Linear layer that maps the last output to a single predicted value.
    predictions = tf.contrib.layers.fully_connected(
        output, 1, activation_fn=None)

    # The loss and the optimizer are only needed during training.
    if not is_training:
        return predictions, None, None

    loss = tf.losses.mean_squared_error(labels=y, predictions=predictions)
    train_op = tf.contrib.layers.optimize_loss(
        loss, tf.train.get_global_step(),
        optimizer="Adagrad", learning_rate=0.1)
    return predictions, loss, train_op

def run_eval(sess, test_X, test_y):
    # Feed the test set one example at a time.
    ds = tf.data.Dataset.from_tensor_slices((test_X, test_y))
    ds = ds.batch(1)
    X, y = ds.make_one_shot_iterator().get_next()

    # Reuse the variables created under the "model" scope; prediction needs no label.
    with tf.variable_scope("model", reuse=True):
        prediction, _, _ = lstm_model(X, [0.0], False)

    predictions = []
    labels = []
    for i in range(TESTING_EXAMPLES):
        p, l = sess.run([prediction, y])
        predictions.append(p)
        labels.append(l)

    # Root mean square error over the test set.
    predictions = np.array(predictions).squeeze()
    labels = np.array(labels).squeeze()
    rmse = np.sqrt(((predictions - labels) ** 2).mean(axis=0))
    print("Root Mean Square Error is: %f" % rmse)

    # Plot the predictions against the true sine curve.
    plt.figure()
    plt.plot(predictions, label='predictions')
    plt.plot(labels, label='real_sin')
    plt.legend()
    plt.show()

test_start = (TRAINING_EXAMPLES + TIMESTEPS) * SAMPLE_GAP
test_end = test_start + (TESTING_EXAMPLES + TIMESTEPS) * SAMPLE_GAP
train_X, train_y = generate_data(np.sin(np.linspace(
    0, test_start, TRAINING_EXAMPLES + TIMESTEPS, dtype=np.float32)))
test_X, test_y = generate_data(np.sin(np.linspace(
    test_start, test_end, TESTING_EXAMPLES + TIMESTEPS, dtype=np.float32)))

ds = tf.data.Dataset.from_tensor_slices((train_X, train_y))
ds = ds.repeat().shuffle(1000).batch(BATCH_SIZE)
X, y = ds.make_one_shot_iterator().get_next()

with tf.variable_scope("model"):
    _, loss, train_op = lstm_model(X, y, True)

with tf.Session() as sess:

sess.run(tf.global_variables_initializer())

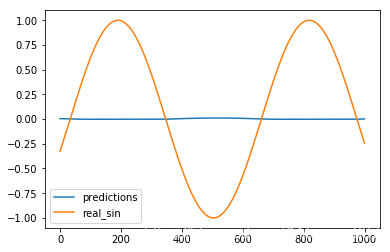

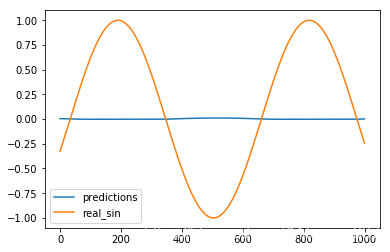

print "Evaluate model before training."

run_eval(sess, test_X, test_y)

for i in range(TRAINING_STEPS):

_, l = sess.run([train_op, loss])

if i % 1000 == 0:

print("train step: " + str(i) + ", loss: " + str(l))

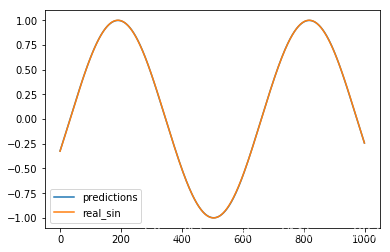

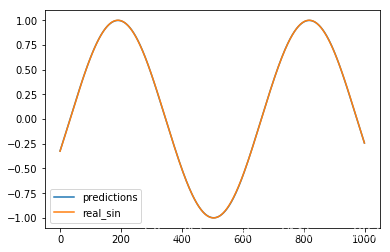

print "Evaluate model after training."

run_eval(sess, test_X, test_y)

Evaluate model before training.

Root Mean Square Error is: 0.690985

train step: 0, loss: 0.38567966

train step: 1000, loss: 0.0011199473

train step: 2000, loss: 0.00040280726

train step: 3000, loss: 5.9529444e-05

train step: 4000, loss: 1.1362208e-05

train step: 5000, loss: 3.1860066e-06

train step: 6000, loss: 5.6501494e-06

train step: 7000, loss: 5.7930047e-06

train step: 8000, loss: 4.321275e-06

train step: 9000, loss: 4.822319e-06

Evaluate model after training.

Root Mean Square Error is: 0.001971
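
The training log prints the mean squared error of one batch, while run_eval prints the root mean square error over the test set, so the two numbers are on different scales. The NumPy sketch below illustrates the relationship. The prediction and label arrays are made-up values for illustration, not results from this run; only the last logged loss is copied from the output above.

```python
import numpy as np

# Made-up values for illustration only; they are not taken from the model.
predictions = np.array([0.10, 0.52, 0.88], dtype=np.float32)
labels = np.array([0.12, 0.50, 0.90], dtype=np.float32)

mse = ((predictions - labels) ** 2).mean()   # what the training loss measures
rmse = np.sqrt(mse)                          # what run_eval reports
print(mse, rmse)

# Square root of the last logged training loss (train step 9000 above).
last_logged_loss = 4.822319e-06
print(np.sqrt(last_logged_loss))  # roughly 0.0022
```

The square root of the last logged batch loss is of the same order as the final test RMSE of 0.001971, which suggests the two measurements are consistent with each other.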

Code walkthrough

test_start = (TRAINING_EXAMPLES + TIMESTEPS) * SAMPLE_GAP

print(test_start)

100.1

test_end = test_start + (TESTING_EXAMPLES + TIMESTEPS) * SAMPLE_GAP

print(test_end)

110.2

contents = np.linspace(0, test_start, TRAINING_EXAMPLES + TIMESTEPS, dtype=np.float32)

print(contents)

print(len(contents))

count = 0

for content in contents:
    print(content)

[0.00000000e+00 1.00009991e-02 2.00019982e-02 ... 1.00079994e+02

1.00089996e+02 1.00099998e+02]

10010

0.0

0.010000999

0.020001998

0.030002998

0.040003996

0.050004996

0.060005996

0.070007

0.08000799

0.09000899

0.10000999

0.11001099

0.12001199

0.13001299

0.140014

0.15001498

0.16001599

0.17001699

0.18001798

0.19001898

0.20001999

..........

..........

99.90998

99.91998

99.929985

99.93999

99.94998

99.959984

99.969986

99.97999

99.98999

99.99999

100.009995

100.01999

100.02999

100.03999

100.049995

100.06

100.07

100.079994

100.09

100.1
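
The printed values advance by about 0.010001 rather than exactly SAMPLE_GAP = 0.01. This follows from how np.linspace works: it includes both endpoints, so 10010 points on [0, 100.1] leave 10009 intervals. The sketch below checks that arithmetic. The endpoint=False variant at the end is only a hypothetical alternative for comparison, not something the original code does.

```python
import numpy as np

TIMESTEPS = 10
TRAINING_EXAMPLES = 10000
SAMPLE_GAP = 0.01

test_start = (TRAINING_EXAMPLES + TIMESTEPS) * SAMPLE_GAP
num_points = TRAINING_EXAMPLES + TIMESTEPS

# linspace places num_points samples on [0, test_start], endpoints included,
# so there are num_points - 1 intervals between them.
contents = np.linspace(0, test_start, num_points, dtype=np.float32)
step = test_start / (num_points - 1)
print(step)                       # about 0.010001, slightly more than SAMPLE_GAP
print(contents[1] - contents[0])  # same spacing, up to float32 rounding

# Hypothetical alternative: dropping the endpoint gives num_points intervals,
# so the spacing becomes test_start / num_points, i.e. SAMPLE_GAP.
exact = np.linspace(0, test_start, num_points, endpoint=False)
print(exact[1] - exact[0])
```

For learning a sine curve the small difference in spacing is unlikely to matter, but it explains why the sample positions are not round multiples of 0.01.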

seq=np.sin(contents)

print(np.sin(contents))

[ 0. 0.01000083 0.02000066 ... -0.4358394 -0.4268156

-0.41774908]

X = []

for i in range(len(seq) - TIMESTEPS):
    X.append([seq[i: i + TIMESTEPS]])

print(len(X))

print(X[0])

print(X[1])

10000

[array([0. , 0.01000083, 0.02000066, 0.0299985 , 0.03999333,

0.04998416, 0.05996999, 0.06994983, 0.07992266, 0.0898875 ],

dtype=float32)]

[array([0.01000083, 0.02000066, 0.0299985 , 0.03999333, 0.04998416,

0.05996999, 0.06994983, 0.07992266, 0.0898875 , 0.09984336],

dtype=float32)]
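
Each window is wrapped in an extra list before it is appended, which is why X[0] prints as a list holding one array. Once converted with np.array, that extra level becomes an axis of length 1. The sketch below repeats generate_data on a tiny made-up sequence, with TIMESTEPS shortened to 3 purely for readability, to show the resulting shapes.

```python
import numpy as np

TIMESTEPS = 3  # shortened from 10 so the result is easy to read

def generate_data(seq):
    X, y = [], []
    for i in range(len(seq) - TIMESTEPS):
        X.append([seq[i: i + TIMESTEPS]])   # extra brackets add an axis of length 1
        y.append([seq[i + TIMESTEPS]])      # the value right after the window
    return np.array(X, dtype=np.float32), np.array(y, dtype=np.float32)

seq = np.arange(6, dtype=np.float32)  # 0, 1, 2, 3, 4, 5
X, y = generate_data(seq)
print(X.shape)      # (3, 1, 3)
print(y.shape)      # (3, 1)
print(X[0], y[0])   # [[0. 1. 2.]] [3.]
```

With the real constants the training input therefore has shape (10000, 1, 10). Since tf.nn.dynamic_rnn reads its input as [batch, time, features] by default, each example is presented as a single time step carrying a vector of TIMESTEPS values.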

print(len(seq) - TIMESTEPS)

10000

for i in range(5):
    print(i)

0

1

2

3

4
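
One more indexing detail from lstm_model can be checked without TensorFlow: outputs[:, -1, :] keeps the last time step of every sequence in the batch. The NumPy sketch below uses a made-up array in place of the real RNN output, with illustrative sizes; NumPy applies the same slicing rules to this expression.

```python
import numpy as np

batch_size, time_steps, hidden_size = 2, 3, 4  # illustrative sizes only

# Stand-in for the RNN output, shape [batch_size, time_steps, hidden_size].
outputs = np.arange(batch_size * time_steps * hidden_size).reshape(
    batch_size, time_steps, hidden_size)

# Keep every batch entry, only the final time step, and every hidden unit.
last = outputs[:, -1, :]
print(last.shape)  # (2, 4)
print(last)
```

The time axis disappears from the result, leaving one vector of hidden_size values per example, which is what the fully connected layer then maps to a single prediction.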
