1. Deploy the InternLM2-Chat-1.8B model for chat

1.1 Environment

# 1. Create and activate the virtual environment

studio-conda -o internlm-base -t demo

# Equivalent setup without studio-conda

# conda create -n demo python==3.10 -y

# conda activate demo

# conda install pytorch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 pytorch-cuda=11.7 -c pytorch -c nvidia

conda activate demo

# 2. Install the required packages

pip install huggingface-hub==0.17.3

pip install transformers==4.34

pip install psutil==5.9.8

pip install accelerate==0.24.1

pip install streamlit==1.32.2

pip install matplotlib==3.8.3

pip install modelscope==1.9.5

pip install sentencepiece==0.1.99
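The eight pinned installs above could also be kept in a single requirements file, so the environment can be rebuilt in one step. The following is a minimal sketch: the helper name `write_requirements` and the file location are assumptions for illustration, and the version pins are copied from the commands above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the package pins used above into a requirements file.
write_requirements() {
    local target="$1"
    cat > "$target" <<'EOF'
huggingface-hub==0.17.3
transformers==4.34
psutil==5.9.8
accelerate==0.24.1
streamlit==1.32.2
matplotlib==3.8.3
modelscope==1.9.5
sentencepiece==0.1.99
EOF
}

# Example usage (run inside the activated demo environment):
#   write_requirements requirements.txt
#   pip install -r requirements.txt
```

Keeping the pins in one file makes it easier to spot version drift later than with eight separate commands.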

# 3. Download the InternLM2-Chat-1.8B model

mkdir -p /root/demo

# Create the two scripts. touch only makes empty files, so add their code before running them.

touch /root/demo/cli_demo.py

touch /root/demo/download_mini.py

cd /root/demo

python /root/demo/download_mini.py
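The `touch` command only creates an empty `download_mini.py`, and the article does not show what goes into it. The following is one possible sketch, not the author's script. It assumes the model is fetched from ModelScope with `snapshot_download`, that the model ID is `Shanghai_AI_Laboratory/internlm2-chat-1_8b`, and that the weights go under `/root/models`. All three are assumptions and should be checked against the course material. The helper name `write_download_script` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a candidate download_mini.py into the given directory.
write_download_script() {
    local dir="$1"
    mkdir -p "$dir"
    cat > "$dir/download_mini.py" <<'PYEOF'
# Sketch only: the model ID and save directory are assumptions, not taken from the article.
import os

from modelscope.hub.snapshot_download import snapshot_download

save_dir = "/root/models"
os.makedirs(save_dir, exist_ok=True)

# Downloads the model files into save_dir.
snapshot_download("Shanghai_AI_Laboratory/internlm2-chat-1_8b", cache_dir=save_dir)
PYEOF
}

# Example usage:
#   write_download_script /root/demo
#   python /root/demo/download_mini.py
```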

# 4. Run cli_demo

conda activate demo

python /root/demo/cli_demo.py

1.2 Use InternLM to write a 300-character short story

2. Deploy 八戒-Chat-1.8B, an outstanding project from the hands-on camp

2.1 Environment

# Activate the virtual environment

conda activate demo

# Download the code

cd /root/

git clone https://gitee.com/InternLM/Tutorial -b camp2

cd /root/Tutorial

# Download and run the Chat-八戒 demo

python /root/Tutorial/helloworld/bajie_download.py

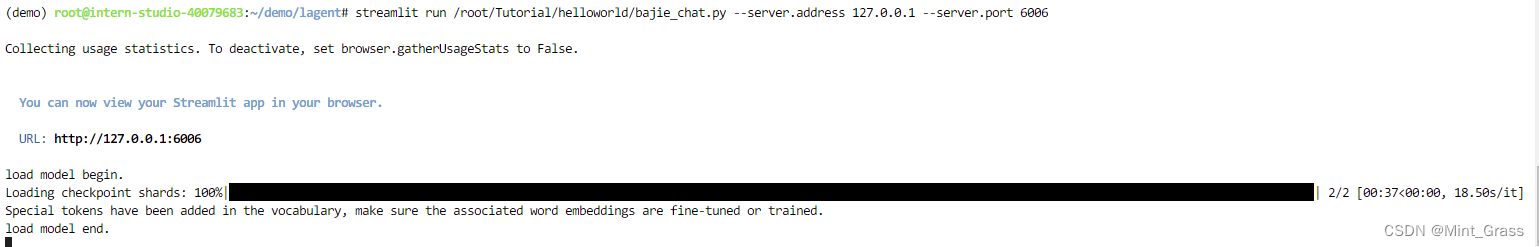

streamlit run /root/Tutorial/helloworld/bajie_chat.py --server.address 127.0.0.1 --server.port 6006
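Because Streamlit is bound to `127.0.0.1:6006`, the page is reachable only from the machine running it. If that machine is a remote dev box, an SSH local port forward is one common way to open the page in a local browser. The sketch below only prints the command. The helper name `tunnel_cmd`, the host `root@dev.example.com`, and the SSH port `2222` are placeholders, not values from this article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print (not run) an ssh command that forwards a local port
# to the same port on the remote machine's loopback interface.
tunnel_cmd() {
    local user_host="$1"       # e.g. user@host of the remote machine (placeholder)
    local ssh_port="$2"        # SSH port of the remote machine (placeholder)
    local app_port="${3:-6006}"  # port the demo listens on; 6006 as in the commands above
    printf 'ssh -p %s -CN -L %s:127.0.0.1:%s %s\n' \
        "$ssh_port" "$app_port" "$app_port" "$user_host"
}

# Example with placeholder values:
#   tunnel_cmd root@dev.example.com 2222
```

Running the printed command on the local machine and opening `http://127.0.0.1:6006` should then show the demo, assuming SSH access to the remote machine is set up.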

2.2 Results

3. Run the InternLM2-Chat-7B model with Lagent

3.1 Environment

# Activate the virtual environment

conda activate demo

# Download the code

cd /root/demo

git clone https://gitee.com/internlm/lagent.git

cd lagent

git checkout 581d9fb8987a5d9b72bb9ebd37a95efd47d479ac

pip install -e .  # install from source

# Create a symlink to the model

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-7b /root/models/internlm2-chat-7b

# Edit the model path (line 71)

vim examples/internlm2_agent_web_demo_hf.py
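The manual edit in vim can also be scripted. The article does not show the original content of line 71, so the sketch below takes the old value as a parameter instead of guessing it. Print the line first with `sed -n '71p' examples/internlm2_agent_web_demo_hf.py` to see what needs replacing. The helper name `replace_on_line` is hypothetical, and it assumes neither string contains `|` or regular-expression metacharacters.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace OLD with NEW on one specific line of a file.
# Assumes OLD and NEW contain no '|' and no regex metacharacters.
replace_on_line() {
    local file="$1" line_no="$2" old="$3" new="$4"
    local tmp
    tmp="$(mktemp)"
    # Apply the substitution only on the requested line, then write the result
    # back through redirection so the file keeps its original permissions.
    sed "${line_no}s|${old}|${new}|" "$file" > "$tmp" \
        && cat "$tmp" > "$file"
    local status=$?
    rm -f "$tmp"
    return "$status"
}

# Example usage (OLD_MODEL_PATH is a placeholder for whatever line 71 currently contains):
#   replace_on_line examples/internlm2_agent_web_demo_hf.py 71 \
#       'OLD_MODEL_PATH' '/root/models/internlm2-chat-7b'
```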

# Launch (loading the model takes about 5 minutes)

streamlit run /root/demo/lagent/examples/internlm2_agent_web_demo_hf.py --server.address 127.0.0.1 --server.port 6006

3.2 Results

Note: the 【数据分析】 (Data Analysis) option must be checked.

4. Deploy the 浦语·灵笔2 (InternLM-XComposer2) model

4.1 Environment

conda activate demo

# Install additional packages

pip install timm==0.4.12 sentencepiece==0.1.99 markdown2==2.4.10 xlsxwriter==3.1.2 gradio==4.13.0 modelscope==1.9.5

# Download the InternLM-XComposer repository code:

cd /root/demo

git clone https://gitee.com/internlm/InternLM-XComposer.git

# git clone https://github.com/internlm/InternLM-XComposer.git

cd /root/demo/InternLM-XComposer

git checkout f31220eddca2cf6246ee2ddf8e375a40457ff626

# Create symlinks to the models

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-7b /root/models/internlm-xcomposer2-7b

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-vl-7b /root/models/internlm-xcomposer2-vl-7b
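A plain `ln -s` fails if the parent directory of the link (here `/root/models`) does not exist yet, and it gives no warning if the source path is wrong. The following is a small defensive sketch. The helper name `link_model` is hypothetical, and the paths in the usage comment are the ones from the commands above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create (or refresh) a symlink to a model directory,
# checking the source and creating the link's parent directory first.
link_model() {
    local src="$1" dest="$2"
    if [ ! -d "$src" ]; then
        echo "source model directory not found: $src" >&2
        return 1
    fi
    mkdir -p "$(dirname "$dest")"
    # -f replaces an existing link; -n avoids descending into an existing link target.
    ln -sfn "$src" "$dest"
}

# Example usage:
#   link_model /root/share/new_models/Shanghai_AI_Laboratory/internlm-xcomposer2-7b \
#       /root/models/internlm-xcomposer2-7b
```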

# Launch InternLM-XComposer

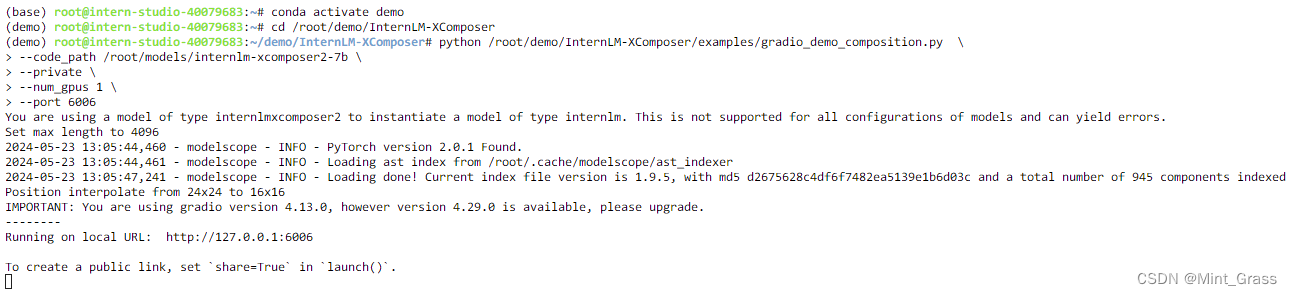

cd /root/demo/InternLM-XComposer

python /root/demo/InternLM-XComposer/examples/gradio_demo_composition.py \
    --code_path /root/models/internlm-xcomposer2-7b \
    --private \
    --num_gpus 1 \
    --port 6006

4.2 Results

5. Image understanding in practice

5.1 Environment

conda activate demo

cd /root/demo/InternLM-XComposer

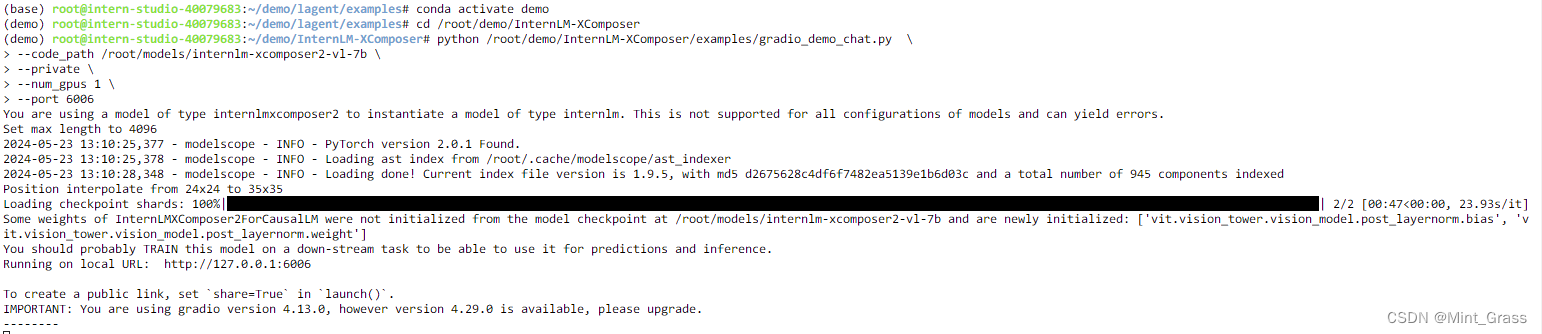

python /root/demo/InternLM-XComposer/examples/gradio_demo_chat.py \
    --code_path /root/models/internlm-xcomposer2-vl-7b \
    --private \
    --num_gpus 1 \
    --port 6006

5.2 Results
