Lab environment:

master: 192.168.1.11

node01: 192.168.1.33

node02: 192.168.1.44

Harbor: 192.168.1.55

Prepare the environment: disable the firewall, SELinux, and swap, then load the ip_vs modules

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
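The substitution above comments out every line of /etc/fstab that contains the string swap, so it can be worth previewing the result before editing the file in place. The following is a minimal sketch of such a preview; the function name preview_swap_disable is hypothetical and not part of the original procedure.

```shell
# Hypothetical helper: show what the swap edit would produce without
# modifying the file. Takes the path to an fstab-style file (default /etc/fstab).
preview_swap_disable() {
    local fstab="${1:-/etc/fstab}"
    # Same substitution as in the guide, but without -i, so the result
    # is written to stdout instead of back to the file.
    sed 's/.*swap.*/#&/' "$fstab"
}
```

For example, `preview_swap_disable /etc/fstab | diff /etc/fstab -` lists only the lines that would change.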

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done

Set the hostname on each machine and add entries to the hosts file

// Set the hostname (run the matching command on the corresponding machine)

hostnamectl set-hostname master01

hostnamectl set-hostname node01

hostnamectl set-hostname node02

// Edit the hosts file on all nodes

vim /etc/hosts

192.168.1.11 master01

192.168.1.33 node01

192.168.1.44 node02

Tune kernel parameters

cat > /etc/sysctl.d/kubernetes.conf << EOF

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

EOF
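The two net.bridge.* keys only exist once the br_netfilter kernel module is loaded, so applying this file on a fresh host may report them as unknown. A minimal sketch of a pre-check follows; the helper name missing_sysctl_keys is hypothetical, and loading br_netfilter is an extra step that the original does not show.

```shell
# Hypothetical helper: print each required key that is not set to 1 in the
# given sysctl drop-in file. Prints nothing when all keys are present.
missing_sysctl_keys() {
    local conf="${1:-/etc/sysctl.d/kubernetes.conf}"
    local key
    for key in \
        net.bridge.bridge-nf-call-ip6tables \
        net.bridge.bridge-nf-call-iptables \
        net.ipv4.ip_forward
    do
        # Accept "key=1" as well as "key = 1" at the start of a line.
        grep -Eq "^${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$conf" || echo "$key"
    done
}

# Assumed extra step (as root) before the settings are applied:
#   modprobe br_netfilter
```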

sysctl --system

Install Docker on all nodes

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce docker-ce-cli containerd.io

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

}

}

EOF

systemctl daemon-reload

systemctl restart docker.service

systemctl enable docker.service

docker info | grep "Cgroup Driver"

Install kubeadm, kubelet, and kubectl on all nodes

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum install -y kubelet-1.20.11 kubeadm-1.20.11 kubectl-1.20.11

systemctl enable kubelet.service

Run on the master

unzip v1.20.11.zip -d /opt/k8s

cd /opt/k8s/v1.20.11

for i in *.tar; do docker load -i "$i"; done
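Because the same image-loading step is repeated on every node, it can be wrapped in a small function that also reports when a directory contains no archives. This is a minimal sketch; the function name load_image_archives is hypothetical.

```shell
# Hypothetical helper: load every *.tar archive in a directory into Docker.
# Returns non-zero if the directory holds no archives or if a load fails.
load_image_archives() {
    local dir="${1:-.}"
    local archive found=0
    for archive in "$dir"/*.tar; do
        # When nothing matches, the pattern stays literal, so skip non-files.
        [ -f "$archive" ] || continue
        found=1
        docker load -i "$archive" || return 1
    done
    [ "$found" -eq 1 ]
}
```

On the master this would be called as `load_image_archives /opt/k8s/v1.20.11`.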

scp -r /opt/k8s root@node01:/opt

scp -r /opt/k8s root@node02:/opt

Run on all worker nodes

cd /opt/k8s/v1.20.11

for i in *.tar; do docker load -i "$i"; done

Initialize the cluster with kubeadm

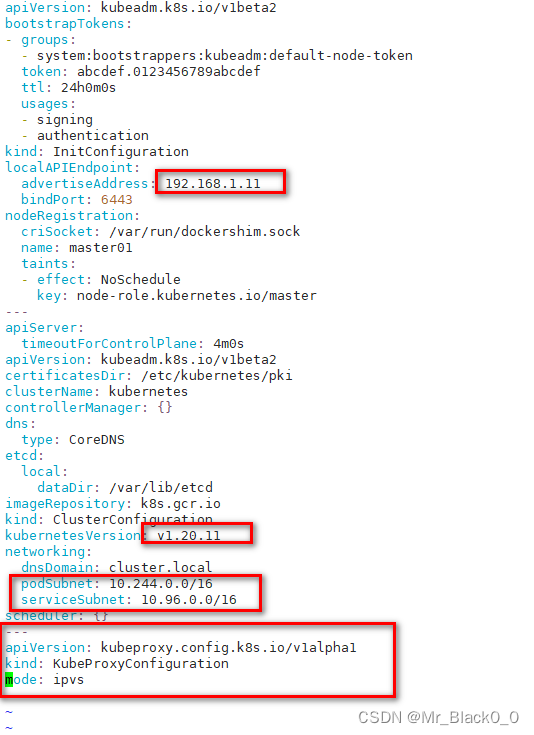

kubeadm config print init-defaults > /opt/kubeadm-config.yaml

cd /opt/

vim kubeadm-config.yaml

Edit the configuration file
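The original does not list which fields were edited. As a minimal sketch, the edits could be scripted as follows, assuming the usual changes for this environment: the master address 192.168.1.11 and version v1.20.11 come from the steps above, while the pod network 10.244.0.0/16 (the default in flannel's manifest) and IPVS mode for kube-proxy are assumptions. The function name patch_kubeadm_config is hypothetical.

```shell
# Hypothetical helper: apply the assumed edits to a file produced by
# "kubeadm config print init-defaults". Meant to be run once on a fresh file.
patch_kubeadm_config() {
    local file="$1"
    local ip="${2:-192.168.1.11}"         # master address from the lab environment
    local version="${3:-v1.20.11}"        # matches the installed kubeadm packages
    local pod_cidr="${4:-10.244.0.0/16}"  # assumption: default flannel network
    local tmp
    tmp=$(mktemp) || return 1

    awk -v ip="$ip" -v ver="$version" -v pod="$pod_cidr" '
        /^[[:space:]]*advertiseAddress:/ { sub(/advertiseAddress:.*/, "advertiseAddress: " ip) }
        /^kubernetesVersion:/            { $0 = "kubernetesVersion: " ver }
        { print }
        # Add podSubnet directly after serviceSubnet, inside the networking block.
        /^[[:space:]]*serviceSubnet:/    { print "  podSubnet: " pod }
    ' "$file" > "$tmp" || return 1

    # Assumption: run kube-proxy in IPVS mode, since the ip_vs modules were loaded.
    cat >> "$tmp" <<'EOF'
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
EOF

    # Write through the original path so its ownership and mode are kept.
    cat "$tmp" > "$file" && rm -f "$tmp"
}
```

It would be called as `patch_kubeadm_config /opt/kubeadm-config.yaml`; reviewing the result in an editor afterwards is still advisable.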

Initialize the Kubernetes cluster using the configuration file kubeadm-config.yaml

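kubeadm init --config=kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

The --upload-certs flag uploads the control-plane certificates to the cluster so that they are distributed automatically when additional control-plane nodes join later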

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

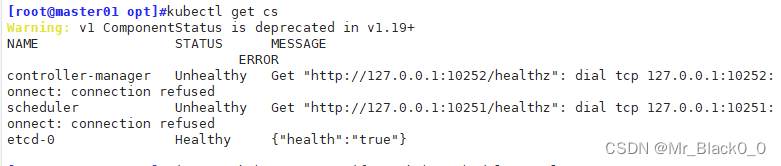

kubectl get cs

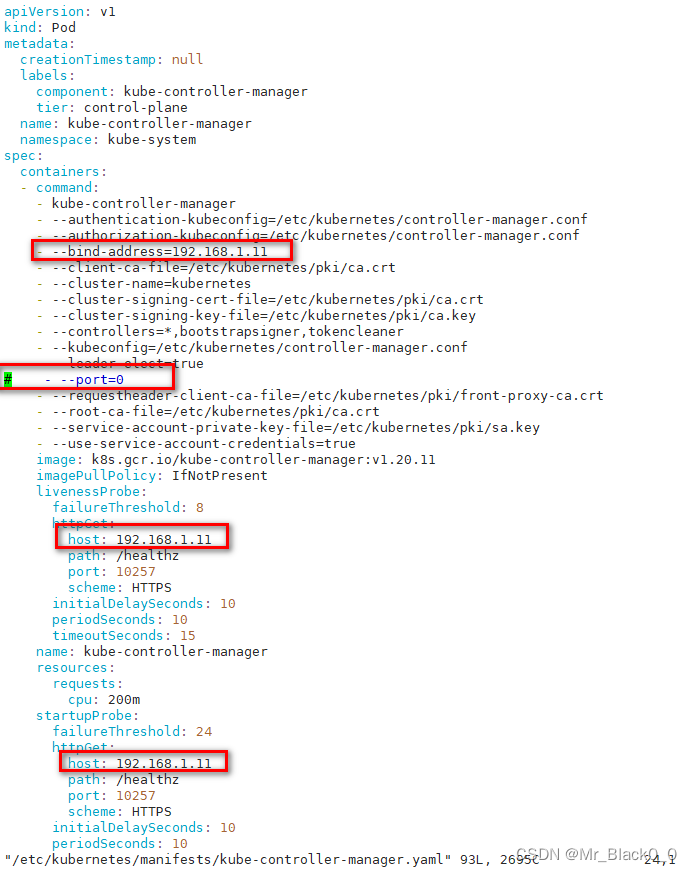

kubectl get cs reports the cluster as unhealthy. Edit the following two files:

/etc/kubernetes/manifests/kube-scheduler.yaml

/etc/kubernetes/manifests/kube-controller-manager.yaml
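The original does not say what to change in these two manifests. A commonly reported cause of this symptom on v1.20 is the --port=0 flag, which disables the insecure health ports that kubectl get cs probes; treating that as the cause here is an assumption. Under that assumption, a minimal sketch of the edit (the function name disable_port_zero is hypothetical) could be:

```shell
# Hypothetical helper: comment out the "- --port=0" argument in each given
# static pod manifest. No backup is left next to the manifest, because the
# kubelet watches that directory.
disable_port_zero() {
    local manifest tmp
    for manifest in "$@"; do
        tmp=$(mktemp) || return 1
        # Turn "    - --port=0" into "    # - --port=0", keeping the indentation.
        sed 's/^\([[:space:]]*\)- --port=0[[:space:]]*$/\1# - --port=0/' "$manifest" > "$tmp" || return 1
        cat "$tmp" > "$manifest" && rm -f "$tmp"
    done
}
```

It would be called with both manifest paths as arguments, for example `disable_port_zero /etc/kubernetes/manifests/kube-scheduler.yaml /etc/kubernetes/manifests/kube-controller-manager.yaml`.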

systemctl restart kubelet

Install the network plugin on all nodes

Place the flannel-cni-v1.2.0.tar and flannel-v0.22.2.tar image archives on all nodes, and kube-flannel.yml on the master

docker load -i flannel-cni-v1.2.0.tar

docker load -i flannel-v0.22.2.tar

Run on the master

kubectl apply -f kube-flannel.yml

Finally, run kubeadm join on each worker node. The exact command is printed at the end of the earlier kubeadm init output, so be sure to save it.
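Since the init output was piped through tee, the join command can also be recovered from the log. This is a minimal sketch, assuming the log is at /opt/kubeadm-init.log (the directory the init command was run from); the function name show_join_command is hypothetical.

```shell
# Hypothetical helper: print the join command(s) recorded in the init log.
show_join_command() {
    local log="${1:-/opt/kubeadm-init.log}"
    # Print each "kubeadm join" line plus up to two lines after it, which
    # covers the backslash-continued token and CA hash arguments.
    grep -A 2 '^ *kubeadm join' "$log"
}

# If the log is gone or the bootstrap token has expired, a fresh command
# can be generated on the master with:
#   kubeadm token create --print-join-command
```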

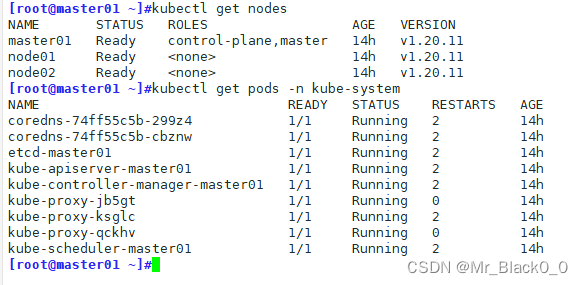

Check the cluster status on the master

kubectl get nodes

kubectl get pods -n kube-system
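Nodes report NotReady until a network plugin is running on them, so the first query may need to be repeated. A minimal sketch of a readiness check that can be used in a polling loop follows; the function name all_nodes_ready is hypothetical.

```shell
# Hypothetical helper: read "kubectl get nodes --no-headers" output on stdin
# and succeed only if at least one node is listed and every STATUS column
# is exactly "Ready".
all_nodes_ready() {
    awk '
        { total++ }
        $2 != "Ready" { bad++ }
        END { exit !(total > 0 && bad == 0) }
    '
}

# Example: poll every 5 seconds until all nodes report Ready.
#   until kubectl get nodes --no-headers | all_nodes_ready; do sleep 5; done
```

A node shown as Ready,SchedulingDisabled counts as not ready in this sketch, which is the stricter choice for a fresh cluster.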
