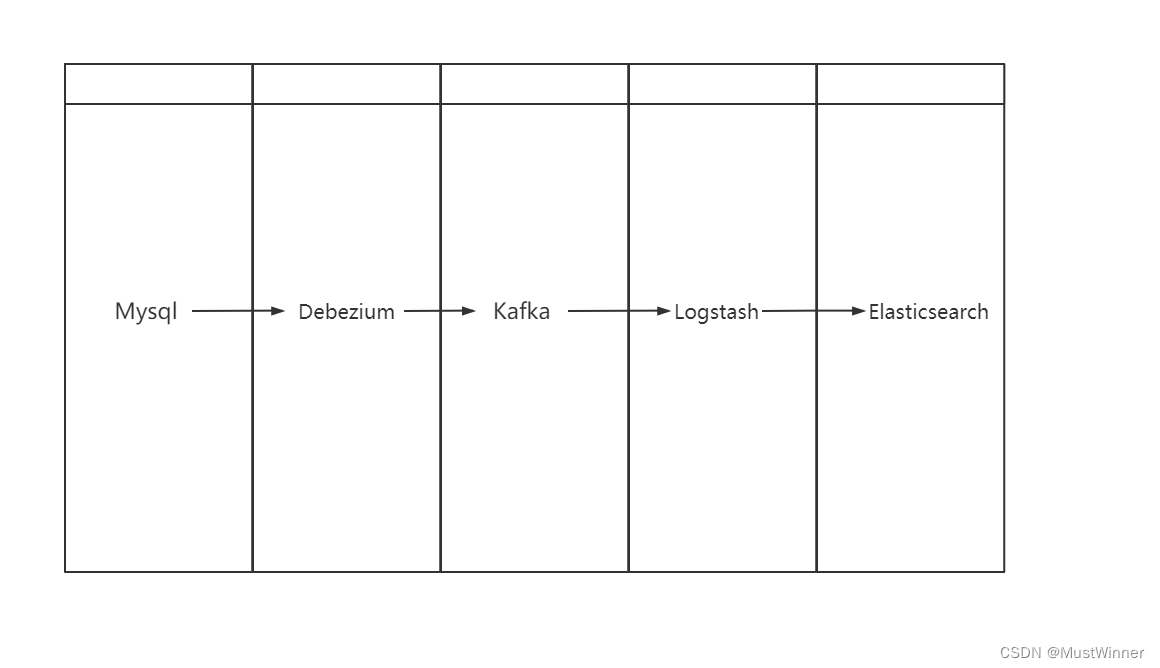

This article sets up real-time data synchronization through the pipeline mysql → debezium → kafka → logstash → elasticsearch.

I have two machines:

192.168.16.132: mysql, debezium, debezium-ui, kafka-manager

192.168.16.137: elasticsearch cluster, logstash, kafka

1. Environment

centos 7

docker

2. Installation order

mysql

kafka

debezium

logstash

elasticsearch

3. Installation

3.1 Install and start mysql

docker pull mysql:8.0.25

docker run --name mysql01 -v /usr/mysql/data-3306/:/var/lib/mysql -p 3306:3306 -e MYSQL_ROOT_PASSWORD=123456 mysql:8.0.25
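Debezium reads changes from the MySQL binlog, so it can be worth confirming the binlog settings before moving on. The following is a minimal sketch, assuming the container name and root password from the docker run command above; the function names are made up for illustration. MySQL 8.0 turns the binlog on with ROW format by default, so the check should normally just confirm that.

```shell
# SQL that reports the binlog settings Debezium's MySQL connector relies on.
# Expected values: log_bin=ON, binlog_format=ROW, binlog_row_image=FULL.
binlog_check_sql() {
  printf '%s' "SHOW VARIABLES WHERE Variable_name IN ('log_bin','binlog_format','binlog_row_image');"
}

# Run the check inside the mysql01 container (name and password are the
# ones used in the docker run command above).
check_binlog() {
  docker exec mysql01 mysql -uroot -p123456 -e "$(binlog_check_sql)"
}

# Usage, run by hand on 192.168.16.132:
#   check_binlog
```

Nothing runs until check_binlog is called, so the snippet can be sourced safely on a machine without docker.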

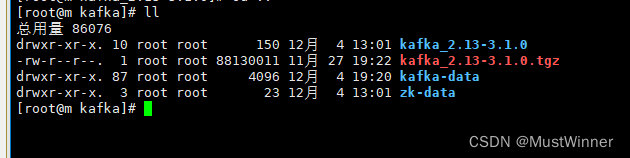

3.2 Install and start kafka

Download and extract kafka.

In the same parent directory, create kafka-data and zk-data.

Edit zookeeper.properties:

dataDir=/usr/kafka/zk-data

Edit server.properties:

broker.id=3

log.dirs=/usr/kafka/kafka-data

ZooKeeper has to be started first.

Start zk:

./bin/zookeeper-server-start.sh config/zookeeper.properties

Then open another terminal window.

Start kafka:

./bin/kafka-server-start.sh config/server.properties

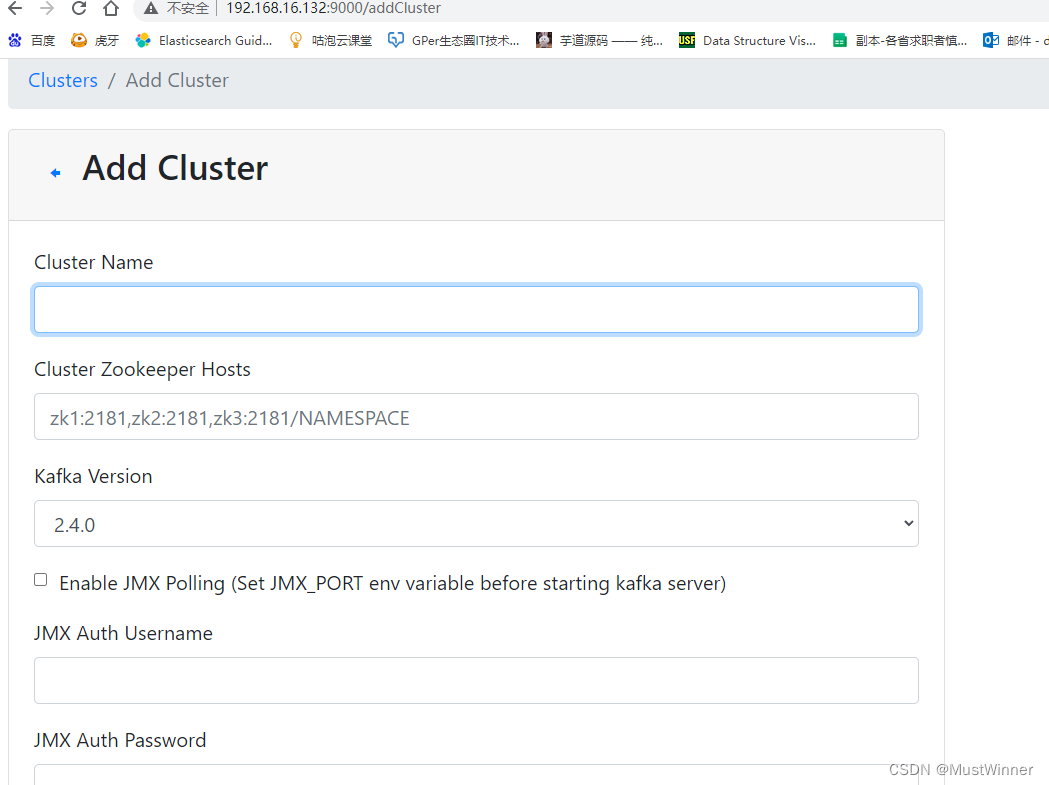

3.3 (This is a visualization tool for kafka; installing it is optional)

Install kafkamanager/kafka-manager

docker pull kafkamanager/kafka-manager

docker run --name kafka-manager01 --network host -e ZK_HOSTS=192.168.16.137 kafkamanager/kafka-manager

# 192.168.16.137 is the address of the host where kafka is running

Open http://192.168.16.132:9000/

Add the kafka cluster manually.

Only the name and the zk host need to be filled in.

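3.4

Install debezium (be sure to use version 1.9 here; if you pull the latest version, subscribing to mysql runs into problems, most likely because Debezium 2.x renamed several connector properties used below, for example database.server.name became topic.prefix)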

docker pull debezium/connect:1.9

docker run -it --rm --name connect01 -p 8083:8083 --network host -e GROUP_ID=1 -e CONFIG_STORAGE_TOPIC=my_connect_configs -e OFFSET_STORAGE_TOPIC=my_connect_offsets -e STATUS_STORAGE_TOPIC=my_connect_statuses -e BOOTSTRAP_SERVERS=192.168.16.137:9092 -e HOST_NAME=m debezium/connect:1.9

3.5

Install debezium/debezium-ui (optional)

docker pull debezium/debezium-ui

docker run -it --name debezium-ui01 -p 8080:8080 --network host -e KAFKA_CONNECT_URIS=http://192.168.16.132:8083 debezium/debezium-ui

3.6

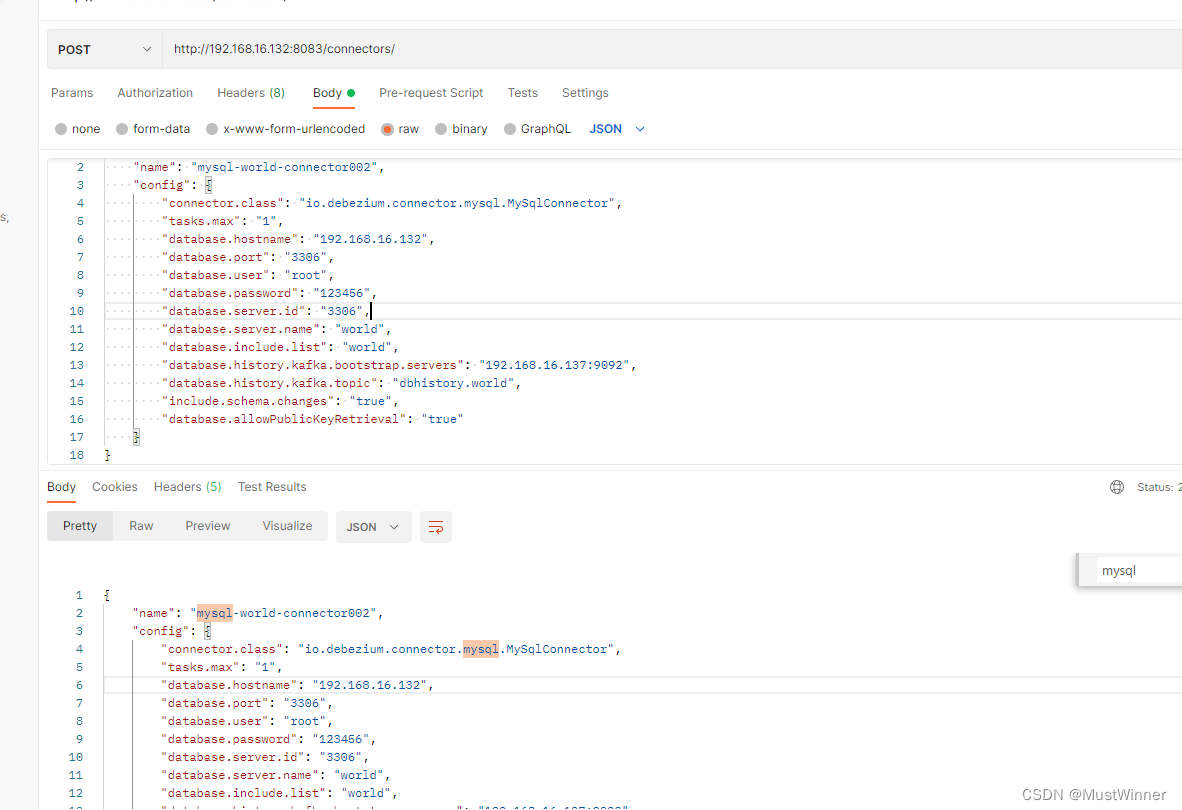

订阅mysql

http://192.168.16.132:8083/connectors/

{

"name": "mysql-world-connector002",

"config": {

"connector.class": "io.debezium.connector.mysql.MySqlConnector",

"tasks.max": "1",

"database.hostname": "192.168.16.132",

"database.port": "3306",

"database.user": "root",

"database.password": "123456",

"database.server.id": "3306",

"database.server.name": "world",

"database.include.list": "world",

"database.history.kafka.bootstrap.servers": "192.168.16.137:9092",

"database.history.kafka.topic": "dbhistory.world",

"include.schema.changes": "true",

"database.allowPublicKeyRetrieval": "true"

}

}

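# database.user: database username

# database.password: database password

# database.server.id: any number works as long as it is unique

# database.server.name: a logical name of your choice; it becomes the prefix of the generated topic names

# database.include.list: name of the database to capture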
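The registration request can be sent with any HTTP client. Below is a rough sketch using curl, assuming the JSON above has been saved to a file; the file name mysql-world-connector.json and the function names are hypothetical. It relies only on the standard Kafka Connect REST endpoints POST /connectors and GET /connectors/{name}/status.

```shell
# Kafka Connect REST endpoint from the text above.
CONNECT_URL="http://192.168.16.132:8083/connectors/"

# Hypothetical file name; save the connector JSON shown above into it.
CONNECTOR_JSON="${CONNECTOR_JSON:-mysql-world-connector.json}"

# Create the connector by POSTing the JSON file to Kafka Connect.
register_connector() {
  curl -sS -X POST -H "Content-Type: application/json" \
       --data @"$CONNECTOR_JSON" "$CONNECT_URL"
}

# Build the URL that reports the state of a connector and its tasks.
connector_status_url() {
  printf '%s%s/status' "$CONNECT_URL" "$1"
}

# Usage, run by hand on a host that can reach Kafka Connect:
#   register_connector
#   curl -sS "$(connector_status_url mysql-world-connector002)"
```

If the status response shows the connector and its task as RUNNING, the subscription is in place.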

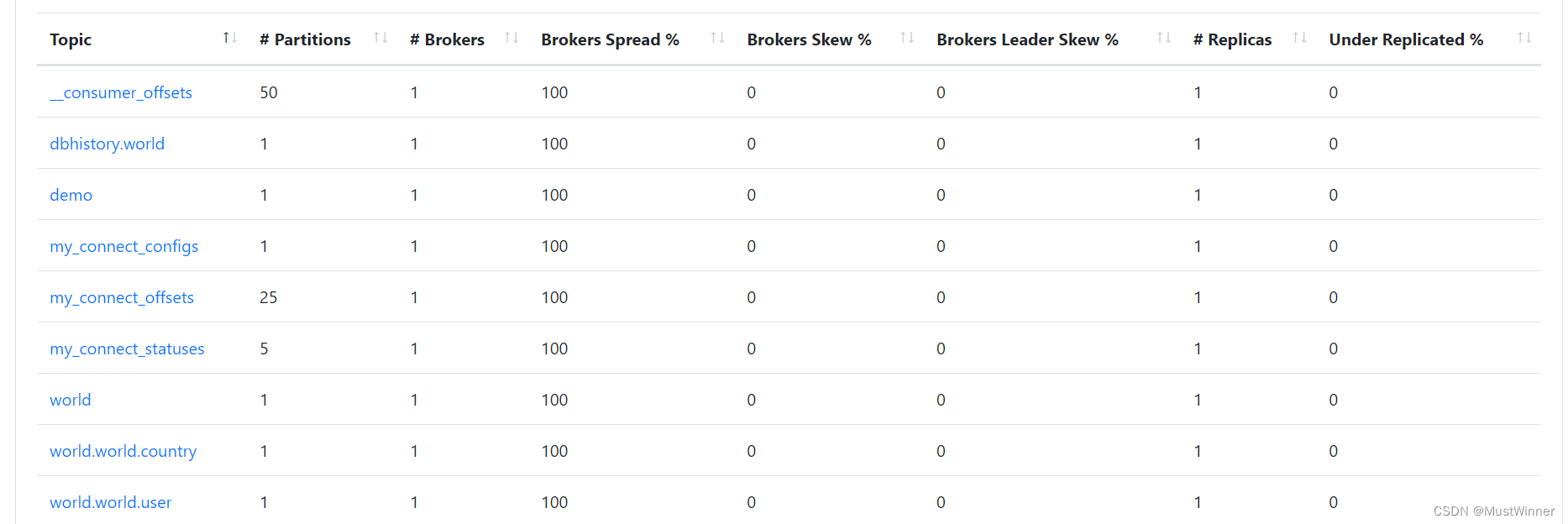

Once the subscription succeeds,

kafka will contain several related topics.
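With Debezium 1.x, each captured table gets its own topic named after the server name, the database and the table, which is where the world.world.country topic used later in the logstash configuration comes from. A small sketch for inspecting the topics from the kafka directory on 192.168.16.137 follows; the function names are made up for illustration, and the kafka-topics.sh call assumes Kafka 2.2 or later, where --bootstrap-server is accepted.

```shell
# Broker address used throughout this article.
KAFKA_BOOTSTRAP="192.168.16.137:9092"

# Debezium 1.x table topics are named <database.server.name>.<database>.<table>.
debezium_topic() {
  printf '%s.%s.%s' "$1" "$2" "$3"
}

# List every topic on the broker (run from the kafka install directory).
list_topics() {
  ./bin/kafka-topics.sh --bootstrap-server "$KAFKA_BOOTSTRAP" --list
}

# Print the first few change events of one topic.
peek_topic() {
  ./bin/kafka-console-consumer.sh --bootstrap-server "$KAFKA_BOOTSTRAP" \
    --topic "$1" --from-beginning --max-messages 5
}

# Usage, run by hand:
#   list_topics
#   peek_topic "$(debezium_topic world world country)"
```

The list should also include the schema change topic named after database.server.name (world) and the history topic dbhistory.world configured above.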

3.7

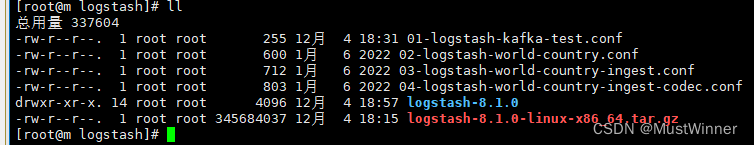

Install logstash 8.1 (to keep it consistent with the es version)

Download and extract it.

# File 01-logstash-kafka-test.conf

input {

kafka {

bootstrap_servers => "192.168.16.137:9092"

group_id => "logstash-002"

client_id => "logstash-002-108"

consumer_threads => 1

topics => "world"

}

}

filter {

}

output {

stdout { }

}

启动

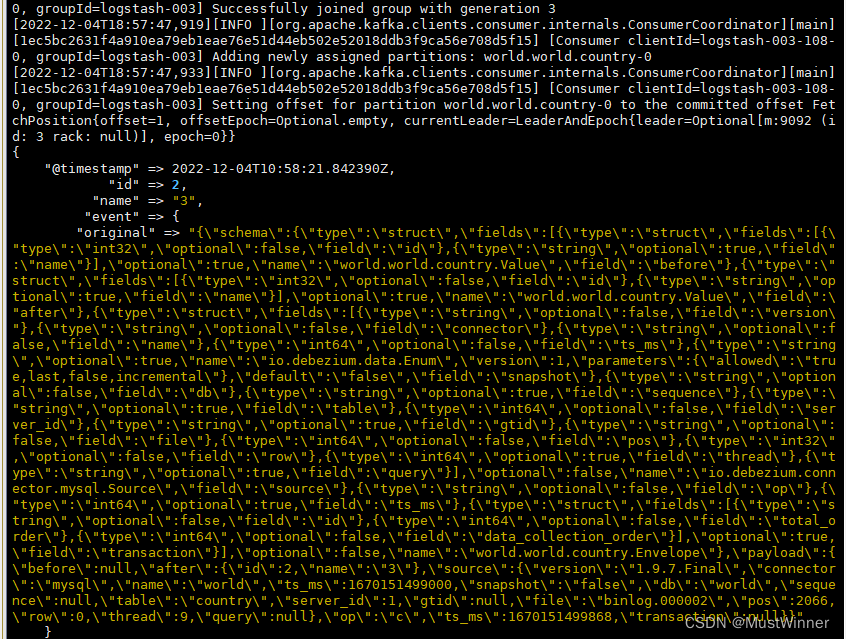

./logstash-8.1.0/bin/logstash -f 01-logstash-kafka-test.conf

消费kafka topic 会显示如图

修改 01-logstash-kafka-test.conf

input {

kafka {

bootstrap_servers => "192.168.16.137:9092"

group_id => "logstash-003"

client_id => "logstash-003-108"

consumer_threads => 1

topics => "world.world.country"

codec => "json"

}

}

filter {

mutate {

add_field => { "country137" => "%{[payload][after]}"}

}

json {

source => "country137"

}

mutate {

#remove_field => ["@version","@timestamp"]

remove_field => ["@version","payload","schema","country137"]

}

}

output {

#stdout { codec => json }

stdout { }

elasticsearch {

hosts => ["http://192.168.16.137:9200"]

index => "world-world-country-codec-%{+YYYY.MM.dd}"

document_id => "%{[Code]}-%{[Name]}"

#user => "elastic"

#password => "changeme"

}

}

Restart:

./logstash-8.1.0/bin/logstash -f 01-logstash-kafka-test.conf

Then change some data in the database.

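3.8

Install es (covered in another of my articles; install a 7.x version. If you install 8.x, either disable authentication or set the username and password in the logstash configuration file)

Query es, and you can see that the database changes have been synchronized to es.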
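One way to run that query from the command line is sketched below, assuming the es address and the index pattern from the logstash output section; the function names and the example country code CHN are assumptions for illustration. Appending pretty to the query string makes es return indented JSON.

```shell
# Elasticsearch address from the logstash output section.
ES_URL="http://192.168.16.137:9200"

# Name of the daily index for a date given as YYYY-MM-DD, following the
# pattern world-world-country-codec-%{+YYYY.MM.dd} used by logstash.
es_index_for() {
  printf 'world-world-country-codec-%s' "$(printf '%s' "$1" | sed 's/-/./g')"
}

# Search all daily indices for documents with the given country code.
search_country() {
  curl -sS "$ES_URL/world-world-country-codec-*/_search?q=Code:$1&pretty"
}

# Usage, run by hand after changing a row in the country table:
#   search_country CHN
```

Because document_id is built from Code and Name, repeated updates to the same row on the same day overwrite one document rather than piling up new ones.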

The data shown here is not JSON-formatted; I was too lazy to do that.

END

Note: this article follows a video by instructor Li Meng.
