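Playback/Download

Playback means rendering the audio/video data sent by the server on screen. Downloading means saving that same data to a file. The two are therefore essentially the same process, so the explanation below uses downloading as the example. Note that the downloaded file is in FLV format (the same applies to playback).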

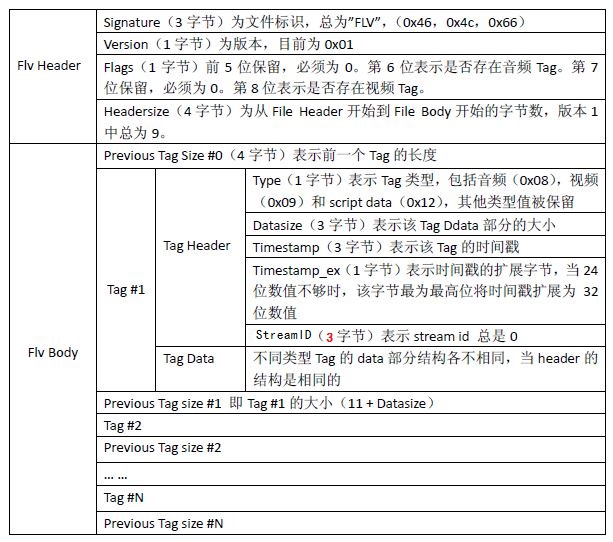
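Because downloading is just "read, then write to a file", the outer loop can be sketched in a few lines. This is a minimal illustration, not rtmpdump's code: `read_fn`, `download_to_file`, `struct mem_src` and `mem_read` are hypothetical names. The reader only mimics the return convention of RTMP_Read (shown later): a positive byte count, 0 once the stream has ended or completed, and a negative value on error.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical reader type standing in for RTMP_Read(): fills buf with up
 * to size bytes and returns the count, 0 at end of stream, <0 on error. */
typedef int (*read_fn)(void *ctx, char *buf, int size);

/* Copies everything the reader produces into out.
 * Returns the number of bytes written, or -1 on a read or write error. */
long download_to_file(read_fn reader, void *ctx, FILE *out)
{
    char buf[64 * 1024];
    long total = 0;
    int n;

    while ((n = reader(ctx, buf, (int)sizeof(buf))) > 0) {
        if (fwrite(buf, 1, (size_t)n, out) != (size_t)n)
            return -1;              /* e.g. disk full */
        total += n;
    }
    return n < 0 ? -1 : total;      /* n == 0: the stream ended cleanly */
}

/* Hypothetical in-memory source used to exercise download_to_file()
 * without a server: it hands out its bytes at most `step` at a time. */
struct mem_src {
    const char *data;
    int len;
    int pos;
    int step;
};

int mem_read(void *ctx, char *buf, int size)
{
    struct mem_src *s = ctx;
    int n = s->len - s->pos;

    if (n > s->step)
        n = s->step;
    if (n > size)
        n = size;
    memcpy(buf, s->data + s->pos, (size_t)n);
    s->pos += n;
    return n;
}
```

With librtmp, `reader` would wrap a call such as `RTMP_Read(r, buf, size)` on a connected session; that wiring is left out so the sketch stays self-contained.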

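FLV format

In brief:

1. An FLV file consists of two parts: the FLV header and the FLV body.

2. The FLV body is a sequence of tags. Between tags sits a 4-byte field called previousTagSize, which holds the size of the preceding tag (a previousTagSize of 0 also sits right after the header, before the first tag).

3. A tag consists of a tag header and a tag body.

4. For ordinary audio/video tags, the tag header is 11 bytes long and contains:

(1) type: 1 byte, the tag type (8 = audio, 9 = video, 18 = script data/metadata)

(2) dataSize: 3 bytes, the length of the tag body

(3) timestamp: 3 bytes, the timestamp in milliseconds (lower 24 bits)

(4) timestamp_ex: 1 byte, the timestamp extension (upper 8 bits)

(5) stream id: 3 bytes (always 0)

5. The tag body holds the audio/video information and data.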
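The tag header layout above maps directly onto a small parser. The following sketch assumes the caller has already checked that 11 bytes are available; the struct and function names (`flv_tag_header`, `flv_parse_tag_header`, `flv_tag_span`) are hypothetical. It combines timestamp and timestamp_ex the same way Read_1_Packet does (`ts |= packetBody[pos + 7] << 24`).

```c
#include <stdint.h>

/* Fields of the 11-byte FLV tag header. */
struct flv_tag_header {
    uint8_t  type;        /* 8 = audio, 9 = video, 18 = script data (metadata) */
    uint32_t data_size;   /* length of the tag body */
    uint32_t timestamp;   /* milliseconds, already combined with timestamp_ex */
    uint32_t stream_id;   /* always 0 in practice */
};

/* Reads a 3-byte big-endian integer. */
static uint32_t be24(const uint8_t *p)
{
    return ((uint32_t)p[0] << 16) | ((uint32_t)p[1] << 8) | p[2];
}

/* Parses the 11 header bytes at p (availability is the caller's job). */
struct flv_tag_header flv_parse_tag_header(const uint8_t *p)
{
    struct flv_tag_header h;

    h.type      = p[0];
    h.data_size = be24(p + 1);
    /* timestamp_ex (byte 7) supplies the upper 8 bits of the timestamp */
    h.timestamp = be24(p + 4) | ((uint32_t)p[7] << 24);
    h.stream_id = be24(p + 8);
    return h;
}

/* Bytes occupied by one tag plus the previousTagSize field after it. */
uint32_t flv_tag_span(const struct flv_tag_header *h)
{
    return 11 + h->data_size + 4;
}
```

To walk an FLV body, parse a header, skip `flv_tag_span()` bytes and repeat; this is the same stride (`11 + dataSize + 4`) that Read_1_Packet uses when it scans aggregate packets.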

For details, see: http://blog.csdn.net/nb_vol_1/article/details/57084683

FLV header definition in code

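```c
static const char flvHeader[] = { 'F', 'L', 'V', 0x01, /* signature, version 1 */
    0x00,                   /* flags: 0x04 == audio, 0x01 == video */
    0x00, 0x00, 0x00, 0x09, /* DataOffset: header size = 9 */
    0x00, 0x00, 0x00, 0x00  /* PreviousTagSize0 (always 0) */
};
```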
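Byte 4 of this array is the only field that changes: RTMP_Read later overwrites it with `r->m_read.dataType` (`mybuf[4] = r->m_read.dataType`). The sketch below, with hypothetical names `flv_write_header` and `flv_flags_from_types`, shows how that byte is derived. It assumes tag types 8 (audio) and 9 (video) as in the FLV format, and it stops early once the value reaches 5, just as RTMP_Read's header loop does.

```c
#include <string.h>

/* Writes the 13-byte prefix of an FLV file: the 9-byte FLV header followed
 * by PreviousTagSize0. out must hold at least 13 bytes. */
void flv_write_header(unsigned char out[13], int has_audio, int has_video)
{
    static const unsigned char tmpl[13] = {
        'F', 'L', 'V', 0x01,        /* signature + version 1 */
        0x00,                       /* flags, filled in below */
        0x00, 0x00, 0x00, 0x09,     /* DataOffset: header length = 9 */
        0x00, 0x00, 0x00, 0x00      /* PreviousTagSize0 */
    };

    memcpy(out, tmpl, sizeof(tmpl));
    /* 0x04 = audio present, 0x01 = video present (same bits as dataType) */
    out[4] = (unsigned char)((has_audio ? 0x04 : 0) | (has_video ? 0x01 : 0));
}

/* Accumulates the flags byte from a list of tag types, the way
 * Read_1_Packet ORs bits into r->m_read.dataType. */
int flv_flags_from_types(const unsigned char *types, int n)
{
    int flags = 0;

    /* 5 = audio | video: nothing more to learn, so stop early */
    for (int i = 0; i < n && flags != 5; i++)
        flags |= ((types[i] == 8) << 2) | (types[i] == 9);
    return flags;
}
```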

Reading data from the server

The flow is:

1. Output the FLV header (on the first call only)

2. Read the FLV tags

```c
int
RTMP_Read(RTMP *r, char *buf, int size)
{
    int nRead = 0, total = 0;

    /* can't continue */
fail:
    switch (r->m_read.status) {
    case RTMP_READ_EOF:
    case RTMP_READ_COMPLETE:
        return 0;
    case RTMP_READ_ERROR:  /* corrupted stream, resume failed */
        SetSockError(EINVAL);
        return -1;
    default:
        break;
    }

    /* first time thru */
    // First call: build the FLV header
    if (!(r->m_read.flags & RTMP_READ_HEADER))
    {
        if (!(r->m_read.flags & RTMP_READ_RESUME))
        {
            char *mybuf = malloc(HEADERBUF), *end = mybuf + HEADERBUF;
            int cnt = 0;
            r->m_read.buf = mybuf;
            r->m_read.buflen = HEADERBUF;

            // Start with the 13-byte header template
            memcpy(mybuf, flvHeader, sizeof(flvHeader));
            r->m_read.buf += sizeof(flvHeader);
            r->m_read.buflen -= sizeof(flvHeader);

            while (r->m_read.timestamp == 0)
            {
                // Read the initial tags that follow the header,
                // until a non-zero timestamp appears
                nRead = Read_1_Packet(r, r->m_read.buf, r->m_read.buflen);
                if (nRead < 0)
                {
                    free(mybuf);
                    r->m_read.buf = NULL;
                    r->m_read.buflen = 0;
                    r->m_read.status = nRead;
                    goto fail;
                }
                /* buffer overflow, fix buffer and give up */
                if (r->m_read.buf < mybuf || r->m_read.buf > end) {
                    mybuf = realloc(mybuf, cnt + nRead);
                    memcpy(mybuf + cnt, r->m_read.buf, nRead);
                    r->m_read.buf = mybuf + cnt + nRead;
                    break;
                }
                cnt += nRead;
                r->m_read.buf += nRead;
                r->m_read.buflen -= nRead;
                // 5 = 0x04 (audio) | 0x01 (video): both have been seen
                if (r->m_read.dataType == 5)
                    break;
            }
            // Patch the flags byte of the FLV header
            mybuf[4] = r->m_read.dataType;
            r->m_read.buflen = r->m_read.buf - mybuf;
            r->m_read.buf = mybuf;
            r->m_read.bufpos = mybuf;
        }
        r->m_read.flags |= RTMP_READ_HEADER;
    }

    if ((r->m_read.flags & RTMP_READ_SEEKING) && r->m_read.buf)
    {
        /* drop whatever's here */
        free(r->m_read.buf);
        r->m_read.buf = NULL;
        r->m_read.bufpos = NULL;
        r->m_read.buflen = 0;
    }

    /* If there's leftover data buffered, use it up */
    if (r->m_read.buf)
    {
        nRead = r->m_read.buflen;
        if (nRead > size)
            nRead = size;
        memcpy(buf, r->m_read.bufpos, nRead);
        r->m_read.buflen -= nRead;
        if (!r->m_read.buflen)
        {
            free(r->m_read.buf);
            r->m_read.buf = NULL;
            r->m_read.bufpos = NULL;
        }
        else
        {
            r->m_read.bufpos += nRead;
        }
        buf += nRead;
        total += nRead;
        size -= nRead;
    }

    // Read FLV tag data into the remaining space
    while (size > 0 && (nRead = Read_1_Packet(r, buf, size)) >= 0)
    {
        if (!nRead) continue;
        buf += nRead;
        total += nRead;
        size -= nRead;
        break;
    }
    if (nRead < 0)
        r->m_read.status = nRead;

    if (size < 0)
        total += size;
    return total;
}
```
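The "leftover data" branch of RTMP_Read exists because Read_1_Packet may produce more bytes than the caller's buffer can hold; the surplus is parked in `r->m_read.buf` and handed out on the next call. A minimal model of that branch, using a hypothetical `struct leftover` and `drain_leftover` in place of the real `r->m_read` fields, might look like this:

```c
#include <stdlib.h>
#include <string.h>

/* Simplified stand-in for r->m_read.buf / bufpos / buflen. */
struct leftover {
    char *buf;      /* heap block, NULL when nothing is pending */
    char *bufpos;   /* next byte to hand out */
    int   buflen;   /* bytes still pending */
};

/* Copies up to size pending bytes into out, frees the block once it is
 * empty, and returns the number of bytes copied (0 if nothing pending). */
int drain_leftover(struct leftover *lo, char *out, int size)
{
    int n;

    if (!lo->buf)
        return 0;
    n = lo->buflen < size ? lo->buflen : size;
    memcpy(out, lo->bufpos, (size_t)n);
    lo->buflen -= n;
    if (lo->buflen == 0) {
        free(lo->buf);
        lo->buf = lo->bufpos = NULL;
    } else {
        lo->bufpos += n;
    }
    return n;
}
```

After draining, RTMP_Read advances `buf`, reduces `size`, and only then calls Read_1_Packet for fresh data, so the bytes reach the caller in order.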

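Reading one FLV tag

Read_1_Packet does the following:

1. Reads one audio/video RTMP packet (a complete message reassembled from chunks)

2. Takes the packet's header information (type, length, timestamp) and uses it to fill in the FLV tag header

3. Copies the packet body into the tag body and appends the previousTagSize

4. Returns the number of bytes produced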
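Steps 2 and 3 amount to prepending an 11-byte tag header to the message body and appending a 4-byte previousTagSize. The sketch below shows only that framing for a single audio, video or metadata message. It is not librtmp's code (which also handles resume timestamps, aggregate packets and overflow buffers), and `flv_wrap_message`, `put_be24` and `put_be32` are hypothetical helpers playing the role of AMF_EncodeInt24/AMF_EncodeInt32.

```c
#include <stdint.h>
#include <string.h>

/* Writes v as 3 big-endian bytes and returns the next write position. */
static unsigned char *put_be24(unsigned char *p, uint32_t v)
{
    p[0] = (unsigned char)(v >> 16);
    p[1] = (unsigned char)(v >> 8);
    p[2] = (unsigned char)v;
    return p + 3;
}

/* Writes v as 4 big-endian bytes and returns the next write position. */
static unsigned char *put_be32(unsigned char *p, uint32_t v)
{
    p[0] = (unsigned char)(v >> 24);
    return put_be24(p + 1, v);
}

/* Wraps one message body (type 8, 9 or 18) into an FLV tag followed by its
 * previousTagSize. Returns the bytes written, or 0 if cap is too small. */
size_t flv_wrap_message(unsigned char *out, size_t cap, uint8_t type,
                        uint32_t timestamp, const unsigned char *body,
                        uint32_t body_len)
{
    size_t need = 11 + (size_t)body_len + 4;
    unsigned char *p = out;

    if (need > cap)
        return 0;
    *p++ = type;                                /* type */
    p = put_be24(p, body_len);                  /* dataSize */
    p = put_be24(p, timestamp & 0xFFFFFF);      /* timestamp, low 24 bits */
    *p++ = (unsigned char)(timestamp >> 24);    /* timestamp_ex */
    p = put_be24(p, 0);                         /* stream id, always 0 */
    memcpy(p, body, body_len);                  /* tag body = message body */
    p += body_len;
    put_be32(p, 11 + body_len);                 /* previousTagSize of this tag */
    return need;
}
```

The returned length, `11 + body_len + 4`, matches the `size` that Read_1_Packet computes for audio, video and info packets.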

```c
// Read one audio/video RTMP packet and turn it into FLV tag data
static int
Read_1_Packet(RTMP *r, char *buf, unsigned int buflen)
{
    uint32_t prevTagSize = 0;
    int rtnGetNextMediaPacket = 0, ret = RTMP_READ_EOF;
    RTMPPacket packet = { 0 };
    int recopy = FALSE;
    unsigned int size;
    char *ptr, *pend;
    uint32_t nTimeStamp = 0;
    unsigned int len;

    // Fetch the next media packet (a complete message reassembled from chunks)
    rtnGetNextMediaPacket = RTMP_GetNextMediaPacket(r, &packet);
    while (rtnGetNextMediaPacket)
    {
        char *packetBody = packet.m_body;
        unsigned int nPacketLen = packet.m_nBodySize;

        /* Return RTMP_READ_COMPLETE if this was completed nicely with
         * invoke message Play.Stop or Play.Complete
         */
        if (rtnGetNextMediaPacket == 2)
        {
            RTMP_Log(RTMP_LOGDEBUG,
                     "Got Play.Complete or Play.Stop from server. "
                     "Assuming stream is complete");
            ret = RTMP_READ_COMPLETE;
            break;
        }

        // Record the data type: bit 2 (0x04) = audio, bit 0 (0x01) = video
        r->m_read.dataType |= (((packet.m_packetType == RTMP_PACKET_TYPE_AUDIO) << 2) |
                               (packet.m_packetType == RTMP_PACKET_TYPE_VIDEO));

        // Drop video packets that are too small
        if (packet.m_packetType == RTMP_PACKET_TYPE_VIDEO && nPacketLen <= 5)
        {
            RTMP_Log(RTMP_LOGDEBUG, "ignoring too small video packet: size: %d",
                     nPacketLen);
            ret = RTMP_READ_IGNORE;
            break;
        }
        // Drop audio packets that are too small
        if (packet.m_packetType == RTMP_PACKET_TYPE_AUDIO && nPacketLen <= 1)
        {
            RTMP_Log(RTMP_LOGDEBUG, "ignoring too small audio packet: size: %d",
                     nPacketLen);
            ret = RTMP_READ_IGNORE;
            break;
        }

        if (r->m_read.flags & RTMP_READ_SEEKING)
        {
            ret = RTMP_READ_IGNORE;
            break;
        }
#ifdef _DEBUG
        RTMP_Log(RTMP_LOGDEBUG, "type: %02X, size: %d, TS: %d ms, abs TS: %d",
                 packet.m_packetType, nPacketLen, packet.m_nTimeStamp,
                 packet.m_hasAbsTimestamp);
        if (packet.m_packetType == RTMP_PACKET_TYPE_VIDEO)
            RTMP_Log(RTMP_LOGDEBUG, "frametype: %02X", (*packetBody & 0xf0));
#endif

        // Resuming an interrupted playback/download
        if (r->m_read.flags & RTMP_READ_RESUME)
        {
            /* check the header if we get one */
            // Only packets with timestamp 0 are checked here
            if (packet.m_nTimeStamp == 0)
            {
                // Metadata (info) packet
                if (r->m_read.nMetaHeaderSize > 0
                    && packet.m_packetType == RTMP_PACKET_TYPE_INFO)
                {
                    AMFObject metaObj;
                    // Decode the metadata
                    int nRes =
                        AMF_Decode(&metaObj, packetBody, nPacketLen, FALSE);
                    if (nRes >= 0)
                    {
                        AVal metastring;
                        AMFProp_GetString(AMF_GetProp(&metaObj, NULL, 0),
                                          &metastring);

                        if (AVMATCH(&metastring, &av_onMetaData))
                        {
                            /* compare */
                            if ((r->m_read.nMetaHeaderSize != nPacketLen) ||
                                (memcmp
                                 (r->m_read.metaHeader, packetBody,
                                  r->m_read.nMetaHeaderSize) != 0))
                            {
                                ret = RTMP_READ_ERROR;
                            }
                        }
                        AMF_Reset(&metaObj);
                        if (ret == RTMP_READ_ERROR)
                            break;
                    }
                }

                /* check first keyframe to make sure we got the right position
                 * in the stream! (the first non ignored frame)
                 */
                if (r->m_read.nInitialFrameSize > 0)
                {
                    /* video or audio data */
                    if (packet.m_packetType == r->m_read.initialFrameType
                        && r->m_read.nInitialFrameSize == nPacketLen)
                    {
                        /* we don't compare the sizes since the packet can
                         * contain several FLV packets, just make sure the
                         * first frame is our keyframe (which we are going
                         * to rewrite)
                         */
                        if (memcmp
                            (r->m_read.initialFrame, packetBody,
                             r->m_read.nInitialFrameSize) == 0)
                        {
                            RTMP_Log(RTMP_LOGDEBUG, "Checked keyframe successfully!");
                            r->m_read.flags |= RTMP_READ_GOTKF;
                            /* ignore it! (what about audio data after it? it is
                             * handled by ignoring all 0ms frames, see below)
                             */
                            ret = RTMP_READ_IGNORE;
                            break;
                        }
                    }

                    /* handle FLV streams, even though the server resends the
                     * keyframe as an extra video packet it is also included
                     * in the first FLV stream chunk and we have to compare
                     * it and filter it out !!
                     */
                    if (packet.m_packetType == RTMP_PACKET_TYPE_FLASH_VIDEO)
                    {
                        /* basically we have to find the keyframe with the
                         * correct TS being nResumeTS
                         */
                        unsigned int pos = 0;
                        uint32_t ts = 0;

                        while (pos + 11 < nPacketLen)
                        {
                            /* size without header (11) and prevTagSize (4) */
                            uint32_t dataSize =
                                AMF_DecodeInt24(packetBody + pos + 1);
                            ts = AMF_DecodeInt24(packetBody + pos + 4);
                            ts |= (packetBody[pos + 7] << 24);

#ifdef _DEBUG
                            RTMP_Log(RTMP_LOGDEBUG,
                                     "keyframe search: FLV Packet: type %02X, dataSize: %d, timeStamp: %d ms",
                                     packetBody[pos], dataSize, ts);
#endif
                            /* ok, is it a keyframe?:
                             * well doesn't work for audio!
                             */
                            if (packetBody[pos /*6928, test 0 */] ==
                                r->m_read.initialFrameType
                                /* && (packetBody[11]&0xf0) == 0x10 */)
                            {
                                if (ts == r->m_read.nResumeTS)
                                {
                                    RTMP_Log(RTMP_LOGDEBUG,
                                             "Found keyframe with resume-keyframe timestamp!");
                                    if (r->m_read.nInitialFrameSize != dataSize
                                        || memcmp(r->m_read.initialFrame,
                                                  packetBody + pos + 11,
                                                  r->m_read.
                                                  nInitialFrameSize) != 0)
                                    {
                                        RTMP_Log(RTMP_LOGERROR,
                                                 "FLV Stream: Keyframe doesn't match!");
                                        ret = RTMP_READ_ERROR;
                                        break;
                                    }
                                    r->m_read.flags |= RTMP_READ_GOTFLVK;

                                    /* skip this packet?
                                     * check whether skippable:
                                     */
                                    if (pos + 11 + dataSize + 4 > nPacketLen)
                                    {
                                        RTMP_Log(RTMP_LOGWARNING,
                                                 "Non skipable packet since it doesn't end with chunk, stream corrupt!");
                                        ret = RTMP_READ_ERROR;
                                        break;
                                    }
                                    packetBody += (pos + 11 + dataSize + 4);
                                    nPacketLen -= (pos + 11 + dataSize + 4);

                                    goto stopKeyframeSearch;
                                }
                                else if (r->m_read.nResumeTS < ts)
                                {
                                    /* the timestamp ts will only increase with
                                     * further packets, wait for seek
                                     */
                                    goto stopKeyframeSearch;
                                }
                            }
                            pos += (11 + dataSize + 4);
                        }
                        if (ts < r->m_read.nResumeTS)
                        {
                            RTMP_Log(RTMP_LOGERROR,
                                     "First packet does not contain keyframe, all "
                                     "timestamps are smaller than the keyframe "
                                     "timestamp; probably the resume seek failed?");
                        }
stopKeyframeSearch:
                        ;
                        if (!(r->m_read.flags & RTMP_READ_GOTFLVK))
                        {
                            RTMP_Log(RTMP_LOGERROR,
                                     "Couldn't find the seeked keyframe in this chunk!");
                            ret = RTMP_READ_IGNORE;
                            break;
                        }
                    }
                }
            }

            if (packet.m_nTimeStamp > 0
                && (r->m_read.flags & (RTMP_READ_GOTKF | RTMP_READ_GOTFLVK)))
            {
                /* another problem is that the server can actually change from
                 * 09/08 video/audio packets to an FLV stream or vice versa and
                 * our keyframe check will prevent us from going along with the
                 * new stream if we resumed.
                 *
                 * in this case set the 'found keyframe' variables to true.
                 * We assume that if we found one keyframe somewhere and were
                 * already beyond TS > 0 we have written data to the output
                 * which means we can accept all forthcoming data including the
                 * change between 08/09 <-> FLV packets
                 */
                r->m_read.flags |= (RTMP_READ_GOTKF | RTMP_READ_GOTFLVK);
            }

            /* skip till we find our keyframe
             * (seeking might put us somewhere before it)
             */
            if (!(r->m_read.flags & RTMP_READ_GOTKF) &&
                packet.m_packetType != RTMP_PACKET_TYPE_FLASH_VIDEO)
            {
                RTMP_Log(RTMP_LOGWARNING,
                         "Stream does not start with requested frame, ignoring data... ");
                r->m_read.nIgnoredFrameCounter++;
                if (r->m_read.nIgnoredFrameCounter > MAX_IGNORED_FRAMES)
                    ret = RTMP_READ_ERROR;  /* fatal error, couldn't continue stream */
                else
                    ret = RTMP_READ_IGNORE;
                break;
            }
            /* ok, do the same for FLV streams */
            if (!(r->m_read.flags & RTMP_READ_GOTFLVK) &&
                packet.m_packetType == RTMP_PACKET_TYPE_FLASH_VIDEO)
            {
                RTMP_Log(RTMP_LOGWARNING,
                         "Stream does not start with requested FLV frame, ignoring data... ");
                r->m_read.nIgnoredFlvFrameCounter++;
                if (r->m_read.nIgnoredFlvFrameCounter > MAX_IGNORED_FRAMES)
                    ret = RTMP_READ_ERROR;
                else
                    ret = RTMP_READ_IGNORE;
                break;
            }

            /* we have to ignore the 0ms frames since these are the first
             * keyframes; we've got these so don't mess around with multiple
             * copies sent by the server to us! (if the keyframe is found at a
             * later position there is only one copy and it will be ignored by
             * the preceding if clause)
             */
            if (!(r->m_read.flags & RTMP_READ_NO_IGNORE) &&
                packet.m_packetType != RTMP_PACKET_TYPE_FLASH_VIDEO)
            {
                /* exclude type RTMP_PACKET_TYPE_FLASH_VIDEO since it can
                 * contain several FLV packets
                 */
                if (packet.m_nTimeStamp == 0)
                {
                    ret = RTMP_READ_IGNORE;
                    break;
                }
                else
                {
                    /* stop ignoring packets */
                    r->m_read.flags |= RTMP_READ_NO_IGNORE;
                }
            }
        }

        /* calculate packet size and allocate slop buffer if necessary */
        // Compute the buffer size actually needed, then allocate memory
        /*
        ** nPacketLen is the length of the data. For audio/video (and metadata)
        ** tags the tag header is 11 bytes:
        **   1 byte  type
        **   3 bytes dataSize
        **   3 bytes timestamp
        **   1 byte  timestamp_ex
        **   3 bytes stream id
        */
        size = nPacketLen +
            ((packet.m_packetType == RTMP_PACKET_TYPE_AUDIO
              || packet.m_packetType == RTMP_PACKET_TYPE_VIDEO
              || packet.m_packetType == RTMP_PACKET_TYPE_INFO) ? 11 : 0) +
            (packet.m_packetType != RTMP_PACKET_TYPE_FLASH_VIDEO ? 4 : 0);
        // The trailing 4 bytes: in FLV each tag is followed by a 4-byte field
        // holding that tag's size (the next tag's previousTagSize)

        if (size + 4 > buflen)
        {
            /* the extra 4 is for the case of an FLV stream without a last
             * prevTagSize (we need extra 4 bytes to append it) */
            r->m_read.buf = malloc(size + 4);
            if (r->m_read.buf == 0)
            {
                RTMP_Log(RTMP_LOGERROR, "Couldn't allocate memory!");
                ret = RTMP_READ_ERROR;  /* fatal error */
                break;
            }
            recopy = TRUE;
            ptr = r->m_read.buf;
        }
        else
        {
            ptr = buf;
        }
        pend = ptr + size + 4;

        /* use to return timestamp of last processed packet */

        /* audio (0x08), video (0x09) or metadata (0x12) packets :
         * construct 11 byte header then add rtmp packet's data */
        if (packet.m_packetType == RTMP_PACKET_TYPE_AUDIO
            || packet.m_packetType == RTMP_PACKET_TYPE_VIDEO
            || packet.m_packetType == RTMP_PACKET_TYPE_INFO)
        {
            // Compute the timestamp field
            nTimeStamp = r->m_read.nResumeTS + packet.m_nTimeStamp;
            // previousTagSize written after this tag: 11-byte header + body
            prevTagSize = 11 + nPacketLen;

            // Write the tag type into the output buffer
            *ptr = packet.m_packetType;
            ptr++;
            // Write dataSize (3 bytes)
            ptr = AMF_EncodeInt24(ptr, pend, nPacketLen);

#if 0
            if(packet.m_packetType == RTMP_PACKET_TYPE_VIDEO) {

                /* H264 fix: */
                if((packetBody[0] & 0x0f) == 7) { /* CodecId = H264 */
                    uint8_t packetType = *(packetBody+1);

                    uint32_t ts = AMF_DecodeInt24(packetBody+2); /* composition time */
                    int32_t cts = (ts+0xff800000)^0xff800000;
                    RTMP_Log(RTMP_LOGDEBUG, "cts : %d\n", cts);

                    nTimeStamp -= cts;
                    /* get rid of the composition time */
                    CRTMP::EncodeInt24(packetBody+2, 0);
                }
                RTMP_Log(RTMP_LOGDEBUG, "VIDEO: nTimeStamp: 0x%08X (%d)\n", nTimeStamp, nTimeStamp);
            }
#endif

            // Write the timestamp: low 24 bits, then the extension byte
            ptr = AMF_EncodeInt24(ptr, pend, nTimeStamp);
            *ptr = (char)((nTimeStamp & 0xFF000000) >> 24);
            ptr++;

            /* stream id */
            // Write the stream id (always 0)
            ptr = AMF_EncodeInt24(ptr, pend, 0);
        }

        // Copy the packet body into the tag body
        memcpy(ptr, packetBody, nPacketLen);
        len = nPacketLen;

        /* correct tagSize and obtain timestamp if we have an FLV stream */
        // Aggregate (FLV-stream) packets; no need to look at this for now
        if (packet.m_packetType == RTMP_PACKET_TYPE_FLASH_VIDEO)
        {
            unsigned int pos = 0;
            int delta;

            /* grab first timestamp and see if it needs fixing */
            nTimeStamp = AMF_DecodeInt24(packetBody + 4);
            nTimeStamp |= (packetBody[7] << 24);
            delta = packet.m_nTimeStamp - nTimeStamp + r->m_read.nResumeTS;

            while (pos + 11 < nPacketLen)
            {
                /* size without header (11) and without prevTagSize (4) */
                uint32_t dataSize = AMF_DecodeInt24(packetBody + pos + 1);
                nTimeStamp = AMF_DecodeInt24(packetBody + pos + 4);
                nTimeStamp |= (packetBody[pos + 7] << 24);

                if (delta)
                {
                    nTimeStamp += delta;
                    AMF_EncodeInt24(ptr + pos + 4, pend, nTimeStamp);
                    ptr[pos + 7] = nTimeStamp >> 24;
                }

                /* set data type */
                r->m_read.dataType |= (((*(packetBody + pos) == 0x08) << 2) |
                                       (*(packetBody + pos) == 0x09));

                if (pos + 11 + dataSize + 4 > nPacketLen)
                {
                    if (pos + 11 + dataSize > nPacketLen)
                    {
                        RTMP_Log(RTMP_LOGERROR,
                                 "Wrong data size (%u), stream corrupted, aborting!",
                                 dataSize);
                        ret = RTMP_READ_ERROR;
                        break;
                    }
                    RTMP_Log(RTMP_LOGWARNING, "No tagSize found, appending!");

                    /* we have to append a last tagSize! */
                    prevTagSize = dataSize + 11;
                    AMF_EncodeInt32(ptr + pos + 11 + dataSize, pend,
                                    prevTagSize);
                    size += 4;
                    len += 4;
                }
                else
                {
                    prevTagSize =
                        AMF_DecodeInt32(packetBody + pos + 11 + dataSize);

#ifdef _DEBUG
                    RTMP_Log(RTMP_LOGDEBUG,
                             "FLV Packet: type %02X, dataSize: %lu, tagSize: %lu, timeStamp: %lu ms",
                             (unsigned char)packetBody[pos], dataSize, prevTagSize,
                             nTimeStamp);
#endif

                    if (prevTagSize != (dataSize + 11))
                    {
#ifdef _DEBUG
                        RTMP_Log(RTMP_LOGWARNING,
                                 "Tag and data size are not consitent, writing tag size according to dataSize+11: %d",
                                 dataSize + 11);
#endif

                        prevTagSize = dataSize + 11;
                        AMF_EncodeInt32(ptr + pos + 11 + dataSize, pend,
                                        prevTagSize);
                    }
                }

                pos += prevTagSize + 4;  /*(11+dataSize+4); */
            }
        }
        ptr += len;

        if (packet.m_packetType != RTMP_PACKET_TYPE_FLASH_VIDEO)
        {
            /* FLV tag packets contain their own prevTagSize */
            AMF_EncodeInt32(ptr, pend, prevTagSize);
        }

        /* In non-live this nTimeStamp can contain an absolute TS.
         * Update ext timestamp with this absolute offset in non-live mode
         * otherwise report the relative one
         */
        /* RTMP_Log(RTMP_LOGDEBUG, "type: %02X, size: %d, pktTS: %dms, TS: %dms, bLiveStream: %d", packet.m_packetType, nPacketLen, packet.m_nTimeStamp, nTimeStamp, r->Link.lFlags & RTMP_LF_LIVE); */
        r->m_read.timestamp = (r->Link.lFlags & RTMP_LF_LIVE) ? packet.m_nTimeStamp : nTimeStamp;

        ret = size;
        break;
    }

    if (rtnGetNextMediaPacket)
        RTMPPacket_Free(&packet);

    if (recopy)
    {
        len = ret > buflen ? buflen : ret;
        memcpy(buf, r->m_read.buf, len);
        r->m_read.bufpos = r->m_read.buf + len;
        r->m_read.buflen = ret - len;
    }
    return ret;
}
```
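The aggregate (RTMP_PACKET_TYPE_FLASH_VIDEO) branch is the least obvious part of Read_1_Packet: the message body already contains complete FLV tags, so instead of adding a header it walks the tags, shifts each timestamp by `delta` (which librtmp computes as `packet.m_nTimeStamp - firstTagTimestamp + nResumeTS`) and rewrites any previousTagSize that disagrees with `dataSize + 11`. The following is a simplified sketch with a hypothetical name, `flv_fix_aggregate`. It assumes every tag in the buffer is complete; unlike librtmp, it does not append a missing final previousTagSize but simply reports the buffer as corrupt.

```c
#include <stddef.h>
#include <stdint.h>

static uint32_t rd24(const unsigned char *p)
{
    return ((uint32_t)p[0] << 16) | ((uint32_t)p[1] << 8) | p[2];
}

static void wr24(unsigned char *p, uint32_t v)
{
    p[0] = (unsigned char)(v >> 16);
    p[1] = (unsigned char)(v >> 8);
    p[2] = (unsigned char)v;
}

/* Walks the FLV tags packed in an aggregate message body (in place), adds
 * delta to every tag timestamp and forces each previousTagSize to
 * dataSize + 11. Returns the number of tags visited, or -1 if a tag plus
 * its previousTagSize runs past the end of the buffer. */
int flv_fix_aggregate(unsigned char *p, size_t len, int32_t delta)
{
    size_t pos = 0;
    int tags = 0;

    while (pos + 11 < len) {
        uint32_t data_size = rd24(p + pos + 1);
        uint32_t ts = rd24(p + pos + 4) | ((uint32_t)p[pos + 7] << 24);
        uint32_t tag_size = data_size + 11;

        if (pos + tag_size + 4 > len)
            return -1;                  /* no missing-previousTagSize repair */
        ts += (uint32_t)delta;          /* wraps modulo 2^32 */
        wr24(p + pos + 4, ts & 0xFFFFFF);
        p[pos + 7] = (unsigned char)(ts >> 24);
        /* previousTagSize sits right after the tag body */
        p[pos + tag_size] = (unsigned char)(tag_size >> 24);
        wr24(p + pos + tag_size + 1, tag_size);
        pos += tag_size + 4;
        tags++;
    }
    return tags;
}
```

Rewriting every previousTagSize unconditionally gives the same result as librtmp's "rewrite only if inconsistent" check, at the cost of a few redundant stores.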
