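Overview

HDFS is a distributed file system.

Best suited to write-once, read-many workloads. Files cannot be modified in place (appending is allowed).

File block size

128 MB by default in Hadoop 2.x/3.x (configurable through dfs.blocksize)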
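To make the block size concrete, here is a minimal sketch of how a file length maps onto 128 MB blocks. The class name BlockCount and the 300 MB file are hypothetical and used only for illustration; this is plain arithmetic, not a call into the Hadoop API.

```java
public class BlockCount {
    // 128 MB, the block size stated above, expressed in bytes
    static final long BLOCK_SIZE = 128L * 1024 * 1024;

    // Number of blocks needed for a file of the given length (ceiling division)
    static long blockCount(long fileLength) {
        if (fileLength <= 0) {
            return 0; // an empty file has no blocks
        }
        return (fileLength + BLOCK_SIZE - 1) / BLOCK_SIZE;
    }

    // Length in bytes of the last block, which may be shorter than BLOCK_SIZE
    static long lastBlockLength(long fileLength) {
        if (fileLength <= 0) {
            return 0;
        }
        long remainder = fileLength % BLOCK_SIZE;
        return remainder == 0 ? BLOCK_SIZE : remainder;
    }

    public static void main(String[] args) {
        long length = 300L * 1024 * 1024; // hypothetical 300 MB file
        System.out.println(blockCount(length));                       // prints 3
        System.out.println(lastBlockLength(length) / (1024 * 1024));  // prints 44
    }
}
```

The last block only occupies as much space as the data it actually holds, so the 300 MB file above uses two full blocks plus one 44 MB block.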

HDFS shell operations (key topic)

Basic syntax

hadoop fs <command>

or

hdfs dfs <command>

Command reference

Usage: hadoop fs [generic options]

[-appendToFile <localsrc> ... <dst>] # append to a file

[-cat [-ignoreCrc] <src> ...] # view file contents

[-checksum <src> ...]

[-chgrp [-R] GROUP PATH...] # change group

[-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...] # change permissions

[-chown [-R] [OWNER][:[GROUP]] PATH...] # change owner

[-copyFromLocal [-f] [-p] [-l] [-d] [-t <thread count>] <localsrc> ... <dst>] # upload

[-copyToLocal [-f] [-p] [-ignoreCrc] [-crc] <src> ... <localdst>] # download

[-count [-q] [-h] [-v] [-t [<storage type>]] [-u] [-x] [-e] <path> ...]

[-cp [-f] [-p | -p[topax]] [-d] <src> ... <dst>] # copy within HDFS

[-createSnapshot <snapshotDir> [<snapshotName>]]

[-deleteSnapshot <snapshotDir> <snapshotName>]

[-df [-h] [<path> ...]]

[-du [-s] [-h] [-v] [-x] <path> ...] # show disk usage of files and directories

[-expunge]

[-find <path> ... <expression> ...]

[-get [-f] [-p] [-ignoreCrc] [-crc] <src> ... <localdst>] # download

[-getfacl [-R] <path>]

[-getfattr [-R] {-n name | -d} [-e en] <path>]

[-getmerge [-nl] [-skip-empty-file] <src> <localdst>]

[-head <file>]

[-help [cmd ...]]

[-ls [-C] [-d] [-h] [-q] [-R] [-t] [-S] [-r] [-u] [-e] [<path> ...]] # list files

[-mkdir [-p] <path> ...] # create a directory

[-moveFromLocal <localsrc> ... <dst>] # move from local to HDFS

[-moveToLocal <src> <localdst>] # move from HDFS to local (only displays a "not implemented yet" message)

[-mv <src> ... <dst>] # move within HDFS

[-put [-f] [-p] [-l] [-d] <localsrc> ... <dst>] # upload

[-renameSnapshot <snapshotDir> <oldName> <newName>]

[-rm [-f] [-r|-R] [-skipTrash] [-safely] <src> ...] # delete

[-rmdir [--ignore-fail-on-non-empty] <dir> ...]

[-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]

[-setfattr {-n name [-v value] | -x name} <path>]

[-setrep [-R] [-w] <rep> <path> ...] # set the replication factor

[-stat [format] <path> ...]

[-tail [-f] <file>]

[-test -[defsz] <path>]

[-text [-ignoreCrc] <src> ...]

[-touch [-a] [-m] [-t TIMESTAMP ] [-c] <path> ...]

[-touchz <path> ...]

[-truncate [-w] <length> <path> ...]

[-usage [cmd ...]]

Generic options supported are:

-conf <configuration file> specify an application configuration file

-D <property=value> define a value for a given property

-fs <file:///|hdfs://namenode:port> specify default filesystem URL to use, overrides 'fs.defaultFS' property from configurations.

-jt <local|resourcemanager:port> specify a ResourceManager

-files <file1,...> specify a comma-separated list of files to be copied to the map reduce cluster

-libjars <jar1,...> specify a comma-separated list of jar files to be included in the classpath

-archives <archive1,...> specify a comma-separated list of archives to be unarchived on the compute machines

The general command line syntax is:

command [genericOptions] [commandOptions]

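Viewing detailed help for a command

hadoop fs -help <command> (for example: hadoop fs -help cat)

Changing HDFS permissions for the web UI user

Edit core-site.xml and set the static web user to root, so that operations from the NameNode web UI run as root instead of the default dr.who: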

vi core-site.xml

<property>

<name>hadoop.http.staticuser.user</name>

<value>root</value>

</property>

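HDFS client API operations

Windows environment setup

- Put the Hadoop Windows dependencies in a folder.

- Configure the environment variables: add HADOOP_HOME pointing to that folder, then edit Path and add %HADOOP_HOME%\bin

- Copy hadoop.dll and winutils.exe to C:\Windows\System32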

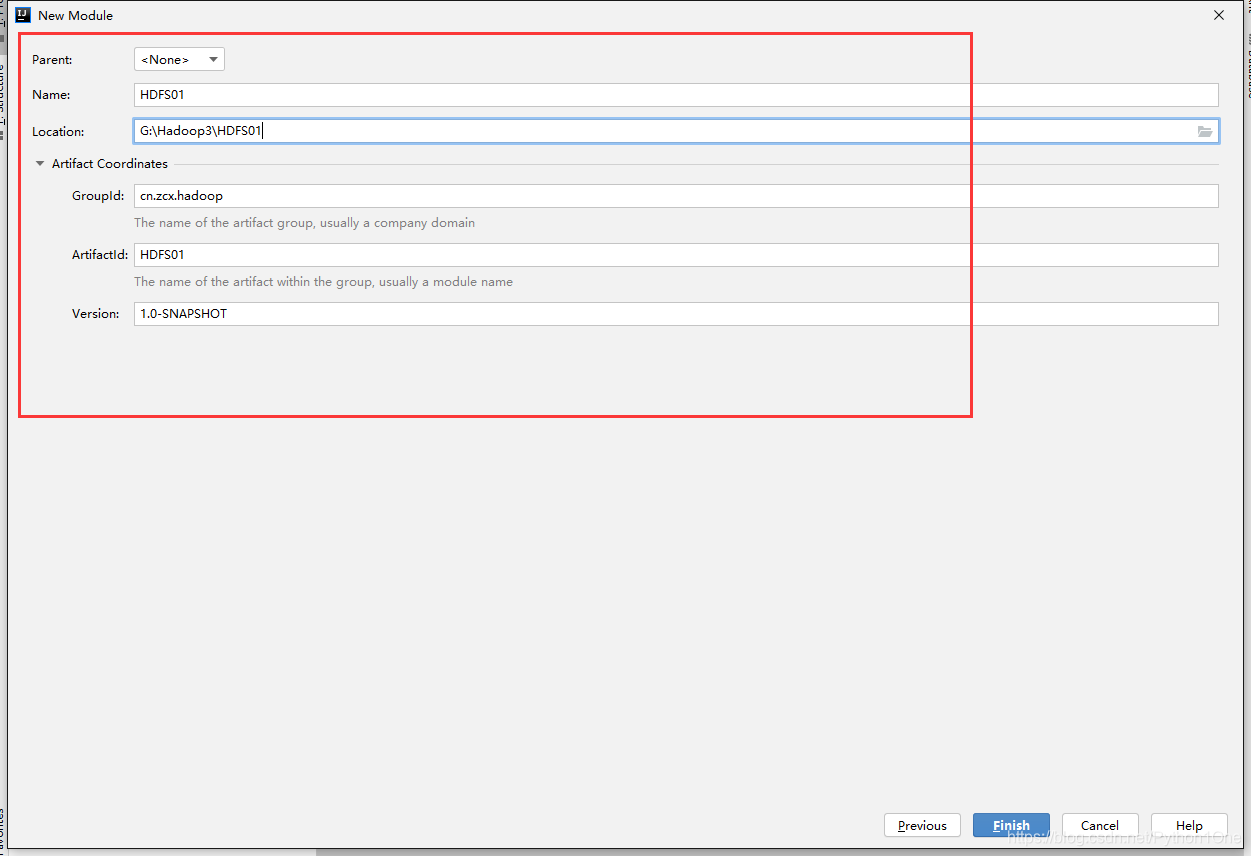

Create a Java project

Configuration

Edit pom.xml

<dependencies>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-slf4j-impl</artifactId>

<version>2.12.0</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.3</version>

</dependency>

</dependencies>

Create log4j2.xml in src/main/resources

It prints logs to the console

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="WARN">

<Appenders>

<Console name="Console" target="SYSTEM_OUT">

<PatternLayout pattern="%d{HH:mm:ss.SSS} [%t] %-5level %logger{36} - %msg%n"/>

</Console>

</Appenders>

<Loggers>

<Root level="error">

<AppenderRef ref="Console"/>

</Root>

</Loggers>

</Configuration>

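Write the code

Create the TestHDFS class in the cn.zcx.hdfs package under src/main/java

package cn.zcx.hdfs;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.LocatedFileStatus;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.fs.RemoteIterator;

import org.junit.After;

import org.junit.Before;

import org.junit.Test;

import java.io.IOException;

import java.net.URI;

import java.util.Arrays;

public class TestHDFS {

// Shared fields, initialized in init() before each test

private FileSystem fs;

private Configuration conf;

private URI uri;

private String user;

// Upload a file from the local file system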

@Test

public void testUpload() throws IOException {

// delSrc = false keeps the local file; overwrite = true replaces an existing target

fs.copyFromLocalFile(false, true,

new Path("F:\\Download\\使用前说明.txt"),

new Path("/testhdfs"));

}

/*

* The @Before method runs before each @Test method

* */

@Before

public void init() throws IOException, InterruptedException {

uri = URI.create("hdfs://master:8020");

conf = new Configuration();

user = "root";

fs = FileSystem.get(uri,conf,user);

}

/*

* The @After method runs after each @Test method finishes

* */

@After

public void close() throws IOException {

fs.close();

}

@Test

public void testHDFS() throws IOException, InterruptedException {

// 1. Create the FileSystem object (now done in init(); kept here for reference)

/*

URI uri = URI.create("hdfs://master:8020");

Configuration conf = new Configuration();

String user = "root";

FileSystem fs = FileSystem.get(uri,conf,user);

System.out.println("fs: " + fs);

*/

// 2. Create a directory

boolean b = fs.mkdirs(new Path("/testhdfs"));

System.out.println(b);

// 3. No explicit close here: the @After method close() closes fs after each test

}

}

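Configuration parameter priority

From lowest to highest: xxx-default.xml < xxx-site.xml on the cluster < xxx-site.xml placed in the project's resources directory (in IDEA) < values set in code through the Configuration object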
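The priority chain above can be pictured as layers applied in order, with each later layer overwriting the earlier ones. The sketch below simulates that with plain maps. It is not Hadoop's Configuration implementation; the class name ConfigPriority and the site and in-code values are hypothetical, while dfs.replication with a default of 3 is the real HDFS property.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class ConfigPriority {
    // Apply layers from lowest to highest priority; later layers overwrite earlier ones
    static Map<String, String> resolve(List<Map<String, String>> layers) {
        Map<String, String> effective = new LinkedHashMap<>();
        for (Map<String, String> layer : layers) {
            effective.putAll(layer);
        }
        return effective;
    }

    public static void main(String[] args) {
        // Each map stands in for one configuration source (values other than the default are made up)
        Map<String, String> defaults = Collections.singletonMap("dfs.replication", "3");     // hdfs-default.xml
        Map<String, String> clusterSite = Collections.singletonMap("dfs.replication", "5");  // hdfs-site.xml on the cluster
        Map<String, String> projectSite = Collections.singletonMap("dfs.replication", "4");  // hdfs-site.xml in resources
        Map<String, String> inCode = Collections.singletonMap("dfs.replication", "2");       // set on the Configuration object

        Map<String, String> effective =
                resolve(Arrays.asList(defaults, clusterSite, projectSite, inCode));
        System.out.println(effective.get("dfs.replication")); // prints 2
    }
}
```

In the real client, the in-code layer would be a call such as conf.set("dfs.replication", "2") on the Configuration object before FileSystem.get is invoked.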

File download

@Test

public void testDownload() throws IOException {

fs.copyToLocalFile(false,

new Path("/testhdfs/使用前说明.txt"),

new Path("F:\\Download\\"),

true); // useRawLocalFileSystem = true: no local .crc checksum file is written

}

Renaming and moving files

// Rename or move a file (a move is just a rename to a different path)

@Test

public void testRename() throws IOException {

boolean b = fs.rename(new Path("/testhdfs/使用前说明.txt"),

new Path("/testhdfs/zcx.txt"));

System.out.println(b);

}

Viewing file details

// List file details

@Test

public void testListFiles() throws IOException {

RemoteIterator<LocatedFileStatus> listFiles = fs.listFiles(new Path("/"), true);

// Iterate over the results (the second argument true makes the listing recursive)

while (listFiles.hasNext()){

LocatedFileStatus fileStatus = listFiles.next();

// Print the details of each file

System.out.println("Path: " + fileStatus.getPath());

System.out.println("Permission: " + fileStatus.getPermission());

System.out.println("Owner: " + fileStatus.getOwner());

System.out.println("Group: " + fileStatus.getGroup());

System.out.println("Length: " + fileStatus.getLen());

System.out.println("Replication: " + fileStatus.getReplication());

System.out.println("Block locations: " + Arrays.toString(fileStatus.getBlockLocations()));

System.out.println("===============================");

}

}
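The permission printed by getPermission() appears in symbolic form such as rw-r--r--, while hadoop fs -chmod also accepts an octal mode. As a small aid for reading that output, the following sketch converts an octal mode to the symbolic form. The class ModeString and its method are hypothetical helpers written for this note, not part of the Hadoop API, and special bits such as the sticky bit are ignored.

```java
public class ModeString {
    // Convert a permission mode such as 0644 into its nine-character symbolic form
    static String toSymbolic(int mode) {
        StringBuilder sb = new StringBuilder();
        // Walk the owner, group, and other triplets from high bits to low bits
        for (int shift = 6; shift >= 0; shift -= 3) {
            int bits = (mode >> shift) & 7;
            sb.append((bits & 4) != 0 ? 'r' : '-');
            sb.append((bits & 2) != 0 ? 'w' : '-');
            sb.append((bits & 1) != 0 ? 'x' : '-');
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(toSymbolic(0644));                        // prints rw-r--r--
        System.out.println(toSymbolic(Integer.parseInt("755", 8)));  // prints rwxr-xr-x
    }
}
```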

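Deleting files

The second argument of delete is the recursive flag: true deletes a directory together with everything under it.

// Delete a file or directory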

@Test

public void testDelete() throws IOException {

boolean b = fs.delete(new Path("/testhdfs/"), true);

System.out.println(b);

}

How the NameNode (NN) and Secondary NameNode (2NN) work
